Difference between revisions of "Journal:An integrated data analytics platform"


|affiliations = NASA Jet Propulsion Laboratory, Center for Ocean-Atmospheric Prediction Studies, National Center for Atmospheric Research,<br />George Mason University

|contact = Email: thomas dot huang at jpl dot nasa dot gov

|editors = Chiba, Sanae

|pub_year = 2019

|vol_iss = '''6'''

|download = [https://www.frontiersin.org/articles/10.3389/fmars.2019.00354/pdf https://www.frontiersin.org/articles/10.3389/fmars.2019.00354/pdf] (PDF)

}}

==Abstract==

A scientific integrated data analytics platform (IDAP) is an environment that enables the confluence of resources for scientific investigation. It harmonizes data, tools, and computational resources to enable the research community to focus on the investigation rather than spending time on security, data preparation, management, etc. OceanWorks is a National Aeronautics and Space Administration (NASA) technology integration project to establish a [[Cloud computing|cloud-based]] integrated ocean science [[Data analysis|data analytics]] platform for managing ocean science research data at NASA’s Physical Oceanography Distributed Active Archive Center (PO.DAAC). The platform focuses on advancement and maturity by bringing together several NASA open-source, big data projects for parallel analytics, anomaly detection, ''in situ''-to-satellite data matching, quality-screened data subsetting, search relevancy, and data discovery. Our communities are relying on data available through [[Distributed computing|distributed data centers]] to conduct their research. In typical investigations, scientists would (1) search for data, (2) evaluate the relevance of that data, (3) download it, and (4) then apply algorithms to identify trends, anomalies, or other attributes of the data. Such a workflow cannot scale if the research involves a massive amount of data or multi-variate measurements. With the upcoming NASA Surface Water and Ocean Topography (SWOT) mission expected to produce over 20 petabytes (PB) of observational data during its three-year nominal mission, the volume of data will challenge all existing earth science data archival, distribution, and analysis paradigms. This paper discusses how OceanWorks enhances the analysis of physical ocean data where the computation is done on an elastic cloud platform next to the archive to deliver fast, web-accessible services for working with oceanographic measurements. 

==OceanWorks components==

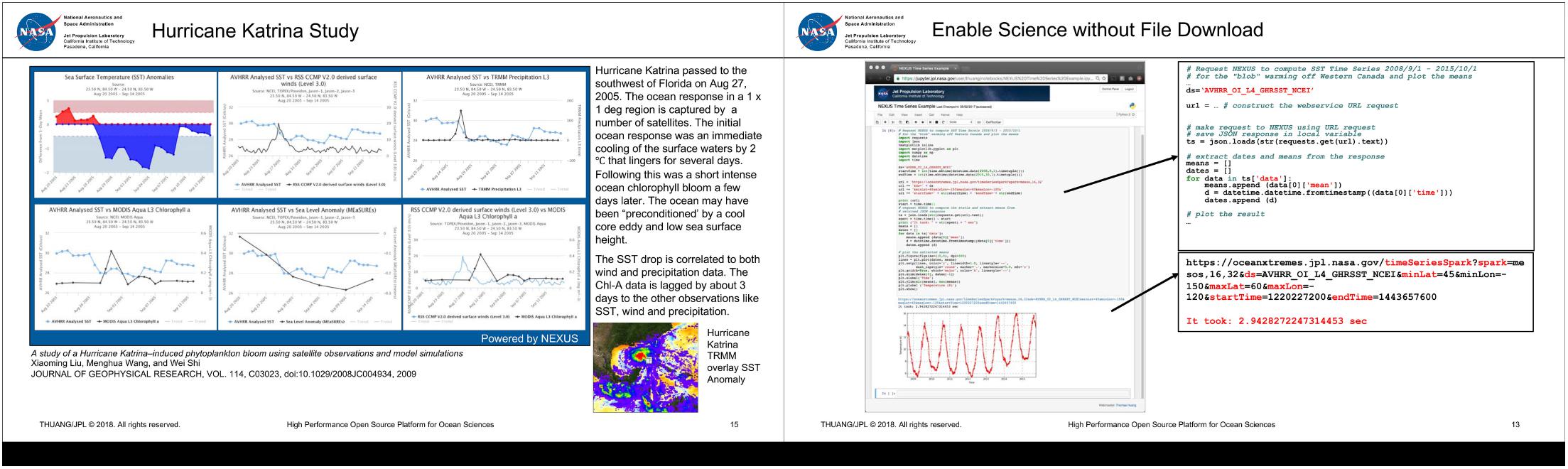

OceanWorks is an orchestration of several NASA big data technologies into a coherent web service platform. Rather than focusing on one science application, this web service platform enables various types of applications. Figure 2 shows how OceanWorks can be used to facilitate on-the-fly analysis of Hurricane Katrina<ref name="LiuAStudy09">{{cite journal |title=A study of a Hurricane Katrina–induced phytoplankton bloom using satellite observations and model simulations |journal=Journal of Geophysical Research Oceans |author=Liu, X.; Wang, M.; Shi, W. |volume=114 |issue=C3 |page=C03023 |year=2009 |doi=10.1029/2008JC004934}}</ref> and how [[Jupyter Notebook]] can interact with OceanWorks to analyze the "Blob" in the northeast Pacific.<ref name="CavoleBiolog16">{{cite journal |title=Biological Impacts of the 2013–2015 Warm-Water Anomaly in the Northeast Pacific: Winners, Losers, and the Future |journal=Oceanography |author=Cavole, L.M.; Demko, A.M.; Diner, R.E. et al. |volume=29 |issue=2 |pages=273–85 |year=2016 |doi=10.5670/oceanog.2016.32}}</ref> This section discusses some of the key components of OceanWorks.

[[File:Fig2 Armstrong FrontMarineSci2019 6.jpg|1400px]]

{{clear}}

{|

| STYLE="vertical-align:top;"|

{| border="0" cellpadding="5" cellspacing="0" width="1400px"

|-

| style="background-color:white; padding-left:10px; padding-right:10px;"| <blockquote>'''Figure 2.''' Example OceanWorks services</blockquote>

|-

|}

|}

===Data analytics===

We have been developing analytics solutions around common file packaging standards such as netCDF and HDF. We evangelize for the Climate and Forecast (CF) metadata convention and the Attribute Convention for Dataset Discovery (ACDD) to promote interoperability and improve our searches. Yet there has been very little progress in tackling our current big data analytic challenges, which include how to work with petabyte-scale data and how to quickly look up the most relevant data for a given research question. While the current method of subsetting and analyzing one daily global observational file at a time is the most straightforward, it is unsustainable for analyzing petabytes of data. The common bottleneck is working with large collections of files. Because these are global files, researchers find themselves moving (or copying) more data than they need for a regional analysis. Web service solutions such as OPeNDAP and THREDDS provide a web service API to work with these data, but their implementations still iterate through large collections of files.

OceanWorks’ analytics engine is called NEXUS.<ref name="HuangEmerging15">{{cite journal |title=Emerging Big Data Technologies for Geoscience - NEXUS: The Deep Data Platform |journal=2016 Federation of Earth Science Information Partners Winter Meeting |author=Huang, T.; Armstrong, E.; Chang, G. et al. |year=2015 |url=http://commons.esipfed.org/node/8810}}</ref> It takes a different approach to storing and analyzing large collections of geospatial, array-based data: it breaks the netCDF/HDF file data into data tiles and stores them in a cloud-scale data management system. Because each data tile has its own geospatial index, a regional subset operation only requires retrieving the relevant tiles into the analytic engine. Our recent benchmark shows that NEXUS can compute an area-averaged time series hundreds of times faster than a traditional file-based approach.<ref name="JacobDesign17">{{cite journal |title=Design Patterns to Achieve 300x Speedup for Oceanographic Analytics in the Cloud |journal=2017 American Geophysical Union Fall Meeting |author=Jacob, J.C.; Greguska III, F.R.; Huang, T. et al. |year=2017 |url=https://ui.adsabs.harvard.edu/abs/2017AGUFMIN23F..06J/abstract}}</ref> The traditional file-based approach typically involves subsetting a large collection of time-based granule files before applying analysis to the subsetted data, and much of its run time is spent on file manipulation.
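To illustrate why a per-tile geospatial index helps, here is a minimal Python sketch. It assumes a simplified, in-memory index in which each daily global grid is cut into fixed 30° × 30° tiles; `TileMeta`, `build_index`, and `select_tiles` are hypothetical names, not part of NEXUS, which keeps its tiles and index in a cloud-scale data store. A regional query only touches tiles whose bounds intersect the query box and time range.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TileMeta:
    """Hypothetical index entry: a tile ID plus its spatio-temporal bounds."""
    tile_id: str
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float
    day: int  # index of the daily granule the tile was cut from


def overlaps(tile, min_lat, max_lat, min_lon, max_lon):
    """True if the tile's box intersects the query box (edges inclusive)."""
    return not (tile.max_lat < min_lat or tile.min_lat > max_lat
                or tile.max_lon < min_lon or tile.min_lon > max_lon)


def build_index(days, tile_deg=30.0):
    """Cut a global grid into tile_deg x tile_deg tiles for every day."""
    n_lat, n_lon = int(180 // tile_deg), int(360 // tile_deg)
    index = []
    for day in days:
        for i in range(n_lat):
            for j in range(n_lon):
                lat0, lon0 = -90 + i * tile_deg, -180 + j * tile_deg
                index.append(TileMeta(f"d{day}_r{i}_c{j}", lat0, lat0 + tile_deg,
                                      lon0, lon0 + tile_deg, day))
    return index


def select_tiles(index, min_lat, max_lat, min_lon, max_lon, start_day, end_day):
    """IDs of the only tiles a regional subset would need to fetch."""
    return [t.tile_id for t in index
            if start_day <= t.day <= end_day
            and overlaps(t, min_lat, max_lat, min_lon, max_lon)]


index = build_index(range(365))
hits = select_tiles(index, 35, 45, -130, -125, 0, 9)
print(f"{len(hits)} of {len(index)} tiles needed")  # prints "10 of 26280 tiles needed"
```

For a small northeast Pacific box over ten days, only 10 of 26,280 tiles qualify. That is the effect the tile-based design exploits: the cost of a subset scales with the region requested rather than with the size of each global file.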

OceanWorks enables advanced analytics that can easily scale to the available computation hardware across the full spectrum, from an ordinary laptop or desktop computer, to a multi-node server-class cluster, to a private or public cloud. The architectural drivers are:

* both REST and Python API interfaces to the analytics;

* in-memory map-reduce style of computation;

* horizontal scaling, such that computational resources can be added or removed on demand;

* rapid access to data tiles that form natural spatio-temporal partition boundaries for parallelization;

* computations performed close to the data store to minimize network traffic; and

* container-based deployment.
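The in-memory map-reduce style of computation can be sketched for the area-averaged time series. This is an assumption-laden illustration, not OceanWorks code: each tile is a hypothetical `(day, values)` pair, the map step reduces a tile to per-day partial sums, and the reduce step merges them. Because the merge is associative, tiles can be processed in any order on any worker, which is what makes tiles natural partition boundaries for horizontal scaling. The average here is unweighted; a real service would also weight cells by area.

```python
import math
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def map_tile(tile):
    """Map: collapse one tile to {day: (sum, count)} over its valid pixels.

    A tile is a hypothetical (day, values) pair; NaN marks missing pixels.
    """
    day, values = tile
    valid = [v for v in values if not math.isnan(v)]
    return {day: (sum(valid), len(valid))}


def merge(a, b):
    """Reduce: add partial sums per day. Associative, so merge order is irrelevant."""
    out = dict(a)
    for day, (s, n) in b.items():
        s0, n0 = out.get(day, (0.0, 0))
        out[day] = (s0 + s, n0 + n)
    return out


def area_averaged_time_series(tiles, workers=4):
    """Unweighted spatial mean per day (no cos(latitude) area weighting)."""
    # Threads stand in for cluster executors; they show the structure,
    # not a real speedup for CPU-bound pure-Python work.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_tile, tiles))
    totals = reduce(merge, partials, {})
    return {day: s / n for day, (s, n) in sorted(totals.items()) if n > 0}


tiles = [(0, [1.0, 2.0]), (0, [3.0, float("nan")]), (1, [4.0, 6.0])]
print(area_averaged_time_series(tiles))  # prints {0: 2.0, 1: 5.0}
```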

The REST and Python APIs enable OceanWorks to be easily plugged into a variety of web-based user interfaces, each tuned to a particular domain. Calls to OceanWorks from Jupyter Notebook enable interactive, cloud-scale, science-grade analytics.
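As a hedged example of calling such a REST interface from Python or a Jupyter Notebook, the sketch below builds a request URL and shows how its JSON reply could be read. The host is a placeholder, and the endpoint path `/timeSeriesSpark` and the parameter names (`ds`, `minLat`, `maxLat`, `minLon`, `maxLon`, `startTime`, `endTime`) are assumptions for illustration; consult the API documentation of an actual OceanWorks/SDAP deployment before relying on them.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Placeholder host; substitute a real deployment's base URL.
BASE_URL = "https://oceanworks.example.org"


def time_series_url(dataset, min_lat, max_lat, min_lon, max_lon, start, end,
                    endpoint="/timeSeriesSpark"):
    """Build a GET URL for an area-averaged time-series request.

    The endpoint path and parameter names are assumptions for illustration.
    """
    params = {
        "ds": dataset,
        "minLat": min_lat, "maxLat": max_lat,
        "minLon": min_lon, "maxLon": max_lon,
        "startTime": start, "endTime": end,
    }
    return f"{BASE_URL}{endpoint}?{urlencode(params)}"


def fetch_json(url, timeout=120):
    """GET the URL and decode the JSON body (needs a reachable server)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


url = time_series_url("EXAMPLE_SST_DATASET", 30, 50, -150, -125,
                      "2014-01-01T00:00:00Z", "2015-12-31T23:59:59Z")
print(url)
```

Keeping the request as a plain URL is what lets any language or web front end act as a client; `fetch_json(url)` would only succeed against a live server.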

Built-in analytics are provided for the following algorithms:

:1. Area-averaged time series to compute statistics (e.g., mean, minimum, maximum, standard deviation) of a single variable or two variables being compared; can optionally apply seasonal or low-pass filters to the result

:2. Time-averaged map to produce a geospatial map that averages gridded measurements over time at each grid coordinate within a user-defined spatio-temporal bounding box

:3. Correlation map to compute the correlation coefficient at each grid coordinate within a user-specified spatio-temporal bounding box for two identically gridded datasets

:4. Climatological map to compute monthly climatology for a user-specified month and year range

:5. Daily difference average to subtract a dataset from its climatology, then, for each timestamp, average the pixel-by-pixel differences within a user-specified spatio-temporal bounding box

:6. ''In situ'' matching to discover ''in situ'' measurements that correspond to a gridded satellite measurement
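Algorithms 4 and 5 can be made concrete with a small pure-Python sketch. It assumes each dataset is a list of `(date, grid)` pairs on one fixed grid, with NaN for missing cells, and it represents the spatial bounding box as row and column index ranges rather than latitude and longitude; the function names are hypothetical. The climatology is a per-cell monthly mean, and the daily difference average subtracts that climatology from each timestamp before averaging over the box.

```python
import math
from datetime import date


def monthly_climatology(series):
    """Per-cell mean for each calendar month over a list of (date, grid) pairs.

    Grids are lists of rows; NaN marks missing cells. Restricting the input
    to a year range is left to the caller.
    """
    sums, counts = {}, {}
    for day, grid in series:
        m = day.month
        if m not in sums:
            sums[m] = [[0.0] * len(row) for row in grid]
            counts[m] = [[0] * len(row) for row in grid]
        for i, row in enumerate(grid):
            for j, v in enumerate(row):
                if not math.isnan(v):
                    sums[m][i][j] += v
                    counts[m][i][j] += 1
    return {m: [[s / c if c else math.nan for s, c in zip(srow, crow)]
                for srow, crow in zip(sums[m], counts[m])]
            for m in sums}


def daily_difference_average(series, clim, rows, cols):
    """For each timestamp, mean of (value - climatology) over rows x cols."""
    out = []
    for day, grid in series:
        ref = clim[day.month]
        diffs = [grid[i][j] - ref[i][j] for i in rows for j in cols
                 if not (math.isnan(grid[i][j]) or math.isnan(ref[i][j]))]
        out.append((day, sum(diffs) / len(diffs) if diffs else math.nan))
    return out


series = [(date(2014, 1, 1), [[1.0, 2.0], [3.0, 4.0]]),
          (date(2015, 1, 1), [[3.0, 4.0], [5.0, 6.0]])]
clim = monthly_climatology(series)
print(clim[1])  # prints [[2.0, 3.0], [4.0, 5.0]]
print([v for _, v in daily_difference_average(series, clim, range(2), range(2))])
# prints [-1.0, 1.0]
```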

Additionally, authenticated or trusted users may inject their own custom algorithm code for execution within OceanWorks. An API is provided to pass the custom code as a single- or multi-line string, or as a Python file or module.
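One way such an injection API could work is sketched below, under stated assumptions: the user code arrives as a string and must define a function named `calc`, and `load_custom_algorithm` and `run_on_tiles` are hypothetical helpers rather than the OceanWorks API. Because `exec` runs arbitrary code with the server's privileges, a capability like this only makes sense for the authenticated or trusted users described above.

```python
def load_custom_algorithm(source, entry_point="calc"):
    """Compile user-supplied code and return its entry-point function.

    Hypothetical convention: the code defines calc(values) -> number.
    exec() runs arbitrary code with the server's privileges, which is why
    this capability is limited to authenticated or trusted users.
    """
    namespace = {}
    exec(compile(source, "<custom-algorithm>", "exec"), namespace)
    func = namespace.get(entry_point)
    if not callable(func):
        raise ValueError(f"custom code must define a callable named {entry_point!r}")
    return func


def run_on_tiles(func, tiles):
    """Apply the user function to each tile's values (the map side of a job)."""
    return [func(values) for values in tiles]


USER_CODE = '''
def calc(values):
    # Example user algorithm: value range within one tile
    return max(values) - min(values)
'''

calc = load_custom_algorithm(USER_CODE)
print(run_on_tiles(calc, [[1, 5, 3], [2, 2]]))  # prints [4, 0]
```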

===''In situ''-to-satellite matching===

Comparison of measurements from different ocean observing systems is a frequently used method to assess the quality and accuracy of measurements. Matching (or collocating) and evaluating ''in situ'' and satellite measurements is particularly valuable because the physical characteristics of the two observing systems are so different, and therefore their errors related to instrumentation and sampling are not entangled with one another. The satellite community tends to use collocated ''in situ'' measurements to develop, improve, calibrate, and validate the integrity of retrieval algorithms (e.g., the 2003 work of Bourassa ''et al.''<ref name="BourassaSeaWinds03">{{cite journal |title=SeaWinds validation with research vessels |journal=Journal of Geophysical Research Oceans |author=Bourassa, M.A.; Legler, D.M.; O'Brien, J.J. et al. |volume=108 |issue=C2 |page=3019 |year=2003 |doi=10.1029/2001JC001028}}</ref>). The ''in situ'' observational community uses collocated satellite data to assess the quality of extreme or suspicious values and to add spatial context to the often sparse point values. In both of these research realms there are many more detailed use cases, e.g., near real-time decision support for field programs, planning exercises for future observing system deployments, and development of integrated ''in situ''-plus-satellite global gridded analysis products that are useful for stand-alone research and for model initialization and boundary conditions.
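A basic collocation test can be sketched as follows. It assumes a satellite measurement matches an ''in situ'' record when both a distance tolerance and a time tolerance are met; the 25 km and 6 hour defaults, the record layout, and the function names are illustrative assumptions, not the settings of the OceanWorks match-up service. A production service would use a spatial index rather than the linear scan shown here.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres on a spherical Earth."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def match_in_situ(sat, records, max_km=25.0, max_dt=timedelta(hours=6)):
    """In situ records within max_km and max_dt of one satellite measurement, nearest first.

    `sat` and each record are hypothetical dicts with 'lat', 'lon', 'time' keys.
    """
    hits = []
    for rec in records:
        if abs(rec["time"] - sat["time"]) > max_dt:
            continue  # cheap temporal test before computing distance
        d = haversine_km(sat["lat"], sat["lon"], rec["lat"], rec["lon"])
        if d <= max_km:
            hits.append((d, rec))
    hits.sort(key=lambda pair: pair[0])
    return [rec for _, rec in hits]


t0 = datetime(2005, 8, 28, 12)
sat = {"lat": 30.0, "lon": -80.0, "time": t0}
records = [
    {"id": "A", "lat": 30.10, "lon": -80.00, "time": t0 + timedelta(hours=2)},   # ~11 km
    {"id": "B", "lat": 31.00, "lon": -80.00, "time": t0},                        # ~111 km
    {"id": "C", "lat": 30.00, "lon": -80.05, "time": t0 + timedelta(hours=10)},  # too late
    {"id": "D", "lat": 30.05, "lon": -80.00, "time": t0 + timedelta(hours=1)},   # ~6 km
]
print([r["id"] for r in match_in_situ(sat, records)])  # prints ['D', 'A']
```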

There are several major data challenges related to successful ''in situ'' and satellite data collocation research. Disparate data volume and variety is the primary challenge. Individual satellite collections are typically large in volume, have relatively homogeneous sampling, are derived from a single platform, are composed of a consistent set of parameters, and are represented as scan lines, swaths, or globally gridded fields. ''In situ'' observations typically bring the variety challenge into the problem. They often involve heterogeneous observing platforms (ships, drifting and stationary buoys, gliders, etc.), instrumentation types and sampling methods, highly varying sampling rates, and sparse spatio-temporal coverage over the global ocean. Another major challenge for collocation-based research is logistical. The archives of ''in situ'' and satellite data are often distributed across different centers, have a variety of access methods that need to be understood and applied, and have different data formats and quality control [[information]]. Additionally, these types of data can grow dynamically over time (as data are added to the time series) or be superseded by completely new versions with critical data quality improvements. The OceanWorks match-up service<ref name="SmithInteg18">{{cite journal |title=Integrating the Distributed Oceanographic Match-Up Service into OceanWorks (OD44A-2773) |journal=2018 Ocean Sciences Meeting |author=Smith, S.R.; Elya, J.L.; Bourassa, M.A. et al. |year=2018 |url=https://agu.confex.com/agu/os18/meetingapp.cgi/Paper/311722}}</ref> resolves these major challenges and many other secondary challenges.

===Quality-screened subsetting===

When working with earth science data and information, whether derived from an ''in situ'' platform or airborne and satellite instruments, users often need to access, understand, and apply data quality information such as quality flags related to instrument and algorithm performance, physical plausibility, or other environmental characteristics or conditions. The ability to screen the physical data records via services that apply standardized sets of quality flags, states, or conditions is imperative to allow scientists to seamlessly use these data to meet their requirements for error and accuracy.

In the oceanographic ''in situ'' realm there are a number of models and conventions in use by the community. The OceanWorks project has chosen the IODE (International Oceanographic Data and Information Exchange) convention<ref name="IOCOcean13">{{cite web |url=https://www.iode.org/index.php?option=com_oe&task=viewDocumentRecord&docID=10762 |title=Ocean Data Standards, Vol.3: Recommendation for a Quality Flag Scheme for the Exchange of Oceanographic and Marine Meteorological Data |work=IOC Manuals and Guides, 54, Vol. 3 |author=Intergovernmental Oceanographic Commission of UNESCO |publisher=UNESCO |date=18 April 2013}}</ref>, an internationally recognized and developed approach to tag ''in situ'' observations using both a primary and secondary level of quality flags. OceanWorks will screen ''in situ'' data using five primary level flags. This approach was chosen because of its simplicity, which allows a direct mapping and transformation of the native quality flags embedded in the source ''in situ'' datasets (e.g., ICOADS<ref name="FreemanICOADS17">{{cite journal |title=ICOADS Release 3.0: a major update to the historical marine climate record |journal=International Journal of Climatology |author=Freeman, E.; Woodruff, S.D.; Worley, S.J. et al. |volume=37 |issue=5 |pages=2211–32 |year=2017 |doi=10.1002/joc.4775}}</ref> and SAMOS) into the IODE scheme.
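The mapping step can be sketched as a lookup table into the five IODE primary flags. The flag values below (1 good, 2 not evaluated, 3 questionable, 4 bad, 9 missing) reflect our reading of the cited IOC recommendation and should be checked against it; the native vocabulary (`ok`, `suspect`, and so on) is entirely hypothetical, since each real source such as ICOADS or SAMOS needs its own table.

```python
from enum import IntEnum


class IODEPrimary(IntEnum):
    """IODE primary-level quality flags, as we read the IOC recommendation."""
    GOOD = 1
    NOT_EVALUATED = 2  # not evaluated, not available, or unknown
    QUESTIONABLE = 3   # questionable/suspect
    BAD = 4
    MISSING = 9


# Hypothetical native vocabulary for an illustrative in situ source;
# real ICOADS or SAMOS flags differ and would each need their own table.
NATIVE_TO_IODE = {
    "ok": IODEPrimary.GOOD,
    "unchecked": IODEPrimary.NOT_EVALUATED,
    "suspect": IODEPrimary.QUESTIONABLE,
    "fail": IODEPrimary.BAD,
    "": IODEPrimary.MISSING,
}


def screen(records, accept=frozenset({IODEPrimary.GOOD})):
    """Keep records whose native flag maps to an accepted IODE primary flag.

    Unrecognized native flags map to NOT_EVALUATED instead of being trusted.
    """
    kept = []
    for rec in records:
        flag = NATIVE_TO_IODE.get(rec["flag"], IODEPrimary.NOT_EVALUATED)
        if flag in accept:
            kept.append({**rec, "iode_flag": int(flag)})
    return kept


records = [
    {"sst": 20.1, "flag": "ok"},
    {"sst": 35.0, "flag": "suspect"},
    {"sst": None, "flag": ""},
    {"sst": 19.8, "flag": "xyz"},  # unknown native flag
]
print([r["sst"] for r in screen(records)])  # prints [20.1]
print([r["sst"] for r in screen(records, {IODEPrimary.GOOD, IODEPrimary.QUESTIONABLE})])
# prints [20.1, 35.0]
```

Mapping every source into one small, shared scheme is what lets a single screening rule ("keep GOOD only") apply uniformly across heterogeneous platforms.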

In the oceanographic satellite realm, a similar need for standardization is exacerbated by the increasingly dense availability of quality information in the form of data accuracy, processing algorithm states and failures, environmental conditions, and auxiliary variables that are packed as conditions into quality variables represented as scalar or bit flags. This level of complexity often makes it difficult and confusing for a science user to understand and apply the proper flags to screen for meaningful physical data. NASA's Virtual Quality Screening Service (VQSS) software project<ref name="ArmstrongAServ16">{{cite journal |title=A service for the application of data quality information to NASA earth science satellite records |journal=2016 American Geophysical Union Fall Meeting |author=Armstrong, E.M.; Xing, Z.; Fry, C. et al. |year=2016 |url=http://adsabs.harvard.edu/abs/2016AGUFMIN53C1905A}}</ref> addressed these issues by implementing a service infrastructure to expose, apply, and extract quality screening information through strategic databases and web services, data discovery, and exposure of granule-based quality information via interactive menus. Fundamentally, VQSS leveraged the Climate and Forecast (CF) metadata conventions applied to the satellite quality variables, which strictly standardize the structure and content of quality information through the attributes <tt>flag_values</tt>, <tt>flag_masks</tt>, and <tt>flag_meanings</tt>. Its web services can seamlessly extract physical information as netCDF and JSON outputs based on screening conditions built from these bit and scalar flags or from auxiliary variables used as data thresholds, among many other use cases. OceanWorks employs this architecture to give users a similar capability to apply the quality information embedded in the gridded and ungridded input satellite data sources for sea surface temperature, ocean color, sea level, wind, and precipitation parameters.
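Decoding CF quality variables can be sketched as below. The three cases follow the CF conventions as we understand them: with only `flag_values`, a condition holds when the value equals the listed value; with only `flag_masks`, when the masked bits are nonzero; and with both, when the masked value equals the listed value. The function names and the `land ice cloud` example attributes are hypothetical, and a real service would read these attributes from the netCDF variable.

```python
def decode_cf_flags(value, flag_meanings, flag_values=None, flag_masks=None):
    """Return the set of flag meanings that are true for one integer flag value.

    values only -> exact match; masks only -> (value & mask) != 0;
    both -> (value & mask) == flag_value.
    """
    meanings = flag_meanings.split()
    if flag_masks is not None and flag_values is not None:
        tests = [(value & m) == v for m, v in zip(flag_masks, flag_values)]
    elif flag_masks is not None:
        tests = [(value & m) != 0 for m in flag_masks]
    elif flag_values is not None:
        tests = [value == v for v in flag_values]
    else:
        raise ValueError("need flag_values and/or flag_masks")
    return {name for name, ok in zip(meanings, tests) if ok}


def screen_pixels(data, flags, reject, **cf_attrs):
    """Replace data with None wherever any rejected condition is set."""
    return [None if decode_cf_flags(f, **cf_attrs) & reject else d
            for d, f in zip(data, flags)]


attrs = {"flag_masks": [1, 2, 4], "flag_meanings": "land ice cloud"}
print(sorted(decode_cf_flags(5, **attrs)))  # prints ['cloud', 'land']
print(screen_pixels([20.0, 21.0, 22.0], [0, 4, 2], {"cloud"}, **attrs))
# prints [20.0, None, 22.0]
```

Because the meanings are standardized names, a user can ask to reject "cloud" without knowing which bit encodes it in a particular product.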

===Search relevancy and discovery===

Retrieving appropriate datasets is the prerequisite for data analysis; however, as our archives grow faster than ever, researchers and developers face a great challenge in efficiently identifying the desired dataset(s). The PO.DAAC supplies the earth science community with more than 600 unique, publicly accessible datasets collected by satellites and other missions. Although the PO.DAAC portal provides a valuable free-text keyword search service to facilitate the searching process, it still has significant limitations. First, the default keyword-based search method, which is popular in geospatial portals, does not take the semantic meaning of the query into account. For example, the search engine may fail to retrieve metadata containing only “SLP” for a query of “sea level pressure.” Second, the default ranking algorithm in most geospatial portals uses only single attributes such as spatial resolution, processing level, and monthly popularity, rather than the multidimensional preferences that should be considered in the ranking process. Third, the PO.DAAC portal has an unsatisfactory implementation of data relevancy, with useful datasets often buried in the search results list or missing from it entirely. Improvements to data relevancy would provide immediate improvements in the user search experience and results.<ref name="JiangASmart18">{{cite journal |title=A Smart Web-Based Geospatial Data Discovery System with Oceanographic Data as an Example |journal=International Journal of Geo-Information |author=Jiang, Y.; Li, Y.; Yang, C. et al. |volume=7 |issue=2 |page=62 |year=2018 |doi=10.3390/ijgi7020062}}</ref>

OceanWorks is equipped with a data discovery engine containing a profile analyzer<ref name="JiangAComp17">{{cite journal |title=A comprehensive methodology for discovering semantic relationships among geospatial vocabularies using oceanographic data discovery as an example |journal=International Journal of Geographical Information Science |author=Jiang, Y.; Li, Y.; Yang, C. et al. |volume=31 |issue=11 |pages=2310–28 |year=2017 |doi=10.1080/13658816.2017.1357819}}</ref>, a knowledge base, and a smart engine. Raw web usage logs are collected from multiple servers and grouped into sessions by the profile analyzer. Reconstructed sessions, in addition to [[metadata]], are valuable sources for learning vocabulary linkages.<ref name="JiangAComp17" /> A RankSVM model<ref name="JoachimsOptim02">{{cite journal |title=Optimizing search engines using clickthrough data |journal=Proceedings of the Eighth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining |author=Joachims, T. |pages=133–42 |year=2002 |doi=10.1145/775047.775067}}</ref> is trained on a few predefined ranking features, with optimal ranking lists provided by domain experts, with the aim of increasing the rank of data more relevant to the query.<ref name="JiangASmart18" /> A recommender calculates the relevancy between metadata records using their attributes and the usage logs. A knowledge base is populated to store information such as domain term linkages and metadata relevancy, as well as pretrained models for ranking and recommendation. When a user enters a query in the search box, highly related terms are extracted from the knowledge base to expand the original query, and the search engine retrieves data using the rewritten query instead of the input query, resulting in a higher recall score. The retrieved datasets are not displayed to the user directly but are first reranked by the pretrained model to achieve a better ranking list. If the user chooses to view a metadata record, the recommender retrieves a list of datasets related to the one being viewed, helping the user efficiently find additional resources.<ref name="JiangTowards18">{{cite journal |title=Towards intelligent geospatial data discovery: A machine learning framework for search ranking |journal=International Journal of Digital Earth |author=Jiang, Y.; Li, Y.; Yang, C. et al. |volume=11 |issue=9 |pages=956–71 |year=2018 |doi=10.1080/17538947.2017.1371255}}</ref> In summary, this workflow allows the data consumer to acquire a dataset efficiently and accurately using advanced machine learning methods.
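The query-expansion and reranking steps can be sketched in a few lines. Everything here is a hypothetical stand-in: `TERM_LINKS` plays the role of the knowledge base, retrieval is naive substring matching, and the reranker uses a fixed linear score, which is the form of model a linear RankSVM produces after training on expert rankings (training is not shown). The example reproduces the “SLP” case described above: the expanded query recalls a dataset the literal query misses.

```python
# Hypothetical knowledge-base entries: term -> {linked term: similarity}.
TERM_LINKS = {
    "sea level pressure": {"slp": 0.9, "atmospheric pressure": 0.6},
    "sea surface temperature": {"sst": 0.95},
}


def expand_query(query, links=TERM_LINKS, min_sim=0.5):
    """Append linked terms whose similarity is at least min_sim."""
    q = query.lower()
    return [q] + [t for t, s in links.get(q, {}).items() if s >= min_sim]


def retrieve(terms, catalog):
    """Naive keyword retrieval: match if any term occurs in the metadata text."""
    return [d for d in catalog if any(t in d["text"].lower() for t in terms)]


def rerank(datasets, weights):
    """Sort by the linear score w . x, the form of model a linear RankSVM learns.

    The weights are made up; a trained model would supply them.
    """
    def score(d):
        return sum(w * d["features"][name] for name, w in weights.items())
    return sorted(datasets, key=score, reverse=True)


catalog = [
    {"name": "A", "text": "Daily SLP fields from reanalysis",
     "features": {"popularity": 0.2, "term_overlap": 1.0}},
    {"name": "B", "text": "Sea level pressure analysis",
     "features": {"popularity": 0.9, "term_overlap": 1.0}},
    {"name": "C", "text": "Sea surface temperature L4",
     "features": {"popularity": 0.8, "term_overlap": 0.0}},
]
terms = expand_query("Sea Level Pressure")
print([d["name"] for d in retrieve(["sea level pressure"], catalog)])  # prints ['B']
hits = retrieve(terms, catalog)
print([d["name"] for d in rerank(hits, {"popularity": 1.0, "term_overlap": 0.5})])
# prints ['B', 'A']
```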

==Applications and infusion==

OceanWorks has been deployed for use by a number of NASA projects. These include the NASA Sea Level Change Portal (SLCP) and the GRACE Science Portal, and work is currently underway to integrate it with the State of the Ocean (SOTO) tool as part of the NASA PO.DAAC. Each project has slightly different needs, but all of them are able to use OceanWorks to fulfill their requirements.

The NASA SLCP contains a wealth of information about how the Earth’s sea level is changing. It acts as a one-stop shop for everything from news articles to data analysis. OceanWorks has been deployed as the engine behind the data analysis tool integrated with the portal. The data analysis tool focuses on providing fast and easy-to-use data analysis on a curated list of datasets that are important to understanding sea level change. Because OceanWorks can be deployed in many configurations depending on project requirements, it was a perfect fit for providing the data analysis capabilities required by SLCP. In this case, only a single instance of OceanWorks was required to power the analysis because the datasets being analyzed are limited in resolution and frequency. This allows for real-time interactive analysis through the JavaScript front end.

Similar to the NASA SLCP, the GRACE Science Portal has limited requirements with respect to the amount of data that needs to be analyzed. However, this project required deployment to a public cloud infrastructure with different network security constraints. So, while the user interface and data are similar in nature, the back-end server is hosted on Amazon Web Services (AWS). This implementation is possible because OceanWorks provides the flexibility to be deployed on a laptop, a single server, a bare metal cluster, or a public cloud.

The NASA PO.DAAC deployment has different requirements from both SLCP and GRACE. The datasets hosted by PO.DAAC are very large and cover a wide time period. To provide analysis capabilities for these larger datasets, more than one server is needed. OceanWorks was built for this situation and can use Apache Spark to scale horizontally, spreading the compute requirements across a cluster of machines. With this cluster setup, OceanWorks is able to handle the analysis of larger, denser datasets. (See the supplementary material at the end for examples of this.)

The multiple deployments of OceanWorks have demonstrated that it is capable of handling a wide range of requirements and deployment scenarios. From single-node to multi-node, on-premises to cloud, and small data to big data, the flexibility and power of OceanWorks permit diverse implementations.

==Challenges and outlook==

The Apache Science Data Analytics Platform (SDAP) is the open-source implementation of OceanWorks. The project team recognizes it will take years of collaborative effort to create a big data solution that satisfies the needs of various science disciplines. However, OceanWorks has demonstrated how to create a community-driven technology through a well-managed open-source development process. Unlike many emerging earth science big data solutions, SDAP is designed as a platform with a simple RESTful API that supports clients developed in any programming language. This facade-based architectural approach enables SDAP to continue to evolve and to leverage new open-source big data technologies. OceanWorks addresses only some of the ocean science needs, and continued contributions from our community are needed to evolve this open-source technology.

The project team would like the community to develop and infuse a common, open-source ocean analytic engine next to our distributed archives of ocean artifacts. Researchers or tool developers could then interact with any of these analytics services, managed by the data centers, without having to move massive amounts of data over the internet.

==Supplementary material==

The supplementary material for this article can be found online at the [https://www.frontiersin.org/articles/10.3389/fmars.2019.00354/full#supplementary-material original journal's website].

==Acknowledgements==

This study was carried out at the Jet Propulsion Laboratory, California Institute of Technology, in collaboration with the Center for Ocean-Atmospheric Prediction Studies (COAPS) at the Florida State University, National Center for Atmospheric Research (NCAR), and the George Mason University (GMU), under a contract with the National Aeronautics and Space Administration. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise, does not constitute or imply its endorsement by the United States Government or the Jet Propulsion Laboratory, California Institute of Technology.

===Author contributions===

All authors who contributed to this community paper are members of the Apache open-source NASA AIST OceanWorks project, called the Science Data Analytics Platform (SDAP).

===Conflict of interest===

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

==References==

Latest revision as of 21:59, 9 September 2019

| Full article title | An integrated data analytics platform |
|---|---|
| Journal | Frontiers in Marine Science |
| Author(s) | Armstrong, Edward M.; Bourassa, Mark A.; Cram, Thomas A.; DeBellis, Maya; Elya, Jocelyn; Greguska III, Frank R.; Huang, Thomas; Jacob, Joseph C.; Ji, Zaihua; Jiang, Yongyao; Li, Yun; Quach, Nga; McGibbney, Lewis; Smith, Shawn; Tsontos, Vardis M.; Wilson, Brian; Worley, Steven J.; Yang, Chaowei; Yam, Elizabeth |
| Author affiliation(s) | NASA Jet Propulsion Laboratory, Center for Ocean-Atmospheric Prediction Studies, National Center for Atmospheric Research, George Mason University |
| Primary contact | Email: thomas dot huang at jpl dot nasa dot gov |
| Editors | Chiba, Sanae |
| Year published | 2019 |
| Volume and issue | 6 |
| Page(s) | 354 |
| DOI | 10.3389/fmars.2019.00354 |
| ISSN | 2296-7745 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.frontiersin.org/articles/10.3389/fmars.2019.00354/full |
| Download | https://www.frontiersin.org/articles/10.3389/fmars.2019.00354/pdf (PDF) |

Abstract

A scientific integrated data analytics platform (IDAP) is an environment that enables the confluence of resources for scientific investigation. It harmonizes data, tools, and computational resources to enable the research community to focus on the investigation rather than spending time on security, data preparation, management, etc. OceanWorks is a National Aeronautics and Space Administration (NASA) technology integration project to establish a cloud-based integrated ocean science data analytics platform for managing ocean science research data at NASA’s Physical Oceanography Distributed Active Archive Center (PO.DAAC). The platform focuses on advancement and maturity by bringing together several NASA open-source, big data projects for parallel analytics, anomaly detection, in situ-to-satellite data matching, quality-screened data subsetting, search relevancy, and data discovery. Our communities are relying on data available through distributed data centers to conduct their research. In typical investigations, scientists would (1) search for data, (2) evaluate the relevance of that data, (3) download it, and (4) then apply algorithms to identify trends, anomalies, or other attributes of the data. Such a workflow cannot scale if the research involves a massive amount of data or multi-variate measurements. With the upcoming NASA Surface Water and Ocean Topography (SWOT) mission expected to produce over 20 petabytes (PB) of observational data during its three-year nominal mission, the volume of data will challenge all existing earth science data archival, distribution, and analysis paradigms. This paper discusses how OceanWorks enhances the analysis of physical ocean data where the computation is done on an elastic cloud platform next to the archive to deliver fast, web-accessible services for working with oceanographic measurements.

Keywords: big data, cloud computing, ocean science, data analysis, matchup, anomaly detection, open source

Introduction

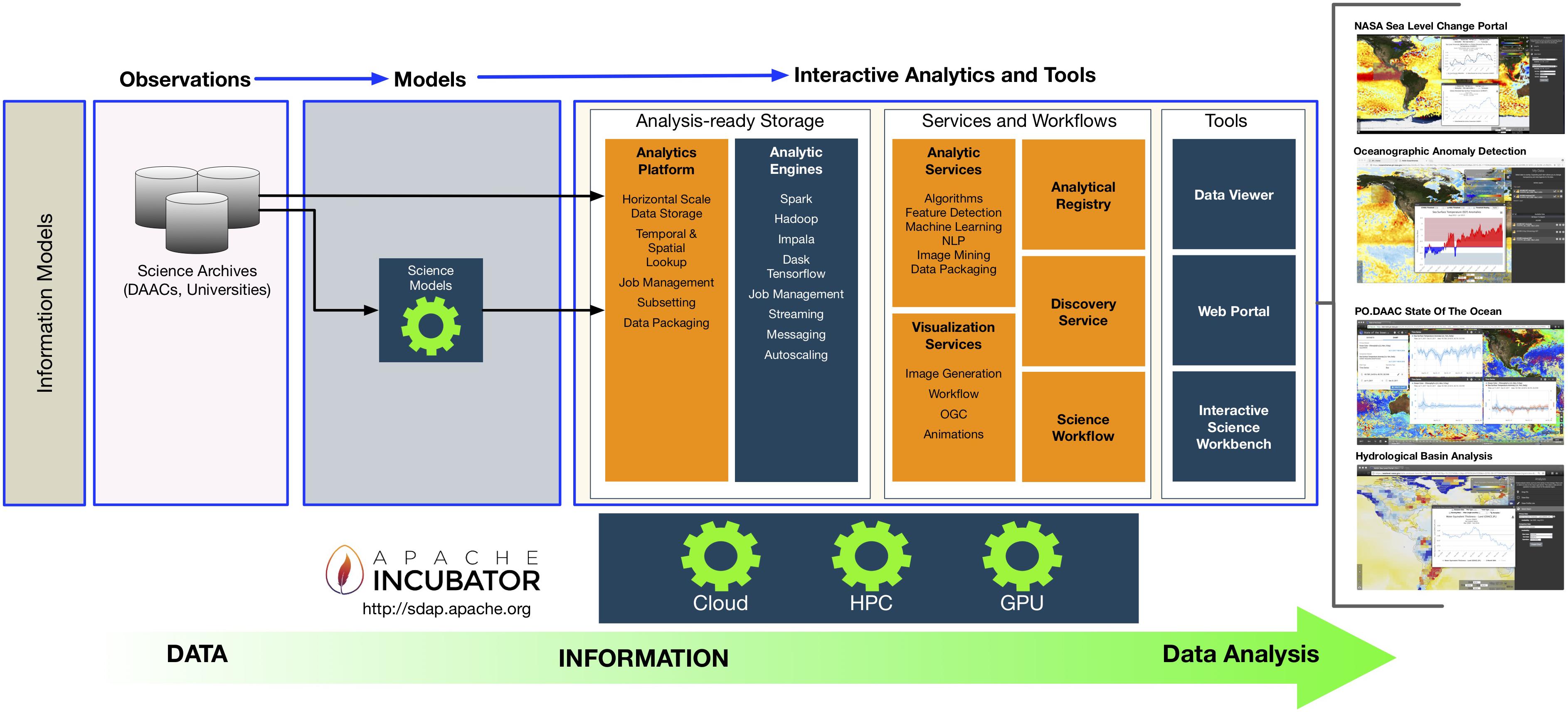

With increasing global temperatures, warming of the ocean, and melting of ice sheets and glaciers, numerous impacts can be observed. These range from anomalous ocean temperature and circulation patterns, more frequent extreme weather events, and more intense tropical cyclones to sea level rise and storm surge affecting coastlines, and with them come drastic changes and shifts in marine ecosystems. To date, investigating these phenomena requires researchers to work with a disjointed collection of tools for search, reprojection, visualization, subsetting, and statistical analysis. Researchers find themselves having to convert nomenclature between these tools, including something as mundane as dataset names and the representation of geospatial coordinates. At times they must also transform the data into a more common representation in order to correlate measurements collected by different instruments. To solve this disjointed data research problem, the concept of an integrated data analytics platform (IDAP) (Figure 1) may help tackle these data wrangling, management, and analysis challenges so researchers can focus on their investigation.


In recent years, NASA’s Advanced Information Systems Technology (AIST) and Advancing Collaborative Connections for Earth System Science (ACCESS) programs have invested in developing new technologies targeting big ocean data on cloud computing platforms. Their goal is to address some of the challenges of managing oceanographic big data by leveraging modern computing infrastructure and horizontally scalable software methods. Rather than developing a single ocean data analysis application, we have developed a data service platform to enable many analytic applications and lay the foundation for community-driven oceanography research.

OceanWorks[1] is a NASA AIST project to mature NASA’s recent investments through integrated technologies and to provide the oceanographic community with a range of useful and advanced data manipulation and analytics capabilities. As an IDAP, OceanWorks harmonizes data, tools, and computational resources to enable oceanographers to focus on the investigation rather than spending time on security, data preparation, management, etc. Oceanographers have become increasingly frustrated with the growing number of research tool silos and their lack of coherence. A user might use one tool to search datasets and then have to manually translate the dataset name, time, and spatial extents to satisfy the nomenclature of yet another tool (e.g., a subsetting tool). To address this frustration, OceanWorks was developed to implement an IDAP for oceanographers. This platform is designed to be extensible and to promote community contribution by providing an integrated collection of features, including:

- data analysis;

- data-intensive anomaly detection;

- distributed in situ-to-satellite data matching;

- search relevancy;

- quality-screened data subsetting; and

- upload-and-execute custom parallel analytic algorithms.

In 2017 the OceanWorks project team donated all of the project’s source code to the Apache Software Foundation and established the official Science Data Analytics Platform (SDAP) project for community-driven development of the cloud-based data access and analysis platform. OceanWorks remains in active development today, now through the open-source paradigm.

OceanWorks components

OceanWorks is an orchestration of several NASA big data technologies as a coherent web service platform. Rather than focusing on one science application, this web service platform enables various types of applications. Figure 2 shows how OceanWorks can facilitate on-the-fly analysis of Hurricane Katrina[2] and how Jupyter Notebook can interact with OceanWorks to analyze the "Blob" in the northeast Pacific.[3] This section discusses some of the key components of OceanWorks.


Data analytics

We have been developing analytics solutions around common file packaging standards such as netCDF and HDF. We evangelize for the Climate and Forecast (CF) metadata convention and the Attribute Convention for Dataset Discovery (ACDD) to promote interoperability and improve our searches. Yet there has been very little progress in tackling our current big data analytic challenges. These include working with petabyte-scale data and quickly looking up the most relevant data for a given research question. Subsetting and analyzing one daily global observational file at a time is the most straightforward method, but it is unsustainable for petabytes of data. The common bottleneck is working with large collections of files. Because these are global files, researchers find themselves moving (or copying) more data than they need for a regional analysis. Web service solutions such as OPeNDAP and THREDDS provide an API for working with these data, but their implementations still iterate through large collections of files.

OceanWorks’ analytics engine is called NEXUS.[4] It takes a different approach to storing and analyzing large collections of geospatial, array-based data: it breaks the netCDF/HDF file data into data tiles and stores them in a cloud-scale data management system. Because each data tile has its own geospatial index, a regional subset operation only requires retrieving the relevant tiles into the analytic engine. Our recent benchmark shows NEXUS can compute an area-averaged time series hundreds of times faster than a traditional file-based approach.[5] The traditional file-based approach typically involves subsetting a large collection of time-based granule files before applying analysis to the subsetted data, and much of its run time is spent on file manipulation.
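The tile idea can be illustrated with a minimal sketch, assuming a single global grid held in memory as nested lists. `Tile`, `make_tiles`, and `regional_subset` are hypothetical names and not NEXUS code. The linear filter over tile bounding boxes stands in for the geospatial index query that a real tile store would run.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

BBox = Tuple[float, float, float, float]  # (min_lat, max_lat, min_lon, max_lon)


@dataclass
class Tile:
    """One rectangular chunk of a gridded variable at a single timestamp (hypothetical layout)."""
    time: int
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float
    values: List[List[float]]


def make_tiles(grid: List[List[float]], lats: Sequence[float], lons: Sequence[float],
               time: int, tile_size: int) -> List[Tile]:
    """Split one 2-D grid (rows = latitudes, columns = longitudes) into tiles with bounding boxes."""
    tiles = []
    for r in range(0, len(lats), tile_size):
        for c in range(0, len(lons), tile_size):
            tile_lats = lats[r:r + tile_size]
            tile_lons = lons[c:c + tile_size]
            block = [row[c:c + tile_size] for row in grid[r:r + tile_size]]
            tiles.append(Tile(time, min(tile_lats), max(tile_lats),
                              min(tile_lons), max(tile_lons), block))
    return tiles


def intersects(tile: Tile, bbox: BBox) -> bool:
    """True if the tile's bounding box overlaps the query box (ignores wrap-around at 180 degrees)."""
    min_lat, max_lat, min_lon, max_lon = bbox
    return not (tile.max_lat < min_lat or tile.min_lat > max_lat or
                tile.max_lon < min_lon or tile.min_lon > max_lon)


def regional_subset(tiles: List[Tile], bbox: BBox, start: int, end: int) -> List[Tile]:
    """Keep only tiles inside the time range whose bounding boxes overlap bbox."""
    return [t for t in tiles if start <= t.time <= end and intersects(t, bbox)]
```

Consider a 4 x 4 grid split into 2 x 2 tiles. A regional query touches only the one tile whose bounding box overlaps the request. At archive scale, the same pruning is what would let a tile store skip most of the data instead of reading whole global files.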

OceanWorks enables advanced analytics that can easily scale across the full spectrum of available computing hardware: an ordinary laptop or desktop computer, a multi-node, server-class cluster, or a private or public cloud. The architectural drivers are:

- both REST and Python API interfaces to the analytics;

- in-memory map-reduce style of computation;

- horizontal scaling, such that computational resources can be added or removed on demand;

- rapid access to data tiles that form natural spatio-temporal partition boundaries for parallelization;

- computations performed close to the data store to minimize network traffic; and

- container-based deployment.
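An area-averaged time series fits the in-memory map-reduce style listed above. Each tile is summarized independently (map), and the summaries are merged per timestamp (reduce). The sketch below uses plain Python in place of a cluster framework such as Apache Spark, and the tile layout and function names are assumptions. The mean is not weighted by grid-cell area, whereas a production implementation might weight by latitude.

```python
import math
from collections import defaultdict
from functools import reduce
from typing import Dict, Iterable, List, Tuple

# Partial statistics for one tile: (count, sum, sum of squares, min, max).
Partial = Tuple[int, float, float, float, float]
EMPTY: Partial = (0, 0.0, 0.0, math.inf, -math.inf)


def map_tile(values: Iterable[float]) -> Partial:
    """Map step: summarize one tile's valid (non-NaN) values."""
    valid = [v for v in values if not math.isnan(v)]
    if not valid:
        return EMPTY
    return (len(valid), sum(valid), sum(v * v for v in valid), min(valid), max(valid))


def combine(a: Partial, b: Partial) -> Partial:
    """Reduce step: merge two partials (associative and commutative)."""
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2], min(a[3], b[3]), max(a[4], b[4]))


def area_averaged_time_series(tiles: List[Tuple[int, List[float]]]) -> Dict[int, Dict[str, float]]:
    """Map every (time, values) tile, reduce per timestamp, then finalize the statistics."""
    partials: Dict[int, List[Partial]] = defaultdict(list)
    for time, values in tiles:
        partials[time].append(map_tile(values))
    series = {}
    for time in sorted(partials):
        n, total, total_sq, lo, hi = reduce(combine, partials[time], EMPTY)
        if n == 0:
            continue  # every value at this timestamp was missing
        mean = total / n
        variance = max(total_sq / n - mean * mean, 0.0)  # population variance
        series[time] = {"mean": mean, "min": lo, "max": hi,
                        "std": math.sqrt(variance), "count": n}
    return series
```

Because `combine` is associative and commutative, partial results can be merged in any order. That property is what lets the map step run wherever the tiles live. Computing the variance from a sum of squares is simple but can lose precision for large values, and a streaming scheme such as Welford's algorithm would be more robust.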

The REST and Python APIs enable OceanWorks to be easily plugged into a variety of web-based user interfaces, each tuned to a particular domain. Calling OceanWorks from Jupyter Notebook enables interactive, cloud-scale, science-grade analytics.
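A REST call is an ordinary HTTP request, so a client can be written in any language. The sketch below only builds a query URL. The host, the endpoint name `timeSeriesSpark`, and the parameter names `ds`, `b`, `startTime`, and `endTime` are assumptions for illustration and should be checked against the API documentation of an actual deployment.

```python
from urllib.parse import urlencode

# Hypothetical deployment address; substitute the URL of a real OceanWorks/SDAP instance.
BASE_URL = "http://localhost:8083"


def time_series_url(dataset: str, bbox: tuple, start: str, end: str,
                    endpoint: str = "timeSeriesSpark") -> str:
    """Build a GET URL for an area-averaged time series request.

    bbox is (min_lon, min_lat, max_lon, max_lat). The endpoint and parameter
    names are illustrative assumptions, not a confirmed API.
    """
    min_lon, min_lat, max_lon, max_lat = bbox
    params = {
        "ds": dataset,
        "b": f"{min_lon},{min_lat},{max_lon},{max_lat}",
        "startTime": start,
        "endTime": end,
    }
    # urlencode percent-encodes commas and colons in the values.
    return f"{BASE_URL}/{endpoint}?{urlencode(params)}"
```

Assuming the service returns JSON, the URL could then be fetched with `urllib.request.urlopen` and the body parsed with `json.load`. The response layout depends on the deployment.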

Built-in analytics are provided for the following algorithms:

1. Area-averaged time series to compute statistics (e.g., mean, minimum, maximum, standard deviation) of a single variable or two variables being compared; can optionally apply seasonal or low-pass filters to the result

2. Time-averaged map to produce a geospatial map that averages gridded measurements over time at each grid coordinate within a user-defined spatio-temporal bounding box

3. Correlation map to compute the correlation coefficient at each grid coordinate within a user-specified spatio-temporal bounding box for two identically gridded datasets

4. Climatological map to compute monthly climatology for a user-specified month and year range

5. Daily difference average to subtract a dataset from its climatology, then, for each timestamp, average the pixel-by-pixel differences within a user-specified spatio-temporal bounding box

6. In situ matching to discover in situ measurements that correspond to a gridded satellite measurement
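Two of the built-in algorithms, the time-averaged map and the correlation map, reduce to per-cell operations over a time stack of identically gridded fields. This is a minimal sketch in plain Python and not the NEXUS implementation. It assumes the spatio-temporal subset has already been retrieved, that grids are nested lists indexed `[lat][lon]`, and that `math.nan` marks missing values.

```python
import math
from typing import List

Grid = List[List[float]]  # [lat][lon]; math.nan marks missing cells


def time_averaged_map(stack: List[Grid]) -> Grid:
    """Average each grid cell over time, skipping missing values."""
    rows, cols = len(stack[0]), len(stack[0][0])
    out = [[math.nan] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            vals = [g[i][j] for g in stack if not math.isnan(g[i][j])]
            if vals:
                out[i][j] = sum(vals) / len(vals)
    return out


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient; NaN when it is undefined."""
    n = len(xs)
    if n < 2:
        return math.nan
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return math.nan
    return sxy / math.sqrt(sxx * syy)


def correlation_map(a: List[Grid], b: List[Grid]) -> Grid:
    """Per-cell correlation over time for two identically gridded time stacks."""
    rows, cols = len(a[0]), len(a[0][0])
    out = [[math.nan] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # Use only timestamps where both datasets have a valid value.
            pairs = [(ga[i][j], gb[i][j]) for ga, gb in zip(a, b)
                     if not (math.isnan(ga[i][j]) or math.isnan(gb[i][j]))]
            if pairs:
                xs, ys = zip(*pairs)
                out[i][j] = pearson(list(xs), list(ys))
    return out
```

Some cells have fewer than two valid pairs or no variance in either series. The correlation coefficient is undefined there, so those cells are left as NaN.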

Additionally, authenticated or trusted users may inject their own custom algorithm code for execution within OceanWorks. An API is provided to pass the custom code as a single-line or multi-line string or as a Python file or module.
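The text does not describe how submitted code is executed. One way a Python service could accept an algorithm passed as a string is to compile it into a fresh module and look up an agreed entry point, as sketched here. The entry-point name `compute` and the `load_custom_algorithm` helper are hypothetical. `exec` runs arbitrary code and provides no sandboxing, which is why such a feature would be restricted to authenticated or trusted users.

```python
from types import ModuleType
from typing import Callable

# Hypothetical entry-point name that submitted code is assumed to define.
ENTRY_POINT = "compute"


def load_custom_algorithm(source: str, name: str = "user_algorithm") -> Callable:
    """Compile user-supplied source into a fresh module and return its entry point.

    exec() runs arbitrary code; this is only acceptable for trusted users
    and is not a security sandbox.
    """
    module = ModuleType(name)
    code = compile(source, filename=f"<{name}>", mode="exec")
    exec(code, module.__dict__)
    func = getattr(module, ENTRY_POINT, None)
    if not callable(func):
        raise ValueError(f"custom code must define a callable named {ENTRY_POINT!r}")
    return func
```

A single-line submission such as `compute = lambda values: sum(values)` works the same way as a multi-line function definition.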

In situ-to-satellite matching

Comparing measurements from different ocean observing systems is a frequently used method to assess their quality and accuracy. Matching (collocating) and evaluating in situ and satellite measurements is particularly valuable because the physical characteristics of the two observing systems are so different. As a result, their instrumentation and sampling errors are largely independent of each other. The satellite community tends to use collocated in situ measurements to develop, improve, calibrate, and validate the integrity of retrieval algorithms (e.g., the 2003 work of Bourassa et al.[6]). The in situ observational community uses collocated satellite data to assess the quality of extreme or suspicious values and to add spatial context to often sparse point values. Both research realms have many more detailed use cases. Examples include near real-time decision support for field programs and planning exercises for future observing system deployments. Another is the development of integrated in situ-plus-satellite global gridded analysis products, which are useful for stand-alone research and for model initialization and boundary conditions.

There are several major data challenges to successful in situ and satellite data collocation research. The primary challenge is disparate data volume and variety. Individual satellite collections are typically large in volume, have relatively homogeneous sampling, are derived from a single platform, are composed of a consistent set of parameters, and are represented as scan lines, swaths, or globally gridded fields. In situ observations typically bring the variety challenge into the problem. They often involve heterogeneous observing platforms (ships, drifting and stationary buoys, gliders, etc.), instrumentation types, and sampling methods. They also have highly varying sampling rates and sparse spatio-temporal coverage over the global ocean. Another major challenge for collocation-based research is logistical. The in situ and satellite archives are often distributed across different centers, and each has its own access methods that need to be understood and applied. They also use different data formats and quality control information. Additionally, these types of data can be dynamically extended over time (adding data to the time series) or be superseded by completely new versions with critical data quality improvements. The OceanWorks match-up service[7] resolves these major challenges and many other secondary challenges.
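At its core, a match-up pairs each in situ observation with satellite measurements that fall inside a user-chosen space and time window. The brute-force sketch below uses the haversine great-circle distance. The `Obs` record, the tolerances, and the function names are assumptions. This is not the OceanWorks match-up service, which must also handle distributed archives, formats, and quality flags.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

EARTH_RADIUS_KM = 6371.0


@dataclass
class Obs:
    time: float   # seconds since an arbitrary epoch
    lat: float
    lon: float
    value: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def match_up(in_situ: List[Obs], satellite: List[Obs],
             radius_km: float, time_tol_s: float) -> List[Tuple[Obs, List[Obs]]]:
    """For each in situ point, list satellite values inside the window, nearest first."""
    results = []
    for p in in_situ:
        hits = []
        for s in satellite:
            if abs(s.time - p.time) > time_tol_s:
                continue  # outside the time window
            d = haversine_km(p.lat, p.lon, s.lat, s.lon)
            if d <= radius_km:
                hits.append((d, s))
        hits.sort(key=lambda pair: pair[0])  # sort by distance only
        results.append((p, [s for _, s in hits]))
    return results
```

The nested loop is O(N x M). A production service would likely prune candidates with a spatial and temporal index before computing distances.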

Quality-screened subsetting

When working with earth science data and information, whether derived from an in situ platform or airborne and satellite instruments, users often need to access, understand, and apply data quality information such as quality flags related to instrument and algorithm performance, physical plausibility, or other environmental characteristics or conditions. The ability to screen the physical data records via services that apply standardized sets of quality flags, states, or conditions is imperative to allow scientists to seamlessly use these data to meet their requirements for error and accuracy.

In the oceanographic in situ realm there are a number of models and conventions in use by the community. The OceanWorks project has chosen the IODE (International Oceanographic Data and Information Exchange) convention[8], an internationally recognized and developed approach to tag in situ observations using both a primary and secondary level of quality flags. OceanWorks will screen in situ data using five primary level flags. This approach was chosen because of its simplicity, which allows a direct mapping and transformation of the native quality flags embedded in the source in situ datasets (e.g., ICOADS[9] and SAMOS) into the IODE scheme.
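Screening with the IODE scheme amounts to two steps. Each native flag is translated into one of the primary-level flags (1 good, 2 not evaluated, 3 questionable, 4 bad, 9 missing), and only records whose flags are accepted are kept. The flag numbers follow the IOC recommendation cited above. The native codes in `NATIVE_TO_IODE` are invented for illustration and do not reflect ICOADS or SAMOS, whose own documentation defines their flags.

```python
from enum import IntEnum
from typing import Dict, FrozenSet, Iterable, List, Tuple


class IodeFlag(IntEnum):
    """IODE primary-level quality flags (IOC Manuals and Guides 54, Vol. 3)."""
    GOOD = 1
    NOT_EVALUATED = 2   # not evaluated, not available, or unknown
    QUESTIONABLE = 3    # questionable/suspect
    BAD = 4
    MISSING = 9


# Hypothetical native flag codes for an imaginary source dataset.
NATIVE_TO_IODE: Dict[str, IodeFlag] = {
    "ok": IodeFlag.GOOD,
    "unchecked": IodeFlag.NOT_EVALUATED,
    "suspect": IodeFlag.QUESTIONABLE,
    "fail": IodeFlag.BAD,
    "none": IodeFlag.MISSING,
}


def screen(records: Iterable[Tuple[float, str]],
           accept: FrozenSet[IodeFlag] = frozenset({IodeFlag.GOOD})) -> List[float]:
    """Translate native flags to IODE and keep values whose flag is accepted.

    Unknown native codes are treated as NOT_EVALUATED (a design assumption).
    """
    kept = []
    for value, native in records:
        flag = NATIVE_TO_IODE.get(native, IodeFlag.NOT_EVALUATED)
        if flag in accept:
            kept.append(value)
    return kept
```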

In the oceanographic satellite realm, a similar need for standardization is exacerbated by the increasingly dense quality information available. This includes data accuracy, processing algorithm states and failures, environmental conditions, and auxiliary variables, all packed as conditions into quality variables represented as scalar or bit flags. This complexity often makes it difficult and confusing for a science user to understand and apply the proper flags to screen for meaningful physical data. NASA's Virtual Quality Screening Service (VQSS) software project[10] addressed these issues by implementing a service infrastructure to expose, apply, and extract quality screening information. It did so through implementations of strategic databases and web services, data discovery, and exposure of granule-based quality information via interactive menus. Fundamentally, VQSS leveraged the Climate and Forecast (CF) metadata conventions applied to satellite quality variables. These conventions strictly standardize the structure and content of quality information through the attributes flag_values, flag_masks, and flag_meanings. The web services employed can seamlessly extract physical information as netCDF and JSON outputs based on screening conditions. These conditions can use bit flag and scalar values, auxiliary variables for data thresholds, and many other use cases. OceanWorks employs this architecture to give users a similar capability to apply the quality information embedded in gridded and ungridded satellite data sources for sea surface temperature, ocean color, sea level, wind, and precipitation parameters.
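The CF attributes named above define how to read a quality variable. `flag_meanings` lists space-separated names, `flag_masks` gives bit masks, and `flag_values` gives the values those bits must take. The sketch decodes one integer quality value under these rules and masks data accordingly. It is not the VQSS implementation, and the example meanings (`cloud`, `land`, `ice`) are hypothetical.

```python
from typing import List, Optional, Sequence, Set


def decode_flags(value: int, flag_meanings: str,
                 flag_masks: Optional[Sequence[int]] = None,
                 flag_values: Optional[Sequence[int]] = None) -> List[str]:
    """Return the CF flag meanings that apply to one integer quality value.

    masks only:  a meaning applies when (value & mask) != 0
    values only: a meaning applies when value == flag_value
    both:        a meaning applies when (value & mask) == flag_value
    """
    meanings = flag_meanings.split()
    if flag_masks is not None and flag_values is not None:
        tests = [(value & m) == v for m, v in zip(flag_masks, flag_values)]
    elif flag_masks is not None:
        tests = [(value & m) != 0 for m in flag_masks]
    elif flag_values is not None:
        tests = [value == v for v in flag_values]
    else:
        raise ValueError("need flag_masks and/or flag_values")
    return [name for name, hit in zip(meanings, tests) if hit]


def quality_screen(data: Sequence[float], quality: Sequence[int], reject: Set[str],
                   **flag_attrs) -> List[Optional[float]]:
    """Replace data values with None wherever any rejected condition is set."""
    out = []
    for x, q in zip(data, quality):
        conditions = set(decode_flags(q, **flag_attrs))
        out.append(None if conditions & reject else x)
    return out
```

When masks and values are used together, a two-bit field can carry mutually exclusive states. For example, masks `[3, 3, 3]` with values `[0, 1, 2]` encode three exclusive conditions in the same two bits.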

Search relevancy and discovery

Retrieving appropriate datasets is the prerequisite for data analysis. However, as our archives grow faster than ever, efficiently identifying the desired dataset(s) has become a great challenge for researchers and developers. The PO.DAAC supplies the earth science community with over 600 unique, publicly accessible datasets collected by satellites and other missions. Although the PO.DAAC portal provides a valuable free-text keyword search service to facilitate the search process, it still has significant limitations. First, the default keyword-based search method, common in geospatial portals, does not take the semantic meaning of the query into account. For example, a query for “sea level pressure” cannot retrieve metadata that contains only “SLP.” Second, the default ranking algorithm in most geospatial portals uses only single attributes such as spatial resolution, processing level, and monthly popularity. It ignores the multidimensional preferences that should be considered in the ranking process. Third, the PO.DAAC portal has an unsatisfactory implementation of data relevancy: useful datasets are often buried deep in the returned list or missing from it entirely. Improvements to data relevancy would provide immediate improvements in the user search experience and results.[11]

OceanWorks is equipped with a data discovery engine containing a profile analyzer[12], a knowledge base, and a smart engine. Raw web usage logs are collected from multiple servers and grouped into sessions by the profile analyzer. Together with metadata, these reconstructed sessions are valuable sources for learning vocabulary linkages.[12] A RankSVM model[13] is trained on a few predefined ranking features, with optimal ranking lists provided by domain experts, to raise the rank of data more relevant to the query.[11] A recommender calculates the relevancy between metadata records using their attributes and the usage logs. A knowledge base is populated to store information such as domain term linkages, metadata relevancy, and pretrained models for ranking and recommendation. When a user enters a query in the search box, highly related terms are extracted from the knowledge base to expand the original query. The search engine then retrieves data using the rewritten query instead of the input query, resulting in higher recall. The retrieved datasets are not displayed to the user directly but are first reranked by the pretrained model to produce a better ranking list. If the user chooses to view a metadata record, the recommender retrieves a list of datasets related to the one being viewed, helping the user efficiently find additional resources.[14] In summary, this workflow allows the data consumer to acquire a dataset efficiently and accurately using advanced machine learning methods.
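The query-expansion and reranking steps can be sketched as follows. The knowledge-base entries, the per-dataset feature vectors, and the weights are hypothetical stand-ins for what the profile analyzer and a trained RankSVM would supply. A linear RankSVM learns a weight vector, so ranking by the dot product of weights and features is the form its output takes. Training and the recommender are not shown.

```python
from typing import Dict, List, Sequence

# Hypothetical knowledge-base entries linking query terms to related terms.
TERM_LINKS: Dict[str, List[str]] = {
    "sea level pressure": ["slp"],
    "sea surface temperature": ["sst", "ghrsst"],
}


def expand_query(query: str) -> List[str]:
    """Add linked terms from the knowledge base to the original query."""
    q = query.lower().strip()
    return [q] + TERM_LINKS.get(q, [])


def retrieve(terms: Sequence[str], metadata: Dict[str, str]) -> List[str]:
    """Return dataset ids whose metadata text mentions any of the terms (substring match)."""
    return [ds for ds, text in metadata.items()
            if any(t in text.lower() for t in terms)]


def rerank(candidates: List[str], features: Dict[str, Sequence[float]],
           weights: Sequence[float]) -> List[str]:
    """Order candidates by the linear score w . x, highest first."""
    def score(ds: str) -> float:
        return sum(w * x for w, x in zip(weights, features[ds]))
    return sorted(candidates, key=score, reverse=True)
```

Expanding “sea level pressure” with the linked term “slp” lets the engine find a record that mentions only the abbreviation. This mirrors the recall improvement described above.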

Applications and infusion

OceanWorks has been deployed for use by a number of NASA projects, including the NASA Sea Level Change Portal (SLCP) and the GRACE Science Portal. Work is also currently underway to integrate it with the State of the Ocean (SOTO) tool as part of the NASA PO.DAAC. Each project has slightly different needs, but all of them can use OceanWorks to fulfill their requirements.

The NASA SLCP contains a wealth of information about how the Earth’s sea level is changing. It acts as a one-stop shop for everything from news articles to data analysis. OceanWorks has been deployed as the engine behind the data analysis tool integrated with the portal. The data analysis tool focuses on providing fast and easy-to-use data analysis on a curated list of datasets that are important to the understanding of sea level change. Because OceanWorks can be deployed in many configurations depending on project requirements, it was a perfect fit for providing the data analysis capabilities required by SLCP. In this particular case, a single instance of OceanWorks was enough to power the analysis because the datasets being analyzed are limited in resolution and frequency. This allows for real-time interactive analysis through the JavaScript front end.

Similar to the NASA SLCP, the GRACE Science portal has limited requirements with respect to the amount of data that needs to be analyzed. However, this project required deployment to a public cloud infrastructure with different network security constraints. So, while the user interface and data are similar in nature, the back end server is hosted using Amazon Web Services (AWS). This implementation is possible because OceanWorks provides the flexibility to be deployed on a laptop, a single server, a bare metal cluster, or on a public cloud.

The NASA PO.DAAC deployment has different requirements from both SLCP and GRACE. The datasets hosted by PO.DAAC are very large and cover a wide time period, so more than one server is needed to analyze them. OceanWorks was built for this situation and can use Apache Spark to scale horizontally and spread the compute requirements across a cluster of machines. With this cluster setup, OceanWorks can handle the analysis of larger, denser datasets. (See the supplementary material at the end for examples of this.)

The multiple deployments of OceanWorks have proven that it is capable of handling a wide range of requirements and deployment scenarios. From single node to multi node, on-premise to in the cloud, and small data to big data, the flexibility and power of OceanWorks permits diverse implementations.

Challenges and outlook

The Apache Science Data Analytics Platform (SDAP) is the open-source implementation of OceanWorks. The project team recognizes it will take years of collaborative effort to create a big data solution that satisfies the needs of various science disciplines. However, OceanWorks has demonstrated how to create a community-driven technology through a well-managed open-source development process. Unlike many emerging earth science big data solutions, SDAP is designed as a platform with a simple RESTful API that supports clients developed in any programming language. This facade-based architectural approach enables SDAP to continue to evolve and leverage any new open-source big data technology. OceanWorks addresses only some ocean science needs, and continuing to evolve this open-source technology requires contributions from our community.

The project team would like the community to develop and infuse a common, open-source ocean analytic engine next to our distributed archives of ocean artifacts. Researchers or tool developers could then interact with any of these analytics services, managed by the data centers, without having to move massive amounts of data over the internet.

Supplementary material

The supplementary material for this article can be found online at the original journal's website.

Acknowledgements

This study was carried out at the Jet Propulsion Laboratory, California Institute of Technology, in collaboration with the Center for Ocean-Atmospheric Prediction Studies (COAPS) at the Florida State University, National Center for Atmospheric Research (NCAR), and the George Mason University (GMU), under a contract with the National Aeronautics and Space Administration. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise, does not constitute or imply its endorsement by the United States Government or the Jet Propulsion Laboratory, California Institute of Technology.

Author contributions

All authors who contributed to this community paper are members of the Apache open-source NASA AIST OceanWorks project, called the Science Data Analytics Platform (SDAP).

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

References

1. Huang, T.; Armstrong, E.M.; Greguska, F.R. et al. (2018). "High Performance Open-Source Big Ocean Science Platform (OD51A-07)". 2018 Ocean Sciences Meeting. https://agu.confex.com/agu/os18/meetingapp.cgi/Paper/314599.

2. Liu, X.; Wang, M.; Shi, W. (2009). "A study of a Hurricane Katrina–induced phytoplankton bloom using satellite observations and model simulations". Journal of Geophysical Research Oceans 114 (C3): C03023. doi:10.1029/2008JC004934.

3. Cavole, L.M.; Demko, A.M.; Diner, R.E. et al. (2016). "Biological Impacts of the 2013–2015 Warm-Water Anomaly in the Northeast Pacific: Winners, Losers, and the Future". Oceanography 29 (2): 273–85. doi:10.5670/oceanog.2016.32.

4. Huang, T.; Armstrong, E.; Chang, G. et al. (2015). "Emerging Big Data Technologies for Geoscience - NEXUS: The Deep Data Platform". 2016 Federation of Earth Science Information Partners Winter Meeting. http://commons.esipfed.org/node/8810.

5. Jacob, J.C.; Greguska III, F.R.; Huang, T. et al. (2017). "Design Patterns to Achieve 300x Speedup for Oceanographic Analytics in the Cloud". 2017 American Geophysical Union Fall Meeting. https://ui.adsabs.harvard.edu/abs/2017AGUFMIN23F..06J/abstract.

6. Bourassa, M.A.; Legler, D.M.; O'Brien, J.J. et al. (2003). "SeaWinds validation with research vessels". Journal of Geophysical Research Oceans 108 (C2): 3019. doi:10.1029/2001JC001028.

7. Smith, S.R.; Elya, J.L.; Bourassa, M.A. et al. (2018). "Integrating the Distributed Oceanographic Match-Up Service into OceanWorks (OD44A-2773)". 2018 Ocean Sciences Meeting. https://agu.confex.com/agu/os18/meetingapp.cgi/Paper/311722.

8. Intergovernmental Oceanographic Commission of UNESCO (18 April 2013). "Ocean Data Standards, Vol.3: Recommendation for a Quality Flag Scheme for the Exchange of Oceanographic and Marine Meteorological Data". IOC Manuals and Guides, 54, Vol. 3. UNESCO. https://www.iode.org/index.php?option=com_oe&task=viewDocumentRecord&docID=10762.

9. Freeman, E.; Woodruff, S.D.; Worley, S.J. et al. (2017). "ICOADS Release 3.0: a major update to the historical marine climate record". International Journal of Climatology 37 (5): 2211–32. doi:10.1002/joc.4775.

10. Armstrong, E.M.; Xing, Z.; Fry, C. et al. (2016). "A service for the application of data quality information to NASA earth science satellite records". 2016 American Geophysical Union Fall Meeting. http://adsabs.harvard.edu/abs/2016AGUFMIN53C1905A.

11. Jiang, Y.; Li, Y.; Yang, C. et al. (2018). "A Smart Web-Based Geospatial Data Discovery System with Oceanographic Data as an Example". International Journal of Geo-Information 7 (2): 62. doi:10.3390/ijgi7020062.

12. Jiang, Y.; Li, Y.; Yang, C. et al. (2017). "A comprehensive methodology for discovering semantic relationships among geospatial vocabularies using oceanographic data discovery as an example". International Journal of Geographical Information Science 31 (11): 2310–28. doi:10.1080/13658816.2017.1357819.

13. Joachims, T. (2002). "Optimizing search engines using clickthrough data". Proceedings of the Eighth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining: 133–42. doi:10.1145/775047.775067.

14. Jiang, Y.; Li, Y.; Yang, C. et al. (2018). "Towards intelligent geospatial data discovery: A machine learning framework for search ranking". International Journal of Digital Earth 11 (9): 956–71. doi:10.1080/17538947.2017.1371255.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, grammar, and punctuation for improved readability. In some cases important information was missing from the references, and that information was added. The singular footnote was turned into an inline link.