User:Shawndouglas/Sandbox


| Full article title | Application of text analytics to extract and analyze material–application pairs from a large scientific corpus |
|---|---|
| Journal | Frontiers in Research Metrics and Analytics |
| Author(s) | Kalathil, Nikhil; Byrnes, John J.; Randazzese, Lucien; Hartnett, Daragh P.; Freyman, Christina A. |
| Author affiliation(s) | Center for Innovation Strategy and Policy and the Artificial Intelligence Center, SRI International |
| Primary contact | Email: christina dot freyman at sri dot com |
| Year published | 2018 |
| Volume and issue | 2 |
| Page(s) | 15 |
| DOI | 10.3389/frma.2017.00015 |
| ISSN | 2504-0537 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.frontiersin.org/articles/10.3389/frma.2017.00015/full |
| Download | https://www.frontiersin.org/articles/10.3389/frma.2017.00015/pdf (PDF) |


Abstract

When assessing the importance of materials (or other components) to a given set of applications, machine analysis of a very large corpus of scientific abstracts can provide an analyst a base of insights to develop further. The use of text analytics reduces the time required to conduct an evaluation, while allowing analysts to experiment with a multitude of different hypotheses. Because the scope and quantity of metadata analyzed can, and should, be large, any divergence between what a human analyst determines and what the text analysis shows provides a prompt for the human analyst to reassess any preliminary findings. In this work, we have successfully extracted material–application pairs and ranked them on their importance. This method provides a novel way to map scientific advances in a particular material to the application for which it is used. Approximately 438,000 titles and abstracts of scientific papers published from 1992 to 2011 were used to examine 16 materials. This analysis used coclustering text analysis to associate individual materials with specific clean energy applications, evaluate the importance of materials to specific applications, and assess their importance to clean energy overall. Our analysis reproduced the judgments of experts in assigning material importance to applications. The validated methods were then used to map the replacement of one material with another material in a specific application (batteries).

Keywords: machine learning classification, science policy, coclustering, text analytics, critical materials, big data

Introduction

Scientific research and technological development are inherently combinatorial practices.[1] Researchers draw from, and build on, existing work in advancing the state of the art. Increasing the ability of researchers to review and understand previous research can stimulate and accelerate scientific progress. However, the number of scientific publications grows exponentially every year, both in aggregate and within individual fields.[2] It is impossible for any single researcher or organization to keep up with the vastness of new scientific publications. The ability to use text analytics to map the current state of the art and detect progress would enable more efficient analyses of data.

The Intelligence Advanced Research Projects Activity recognized the scale problem in 2011, creating the research program Foresight and Understanding from Scientific Exposition. Under this program, SRI and other performers processed “the massive, multi-discipline, growing, noisy, and multilingual body of scientific and patent literature from around the world and automatically generated and prioritized technical terms within emerging technical areas, nominated those that exhibit technical emergence, and provided compelling evidence for the emergence.”[3] The work presented here applies and extends that platform to efficiently identify and describe the past and present evolution of research on a given set of materials. This work applies text analytics to demonstrate how these computational tools can be used by analysts to analyze much larger sets of data and develop more iterative and adaptive material assessments to better inform and shape government and industry research strategy and resource allocation.

Materials

Ground truth

The Department of Energy (DOE) has a specific interest in critical materials related to the energy economy. The DOE identifies critical materials through analysis of their use (demand) and supply. The approach balances an analysis of market dynamics (the vulnerability of materials to economic, geopolitical, and natural supply shocks) with technological analysis (the reliance of certain technologies on various materials). The DOE's R&D agenda is directly informed by assessments of material criticality. The DOE, the National Research Council, and the European Economic and Social Committee have all articulated a need for better measurements of material criticality. However, criticality depends on a multitude of different factors, including socioeconomic factors.[4] Various organizations across the world define resource criticality according to their own independent metrics and methodologies, and designations of criticality tend to vary dramatically.[4][5][6][7][8][9][10]

Experts tasked with assessing the role of materials must make decisions about what materials to focus on, what applications to review, what data sources to consult, and what analyses to pursue.[10] The amount of data available to assess is vast and far too large for any single analyst or organization to address comprehensively. In addition, to the best of our knowledge, previous assessments of material criticality have not involved a comprehensive review of scientific research on material use. [Graedel and colleagues have published extensively using raw data on supply and other indicators to measure criticality, see Graedel et al. (2012[11], 2015[10]) and Panousi et al. (2016[12]) and the references contained within.] Recent developments in text analytic computational approaches present a unique opportunity to develop new analytic approaches for assessing material criticality in a comprehensive, replicable, iterative manner.

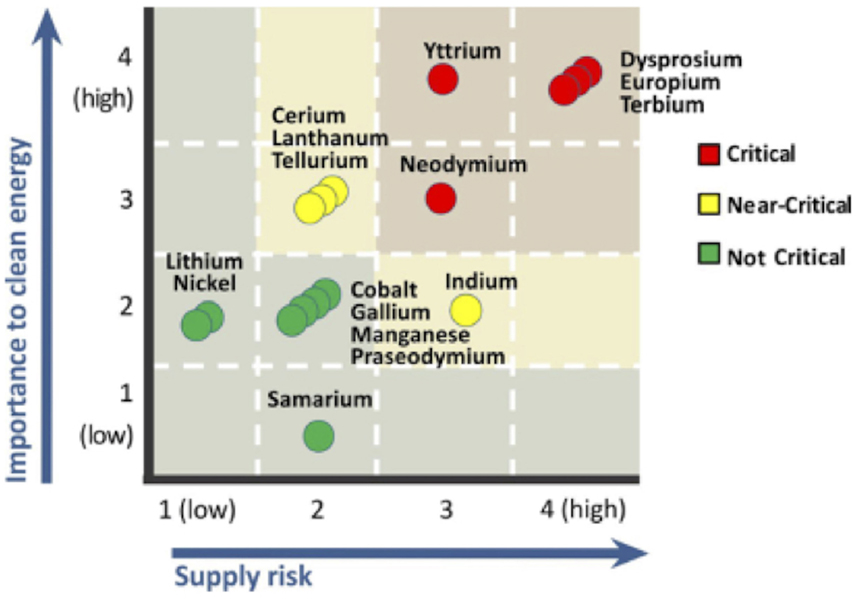

The Department of Energy’s 2011 Critical Materials Strategy (CMS) Report uses importance to clean energy as one dimension of the criticality matrix (see Figure 1).[13] In this regard, the DOE report serves as a form of ground truth for the validation of our technique, though the DOE report considered supply risk as the second dimension to criticality, which the analysis described in this paper does not address.


Scientific publications

Data on scientific research articles was obtained from the Web of Science (WoS) database available from Thomson Reuters (now Clarivate Analytics). WoS contains metadata records. In principle, we could have analyzed this entire database; however, for budget-related reasons, the document set was limited by a topic search of keywords that appear in a document title, abstract, author-provided keywords, and WoS-added keywords for the following:

- the 16 materials listed in the 2011 CMS, or

- the 285 unique alloys/composites of the 16 critical materials.

The document set was also limited to articles published between 1992 and 2011, the 20-year period leading up to the DOE's most recent critical material assessment.

The 16 materials listed in the 2011 CMS are europium, terbium, yttrium, dysprosium, neodymium, cerium, tellurium, lanthanum, indium, lithium, gallium, praseodymium, nickel, manganese, cobalt, and samarium. Excluded from our document set was any publication appearing in 80 fields considered not likely to cover research in scope (e.g., fields in the social sciences, biological sciences, etc.). We used the 16 materials listed above because we were interested in validating a methodology against the 2011 CMS report, and these are the materials mentioned therein. The resulting data set consisted of approximately 438,000 abstracts of scientific papers published from 1992 to 2011.
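The selection rules described in this section (topic match, publication window, excluded fields) can be illustrated with a minimal sketch. This is not the query the authors ran against WoS. The `Record` type, the `in_scope` function, the field names, and the sample records are all hypothetical, and only a handful of the 16 materials are included.

```python
import re
from dataclasses import dataclass, field

# Illustrative subset of the 16 materials named above; the actual search
# also covered 285 alloys/composites, which are not listed in the text.
MATERIALS = {"europium", "terbium", "lithium", "cobalt", "indium"}

# Hypothetical stand-ins for the 80 excluded fields (names are assumptions).
EXCLUDED_FIELDS = {"sociology", "zoology"}


@dataclass
class Record:
    """Hypothetical metadata record; real WoS records have a different schema."""
    title: str
    abstract: str
    year: int
    subject: str
    keywords: list = field(default_factory=list)


def in_scope(rec, first_year=1992, last_year=2011):
    """Return True if a record passes the year, field, and topic filters."""
    if not first_year <= rec.year <= last_year:
        return False
    if rec.subject.lower() in EXCLUDED_FIELDS:
        return False
    # Topic search over title, abstract, and keywords combined.
    text = " ".join([rec.title, rec.abstract, *rec.keywords]).lower()
    tokens = set(re.findall(r"[a-z]+", text))
    return bool(tokens & MATERIALS)


# Made-up sample records for illustration only.
records = [
    Record("Lithium anodes for batteries", "We study cycling stability.", 2005, "Electrochemistry"),
    Record("Cobalt in diets", "A survey.", 2005, "Zoology"),
    Record("Indium tin oxide films", "Transparent conductors.", 2014, "Materials Science"),
]
kept = [r for r in records if in_scope(r)]
```

Only the first sample record survives: the second falls in an excluded field and the third lies outside the 1992 to 2011 window.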

Methods

Text analytics and coclustering

The principle behind coclustering is the statistical analysis of the occurrences of terms in the text. This includes the processing of the relationships both between terms and neighboring (or nearby) terms, and between terms and the documents in which they occur. The approach presented here grouped papers by looking for sets of papers containing similar sets of terms. As detailed below, our analytic methods process meaning beyond simple counts of words, and thus, for example, put papers about earthquakes and papers about tremors in the same group, but would exclude papers in the medical space that discuss hand tremors.

Coclustering is based on an important technique in natural language processing which involves the embedding of terms into a real vector space; i.e., each word of the language is assigned a point in a high-dimensional space. Given a vector representation for a term, terms can be clustered using standard clustering techniques (also known as cluster analysis), such as hierarchical agglomeration, principal components analysis, K-means, and distribution mixture modeling.[14] This was first done in the 1980s under the name latent semantic analysis (LSA).[15] In the 1990s, neural networks were applied to find embeddings for terms using a technique called context vectors.[16][17] A Bayesian analysis of context vectors in the late 1990s provided probabilistic interpretation and enabled applying information-theoretic techniques.[16][18] We refer to this technique as Association Grounded Semantics (AGS). A similar Bayesian analysis of LSA resulted in a technique referred to as probabilistic-LSA[19], which was later extended to a technique known as latent Dirichlet allocation (LDA).[20] LDA is commonly referred to as “topic modeling” and is probably the most widely applied technique for discovering groups of similar terms and similar documents. Much more recently, Google directly extended the context vector approach of the early 1990s to derive word embeddings using much greater computing power and much larger datasets than had been used in the past, resulting in the word2vec product, which is now widely known.[21][22]
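The general idea of embedding terms in a vector space and then grouping them can be sketched on a toy scale. The example below is not AGS, LSA, or the authors' coclustering algorithm. It assumes the simplest possible embedding (a term's raw counts across documents), cosine similarity, and a greedy threshold grouping chosen for brevity. The corpus and all function names are made up for illustration.

```python
import math
from collections import Counter, defaultdict

# Made-up toy corpus: two seismology abstracts and two medical ones.
docs = [
    "earthquake fault rupture seismic",
    "tremor fault seismic wave",
    "tremor hand patient neurology",
    "patient hand therapy neurology",
]


def term_vectors(docs):
    """Embed each term as its vector of counts over documents."""
    vecs = defaultdict(lambda: [0.0] * len(docs))
    for j, doc in enumerate(docs):
        for term, n in Counter(doc.split()).items():
            vecs[term][j] = float(n)
    return dict(vecs)


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def group_terms(vecs, threshold=0.6):
    """Greedy grouping: join the first cluster whose seed is similar enough."""
    clusters = []  # each cluster is a list of terms; element 0 is the seed
    for term in sorted(vecs):
        for cluster in clusters:
            if cosine(vecs[term], vecs[cluster[0]]) >= threshold:
                cluster.append(term)
                break
        else:
            clusters.append([term])
    return clusters


vecs = term_vectors(docs)
clusters = group_terms(vecs)
```

On this toy corpus the seismology terms and the medical terms fall into separate groups, while the ambiguous term "tremor", which occurs in both kinds of document, is not absorbed into either. A production system would replace raw counts with a learned or probabilistic embedding and the greedy pass with one of the standard clustering methods named above.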

The LDA model assumes that documents are collections of topics, and those topics generate terms, making it difficult to apply LDA to terms other than in the contexts of documents. Because coclustering treats the document as a context for a term, any other context of a term can be substituted in the coclustering model. For example, contexts may be neighboring terms or capital letters or punctuation. This allows us to apply coclustering to a much wider variety of feature types than is accommodated by LDA. In particular, “distributional clustering” (clustering of terms based on their distributions over nearby terms), which has been proven to be useful in information extraction,[23][24] is captured by coclustering. In future work, we anticipate recognizing material names and application references using these techniques.
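To make the notion of substituting neighboring terms for documents as the context more concrete, the following sketch builds each term's distribution over nearby terms and compares two terms with the Jensen-Shannon divergence. The choice of divergence, the window size, the example sentences, and the function names are assumptions made for illustration; the source does not specify how the authors measure similarity between distributions.

```python
import math
from collections import Counter, defaultdict


def context_distributions(sentences, window=1):
    """Map each term to its probability distribution over neighboring terms."""
    counts = defaultdict(Counter)
    for sent in sentences:
        toks = sent.lower().split()
        for i, term in enumerate(toks):
            lo, hi = max(0, i - window), min(len(toks), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[term][toks[j]] += 1
    dists = {}
    for term, ctr in counts.items():
        total = sum(ctr.values())
        dists[term] = {w: n / total for w, n in ctr.items()}
    return dists


def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2) between two sparse distributions."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl(a):
        return sum(a[k] * math.log2(a[k] / m[k]) for k in a if a[k] > 0)

    return 0.5 * kl(p) + 0.5 * kl(q)


# Made-up sentences; they are not drawn from the corpus described above.
sentences = [
    "lithium batteries store energy",
    "cobalt batteries store energy",
    "indium films conduct current",
    "gallium films conduct current",
]
dists = context_distributions(sentences, window=1)
same_context = js_divergence(dists["lithium"], dists["cobalt"])
other_context = js_divergence(dists["lithium"], dists["indium"])
```

Terms that occur next to the same words (here "lithium" and "cobalt") have a lower divergence than terms with disjoint neighbors (here "lithium" and "indium"), so a clustering step run on these distributions would tend to group the former together.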

References

- ↑ Arthur, W.B. (2009). The Nature of Technology: What It Is and How It Evolves. Simon and Schuster. p. 256. ISBN 9781439165782.

- ↑ National Science Board (11 January 2016). "Science and Engineering Indicators 2016" (PDF). National Science Foundation. pp. 899. https://www.nsf.gov/statistics/2016/nsb20161/uploads/1/nsb20161.pdf.

- ↑ "IARPA Launches New Program to Enable the Rapid Discovery of Emerging Technical Capabilities". Office of the Director of National Intelligence. 27 September 2011. https://www.dni.gov/index.php/newsroom/press-releases/press-releases-2011/item/327-iarpa-launches-new-program-to-enable-the-rapid-discovery-of-emerging-technical-capabilities.

- ↑ 4.0 4.1 Poulton, M.M.; Jagers, S.C.; Linde, S. et al. (2013). "State of the World's Nonfuel Mineral Resources: Supply, Demand, and Socio-Institutional Fundamentals". Annual Review of Environment and Resources 38: 345–371. doi:10.1146/annurev-environ-022310-094734.

- ↑ Committee on Critical Mineral Impacts on the U.S. Economy (2008). Minerals, Critical Minerals, and the U.S. Economy. National Academies Press. pp. 262. doi:10.17226/12034. ISBN 9780309112826. https://www.nap.edu/catalog/12034/minerals-critical-minerals-and-the-us-economy.

- ↑ Committee on Assessing the Need for a Defense Stockpile (2008). Managing Materials for a Twenty-first Century Military. National Academies Press. pp. 206. doi:10.17226/12028. ISBN 9780309177924. https://www.nap.edu/catalog/12028/managing-materials-for-a-twenty-first-century-military.

- ↑ Erdmann, L.; Graedel, T.E. (2011). "Criticality of non-fuel minerals: A review of major approaches and analyses". Environmental Science and Technology 45 (18): 7620–30. doi:10.1021/es200563g. PMID 21834560.

- ↑ "Communication from the Commission to the European Parliament, the Council, the European Economic and Social Committee and the Committee of the Regions Tackling the Challenges in Commodity Markets and on Raw Materials". Eur-Lex. European Union. 2 February 2011. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=celex%3A52011DC0025.

- ↑ "Report on Critical Raw Materials for the E.U.: Report of the Ad hoc Working Group on defining critical raw materials" (PDF). European Commission. May 2014. pp. 41. https://ec.europa.eu/docsroom/documents/10010/attachments/1/translations/en/renditions/pdf.

- ↑ 10.0 10.1 10.2 Graedel, T.E.; Harper, E.M.; Nassar, N.T. et al. (2015). "Criticality of metals and metalloids". Proceedings of the National Academy of Sciences of the United States of America 112 (14): 4257–62. doi:10.1073/pnas.1500415112. PMC 4394315. PMID 25831527. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4394315.

- ↑ Graedel, T.E.; Barr, R.; Chandler, C. et al. (2012). "Methodology of metal criticality determination". Environmental Science and Technology 46 (2): 1063–70. doi:10.1021/es203534z. PMID 22191617.

- ↑ Panousi, S.; Harper, E.M.; Nuss, P. et al. (2016). "Criticality of Seven Specialty Metals". Journal of Industrial Ecology 20 (4): 837–853. doi:10.1111/jiec.12295.

- ↑ Office of Policy and International Affairs (December 2011). "Critical Materials Strategy" (PDF). U.S. Department of Energy. https://www.energy.gov/sites/prod/files/DOE_CMS2011_FINAL_Full.pdf.

- ↑ Hastie, T.; Tibshirani, R.; Friedman, J. (2009). The Elements of Statistical Learning. Springer. pp. 745. ISBN 9780387848570.

- ↑ Furnas, G.W.; Landauer, T.K.; Gomez, L.M.; Dumais, S.T. (1987). "The vocabulary problem in human-system communication". Communications of the ACM 30 (11): 964–971. doi:10.1145/32206.32212.

- ↑ 16.0 16.1 Gallant, S.I.; Caid, W.R.; Carleton, J. et al. (1992). "HNC's MatchPlus system". ACM SIGIR Forum 26 (2): 34–38. doi:10.1145/146565.146569.

- ↑ Caid, W.R.; Dumais, S.T.; Gallant, S.I. et al. (1995). "Learned vector-space models for document retrieval". Information Processing and Management 31 (3): 419–429. doi:10.1016/0306-4573(94)00056-9.

- ↑ Zhu, H.; Rohwer, R. (1995). "Bayesian invariant measurements of generalization". Neural Processing Letters 2 (6): 28–31. doi:10.1007/BF02309013.

- ↑ Hofmann, T. (1999). "Probabilistic latent semantic indexing". Proceedings of the 22nd Annual International ACM SIGIR Conference on Research and Development in Information Retrieval: 50–57. doi:10.1145/312624.312649.

- ↑ Blei, D.M.; Ng, A.Y.; Jordan, M.I. (2003). "Latent Dirichlet Allocation". Journal of Machine Learning Research 3 (1): 993–1022. http://www.jmlr.org/papers/v3/blei03a.html.

- ↑ Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. (7 September 2013). "Efficient Estimation of Word Representations in Vector Space". Cornell University Library. https://arxiv.org/abs/1301.3781.

- ↑ Bellemare, M.G.; Naddaf, Y.; Veness, J.; Bowling, M. (2015). "The arcade learning environment: An evaluation platform for general agents". Proceedings of the 24th International Conference on Artificial Intelligence: 4148–4152.

- ↑ Freitag, D. (2004). "Toward unsupervised whole-corpus tagging". Proceedings of the 20th International Conference on Computational Linguistics: 357. doi:10.3115/1220355.1220407.

- ↑ Freitag, D. (2004). "Trained Named Entity Recognition using Distributional Clusters". Proceedings of the 2004 Conference on Empirical Methods in Natural Language Processing: 262–69. http://www.aclweb.org/anthology/W04-3234.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. In some cases important information was missing from the references, and that information was added. The original article lists references alphabetically, but this version — by design — lists them in order of appearance.