Difference between revisions of "Journal:Histopathology image classification: Highlighting the gap between manual analysis and AI automation"


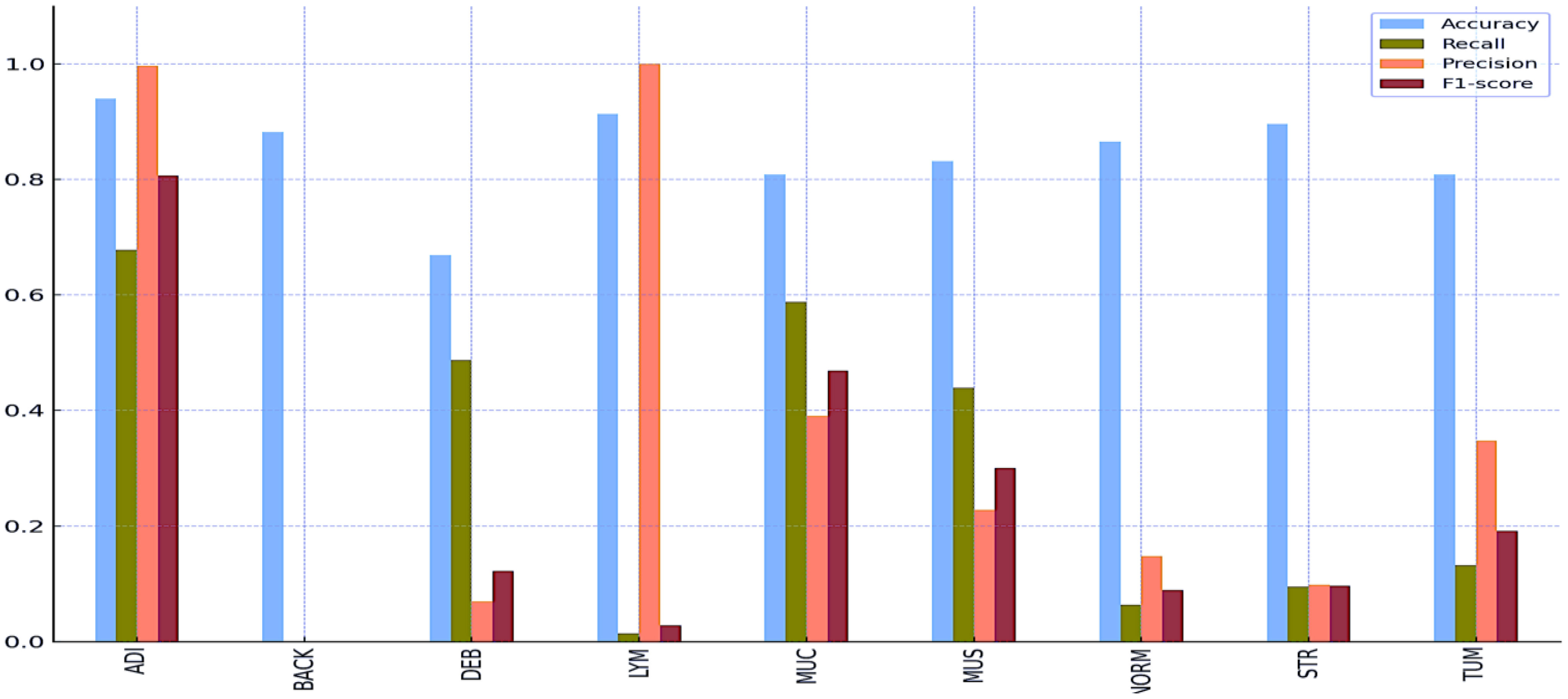

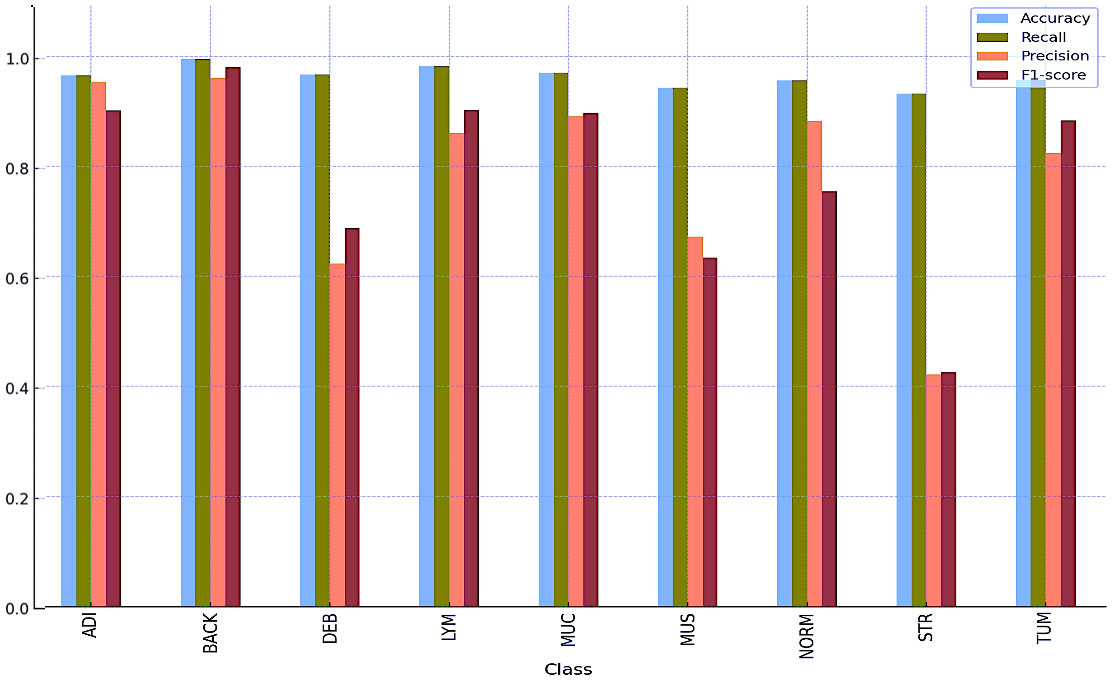

Tables 8 and 9 and Figures 5 and 6 present the multi-class classification results using AI automation for all classes before and after normalization. With normalization, the evaluation metrics increased, especially for low-performing classes, showing that the model's classification improved substantially. Table 8 contains two values of particular significance in the “BACK” category: 0 and NaN (not a number). These indicate that the model failed on this class or that specific metrics could not be calculated. As shown in Table 9, these NaN and 0 values were replaced by valid scores after normalization, and overall performance improved. After normalization, performance also became more consistent across classes. This suggests that normalization makes the model's output more robust and consistent.
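The 0 and NaN pattern described for the “BACK” class is what one-vs-rest metrics typically produce when a class is never predicted. The following is a minimal sketch, not the authors' evaluation code: the function name `per_class_metrics`, the toy confusion matrix, and the choice to compute F1 as 2TP / (2TP + FP + FN) are all assumptions made for illustration.

```python
import math

def per_class_metrics(confusion, k):
    """One-vs-rest metrics for class k from a square confusion matrix.

    Assumed layout: rows are true classes, columns are predicted classes.
    Returns (accuracy, recall, precision, f1).
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    tp = confusion[k][k]
    fn = sum(confusion[k]) - tp                          # true k, predicted other
    fp = sum(confusion[i][k] for i in range(n)) - tp     # other, predicted k
    tn = total - tp - fn - fp

    accuracy = (tp + tn) / total
    recall = tp / (tp + fn) if (tp + fn) else math.nan
    # Precision is undefined (NaN) when the class is never predicted.
    precision = tp / (tp + fp) if (tp + fp) else math.nan
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else math.nan
    return accuracy, recall, precision, f1

# Hypothetical 3-class example in which class 0 is never predicted.
toy_confusion = [
    [0, 3, 2],
    [0, 4, 1],
    [0, 1, 4],
]
print(per_class_metrics(toy_confusion, 0))
```

In this toy case the class still gets a fairly high one-vs-rest accuracy because of its true negatives, while recall and F1 are 0 and precision is NaN, which mirrors the shape of the “BACK” row in Table 8.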

Table 8. Multi-class classification results without normalization using AI automation. ADI - adipose; BACK - background; DEB - debris; LYM - lymphocytes; MUC - mucus; MUS - smooth muscle; NORM - normal colonic mucosa; STR - cancer-associated stroma; TUM - colorectal adenocarcinoma epithelium; NaN - not a number.

| H&E image class | Accuracy | Recall | Precision | F1 score |
|---|---|---|---|---|
| ADI | 0.9396 | 0.6779 | 0.9967 | 0.8069 |
| BACK | 0.8820 | 0 | NaN | 0 |
| DEB | 0.6689 | 0.4867 | 0.0697 | 0.1219 |
| LYM | 0.9130 | 0.0142 | 1.0000 | 0.0280 |
| MUC | 0.8085 | 0.5874 | 0.3907 | 0.4693 |
| MUS | 0.8311 | 0.4392 | 0.2279 | 0.3001 |
| NORM | 0.8656 | 0.0634 | 0.1478 | 0.0888 |
| STR | 0.8955 | 0.0950 | 0.0978 | 0.0964 |
| TUM | 0.8084 | 0.1322 | 0.3475 | 0.1915 |

Table 9. Multi-class classification results after normalization using AI automation. ADI - adipose; BACK - background; DEB - debris; LYM - lymphocytes; MUC - mucus; MUS - smooth muscle; NORM - normal colonic mucosa; STR - cancer-associated stroma; TUM - colorectal adenocarcinoma epithelium.

| H&E image class | Accuracy | Recall | Precision | F1 score |
|---|---|---|---|---|
| ADI | 0.9652 | 0.9652 | 0.9533 | 0.9015 |
| BACK | 0.9951 | 0.9951 | 0.9603 | 0.9798 |
| DEB | 0.9671 | 0.9671 | 0.6235 | 0.6878 |
| LYM | 0.9819 | 0.9819 | 0.8600 | 0.9025 |
| MUC | 0.9699 | 0.9699 | 0.8911 | 0.8963 |
| MUS | 0.9429 | 0.9429 | 0.6723 | 0.6339 |
| NORM | 0.9557 | 0.9557 | 0.8825 | 0.7543 |
| STR | 0.9320 | 0.9320 | 0.4219 | 0.4259 |
| TUM | 0.9567 | 0.9567 | 0.8237 | 0.8830 |

[[File:Fig5 Dogan FrontOnc2024 13.jpg|800px]]

Figure 5. Multi-class classification results without normalization using AI automation.

[[File:Fig6 Dogan FrontOnc2024 13.jpg|800px]]

Figure 6. Multi-class classification results after normalization using AI automation.

Discussion

Revision as of 15:47, 21 May 2024

| Full article title | Histopathology image classification: Highlighting the gap between manual analysis and AI automation |

|---|---|

| Journal | Frontiers in Oncology |

| Author(s) | Doğan, Refika S.; Yılmaz, Bülent |

| Author affiliation(s) | Abdullah Gül University, Gulf University for Science and Technology |

| Primary contact | refikasultan dot dogan at agu dot edu dot tr |

| Editors | Pagador, J. Blas |

| Year published | 2024 |

| Volume and issue | 13 |

| Article # | 1325271 |

| DOI | 10.3389/fonc.2023.1325271 |

| ISSN | 2234-943X |

| Distribution license | Creative Commons Attribution 4.0 International |

| Website | https://www.frontiersin.org/journals/oncology/articles/10.3389/fonc.2023.1325271/full |

| Download | https://www.frontiersin.org/journals/oncology/articles/10.3389/fonc.2023.1325271/pdf (PDF) |

This article should be considered a work in progress and incomplete. Consider this article incomplete until this notice is removed.

Abstract

The field of histopathological image analysis has evolved significantly with the advent of digital pathology, leading to the development of automated models capable of classifying tissues and structures within diverse pathological images. Artificial intelligence (AI) algorithms, such as convolutional neural networks (CNNs), have shown remarkable capabilities in pathology image analysis tasks, including tumor identification, metastasis detection, and patient prognosis assessment. However, traditional manual analysis methods have generally shown low accuracy in diagnosing colorectal cancer using histopathological images.

This study investigates the use of AI for histopathological image classification and analysis, and compares it with a manual approach based on the histogram of oriented gradients (HoG) method. We develop an AI-based architecture for image classification that aims to achieve high performance with less complexity through specific parameters and layers. We focus on the challenging task of categorizing nine distinct tissue types. Our research used open-source, multi-center image datasets comprising 100,000 non-overlapping images from 86 patients for training and 7,180 non-overlapping images from 50 patients for testing. The study compares two distinct approaches to automating tissue classification: training AI-based algorithms and manual machine learning (ML) models. It comprises two primary classification tasks: binary classification, distinguishing between normal and tumor tissues, and multi-class classification, encompassing nine tissue types: adipose, background, debris, stroma, lymphocytes, mucus, smooth muscle, normal colon mucosa, and tumor.

Our findings show that AI-based systems can achieve accuracies of 0.91 and 0.97 in binary and multi-class classification, respectively. In comparison, histogram of oriented gradients features combined with a random forest classifier achieved accuracies of 0.75 and 0.44 in binary and multi-class classification, respectively. Our AI-based methods are generalizable, allowing them to be integrated into histopathology diagnostics procedures and improve diagnostic accuracy and efficiency. The CNN model outperforms existing ML techniques, demonstrating its potential to improve the precision and effectiveness of histopathology image analysis. This research emphasizes the importance of maintaining data consistency and applying normalization methods during the data preparation stage for analysis. It particularly highlights the potential of AI to assess histopathological images.

Keywords: data science, image processing, artificial intelligence, histopathology images, colon cancer

Introduction

Histopathological image analysis is a fundamental method for screening, diagnosing, and treating cancer, especially in disorders affecting the digestive system. Pathologists physically and visually examine complex images that often have resolutions of up to 100,000 x 100,000 pixels. Pathological image analysis has long depended on this approach, which is known to be time-consuming and labor-intensive, so new approaches are needed to increase its efficiency and accuracy. Digital pathology has seen significant progress to date. Digitization of high-resolution histopathology images allows comprehensive analysis using complex computational methods. As a result, interest has grown significantly in medical image analysis for creating automatic models that can precisely categorize relevant tissues and structures in various clinical images. Early research in this area focused on predicting the malignancy of colon lesions and distinguishing between malignant and normal tissue by extracting features from microscopic images. Esgiar et al. [1] analyzed features obtained from 44 healthy and 58 cancerous microscope images and, using co-occurrence matrices, reported a classification rate of 90 percent. These first steps form the basis for more complex procedures that integrate rapid image processing techniques and the functions of visualization software. Digital pathology has recently emerged as a widespread diagnostic tool, primarily through artificial intelligence (AI) algorithms [2, 3], which have demonstrated impressive capability in processing pathology images. [4, 5] These advanced techniques are regularly used for tumor identification, metastasis detection, and assessment of patient prognosis. They also aim at the automatic segmentation of pathological images, the generation of predictions, and the use of relevant observations from this complex visual data. [6, 7]

Among the various machine learning (ML) techniques in AI research, convolutional neural networks (CNNs) have received significant attention. Following the application of deep learning in earlier biological research, ML has been widely accepted and used. [8–10] CNNs distinguish themselves from other ML methods through their accuracy, generalization capacity, and computational economy. Hematoxylin-eosin (H&E) stained tissue slides from each patient contain important quantitative data. Notably, Kather et al. [11] explored the potential of CNN-based approaches to predict disease progression directly from available H&E images. In a retrospective study, their findings underscored the ability of CNNs to assess the human tumor microenvironment and predict outcomes from histopathological images. This result shows the potential of such AI-based methodologies for medical image analysis, offering new avenues for efficient and objective diagnostic and prognostic assessments.

Manual analysis methods are also available in the literature to classify and predict disease outcomes using H&E images. Compared to AI-based algorithms, traditional manual analysis generally performs worse. The literature highlights that traditional methods such as local binary pattern (LBP) and Haralick features perform poorly. [12, 13] These studies emphasized that deep learning is more effective than traditional ML methods in diagnosing colorectal cancer from histopathology images: the reported accuracy of LBP is 0.76, and that of Haralick is 0.75. Because LBP and Haralick showed low accuracy in the literature, we decided to adopt a different approach and carried out this study with the histogram of oriented gradients (HoG) method. Unlike other studies in the literature, this study is the first to perform the analysis using HoG features. This choice offers an alternative perspective to traditional methods and deep learning studies, and the results obtained using HoG features on a specific data set make a new contribution to the literature.

Table 1 provides an overview of manual analysis and AI-based studies from various literature sources. Jiang [14] achieved an accuracy of 0.89 using InceptionV3 Multi-Scale Gradients and a generative adversarial network (GAN) for classifying colorectal cancer histopathological images. Kather et al. [6] reported an accuracy of 0.87 using texture-based approaches, decision trees, and support vector machines (SVMs) to analyze tissues of multiple classes in colorectal cancer histology. Other studies include Popovici et al. [15], with 0.84 using VGG-f (MatConvNet library) for the prediction of molecular subtypes; Xu [16], with 0.84 using a CNN for the classification of stromal and epithelial regions in histopathology images; and Mossotto [17], with 0.83 using an optimized SVM for the classification of inflammatory bowel disease. Tsai [19] reported an accuracy of 0.80 with a CNN for detecting pneumonia in chest X-rays. These results show that AI-based classification studies generally achieve high accuracy rates. The primary emphasis of these studies is on AI methods for analyzing histopathological images, with a particular focus on CNNs. These networks have demonstrated high precision in a wide range of medical applications, including cancer diagnosis and screening. CNNs provide substantial benefits compared to conventional approaches, owing to their ability to handle and evaluate intricate histological data. They also excel at detecting patterns, textures, and structures in high-resolution images, thereby complementing or, in certain instances, even substituting the human review processes of pathologists. The promise of these AI-based techniques to change the field of medical image processing is well acknowledged.


Materials and methods

Dataset

Our research used two separate datasets, selected and prepared as our training and testing sets. The training dataset (NCT-CRC-HE-100K) was compiled from the pathology archives of the NCT Biobank (National Center for Tumor Diseases, Germany) and includes records from 86 patients. The testing dataset (NCT-VAL-HE-7K) was generated at the University Medical Center Mannheim (UMM), Germany [11, 21], and includes data from 50 patients. Both datasets are open source and consist of images derived from formalin-fixed paraffin-embedded colorectal cancer tissue. The training dataset consists of 100,000 high-resolution H&E (hematoxylin and eosin) images.

The testing dataset consists of 7,180 non-overlapping sub-images (image patches). Each image has dimensions of 224x224 pixels at a resolution of 0.5 microns per pixel. The dataset includes nine distinct tissue textures, covering a broad spectrum from adipose tissue to lymphocytes, mucus, and cancer epithelial cells. Table 2 presents the distribution of images within the training and test datasets by tissue class. For instance, the training dataset contains 14,317 samples in the colorectal cancer tissue class, while the testing dataset contains 1,233 samples for this class. These statistics show the data distribution and the relative size of each class, and form the foundation for our subsequent analyses and model development.


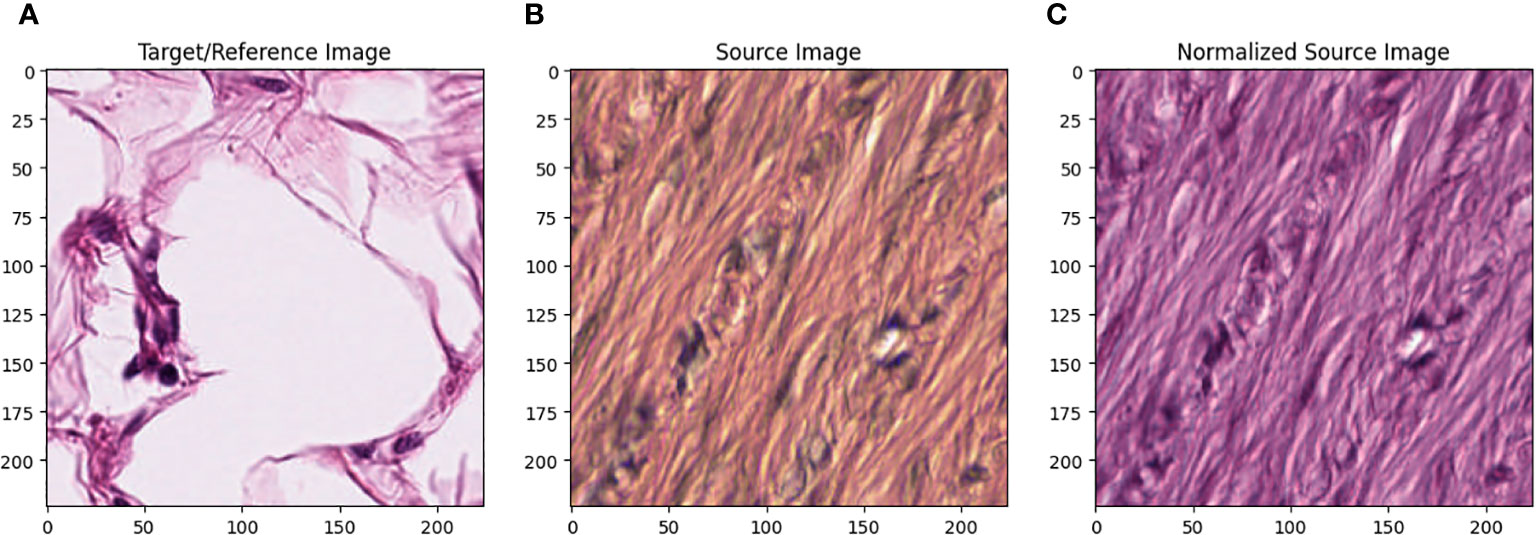

All images in the training set were normalized using the Macenko method. [22] Figure 1 describes the effect of Macenko normalization on sample images. The torchstain library [23], which supports a PyTorch-based approach, is available for color normalization of the image using the Macenko method.


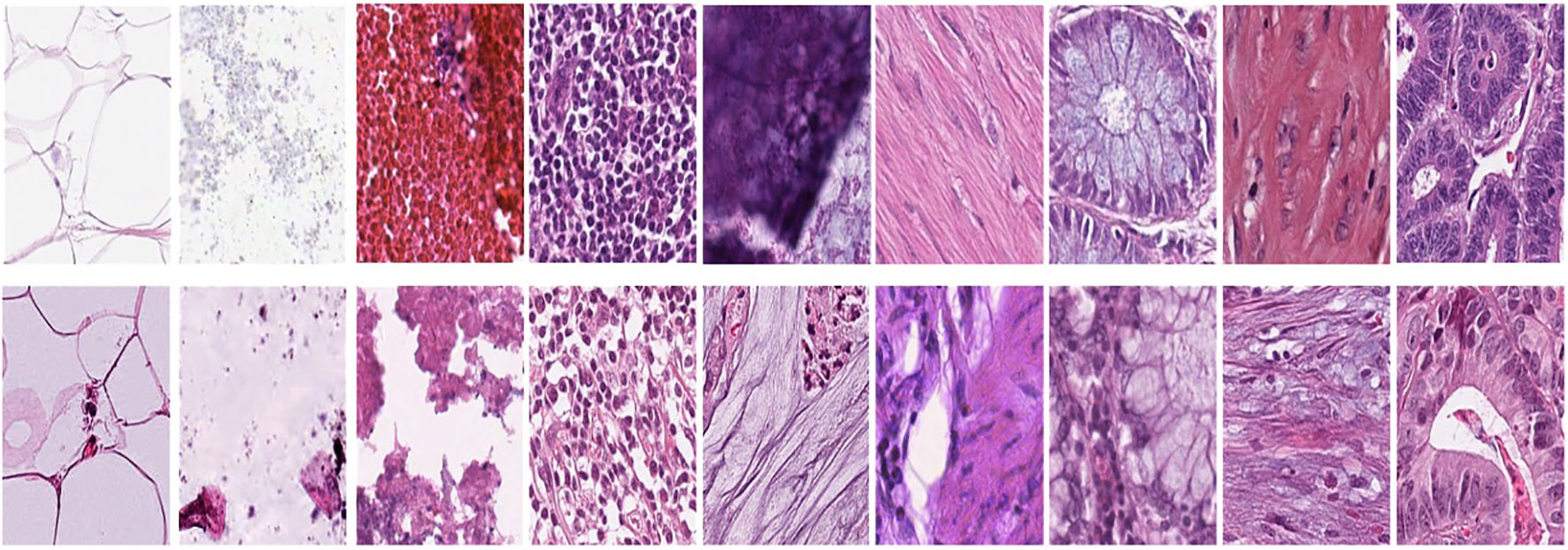

Figure 1A represents this method’s target (reference) image, while Figure 1B represents the source images. Macenko normalization aims to make the color distribution of the source images compatible with that of the target image. In the example shown in the figure, normalizing the source images (Figure 1B) against the target image (Figure 1A) reduces color mismatches and yields a more consistent color profile, as seen in Figure 1C. This makes it possible to obtain more reliable results in machine learning or image analytics applications. Normalization was performed on the dataset on which the model was trained, and applying this normalization to the test set can increase the model’s generalization ability. However, the test set represents real-world setups and consists of images routinely obtained in the pathology department. Therefore, because we wanted to train a clinically meaningful model that copes with different color conditions, normalization was not applied to the test set. In this way, we also investigated the effect of color normalization on classifying different types of tissues. The original images of the nine different tissue samples, shown in the first row of Figure 2, have substantially different color stains, while the second row of Figure 2 shows their normalized versions. These images are transformed to the same average intensity level.


Manual analysis algorithm

In traditional manual analysis, the classification process was based on extracted HoG features. HoG features are a class of local descriptors that extract crucial characteristics from images and videos. They are commonly applied across various domains, in tasks such as image classification, object detection, and object tracking. [24, 25]

The following parameters were used to extract HoG features:

- Number of orientations: nine; this is the number of gradient directions calculated in each cell.

- Pixels per cell: each cell consists of 10x10 pixels.

- Cells per block: each block contains 2x2 cells.

- Rooting and block normalization: Using the `transform_sqrt=True` and `block_norm="L1"` options, square-root compression and L1 norm-based block normalization were performed to reduce lighting and shading effects. The resulting features are more robust and easier to compare, especially under variable lighting conditions, which can improve the model’s overall performance in image recognition and classification tasks.

These parameters were chosen to balance the efficiency and accuracy of the HoG feature extraction process for colorectal cancer tissue classification.

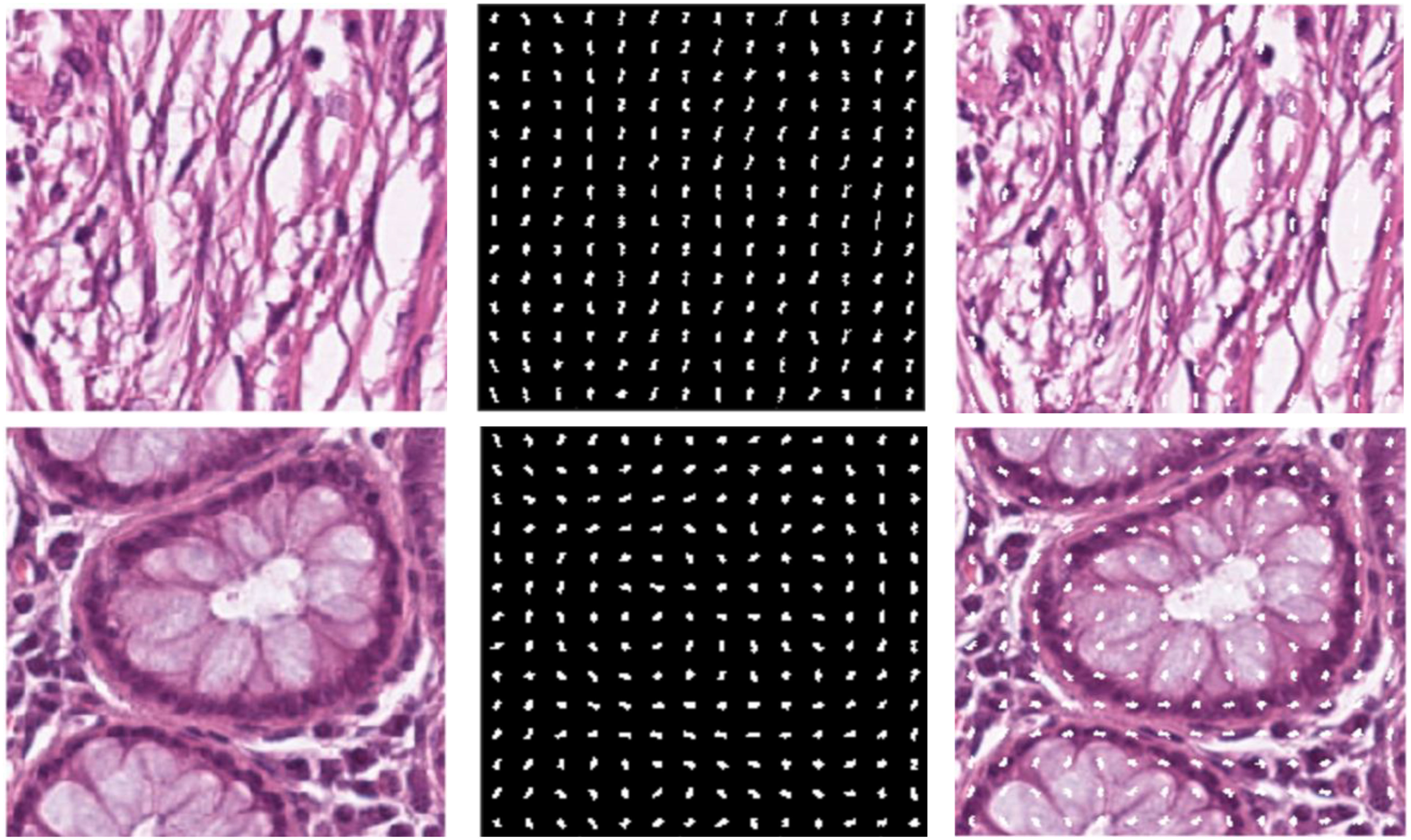

We chose HoG features, one of the local descriptors, because HoG processes the image by dividing it into small regions called cells. As illustrated in Figure 3, the cells are created from the original images. Within each cell, the gradients of the pixels in the x-direction (Sx) and the y-direction (Sy) are calculated as such:

The gradient direction θ is computed using the computed gradients as such [26]:
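These quantities are commonly written as follows. This is a sketch under assumptions: the central-difference form of $S_x$ and $S_y$, the intensity symbol $I(x, y)$, and the magnitude symbol $|S|$ are not taken from the article, whose own notation may differ.

```latex
S_x(x, y) = I(x+1, y) - I(x-1, y), \qquad
S_y(x, y) = I(x, y+1) - I(x, y-1)

|S(x, y)| = \sqrt{S_x(x, y)^2 + S_y(x, y)^2}, \qquad
\theta(x, y) = \arctan\!\left(\frac{S_y(x, y)}{S_x(x, y)}\right)
```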


After calculating the gradients, the histograms are calculated, and these histograms are combined to form blocks. Normalization is performed on the blocks to avoid lighting and shading effects. All images in the image set initially had dimensions of 224x224 pixels. To enhance the classification performance of the derived features and to reduce calculation time, the images were resized to a standardized dimension of 200x200 pixels using bilinear interpolation. In particular, a 224x224 image produces 7,200 features, while a 200x200 image processed in the same way produces 5,832 features. The feature vector formula is explained as follows:

... where N is the number of pixels in the image; W and H are the image’s width and height, respectively; CW (cell width) and CH (cell height) define the cell dimensions; and CpBx and CpBy are the number of cells per block, representing the number of directions calculated for each cell in the HoG feature vector. Dimensionality reduction not only reduces computational time; smaller feature vectors can also reduce memory usage and the overall complexity of the model. This shortens model training and prediction times, especially when working on large data sets.
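The cell-level steps described above (pixel gradients, gradient direction, orientation histogram, and L1 normalization) can be sketched in plain Python. This is an illustrative sketch only, not the authors' pipeline: the function names, the central-difference gradients, the unsigned 0 to 180 degree range, the hard (non-interpolated) binning, and the small `eps` constant are all assumptions.

```python
import math

def cell_orientation_histogram(cell, n_bins=9):
    """Orientation histogram for one grayscale cell (list of pixel rows).

    Gradients use central differences on interior pixels; each pixel votes
    with its gradient magnitude into one of n_bins unsigned-angle bins.
    """
    height, width = len(cell), len(cell[0])
    hist = [0.0] * n_bins
    bin_width = 180.0 / n_bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            sx = cell[y][x + 1] - cell[y][x - 1]   # gradient in x
            sy = cell[y + 1][x] - cell[y - 1][x]   # gradient in y
            magnitude = math.hypot(sx, sy)
            angle = math.degrees(math.atan2(sy, sx)) % 180.0
            # The outer modulo guards against an angle that rounds to 180.
            hist[int(angle // bin_width) % n_bins] += magnitude
    return hist

def l1_normalize(vector, eps=1e-5):
    """Scale a vector so that its absolute values sum to roughly one."""
    total = sum(abs(v) for v in vector) + eps
    return [v / total for v in vector]

# Hypothetical 10x10 cell whose intensity increases from left to right.
ramp_cell = [[float(x) for x in range(10)] for _ in range(10)]
print(l1_normalize(cell_orientation_histogram(ramp_cell)))
```

For this horizontal ramp, every interior pixel has a purely horizontal gradient, so all votes fall into the first orientation bin. A full HoG descriptor would repeat this per cell, group cells into blocks, and normalize each block.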

This study used the random forest (RF) algorithm as the ML model for colorectal cancer tissue classification. RF is an ensemble learning method that creates a robust and generalizable model by combining multiple decision trees. The main reason for this choice is that RF performs well on different data sets, including large, complex data sets with high-dimensional feature spaces. RF is resilient to noise and anomalies in the data set. It can also evaluate relationships between variables, increasing the stability of the model, and it mitigates overfitting to the training data. These features support the suitability of the RF algorithm for colorectal cancer tissue classification. Based on preliminary tests and analyses, RF was judged the most suitable model for obtaining reliable results.

RF is a practical tool in biomedical imaging and tissue analysis, offering high-dimensional data processing ability, accuracy, and robustness. Its challenges, such as computational efficiency, potential overfitting, and especially interpretability and explainability, are also significant. The literature notes that the algorithm is feasible and is expected to increase security, particularly in future medical and clinical research. Comprehensive feature selection also plays a critical role in learning and makes the combined results more accurate and robust, which is important for increasing efficiency in medicine and clinical research. [27, 28]

AI-based automation

In this study, a simple CNN architecture was developed for the image classification problem. The model aimed to achieve high performance with low complexity through specific parameters and layers. We trained it using the same H&E images used in the manual analysis part, with the aim of comparing the performance of manual analysis and AI-based automation methods in classifying colorectal cancer tissue images. Table 3 shows the parameter and structure information of the CNN model.


In the manual analysis section, features were first extracted from the images, and the model was then trained on these features. In the AI automation part, by contrast, we used the images directly as input, created a model suitable for the purpose, and carried out the training process. In the AI automation approach, we used local filters, intermediate steps, and a multilayer artificial neural network model to train the base CNN model (Table 3). [10] This table lists the layers, structures, and hyperparameters of the CNN model used in the AI automation section. The model takes images directly as input and applies sequential convolution, batch normalization, and rectified linear unit (ReLU) activation. Finally, it classifies the results with softmax activation and a cross-entropy-based loss function. This structure is aimed at classifying colorectal cancer tissue images and plays an important role in comparing the performance of manual analysis and AI automation methods.

This AI automation model is designed to extract and classify features in histopathological images. In the first layer, the model takes color (RGB) images of 28x28 pixels as input. Input images are processed with "zscore" normalization, which brings the mean of the data to zero and its standard deviation to one. The model's architecture then includes a series of convolutional layers, batch normalization, and ReLU activation functions. Convolution layers move over the image to extract feature maps and highlight important features. Batch normalization helps the network train faster and provides more stable performance. ReLU activation functions set negative activation values to zero, increasing the learning ability of the model. Maximum pooling layers shrink the feature maps and increase the model’s scalability. Through these layers, the model learns features of increasing complexity. Finally, the model uses fully connected layers to assign the learned features to specific classes and applies the softmax activation function to the output.

The softmax function provides a probability distribution over the classes. The cross-entropy loss function then optimizes the learning of the model by comparing these probabilities with the correct classification labels. With this model, AI automation can improve feature extraction and classification capabilities in histopathology image classification tasks.
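The "zscore" input normalization described above can be sketched in a few lines. This is a minimal illustration, not the toolbox's implementation: the pixel values are hypothetical, and the use of the population standard deviation over a single list of values is an assumption (the text does not say whether statistics are computed per channel or over the whole dataset).

```python
import math

def zscore(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    std = math.sqrt(variance)
    if std == 0:
        # A constant input carries no contrast; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

# Hypothetical pixel intensities, for illustration only.
pixels = [0, 64, 128, 192, 255]
normalized = zscore(pixels)
```

After the transformation, the values are centered on zero with unit spread, which is the property the text attributes to the input layer.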

The parameters used to train the model were selected from starting values commonly accepted in the literature. [29] In the early stages of training, different initial learning rates were tried to achieve gradual improvement. Maximum epoch counts of 50 and 100 were considered, allowing the model to follow the training data over a long period. At each epoch, the training data were shuffled so that the model saw the samples in a new order, independent of the previous pass. Batch sizes from 32 to 64 were tried to ensure uniform processing of the samples. Validation data and the evaluation frequency were also chosen at this stage, so that the model was evaluated regularly throughout training. This monitoring helped detect overfitting, which improved generalizability and the reliability of the results. The parameters were determined through trial and error while monitoring the model’s performance. The selected parameters yielded the best results in these tests, and the model learned effectively from the dataset.

During the final training run, we used the following parameter values: an initial learning rate of 0.01 and a maximum of 50 epochs. The training data were shuffled at every epoch, where an epoch is one complete pass over the entire dataset. The batch size was set to 64, meaning that 64 data samples are processed together. The validation frequency was set to 20. This combination of learning rate, number of epochs, and other parameters is specific to the training scenario in this study. We chose these parameters so that the model would achieve the necessary level of success and the best possible fit to the data set.

In this study, the researchers developed a CNN model to improve their ability to extract essential features and classify histopathology images. The model takes 28x28x3 RGB images as input, processing them with "zscore" normalization. The model structure includes three 3x3 convolution layers containing 8, 16, and 32 filters. The ReLU activation function was used after each convolution layer. Additionally, there are 2x2 sized max pooling layers following each convolution layer. In the final stages of the model, there are three fully connected layers; the softmax activation function was preferred as the last layer. In terms of training parameters, explained in Table 4, the model is initially trained with a learning rate of 0.01 and works on the data set for a maximum of 50 epochs. Data shuffling is applied at the end of each epoch, and 64 is selected as the batch size. The performance and generalization ability of the model are continuously monitored with validation dataset evaluations performed every 20 epochs. Determining these parameters ensures that the model adapts to the data set most appropriately and reaches the desired level of success, and also helps the model avoid possible problems such as overfitting during the training process. With this configuration, the model has the necessary feature extraction and classification capabilities to produce effective and accurate results in histopathological image classification.


The cross-entropy loss calculates the difference between the probability distribution that the model predicts and the probability distribution of the actual labels. The formula for binary classification is as follows:
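In its standard form, written with the symbols defined in the text (natural logarithm assumed), the binary cross-entropy for a single sample is:

$$L = -\left[\, y \log(p) + (1 - y)\log(1 - p) \,\right]$$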

... where L represents the loss function, y represents the actual label value (1 or 0), and p represents the probability predicted by the model.

The formula for multiclass classification is usually:
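In its standard form, written with the symbols defined in the text (natural logarithm assumed), the multiclass cross-entropy for a single sample $o$ is:

$$L = -\sum_{c=1}^{M} y_{o,c} \log(p_{o,c})$$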

... where $M$ denotes the number of classes, and $y_{o,c}$ is a binary indicator (1 or 0) of whether instance $o$ belongs to class $c$: if the instance belongs to class $c$, this value is 1; otherwise, it is 0. $p_{o,c}$ is the probability predicted by the model that sample $o$ belongs to class $c$.

One of the reasons for choosing cross-entropy loss is direct probability evaluation. Cross-entropy directly evaluates how close the probabilities produced by the model are to the actual labels, making it a natural choice for classification problems. Faster convergence is another reason for choosing it: the function helps the model converge faster and more efficiently during gradient-based optimization, mainly thanks to the logarithmic component. Because cross-entropy works on the probabilities that determine the model’s classification performance, minimizing it directly improves the model’s ability to predict class labels accurately. Cross-entropy loss also imposes a significant penalty on incorrect predictions, especially in cases where the model is very confident in its incorrect prediction. This discourages the model from making overconfident incorrect predictions.
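The penalty on confident mistakes can be seen numerically. The following is a minimal sketch of the per-sample multiclass cross-entropy; the three probability vectors are hypothetical, and the small `eps` clamp is an assumption added only to avoid taking the logarithm of zero.

```python
import math

def cross_entropy(one_hot, probs, eps=1e-12):
    """Per-sample multiclass cross-entropy: -sum(y_c * log(p_c))."""
    return -sum(y * math.log(max(p, eps)) for y, p in zip(one_hot, probs))

# Hypothetical three-class example; the sample belongs to the second class.
true_label = [0, 1, 0]
confident_right = [0.05, 0.90, 0.05]
unsure = [0.30, 0.40, 0.30]
confident_wrong = [0.90, 0.05, 0.05]

losses = [cross_entropy(true_label, p)
          for p in (confident_right, unsure, confident_wrong)]
```

The loss grows from the confident correct prediction, through the uncertain one, to the confident wrong one, because only the probability assigned to the true class enters the logarithm.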

The CNN model developed in this study was evaluated in terms of computational complexity depending on the model’s architecture and learning process. Our model is analyzed for time and space complexity, considering factors such as interlayer transitions and filter sizes. Each convolution layer has $O(k \cdot n^2)$ time complexity to extract features between adjacent pixels. Here, $k$ represents the filter size and $n^2$ represents the size of the image. Our model has a time complexity of $O(k \cdot n^2 \cdot d)$ for one training epoch, where $d$ is the depth (number of layers). Space complexity is directly related to weight matrices and feature maps and specifies the amount of memory the model requires. As a result of this study, it was observed that the model can scale effectively in large data sets and exhibit high performance in practical applications.

The number of parameters for the first convolutional layer can be calculated using the following equation:

Plugging in our parameters, we get (3 * 3 * 3 + 1) * 8 = 224. The convolution operation is computationally intensive, but with only eight filters, the complexity is moderate.
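The arithmetic above matches the usual count for a convolutional layer: (kernel height × kernel width × input channels + 1 bias) × number of filters. The sketch below applies that count to the three 3x3 convolution layers with 8, 16, and 32 filters. It assumes that each layer's input channels equal the previous layer's filter count, and it ignores batch normalization and fully connected layers; the function name is hypothetical.

```python
def conv_params(kernel_h, kernel_w, in_channels, num_filters):
    """Learnable parameters of one convolutional layer (weights plus one bias per filter)."""
    return (kernel_h * kernel_w * in_channels + 1) * num_filters

filters_per_layer = [8, 16, 32]  # filter counts stated for the three layers
in_channels = 3                  # RGB input
counts = []
for num_filters in filters_per_layer:
    counts.append(conv_params(3, 3, in_channels, num_filters))
    in_channels = num_filters    # assumed: next layer sees one channel per filter
```

Under these assumptions the first layer has the 224 parameters computed in the text, and the later layers grow mainly because they see more input channels.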

Model evaluation

This study used two essential methods to evaluate model performance and obtain reliable results. First, the metrics used to evaluate the model’s performance and the reasons for choosing these metrics are stated. Then, it details why the 10-fold stratified cross-validation method was preferred during the training of the manual analysis model.

It is crucial to choose the right metrics to evaluate model performance. In this study, commonly used metrics such as accuracy, precision, recall, and F1 score were preferred to measure the model’s classification performance, explained in the following equations, respectively:

Accuracy is the ratio of correctly classified samples to the total number of samples; that is, the ratio of true positives and true negatives to the total samples. Precision is the proportion of samples predicted to be positive that are actually positive, i.e., the ratio of true positives to total positive predictions. Recall is the ratio of true positives to the total number of positive samples. While accuracy refers to overall correct predictions, precision and recall evaluate the model’s performance in more detail, especially in unbalanced class distributions. F1 score is a performance measure calculated as the harmonic mean of precision and recall. In unbalanced class distributions, the F1 score is used to evaluate the model’s performance on both classes in a balanced way. This matters especially when the majority class has more samples, since the model must then make true positive and true negative predictions in a balanced way.
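These four definitions can be written directly in terms of true positives (TP), false positives (FP), false negatives (FN), and true negatives (TN). The sketch below is a minimal illustration; the counts passed in are hypothetical and are not results from this study.

```python
def binary_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, and F1 score from binary confusion counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return accuracy, precision, recall, f1

# Hypothetical counts, for illustration only.
accuracy, precision, recall, f1 = binary_metrics(tp=80, fp=10, fn=20, tn=90)
```

Because F1 is a harmonic mean, it always lies between precision and recall and is pulled toward the lower of the two.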

For the model to generalize reliably and to avoid overfitting problems, the 10-fold stratified cross-validation method was preferred. This method divides the dataset into 10 equal folds and uses each fold in turn as validation data while training the model on the remaining nine folds. This process continues until every fold has been used as validation data. Stratification preserves the class proportions of the tissue types in each fold, allowing the model to learn and be evaluated equally on each class. This ensures the model can generalize over various data samples, allowing us to obtain reliable results.
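The fold construction can be sketched as follows. This is a simplified, deterministic illustration of stratification (samples of each class are dealt round-robin into the folds), not the routine used in the study; the label list is hypothetical, and a practical implementation would usually shuffle within each class first.

```python
from collections import Counter, defaultdict

def stratified_folds(labels, k=10):
    """Assign sample indices to k folds so each fold keeps the class proportions."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        # Deal the samples of one class round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    return folds

# Hypothetical labels: 40 normal and 60 tumor samples.
labels = ["NORM"] * 40 + ["TUM"] * 60
folds = stratified_folds(labels, k=10)
fold_counts = [Counter(labels[i] for i in fold) for fold in folds]

# Each fold serves once as validation data; the other nine form the training set.
splits = []
for held_out in range(len(folds)):
    validation = folds[held_out]
    training = [i for f, fold in enumerate(folds) if f != held_out for i in fold]
    splits.append((training, validation))
```

With these labels, every fold holds 4 normal and 6 tumor samples, mirroring the 40:60 ratio of the full set.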

In this study, a paired t-test was used to check that the classification results of the proposed approach were not obtained by chance. The significance of differences in overall accuracy between the models was assessed by calculating paired t-test p-values. Statistical analysis was performed using the stats module of the SciPy library (version 1.11.3) [30] in Python (version 3.8). P-values less than 0.05 were considered statistically significant. The p-values of the paired t-tests are reported in the Results section.
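The statistic behind a paired t-test can be sketched with the standard library alone. The accuracy values below are hypothetical and only illustrate the calculation. The function returns the t statistic and degrees of freedom; turning these into a p-value requires the t distribution, which in SciPy is handled by `scipy.stats.ttest_rel`.

```python
import math
import statistics

def paired_t_statistic(a, b):
    """t statistic and degrees of freedom for paired samples a and b."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1

# Hypothetical paired accuracies (e.g. one pair per evaluation run).
acc_normalized = [0.91, 0.90, 0.92, 0.89, 0.91]
acc_raw = [0.75, 0.76, 0.74, 0.77, 0.75]
t_stat, dof = paired_t_statistic(acc_normalized, acc_raw)
```

A large absolute t value relative to the degrees of freedom corresponds to a small p-value, which is what the 0.05 threshold in the text is compared against.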

Within the scope of this research, a CNN model was developed using MATLAB R2023a and AI automation Toolbox 16.3 versions for AI automation tasks. MATLAB was used for data manipulation, training, and evaluation of results. The manual analysis classification model was built using Python 3.8 along with the deep learning model. This model is integrated with scikit-learn (v0.24.2) and NumPy (v1.20.3) libraries. Python has been used in feature extraction and classification tasks. In terms of hardware, the study was run on a personal computer, MacBook Air, with an Apple M1 chip with eight cores and 8 GB of memory. macOS Big Sur (v11.2.3) was used as the operating system. These hardware and software configurations increase the understandability of the methodology, ensure the reproducibility of results, and facilitate comparability of similar studies.

Our research used a large dataset of H&E images of various classes. Specifically, the dataset contains 10,407 ADI, 10,566 BACK, 11,512 DEB, 11,557 LYM, 8,896 MUC, 13,536 MUS, 8,763 NORM, 10,446 STR, and 14,317 TUM images for training, and the test sets are detailed in Table 2. Remarkably, training our CNN model on a dataset of approximately 100,000 images was completed in 200 seconds, demonstrating that our approach is practical even with large-scale data.
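The scale of the training run follows from the class counts above together with the batch size of 64 and the maximum of 50 epochs stated earlier. The sketch below is simple arithmetic; it assumes the final, partially filled batch of each epoch is kept, which some frameworks handle differently.

```python
import math

# Training image counts per class, as listed in the text.
train_counts = {
    "ADI": 10407, "BACK": 10566, "DEB": 11512, "LYM": 11557, "MUC": 8896,
    "MUS": 13536, "NORM": 8763, "STR": 10446, "TUM": 14317,
}
batch_size = 64   # stated batch size
max_epochs = 50   # stated maximum number of epochs

total_images = sum(train_counts.values())
iterations_per_epoch = math.ceil(total_images / batch_size)  # assumes partial batch is kept
total_iterations = iterations_per_epoch * max_epochs
```

The class counts sum to 100,000 images, which gives 1,563 iterations per epoch under this assumption.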

Results

Our classification study started with a comprehensive examination aimed at distinguishing normal and tumor tissues selected from a diverse collection of nine distinct tissue types. This initial phase of our research involved utilizing HoG features extracted from the images. We employed the random forest classifier model to assess the effectiveness of this approach.

For a more visual representation of our results, the figures in the supplementary part show the confusion matrices derived from the CNN model and the manual analysis results. These provide a comprehensive view of the classification performance.

These confusion matrices provide in-depth information about the model’s ability to classify tissues in a high-dimensional dataset. In particular, the ADI class has a high accuracy rate in both the normalized and non-normalized scenarios. The LYM class exhibits low sensitivity when the data are not normalized. Furthermore, the TUM class demonstrates high accuracy and sensitivity, especially in the normalized scenario, which indicates that the model can accurately identify cancerous tumors.

On the other hand, the STR class has low accuracy and sensitivity, particularly in the non-normalized scenario. This may indicate that this tissue is more challenging to categorize than the others. These matrices are therefore an essential instrument for assessing the performance of the model on various types of tissue and for understanding the model’s strengths and shortcomings. In addition, they shed light on the challenges associated with classifying uncommon classes and on the potential for normalization to ease these challenges. These findings may provide valuable direction for future work to enhance the model and improve its performance.

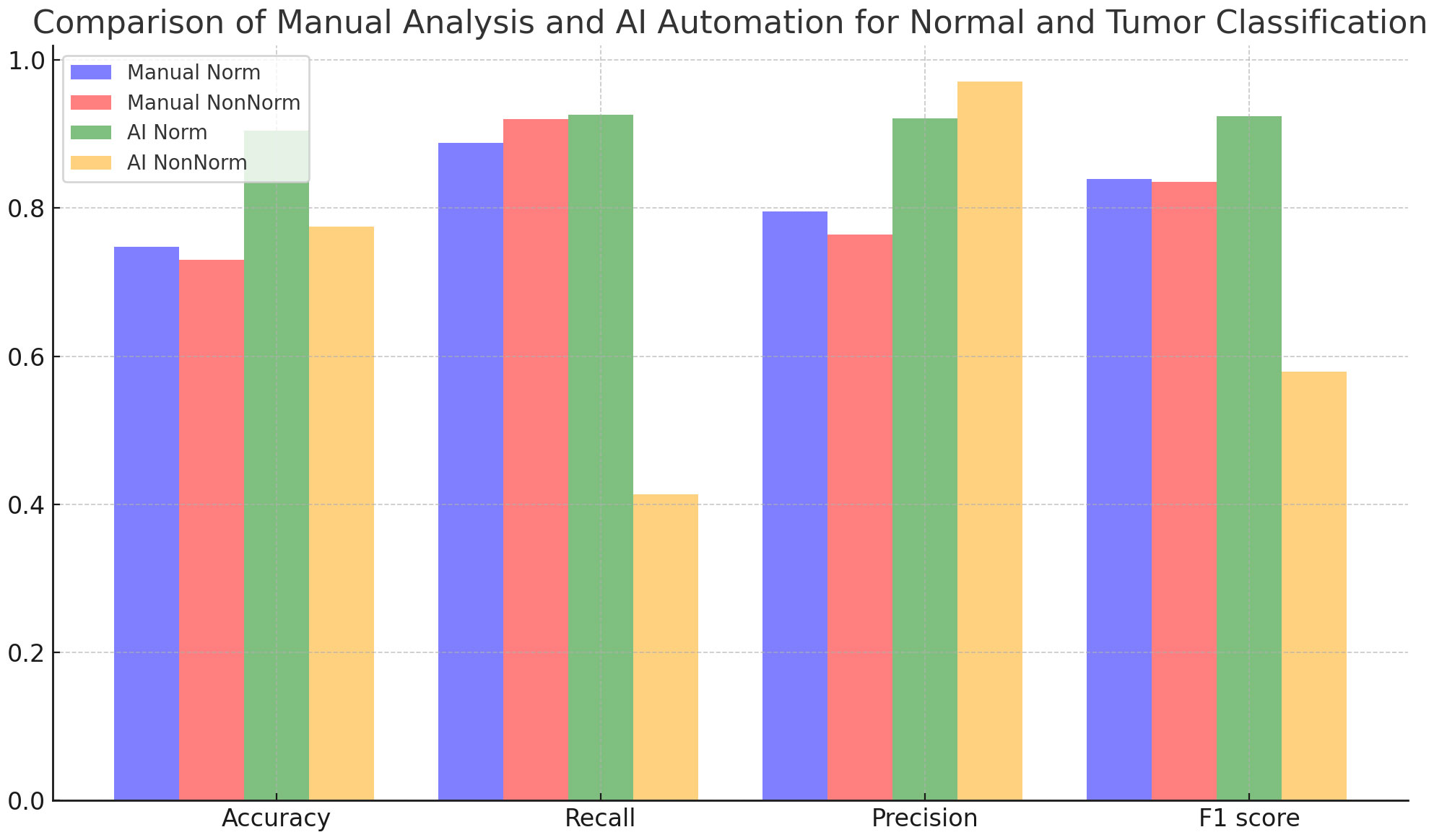

Figure 4 and Table 5 evaluate the effect of normalization in four different cases, with and without normalization, and include accuracy results obtained with manual analysis and AI automation models for different tissues. When normalized, manual analysis accuracy for N-T tissues increased from 0.75 to 0.91, which was highly significant ($p = 7.72 \times 10^{-41}$). Without normalization, accuracy increased from 0.74 to 0.78 for the same comparison, which was statistically significant (p = 0.0033). Additionally, the effect of normalization varies between tissues; significant changes in accuracy are observed depending on the tissue (p < 0.05). Because these p-values are below the 0.05 significance level, the differences between the analyzed results are unlikely to be coincidental, and the reliability of the findings is statistically supported.


Table 6 compares the classification results obtained by manual analysis with and without normalization. Although normalization increased accuracy, this was not statistically significant (p = 0.2108). Recall decreased slightly with normalization, and this decrease was statistically significant (p = 0.0005). Precision increased with normalization, which was statistically significant (p = 0.0162). Regarding the F1 score, the effect of normalization was not statistically significant (p = 0.7144).


Table 7 contains an analysis in which normal and tumor classifications performed by AI automation are evaluated under the influence of normalization. Normalization caused statistically significant improvements in accuracy, recall, precision, and F1 score ($p = 1.08 \times 10^{-28}$, $p = 1.66 \times 10^{-26}$, $p = 3.87 \times 10^{-12}$, and $p = 2.01 \times 10^{-14}$, respectively). These results show that normalization is efficacious in improving AI automation-based classification performance.


Tables 8 and 9 and Figures 5 and 6 also include the multiclass classification results using AI automation for all classes before and after normalization was applied. With normalization, the evaluation metrics increase, especially for low-performing classes, showing that the model’s classification improves significantly. Table 8 contains two values of particular significance: 0 and NaN (Not a Number) within the “BACK” category. This may indicate that classification failed for this class or that specific metrics could not be calculated. As shown in Table 9, an overall performance improvement was observed after normalization, and these NaN and 0 values were replaced by valid scores. As a result of normalization, the performance across classes became more consistent and even. This suggests that normalization makes the model’s output more robust and consistent.
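One way a 0 and a NaN can arise together for a single class is when the model never predicts that class: recall is then 0, and precision is 0/0, which is undefined. The sketch below illustrates this with a hypothetical three-class confusion matrix. It is offered as a possible explanation under that assumption, not as a reconstruction of Table 8.

```python
import math

def per_class_metrics(confusion, class_names):
    """Precision and recall per class; confusion[i][j] counts true class i predicted as j."""
    results = {}
    for k, name in enumerate(class_names):
        tp = confusion[k][k]
        predicted = sum(row[k] for row in confusion)  # column total
        actual = sum(confusion[k])                    # row total
        precision = tp / predicted if predicted else math.nan
        recall = tp / actual if actual else math.nan
        results[name] = (precision, recall)
    return results

# Hypothetical counts: no sample is ever predicted as BACK.
classes = ["ADI", "BACK", "TUM"]
confusion = [
    [9, 0, 1],
    [6, 0, 4],
    [2, 0, 8],
]
class_metrics = per_class_metrics(confusion, classes)
```

Here the BACK column is empty, so its precision is NaN and its recall is 0, while the other classes receive ordinary scores.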


Discussion

References

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, though grammar and word usage were substantially updated for improved readability. In some cases important information was missing from the references, and that information was added.