LII:Choosing and Implementing a Cloud-based Service for Your Laboratory/What is cloud computing?

1. What is cloud computing?

If you were alive in the late 2000s and doing most anything related to computers and the internet, you were bound to encounter the latest internet buzzword: cloud computing.[1][2] A certain mysticism was seemingly attached to the concept, that your files and applications could reside on the internet, "out there in the 'cloud.'"[1] "But what is this 'cloud'?" many would ask. A plethora of media articles, journal articles, blogs, and company websites were published to give practically everyone's take on what the cloud was and wasn't meant to be.[3] However, the then-growing consensus of cloud computing as a networked and scalable architecture meant to rapidly provide application and infrastructure services at reasonable prices to internet users[2][3] largely matches up with today's definition. Pulling from both The Institution of Engineering and Technology[4] and Amazon Web Services[5], we can define cloud computing as:

an internet-based computing paradigm in which standardized and virtualized resources are used to rapidly, elastically, and cost-effectively provide a variety of globally available, "always-on" computing services to users on a continuous or as-needed basis

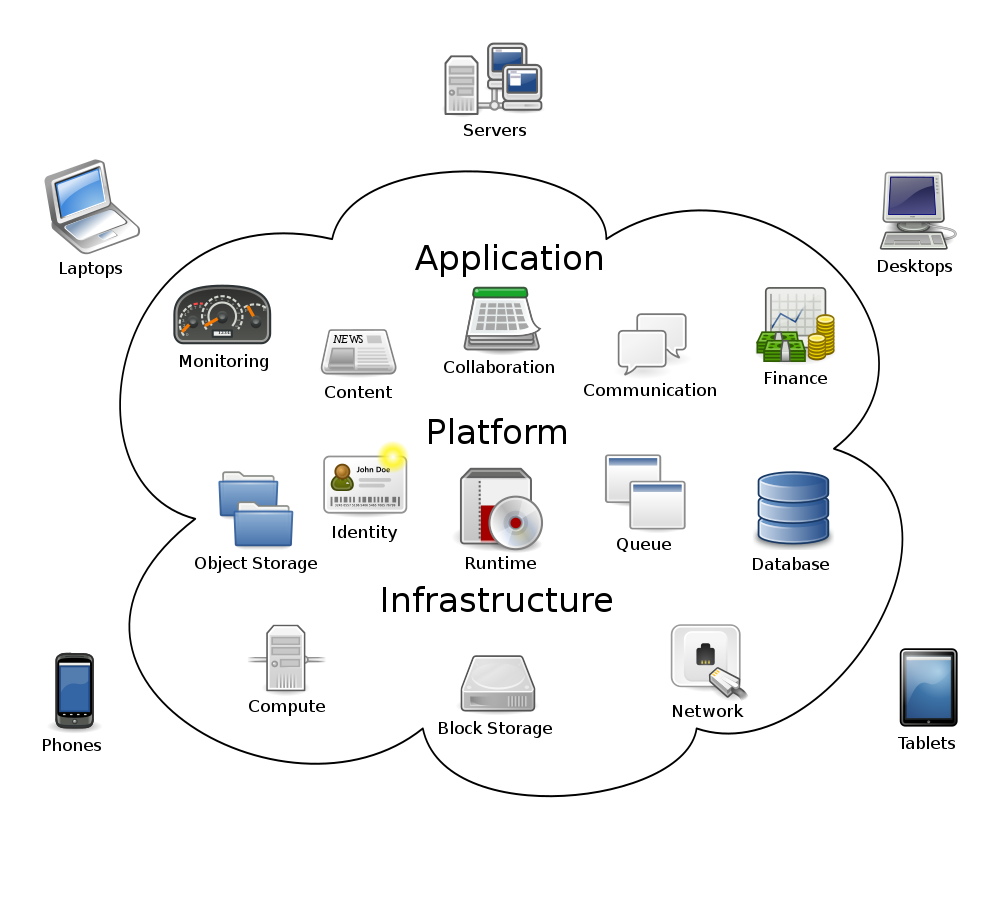

Of course, those computing services come in a variety of flavors, the most common being software, platform, and infrastructure "as a service" (SaaS, PaaS, and IaaS, respectively). These conveniently correspond to the underlying architectural layers of the services, with infrastructure at the base, platform on top of that, and software (or application) on top of that.

Figure 1 portrays a simplified visualization of cloud computing architecture layers, as well as examples of activities that happen on those layers. This concept has also been visualized by others using pyramids and pancake stacks of layers, but the idea remains the same. At the base is the computing infrastructure, including the physical data centers and their networking equipment, servers, hypervisors, application programming interfaces (APIs), and operating systems. This infrastructure not only supports the applications users want to run but also acts as the development foundation for users who don't want to implement their own infrastructure. On top of all that can be found platforms or middleware, which serve as software development and deployment environments (that include databases, web servers, load balancers, etc.) or connectivity tools for analytics, workflow management, system integration, and security management. And on top of that are applications, typically designed to run optimally in cloud environments and accessed via web browsers or apps using internet (i.e., networking) connectivity and computing devices.[6]

Customers who require application hosting, internet-hosted software development platforms, or underlying computing infrastructure (e.g., data storage, computational time, etc.), particularly when they can't or don't want to invest in their own hardware, are increasingly turning to the cloud computing paradigm. Even before the worldwide COVID-19 pandemic started to take shape in late 2019, the global cloud services market was expected to reach $266.4 billion by the end of 2020, with Gartner expecting that to represent a 17 percent increase from 2019.[7] As work-from-home practices expanded significantly in 2020 due to the pandemic, expectations that the trend would last post-pandemic pushed estimates of the share of overall workloads moving from physical work offices to the cloud to 55 percent by 2022, with the cloud services market reaching $600 billion in 2023[8] and $1 trillion by 2030.[9] This growing migration to cloud computing has many implications for organizations of all types, including laboratories.

1.1 History and evolution

Cloud computing has its strongest origins in the "web services" phase of internet development. In November 2000, Mind Electric CEO and distributed computing visionary Graham Glass, writing for IBM, described web services as "building blocks for creating open distributed systems" that "allow companies and individuals to quickly and cheaply make their digital assets available worldwide," while prognosticating that web services "will catalyze a shift from client-server to peer-to-peer architectures."[10] At that point, the likes of Microsoft and IBM were already developing toolkits for creating and deploying web services[10], with IBM releasing an initial high-level report in May 2001 on IBM's web services architecture approach. In that paper, web services were described by its author Heather Kreger as allowing "companies to reduce the cost of doing e-business, to deploy solutions faster, and to open up new opportunities," while also allowing "applications to be integrated more rapidly, easily, and less expensively than ever before."[11]

Here's a recap of thinking on web services at the turn of the century:

- "[act as] building blocks for creating open distributed systems"[10]

- "quickly and cheaply make ... digital assets available worldwide"[10]

- "catalyze a shift from client-server to peer-to-peer architectures"[10]

- "reduce the cost of doing e-business, to deploy solutions faster, and to open up new opportunities"[11]

- "[allow] applications to be integrated more rapidly, easily, and less expensively than ever before"[11]

We'll come back to that. For the next stop, however, we have to consider the case of Amazon and how they viewed web services at that time. Leading up to the twenty-first century, Amazon was beginning to expand beyond its book selling roots, opening up its marketplace to other third parties (affiliates) to sell their own goods on Amazon's platform. That effort required an expansion of IT infrastructure to support web-scale third-party selling, but as it turned out, a lot of that IT infrastructure, while reliable and cost-effective, had been previously added piecemeal, with many components getting "tangled" along the way. Amazon project leads and external partners were clamoring for better infrastructure services. This required untangling the IT and associated provider data into an internally scalable, centralized infrastructure that allowed for smoother communication and data management using well-documented APIs.[12][13] By 2003, the company was indirectly acting as a services industry to its partners. "Why not act upon this strength?" was the sentiment that quickly developed that year, with Amazon choosing to use its internal compute, storage, and database infrastructure and related expertise to its advantage.[13]

At that point, the paradigm of web services expanded to include infrastructure as a service or IaaS, with compute, storage, and database services running over the internet for web developers to utilize.[12][13] "If you believe developers will build applications from scratch using web services as primitive building blocks, then the operating system becomes the internet," noted AWS CEO Andy Jassy in a 2015 retrospective interview.[12] From that concept evolved the idea of determining what it would take to allow any entity to run their technology applications over Amazon's web-service-based IaaS platform. In August 2006, Amazon introduced its Amazon Elastic Compute Cloud (Amazon EC2), "a web service that provides resizable compute capacity in the cloud."[14][15] This quickly prompted others in academic and scientific fields to continue the conversation of turning IT and its infrastructure into a service.[15][16] In turn, conversations changed, discussing the opportunities inherent to "cloud computing," including Google and IBM partnering to virtualize computers on new data centers for boosting academic research and teaching new computer science students[17][18], IBM releasing a white paper on cloud computing[19] and announcing its Blue Cloud initiative[20], and Google doubling down on its cloud-based software offerings in competition with Microsoft.[21]

In IBM's 2007 white paper, they described cloud computing as a "pool of virtualized computer resources" that can[19]:

- "host a variety of different workloads, including batch-style back-end jobs and interactive, user-facing applications";

- "allow workloads to be deployed and scaled-out quickly through the rapid provisioning of virtual machines or physical machines";

- "support redundant, self-recovering, highly scalable programming models that allow workloads to recover from many unavoidable hardware/software failures";

- "monitor resource use in real time to enable rebalancing of allocations when needed"; and

- "be a cost efficient model for delivering information services, reducing IT management complexity, promoting innovation, and increasing responsiveness through real-time workload balancing."

In 2011, the National Institute of Standards and Technology (NIST) came up with a more standards-based definition of cloud computing. They described it as "a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction."[22] They went on to highlight five essential characteristics of that model[22]:

- On-demand self-service: The unilateral provision of computing resources should be an automatic or nearly automatic process.

- Broad network access: Thin- or thick-client platforms, both hardwired and mobile, should allow for standardized, networkable access to those computing resources.

- Resource pooling: A multi-tenant model requires the provisioning of resources to serve a wide customer base, with a layer of abstraction that gives the user a sense of location independence from those resources.

- Rapid elasticity: The platform's resources should be readily and/or automatically scalable commensurate with demand, such that the user sees no negative impact in their activities.

- Measured service: The resources should be automatically controlled and optimized by a measured service or metering system, transparently providing accurate and timely information about resource usage.

When we compare these 2007 and 2011 definitions of cloud computing with the comments on web services by Glass and Kreger at the turn of the century (as well as our own derived definition prior), we can't help but see how the early vision for cloud computing has taken shape today. First, web services can indeed be paired with other technologies to form a distributed system, in this case a centralized and scalable computing infrastructure that can be used by practically anyone to run software, develop applications, and "host a variety of different workloads."[19] Second, those workloads can be quickly deployed worldwide, wherever there is internet access, and typically at a fair price, when compared to the costs of on-premises data management.[23] Third, new opportunities are indeed developing for organizations seeking to tap into the on-demand, rapid, scalable, and cost-efficient nature of cloud computing.[24][25] And finally, benefits are being seen in the integration of applications via the cloud, particularly as more options for multicloud and hybrid cloud integration develop.[26] The early vision that perhaps hasn't been realized is found in Glass' "shift from client-server to peer-to-peer architectures," though discussions about the promise of peer-to-peer cloud computing have occurred since.[27]

Though clearly linked to web services and the early vision of cloud computing in the 2000s, the cloud computing of the 2020s is a remarkably more advanced and continually evolving technology. However, it's still not without its challenges today. The data security, privacy, and governance of computing in general, and cloud computing in particular, will continue to require more rigorous approaches, as will reducing remaining data silos in organizations with pivots to hybrid cloud, multicloud, and serverless cloud implementations.[28][29] But what is "hybrid cloud"? "Serverless cloud"? The next section goes into further detail.

1.2 Cloud computing services and deployment models

You've probably heard terms like "software as a service" and "public cloud," and you may very well be familiar with their significance already. However, let's briefly run through the terminology associated with cloud services and deployments, as that terminology gets used abundantly, and it's best we're all clear on it from the start. Additionally, the cloud computing paradigm is expanding into areas like "hybrid cloud" and "serverless computing," concepts which may be new to many.

Mentioned earlier was NIST's 2011 definition of cloud computing. When that was published, NIST defined three service models and four deployment models (Table 1)[22]:

Table 1. The three service models and four deployment models for cloud computing, as defined by the National Institute of Standards and Technology (NIST) in 2011[22]

| Model | Description |
|---|---|
| Service models | |
| Software as a Service (SaaS) | "The capability provided to the consumer is to use the provider’s applications running on a cloud infrastructure. The applications are accessible from various client devices through either a thin client interface, such as a web browser (e.g., web-based email), or a program interface. The consumer does not manage or control the underlying cloud infrastructure including network, servers, operating systems, storage, or even individual application capabilities, with the possible exception of limited user-specific application configuration settings." |
| Platform as a Service (PaaS) | "The capability provided to the consumer is to deploy onto the cloud infrastructure consumer-created or acquired applications created using programming languages, libraries, services, and tools supported by the provider. The consumer does not manage or control the underlying cloud infrastructure including network, servers, operating systems, or storage, but has control over the deployed applications and possibly configuration settings for the application-hosting environment." |
| Infrastructure as a Service (IaaS) | "The capability provided to the consumer is to provision processing, storage, networks, and other fundamental computing resources where the consumer is able to deploy and run arbitrary software, which can include operating systems and applications. The consumer does not manage or control the underlying cloud infrastructure but has control over operating systems, storage, and deployed applications; and possibly limited control of select networking components (e.g., host firewalls)." |
| Deployment models | |
| Private cloud | "The cloud infrastructure is provisioned for exclusive use by a single organization comprising multiple consumers (e.g., business units). It may be owned, managed, and operated by the organization, a third party, or some combination of them, and it may exist on or off premises." |
| Community cloud | "The cloud infrastructure is provisioned for exclusive use by a specific community of consumers from organizations that have shared concerns (e.g., mission, security requirements, policy, and compliance considerations). It may be owned, managed, and operated by one or more of the organizations in the community, a third party, or some combination of them, and it may exist on or off premises." |
| Public cloud | "The cloud infrastructure is provisioned for open use by the general public. It may be owned, managed, and operated by a business, academic, or government organization, or some combination of them. It exists on the premises of the cloud provider." |
| Hybrid cloud | "The cloud infrastructure is a composition of two or more distinct cloud infrastructures (private, community, or public) that remain unique entities, but are bound together by standardized or proprietary technology that enables data and application portability (e.g., cloud bursting for load balancing between clouds)." |

Nearly a decade later, the picture painted in Table 1 is now more nuanced and varied, with slight changes in definitions, as well as additions to the service and deployment models. Cloudflare actually does a splendid job of describing these service and deployment models, so let's paraphrase from them, as seen in Table 2.

| Table 2. A more modern look at service models and deployment models for cloud computing, as inspired largely by Cloudflare | |

| Service models | |

|---|---|

| Model | Description |

| Software as a Service (SaaS) | This service model allows customers, via an internet connection and a web browser or app, to use software provided by and operated upon a cloud provider and its computing infrastructure. The customer isn't worried about anything hardware-related; from their perspective, they just want the software hosted by the cloud provider to be reliably and effectively operational. If the desired software is tied to strong regulatory or security standards, however, the customer must thoroughly vet the vendor, and even then, there is some risk in taking the word of the vendor that the application is properly secure since customers usually won't be able to test the software's security themselves (e.g., via a penetration test).[30] |

| Platform as a Service (PaaS) | This service model allows customers, via an internet connection and a web browser, to use not only the computing infrastructure (e.g., servers, hard drives, networking equipment) of the cloud provider but also development tools, operating systems, database management tools, middleware, etc. required to build web applications. As such, this allows a development team to spread around the world and still productively collaborate using the cloud provider's platform. However, app developers are essentially locked into the vendor's development environment, and additional security challenges may be introduced if the cloud provider has extended its infrastructure to one or more third parties. Finally, PaaS isn't truly "serverless," as applications won't automatically scale unless programmed to do so, and processes must be running most or all the time in order to be immediately available to users.[31] |

| Infrastructure as a Service (IaaS) | This service model allows customers to use the computing infrastructure of a cloud provider, via the internet, rather than invest in their own on-premises computing infrastructure. From their internet connection and a web browser, the customer can set up and allocate the resources required (i.e., scalable infrastructure) to build and host web applications, store data, run code, etc. These activities are often facilitated with the help of virtualization and container technologies.[32] |

| Function as a Service (FaaS) | This service model allows customers to run isolated or modular bits of code, preferably on local or "edge" cloud servers (more on that later), when triggered by an element, such as an internet-connected device taking a reading or a user selecting an option in a web application. The customer has the luxury of focusing on writing and fine-tuning the code, and the cloud provider is the one responsible for allocating the necessary server and backend resources to ensure the code is run rapidly and effectively. As such, the customer doesn't have to think about servers at all, making FaaS a "serverless" computing model.[33] |

| Backend as a Service (BaaS) | This service model allows customers to focus on front-end application services like client-side logic and user interface (UI), while the cloud provider provides the backend services for user authentication, database management, data storage, etc. The customer uses APIs and software development kits (SDKs) provided by the cloud provider to integrate customer frontend application code with the vendor's backend functionality. As such, the customer doesn't have to think about servers, virtual machines, etc. However, in most cases, BaaS isn't truly serverless like FaaS, as actions aren't usually triggered by an element but rather run continuously (not as scalable as serverless). Additionally, BaaS isn't generally set up to run on the network's edge.[34] |

| Deployment models | |

| Model | Description |

| Private cloud | This deployment model involves the provision of cloud computing infrastructure and services exclusively to one customer. Those infrastructure and service offerings may be hosted locally on-site or be remotely and privately managed and accessed via the internet and a web browser. Those organizations with high security and regulatory requirements may benefit from a private cloud, as they have direct control over how those policies are implemented on the infrastructure and services (i.e., don't have to consider the needs of other users sharing the cloud, as in public cloud). However, private cloud may come with higher costs.[35] |

| Community cloud | This deployment model, while not discussed often, still has some relevancy today. This model falls somewhere between private and public cloud, allowing authorized customers who need to work jointly on projects and applications, or require specific computing resources, in an integrated manner. The authorized customers typically have shared interests, policies, and security/regulatory requirements. Like private cloud, the computing infrastructure and services may be hosted locally at one of the customer's locations or be remotely and privately managed, with each customer accessing the community cloud via the internet. Given a set of common interest, policies, and requirements, the community cloud benefits all customers using the community cloud, as does the flexibility, scalability, and availability of cloud computing in general. However, with more users comes more security risk, and more detailed role-based or group-based security levels and enforcement may be required. Additionally, there must be solid communication and agreement among all members of the community to ensure the community cloud operates as efficiently and securely as possible.[36] |

| Public cloud | This deployment model is what typically comes to mind when "cloud computing" is discussed, involving a cloud provider that provides computing resources to multiple customers at the same time, though each individual customer's applications, data, and resources remain "hidden" from all other customers not authorized to view and access them. Those provided resources come in many different services model, with SaaS, PaaS, and IaaS being the most common. Traditionally, the public cloud has been touted as being a cost-effective, less complex, relatively secure means of handling computing resources and applications. However, for organizations tied to strong regulatory or security standards, the organizaiton must thoroughly vet the cloud vendor and its approach to security and compliance, as the provider may not be able to meet regulatory needs. There's also the concern of vendor lock-in or even loss of data if the customer becomes too dependent on that one vendor's services.[37] |

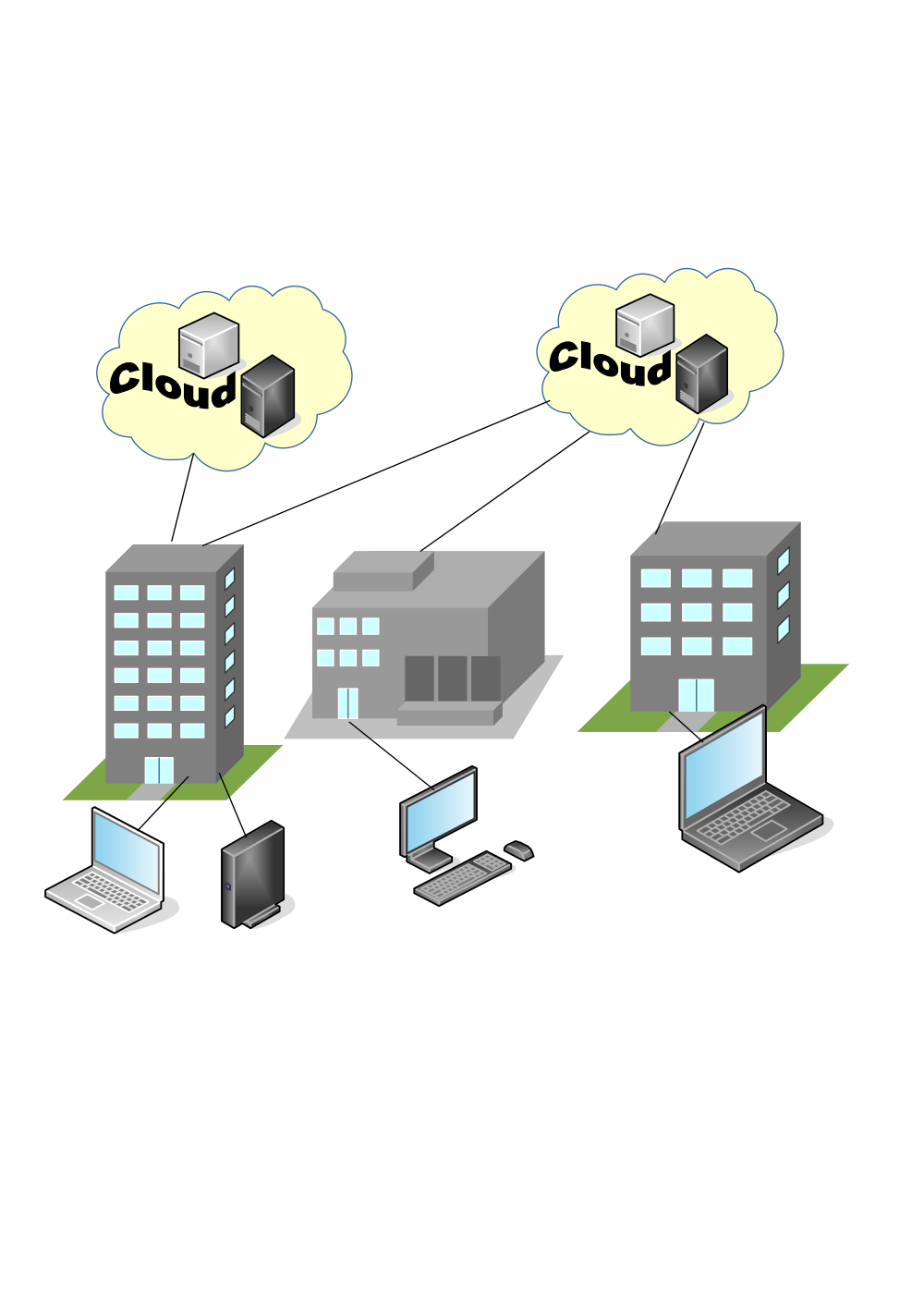

| Hybrid cloud | This deployment model takes both private cloud and public cloud models and tightly integrates them, along with potentially any existing on-premises computing infrastructure. (Figure 2) The optimal end result is one seamless operating computing infrastructure, e.g., where a private cloud and on-premises infrastructure houses critical operations and a public cloud is used for data and information backup or computing resource scaling. Advantages include great flexibility in deployments, improved backup options, resource scalability, and potential cost savings. Downsides include greater effort required to integrate complex systems and make them sufficiently secure. Note that hybrid cloud is different from multicloud in that it combines both public and private computing components.[38] |

| Multicloud | This deployment model takes the concept of public cloud and multiplies it. Instead of the customer relying on a singular public cloud provider, they spread their cloud hosting, data storage, and application stack usage across more than one provider. The advantage to the customer is redundancy protection for data and systems and better value by tapping into different services. Downside comes with increased complexity in managing a multicloud deployment, as well as the potential for increased network latency and a greater cyber-attack surface. Note that multicloud requires multiple public clouds, though a private cloud can also be in the mix.[39] |

| Distributed cloud | This up-and-coming deployment model takes public cloud and expands it, such that a provider's public cloud infrastructure can be located in multiple places but be leveraged by a customer wherever they are, from a single control structure. As IBM puts it: "In effect, distributed cloud extends the provider's centralized cloud with geographically distributed micro-cloud satellites. The cloud provider retains central control over the operations, updates, governance, security, and reliability of all distributed infrastructure."[40] Meanwhile, the customer can still access all infrastructure and services as an integrated cloud from a single control structure. The benefit of this model is that, unlike multicloud, latency issues can largely be eliminated, and the risk of failures in infrastructure and services can be further mitigated. Customers in regulated environments may also see benefits as data required to be in a specific geographic location can be better guaranteed with the distributed cloud. However, some challenges to this distributed model involve the allocation of public cloud resources to distributed use and determining who's responsible for bandwidth use.[41] |

While Table 2 addresses the basic ideas inherent to these service and deployment models, even providing some upside and downside notes, we still need to make further comparisons in order to highlight some fundamental differences in otherwise seemingly similar models. Let's first compare PaaS with serverless computing or FaaS. Then we'll examine the differences among hybrid, multi-, and distributed cloud models.

1.2.1 Platform-as-a-service vs. serverless computing

As a service model, platform as a service or PaaS uses both the infrastructure layer and the platform layer of cloud computing. Hosted on the infrastructure are platform components like development tools, operating systems, database management tools, middleware, and more, which are useful for application design, development, testing, and deployment, as well as web service integration, database integration, state management, application versioning, and application instrumentation.[42][43][44] In that regard, the user of the PaaS need not think about the backend processes.

Similarly, serverless computing or FaaS largely abstracts away (think "out of sight, out of mind") the servers running any software or code the user chooses to run on the cloud provider's infrastructure. The user practically doesn't need to know anything about the underlying hardware and operating system, or how that hardware and software handles the computational load of running their software or code, as the cloud provider ends up completely responsible for that. However, this is where the similarities stop.

Let's use Amazon's AWS Lambda serverless computing service as an example for comparison with PaaS. Imagine you have some code you want run on your website when an internet of things (IoT) device in the field takes a reading for your environmental laboratory. From your AWS Lambda account, you can "stage and invoke" the code you've written (it can be in any programming language) "from within one of the support languages in the AWS Lambda runtime environment."[45][46] In turn, that runtime environment runs on top of Amazon Linux 2, an Amazon-developed version of Red Hat Enterprise Linux running as a server operating system.[47] This can then be packaged into a container image that runs on Amazon's servers.[46] When a designated event occurs (in this example, the internet-connected device taking a reading), a message is communicated, typically via an API, to the serverless code, which is then triggered, and AWS Lambda provisions only the necessary resources to see the code to completion. The AWS server then spins down those resources afterwards.[48] Yes, there are servers still involved, but the critical point is that the customer need only properly package their code, without any concern whatsoever for how the AWS server manages its use and performance. In that regard, the code is said to be run in a "serverless" fashion, not because servers aren't involved but because the code developer is effectively abstracted from the servers running and managing the code; the developer is left to worry only about the code itself.[48][49]
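To make the event-triggered idea more concrete, here is a minimal sketch of what such a function might look like in Python. The `(event, context)` handler signature is the usual convention for Python functions on AWS Lambda, but everything else is an assumption made for illustration: the payload fields (`sensor_id`, `reading`), the `ALERT_THRESHOLD` value, and the shape of the response are hypothetical and not taken from any particular laboratory system or from the sources cited here.

```python
import json

# Hypothetical limit above which a reading is flagged for review.
ALERT_THRESHOLD = 8.5


def lambda_handler(event, context):
    """Run once per triggering event; no server management code is needed here."""
    # Assumption: the event either carries the reading directly as a dict, or
    # wraps it as a JSON string under "body" (as an HTTP API trigger might).
    body = event.get("body")
    payload = json.loads(body) if isinstance(body, str) else event

    sensor_id = payload["sensor_id"]
    reading = float(payload["reading"])

    # The developer's only concern is this logic; provisioning and
    # scaling the compute that runs it is left to the cloud provider.
    result = {
        "sensor_id": sensor_id,
        "reading": reading,
        "flagged": reading > ALERT_THRESHOLD,
    }
    return {"statusCode": 200, "body": json.dumps(result)}
```

Calling `lambda_handler({"sensor_id": "ph-01", "reading": 9.2}, None)` locally mimics a single trigger. In a real deployment the provider would invoke the handler, and the code would more likely store or forward the reading than simply return it.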

PaaS is not serverless, however. First, a truly serverless model is significantly different in its scalability. The serverless model is meant to instantly provide computing resources based upon a "trigger" or programmed element, and then wind down those resources. This is perfect for the environmental lab wanting to upload remote sensor data to the cloud after each collection time; only the resources required for performing the action to completion are required, minimizing cost. However, this doesn't work well for a PaaS solution, which doesn't scale up automatically unless specifically programmed to. Sure, the developer using PaaS has more control over the development environment, but resources must be scaled up manually and left continuously running, making it less agile than serverless. This makes PaaS more suitable for more prescriptive and deliberate application development, though its usage-based pricing is a bit less precise than serverless. Additionally, serverless models aren't typically offered with development tools, as usually is the case with PaaS, so the serverless code developer must turn to their own development tools.[31][50]
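The cost difference between a continuously running platform and per-trigger execution can be sketched with some simple arithmetic. All rates and workload figures below are hypothetical placeholders chosen only to show the shape of the comparison; they are not actual prices from any cloud provider, and real billing typically includes additional factors (memory size, request fees, free tiers, data transfer).

```python
HOURS_PER_MONTH = 730  # approximate average number of hours in a month


def always_on_cost(hourly_rate, hours=HOURS_PER_MONTH):
    """Cost of a process that is left running whether or not it is used."""
    return hourly_rate * hours


def per_invocation_cost(invocations, seconds_each, rate_per_second):
    """Cost when resources are billed only while triggered code is running."""
    return invocations * seconds_each * rate_per_second


def break_even_invocations(hourly_rate, seconds_each, rate_per_second,
                           hours=HOURS_PER_MONTH):
    """Invocation count at which both billing models cost the same."""
    return always_on_cost(hourly_rate, hours) / (seconds_each * rate_per_second)


# Hypothetical workload: 20 field sensors, each uploading 4 times per hour.
uploads = 20 * 4 * HOURS_PER_MONTH

# Hypothetical rates, for illustration only.
paas_bill = always_on_cost(hourly_rate=0.05)
serverless_bill = per_invocation_cost(uploads, seconds_each=0.5,
                                      rate_per_second=0.00002)
threshold = break_even_invocations(0.05, 0.5, 0.00002)

print(f"Always-on platform: ${paas_bill:.2f} per month")
print(f"Per-invocation:     ${serverless_bill:.2f} per month")
print(f"Break-even at about {threshold:,.0f} invocations per month")
```

Under these assumed numbers, the intermittent sensor workload is far cheaper when billed per invocation. The break-even figure shows the other side: a workload that is busy nearly all the time could favor the always-on model.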

1.2.2 Hybrid cloud vs. multicloud vs. distributed cloud

At a casual glance, one might be led to believe these three deployment models aren't all that different. However, there are some core differences to point out, which may affect an organization's deployment strategy significantly. As Table 2 notes:

- Hybrid cloud takes private cloud and public cloud models (as well as an organization's local infrastructure) and tightly integrates them. This indicates a wide mix of computing services is being used in an integrated fashion to create value.[38][51]

- Multicloud takes the concept of public cloud and multiplies it. This indicates that two or more public clouds are being used, without a private cloud being required to complete the integration (though one can also be in the mix).[39]

- Distributed cloud takes public cloud and expands it to multiple localized or "edge" locations. This indicates that a public cloud service's resources are strategically dispersed in locations as required by the user, while remaining accessible from and complementary to the user's private cloud or on-premises data center.[41][52]

As such, an organization's existing infrastructure and business demands, combined with its aspirations for moving into the cloud, will dictate its deployment model. But there are also advantages and disadvantages to each which may further dictate an organization's deployment decision. First, all three models provide some level of redundancy. If a failure occurs in one computing core (be it public, private, or local), another core can ideally provide backup services to fill the gap, though each model does this in a slightly different way. Similarly, if additional compute resources are required due to a spike in demand, each model can ramp up resources to smooth the demand spike. Hybrid and distributed clouds also have the benefit of making any future transition to a purely public cloud (be it singular or multi-) easier, as part of an organization's processes and data is already found in the public cloud.
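The redundancy and demand-spike behavior described above can be illustrated with a short sketch. It is a simplified model rather than how any particular provider implements failover or bursting: the core names, capacities, and the `place_workload` function are hypothetical, and real deployments rely on load balancers, orchestration tools, and health checks rather than a single loop.

```python
def place_workload(demand_units, cores):
    """Assign demand to computing cores in priority order.

    Unhealthy cores are skipped (failover), and demand beyond one core's
    capacity spills over to the next core (bursting).
    Returns the allocation per core and any demand left unserved.
    """
    allocation = {}
    remaining = demand_units
    for core in cores:
        if remaining <= 0:
            break
        if not core["healthy"]:
            continue  # a failed core is bypassed; the next one fills the gap
        used = min(remaining, core["capacity"])
        allocation[core["name"]] = used
        remaining -= used
    return allocation, remaining


# Hypothetical hybrid setup: a private cloud is preferred, a public cloud is the backup.
cores = [
    {"name": "private", "capacity": 100, "healthy": True},
    {"name": "public", "capacity": 500, "healthy": True},
]

normal_day = place_workload(80, cores)     # fits entirely in the private cloud
demand_spike = place_workload(150, cores)  # overflow bursts to the public cloud

cores[0]["healthy"] = False
private_outage = place_workload(80, cores)  # public cloud covers the failure
```

The same function covers a multicloud arrangement by listing two public providers instead of a private and a public core; what differs between the models in practice is how the cores are integrated and controlled.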

Beyond these benefits, things diverge a bit. While hybrid clouds provide flexibility to maintain sensitive data in a private cloud or on-site, where security can be more tightly controlled, private clouds are resource-intensive to maintain. Additionally, due to the complexity of integrating that private cloud with all other resources, the hybrid cloud reveals a greater attack surface, complicates security protocols, and raises integration costs.[38] Multicloud has the benefit of reducing vendor lock-in (discussed later in this guide) by implementing resource utilization and storage across more than one public cloud provider. Should a need to migrate away from one vendor arise, it's easier to continue critical services with the other public cloud vendor. This also lends itself to "shopping around" for public cloud services as costs fall and offerings change. However, this multicloud approach brings with it its own integration challenges, including differences in technologies between vendors, latency complexities between the services, increased points of attack with more integrations, and load balancing issues between the services.[39] A distributed cloud model removes some of that latency and makes it easier to manage integrations and reduce network failure risks from one control center. It also benefits organizations requiring localized data storage due to regulations. However, with multiple servers being involved, it becomes a bit more difficult to troubleshoot integration and network issues across hardware and software. Additionally, implementation costs are likely to be higher, and security for replicated data across multiple locations becomes more complex and risky.[41][53]

1.2.3 Edge computing?

The concept of "edge" has been mentioned a few times in this chapter, but the topic of clouds, edges, cloud computing, and edge computing should be briefly addressed further, particularly as edges and edge computing gain traction in some industries.[54] Edges and edge computing share a few similarities to clouds and cloud computing, but they are largely different. These concepts can be broken down as such[54][55][56]:

- Clouds: "places where data can be stored or applications can run. They are software-defined environments created by datacenters or server farms." These involve strategic locations where network connectivity is essentially reliable.

- Edges: "also places where data is collected. They are physical environments made up of hardware outside a datacenter." These are often remote locations where network connectivity is limited at best.

- Cloud computing: an "act of running workloads in a cloud."

- Edge computing: an "act of running workloads on edge devices."

In this case, the locations are differentiated from the actions, though it's notable that actions involving the two aren't exclusive. For example, if remote sensors collect data at the edge of a network and, rather than processing it locally at the edge, transfer it to the cloud for processing, edge computing hasn't happened; it only happens if data is collected and processed all at the edge.[55] Additionally, those remote sensors—which are representative of internet of things (IoT) technology—shouldn't be assumed as connected to both a cloud and edge; they all can be connected as part of a network, but they don't have to be. Linux company Red Hat adds[55]:

Clouds can exist without the Internet of Things (IoT) or edge devices. IoT and edge can exist without clouds. IoT can exist without edge devices or edge computing. IoT devices may connect to an edge or a cloud. Some edge devices connect to a cloud or private datacenter, others edge devices only connect to similarly central locations intermittently, and others never connect to anything—at all.

However, edge computing associated with laboratory-tangential industry activities such as environmental monitoring, manufacturing, and mining will most often have IoT devices that don't necessarily rely on a central location or cloud. These industry activities rely on edges and edge computing because 1. those data-based activities must be executed as near to "immediately" as possible, and 2. those data-based activities usually involve large volumes of data, which are impractical to send to the cloud.[54][55][56]
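
Both reasons, immediacy and data volume, can be illustrated with a toy edge routine that acts on raw readings locally and keeps only a compact summary. This is a minimal sketch under stated assumptions: `process_at_edge`, the alarm threshold, and the sample readings are hypothetical and not drawn from any cited system.

```python
from statistics import mean
from typing import Dict, List, Union


def process_at_edge(readings: List[float],
                    alarm_threshold: float) -> Dict[str, Union[int, float, bool]]:
    """Act on raw readings locally and return a compact summary.

    The alarm decision is made at the edge, with no round trip to a cloud,
    and the raw readings are reduced to a handful of values.
    """
    highest = max(readings)
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": highest,
        "alarm": highest > alarm_threshold,
    }


# Hypothetical temperature readings collected by a remote sensor.
summary = process_at_edge([20.0, 21.0, 25.0], alarm_threshold=24.0)
print(summary)
```

Whether that summary is ever forwarded to a cloud is a separate design choice; as the Red Hat passage above notes, some edge devices connect to a central location only intermittently, and others never do.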

That said, is your laboratory seeking cloud computing services or edge computing services? The most likely answer is cloud computing, particularly if you're seeking a cloud-based software solution to help manage your laboratory activities. However, there may be a few cases where edge computing makes sense for your laboratory, particularly if it's a field laboratory in a more remote location. For example, the U.S. Department of Energy's Urban Integrated Field Laboratory is helping U.S. cities like Chicago by using localized instrument clusters to gather climate data for local processing.[57] Another example can be found in a biological manufacturing laboratory with a strong need to integrate high-data-output devices to process and act upon that data locally.[58] Finally, consider the laboratory with significant need for environmental monitoring for mission-critical samples; such a laboratory may also benefit from an IoT + edge computing pairing that provides local redundancy in that monitoring program, especially during power and internet disruptions.[59] Just remember, however, that these examples don't represent the needs of most labs, which will largely depend more upon clouds and cloud computing to accomplish their goals more effectively.

1.3 The relationship between cloud computing and the open source paradigm

Cloud computing is built on a wide array of technologies and utilities, including many built on the open source paradigm. According to the Open Source Initiative, open-source software, hardware, etc. is open-source not only because of its implied open access to how it's constructed (e.g., source code, schematics) but also for a number of other reasons[60]:

- It should be without restriction in how it is "distributed" or used within an aggregate software distribution of many components.

- It should allow derivatives and modifications under the same terms as the original license, and that license should be portable with the derived or modified item.

- It should permit distribution of software, hardware, etc. built from modified source code or schematics.

- It should be without restriction in what person, organization, business, etc. is permitted to use it.

- Its license should not place restrictions on other software or hardware schematics distributed with the original item.

- Its license should not place technology-specific restriction on how the item is implemented.

Licenses vary widely from product to product, but broadly speaking, this all means if a commercial venture wants to run a significant chunk of its cloud operations on open-source technologies, it should be able to do so, as long as all license requirements are met. This same principle can be seen in early pushes for "open cloud," which emphasizes the need for "interoperability and portability across different clouds" through principles similar to the Open Source Initiative.[61]

One need look no further than Linux, a family of open-source operating systems, to discover how open-source solutions have gained prevalence in cloud computing and other enterprises. More than 95 percent of the top one million web domains are served up using Linux-based servers.[62] In 2019, 96.3 percent of the top one million enterprise business servers were running on Linux.[63] And Canonical's open-source Ubuntu Linux distribution has garnered a growing reputation in cloud computing and other enterprise scenarios due to its focus on security.[64]

In fact, Microsoft shifted its formerly anti-Linux stance in the mid-2010s to a stronger embrace of the open-source OS. In 2014, it began offering several Linux distributions in its Azure public cloud platform and infrastructure and announced it would make server-side .NET open-source, while also adding Linux support to its SQL Server and joining the Linux Foundation in 2016.[65][66][67] Why the philosophy change? As Microsoft's Database Systems Manager Rohan Kumar put it in 2016: "In the messy, real world of enterprise IT, hybrid shops are the norm and customers don't need or want vendors to force their hands when it comes to operating systems. Serving these customers means giving them flexibility."[65] That flexibility expanded to open sourcing SONiC, its network operating system, in 2017 and PowerShell, its task automation and configuration tool, in 2018. Microsoft's Teams client was made available for Linux in 2019[67], and other elements of Microsoft Windows continue to see increased compatibility with Linux distributions such as Ubuntu.[68]

Others in Big Tech have also made contributions to open-source cloud-based technologies. Take for example Kubernetes, originally a Google project that was eventually open-sourced in 2014.[69] Soon after, the open-source container management tool was donated to the Cloud Native Computing Foundation (CNCF) run by the Linux Foundation, "to help facilitate collaboration among developers and operators on common technologies for deploying cloud native applications and services."[70] Since then, Kubernetes has become an integral part of many a cloud infrastructure due to its ability to provide lightweight, portable containerization (a complete runtime environment) to a bundle of applications run in the cloud. The software also manages resource scaling for applications, manages underlying infrastructure deployment, and allows for automatically mounting local and cloud storage.[71] The open-source nature of the code also allows an organization's developers to review Kubernetes' code to ensure it's meeting security policies and regulations, as well as make their own tweaks as needed.[72] Writing for Hewlett Packard Enterprise in 2020, entrepreneur Matt Sarrel estimated that some 70 to 85 percent of containerized applications run on top of some version of Kubernetes.[72]

Finally, other open-source software tools complement cloud computing efforts. For example, applications like Apache CloudStack, Cloudify, ManageIQ, and OpenStack put open-source cloud management in the hands of a cloud-ops team.[73] Eucalyptus is "open-source software for building AWS-compatible private and hybrid clouds."[74] Keylime is a security tool that allows users "to check for themselves that the cloud storing their data is as secure as the cloud computer owners say it is."[75] Rook is a "cloud-native storage orchestrator" that provides a variety of storage management tools to allow cloud developers to "natively integrate with cloud-native environments."[76] And the OpenStack project, with its collection of software components enabling cloud infrastructure, can't be forgotten.[77] These and other open-source tools continue to drive how cloud computing is implemented, managed, and monitored, while highlighting the importance of the open source paradigm to cloud computing.

References

- ↑ 1.0 1.1 Pogue, D. (17 July 2008). "In Sync to Pierce the Cloud". The New York Times. Archived from the original on 05 January 2018. https://web.archive.org/web/20180105205750/https://www.nytimes.com/2008/07/17/technology/personaltech/17pogue.html. Retrieved 28 July 2023.

- ↑ 2.0 2.1 Wang, L.; von Laszewski, G.; Younge, A. et al. (2010). "Cloud Computing: A Perspective Study". New Generation Computing 28: 137–46. doi:10.1007/s00354-008-0081-5. https://scholarworks.rit.edu/cgi/viewcontent.cgi?article=1748&context=other.

- ↑ 3.0 3.1 Chamberlin, B. (28 October 2008). "Cloud Computing: What is it?". BillChamberlin.com. https://www.billchamberlin.com/cloud-computing-what-is-it/. Retrieved 28 July 2023.

- ↑ French, J. (2021). "Cloud computing and web services". The Institution of Engineering and Technology. https://www.theiet.org/publishing/inspec/researching-hot-topics/cloud-computing-and-web-services/. Retrieved 28 July 2023.

- ↑ "What Is Cloud Native?". Amazon Web Services. https://aws.amazon.com/what-is/cloud-native/. Retrieved 28 July 2023.

- ↑ Maurer, T.; Hinck, G. (31 August 2020). "Cloud Security: A Primer for Policymakers". Carnegie Endowment for International Peace. https://carnegieendowment.org/2020/08/31/cloud-security-primer-for-policymakers-pub-82597. Retrieved 28 July 2023.

- ↑ Costello, K.; Rimol, M. (13 November 2019). "Gartner Forecasts Worldwide Public Cloud Revenue to Grow 17% in 2020". Gartner. https://www.gartner.com/en/newsroom/press-releases/2019-11-13-gartner-forecasts-worldwide-public-cloud-revenue-to-grow-17-percent-in-2020. Retrieved 28 July 2023.

- ↑ "Gartner Forecasts Worldwide Public Cloud End-User Spending to Reach Nearly $600 Billion in 2023". Gartner. 19 April 2023. https://www.gartner.com/en/newsroom/press-releases/2023-04-19-gartner-forecasts-worldwide-public-cloud-end-user-spending-to-reach-nearly-600-billion-in-2023. Retrieved 14 August 2023.

- ↑ Reinicke, C. (30 March 2020). "3 reasons one Wall Street firm says to stick with cloud stocks amid the coronavirus-induced market rout". Market Insider. https://markets.businessinsider.com/news/stocks/wedbush-reasons-own-cloud-stocks-coronavirus-pandemic-tech-buy-2020-3-1029045273#2-the-move-to-cloud-will-accelerate-more-quickly-amid-the-coronavirus-pandemic2. Retrieved 28 July 2023.

- ↑ 10.0 10.1 10.2 10.3 10.4 Glass, G. (November 2000). "The Web services (r)evolution, Part 1: Applying Web services to applications". IBM developerWorks. IBM. Archived from the original on 24 April 2001. https://web.archive.org/web/20010424015036/http://www-106.ibm.com/developerworks/library/ws-peer1.html. Retrieved 28 July 2023.

- ↑ 11.0 11.1 11.2 Kreger, H. (May 2001). "Web Services Conceptual Architecture (WSCA 1.0)" (PDF). IBM Software Group. https://www.researchgate.net/profile/Heather-Kreger/publication/235720479_Web_Services_Conceptual_Architecture_WSCA_10/links/563a67e008ae337ef2984607/Web-Services-Conceptual-Architecture-WSCA-10.pdf. Retrieved 28 July 2023.

- ↑ 12.0 12.1 12.2 Furrier, J. (29 January 2015). "Exclusive: The Story of AWS and Andy Jassy’s Trillion Dollar Baby". Medium.com. https://medium.com/@furrier/original-content-the-story-of-aws-and-andy-jassys-trillion-dollar-baby-4e8a35fd7ed. Retrieved 28 July 2023.

- ↑ 13.0 13.1 13.2 Miller, R. (2 July 2016). "How AWS came to be". TechCrunch. https://techcrunch.com/2016/07/02/andy-jassys-brief-history-of-the-genesis-of-aws/. Retrieved 28 July 2023.

- ↑ "Announcing Amazon Elastic Compute Cloud (Amazon EC2) - beta". Amazon Web Services. 24 August 2006. https://aws.amazon.com/about-aws/whats-new/2006/08/24/announcing-amazon-elastic-compute-cloud-amazon-ec2---beta/. Retrieved 28 July 2023.

- ↑ 15.0 15.1 Butler, D. (2006). "Amazon puts network power online". Nature 444 (528). doi:10.1038/444528a.

- ↑ Ke, J.-s. (2006). "ITeS - Transcending the Traditional Service Model". Proceedings of the 2006 IEEE International Conference on e-Business Engineering: 2. doi:10.1109/ICEBE.2006.66.

- ↑ Lohr, S. (8 October 2007). "Google and I.B.M. Join in 'Cloud Computing' Research" (PDF). The New York Times. Archived from the original on 08 October 2007. http://www.csun.edu/pubrels/clips/Oct07/10-08-07E.pdf. Retrieved 28 July 2023.

- ↑ Hand, E. (2007). "Head in the clouds". Nature 449 (963). doi:10.1038/449963a.

- ↑ 19.0 19.1 19.2 Boss, G.; Malladi, P.; Quan, D. et al. (8 October 2007). "Cloud Computing" (PDF). IBM Corporation. Archived from the original on 06 February 2009. https://web.archive.org/web/20090206015244/http://download.boulder.ibm.com/ibmdl/pub/software/dw/wes/hipods/Cloud_computing_wp_final_8Oct.pdf. Retrieved 28 July 2023.

- ↑ Lohr, S. (15 November 2007). "I.B.M. to Push 'Cloud Computing,' Using Data From Afar". The New York Times. https://www.nytimes.com/2007/11/15/technology/15blue.html. Retrieved 28 July 2023.

- ↑ Lohr, S.; Helft, M. (16 December 2007). "Google Gets Ready to Rumble With Microsoft" (PDF). The New York Times. Archived from the original on 16 December 2007. https://signallake.com/innovation/GoogleMicrosoft121607.pdf. Retrieved 28 July 2023.

- ↑ 22.0 22.1 22.2 22.3 Mell, P.; Grance, T. (September 2011). "The NIST Definition of Cloud Computing" (PDF). NIST. https://nvlpubs.nist.gov/nistpubs/Legacy/SP/nistspecialpublication800-145.pdf. Retrieved 28 July 2023.

- ↑ Violino, B. (16 March 2020). "Where to look for cost savings in the cloud". InfoWorld. https://www.infoworld.com/article/3532288/where-to-look-for-cost-savings-in-the-cloud.html. Retrieved 28 July 2023.

- ↑ Ojala, A. (2016). "Discovering and creating business opportunities for cloud services". Journal of Systems and Software 113: 408–17. doi:10.1016/j.jss.2015.11.004.

- ↑ Pettey, C. (26 October 2020). "Cloud Shift Impacts All IT Markets". Smarter with Gartner. https://www.gartner.com/smarterwithgartner/cloud-shift-impacts-all-it-markets/. Retrieved 28 July 2023.

- ↑ Pettey, C. (14 May 2019). "5 Approaches to Cloud Applications Integration". Smarter with Gartner. https://www.gartner.com/smarterwithgartner/5-approaches-cloud-applications-integration/. Retrieved 28 July 2023.

- ↑ Babaoglu, O.; Marzolla, M. (2014). "The People's Cloud". IEEE Spectrum 51 (10): 50–55. doi:10.1109/MSPEC.2014.6905491. https://spectrum.ieee.org/escape-from-the-data-center-the-promise-of-peertopeer-cloud-computing.

- ↑ Goodison, D. (20 November 2020). "10 Future Cloud Computing Trends To Watch In 2021". CRN. https://www.crn.com/news/cloud/10-future-cloud-computing-trends-to-watch-in-2021. Retrieved 28 July 2023.

- ↑ DTCC (November 2020). "Cloud Technology: Powerful and Evolving" (PDF). http://www.dtcc.com/-/media/Files/Downloads/WhitePapers/DTCC-Cloud-Journey-WP. Retrieved 28 July 2023.

- ↑ "What Is SaaS? SaaS Definition". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-saas/. Retrieved 28 July 2023.

- ↑ 31.0 31.1 "What is Platform-as-a-Service (PaaS)?". Cloudflare, Inc. https://www.cloudflare.com/learning/serverless/glossary/platform-as-a-service-paas/. Retrieved 28 July 2023.

- ↑ "What Is IaaS (Infrastructure-as-a-Service)?". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-iaas/. Retrieved 28 July 2023.

- ↑ "What is Function-as-a-Service (FaaS)?". Cloudflare, Inc. https://www.cloudflare.com/learning/serverless/glossary/function-as-a-service-faas/. Retrieved 28 July 2023.

- ↑ "What is BaaS? Backend-as-a-Service vs. serverless". Cloudflare, Inc. https://www.cloudflare.com/learning/serverless/glossary/backend-as-a-service-baas/. Retrieved 28 July 2023.

- ↑ "What Is a Private Cloud? Private Cloud vs. Public Cloud". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-a-private-cloud/. Retrieved 28 July 2023.

- ↑ Tucakov, D. (18 June 2020). "What is Community Cloud? Benefits & Examples with Use Cases". phoenixNAP Blog. phoenixNAP. https://phoenixnap.com/blog/community-cloud. Retrieved 28 July 2023.

- ↑ "What Is a Public Cloud? Public Cloud vs. Private Cloud". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-a-public-cloud/. Retrieved 28 July 2023.

- ↑ 38.0 38.1 38.2 "What Is Hybrid Cloud? Hybrid Cloud Definition". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-hybrid-cloud/. Retrieved 28 July 2023.

- ↑ 39.0 39.1 39.2 "What Is Multicloud? Multicloud Definition". Cloudflare, Inc. https://www.cloudflare.com/learning/cloud/what-is-multicloud/. Retrieved 28 July 2023.

- ↑ IBM Cloud Education (3 November 2020). "Distributed cloud". IBM. https://www.ibm.com/topics/distributed-cloud. Retrieved 28 July 2023.

- ↑ 41.0 41.1 41.2 Costello, K. (12 August 2020). "The CIO’s Guide to Distributed Cloud". Smarter With Gartner. https://www.gartner.com/smarterwithgartner/the-cios-guide-to-distributed-cloud. Retrieved 28 July 2023.

- ↑ Boniface, M.; Nasser, B.; Papay, J. et al. (2010). "Platform-as-a-Service Architecture for Real-Time Quality of Service Management in Clouds". Proceedings of the Fifth International Conference on Internet and Web Applications and Services: 155–60. doi:10.1109/ICIW.2010.91.

- ↑ Xiong, H.; Fowley, F.; Pahl, C. et al. (2014). "Scalable Architectures for Platform-as-a-Service Clouds: Performance and Cost Analysis". Proceedings of the 2014 European Conference on Software Architecture: 226–33. doi:10.1007/978-3-319-09970-5_21.

- ↑ Carey, S. (22 July 2022). "What is PaaS (platform-as-a-service)? A simpler way to build software applications". InfoWorld. https://www.infoworld.com/article/3223434/what-is-paas-platform-as-a-service-a-simpler-way-to-build-software-applications.html. Retrieved 28 July 2023.

- ↑ "Serverless Architectures with AWS Lambda: Overview and Best Practices" (PDF). Amazon Web Services. November 2017. https://d1.awsstatic.com/whitepapers/serverless-architectures-with-aws-lambda.pdf. Retrieved 28 July 2023.

- ↑ 46.0 46.1 "Lambda runtimes". Amazon Web Services. https://docs.aws.amazon.com/lambda/latest/dg/lambda-runtimes.html. Retrieved 28 July 2023.

- ↑ Morelo, D. (2020). "What is Amazon Linux 2?". LinuxHint. https://linuxhint.com/what_is_amazon_linux_2/. Retrieved 28 July 2023.

- ↑ 48.0 48.1 "Serverless computing: A cheat sheet". TechRepublic. 25 December 2020. https://www.techrepublic.com/article/serverless-computing-the-smart-persons-guide/. Retrieved 28 July 2023.

- ↑ Fruhlinger, J. (15 July 2019). "What is serverless? Serverless computing explained". InfoWorld. https://www.infoworld.com/article/3406501/what-is-serverless-serverless-computing-explained.html. Retrieved 28 July 2023.

- ↑ Sander, J. (1 May 2019). "Serverless computing vs platform-as-a-service: Which is right for your business?". ZDNet. https://www.zdnet.com/article/serverless-computing-vs-platform-as-a-service-which-is-right-for-your-business/. Retrieved 28 July 2023.

- ↑ Hurwitz, J.S.; Kaufman, M.; Halper, F. et al. (2021). "What is Hybrid Cloud Computing?". Dummies.com. John Wiley & Sons, Inc. https://www.dummies.com/article/technology/information-technology/networking/cloud-computing/what-is-hybrid-cloud-computing-174473/. Retrieved 28 July 2023.

- ↑ "What is Distributed Cloud Computing?". Edge Academy. StackPath. 2021. Archived from the original on 28 February 2021. https://web.archive.org/web/20210228040550/https://www.stackpath.com/edge-academy/distributed-cloud-computing/. Retrieved 28 July 2023.

- ↑ "What is Distributed Cloud". Entradasoft. 2020. Archived from the original on 16 September 2020. https://web.archive.org/web/20200916021206/http://entradasoft.com/blogs/what-is-distributed-cloud. Retrieved 28 July 2023.

- ↑ 54.0 54.1 54.2 Arora, S. (8 August 2023). "Edge Computing Vs. Cloud Computing: Key Differences to Know". Simplilearn. https://www.simplilearn.com/edge-computing-vs-cloud-computing-article. Retrieved 16 August 2023.

- ↑ 55.0 55.1 55.2 55.3 "Cloud vs. edge". Red Hat, Inc. 14 November 2022. https://www.redhat.com/en/topics/cloud-computing/cloud-vs-edge. Retrieved 16 August 2023.

- ↑ 56.0 56.1 Bigelow, S.J. (December 2021). "What is edge computing? Everything you need to know". TechTarget Data Center. Archived from the original on 08 June 2023. https://web.archive.org/web/20230608045737/https://www.techtarget.com/searchdatacenter/definition/edge-computing. Retrieved 16 August 2023.

- ↑ "Chicago State University to serve as ‘scientific supersite’ to study climate change impact". Argonne National Laboratory. 18 July 2023. https://www.anl.gov/article/chicago-state-university-to-serve-as-scientific-supersite-to-study-climate-change-impact. Retrieved 16 August 2023.

- ↑ "Laboratory Automation". Edgenesis, Inc. 2023. https://edgenesis.com/solutions/moreDetail/LaboratoryAutomation. Retrieved 16 August 2023.

- ↑ "Industrial IoT, Edge Computing and Data Streams: A Comprehensive Guide". Flowfinity Blog. 2 August 2023. https://www.flowfinity.com/blog/industrial-iot-guide.aspx. Retrieved 16 August 2023.

- ↑ "The Open Source Definition, Version 1.9". Open Source Initiative. 3 June 2007. https://opensource.org/osd/. Retrieved 28 July 2023.

- ↑ Olavsrud, T. (13 April 2012). "Why Open Source Is the Key to Cloud Innovation". CIO. https://www.cio.com/article/284247/software-as-a-service-why-open-source-is-the-key-to-cloud-innovation.html. Retrieved 28 July 2023.

- ↑ Price, D. (27 March 2018). "The True Market Shares of Windows vs. Linux Compared". MakeUseOf. https://www.makeuseof.com/tag/linux-market-share/. Retrieved 28 July 2023.

- ↑ "Linux Operating System Market Size, Share & Covid-19 Impact Analysis, By Distribution (Virtual Machines, Servers and Desktops), By End-use (Commercial/Enterprise and Individual), and Regional Forecast, 2020-2027". Fortune Business Insights. June 2020. https://www.fortunebusinessinsights.com/linux-operating-system-market-103037. Retrieved 28 July 2023.

- ↑ Burt, J. (23 April 2020). "Locking Down Linux for the Enterprise". The Next Platform. https://www.nextplatform.com/2020/04/23/locking-down-linux-for-the-enterprise/. Retrieved 28 July 2023.

- ↑ 65.0 65.1 Olavsrud, T. (21 November 2016). "Microsoft embraces open source in the cloud and on-premises". CIO. https://www.cio.com/article/236651/microsoft-embraces-open-source-in-the-cloud-and-on-premises.html. Retrieved 28 July 2023.

- ↑ Ibanez, L. (19 November 2014). "Microsoft gets on board with open source". OpenSource.com. https://opensource.com/business/14/11/microsoft-dot-net-empower-open-source-communities. Retrieved 28 July 2023.

- ↑ 67.0 67.1 Branscombe, M. (2 December 2020). "What is Microsoft doing with Linux? Everything you need to know about its plans for open source". TechRepublic. https://www.techrepublic.com/article/what-is-microsoft-doing-with-linux-everything-you-need-to-know-about-its-plans-for-open-source/. Retrieved 28 July 2023.

- ↑ Barnes, H. (11 October 2020). "No, Microsoft is not rebasing Windows to Linux". Box of Cables. https://boxofcables.dev/no-microsoft-is-not-rebasing-windows-to-linux/. Retrieved 28 July 2023.

- ↑ Metz, C. (18 June 2014). "Google Open Sources Its Secret Weapon in Cloud Computing". Wired. https://www.wired.com/2014/06/google-kubernetes/. Retrieved 28 July 2023.

- ↑ Lardinois, F. (21 July 2015). "As Kubernetes Hits 1.0, Google Donates Technology To Newly Formed Cloud Native Computing Foundation". Tech Crunch. https://techcrunch.com/2015/07/21/as-kubernetes-hits-1-0-google-donates-technology-to-newly-formed-cloud-native-computing-foundation-with-ibm-intel-twitter-and-others/. Retrieved 28 July 2023.

- ↑ The Linux Foundation (3 January 2023). "Overview". Kubernetes Documentation. https://kubernetes.io/docs/concepts/overview/. Retrieved 28 July 2023.

- ↑ 72.0 72.1 Sarrel, M. (4 February 2020). "Why cloud-native open source Kubernetes matters". enterprise.nxt. Hewlett Packard Enterprise. Archived from the original on 18 December 2022. https://web.archive.org/web/20221218143215/https://www.hpe.com/us/en/insights/articles/why-cloud-native-open-source-kubernetes-matters-2002.html. Retrieved 28 July 2023.

- ↑ Linthicum, D. (2020). "4 essential open-source tools for cloud management". TechBeacon. https://techbeacon.com/enterprise-it/4-essential-open-source-tools-cloud-management. Retrieved 28 July 2023.

- ↑ "Eucalyptus". Appscale Systems. https://www.eucalyptus.cloud/. Retrieved 28 July 2023.

- ↑ Millar, M. (27 August 2019). "Laboratory staff develop new cybersecurity solutions for cloud computing". Lincoln Laboratory - MIT. https://www.ll.mit.edu/news/laboratory-staff-develop-new-cybersecurity-solutions-cloud-computing. Retrieved 28 July 2023.

- ↑ "Deploying highly scalable cloud storage with Rook, Part 1 (Ceph Storage)". GCore. 27 March 2023. https://gcore.com/learning/deploying-highly-scalable-cloud-storage-with-rook-part-1-ceph-storage/. Retrieved 14 August 2023.

- ↑ "OpenStack". Open Infrastructure Foundation. https://www.openstack.org/. Retrieved 28 July 2023.

Citation information for this chapter

Chapter: 1. What is cloud computing?

Title: Choosing and Implementing a Cloud-based Service for Your Laboratory

Edition: Second edition

Author for citation: Shawn E. Douglas

License for content: Creative Commons Attribution-ShareAlike 4.0 International

Publication date: August 2023