Data center

A data center is a facility used to house computer systems and associated components such as telecommunications and storage systems. A data center generally includes redundant or backup power supplies, redundant data communications connections, environmental controls (e.g. air conditioning and fire suppression), and various security devices. Data centers vary in size[1], with some large data centers capable of using as much electricity as a "medium-size town."[2]

History

Data centers have their roots in the huge computer rooms of the early ages of the computing industry. Early computer systems were complex to operate and maintain, and required a special environment in which to operate. Many cables were necessary to connect all the components, and methods to accommodate and organize these were devised, such as standard racks to mount equipment, raised floors, and cable trays (installed overhead or under the elevated floor). Also, a single mainframe required a great deal of power and had to be cooled to avoid overheating. Security was important; computers were expensive, and were often used for military purposes.[3]

During the boom of the microcomputer industry, especially during the 1980s, computers started to be deployed everywhere, in many cases with little or no care about operating requirements. However, as information technology (IT) operations started to grow in complexity, companies grew aware of the need to control IT resources.[4] With the advent of Linux and the subsequent proliferation of freely available Unix-compatible PC operating systems during the 1990s, as well as MS-DOS finally giving way to a multi-tasking capable Windows operating system, personal computers started to replace the older systems found in computer rooms.[3][5] These were called "servers," as time sharing operating systems like Unix rely heavily on the client-server model to facilitate sharing unique resources between multiple users. The availability of inexpensive networking equipment, coupled with new standards for network structured cabling, made it possible to use a hierarchical design that put the servers in a specific room inside the company. The use of the term "data center," as applied to specially designed computer rooms, started to gain popular recognition.[6]

The dot-com boom of the late '90s and early '00s brought about significant investment into what would be called Internet data centers (IDCs). As these grew in size, new technologies and practices were designed to better handle the scale and operational requirements of these facilities. These practices eventually migrated toward private data centers and were adopted largely because of their practical results.[7][3] As cloud computing became more prominent in the 2000s, business and government organizations scrutinized data centers to a higher degree in areas such as security, availability, environmental impact, and adherence to standards.[7]

Standards and design guidelines

IT operations are a crucial aspect of most organizations' business continuity, as organizations often rely on their information systems to run business operations. Organizations thus depend on reliable infrastructure for IT operations in order to minimize any chance of disruption caused by power failure or security breach. That reliable infrastructure is normally built on a sound set of widely accepted standards and design guidelines.

The Telecommunications Industry Association's (TIA's) Telecommunications Infrastructure Standard for Data Centers (ANSI/TIA-942) is one such example, specifying "the minimum requirements for the telecommunications infrastructure" of organizations large and small, from large "multi-tenant Internet hosting data centers" to smaller "single-tenant enterprise data centers."[8] Up until early 2014, TIA also ranked data centers from Tier 1, essentially a server room, to Tier 4, which hosts mission-critical computer systems with fully redundant subsystems and compartmentalized security zones controlled by biometric access control methods.[8]

The Uptime Institute, "an unbiased, third-party data center research, education, and consulting organization,"[9] also has a four-tier standard (described in their document Tier Standard: Operational Sustainability) that describes the availability of data from the hardware at a data center, with the higher tiers offering greater availability.[10] The institute, however, at times voiced discontent with TIA's use of tiers in its standard, and in March 2014 TIA announced it would remove the word "tier" from its ANSI/TIA-942 standard.[11]

Other standards and guidelines for data center planning, installation, and operation include:

- BICSI's (Building Industry Consulting Services International's) ANSI/BICSI 002-2011: a data center standard which integrates information and standards from other entities[12]

- OVE's ÖVE/ÖNORM EN 50600-1: a developing European standard for "data centre facilities and infrastructures"[13][14]

- Telcordia's NEBS (Network Equipment - Building System) documents: telecommunication and environmental design guidelines for data center spaces[15]

- eco's Datacenter Star Audit (DCSA): auditing documents that allow IT personnel to assess the functionality of planned or operational data centers[16]

- American Institute of CPAs' (AICPA's) SOC (Service Organization Control) Reports: auditing reports which provide "a standard benchmark by which two data center audits can be compared against the same set of criteria"[17][18]

- International Organization for Standardization's (ISO's) various standards: optimal operation of a data center is dictated by several ISO standards, including ISO 14001:2004 Environmental Management System Standard, ISO / IEC 27001:2005 Information Security Management System Standard, and ISO 50001:2011 Energy Management System Standard[19][20]

Design considerations

A data center can occupy one room of a building, one or more floors, or an entire building. Much of the equipment is in the form of servers mounted in 19-inch rack cabinets, which are usually placed in single rows forming corridors or aisles between them. This allows people access to the front and rear of each cabinet. Servers differ greatly in size, from rack units to large freestanding storage silos which occupy many square feet of floor space. Some equipment, such as mainframe computers and storage devices, is often as big as the racks themselves and is placed alongside them. Local building codes may govern minimum ceiling heights.

What follows is a list of other considerations made during the design and implementation phase.

| Data center design considerations | |

|---|---|

| Consideration | Description |

| Design programming | Architecture of the building aside, three additional considerations to design programming data centers are facility topology design (space planning), engineering infrastructure design (mechanical systems such as cooling and electrical systems including power), and technology infrastructure design (cable plant). Each is influenced by performance assessments and modelling to identify gaps pertaining to the operator's performance desires over time.[1] |

| Design modeling and recommendation | Modeling criteria are used to develop future-state scenarios for space, power, cooling, and costs.[21] Based on previous design reviews and the modeling results, recommendations on power, cooling capacity, and resiliency level can be made. Additionally, availability expectations may also be reviewed. |

| Conceptual and detail design | A conceptual design combines the results of design recommendation with "what if" scenarios to ensure all operational outcomes are met in order to future-proof the facility, including the addition of modular expansion components that can be constructed, moved, or added to quickly as needs change. This process yields a proof of concept, which is then incorporated into a detail design that focuses on creating the facility schematics, construction documents, and IT infrastructure design and documentation.[1] |

| Mechanical and electrical engineering infrastructure design | Mechanical engineering infrastructure design addresses mechanical systems involved in maintaining the interior environment of a data center, while electrical engineering infrastructure design focuses on designing electrical configurations that accommodate the data center's various services and reliability requirements.[22]

Both phases involve recognizing the need to save space, energy, and costs. Availability expectations are fully considered as part of these savings. For example, if the estimated cost of downtime within a specified time unit exceeds the amortized capital costs and operational expenses, a higher level of availability should be factored into the design. If, however, the cost of avoiding downtime greatly exceeds the cost of downtime itself, a lower level of availability will likely get factored into the design.[23] |

| Technology infrastructure design | Numerous cabling systems for data center environments exist, including horizontal cabling; voice, modem, and facsimile telecommunications services; premises switching equipment; computer and telecommunications management connections; monitoring station connections; and data communications. Technology infrastructure design addresses all of these systems.[1] |

| Site selection | When choosing a location for a data center, aspects such as proximity to available power grids, telecommunications infrastructure, networking services, transportation lines, and emergency services must be considered. Another consideration is climatic conditions, which may dictate what cooling technologies should be deployed.[24] |

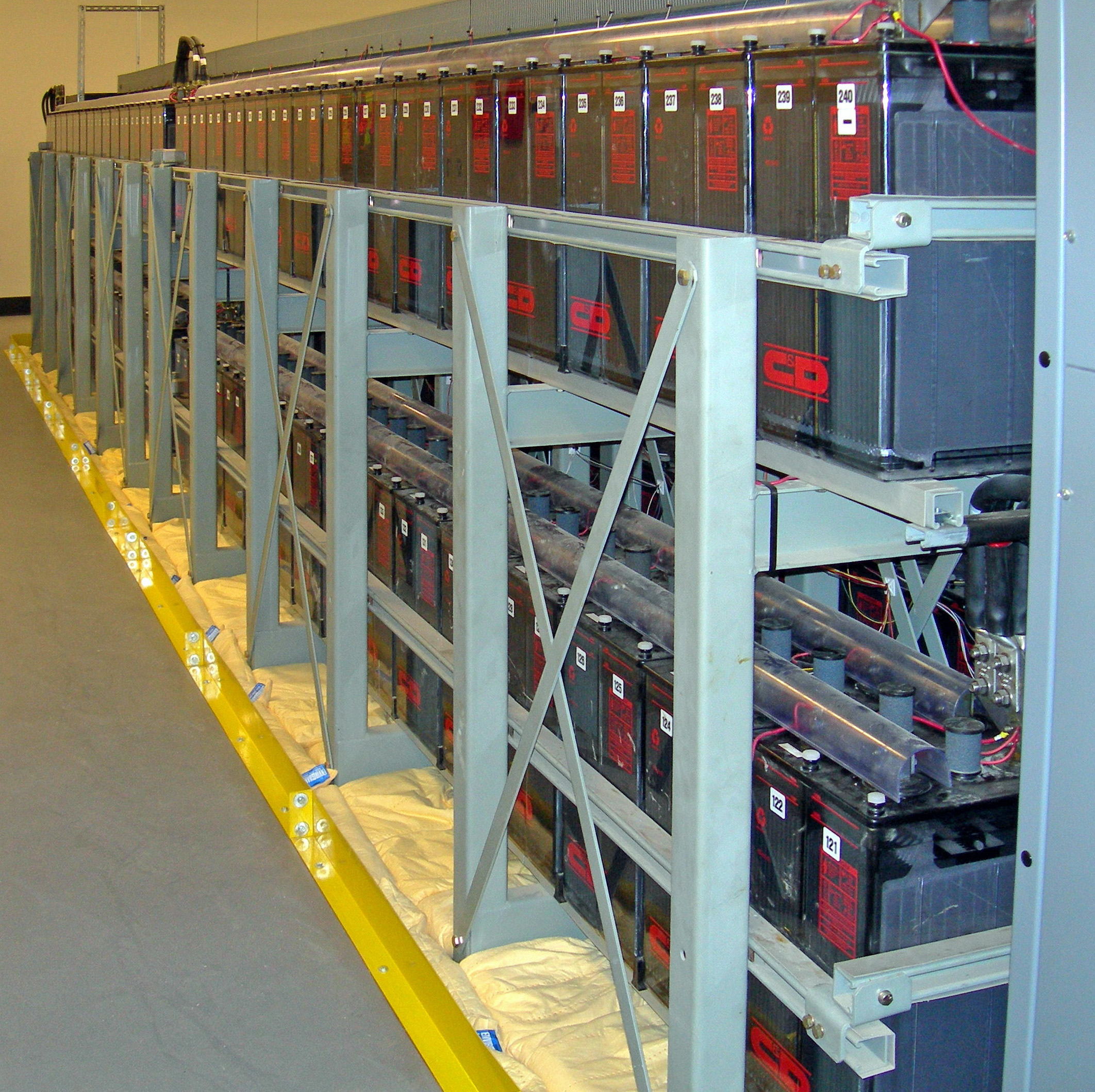

| Power management | The power supply to a data center must be uninterrupted. To achieve this, designers will use a combination of uninterruptible power supplies, battery banks, and/or fueled turbine generators. Static transfer switches are typically used to ensure instantaneous switchover from one supply to the other in the event of a power failure. |

| Environmental control | As heat is a byproduct of supplying power to electrical components, appropriate cooling mechanisms like air conditioning or economizer cooling — which uses outside air to cool components — must be used. In addition to cooling the air, humidity levels must be controlled to prevent both condensation from too much humidity and static electricity discharge from too little humidity.[26] Additional considerations are made for using raised flooring to cater for better and uniform air distribution and circulation as well as providing cabling space.[27] However, the concern of zinc whisker build-up on those raised floor tiles and other metal racks must also be addressed; they risk short circuiting electrical components after being dislodged during maintenance and installs.[28] |

| Fire protection | Like most other buildings, fire protection and prevention systems are required. However, due to the investment in and criticality of the data center, extra measures are designed into the facility and its operation. High-sensitivity aspirating smoke detectors can be connected to several types of alarms, allowing for a soft warning alarm for technicians to investigate at one threshold and the activation of fire suppression systems at another threshold. Aside from manual fire extinguishers, gas-based or clean agent fire suppression systems provide fire suppression without the possibility of ruining more equipment than necessary using water-based systems, which are still used for the most critical of situations.[29] Passive fire wall materials are also installed to restrict fire to only a portion of the facility. |

| Physical security | Depending on the sensitivity of information contained in the data center, physical access controls are used to prevent unauthorized entry into the facility. Small posts of bollards may be placed to prevent vehicles or carts above a certain size from passing. Access control vestibules or "man traps" with two sets of interlocking doors may be used to force verification of credentials before admittance into the center. Video cameras, security guards, and fingerprint recognition devices may also be applied as part of a security protocol.[30] |
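The availability trade-off described under mechanical and electrical engineering infrastructure design can be sketched as a simple comparison. This is only an illustration under stated assumptions: the function name, the `margin` parameter (a stand-in for "greatly exceeds"), and the dollar figures are hypothetical and do not come from any standard.

```python
def availability_recommendation(downtime_cost: float,
                                avoidance_cost: float,
                                margin: float = 2.0) -> str:
    """Suggest a direction for the design's availability level.

    downtime_cost  -- estimated cost of downtime over a period (e.g., one year)
    avoidance_cost -- amortized capital plus operating cost of avoiding that
                      downtime over the same period
    margin         -- hypothetical factor standing in for "greatly exceeds"
    """
    if downtime_cost > avoidance_cost:
        # Downtime costs more than preventing it: design for more availability.
        return "higher"
    if avoidance_cost > margin * downtime_cost:
        # Prevention costs far more than the downtime itself.
        return "lower"
    # Costs are comparable; the decision needs a closer review.
    return "review"


# Hypothetical annual figures in dollars.
print(availability_recommendation(500_000, 200_000))
print(availability_recommendation(50_000, 200_000))
print(availability_recommendation(150_000, 200_000))
```

Both costs must cover the same time unit for the comparison to be meaningful; the "review" outcome is an added convenience for cases the text does not address.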

Energy use

Energy use is a central issue for data centers. Power draw for data centers ranges from a few kW for a rack of servers in a closet to several tens of MW for large facilities. Some facilities have power densities more than 100 times that of a typical office building.[31] For higher power density facilities, electricity costs are a dominant operating expense and account for over 10% of the total cost of ownership (TCO) of a data center.[32] By 2012, the cost of power for a data center was expected to exceed the cost of the original capital investment.[33]

Greenhouse gas emissions

Data centers are sometimes a significant source of air pollution in the form of diesel exhaust.

Capabilities exist to install modern retrofit devices on older diesel generators, including those found in data centers, to reduce emissions.

Additionally, engines manufactured in the U.S. beginning in 2014 must meet strict emissions reduction requirements according to the U.S. Environmental Protection Agency's "Tier 4" regulations for off-road uses including those found in diesel generators. These regulations require near-zero levels of emissions. In 2007, the entire information and communication technologies (ICT) sector was estimated to be responsible for roughly 2% of global carbon emissions, with data centers accounting for 14% of the ICT footprint.[36] The US EPA estimates that servers and data centers were responsible for up to 1.5% of total US electricity consumption,[37] or roughly 0.5% of US GHG emissions,[38] in 2007. Given a business-as-usual scenario, greenhouse gas emissions from data centers are projected to more than double from 2007 levels by 2020.[36]

Siting is one of the factors that affect the energy consumption and environmental effects of a data center. In areas where the climate favors cooling and plenty of renewable electricity is available, the environmental effects will be more moderate. Thus countries with favorable conditions, such as Canada,[39] Finland,[40] Sweden,[41] and Switzerland,[42] are trying to attract cloud computing data centers.

An 18-month investigation by scholars at Rice University’s Baker Institute for Public Policy in Houston and the Institute for Sustainable and Applied Infodynamics in Singapore projected that data center-related emissions would more than triple by 2020.[43]

Energy efficiency

The most commonly used metric to determine the energy efficiency of a data center is power usage effectiveness, or PUE. This simple ratio is the total power entering the data center divided by the power used by the IT equipment.

Power used by support equipment, often referred to as overhead load, mainly consists of cooling systems, power delivery, and other facility infrastructure like lighting. The average data center in the US has a PUE of 2.0,[37] meaning that the facility uses one watt of overhead power for every watt delivered to IT equipment. State-of-the-art data center energy efficiency is estimated to be roughly 1.2.[44] Some large data center operators like Microsoft and Yahoo! have published projections of PUE for facilities in development; Google publishes quarterly actual efficiency performance from data centers in operation.[45]
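The PUE ratio and its relationship to overhead load can be illustrated with a short calculation. This is a minimal sketch: the function names and the sample power figures are hypothetical, chosen only to reproduce the PUE of 2.0 mentioned above.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_per_it_watt(pue_value: float) -> float:
    """Watts of overhead (cooling, power delivery, lighting) per IT watt."""
    return pue_value - 1.0


# Hypothetical facility: 1,000 kW enters the building, 500 kW reaches IT gear.
facility_pue = pue(1000.0, 500.0)
print(facility_pue, overhead_per_it_watt(facility_pue))
```

A PUE of 1.0 would mean no overhead at all, so lower values indicate a more efficient facility.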

The U.S. Environmental Protection Agency has an Energy Star rating for standalone or large data centers. To qualify for the ecolabel, a data center must be within the top quartile of energy efficiency of all reported facilities.[46]

The European Union has a similar initiative, the EU Code of Conduct for Data Centres.[47]

Energy use analysis

Often, the first step toward curbing energy use in a data center is to understand how energy is being used in the data center. Multiple types of analysis exist to measure data center energy use. Aspects measured include not just energy used by IT equipment itself, but also by the data center facility equipment, such as chillers and fans.[48]

Power and cooling analysis

Power is the largest recurring cost to the user of a data center.[49] A power and cooling analysis, also referred to as a thermal assessment, measures the relative temperatures in specific areas as well as the capacity of the cooling systems to handle specific ambient temperatures.[50] A power and cooling analysis can help to identify hot spots, over-cooled areas that can handle greater power use density, the breakpoint of equipment loading, the effectiveness of a raised-floor strategy, and optimal equipment positioning (such as AC units) to balance temperatures across the data center. Power cooling density is a measure of how much square footage the center can cool at maximum capacity.[51]

Energy efficiency analysis

An energy efficiency analysis measures the energy use of data center IT and facilities equipment. A typical energy efficiency analysis measures factors such as a data center’s power usage effectiveness (PUE) against industry standards, identifies mechanical and electrical sources of inefficiency, and identifies air-management metrics.[52]

Computational fluid dynamics (CFD) analysis

This type of analysis uses sophisticated tools and techniques to understand the unique thermal conditions present in each data center, using numerical modeling to predict the temperature, airflow, and pressure behavior of a data center and so assess performance and energy consumption.[53] By predicting the effects of these environmental conditions, CFD analysis in the data center can be used to predict the impact of high-density racks mixed with low-density racks[54] and the onward impact on cooling resources, poor infrastructure management practices, and AC failure or AC shutdown for scheduled maintenance.

Thermal zone mapping

Thermal zone mapping uses sensors and computer modeling to create a three-dimensional image of the hot and cool zones in a data center.[55]

This information can help to identify optimal positioning of data center equipment. For example, critical servers might be placed in a cool zone that is serviced by redundant AC units.
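As a rough illustration of the idea, sensor readings keyed by three-dimensional position can be split into hot and cool zones. This sketch makes several assumptions: the grid coordinates, the readings, and the 27 °C threshold are hypothetical, and real thermal zone mapping also relies on computer modeling rather than a single cutoff.

```python
from typing import Dict, List, Tuple

# (row, column, height level) of a sensor in the room; a hypothetical layout.
Position = Tuple[int, int, int]


def map_zones(readings: Dict[Position, float],
              hot_threshold_c: float = 27.0) -> Dict[str, List[Position]]:
    """Classify each sensor position as 'hot' or 'cool' by temperature."""
    zones: Dict[str, List[Position]] = {"hot": [], "cool": []}
    for position, temp_c in sorted(readings.items()):
        label = "hot" if temp_c >= hot_threshold_c else "cool"
        zones[label].append(position)
    return zones


# Hypothetical readings in degrees Celsius.
readings = {
    (0, 0, 0): 21.5,
    (0, 0, 1): 24.0,
    (0, 1, 0): 22.0,
    (0, 1, 1): 29.5,
}
zones = map_zones(readings)
print(zones["hot"])   # candidate hot spots
print(zones["cool"])  # candidate locations for critical servers
```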

Green datacenters

Data centers use a lot of power, consumed by two main uses: the power required to run the actual equipment and the power required to cool it. The first is addressed by designing computers and storage systems that are increasingly power-efficient. To bring down cooling costs, data center designers try to use natural ways to cool the equipment. Many data centers have to be located near population centers so that staff can manage the equipment, but in many circumstances a data center can be miles away from its users and does not need much local management. Examples are the "mass" data centers of companies like Google or Facebook: these are built around many standardized servers and storage arrays, and the actual users of the systems are located all around the world. After the initial build of a data center, not much staff is required to keep it running; data centers that provide mass storage or computing power in particular do not need to be near population centers. Data centers in arctic locations, where outside air provides all cooling, are becoming more popular, as cooling and electricity are the two main variable cost components.[56]

Network infrastructure

Communications in data centers today are most often based on networks running the IP protocol suite. Data centers contain a set of routers and switches that transport traffic between the servers and to the outside world. Redundancy of the Internet connection is often provided by using two or more upstream service providers (see Multihoming).

Some of the servers at the data center are used for running the basic Internet and intranet services needed by internal users in the organization, e.g., e-mail servers, proxy servers, and DNS servers.

Network security elements are also usually deployed: firewalls, VPN gateways, intrusion detection systems, etc. Also common are monitoring systems for the network and some of the applications. Additional off site monitoring systems are also typical, in case of a failure of communications inside the data center.

Data center infrastructure management

Data center infrastructure management (DCIM) is the integration of information technology (IT) and facility management disciplines to centralize monitoring, management, and intelligent capacity planning of a data center's critical systems. Achieved through the implementation of specialized software, hardware, and sensors, DCIM enables a common, real-time monitoring and management platform for all interdependent systems across IT and facility infrastructures.

Depending on the type of implementation, DCIM products can help data center managers identify and eliminate sources of risk to increase availability of critical IT systems. DCIM products also can be used to identify interdependencies between facility and IT infrastructures to alert the facility manager to gaps in system redundancy, and provide dynamic, holistic benchmarks on power consumption and efficiency to measure the effectiveness of “green IT” initiatives.

DCIM also involves measuring and understanding important data center efficiency metrics. A lot of the discussion in this area has focused on energy issues, but other metrics beyond the PUE can give a more detailed picture of data center operations. Server, storage, and staff utilization metrics can contribute to a more complete view of an enterprise data center. In many cases, disk capacity goes unused, and in many instances organizations run their servers at 20% utilization or less.[57] More effective automation tools can also increase the number of servers or virtual machines that a single admin can handle.
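Utilization metrics of this kind are straightforward to compute once the raw figures are collected. The sketch below is illustrative only: the server names, utilization values, and staffing numbers are hypothetical, and the 20% threshold simply mirrors the figure quoted above.

```python
from typing import Dict, List


def underutilized_servers(cpu_utilization: Dict[str, float],
                          threshold: float = 0.20) -> List[str]:
    """Return names of servers running at or below the threshold."""
    return sorted(name for name, used in cpu_utilization.items()
                  if used <= threshold)


def servers_per_admin(server_count: int, admin_count: int) -> float:
    """Staff utilization metric: servers handled by each administrator."""
    if admin_count <= 0:
        raise ValueError("admin_count must be positive")
    return server_count / admin_count


# Hypothetical average CPU utilization per server (fraction of capacity).
utilization = {"web-01": 0.12, "db-01": 0.55, "batch-01": 0.20}
print(underutilized_servers(utilization))
print(servers_per_admin(server_count=300, admin_count=4))
```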

DCIM providers are increasingly linking with computational fluid dynamics providers to predict complex airflow patterns in the data center. The CFD component is necessary to quantify the impact of planned future changes on cooling resilience, capacity and efficiency.[58]

Applications

The main purpose of a data center is running the applications that handle the core business and operational data of the organization. Such systems may be proprietary and developed internally by the organization, or bought from enterprise software vendors. Common examples of such applications are ERP and CRM systems.

A data center may be concerned with just operations architecture or it may provide other services as well.

Often these applications will be composed of multiple hosts, each running a single component. Common components of such applications are databases, file servers, application servers, middleware, and various others.

Data centers are also used for off site backups. Companies may subscribe to backup services provided by a data center. This is often used in conjunction with backup tapes. Backups can be taken off servers locally on to tapes. However, tapes stored on site pose a security threat and are also susceptible to fire and flooding. Larger companies may also send their backups off site for added security. This can be done by backing up to a data center. Encrypted backups can be sent over the Internet to another data center where they can be stored securely.

For quick deployment or disaster recovery, several large hardware vendors have developed mobile solutions that can be installed and made operational in very short time. Companies such as Cisco Systems,[59] Sun Microsystems (Sun Modular Datacenter),[60][61] Bull (mobull),[62] IBM (Portable Modular Data Center), HP (Performance Optimized Datacenter),[63] Huawei (Container Data Center Solution),[64] and Google (Google Modular Data Center) have developed systems that could be used for this purpose.[65][66]

Notes

This article reuses numerous content elements from the Wikipedia article.

References

- ↑ 1.0 1.1 1.2 1.3 Bruno, Anthony; Jordan, Steve (2011). Official Cert Guide: CCDA 640-864 (4th ed.). Cisco Press. p. 130. ISBN 9780132372145. http://books.google.com/books?id=cuOoV5u3WCUC&pg=PA130. Retrieved 26 August 2014.

- ↑ Glanz, James (22 September 2012). "Power, Pollution and the Internet". The New York Times. http://www.nytimes.com/2012/09/23/technology/data-centers-waste-vast-amounts-of-energy-belying-industry-image.html. Retrieved 26 August 2014.

- ↑ 3.0 3.1 3.2 Bartels, Angela (31 August 2011). "[INFOGRAPHIC] Data Center Evolution: 1960 to 2000". The Rackspace Blog! & Newsroom. Rackspace, US Inc. http://www.rackspace.com/blog/datacenter-evolution-1960-to-2000/. Retrieved 26 August 2014.

- ↑ Murray, John P. (26 October 1981). "Data Center Problems: You're Not Alone". Computerworld. http://books.google.com/books?id=1REkdf3I86oC&pg=PA35. Retrieved 26 August 2014.

- ↑ Barton, Jim (1 October 2003). "From Server Room to Living Room". Queue. Association for Computing Machinery. http://queue.acm.org/detail.cfm?id=945076. Retrieved 26 August 2014.

- ↑ Axelrod, C. Warren; Blanding, Steve (ed.) (1998). "Enterprise Operations Management Handbook". CRC Press. pp. 75–84. ISBN 9781420052169. http://books.google.com/books?id=XrEwl7VWXXMC&pg=PA75. Retrieved 26 August 2014.

- ↑ 7.0 7.1 Katz, Randy H (1 February 2009). "Tech Titans Building Boom". IEEE Spectrum. IEEE. http://spectrum.ieee.org/green-tech/buildings/tech-titans-building-boom. Retrieved 26 August 2014.

- ↑ 8.0 8.1 "TIA-942: Telecommunications Infrastructure Standard for Data Centers". Telecommunications Industry Association. 1 March 2014. https://global.ihs.com/doc_detail.cfm?&rid=TIA&input_doc_number=TIA-942&item_s_key=00414811&item_key_date=860905&input_doc_number=TIA-942&input_doc_title=#abstract. Retrieved 26 August 2014.

- ↑ "About Uptime Institute". 451 Group, Inc. http://uptimeinstitute.com/about-us. Retrieved 26 August 2014.

- ↑ "Uptime Institute Publications". 451 Group, Inc. http://uptimeinstitute.com/publications. Retrieved 26 August 2014.

- ↑ "TIA to remove the word ‘Tier’ from its 942 Data Center standards". Cabling Installation & Maintenance. PennWell Corporation. 18 March 2014. http://www.cablinginstall.com/articles/2014/03/tia-942-tiers.html. Retrieved 26 August 2014.

- ↑ "ANSI/BICSI 002-2011, Data Center Design and Implementation Best Practices". BICSI. https://www.bicsi.org/book_details.aspx?Book=BICSI-002-CM-11-v5&d=0. Retrieved 26 August 2014.

- ↑ "The European Standard EN 50600". CIS International. http://www.cis-cert.com/Pages/com/System-Zertifizierung/Data-Centers/Certification/European-Standard-EN-50600.aspx. Retrieved 26 August 2014.

- ↑ "ÖVE/ÖNORM EN 50600-1:2013-06-01". Österreichischer Verband für Elektrotechnik. https://www.ove.at/webshop/artikel/9f5a9db895-ove-onorm-en-50600-1-2013-06-01.html. Retrieved 26 August 2014.

- ↑ "NEBS Documents and Technical Services". Telcordia Technologies, Inc. http://telecom-info.telcordia.com/site-cgi/ido/docs2.pl?ID=239065400&page=nebs. Retrieved 26 August 2014.

- ↑ "About DCSA". eco. http://www.dcaudit.com/about-dcsa.html. Retrieved 26 August 2014.

- ↑ Klein, Mike (3 March 2011). "SAS 70, SSAE 16, SOC and Data Center Standards". Data Center Knowledge. iNET Interactive. http://www.datacenterknowledge.com/archives/2011/03/03/sas-70-ssae-16-soc-and-data-center-standards/. Retrieved 26 August 2014.

- ↑ "SOC Reports Information for CPAs". AICPA. http://www.aicpa.org/interestareas/frc/assuranceadvisoryservices/pages/cpas.aspx. Retrieved 26 August 2014.

- ↑ "ISO Certified Data Centers". Equinix, Inc. http://www.equinix.com/solutions/by-services/colocation/standards-and-compliance/iso-certified-data-centers/. Retrieved 26 August 2014.

- ↑ "Inside our data centers". Google Data Centers. Google. https://www.google.com/about/datacenters/inside/. Retrieved 26 August 2014.

- ↑ Mullins, Robert (29 June 2011). "Romonet Offers Predictive Modelling Tool For Data Center Planning". Information Week Network Computing. UBM Tech. http://www.networkcomputing.com/data-centers/romonet-offers-predictive-modeling-tool-for-data-center-planning/d/d-id/1232857?. Retrieved 26 August 2014.

- ↑ Jew, Jonathan (May/June 2010). "BICSI Data Center Standard: A Resource for Today’s Data Center Operators and Designers". BICSI News Magazine. BICSI. pp. 26–30. http://www.nxtbook.com/nxtbooks/bicsi/news_20100506/#/26. Retrieved 26 August 2014.

- ↑ Clark, Jeffrey (12 October 2011). "The Price of Data Center Availability". The Data Center Journal. http://www.datacenterjournal.com/design/the-price-of-data-center-availability/. Retrieved 26 August 2014.

- ↑ Tucci, Linda (7 May 2008). "Five tips on selecting a data center location". SearchCIO. TechTarget. http://searchcio.techtarget.com/news/1312614/Five-tips-on-selecting-a-data-center-location. Retrieved 26 August 2014.

- ↑ "Evaluating the Economic Impact of UPS Technology" (PDF). Liebert Corporation. 2004. Archived from the original on 22 November 2010. https://web.archive.org/web/20101122074817/http://emersonnetworkpower.com/en-US/Brands/Liebert/Documents/White%20Papers/Evaluating%20the%20Economic%20Impact%20of%20UPS%20Technology.pdf. Retrieved 27 August 2014.

- ↑ ASHRAE Technical Committee 9.9 (2012). Thermal Guidelines for Data Processing Environments (3rd ed.). American Society of Heating, Refrigerating and Air-Conditioning Engineers. pp. 136. ISBN 9781936504336. http://books.google.com/books?id=AgWcMQEACAAJ. Retrieved 27 August 2014.

- ↑ GR-2930 "NEBS: Raised Floor Generic Requirements for Network and Data Centers". Telcordia Technologies, Inc. July 2012. http://telecom-info.telcordia.com/site-cgi/ido/docs.cgi?ID=SEARCH&DOCUMENT=GR-2930& GR-2930. Retrieved 27 August 2014.

- ↑ "Other Metal Whiskers". Tin Whisker (and Other Metal Whisker) Homepage. NASA. 24 January 2011. http://nepp.nasa.gov/whisker/other_whisker/index.htm. Retrieved 27 August 2014.

- ↑ Tubbs, Jeffrey; DiSalvo, Garr; Neviackas, Andrew (December 2013). "Data Center Fire Protection". FacilitiesNet. Trade Press. http://www.facilitiesnet.com/datacenters/article/A-Comprehensive-Approach-To-Data-Center-Fire-Safety--14593. Retrieved 27 August 2014.

- ↑ "Effective Data Center Physical Security Best Practices for SAS 70 Compliance". NDB LLP. 2008. http://www.sas70.us.com/industries/data-center-colocations.php. Retrieved 27 August 2014.

- ↑ "Data Center Energy Consumption Trends". U.S. Department of Energy. http://www1.eere.energy.gov/femp/program/dc_energy_consumption.html. Retrieved 2010-06-10.

- ↑ J Koomey, C. Belady, M. Patterson, A. Santos, K.D. Lange. Assessing Trends Over Time in Performance, Costs, and Energy Use for Servers Released on the web August 17th, 2009.

- ↑ "Quick Start Guide to Increase Data Center Energy Efficiency". U.S. Department of Energy. http://www1.eere.energy.gov/femp/pdfs/data_center_qsguide.pdf. Retrieved 2010-06-10.

- ↑ James Glanz (September 23, 2012). "Data Barns in a Farm Town, Gobbling Power and Flexing Muscle". The New York Times. http://www.nytimes.com/2012/09/24/technology/data-centers-in-rural-washington-state-gobble-power.html. Retrieved September 25, 2012.

- ↑ "CASE STUDIES OF STATIONARY RECIPROCATING DIESEL ENGINE RETROFIT PROJECTS". Manufacturers of Emission Controls Association. November 2009. http://www.meca.org/galleries/files/Stationary_Engine_Diesel_Retrofit_Case_Studies_1109final.pdf. Retrieved August 5, 2014.

- ↑ 36.0 36.1 "Smart 2020: Enabling the low carbon economy in the information age". The Climate Group for the Global e-Sustainability Initiative. http://www.smart2020.org/_assets/files/03_Smart2020Report_lo_res.pdf. Retrieved 2008-05-11.

- ↑ 37.0 37.1 "Report to Congress on Server and Data Center Energy Efficiency". U.S. Environmental Protection Agency ENERGY STAR Program. http://www.energystar.gov/ia/partners/prod_development/downloads/EPA_Datacenter_Report_Congress_Final1.pdf.

- ↑ A calculation of data center electricity burden cited in the Report to Congress on Server and Data Center Energy Efficiency and electricity generation contributions to green house gas emissions published by the EPA in the Greenhouse Gas Emissions Inventory Report. Retrieved 2010-06-08.

- ↑ Canada Called Prime Real Estate for Massive Data Computers - Globe & Mail. Retrieved June 29, 2011.

- ↑ Finland - First Choice for Siting Your Cloud Computing Data Center. Retrieved 4 August 2010.

- ↑ Stockholm sets sights on data center customers. Retrieved 4 August 2010.

- ↑ Swiss Carbon-Neutral Servers Hit the Cloud. Retrieved 4 August 2010.

- ↑ Katrice R. Jalbuena (October 15, 2010). "Green business news". EcoSeed. http://ecoseed.org/en/business-article-list/article/1-business/8219-i-t-industry-risks-output-cut-in-low-carbon-economy. Retrieved November 11, 2010.

- ↑ "Data Center Energy Forecast". Silicon Valley Leadership Group. https://microsite.accenture.com/svlgreport/Documents/pdf/SVLG_Report.pdf.

- ↑ "Google Efficiency Update". Data Center Knowledge. http://www.datacenterknowledge.com/archives/2009/10/15/google-efficiency-update-pue-of-1-22/. Retrieved 2010-06-08.

- ↑ Commentary on introduction of Energy Star for Data Centers "Introducing EPA ENERGY STAR for Data Centers" (Web site). Jack Pouchet. 27 September 2010. http://www.emerson.com/edc/post/2010/06/15/Introducing-EPA-ENERGY-STARc2ae-for-Data-Centers.aspx. Retrieved 2010-09-27.

- ↑ "EU Code of Conduct for Data Centres". Re.jrc.ec.europa.eu. http://re.jrc.ec.europa.eu/energyefficiency/html/standby_initiative_data_centers.htm. Retrieved 2013-08-30.

- ↑ Sweeney, Jim. "Reducing Data Center Power and Energy Consumption: Saving Money and 'Going Green,' " GTSI Solutions, pages 2–3. [1]

- ↑ Cosmano, Joe, "Choosing a Data Center", Disaster Recovery Journal, http://www.atlantic.net/images/pdf/choosing_a_data_center.pdf. Retrieved 2012-07-21

- ↑ Needle, David. “HP's Green Data Center Portfolio Keeps Growing,” InternetNews, July 25, 2007. [2]

- ↑ Inc. staff, "How to Choose a Data Center", http://www.inc.com/guides/2010/11/how-to-choose-a-data-center_pagen_2.html. Retrieved 2012-07-21

- ↑ Siranosian, Kathryn. “HP Shows Companies How to Integrate Energy Management and Carbon Reduction,” TriplePundit, April 5, 2011. [3]

- ↑ Bullock, Michael. “Computation Fluid Dynamics - Hot topic at Data Center World,” Transitional Data Services, March 18, 2010. [4]

- ↑ Bouley, Dennis (editor). “Impact of Virtualization on Data Center Physical Infrastructure,” The Green Grid, 2010. [5]

- ↑ Fontecchio, Mark. “HP Thermal Zone Mapping plots data center hot spots,” SearchDataCenter, July 25, 2007. [6]

- ↑ Gizmag. "Fjord-cooled DC in Norway claims to be greenest". 23 December 2011. Retrieved 1 April 2012.

- ↑ "Measuring Data Center Efficiency: Easier Said Than Done". Dell.com. http://content.dell.com/us/en/enterprise/d/large-business/measure-data-center-efficiency.aspx. Retrieved 2012-06-25.

- ↑ http://www.gartner.com/it-glossary/computational-fluid-dynamic-cfd-analysis

- ↑ "Info and video about Cisco's solution". Datacentreknowledge. 15 May 2007. http://www.datacenterknowledge.com/archives/2008/May/15/ciscos_mobile_emergency_data_center.html. Retrieved 2008-05-11.

- ↑ "Technical specs of Sun's Blackbox". Archived from the original on 2008-05-13. http://web.archive.org/web/20080513090300/http://www.sun.com/products/sunmd/s20/specifications.jsp. Retrieved 2008-05-11.

- ↑ An English Wiki article on Sun's modular datacentre

- ↑ Kidger, Daniel. "Mobull Plug and Boot Datacenter". Bull. http://www.bull.com/extreme-computing/mobull.html. Retrieved 2011-05-24.

- ↑ "HP Performance Optimized Datacenter (POD) 20c and 40c - Product Overview". H18004.www1.hp.com. http://h18004.www1.hp.com/products/servers/solutions/datacentersolutions/pod/index.html. Retrieved 2013-08-30.

- ↑ "Huawei's Container Data Center Solution". Huawei. http://www.huawei.com/ilink/enenterprise/download/HW_143893. Retrieved 2014-05-17.

- ↑ Kraemer, Brian (11 June 2008). "IBM's Project Big Green Takes Second Step". ChannelWeb. http://www.crn.com/hardware/208403225. Retrieved 2008-05-11.

- ↑ "Modular/Container Data Centers Procurement Guide: Optimizing for Energy Efficiency and Quick Deployment" (PDF). http://hightech.lbl.gov/documents/data_centers/modular-dc-procurement-guide.pdf. Retrieved 2013-08-30.