Journal:Named data networking for genomics data management and integrated workflows

| Full article title | Named data networking for genomics data management and integrated workflows |
|---|---|
| Journal | Frontiers in Big Data |
| Author(s) | Ogle, Cameron; Reddick, David; McKnight, Coleman; Biggs, Tyler; Pauly, Rini; Ficklin, Stephen P.; Feltus, F. Alex; Shannigrahi, Susmit |
| Author affiliation(s) | Clemson University, Tennessee Tech University, Washington State University |
| Primary contact | Email: sshannigrahi at tntech dot edu |
| Editors | Shi, F. |
| Year published | 2021 |
| Volume and issue | 4 |
| Article # | 582468 |
| DOI | 10.3389/fdata.2021.582468 |
| ISSN | 2624-909X |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.frontiersin.org/articles/10.3389/fdata.2021.582468/full |
| Download | https://www.frontiersin.org/articles/10.3389/fdata.2021.582468/pdf (PDF) |

Abstract

Advanced imaging and DNA sequencing technologies now enable the diverse biology community to routinely generate and analyze terabytes of high-resolution biological data. The community is rapidly heading toward the petascale in single-investigator laboratory settings. As evidence, the National Center for Biotechnology Information (NCBI) Sequence Read Archive (SRA) central DNA sequence repository alone contains over 45 petabytes of biological data. Given the geometric growth of this and other genomics repositories, an exabyte of mineable biological data is imminent. The challenges of effectively utilizing these datasets are enormous, as they are not only large in size but also stored in various geographically distributed repositories such as those hosted by the NCBI, as well as in the DNA Data Bank of Japan (DDBJ), European Bioinformatics Institute (EBI), and NASA’s GeneLab.

In this work, we first systematically point out the data management challenges of the genomics community. We then introduce named data networking (NDN), a novel but well-researched internet architecture capable of solving these challenges at the network layer. NDN performs all operations such as forwarding requests to data sources, content discovery, access, and retrieval using content names (that are similar to traditional filenames or filepaths), all while eliminating the need for a location layer (the IP address) for data management. Utilizing NDN for genomics workflows simplifies data discovery, speeds up data retrieval using in-network caching of popular datasets, and allows the community to create infrastructure that supports operations such as creating federation of content repositories, retrieval from multiple sources, remote data subsetting, and others. Using name-based operations also streamlines deployment and integration of workflows with various cloud platforms.

We make four significant contributions with this work. First, we enumerate the cyberinfrastructure challenges of the genomics community that NDN can alleviate. Second, we describe our efforts in applying NDN to a contemporary genomics workflow (GEMmaker) and quantify the improvements. The preliminary evaluation shows a sixfold speedup in data insertion into the workflow. Third, as a pilot, we have used an NDN naming scheme (agreed upon by the community and discussed in the "Method" section) to publish data from broadly used data repositories, including the NCBI SRA. We have loaded the NDN testbed with these pre-processed genomes, which can be accessed over NDN and used by anyone interested in those datasets. Finally, we discuss our continued effort in integrating NDN with cloud computing platforms, such as the Pacific Research Platform (PRP).

The reader should note that the goal of this paper is to introduce NDN to the genomics community and discuss NDN’s properties that can benefit the genomics community. We do not present an extensive performance evaluation of NDN; we are working on extending and evaluating our pilot deployment and will present systematic results in a future work.

Keywords: genomics data, genomics workflows, large science data, cloud computing, named data networking

Introduction

Scientific communities are entering a new era of exploration and discovery in many fields, driven by high-density data accumulation. Examples include climate science[1], high-energy particle physics (HEP)[2], astrophysics[3][4], genomics[5], seismology[6], and biomedical research[7]. Often referred to as “data-intensive” science, these communities utilize and generate extremely large volumes of data, often reaching into the petabytes[8] and soon projected to reach into the exabytes.

Data-intensive science has created radically new opportunities. Take, for example, high-throughput DNA sequencing (HTDS). Until recently, HTDS was slow and expensive, and only a few institutes were capable of performing it at scale.[9] With advances in supercomputers, specialized DNA sequencers, and better bioinformatics algorithms, sequencing has become far more effective, and its cost has dropped considerably and continues to drop. For example, sequencing the first reference human genome cost around $2.7 billion over 15 years, while it currently costs under $1,000 to resequence a human genome.[10] With commercial incentives, several companies are offering fragmented genome resequencing for under $100, performed in only a few days. This massive drop in cost and improvement in speed supports more advanced scientific discovery. For example, scientists could previously test their hypotheses on only a small number of genomes or gene expression conditions within or between species. With more publicly available datasets[5], scientists can test their hypotheses against a larger number of genomes, potentially enabling them to identify rare mutations, precisely classify diseases for a specific patient, and thus treat the disease more accurately.[11]

While the growth of DNA sequencing is encouraging, it has also created difficulty in genomics data management. For example, the National Center for Biotechnology Information’s (NCBI) Sequence Read Archive (SRA) database hosts 42 petabytes of publicly accessible DNA sequence data.[12] Scientists desiring to use public data must discover (or locate) the data and move it from globally distributed sites to on-premises clusters and distributed computing platforms, including public and commercial clouds. Public repositories such as the NCBI SRA contain a subset of all available genomics data.[13] Similar repositories are hosted by NASA, the National Institutes of Health (NIH), and other organizations. Even though these datasets are highly curated, each public repository uses its own standards for data naming, retrieval, and discovery, which makes locating and utilizing these datasets difficult.

Moreover, data management problems require the community to build and the scientists to spend time learning complex infrastructures (e.g., cloud platforms, grids) and creating tools, scripts, and workflows that can (semi-) automate their research. The current trend of moving from localized institutional storage and computing to an on-demand cloud computing model adds another layer of complexity to the workflows. The next generation of scientific breakthroughs may require massive data. Our ability to manage, distribute, and utilize these types of extreme-scale datasets and securely integrate them with computational platforms may dictate our success (or failure) in future scientific research.

Our experience in designing and deploying protocols for "big data" science[8][14][15][16][17][18] suggests that using hierarchical and community-developed names for storing, discovering, and accessing data can dramatically simplify scientific data management systems (SDMSs), and that the network is the ideal place for integrating domain workflows with distributed services. In this work, we propose a named ecosystem over an evolving but well-researched future internet architecture: named data networking (NDN). NDN utilizes content names for all data management operations such as content addressing, content discovery, and retrieval. Utilizing content names for all network operations massively simplifies data management infrastructure. Users simply ask for the content by name (e.g., “/ncbi/homo/sapiens/hg38”) and the network delivers the content to the user.

Using content names that are understood by the end-user over an NDN network provides multiple advantages: natural caching of popular content near the users, unified access mechanisms, and location-agnostic publication of data and services. For example, a properly named dataset can be downloaded from either NCBI or GeneLab at NASA, whichever is closer to the researcher. Additionally, the derived data (results, annotations, publications) are easily publishable into the network (possibly after vetting and quality control by NCBI or NASA) and immediately discoverable if appropriate naming conventions are agreed upon and followed. Finally, NDN shifts the trust to content itself; each piece of content is cryptographically signed by the data producer and verifiable by anyone for provenance.

In this work, we first introduce NDN and the architectural constructs that make it attractive for the genomics community. We then discuss the data management and cyberinfrastructure challenges faced by the genomics community and how NDN can help alleviate them. We then present our pilot study applying NDN to a contemporary genomics workflow GEMmaker[19] and evaluate the integration. Finally, we discuss future research directions and an integration roadmap with cloud computing services.

Named data networking

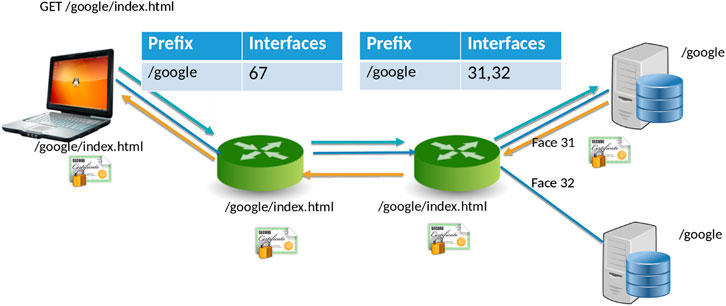

NDN[20] is a new networking paradigm that adopts a drastically different communication model than the current IP model. In NDN, data is accessed by content names (e.g., “/Human/DNA/Genome/hg38”) rather than through the host where it resides (e.g., ftp://ftp.ncbi.nlm.nih.gov/refseq/H_sapiens/annotation/GRCh38_latest/refseq_identifiers/GRCh38_latest_genomic.fna.gz). Naming the data allows the network to participate in operations that were not feasible before. Specifically, the network can take part in discovering and locally caching the data, merging similar requests, retrieving data from multiple distributed sources, and more. In NDN, the communication primitive is straightforward (Figure 1): the consumer asks for the content by content name (an “Interest” in NDN terminology), and the network forwards the request toward the publisher.

For communication, NDN uses two types of packets: Interest and Data. The content consumer initiates communication in NDN. To retrieve data, a consumer sends out an Interest packet into the network, which carries a name that identifies the desired data. One such content name (similar to a resource identifier) might be “/google/index.html”. A network router maintains a name-based forwarding table (FIB) (Figure 1). The router remembers the interface from which the request arrives and then forwards the Interest packet by looking up the name in its FIB. FIBs are populated using a name-based routing protocol such as Named-data Link State Routing Protocol (NLSR).[21]

NDN routes and forwards packets based on content names[22], which eliminates various problems that addresses pose in the IP architecture, such as address space exhaustion, Network Address Translation (NAT) traversal, mobility, and address management. Routing in NDN is similar to IP routing. Instead of announcing IP prefixes, an NDN router announces name prefixes that it is willing to serve (e.g., “/google”). The announcement is propagated through the network and eventually populates the FIB of every router. Routers then match the name of each incoming Interest against the FIB using component-based longest prefix match. For example, “/google/videos/movie1.mpg” might match “/google” or “/google/videos”. Though an unbounded namespace raises the question of how to maintain control over the routing table sizes and whether looking up variable-length, hierarchical names can be done at line rate, previous works have shown that it is indeed possible to forward packets at 100 Gbps or more.[23][24]
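The component-based longest prefix match described above can be illustrated with a minimal Python sketch. The FIB contents and the face identifiers (`face-1`, `face-2`, `face-3`) are assumptions made for illustration; a production forwarder would use a far more efficient lookup structure than a dictionary scan.

```python
def split_name(name):
    """Split an NDN-style name such as '/google/videos' into its components."""
    return tuple(c for c in name.split("/") if c)


def longest_prefix_match(fib, interest_name):
    """Return (prefix, face) for the longest FIB prefix matching the name, or None."""
    components = split_name(interest_name)
    # Try the full name first, then drop one trailing component at a time.
    for length in range(len(components), 0, -1):
        candidate = components[:length]
        if candidate in fib:
            return "/" + "/".join(candidate), fib[candidate]
    return None


# Hypothetical FIB: name prefix -> outgoing interface (face) identifier.
fib = {
    split_name("/google"): "face-1",
    split_name("/google/videos"): "face-2",
}

print(longest_prefix_match(fib, "/google/videos/movie1.mpg"))
print(longest_prefix_match(fib, "/google/index.html"))

# Matching works on whole components, not characters: an entry for
# '/google/video' does not cover a name under '/google/videos'.
other_fib = {split_name("/google/video"): "face-3"}
print(longest_prefix_match(other_fib, "/google/videos/movie1.mpg"))
```

The last lookup returns `None` because the comparison is made component by component rather than on the raw string.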

When the Interest reaches a node or router with the requested data, it packages the content under the same name (i.e., the request name), signs it with the producer’s signature, and returns it. For example, a request for “/google/index.html” brings back data under the same name “/google/index.html” that contains a payload with the actual data and the data producer’s (i.e., Google) signature. This Data packet follows the reverse path taken by the Interest. Note that Interest or Data packets do not carry any host information or IP addresses; they are simply forwarded based on names (for Interest packets) or state in the routers (for Data packets). Since every NDN Data packet is signed, the router can store it locally in a cache to satisfy future requests.
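The per-request state that lets Data packets follow the reverse path can be sketched as a small table keyed by name. This is a toy model under stated assumptions: the `Router` class and the face labels are hypothetical, and the table stands in for what the NDN literature calls the Pending Interest Table.

```python
from collections import defaultdict


class Router:
    """Toy router that records pending Interests and returns Data on the reverse path."""

    def __init__(self):
        # name -> set of faces that asked for it and are still waiting
        self.pending = defaultdict(set)

    def on_interest(self, name, in_face):
        """Record the requesting face; return True if the Interest must go upstream."""
        first_request = name not in self.pending
        self.pending[name].add(in_face)
        return first_request

    def on_data(self, name):
        """Return the faces the Data should be sent to, and clear the pending state."""
        return sorted(self.pending.pop(name, set()))


router = Router()
print(router.on_interest("/google/index.html", "face-a"))  # forwarded upstream
print(router.on_interest("/google/index.html", "face-b"))  # merged with the pending request
print(router.on_data("/google/index.html"))  # Data goes back to both requesters
print(router.on_data("/google/index.html"))  # no pending state left for this name
```

The second Interest for the same name is not forwarded again, which is one way similar requests can be merged inside the network.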

Hierarchical naming

There is no restriction on how content is named in NDN, except that names must be human-readable, hierarchical, and globally unique. Scientific communities develop naming schemes as they see fit, and the uniqueness of names can be ensured by name registrars (similar to existing DNS registrars).

The NDN design assumes hierarchically structured names, e.g., a genome sequence published by NCBI may have the name “/NCBI/Human/DNA/Genome/hg38”, where “/” indicates a separator between name components. The whole sequence may not fit in a single Data packet, so the segments (or chunks) of the sequence will have the names “/NCBI/Human/DNA/Genome/hg38/{1..n}”. Data that is routed and retrieved globally must have a globally unique name. This is achieved by creating a hierarchy of naming components, just like the Domain Name System (DNS). In the example above, all sequences under NCBI will potentially reside under “/NCBI”; “/NCBI” is the name prefix that will be announced into the network. This hierarchical structure of names is useful both for applications and the network. For applications, it provides an opportunity to create structured, organized names. The network, on the other hand, does not need to know all the possible content names, only a prefix. For example, “/NCBI” is sufficient for forwarding.
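As an illustration of the segment naming above, the following Python sketch splits a payload into fixed-size chunks and names them under a common prefix. The helper `segment_names`, the 8-byte segment size, and the plain decimal suffix are assumptions for readability; actual NDN libraries encode segment numbers in their own component format, which this sketch does not reproduce.

```python
def segment_names(prefix, payload, segment_size):
    """Yield (name, chunk) pairs, numbering segments from 1 as in {1..n}."""
    if segment_size <= 0:
        raise ValueError("segment_size must be positive")
    offsets = range(0, len(payload), segment_size)
    for index, offset in enumerate(offsets, start=1):
        yield f"{prefix}/{index}", payload[offset:offset + segment_size]


# A 20-byte stand-in for a genome sequence, split into 8-byte segments.
sequence = b"ACGT" * 5
segments = list(segment_names("/NCBI/Human/DNA/Genome/hg38", sequence, 8))
for name, chunk in segments:
    print(name, chunk)

# A consumer that fetched every segment can reassemble the original payload.
reassembled = b"".join(chunk for _, chunk in segments)
print(reassembled == sequence)
```

Because every segment shares the “/NCBI” prefix, a router needs only that prefix to forward Interests for any of them.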

Data-centric security

In NDN, security is built into the content. Each piece of data is signed by the data producer, and the signature is carried with the content. Data signatures are mandatory; on receiving the data, applications can decide whether they trust the publisher. The signature, coupled with data publisher information, enables the determination of data provenance. For example, users can verify that content with names beginning with “/NCBI” is digitally signed by NCBI’s key.

NDN’s data-centric security decouples content from its original publisher and enables in-network caching; it is no longer critical where the data comes from since the client can verify the authenticity of the data. Unsigned data is rejected either in the network or at the receiving client. The receiver can get content from anyone, such as a repository, a router cache, or a neighbor—as well as the original publisher—and verify that the data is authentic.

In-network caching

Automatic in-network caching is enabled by naming data because a router can cache Data packets in its Content Store (CS) to satisfy future requests. Unlike in today’s internet, NDN routers can reuse the cached Data packets since they have persistent names and the producer’s signature. The CS is an in-memory buffer that keeps packets temporarily for future requests. Data such as reference genomes can benefit from caching since caching the content near the user speeds up content delivery and reduces the load on the data servers. In addition to the CS, NDN supports persistent, disk-based repositories (repos).[25] These storage devices can support caching at a larger scale and CDN-like functionality without additional application-layer engineering.
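A bounded cache keyed by Data name can be sketched in a few lines of Python. The least-recently-used eviction policy and the capacity of two entries are assumptions chosen for illustration; the text does not prescribe a particular replacement policy.

```python
from collections import OrderedDict


class ContentStore:
    """Bounded in-memory cache keyed by Data name, evicting the least recently used entry."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._entries = OrderedDict()

    def insert(self, name, data):
        self._entries[name] = data
        self._entries.move_to_end(name)
        if len(self._entries) > self.capacity:
            self._entries.popitem(last=False)  # drop the least recently used entry

    def lookup(self, name):
        if name not in self._entries:
            return None
        self._entries.move_to_end(name)  # mark as recently used
        return self._entries[name]


cs = ContentStore(capacity=2)
cs.insert("/NCBI/Human/DNA/Genome/hg38/1", b"ACGT")
cs.insert("/NCBI/Human/DNA/Genome/hg38/2", b"TTGA")
cs.lookup("/NCBI/Human/DNA/Genome/hg38/1")  # segment 1 is requested again
cs.insert("/NCBI/Human/DNA/Genome/hg38/3", b"CCAT")  # evicts segment 2

print(cs.lookup("/NCBI/Human/DNA/Genome/hg38/1"))
print(cs.lookup("/NCBI/Human/DNA/Genome/hg38/2"))
print(cs.lookup("/NCBI/Human/DNA/Genome/hg38/3"))
```

With this policy, frequently requested names tend to stay in the cache while rarely requested ones are displaced.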

In our previous work with the climate science and high-energy physics communities, we saw that even though scientific data is large, a strong locality of reference exists. We found that for climate data, even a 1 GB cache in the network speeds up data distribution significantly.[17] We observe similar patterns in the genomics community, where some of the reference genomes are very popular. These caches do not have to be at the core of the network. We anticipate most of the benefits will come from caching at the edge. For example, a large cache provisioned at the network gateway of a lab will benefit the scientists at that lab. In this case, the lab will provision and maintain its own caches. If data is popular across many organizations, it is in the operator's best interest to cache the data at the core since this will reduce latency and network traffic. Given that the price of data storage has gone down significantly (at the time of writing, an 8 TB hard drive costs around $150), such a cache does not significantly add to the operating costs of a lab. New routers and switches are also increasingly being shipped with storage, reducing the need for additional capital expenditure. Finally, caching and cache maintenance are automated in NDN (caching follows content popularity), eliminating the need to configure and maintain such storage.

Having introduced NDN in this section, we now enumerate the genomics data management problems and how NDN can solve them in the following section.

Genomics cyberinfrastructure challenges and solutions using NDN

The genomics community has made significant progress in recent decades. However, this progress has not been without challenges. A core challenge, as in many other science domains, is data volume. Due to low-cost sequencing instruments, the genomics community is rapidly approaching petascale data production at sequencing facilities housed in universities, research centers, and commercial centers. For example, the SRA repository at NCBI in Maryland, United States contains over 45 petabytes of high-throughput DNA sequence data, and there are other similar genomic data repositories around the world.[26][27] These data are complemented with metadata (though not always present or complete) representing evolutionary relationships, biological sample sources, measurement techniques, and biological conditions.[12]

Furthermore, while a large amount of data is accessible from large repositories such as the NCBI repository, a significant amount of genomics data resides in thousands of institutional repositories.[28][29][30] The current (preferred) way to publish data is to upload it to a central repository, e.g., NCBI, which is time-consuming and often requires effort from the scientists. The massively distributed nature of the data makes the genomics community unique. In other scientific communities, such as high-energy physics (HEP), climate, and astronomy, only a few large-scale repositories serve most of the data.[31] For example, the Large Hadron Collider (LHC) at CERN produces most of the data for the HEP community, telescopes (such as LSST and the to-be-built SKA) produce most of the data for astrophysics, and the supercomputers at various national labs produce climate simulation outputs.[32]

Modern genomic data comes in the form of reference genomes with coordinate-based annotation files, “dynamic” measurements of genome output (e.g., RNA-seq, ChIP-seq), and individual genome resequencing data. Reference genomes are used by many researchers across the world who can benefit from efficient data delivery mechanisms. The dynamic functional genomics and resequencing genomics datasets are often larger in size and of more focused use. All data is typically retrieved using various contemporary technologies such as sneakernet[33], SCP/FTP, IBM Aspera[34], Globus[35], and iRODS.[36][37] While reference data is often easy to locate and download, the dynamic and resequencing datasets often are not, since they are strewn over geographically distributed institutional repositories.

Locating and retrieving data are not the only problems that the genomics community faces. The rest of this section enumerates the cyberinfrastructure requirements of the genomics community, the problems encountered due to the current point-to-point TCP/IP-based model of the internet, and how NDN can solve them.

The massive storage problem

The genomics community is producing more data than it is currently feasible to store locally.[13] This phenomenon will accelerate as modern field-based or hand-held sequencers become more prevalent in individual research labs and commercial sequencing providers. Increasingly, valuable data is at risk of being lost, potentially forever. While the community must invest in storage capacity, the existing storage strategies need to be optimized, such as deduplication of popular datasets (e.g., the reference genomes). Moreover, popular datasets that are often reused must be available quickly and reliably to reduce the need for copying data.

NDN-based solution

With NDN, data can come from anywhere, including in-network caches. Fast access to popular data reduces the need to download and store datasets locally. They can be quickly downloaded and deleted after the experiments. Multiple researchers in the same area can benefit from this approach since they no longer need to individually download datasets from NCBI; instead, they can retrieve them from an in-network cache that is automatically populated by the network. Further, the data downloaded from this cache can be verified publicly for provenance. Another solution is to push the computation to the data. This can be accomplished by adding a lambda (computational function) to the Interest name. The data source (e.g., a data producer or a dedicated service) interprets the lambda upon receiving the Interest and returns the computed results. Scientists then do not have to download and store large datasets every time they need to run an experiment.

The data discovery problem

Genomics data is currently published from central locations (e.g., NCBI, NASA). The challenges in data discovery come not only from the fact that one needs to know all the locations of these datasets but also from the need to navigate the different naming schemes and discovery mechanisms provided by each hosting entity. There are many community-supported efforts to define controlled vocabularies and ontologies to help describe data.[38][39][40] These metadata can then be parsed, indexed, and organized for data discovery. A scientist, for example, can associate appropriate metadata with source data, resulting data, and data collections. However, the application of metadata to data is non-uniform, non-standard, and often inconsistent, making the metadata difficult to utilize for consistent naming or data discovery.

NDN-based solution

NDN does not provide data discovery by itself. Once data is named consistently by a certain community or subcommunity, these names can be indexed by a separate application (see our previous work[15], which provides name discovery functions and operates over NDN). Since name discovery in NDN is sufficient for data retrieval (an application can simply request the discovered name), no additional steps are necessary. Note that NDN only requires a hierarchical naming structure; how individual communities name their datasets (/biology/genome vs. /genome/biology) is up to them.[41]

A distributed catalog[15] that stores the content names is sufficient to provide efficient name discovery. Since an NDN-based catalog will only hold a community-specific set of names (not the actual data), the synchronization, update, and delete operations are lightweight.[42] The names in these catalogs can be added, updated, and deleted as necessary. We refer the reader to our previous work for the details of how such a catalog can be created and maintained in NDN.[15]
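A catalog that holds only names can be approximated, for illustration, by a set with component-wise prefix queries. The `NameCatalog` class below is a hypothetical single-node sketch and is not the distributed catalog of the cited work; it omits synchronization between catalog instances entirely.

```python
class NameCatalog:
    """Single-node stand-in for a name catalog: stores names only, never the data."""

    def __init__(self):
        self._names = set()

    def add(self, name):
        self._names.add(name)

    def delete(self, name):
        self._names.discard(name)

    def list_under(self, prefix):
        """Return all names equal to the prefix or below it, component-wise."""
        prefix = prefix.rstrip("/")
        return sorted(
            n for n in self._names
            if n == prefix or n.startswith(prefix + "/")
        )


catalog = NameCatalog()
catalog.add("/NCBI/Human/DNA/Genome/hg38")
catalog.add("/NCBI/Genome/Homo/Sapiens")
catalog.add("/Biology/Genome/Homo/Sapiens")

print(catalog.list_under("/NCBI"))
catalog.delete("/NCBI/Genome/Homo/Sapiens")
print(catalog.list_under("/NCBI"))
```

Any name returned by a query can be placed directly into an Interest, so discovery and retrieval share the same identifier.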

The fast and scalable data access problem

Currently, genomics data retrievals range from downloading a significant amount of data from a central data archive (e.g., NCBI) to downloading only the desired data that can be staged on local or cloud storage systems. For example, researchers often need to retrieve genome reference data on demand for comparison. Downloading large datasets over long-distance internet links can be slow and error-prone. Further, the current internet model does not work very well over long-distance links.[43] Even with extremely high-speed links, it is particularly difficult to utilize all the available bandwidth.

NDN-based solution

NDN provides access to data from “anywhere,” including storage nodes, in-network caches, and any entities that might have the data. This property allows scientists to reuse already downloaded datasets that are nearby (e.g., dataset downloaded by another scientist in the same lab). Additionally, in NDN data follows the content popularity, as it is cached in the in-network devices automatically. The more popular content is, the higher the likelihood it would be cached nearby. All data is digitally signed, ensuring provenance is preserved.

Getting content quickly from nearby locations makes it practical to download data when needed and delete it when the computation is finished. For example, the reference human genome has been downloaded by us and our students hundreds of times in the last two decades. Secure and verifiable data downloaded on demand will reduce the amount of storage needed.

The problem of minimizing transfer volume

The massive data volume needed by genomic workflows can easily saturate institutional networks. For example, the size of sequence data (in FASTQ format) or processed sequence alignments (in binary sequence alignment map or BAM format) for one experiment can easily aggregate into terabytes. If stored in online repositories, these data might be downloaded many times by researchers extending existing studies, leading to high bandwidth usage. One solution is to subset the data and download only the necessary portion. However, several challenges remain. For example, if multiple copies of the file exist, the network and application layers cannot take advantage of that to pull different subsets in parallel.

Moreover, depending on the size and type of the analysis being performed, subsetting of the data may not be appropriate. Currently, that means the scientist is required to download all datasets (or stage them at a remote site) before the computation can begin. Instead of downloading large amounts of data, pushing computation to the data might be much more lightweight.

For example, to determine if a scientific avenue (e.g., a large-scale experiment with millions of genomes) or dataset is worth pursuing, scientists often run smaller-scale experiments to look for early signs of interesting properties. A key issue is determining the smallest number of records required to produce the same scientific result as the full dataset, and we previously pointed to a simple saturation point as determined by transcript detection.[44] Once a saturation point has been reached, one could pause and examine the results. If there is an interesting signal, then there is nothing preventing the user from processing more sequence records. If there is no signal, one could drop the experiment and move on to other datasets. However, this method currently requires downloading the full experimental datasets and running computations against them.

NDN-based solution

NDN supports subsetting the data at the source, and transferring only the necessary portions reduces bandwidth consumption. This is already possible through BAM file slicing to select data specific to genomic regions. With NDN, the request can carry the required subsetting parameters and allow the user or application to download only the part of the data required for computation. NDN can also parallelize subsetting when multiple slices are needed and the data is replicated over multiple repositories. When subsetting is not appropriate, NDN is able to push computation to data by appending the computation to the Interest name (or adding it as the payload of the Interest). The result comes back to the requester under the same name and is also cached for future use, reducing bandwidth usage. Furthermore, in some genomics workflows, caching of computation results can reduce the load on compute resources and servers (such as those hosted at NCBI or on cloud platforms).
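Carrying subsetting parameters in the request name, and caching the result under that same name, can be sketched as follows. The name layout (`/subset/start=…/end=…`), the `Producer` class, and the string slicing that stands in for BAM region slicing are all assumptions made for illustration, not a convention defined by NDN or by this work.

```python
def build_subset_name(dataset, start, end):
    """Append hypothetical subsetting parameters to a dataset name."""
    return f"{dataset}/subset/start={start}/end={end}"


def parse_subset_name(name):
    """Split a subset Interest name back into (dataset, start, end)."""
    dataset, _, params = name.partition("/subset/")
    fields = dict(part.split("=", 1) for part in params.split("/"))
    return dataset, int(fields["start"]), int(fields["end"])


class Producer:
    """Toy data source that answers subset Interests and caches results by name."""

    def __init__(self, datasets):
        self.datasets = datasets
        self.result_cache = {}
        self.computations = 0

    def on_interest(self, name):
        if name not in self.result_cache:
            dataset, start, end = parse_subset_name(name)
            self.computations += 1  # count how often the slice is actually computed
            self.result_cache[name] = self.datasets[dataset][start:end]
        # The result is returned under the same name as the request.
        return name, self.result_cache[name]


producer = Producer({"/NCBI/Human/DNA/Genome/hg38": "ACGTACGTTTGACCAT"})
interest = build_subset_name("/NCBI/Human/DNA/Genome/hg38", 4, 12)

print(producer.on_interest(interest))
print(producer.on_interest(interest))  # served from the cached result
print(producer.computations)
```

Because the parameters are part of the name, two requests for the same slice are indistinguishable to the network and can share one cached answer.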

The secure collaboration problem

Genomics data, especially unpublished or identifiable human data, can be very sensitive. Scientists often need to secure data due to privacy requirements, non-disclosure agreements, or legal restrictions (e.g., HIPAA). Without a security framework, securing data and enforcing permissions becomes difficult. Suitable data access methods with proper access control are therefore required to meet privacy and legal requirements. At the same time, scientific collaborations often need to share data between groups without violating security restrictions. Suitable frameworks must exist for utilizing open-source sequenced data for research, albeit with appropriately restricted access. The lack of an infrastructure that allows secure access to a large number of sequenced human genomes prevents population genetics researchers from identifying rare mutations or testing hypotheses on analogous experiments, which can lead to medical advancement. Encryption and data security models, along with proper access control, are essential, as breaches of protected data can lead to massive fines, forcing institutions to severely limit the scope of allowable controlled data access on local cyberinfrastructure.

NDN-based solution

To support secure data sharing among collaborators, all data in NDN is digitally signed, providing data provenance. When privacy is needed, NDN allows encryption of content, facilitating secure collaborations. Furthermore, the verification of trust and associated operations (such as decryption) can be automated in NDN; this is called schematized trust.[45] One example of schematized trust might be the following: a scientist attempting to decrypt a Data packet whose name starts with “/NCBI” must also present a key that is signed by “/NCBI” and whose name begins with “/NCBI/scientistA”. More complex, name-based trust schemes are also possible.
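The name-matching part of the example rule above can be sketched in Python. This sketch checks names only and performs no cryptography; the rule dictionary, the function names, and the key name layouts (such as `/NCBI/scientistA/KEY/1`) are hypothetical, and a real deployment would also verify the signatures themselves.

```python
def is_under(name, prefix):
    """Component-wise prefix test: '/NCBI' covers '/NCBI/x' but not '/NCBIx'."""
    name_parts = [c for c in name.split("/") if c]
    prefix_parts = [c for c in prefix.split("/") if c]
    return name_parts[:len(prefix_parts)] == prefix_parts


def may_decrypt(data_name, key_name, key_signer, rule):
    """Grant access only when the data, key, and key signer names all fit the rule."""
    return (
        is_under(data_name, rule["data_prefix"])
        and is_under(key_name, rule["key_prefix"])
        and is_under(key_signer, rule["signer_prefix"])
    )


# Rule from the text: data under /NCBI, key named under /NCBI/scientistA,
# and that key signed by a key under /NCBI.
rule = {
    "data_prefix": "/NCBI",
    "key_prefix": "/NCBI/scientistA",
    "signer_prefix": "/NCBI",
}

data_name = "/NCBI/Human/DNA/Genome/hg38/1"
print(may_decrypt(data_name, "/NCBI/scientistA/KEY/1", "/NCBI/KEY/1", rule))
print(may_decrypt(data_name, "/NCBI/scientistB/KEY/1", "/NCBI/KEY/1", rule))
print(may_decrypt(data_name, "/NCBI/scientistA/KEY/1", "/Biology/KEY/1", rule))
```

Only the first request satisfies all three conditions; the other two fail on the key name and the signer name, respectively.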

This section discussed NDN properties that can address data management and cyberinfrastructure challenges faced by the genomics community. In the following section, we present a pilot study that uses a current genomics workflow that demonstrates some of these improvements in a real-world scenario.

Method

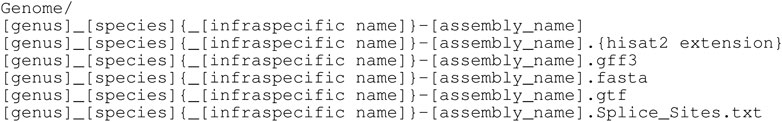

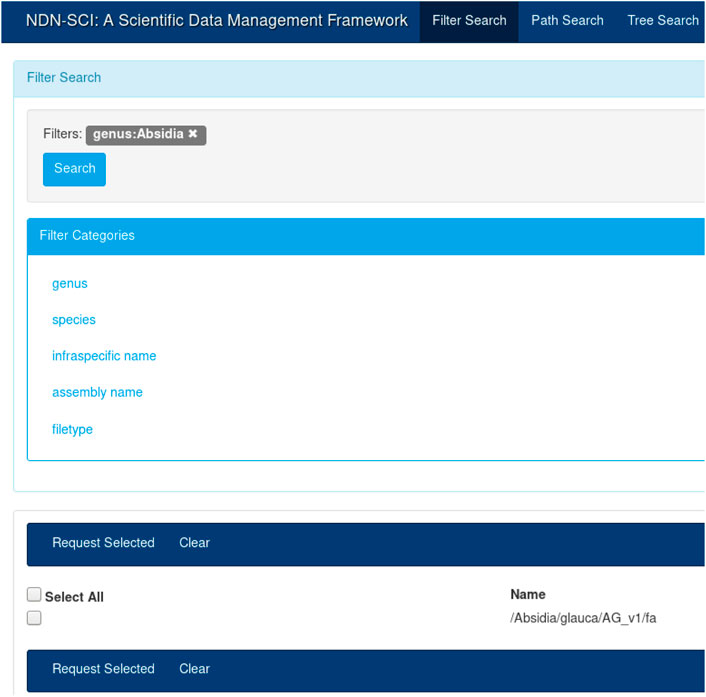

To demonstrate how NDN can benefit genomics workflows, we integrated NDN with a current genomics workflow (GEMmaker) and deployed our integrated solution over a real, distributed NDN testbed.[16] The experiment has multiple parts: 1) naming data in a way that is understood by NDN as well as acceptable to the genomics community (Figure 2); 2) publishing data into the testbed and making them discoverable to the users using a distributed catalog and a UI (Figure 3); 3) modifying GEMmaker to interact with the data published in the testbed; and 4) comparing the performance of the new integration to the existing workflow. The following sections describe these efforts in detail.

NDN testbed

For this work, we utilized a geographically distributed six-node testbed that was deployed at Colorado State University, ESnet, and the UCAR supercomputing center. The testbed had six high-performance nodes (each with 40 cores, 128 GB memory, and 50 TB storage) connected over ESnet[46] using 10 Gbps dedicated links. All nodes ran the latest version of Fedora, and the network stack was tuned for big-data transfers.[43] Specifically, we tuned the network interfaces to increase the buffer size and used large Ethernet frames (9000-byte jumbo frames). We also tuned the TCP stack according to the ESnet specification, including increasing read and write buffers and using the cubic and htcp congestion control algorithms. Finally, we tuned the UDP stack to increase read/write buffers and to specify CPU cores.[43]

Data naming and publication

NDN recommends globally unique, hierarchical, and semantically meaningful names. This is a natural fit for the genomics community since they have established taxonomies dating as far back as 1773.

Note that while NDN requires names to be globally unique, there is no need for a global convention, even within a particular community. For example, two names pointing to the same content, /Biology/Genome/Homo/Sapiens and /NCBI/Genome/Homo/Sapiens, are perfectly acceptable. Each community is free to name its own content as it sees fit. The uniqueness of these names comes from the first component of the name (the prefix), which are /Biology and /NCBI, respectively. We anticipate each entity (e.g., an organization such as NCBI, a university, a community genome project) will have its own namespaces, possibly procured from commercial name registrars, the same way DNS namespaces are obtained today.

In the genomics community, very commonly used datasets can even be assigned their own namespaces. For example, a globally unique namespace (e.g., /human/genome) may be reserved for human genomes for convenience. However, that special namespace does not preclude an organization from publishing the same genomes from another namespace (e.g., /NCBI/human/genome). While NDN operates on names, it does not interpret the semantic meaning of the name components at the network layer. For example, NCBI announces the /NCBI prefix into the network that the NDN routers store. When an Interest named /NCBI/Genome/Homo/Sapiens arrives at the router, the router performs a longest prefix match on the name and matches the interface corresponding to /NCBI. This way, the network is able to forward Interests and data but does not need to interpret the individual components.
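The forwarding step described above can be illustrated with a small longest-prefix-match table. This is a simplified sketch rather than the NFD implementation; the `Fib` class and the face labels are hypothetical names chosen for the example.

```python
def split_name(name):
    """Turn "/NCBI/Genome" into the tuple ("NCBI", "Genome")."""
    return tuple(c for c in name.split("/") if c)


class Fib:
    """Toy forwarding table that maps announced prefixes to outgoing faces."""

    def __init__(self):
        self._routes = {}  # prefix components -> face label

    def announce(self, prefix, face):
        """Record that `prefix` is reachable through `face`."""
        self._routes[split_name(prefix)] = face

    def lookup(self, interest_name):
        """Return the face for the longest announced prefix, or None."""
        parts = split_name(interest_name)
        # Try the full name first, then drop one trailing component at a time.
        for length in range(len(parts), 0, -1):
            face = self._routes.get(parts[:length])
            if face is not None:
                return face
        return None


fib = Fib()
fib.announce("/NCBI", "face-to-ncbi")
print(fib.lookup("/NCBI/Genome/Homo/Sapiens"))  # face-to-ncbi
```

The lookup compares components only for equality, which mirrors the point made in the text: the router never needs to know what "Genome" or "Homo" means.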

It is true that a name component (e.g., “hg38”) might have different meanings in different communities. It is the job of the application layer to interpret and use these components as it sees fit. In our previous work, we built a catalog (an application) that mapped individual components to their semantic meaning (see Figure 3). Different communities will build different applications on top of NDN to understand the semantic meaning of a name.

The other problem is name assignment and reconciliation. In today’s internet, ICANN[47] and the domain registrars play a significant role in assigning, maintaining, and reconciling DNS namespaces (e.g., assigning the google.com namespace to Google). In NDN, these organizations will continue to control and assign namespaces that are used over the internet.

In NDN, the data producer is responsible for publishing content under a name prefix (/biology or /NCBI). NCBI will acquire such namespaces from a name registrar. At that point, only NCBI is allowed to announce the /NCBI prefix into the internet and publish content under that name prefix. The onus of updating namespaces is on the data publisher and the name registrars. Note that no such NDN name registrar exists today, but we expect similar organizations to exist in the future.

When a user looks up a content name (e.g., /NCBI/Genome/Homo/Sapiens) in a catalog and expresses an Interest, the Interest is forwarded by the network and eventually reaches the NCBI server that announced /NCBI. Namespace reconciliation is not necessary for the network to function properly. Let’s imagine NCBI is authorized to publish datasets under two namespaces—/Biology and /NCBI—and it publishes the human genome under “/Biology/Genome/Homo/Sapiens” and “/NCBI/Genome/Homo/Sapiens”. A user can utilize both names to retrieve content—the network does not need to interpret the meanings of the names—and the interpretation of name and content is up to the applications (and users) requesting and serving the datasets.

As part of the NSF SciDAS project[48], we store large amounts of whole genome reference and auxiliary data in a distributed data grid (iRODS). These whole genome references were initially retrieved from the Ensembl database[49] using the Python-based Pynome[50] package and consist of hundreds of Eukaryotic species. Pynome processes these data to provide indexed files which are not available on Ensembl and which are needed for common genomic applications performed by researchers around the world. These data are organized in an evolution-based, hierarchical manner which is an excellent naming convention for an NDN framework.

For this study, we used an NDN name translator that we created as part of our previous work[51] to translate these existing names into NDN-compatible names. Once translated, the content names became the unique reference for these datasets. Figure 2 shows the reference genome DNA sequence names created from the existing hierarchical naming convention. For example, one such name would look like “/Absidia/glauca/AG_v1/fa”. Some of these names may or may not contain certain components (for example, the infraspecific name in the example above). We then translated and imported these names into our NDN data management framework.[8] All subsequent operations such as discovery, retrieval, and integration with workflows used these names.
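As a rough illustration of this kind of translation, the sketch below assembles an NDN-style name from taxonomy fields and skips any component that is missing. It is not the translator used in the study: the function name, the field order, and the placement of the optional infraspecific name are assumptions made for the example.

```python
from urllib.parse import quote


def to_ndn_name(genus, species, assembly, file_type, infraspecific_name=None):
    """Build a hierarchical name from taxonomy fields (hypothetical layout).

    Missing or empty components are dropped, so names with and without an
    infraspecific name share the same overall structure.
    """
    parts = [genus, species, infraspecific_name, assembly, file_type]
    # Percent-encode anything outside the unreserved URI characters so that a
    # "/" or a space inside one field cannot create extra name components.
    encoded = [quote(p.strip(), safe="") for p in parts if p and p.strip()]
    return "/" + "/".join(encoded)


print(to_ndn_name("Absidia", "glauca", "AG_v1", "fa"))  # /Absidia/glauca/AG_v1/fa
```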

After naming, we published these datasets under the derived names on the NDN testbed servers. In this pilot test, we used three nodes to publish the data. Each of these servers published the same data under the same name, replicating the content. Depending on the client location, requests were routed to the closest replica. If one replica went down, nothing needed to change on the client’s end; the NDN network routed the request to a different replica. We then used this testbed setup in conjunction with GEMmaker to test NDN’s usefulness. We discuss the results in the evaluation section.
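The replica behavior described above can be approximated by a function that picks the lowest-cost reachable replica. This is only a sketch of the idea; in NDN the choice is made by the forwarding strategy inside the network, and the node labels and cost values below are hypothetical.

```python
def choose_replica(replica_costs, is_reachable):
    """Return the reachable replica with the lowest cost, or None.

    replica_costs: dict mapping a replica label to a cost (e.g., RTT in ms).
    is_reachable:  callable that reports whether a replica currently responds.
    """
    reachable = {name: cost for name, cost in replica_costs.items()
                 if is_reachable(name)}
    if not reachable:
        return None
    return min(reachable, key=reachable.get)


costs = {"node-a": 10, "node-b": 25, "node-c": 40}  # hypothetical values
print(choose_replica(costs, lambda name: True))              # node-a
print(choose_replica(costs, lambda name: name != "node-a"))  # node-b
```

The client-side request is identical in both calls; only the set of reachable replicas changes.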

The UI in Figure 3 provides an intuitive way to search for names that were published on the testbed. A user can create a query by selecting different components from the left-hand menu (e.g., by selecting “Absidia” under genus). The user can also start typing the full name, and the UI will provide a set of autocompleted names. Finally, the user can choose to view the entire name tree using the tree view. The catalog and the UI are necessary for dataset discovery; while NDN operates on names, it is faster and more efficient to discover names using a catalog. Once discovered, the names can be used for all subsequent operations, such as the retrieval discussed before. The API also provides a command-line interface (CLI) for name discovery, allowing users to integrate name discovery (and subsequent operations) with domain workflows.

Since NDN operates on names, data naming in NDN affects application and network behaviors. The way a piece of content is named has profound impacts on content discovery, routing of user requests, data retrieval, and security. For brevity, we do not discuss those naming trade-offs here but point the reader to our recent work on content naming in NDN.[41]

Integration with GEMmaker

GEMmaker[19] is a workflow that takes as input a number of large RNA sequence (RNA-seq) data files and a whole genome reference file to quantify gene expression levels underlying a specific set of experimental parameters. It produces a Gene Expression Matrix (GEM), which is a data structure that can be used to compare gene expression across the input RNA-seq samples. GEMs are commonly used in genomics and have been instrumental in several studies.[52][53][54][55] GEMmaker consists of five major steps:

- 1. Read RNA-seq data files as input.

- 2. Trim low-quality portions of the sequences.

- 3. Map sequences to a reference genome.

- 4. Count levels of gene expression in each RNA-seq sample.

- 5. Merge the gene expression values of each sample into a Gene Expression Matrix.

GEMmaker is managed by the Nextflow[56][57] workflow manager, which automates the execution of each GEMmaker step; the steps are defined as Nextflow processes. Nextflow has a variety of executors that enable the deployment of processes to a number of HPC and cloud environments, including Kubernetes (K8s).

Without NDN, the typical process a user follows to execute GEMmaker in a K8s environment is as follows. First, the user moves the whole genome reference files onto a Persistent Volume Claim (PVC), a K8s artifact that provides persistent storage to users. Nextflow is able to mount and unmount GEMmaker pods directly to this PVC, which stores all input, intermediate, and output data. When the user executes GEMmaker, Nextflow deploys pods that automatically retrieve the RNA-seq data directly from NCBI using Aspera or FTP services. One pod is submitted for each RNA sample in parallel, so ideally all RNA-seq data is downloaded simultaneously. This represents the bulk of data movement in GEMmaker. Once each sample of RNA-seq data is downloaded to the PVC, new pods are deployed to execute the next step of the workflow for each sample. Each step in GEMmaker produces a new intermediate file used as input for the next step, all of which is written to the PVC. Once GEMmaker completes, the resulting GEM can be downloaded from the PVC by the user.

Currently, pulling data from host-specific services is built into the GEMmaker workflow. For example, the NCBI SRA-toolkit can pull data from SRA but not all genome data repositories. With NDN, the process can be abstracted from the workflow logic as data is preloaded into the NDN testbed from any host, downloaded by name at step 1, reference data is pulled by name at step 3, and data is created and uploaded with a new name at step 5. In contrast to the typical execution of GEMmaker, with NDN, users need not retrieve the whole genome reference or the final GEM, and retrieval of RNA-seq data can occur independent of Aspera or FTP protocols, supporting a greater variety of data repositories or even locally created data (so long as a local publisher makes it available). Users need only provide the NDN names for moving data and accessing cloud APIs.

For GEMmaker integration, we created an NDN-capable program that pulled data from the NDN testbed using the SRA ID. We modified GEMmaker to replace the existing RNA-seq data retrieval step with this NDN retrieval program and to retrieve the whole genome references. These whole genome references are the same ones described previously, which were generated by Pynome and cataloged in the testbed. For this small-scale testing, we added the NDN routes to the testbed machines manually. However, the NDN community provides multiple routing protocols[58] that can automate the routing updates.

Results

Performance evaluation

In order to understand how caching affects data distribution, we moved datasets of different sizes between the NDN testbed and Clemson University. The SRA sequences were published into the testbed, and the workflow was then modified to download data over NDN. An NDN-based download only needs to know the name of the content. The rest of the infrastructure is opaque to the user.

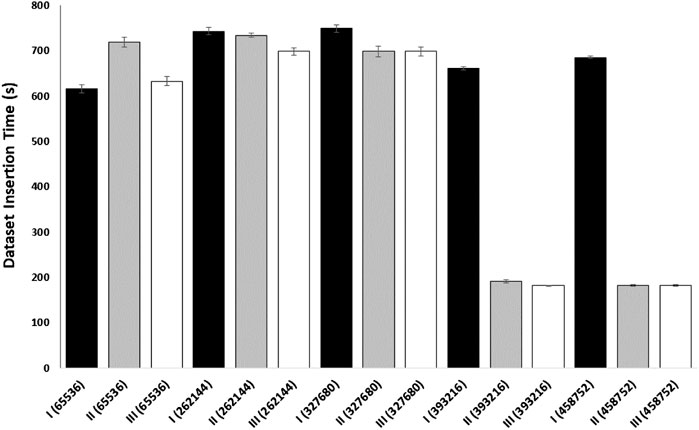

For these experiments, the standard NDN tools[22] for publication and retrieval were used. Once the files were downloaded, they were utilized in the workflow. For the first experiment, we copied different-sized files using existing IP-based tools (wget). For comparison, we then utilized standard NDN-based tools (ndncatchunks and ndnputchunks) for the same files, first with in-network caching disabled and then with caching enabled. Each transfer experiment was repeated three times.

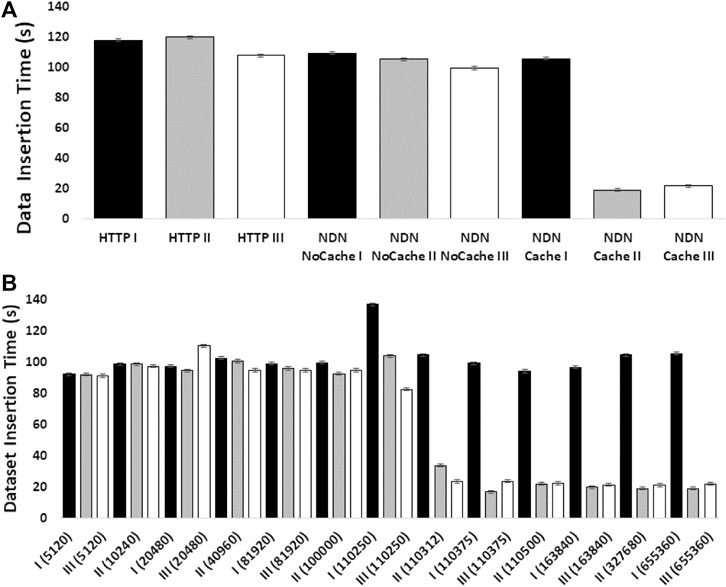

The experiments showed how NDN can improve content retrieval using in-network caching. Figure 4A shows a comparison of data download speed between NDN and HTTP. The first three bars represent three sequential downloads of a set of three SRA datasets from the NCBI repository using HTTP. The next three bars show three sequential downloads using NDN retrieval from the NDN testbed without caching. Since we manually staged the data, we knew that the data source was approximately 1,500 miles away from the requester. Even then, the download performance was comparable with the HTTP performance. The real performance gain came from caching the datasets, as seen in the last three bars: the first transfer took about as long as HTTP and NDN without caching (two minutes), while the next two transfers took only around 20 seconds once caching kicked in. These results point toward a massive improvement opportunity, since many genomics workflows download hundreds or even thousands of SRAs for a given experiment.

Figure 4B shows how much in-network cache was needed to accomplish speedup using in-network caching and how the caching capacity affects the speedup. The x-axis of this figure shows the cache size in the number of NDN data packets (by default, each packet is 4,400 bytes). We find that at around 500 MB cache size, we start to see speed improvements.
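To relate the two units used above (cache size in packets and cache size in bytes), the arithmetic can be written out as follows. This is a back-of-the-envelope sketch that assumes decimal megabytes and treats the quoted 4,400 bytes as the full per-packet footprint, ignoring any additional bookkeeping overhead in the forwarder.

```python
PACKET_BYTES = 4400  # default packet size quoted in the text


def packets_that_fit(cache_bytes, packet_bytes=PACKET_BYTES):
    """Number of whole packets that a cache of `cache_bytes` can hold."""
    return cache_bytes // packet_bytes


def packets_needed(object_bytes, packet_bytes=PACKET_BYTES):
    """Number of packets needed to carry an object of `object_bytes`."""
    return -(-object_bytes // packet_bytes)  # integer ceiling division


# A 500 MB cache (500 * 10**6 bytes) corresponds to roughly 113,636 packets.
print(packets_that_fit(500 * 10**6))  # 113636
```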

We performed an additional experiment to better evaluate the effect of caching on data transfer in a cloud environment (Figure 5). In this caching experiment, gateway and endpoint containers were employed to determine the time it takes to download an SRA sequence dataset. The gateway container used NDN to pull the SRA sequence from a remote NDN repository and create the network cache. The endpoint container (with a cache size of 0) acted as the consumer and created a face to the gateway, which it used to pull the data. The gateway and the endpoint were run on separate nodes to replicate real cloud scenarios. This experiment demonstrates how an NDN container can cache a dataset for use by any endpoint on the same network and indicates that an insufficiently sized content store on the gateway will prevent network caching, resulting in slower download times. This provides a basic example of how popular genomic sequences could be cached for use by multiple researchers working on the same network in the cloud.
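The effect of an undersized content store can be reproduced with a toy simulation. The sketch below assumes a least-recently-used replacement policy and a dataset that is always requested segment by segment in order; the class, the function, and the `/example/...` names are hypothetical and do not model the forwarder used in the experiment.

```python
from collections import OrderedDict


class ContentStore:
    """Toy packet cache with least-recently-used eviction."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._items = OrderedDict()

    def fetch(self, name):
        """Return True on a cache hit; on a miss, cache the packet."""
        if name in self._items:
            self._items.move_to_end(name)  # mark as most recently used
            return True
        if self.capacity > 0:
            if len(self._items) >= self.capacity:
                self._items.popitem(last=False)  # evict least recently used
            self._items[name] = True
        return False


def second_pass_hits(capacity, n_packets):
    """Download a dataset twice and count cache hits on the second pass."""
    store = ContentStore(capacity)
    names = [f"/example/dataset/seg={i}" for i in range(n_packets)]
    for name in names:  # first download fills the cache
        store.fetch(name)
    return sum(store.fetch(name) for name in names)


print(second_pass_hits(100, 100))  # 100: the whole dataset fits
print(second_pass_hits(99, 100))   # 0: each segment is evicted before reuse
```

Under these assumptions, a store that is even one packet too small yields no hits on a repeated sequential download, which matches the qualitative observation that an insufficiently sized content store prevents caching.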

The actual cache sizes in the real world would depend on request patterns as well as file sizes; we are currently working on quantifying this. In any case, it is certainly feasible to utilize NDN’s caching ability for very popular datasets, such as the human reference genomes. Our previous work shows that in big-science communities, even a small amount of cache significantly speeds up delivery due to the temporal locality of requests.[17]

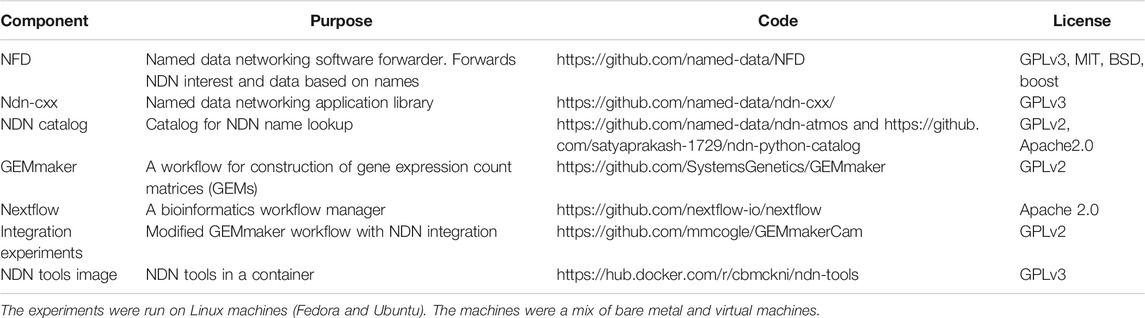

Codebase

All code used for these experiments is publicly available and distributed under open-source licenses (Table 1). NFD is the Named Data Networking forwarder, which works as a software router for Interest and Data packets. ndn-cxx is an NDN library that provides the necessary API for creating NDN-based “apps”. NDN catalog is an NDN-based name lookup system: an application can send an Interest to the catalog and receive the name of a dataset. The application can then utilize the name for all subsequent operations. Two versions of the catalog exist: ndn-atmos is written in C++, while ndn-python-catalog is implemented in Python.

Nextflow is a general-purpose workflow manager. GEMmaker is a genomics workflow adapted for Nextflow (see the “Integration with GEMmaker” section above for more details). For these experiments, we modified GEMmaker to request data over NDN instead of standard mechanisms (such as HTTP). This modified workflow is available under this repository.

Discussion and future directions

Discussion

This work demonstrates the preliminary integration of NDN with genomics workflows. While the work shows the promise of NDN toward a simplified but capable cyberinfrastructure, several other aspects remain to be addressed before NDN can completely integrate with genomics workflows and cloud computing platforms. In this section we discuss the technical challenges as well as the economic considerations that are yet to be addressed.

Economic considerations

NDN is a new internet architecture that operates differently than the current internet. Consequently, users and network operators need to consider the economic cost of moving to an NDN paradigm. This section outlines some of the economic considerations. There are two primary costs of moving to an NDN paradigm: the cost of upgrading current network equipment and the cost of storage if caching is desired.

At the time of writing, NDN routers are predominantly software-based. To utilize these software-based routers, the researcher needs to install NFD (an NDN packet forwarder) and NDN libraries on a machine (a server or even a laptop). All experiments in this paper were done on commodity hardware and did not require any additional capital investment. NDN is able to run as an overlay on top of the existing IP infrastructure or on Layer 2 circuits; in this work, we utilized NDN as an overlay on existing internet connectivity.

Any commodity hardware (server or desktop) with storage (the amount depends on the workflow requirements) and a few GB of memory is capable of supporting NDN. Given the current low cost of storage (an 8 TB hard drive costs around $150), even the cost of moving to a dedicated server is low. However, workflows with large storage requirements will need some capital investment. To minimize this cost and provide an alternative, we are working on two storage access mechanisms. First, when installing new storage is feasible (e.g., the cost of storage continues to fall, and a petabyte of storage costs around $30,000 at the time of writing this paper), it might be convenient for large research organizations to install dedicated storage that holds and serves NDN data packets; this approach improves NDN’s performance since objects are already packetized and signed by the data producers. The second approach is interfacing NDN with existing storage repositories such as HTTP and FTP servers. As NDN Interests come in, they are translated into appropriate system calls (e.g., POSIX, HTTP, or FTP), and the NDN Data packets are created on demand. This approach is slower than the first approach but does not require any additional hardware or storage, reducing the deployment cost.
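For the second approach, the translation step might look like the sketch below, which maps an Interest name under a served prefix onto a URL on an existing HTTP server. This is an assumption-laden illustration, not the authors' gateway: the function name, the `/example-repo` prefix, and the `repo.example.org` host are placeholders, and name components are assumed to be plain (not already percent-encoded) strings.

```python
from urllib.parse import quote


def interest_to_url(interest_name, served_prefix, base_url):
    """Map an Interest name under `served_prefix` to a URL below `base_url`."""
    name = [c for c in interest_name.split("/") if c]
    prefix = [c for c in served_prefix.split("/") if c]
    if name[:len(prefix)] != prefix:
        raise ValueError(f"{interest_name} is not under {served_prefix}")
    # The components after the prefix become the path on the HTTP server.
    path = "".join("/" + quote(c, safe="") for c in name[len(prefix):])
    return base_url.rstrip("/") + path


print(interest_to_url("/example-repo/Absidia/glauca/AG_v1/fa",
                      "/example-repo",
                      "https://repo.example.org/data"))
# https://repo.example.org/data/Absidia/glauca/AG_v1/fa
```

A gateway built this way would then fetch the URL, split the response into Data packets, and sign them on demand, which is the extra work that makes this approach slower than serving pre-packetized storage.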

The benefits of NDN (caching, etc.) become apparent when more users utilize an NDN-based network. As the networking community moves toward testbeds and deployments such as the NSF-funded FABRIC[59] that incorporate NDN into their core design, the research labs and institutes connected to these networks will be able to take advantage of those infrastructures. Additionally, connecting a software NDN router to these networks and testbeds is often free (assuming an institute is already paying for internet access). These networks will create and deploy large-scale in-network caches near the users and in the internet core as users continue to request data. As users exchange data over these networks, data will be automatically cached and served from in-network caches. In the future, when ISPs deploy NDN routers, researchers will be able to take advantage of in-network caching without added cost. However, we expect large-scale ISP deployment of NDN to take a few more years.

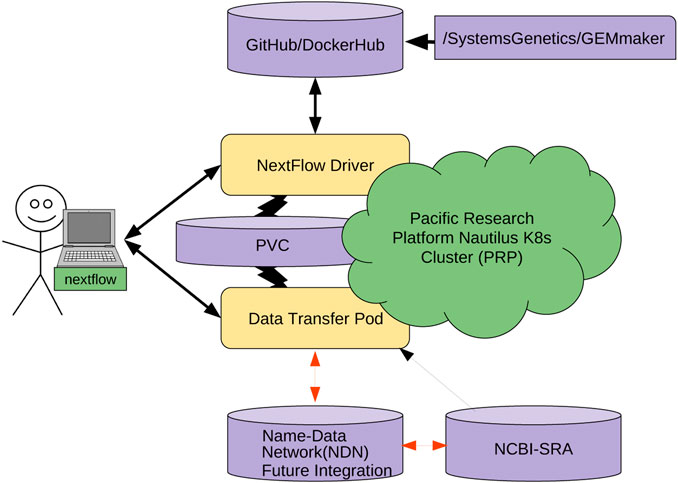

The other cost is the cost of learning NDN and integrating it with existing workflows. This is not trivial; NDN requires careful naming considerations, aligning workflows with a name-based network, and data publication. To make this process straightforward (Figure 6), we are working on containerizing several individual pieces. We hope that containerizing NDN, data transfer pods, and other components will allow researchers to simply mix and match different containers and integrate them with workflows without resorting to complex configuration and integration efforts. Having discussed the economic considerations, we now discuss the technical challenges that remain.

Future directions

Software performance

While our work shows some attractive properties of NDN for the genomics community, NDN has well-known shortcomings. For example, the forwarder we used (NFD) is single-threaded and therefore has low throughput. This has recently been addressed by a new forwarder (NDN-DPDK) that can perform forwarding at 100 Gbps. We are currently working on integrating the genomics workflow with NDN-DPDK. We hope to demonstrate further improvements as the protocol and software stack continue to mature.

Accessing distributed data over NDN

Genomics data generation is highly distributed as data is generated in academic institutions, research labs, and industry. While the HTDS datasets are eventually converted into FASTQ format[60], the storage and downstream analysis data formats are highly heterogeneous, with different naming systems, different storage techniques, and inconsistent and non-standard use of metadata. Seamlessly accessing these diverse datasets is an enormous challenge. Additionally, multiple types of repositories exist with different scopes: national-level repositories (e.g., NCBI, EBI, and DDBJ) with large-scale sequencing data for many organisms, community-supported repositories focused on a species or small clade of organisms, and single-investigator web sites containing ad hoc datasets. Storing these datasets in different repositories that are hosted under various domain names is unlikely to scale very well for two primary reasons. First, different hosting entities utilize different schemes for data access APIs (e.g., URLs), making it necessary to understand and parse various naming schemes. Second, it is hard to find and catalog each institutional repository.

NDN provides a scalable approach to publish and retrieve immutable content by name in a location-agnostic fashion. NDN uses the content names for routing and request forwarding, ensuring all publicly available data can be accessed directly without the need for frequent housekeeping. For example, moving a repository under a new domain name currently requires a large amount of housekeeping, such as renaming the data under a new URL or linking new and old data. With NDN, the physical location of the data has no bearing on how it is named or distributed. When data is replicated, NDN brings the requests to the “nearest” data replica. “Nearest” in NDN can be defined as the physically nearest replica, the most performant replica, or a combination of these (and other) factors.

However, several unexplored challenges exist in applying NDN to distributed data. First, NDN operates on names. Finding these names requires the service of third-party software or a name provider. A catalog that enumerates all names under a namespace (as we discussed before) can provide this service. However, it is not yet obvious who would be responsible for running and updating the authoritative versions of these catalogs. When data is replicated, we also need to address the issue of data consistency across multiple repositories. This is still an active research direction that requires considerable attention.

Distributed repositories over NDN

A single centralized repository can become a bottleneck when subjected to a large number of queries or download requests. Moreover, it becomes a single point of failure and introduces increased latency for distant clients. NDN makes it easier to create distributed repositories since they no longer need to be tracked by their IP addresses. For example, in this work, we created a federation of three geographically distributed repositories. These repositories had to announce the prefix they intended to serve (e.g., “/genome”). Even when one or more repositories go down or become unreachable, no additional maintenance is necessary; NDN routes the request to the available repositories as long as at least one repository is reachable. Similarly, when new repositories are added, the process is completely transparent to the user and does not require any action from the network administrator. However, several aspects remain to be addressed: how to allow data producers to publish long-term data efficiently, how to replicate datasets across repositories (partially or completely), and how to retrieve content most efficiently.

Publication of more genomics datasets and metadata into the NDN testbed

There are currently hundreds of reference genomes in the NDN testbed, and we are working on updating Pynome to include more current genome builds, genomes from services other than Ensembl, and support for popular sequence aligner programs (e.g., STAR, Salmon, Kallisto, and Hisat2). We are also working on loading the metadata and SRA RNA-seq files at scale into the NDN testbed. By studying the usage logs of our integration, we will better understand the benefit NDN brings to genomics workflows. Further, the convergence of searchable metadata from multiple data repositories published in the same NDN testbed will allow for a common search and access point for genomic data.

Integration with Docker

For further simplification of workflows, we have created a Docker container with NDN tools, forwarder, and application libraries built in. The resulting container is fairly lightweight. We plan to publish the image to a public repository where scientists can download and utilize the Docker build “as-is”. These images can be deployed to a variety of cloud platforms without modifications, further simplifying NDN access for genomics workflows.

GEMmaker is able to run Nextflow processes inside specified containers. By adding a container that is configured with NDN to the GEMmaker directory, scripts can run inside the NDN container during a normal GEMmaker workflow. The GEMmaker workflow can then use the ndn-tools to download the SRA sequences from either NDN-only or NDN-interfaced existing repositories. This method also provides the opportunity for decreased data retrieval time due to NDN in-network caching and allows GEMmaker to benefit from all the NDN features we described earlier.

Integration with Kubernetes

The genomics community is moving toward a cloud-based model. Container orchestration platforms (such as Kubernetes) are increasingly being used in place of traditional HPC clusters. We believe that enabling users to easily move data from an NDN network to a Kubernetes cluster is imperative for the widespread adoption of this use case.

To achieve this goal, we are engineering the integration of NDN with a Data Transfer Pod (DTP) that runs as a deployment of different containers that enable users to read and write data to a Kubernetes cluster. A DTP is a configurable collection of containers, each representing a different data transfer protocol/interface. A DTP uses the officially maintained images of each protocol/interface, removing the need for integration, aside from adding the container to the DTP deployment, which is an almost identical process for each protocol/interface. This makes adding new protocols very simple, as there is no need to build a custom image for each protocol/interface, as long as an image already exists. Almost all interfaces/protocols used by the community have officially maintained images associated with them.

The DTP tool will allow Kubernetes users to easily access data stored on NDN-based repositories. Each DTP container provides the client with a mechanism to utilize a different data transfer method (e.g., NDN, Aspera, Globus, S3). Using a DTP aims to address the need for simple access to data from a variety of sources. A DTP is not coupled with any particular workflow, so users will be able to pull or push NDN data as a pre- or post-processing step of their workflow, without modifying the workflow itself. The DTP can also be used to pull data from other sources if it is not present in an NDN framework.

We are modifying the existing GEMmaker workflow to use NDN via a DTP that queries the NDN framework for the required reference genome files and SRA datasets. If the SRA dataset file exists in the NDN framework, the DTP will pull the data onto a Kubernetes persistent volume claim (PVC). For example, we are adapting NDN to work with the Pacific Research Platform (PRP)[61] Nautilus Kubernetes cluster (Figure 6). If the user knows the content name (or metadata) but the content does not exist in NDN format, the DTP will pull the dataset from SRA with Aspera, publish it in the NDN testbed, and then pull it into PRP. Once published, the dataset will exist in the NDN testbed and benefit from NDN attributes, including caching. Once the DTP completes its job, the GEMmaker workflow will function in the same way it does now, so no new code needs to be written. We are also developing a cache retention policy to allow the SRA files to evaporate if they are not accessed after a certain period of time.
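The decision logic described above can be sketched as a small staging function. This is an illustration of the control flow only: the function and parameter names are hypothetical, and the four callables stand in for whatever NDN, Aspera, and PVC operations a real DTP would perform.

```python
def stage_dataset(name, exists_in_ndn, fetch_from_sra, publish_to_ndn,
                  pull_over_ndn):
    """Make `name` available on the cluster and return the steps taken.

    exists_in_ndn(name)      -> bool, whether the NDN framework has the data
    fetch_from_sra(name)     -> payload retrieved from SRA (e.g., via Aspera)
    publish_to_ndn(name, p)  -> publishes the payload under `name`
    pull_over_ndn(name)      -> copies the named data onto the PVC
    """
    steps = []
    if not exists_in_ndn(name):
        # Fallback path: import the dataset into NDN before retrieving it.
        payload = fetch_from_sra(name)
        publish_to_ndn(name, payload)
        steps += ["fetch_from_sra", "publish_to_ndn"]
    # Common path: the workflow always reads the data by name over NDN.
    pull_over_ndn(name)
    steps.append("pull_over_ndn")
    return steps
```

With this structure, the first request for a dataset pays the import cost, while later requests for the same name take only the NDN path and can benefit from caching.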

Limitations

The NDN prototype we used (NFD) and other components we used (catalogs and repo) are research prototypes. The performance and scalability of these prototypes are being improved by the NDN community. Additionally, utilization of NDN containers on PRP has not been explored before; we are working on optimizing both the containers and their interactions with the cloud platforms. We are also working on better understanding the caching and storage needs of the genomics community by looking at real-world request patterns and object sizes associated with them.

Conclusion

In this paper, we enumerate the cyberinfrastructure challenges faced by the genomics community. We discuss NDN, a novel but well-researched future internet architecture that can address these challenges at the network layer. We present our efforts in integrating NDN with a genomics workflow, GEMmaker. We describe the creation of an NDN-compliant naming scheme that is also acceptable to the genomics community. We find that genomics names are already hierarchical and easily translated into NDN names. We publish the actual datasets into an NDN testbed and show that NDN can serve data from anywhere, simplifying data management. Finally, through the integration with GEMmaker, we show NDN’s in-network caching can speed up data retrieval and insertion into the workflow by six times.

Acknowledgements

Author contributions

FF, SS, and SF are the PIs who designed the study, prepared and edited the manuscript, and provided guidance to the students and programmers. CO, DR, CM, TB, and RP are the students and programmers who performed the GEMmaker integration, deployed the code and prototype on the Pacific Research Platform, and evaluated it. They also helped write the manuscript.

Data availability statement

The datasets presented in this study can be found in online repositories. The names of the repository/repositories and accession number(s) can be found in the article.

Funding

This work was supported by National Science Foundation Award #1659300 “CC*Data: National Cyberinfrastructure for Scientific Data Analysis at Scale (SciDAS),” National Science Foundation Award #2019012 “CC* Integration-Large: N-DISE: NDN for Data Intensive Science Experiments,” National Science Foundation Award #2019163 “CC* Integration-Small: Error Free File Transfer for Big Science,” and a Tennessee Tech Faculty Research Grant.

Conflict of interest

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

References

- ↑ Cinquini, L.; Crichton, D.; Mattmann, C. et al. (2014). "The Earth System Grid Federation: An open infrastructure for access to distributed geospatial data". Future Generation Computer Systems 36: 400–17. doi:10.1016/j.future.2013.07.002.

- ↑ ATLAS Collaboration; Aad, G.; Abat, E. et al. (2008). "The ATLAS Experiment at the CERN Large Hadron Collider". Journal of Instrumentation 3: S08003. doi:10.1088/1748-0221/3/08/S08003.

- ↑ Dewdney, P.E.; Hall, P.J.; Schilizzi, R.T. et al. (2009). "The Square Kilometre Array". Proceedings of the IEEE 97 (8): 1482–96. doi:10.1109/JPROC.2009.2021005.

- ↑ LSST Dark Energy Science Collaboration; Abate, A.; Aldering, G. et al. (2012). "Large Synoptic Survey Telescope Dark Energy Science Collaboration". arXiv: 1–133. https://arxiv.org/abs/1211.0310v1.

- ↑ 5.0 5.1 Sayers, E.W.; Beck, J.; Brister, J.R. et al. (2020). "Database resources of the National Center for Biotechnology Information". Nucleic Acids Research 48 (D1): D9–D16. doi:10.1093/nar/gkz899. PMC PMC6943063. PMID 31602479. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6943063.

- ↑ Tsuchiya, S.; Sakamoto, Y.; Tsuchimoto, Y. et al. (2012). "Big Data Processing in Cloud Environments" (PDF). Fujitsu Scientific & Technical Journal 48 (2): 159–68. https://www.fujitsu.com/downloads/MAG/vol48-2/paper09.pdf.

- ↑ Luo, J.; Wu, M.; Gopukumar, D. et al. (2016). "Big Data Application in Biomedical Research and Health Care: A Literature Review". Biomedical Informatics Insights 8: 1–10. doi:10.4137/BII.S31559. PMC PMC4720168. PMID 26843812. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4720168.

- ↑ 8.0 8.1 8.2 Shannigrahi, S.; Fan, C.; Papadopoulos, C. et al. (2018). "NDN-SCI for managing large scale genomics data". Proceedings of the 5th ACM Conference on Information-Centric Networking: 204–05. doi:10.1145/3267955.3269022.

- ↑ McCombie, W.R.; McPherson, J.D.; Mardis, E.R. (2019). "Next-Generation Sequencing Technologies". Cold Spring Harbor Perspectives in Medicine 9 (11): a036798. doi:10.1101/cshperspect.a036798.

- ↑ National Human Genome Research Institute (2020). "The Cost of Sequencing a Human Genome". National Institutes of Health. https://www.genome.gov/about-genomics/fact-sheets/Sequencing-Human-Genome-cost. Retrieved 16 March 2020.

- ↑ Lowy-Gallego, E.; Fairley, S.; Zheng-Bradley, X. et al. (2019). "Variant calling on the GRCh38 assembly with the data from phase three of the 1000 Genomes Project". Wellcome Open Research 4: 50. doi:10.12688/wellcomeopenres.15126.2. PMC PMC7059836. PMID 32175479. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7059836.

- ↑ 12.0 12.1 NCBI (2020). "Sequence Read Archive". NCBI. https://trace.ncbi.nlm.nih.gov/Traces/sra/. Retrieved 04 February 2020.

- ↑ 13.0 13.1 Stephens, Z.D.; Lee, S.Y.; Faghri, F. et al. (2015). "Big Data: Astronomical or Genomical?". PLoS Biology 13 (7): e1002195. doi:10.1371/journal.pbio.1002195. PMC PMC4494865. PMID 26151137. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4494865.

- ↑ Olschanowsky, C.; Shannigrahi, S.; Papadopoulos, C. et al. (2014). "Supporting climate research using named data networking". Proceedings of the IEEE 20th International Workshop on Local & Metropolitan Area Networks: 1–6. doi:10.1109/LANMAN.2014.7028640.

- ↑ 15.0 15.1 15.2 15.3 Fan, C.; Shannigrahi, S.; DiBenedetto, S. et al. (2015). "Managing scientific data with named data networking". Proceedings of the Fifth International Workshop on Network-Aware Data Management: 1–7. doi:10.1145/2832099.2832100.

- ↑ 16.0 16.1 Shannigrahi, S.; Papadopoulos, C.; Yeh, E. et al. (2015). "Named Data Networking in Climate Research and HEP Applications". Journal of Physics: Conference Series 664: 052033. doi:10.1088/1742-6596/664/5/052033.

- ↑ 17.0 17.1 17.2 Shannigrahi, S.; Fan, C.; Papadopoulos, C. (2017). "Request aggregation, caching, and forwarding strategies for improving large climate data distribution with NDN: a case study". Proceedings of the 4th ACM Conference on Information-Centric Networking: 54–65. doi:10.1145/3125719.3125722.

- ↑ Shannigrahi, S.; Fan, C.; Papadopoulos, C. et al. (2018). "Named Data Networking Strategies for Improving Large Scientific Data Transfers". Proceedings of the 2018 IEEE International Conference on Communications Workshops. doi:10.1109/ICCW.2018.8403576.

- ↑ 19.0 19.1 Hadish, J.; Biggs, T.; Shealy, B. et al. (22 January 2020). "SystemsGenetics/GEMmaker: Release v1.1". Zenodo. doi:10.5281/zenodo.3620945. https://zenodo.org/record/3620945.

- ↑ 20.0 20.1 Zhang, L.; Afanasyev, A.; Burke, J. et al. (2014). "Named data networking". ACM SIGCOMM Computer Communication Review 44 (3): 66–73. doi:10.1145/2656877.2656887.

- ↑ Hoque, A.K.M.M.; Amin, S.O.; Alyyan, A. et al. (2013). "NLSR: Named-data link state routing protocol". Proceedings of the 3rd ACM SIGCOMM workshop on Information-centric networking: 15–20. doi:10.1145/2491224.2491231.

- ↑ 22.0 22.1 Afanasyev, A.; Shi, J.; Zhang, B. et al. (October 2016). "NFD Developer’s Guide - Revision 7". NDN, Technical Report NDN-0021. https://named-data.net/publications/techreports/ndn-0021-7-nfd-developer-guide/.

- ↑ So, W.; Narayanan, A.; Oran, D. et al. (2013). "Named data networking on a router: Forwarding at 20gbps and beyond". ACM SIGCOMM Computer Communication Review 43 (4): 495–6. doi:10.1145/2534169.2491699.

- ↑ Khoussi, S.; Nouri, A.; Shi, J. et al. (2019). "Performance Evaluation of the NDN Data Plane Using Statistical Model Checking". Proceedings of the International Symposium on Automated Technology for Verification and Analysis: 534–50. doi:10.1007/978-3-030-31784-3_31.

- ↑ Chen, S.; Shi, W; Cao, A. et al. (2014). "NDN Repo: An NDN Persistent Storage Model" (PDF). Tsinghua University. http://learn.tsinghua.edu.cn:8080/2011011088/WeiqiShi/content/NDNRepo.pdf.

- ↑ "DDBJ". Research Organization of Information and Systems. 2019. https://www.ddbj.nig.ac.jp/index-e.html. Retrieved 21 October 2019.

- ↑ "EMBL-EBI". Wellcome Genome Campus. 2021. https://www.ebi.ac.uk/. Retrieved 02 February 2021.

- ↑ Lathe, W.; Williams, J.; Mangan, M. et al. (2008). "Genomic Data Resources: Challenges and Promises". Nature Education 1 (3): 2. https://www.nature.com/scitable/topicpage/genomic-data-resources-challenges-and-promises-743721/.

- ↑ Dankar, F.K.; Ptitsyn, A.; Dankar, S.K. (2018). "The development of large-scale de-identified biomedical databases in the age of genomics—principles and challenges". Human Genomics 12: 19. doi:10.1186/s40246-018-0147-5. PMC PMC5894154. PMID 29636096. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5894154.

- ↑ National Genomics Data Center Members and Partners (2020). "Database Resources of the National Genomics Data Center in 2020". Nucleic Acids Research 48 (D1): D24–33. doi:10.1093/nar/gkz913. PMC PMC7145560. PMID 31702008. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7145560.

- ↑ The LHC Study Group (May 1991). "Design Study of the Large Hadron Collider (LHC): A Multiparticle Collider in the LEP Tunnel". CERN. https://cds.cern.ch/record/220493.

- ↑ Taylor, K.E.; Doutriaux, C. (28 April 2010). "CMIP5 Model Output Requirements: File Contents and Format, Data Structure and Metadata" (PDF). PCMDI. https://pcmdi.llnl.gov/mips/cmip5/docs/CMIP5_output_metadata_requirements28Apr10.pdf?id=89.

- ↑ Munson, M.C.; Simu, S. (11 November 2013). "Bulk data transfer". ScienceON. https://scienceon.kisti.re.kr/srch/selectPORSrchPatent.do?cn=USP2015038996945.

- ↑ "Aspera". IBM. https://www.ibm.com/products/aspera.

- ↑ "Globus". University of Chicago. https://www.globus.org/. Retrieved 02 February 2021.

- ↑ Rajasekar, A.; Moore, R.; Hou, C.-Y. et al. (2010). "iRODS Primer: Integrated Rule-Oriented Data System". Synthesis Lectures on Information Concepts, Retrieval, and Services 2: 1–143. doi:10.2200/S00233ED1V01Y200912ICR012.

- ↑ Chiang, G.-T.; Clapham, P.; Qi, G. et al. (2011). "Implementing a genomic data management system using iRODS in the Wellcome Trust Sanger Institute". BMC Bioinformatics 12: 361. doi:10.1186/1471-2105-12-361. PMC PMC3228552. PMID 21906284. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3228552.

- ↑ Eilbeck, K.; Lewis, S.E.; Mungall, C.J. et al. (2005). "The Sequence Ontology: A tool for the unification of genome annotations". Genome Biology 6 (5): R44. doi:10.1186/gb-2005-6-5-r44. PMC PMC1175956. PMID 15892872. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1175956.

- ↑ Schriml, L.M.; Arze, C.; Nadendla, S. et al. (2012). "Disease Ontology: A backbone for disease semantic integration". Nucleic Acids Research 40 (DB 1): D940–6. doi:10.1093/nar/gkr972. PMC PMC3245088. PMID 22080554. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3245088.

- ↑ Gene Ontology Consortium (2015). "Gene Ontology Consortium: Going forward". Nucleic Acids Research 43 (DB 1): D1049–56. doi:10.1093/nar/gku1179. PMC PMC4383973. PMID 25428369. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4383973.

- ↑ 41.0 41.1 Shannigrahi, S.; Fan, C.; Partridge, C. (2020). "What's in a Name?: Naming Big Science Data in Named Data Networking". Proceedings of the 7th ACM Conference on Information-Centric Networking: 12–23. doi:10.1145/3405656.3418717.

- ↑ Shannigrahi, S. (Summer 2019). "The Future of Networking Is the Future of Big Data". Colorado State University. https://mountainscholar.org/bitstream/handle/10217/197325/Shannigrahi_colostate_0053A_15549.pdf?sequence=1&isAllowed=y.

- ↑ 43.0 43.1 43.2 Tierney, B.; Metzger, J. (July 2010). "Higher Performance Bulk Data Transfer". ESnet. https://fasterdata.es.net/assets/fasterdata/JT-201010.pdf. Retrieved 02 February 2021.

- ↑ Mills, N.; Bensman, E.M.; Poehlman, W.L. et al. (2019). "Moving Just Enough Deep Sequencing Data to Get the Job Done". Bioinformatics and Biology Insights 13: 1177932219856359. doi:10.1177/1177932219856359. PMC PMC6572328. PMID 31236009. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6572328.

- ↑ Yu, Y.; Afanasyev, A.; Clark, D. et al. (2015). "Schematizing Trust in Named Data Networking". Proceedings of the 2nd ACM Conference on Information-Centric Networking: 177–86. doi:10.1145/2810156.2810170.

- ↑ "ESnet". ESnet. https://fasterdata.es.net/. Retrieved 02 February 2021.

- ↑ "Welcome to ICANN!". ICANN. https://www.icann.org/resources/pages/welcome-2012-02-25-en. Retrieved 14 November 2020.

- ↑ "CC*Data: National Cyberinfrastructure for Scientific Data Analysis at Scale (SciDAS)". National Science Foundation. 24 October 2019. https://www.nsf.gov/awardsearch/showAward?AWD_ID=1659300. Retrieved 02 February 2021.

- ↑ Zerbino, D.R.; Achuthan, P.; Akanni, W. et al. (2018). "Ensembl 2018". Nucleic Acids Research 46 (D1): D754–61. doi:10.1093/nar/gkx1098. PMC PMC5753206. PMID 29155950. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5753206.

- ↑ "SystemsGenetics/pynome". GitHub. https://github.com/SystemsGenetics/pynome. Retrieved 02 February 2021.

- ↑ Olschanowsky, C.; Shannigrahi, S.; Papadopoulos, C. (2014). "Supporting climate research using named data networking". Proceedings of the 2014 IEEE 20th International Workshop on Local & Metropolitan Area Networks: 1–6. doi:10.1109/LANMAN.2014.7028640.

- ↑ Ficklin, S.P.; Dunwoodie, L.J.; Poehlman, W.L. et al. (2017). "Discovering Condition-Specific Gene Co-Expression Patterns Using Gaussian Mixture Models: A Cancer Case Study". Scientific Reports 7 (1): 8617. doi:10.1038/s41598-017-09094-4. PMC PMC5561081. PMID 28819158. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5561081.

- ↑ Roche, K.; Feltus, F.A.; Park, J.P. et al. (2017). "Cancer cell redirection biomarker discovery using a mutual information approach". PLoS One 12 (6): e0179265. doi:10.1371/journal.pone.0179265. PMC PMC5464651. PMID 28594912. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5464651.

- ↑ Dunwoodie, L.J.; Poehlman, W.L.; Ficklin, S.P. et al. (2018). "Discovery and validation of a glioblastoma co-expressed gene module". Oncotarget 9 (13): 10995–11008. doi:10.18632/oncotarget.24228. PMC PMC5834250. PMID 29541392. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5834250.

- ↑ Poehlman, W.L.; Hsieh, J.J.; Feltus, F.A. et al. (2019). "Linking Binary Gene Relationships to Drivers of Renal Cell Carcinoma Reveals Convergent Function in Alternate Tumor Progression Paths". Scientific Reports 9 (1): 2899. doi:10.1038/s41598-019-39875-y. PMC PMC6393532. PMID 30814637. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6393532.

- ↑ "Nextflow". Seqera Labs. https://nextflow.io/. Retrieved 21 October 2019.

- ↑ Di Tommaso, P.; Chatzou, M.; Floden, E.W. et al. (2017). "Nextflow enables reproducible computational workflows". Nature Biotechnology 35 (4): 316–19. doi:10.1038/nbt.3820. PMID 28398311.

- ↑ Wang, Y.; Li, Z.; Tyson, G. et al. (2013). "Optimal cache allocation for Content-Centric Networking". Proceedings of the 21st IEEE International Conference on Network Protocols: 1–10. doi:10.1109/ICNP.2013.6733577.

- ↑ "FABRIC". RENCI. https://fabric-testbed.net/. Retrieved 14 November 2020.

- ↑ Cock, P.J.A.; Fields, C.J.; Goto, N. et al. (2010). "The Sanger FASTQ file format for sequences with quality scores, and the Solexa/Illumina FASTQ variants". Nucleic Acids Research 38 (6): 1767–71. doi:10.1093/nar/gkp1137. PMC PMC2847217. PMID 20015970. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2847217.

- ↑ Smarr, L.; Crittenden, C.; DeFanti, T. et al. (2018). "The Pacific Research Platform: Making High-Speed Networking a Reality for the Scientist". Proceedings of the Practice and Experience on Advanced Research Computing: 1–8. doi:10.1145/3219104.3219108.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. In some cases important information was missing from the references, and that information was added. The original paper listed references alphabetically; this wiki lists them by order of appearance, by design. The two footnotes were turned into inline references for convenience.