
Revision as of 16:24, 6 October 2015

| Full article title | Generalized Procedure for Screening Free Software and Open Source Software Applications |
|---|---|
| Author(s) | Joyce, John |
| Author affiliation(s) | Arcana Informatica; Scientific Computing |
| Primary contact | Email: |
| Year published | 2015 |
| Distribution license | Creative Commons Attribution-ShareAlike 4.0 International |

Abstract

Free Software and Open Source Software projects have become a popular alternative tool in both scientific research and other fields. However, selecting the optimal application for use in a project can be a major task in itself, as the list of potential applications must first be identified and screened to determine promising candidates before an in-depth analysis of systems can be performed. To simplify this process we have initiated a project to generate a library of in-depth reviews of Free Software and Open Source Software applications. Preliminary to beginning this project, a review of evaluation methods available in the literature was performed. As we found no one method that stood out, we synthesized a general procedure using a variety of available sources for screening a designated class of applications to determine which ones to evaluate in more depth. In this paper, we will examine a number of currently published processes to identify their strengths and weaknesses. By selecting from these processes we will synthesize a proposed screening procedure to triage available systems and identify those most promising to pursue. To illustrate the functionality of this technique, this screening procedure will be executed against a selected class of applications.

Introduction

There is much confusion regarding Free Software and Open Source Software, and many people use these terms interchangeably; however, to some, the connotations associated with the terms are highly significant. So perhaps we should start with an examination of the terms to clarify what we are attempting to screen. While there are many groups and organizations involved with Open Source software, two of the main ones are the Free Software Foundation (FSF) and the Open Source Initiative (OSI).

When discussing Free Software, we are not specifically referring to software for which no fee is charged; rather, we are referring to free in terms of liberty. To quote the FSF[1]:

A program is free software if the program's users have the four essential freedoms:

- The freedom to run the program as you wish, for any purpose (freedom 0).

- The freedom to study how the program works, and change it so it does your computing as you wish (freedom 1). Access to the source code is a precondition for this.

- The freedom to redistribute copies so you can help your neighbor (freedom 2).

- The freedom to distribute copies of your modified versions to others (freedom 3). By doing this you can give the whole community a chance to benefit from your changes. Access to the source code is a precondition for this.

This does not mean that a program is provided at no cost, or gratis, though some of these freedoms imply that it often will be. In the FSF's analysis, any application that does not conform to these freedoms is unethical. Software that is given away at no charge but without its source code, sometimes also called 'free software' or 'freeware', would not be considered Free Software under the FSF definition.

The Open Source Initiative (OSI) was originally formed to promote Free Software, which it referred to as Open Source Software (OSS) to make it sound more business friendly. The OSI defines Open Source Software as any application that meets the following 10 criteria, which it based on the Debian Free Software Guidelines[2]:

- Free redistribution

- Source code included

- Must allow derived works

- Must preserve the integrity of the author's source code

- License must not discriminate against persons or groups

- License must not discriminate against fields of endeavor

- Distribution of license

- License must not be specific to a product

- License must not restrict other software

- License must be technology-neutral

Open Source Software adherents take what they consider a more pragmatic view, focusing on the license requirements and putting significant effort into convincing commercial enterprises of the practical benefits of open source, meaning the free availability of application source code.

In an attempt to placate both groups when discussing the same software application, the term Free/Open Source Software (F/OSS) was developed. Since the term Free still tended to confuse some people, the term libre, which connotes freedom, was added, resulting in the term Free/Libre Open Source Software (FLOSS). If you perform a detailed analysis of the full definitions, you will find that all Free Software fits the Open Source Software definition, while not all Open Source Software fits the Free Software definition. However, Open Source Software that is not also Free Software is the exception rather than the rule. As a result, you will find these acronyms used almost interchangeably, but there are subtle differences in meaning, so stay alert. In the final analysis, the license that accompanies the software is what you legally have to follow.

The reality is that since both groups trace their history back to the same origins, the practical differences between an application being Free Software or Open Source are generally negligible. Keep in mind that the above descriptions are to some degree generalizations, as both organizations are involved in multiple activities. There are many additional groups interested in Open Source for a wide variety of reasons. However, this diversity is also a strong point, resulting in a vibrant and dynamic community. You should not allow the difference in terminology to be divisive; the fact that all of these terms can be traced back to the same origin should unite us.[3] In practice, many members of these organizations will use the terms interchangeably, depending on the point they are trying to get across.

With more than 300,000 FLOSS applications currently registered on SourceForge.net[4] and over 10 million repositories on GitHub[5], there are generally multiple options available for any class of application, be it a Laboratory Information Management System (LIMS), an office suite, a database, or a document management system. Presumably you have already assessed the various challenges of using an Open Source application[6] and have decided to move ahead with selecting one. The difficulty now becomes choosing which application to use. While there are multiple indexes of FOSS projects, these are normally just listings of the applications with a brief description provided by the developers, with no indication of the project's vitality and no independent evaluation.

What is missing is a catalog of in-depth reviews of these applications, which would eliminate the need for each group to develop a list of potential applications, screen all available applications, and perform in-depth reviews of the most promising candidates. Once an organization has made a tentative selection, it will still need to perform its own testing to confirm that the selected application meets its specific needs, but there is no reason for everyone to go through the tedious process of identifying projects and weeding out the untenable ones.

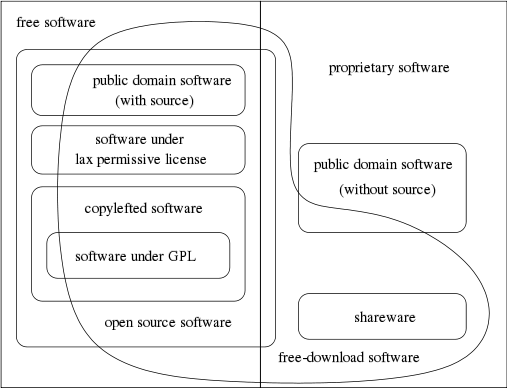

Illustration 1.: This diagram, originally by Chao-Kuei and updated by several others since, explains the different categories of software. It is available as a Scalable Vector Graphic and as an XFig document, under the terms of any of the GNU GPL v2 or later, the GNU FDL v1.2 or later, or the Creative Commons Attribution-Share Alike v2.0 or later.

The primary goal of this document is to describe a general procedure that can be used to screen any selected class of software applications. The immediate concern is screening FLOSS applications, though the process can be adjusted to allow at least a rough cross-comparison of FOSS and commercial applications. To that end, we start with an examination of published survey procedures. We then combine a subset of standard software evaluation procedures with recommendations for evaluating FLOSS applications. Because it is designed to screen such a diverse range of applications, the procedure is by necessity very general. However, as we move through the steps of the procedure, we will describe how to tune the process for the class of software that you are interested in.

You can also ignore any arguments regarding selecting between FLOSS and commercial applications. In this context, commercial refers to the marketing approach, not to the quality of the software. Many FLOSS applications are of comparable, if not superior, quality to products that are traditionally marketed and licensed. Wheeler discusses this issue in more detail, showing that by many definitions FLOSS is commercial software.[7]

The final objective of this process is to document a procedure that can then be applied to any class of FOSS applications to determine which projects in the class are the most promising to pursue, allowing us to expend our limited resources most effectively. As the information available for evaluating FOSS projects is generally quite different from that available for commercially licensed applications, this evaluation procedure has been optimized to best take advantage of this additional information.

Results

A search of the literature returns thousands of papers related to Open Source software, but most are of limited value with regard to the scope of this project. The need for a process to assist in selecting between Open Source projects is mentioned in a number of these papers, and there appear to be over a score of different published procedures. Regrettably, none of these methodologies appears to have gained large-scale support in the industry. Stol and Babar have published a framework for comparing evaluation methods targeting Open Source software and include a comparison of 20 of them.[8] They noted that web sites that simply consisted of a suggestion list for selecting an Open Source application were not included in this comparison. This selection difficulty did not originate with FLOSS applications. In their 1994 paper, Fritz and Carter review over a dozen existing selection methodologies, covering their strengths, weaknesses, the mathematics used, and other factors involved.[9]


Table 1.: Comparison frameworks and methodologies for examination of FLOSS applications, extracted from Stol and Babar.[8] The selection procedure is described in Stol and Babar's paper. 'Year' indicates the date of publication, 'Orig.' indicates whether the described process originated in industry (I) or research (R), and 'Method' indicates whether the paper describes a formal analysis method and procedure (Yes) or just a list of evaluation criteria (No).

Extensive comparisons between some of these methods have also been published, such as Deprez and Alexandre's comparative assessment of the OpenBRR and QSOS techniques.[14] Wasserman and Pal have also published a paper titled Evaluating Open Source Software, which appears to be more of an updated announcement and in-depth description of the Business Readiness Rating (BRR) framework.[15] Jadhav and Sonar have examined the issue of both evaluating and selecting software packages, and include a helpful analysis of the strengths and weaknesses of the various techniques.[16] Perhaps more importantly, they clearly point out that there is no common list of evaluation criteria. While the majority of the articles they reviewed listed the criteria used, Jadhav and Sonar indicated that these criteria frequently lacked a detailed definition, which required each evaluator to apply their own, sometimes conflicting, interpretation.
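Jadhav and Sonar's point about undefined criteria can be made concrete. The following is a minimal Python sketch, assuming a hypothetical criterion record: the `Criterion` class, the "active contributors" criterion, and its thresholds are illustrative only and are not taken from any of the cited methods. Each criterion carries a written definition and explicit score thresholds, so every evaluator maps the same raw measurement to the same score.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """An evaluation criterion with a shared, written definition.

    Hypothetical structure for illustration only.
    """
    name: str
    definition: str                # what is measured, and how
    thresholds: tuple[float, ...]  # ascending lower bounds for scores 1..n

    def __post_init__(self) -> None:
        if list(self.thresholds) != sorted(self.thresholds):
            raise ValueError(f"{self.name}: thresholds must be ascending")

    def score(self, raw: float) -> int:
        """Map a raw measurement to a score from 0 to len(thresholds)."""
        # bisect_right counts how many thresholds are <= raw.
        return bisect_right(self.thresholds, raw)


# Hypothetical criterion: the definition leaves little room for interpretation.
active_contributors = Criterion(
    name="active contributors",
    definition="Distinct commit authors in the 12 months before the review date",
    thresholds=(1, 2, 6),
)

for raw in (0, 1, 3, 10):
    print(raw, "->", active_contributors.score(raw))
```

Publishing the definition and thresholds alongside the scores also lets a later reader check how a score was reached, rather than having to trust each evaluator's interpretation.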

Since the publication of Stol and Babar's paper, additional evaluation methods have been published. Of particular interest is a series of papers by Pani et al. describing their proposed FAME (Filter, Analyze, Measure and Evaluate) methodology.[17][18][19][20] In their Transferring FAME paper, they emphasized that the evaluation frameworks previously described in the published literature were frequently difficult to apply to real environments, as they were developed using an analytic research approach that incorporated a multitude of factors.[20]

Their stated design objective with FAME is to reduce the complexity of performing an application evaluation, particularly for small organizations. As they put it, “The goals of FAME methodology are to aid the choice of high-quality F/OSS products, with high probability to be sustainable in the long term, and to be as simple and user friendly as possible.” They further state that “The main idea behind FAME is that the users should evaluate which solution amongst those available is more suitable to their needs by comparing technical and economical factors, and also taking into account the total cost of individual solutions and cash outflows. It is necessary to consider the investment in its totality and not in separate parts that are independent of one another.”[20]

The Transferring FAME paper breaks the methodology into four activities:

1. Identify the constraints and risks of the project

2. Identify and rank user requirements

3. Identify and rank all key objectives of the project

4. Generate a priority framework to allow comparison of needs and features
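The ranking produced in the second and third activities has to be turned into numbers before needs and features can be compared. The FAME papers' own weighting formula is not reproduced here; as a stand-in, this Python sketch uses rank-sum weighting, one common way to convert an ordered list into weights that sum to 1. The requirement names and fit values are hypothetical.

```python
def rank_sum_weights(ranked: list[str]) -> dict[str, float]:
    """Turn requirements ordered most-important-first into weights summing to 1.

    Rank-sum weighting: the item at rank r (1-based) of n gets weight
    (n - r + 1) / (n * (n + 1) / 2).
    """
    n = len(ranked)
    if n == 0 or len(set(ranked)) != n:
        raise ValueError("need a non-empty list of distinct requirements")
    total = n * (n + 1) / 2
    return {req: (n - i) / total for i, req in enumerate(ranked)}


def weighted_fit(weights: dict[str, float], fit: dict[str, float]) -> float:
    """Combine per-requirement fit (0.0 to 1.0) into one weighted value.

    Requirements missing from `fit` are treated as unmet (0.0).
    """
    return sum(w * fit.get(req, 0.0) for req, w in weights.items())


# Hypothetical requirements, ranked by the user (most important first).
weights = rank_sum_weights(
    ["sample tracking", "audit trail", "instrument interfaces", "reporting"]
)
# Hypothetical candidate: how well it meets each requirement.
candidate = {"sample tracking": 1.0, "audit trail": 0.5, "reporting": 1.0}

print(weights)
print(round(weighted_fit(weights, candidate), 2))
```

Rank-sum weights are easy to explain to stakeholders, but they fix the spacing between priorities; if the top requirement truly dominates the others, explicit weights agreed by the users may be a better fit.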

Their paper includes a formula for generating a score from the information collected; the evaluated system with the highest 'major score', Pjtot, is the one selected. While it is common practice to define an analysis process that condenses all of the information gathered into a single score, I strongly caution against blindly accepting such a score. FAME, like a number of the other assessment methodologies, is designed for iterative use. The logical purpose of this is to allow factors initially overlooked to be added to your assessment, and to allow the weighting of existing factors to be changed as you reevaluate their importance. However, this feature also makes it very easy to skew the results of the evaluation, consciously or unconsciously, to select any system you wish. Condensing everything down into a single value also strips out much of the information that you have worked so hard to gather; note that significantly different input values can generate the same result score. While such a score is of value, selecting a system based on the highest score alone could leave you with a totally unworkable system.
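Both concerns, identical totals from very different inputs and weights that can be tuned toward a preferred result, are easy to demonstrate. This Python sketch uses a plain weighted sum with made-up criteria, weights, and scores; it is not the FAME formula for Pjtot, only an illustration of what any single-score aggregation discards.

```python
Scores = dict[str, int]


def total_score(weights: Scores, scores: Scores) -> int:
    """Collapse per-criterion scores (0-10) into one weighted total."""
    return sum(w * scores[c] for c, w in weights.items())


def failed_minimums(minimums: Scores, scores: Scores) -> list[str]:
    """Return the criteria on which a system falls below a hard minimum."""
    return [c for c, floor in minimums.items() if scores[c] < floor]


# Hypothetical systems with very different profiles.
system_a = {"functionality": 6, "community": 6, "documentation": 6}
system_b = {"functionality": 10, "community": 0, "documentation": 5}

# 1. Different inputs, identical result.
weights = {"functionality": 50, "community": 30, "documentation": 20}
print(total_score(weights, system_a), total_score(weights, system_b))  # 600 600

# 2. Adjusting the weights can pick either system as the winner.
favor_community = {"functionality": 40, "community": 40, "documentation": 20}
favor_features = {"functionality": 70, "community": 10, "documentation": 20}
print(total_score(favor_community, system_a), total_score(favor_community, system_b))  # 600 500
print(total_score(favor_features, system_a), total_score(favor_features, system_b))  # 600 800

# 3. The total hides a disqualifying weakness: no active community.
print(failed_minimums({"community": 3}, system_b))  # ['community']
```

Reporting the per-criterion scores and any failed minimums alongside the total keeps the information that the single number throws away, and fixing the weights before scoring begins makes it harder to steer the outcome.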

Pani et al. also describe a FAMEtool to assist with this data gathering and evaluation.[19] However, neither a general web search nor a review of their FAME papers revealed how to obtain this resource. While the FAMEtool paper includes additional comparisons with other FLOSS analysis methodologies, and there are some hints suggesting that the FAMEtool is provided as a web service, I have found no URL specified for it. To date, I have received no response from the research team, via either e-mail or Skype, regarding FAME, the FAMEtool, or feedback on its use.

During the same time frame, Soto and Ciolkowski also published papers describing the QualOSS Open Source Assessment Model and compared it with a number of the procedures in Stol and Babar's table.[21][22] Their focus was primarily on three perspectives: product quality, process maturity, and sustainability of the development community. Because the following OSS project assessment models offered no more than a rudimentary examination of the process perspective, they considered them unsatisfactory: QSOS, Capgemini OSMM, Navica OSMM, and OpenBRR. They position QualOSS as an extension of the traditional CMMI and SPICE process maturity models. While the second paper contains multiple items worth incorporating into an in-depth evaluation process, they do not seem suitable for what is intended as a quick survey.

References

1. "What is free software?". GNU Project. Free Software Foundation, Inc. 2015. http://www.gnu.org/philosophy/free-sw.html. Retrieved 17 June 2015.

2. "The Open Source Definition". Open Source Initiative. 2015. http://opensource.org/osd. Retrieved 17 June 2015.

3. Schießle, Björn (12 August 2012). "Free Software, Open Source, FOSS, FLOSS - same same but different". Free Software Foundation Europe. https://fsfe.org/freesoftware/basics/comparison.en.html. Retrieved 5 June 2015.

4. "RepOSS: A Flexible OSS Assessment Repository" (PDF). Northeast Asia OSS Promotion Forum WG3. 5 November 2012. http://events.linuxfoundation.org/images/stories/pdf/lceu2012_date.pdf. Retrieved 5 May 2015.

5. Doll, Brian (23 December 2013). "10 Million Repositories". GitHub, Inc. https://github.com/blog/1724-10-millionrepositories. Retrieved 8 August 2015.

6. Sarrab, Mohamed; Elsabir, Mahmoud; Elgamel, Laila (March 2013). "The Technical, Non-technical Issues and the Challenges of Migration to Free and Open Source Software" (PDF). IJCSI International Journal of Computer Science Issues 10 (2.3). http://ijcsi.org/papers/IJCSI-10-2-3-464-469.pdf.

7. Wheeler, David A. (14 June 2011). "Free-Libre / Open Source Software (FLOSS) is Commercial Software". dwheeler.com. http://www.dwheeler.com/essays/commercial-floss.html. Retrieved 28 May 2015.

8. Stol, Klaas-Jan; Ali Babar, Muhammad (2010). "A Comparison Framework for Open Source Software Evaluation Methods". In Ågerfalk, P.J.; Boldyreff, C.; González-Barahona, J.M.; Madey, G.R.; Noll, J. Open Source Software: New Horizons. Springer. pp. 389–394. doi:10.1007/978-3-642-13244-5_36. ISBN 9783642132445.

9. Fritz, Catherine A.; Carter, Bradley D. (23 August 1994). A Classification And Summary Of Software Evaluation And Selection Methodologies. Mississippi State, MS: Department of Computer Science, Mississippi State University. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.55.4470.

10. "OpenBRR, Business Readiness Rating for Open Source: A Proposed Open Standard to Facilitate Assessment and Adoption of Open Source Software" (PDF). OpenBRR. 2005. http://docencia.etsit.urjc.es/moodle/file.php/125/OpenBRR_Whitepaper.pdf. Retrieved 13 April 2015.

11. Wasserman, A.I.; Pal, M.; Chan, C. (10 June 2006). "The Business Readiness Rating: a Framework for Evaluating Open Source" (PDF). Proceedings of the Workshop on Evaluation Frameworks for Open Source Software (EFOSS) at the Second International Conference on Open Source Systems. Lake Como, Italy. pp. 1–5. Archived from the original on 11 January 2007. http://web.archive.org/web/20070111113722/http://www.openbrr.org/comoworkshop/papers/WassermanPalChan_EFOSS06.pdf. Retrieved 15 April 2015.

12. Majchrowski, Annick; Deprez, Jean-Christophe (2008). "An Operational Approach for Selecting Open Source Components in a Software Development Project". In O'Connor, R.; Baddoo, N.; Smolander, K.; Messnarz, R. Software Process Improvement. Springer. pp. 176–188. doi:10.1007/978-3-540-85936-9_16. ISBN 9783540859369.

13. Petrinja, E.; Nambakam, R.; Sillitti, A. (2009). "Introducing the Open Source Maturity Model". ICSE Workshop on Emerging Trends in Free/Libre/Open Source Software Research and Development, 2009. IEEE. pp. 37–41. doi:10.1109/FLOSS.2009.5071358. ISBN 9781424437207.

14. Deprez, Jean-Christophe; Alexandre, Simon (2008). "Comparing Assessment Methodologies for Free/Open Source Software: OpenBRR and QSOS". In Jedlitschka, Andreas; Salo, Outi. Product-Focused Software Process Improvement. Springer. pp. 189–203. doi:10.1007/978-3-540-69566-0_17. ISBN 9783540695660.

15. Wasserman, Anthony I.; Pal, Murugan (2010). "Evaluating Open Source Software" (PDF). Carnegie Mellon University - Silicon Valley. Archived from the original on 18 February 2015. https://web.archive.org/web/20150218173146/http://oss.sv.cmu.edu/readings/EvaluatingOSS_Wasserman.pdf. Retrieved 31 May 2015.

16. Jadhav, Anil S.; Sonar, Rajendra M. (March 2009). "Evaluating and selecting software packages: A review". Information and Software Technology 51 (3): 555–563. doi:10.1016/j.infsof.2008.09.003.

17. Pani, F.E.; Sanna, D. (11 June 2010). "FAME, A Methodology for Assessing Software Maturity". Atti della IV Conferenza Italiana sul Software Libero. Cagliari, Italy.

18. Pani, F.E.; Concas, G.; Sanna, D.; Carrogu, L. (2010). "The FAME Approach: An Assessing Methodology" (PDF). In Niola, V.; Quartieri, J.; Neri, F.; Caballero, A.A.; Rivas-Echeverria, F.; Mastorakis, N. Proceedings of the 9th WSEAS International Conference on Telecommunications and Informatics. Stevens Point, WI: WSEAS. ISBN 9789549260021. http://www.wseas.us/e-library/conferences/2010/Catania/TELE-INFO/TELE-INFO-10.pdf.

19. Pani, F.E.; Concas, G.; Sanna, S.; Carrogu, L. (August 2010). "The FAMEtool: an automated supporting tool for assessing methodology" (PDF). WSEAS Transactions on Information Science and Applications 7 (8): 1078–1089. http://www.wseas.us/e-library/transactions/information/2010/88-137.pdf.

20. Pani, F.E.; Sanna, D.; Marchesi, M.; Concas, G. (2010). "Transferring FAME, a Methodology for Assessing Open Source Solutions, from University to SMEs". In D'Atri, A.; De Marco, M.; Braccini, A.M.; Cabiddu, F. Management of the Interconnected World. Springer. pp. 495–502. doi:10.1007/978-3-7908-2404-9_57. ISBN 9783790824049.

21. Soto, M.; Ciolkowski, M. (2009). "The QualOSS open source assessment model measuring the performance of open source communities". 3rd International Symposium on Empirical Software Engineering and Measurement, 2009. IEEE. pp. 498–501. doi:10.1109/ESEM.2009.5314237. ISBN 9781424448425.

22. Soto, M.; Ciolkowski, M. (2009). "The QualOSS Process Evaluation: Initial Experiences with Assessing Open Source Processes". In O'Connor, R.; Baddoo, N.; Cuadrado-Gallego, J.J.; Rejas Muslera, R.; Smolander, K.; Messnarz, R. Software Process Improvement. Springer. pp. 105–116. doi:10.1007/978-3-642-04133-4_9. ISBN 9783642041334.

Notes

This article has not officially been published in a journal. However, this presentation is faithful to the original paper, with only a few minor formatting changes. This article is being made available for the first time under the Creative Commons Attribution-ShareAlike 4.0 International license, the same license used on this wiki.