Journal:A systematic framework for data management and integration in a continuous pharmaceutical manufacturing processing line

| Full article title | A systematic framework for data management and integration in a continuous pharmaceutical manufacturing processing line |
|---|---|
| Journal | Processes |
| Author(s) | Cao, Huiyi; Mushnoori, Srinivas; Higgins, Barry; Kollipara, Chandrasekhar; Fernier, Adam; Hausner, Douglas; Jha, Shantenu; Singh, Ravendra; Ierapetritou, Marianthi; Ramachandran, Rohit |
| Author affiliation(s) | Rutgers University, Johnson & Johnson, Janssen Research & Development |
| Primary contact | Email: rohit dot r at rutgers dot edu; Tel.: +1-848-445-6278 |
| Year published | 2018 |
| Volume and issue | 6(5) |
| Page(s) | 53 |
| DOI | 10.3390/pr6050053 |
| ISSN | 2227-9717 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | http://www.mdpi.com/2227-9717/6/5/53/htm |
| Download | http://www.mdpi.com/2227-9717/6/5/53/pdf (PDF) |

Abstract

As the pharmaceutical industry seeks more efficient methods for the production of higher value therapeutics, the associated data analysis, data visualization, and predictive modeling require dependable data origination, management, transfer, and integration. As a result, the management and integration of data in a consistent, organized, and reliable manner is a big challenge for the pharmaceutical industry. In this work, an ontological information infrastructure is developed to integrate data within manufacturing plants and analytical laboratories. The ANSI/ISA-88 batch control standard has been adapted in this study to deliver a well-defined data structure that will improve the data communication inside the system architecture for continuous processing. All the detailed information of the lab-based experiment and process manufacturing, including equipment, samples, and parameters, is documented in the recipe. This recipe model is implemented in a process control system (PCS), data historian, and electronic laboratory notebook (ELN). Data existing in the recipe can eventually be exported from this system to cloud storage, which could provide a reliable and consistent data source for data visualization, data analysis, or process modeling.

Keywords: data management, continuous pharmaceutical manufacturing, ISA-88, recipe, OSI Process Information (PI)

Introduction

For decades, the pharmaceutical industry has been dominated by batch-based manufacturing processes. This traditional method can lead to inefficiency and delayed time-to-market, as well as a greater possibility of errors and defects. Continuous manufacturing, in contrast, is a newer technology in pharmaceutical manufacturing that can enable faster, cleaner, and more economical production. The U.S. Food and Drug Administration (FDA) has recognized the advancement of this manufacturing mode and has been encouraging its development as part of the FDA's "quality by design" (QbD) paradigm.[1] The application of process analytical technology (PAT) and control systems is a useful way to gain improved science-based process understanding.[2] One of the advantages of the continuous pharmaceutical manufacturing process is that it provides the ability to monitor and rectify data/product in real time. It has therefore been considered a data-rich manufacturing process. However, in the face of the enormous amount of data generated from a continuous process, a sophisticated data management system is required for the integration of analytical tools with the control systems, as well as with the off-line measurement systems.

In order to represent, manage, and analyze a large amount of complex information, an ontological informatics infrastructure will be necessary for process and product development in the pharmaceutical industry.[3] The ANSI/ISA-88 batch control standard[4][5][6] is an international standard addressing batch process control, which has already been implemented in other industries for years. Therefore, adapting this industrial standard into pharmaceutical manufacturing could provide a design philosophy for describing equipment, material, personnel, and reference models.[7][8] This recipe-based execution could work as a hierarchical data structure for the assembly of data from the control system, process analytical technology (PAT) tools, and off-line measurement devices. The combination of the ANSI/ISA-88 recipe model and the data warehouse informatics strategy[9] leads to the “recipe data warehouse” strategy.[10] This strategy could provide the possibility of data management across multiple execution systems, as well as the ability for data analysis and visualization.

Applying the “recipe data warehouse” strategy to continuous pharmaceutical manufacturing provides a possible approach to handle the data produced via analytical experimentation and process recipe execution. Not only the data itself but also the context of the data can be well-captured and saved for documentation and reporting. However, unlike batch operations, continuous manufacturing is a complicated process containing a series of interconnected unit operations with multiple execution layers. Therefore, it is quite challenging to integrate data across the whole system while maintaining an accurate representation of the complex manufacturing processes.

In addition to data collection and integration in the continuous manufacturing plant, it is necessary to capture and store the highly variable and unpredictable properties of raw materials in a database because they could have an impact on the quality of the product.[11] The properties relevant to continuous manufacturing are measured via many different analytical methods, including FT4 powder characterization[12], particle size analysis[13], and the Washburn technique.[14] A raw material property database could be established using the “recipe data warehouse” strategy. However, compared to the computer-aided manufacturing used in the production process, the degree of automation varies significantly across analytical platforms. Therefore, an easily accessible recipe management system would be highly desirable in characterization laboratories.

Moreover, cloud computing technology for data management and storage is adopted to deal with the massive amount of data generated from the continuous manufacturing process. While traditional computational infrastructures involve huge investments in dedicated equipment, cloud computing offers a virtual environment in which users can store or share large volumes of information by renting hardware and storage systems for a defined time period.[15] Because of its many advantages, including flexibility, security, and efficiency, cloud computing is suitable for data management in the pharmaceutical industry.[16]

Objectives

In this work, a big data strategy following the ANSI/ISA-88 batch control standard is applied to data management in continuous pharmaceutical manufacturing. The ontology of recipe modeling and the design philosophy of a recipe are elaborated. A data management strategy is proposed for data integration in both continuous manufacturing processes and the analytical platforms used for raw material characterization. This strategy has been applied to a pilot direct compaction continuous tablet manufacturing process to build up a data flow from equipment to a recipe database on the cloud. In the characterization of raw material and intermediate blend properties, experiment data is also captured and transferred via a web-based recipe management tool.

Materials and methods

Ontology

An ontology is an explicit specification of a conceptualization, where "conceptualization" refers to an abstract, simplified view of the world that we wish to represent for some purpose.[17] A more detailed definition of an ontology has been made where the ontology is described as a hierarchically structured set of terms for describing a domain that can be used as a skeletal foundation for a knowledge base.[18] Ontologies are created to support the sharing and reuse of formally represented knowledge among different computing systems, as well as human beings. They provide the shared and common domain structure for semantic integration of information sources. In this work, a conceptualization through the ANSI/ISA-88 standard shows the advantage of building up a general conceptualization in the pharmaceutical development and manufacturing domains.

Information technology has already developed the capabilities to support the implementation of such a proposed informatics infrastructure. The language used in an ontology should be expressive, portable, and semantically defined, which is important for the future implementation and sharing of the ontology. The World Wide Web Consortium (W3C) has proposed several markup languages intended for web environment usage, and Extensible Markup Language (XML) is one of them.[19] XML is a metalanguage that defines a set of rules for encoding documents in a format which is both human readable and machine readable. The design of XML focuses on what data is and how to describe data. XML data is known as self-defining and self-describing, which means that the structure of the data is embedded with the data. There is no need to build the structure before storing the data when it arrives. The most basic building block of an XML document is an element, which has a beginning and ending tag. Since nested elements are supported in XML, it has the capability to embed hierarchical structures. Element names describe the content of the element, while the structure describes the relationship between the elements.
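The self-describing, nested nature of XML can be illustrated with a minimal sketch using Python's standard `xml.etree.ElementTree` module. The element names, attribute names, and the parameter value below are hypothetical and chosen only for illustration; they are not taken from the recipe schema used in this work.

```python
import xml.etree.ElementTree as ET

# Hypothetical element and attribute names, for illustration only.
recipe = ET.Element("Recipe", {"type": "master"})
operation = ET.SubElement(recipe, "ProcessOperation", {"name": "Blending"})
action = ET.SubElement(operation, "ProcessAction", {"name": "SetBladeSpeed"})
param = ET.SubElement(action, "Parameter", {"name": "blade_speed", "unit": "rpm"})
param.text = "250"  # illustrative value, not a measured one

# Serialize the nested elements to text; the structure travels with the data.
xml_text = ET.tostring(recipe, encoding="unicode")

# Parsing the text recovers the hierarchy without any predefined structure.
parsed = ET.fromstring(xml_text)
speed = parsed.find("./ProcessOperation/ProcessAction/Parameter")
print(speed.get("name"), speed.text, speed.get("unit"))
```

Because the element names and nesting are carried in the document itself, a receiving system can navigate to the parameter by path alone.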

As an XML Schema defines the structure of XML documents, an XML document can be validated according to the corresponding XML Schema. XML Schema language is also referred to as XML Schema definition (XSD), which defines the constraints on the content and structure of documents of that type.[20]

Recipe model

In ANSI/ISA-88, a recipe is defined as the minimum set of information that uniquely identifies the production requirements for a particular product.[4] However, a significant amount of information, of many different types, is still required to describe and make a product. Holding all of this information in one recipe would be complicated and cumbersome for human beings. As a result, four types of recipe are defined in ANSI/ISA-88, each focusing on a different level of detail.

Recipe types

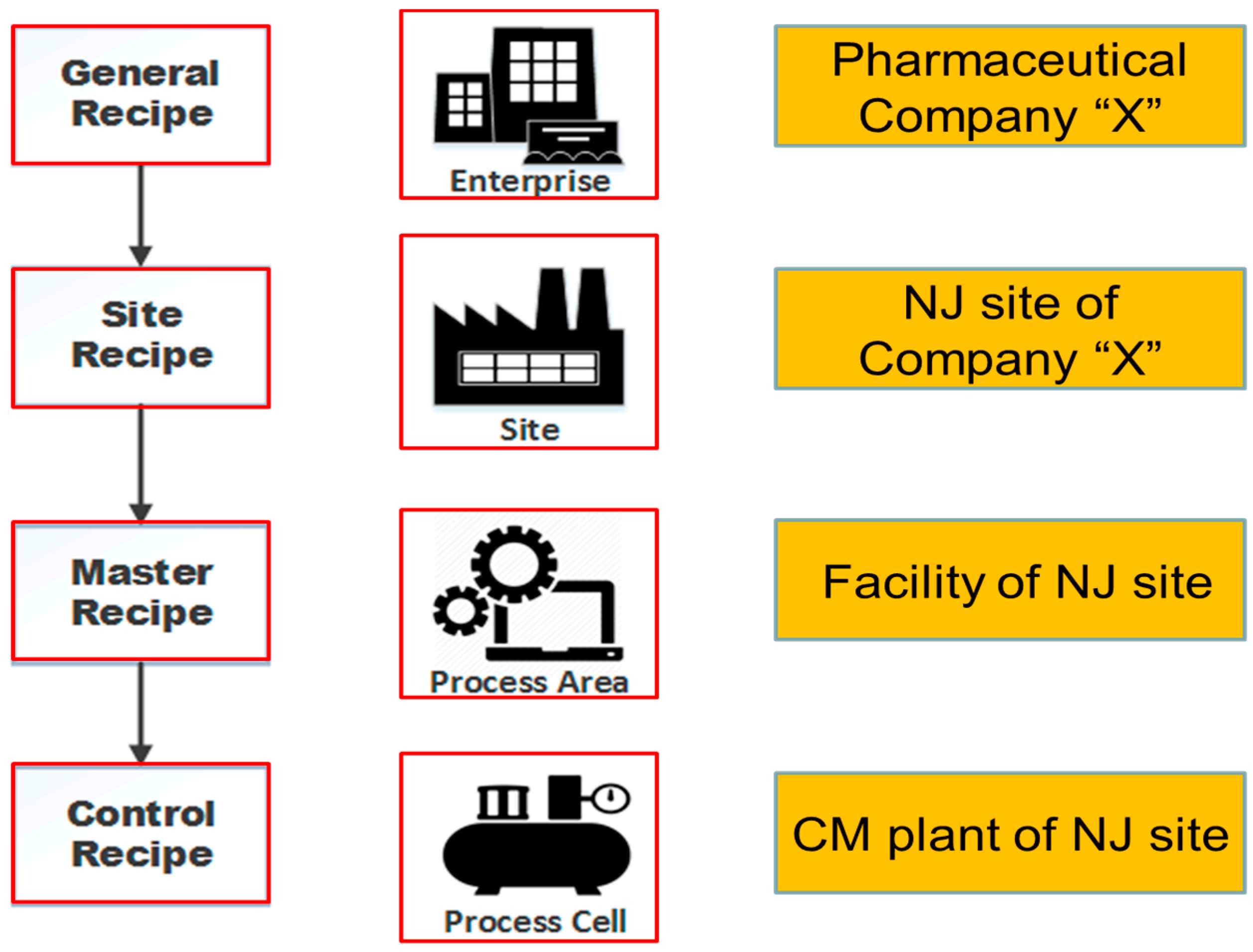

Table 1 shows the four recipe types, as defined in ANSI/ISA-88, and their relationship. The different recipe types are shown together with an example from the pharmaceutical industry in Figure 1.


Process model

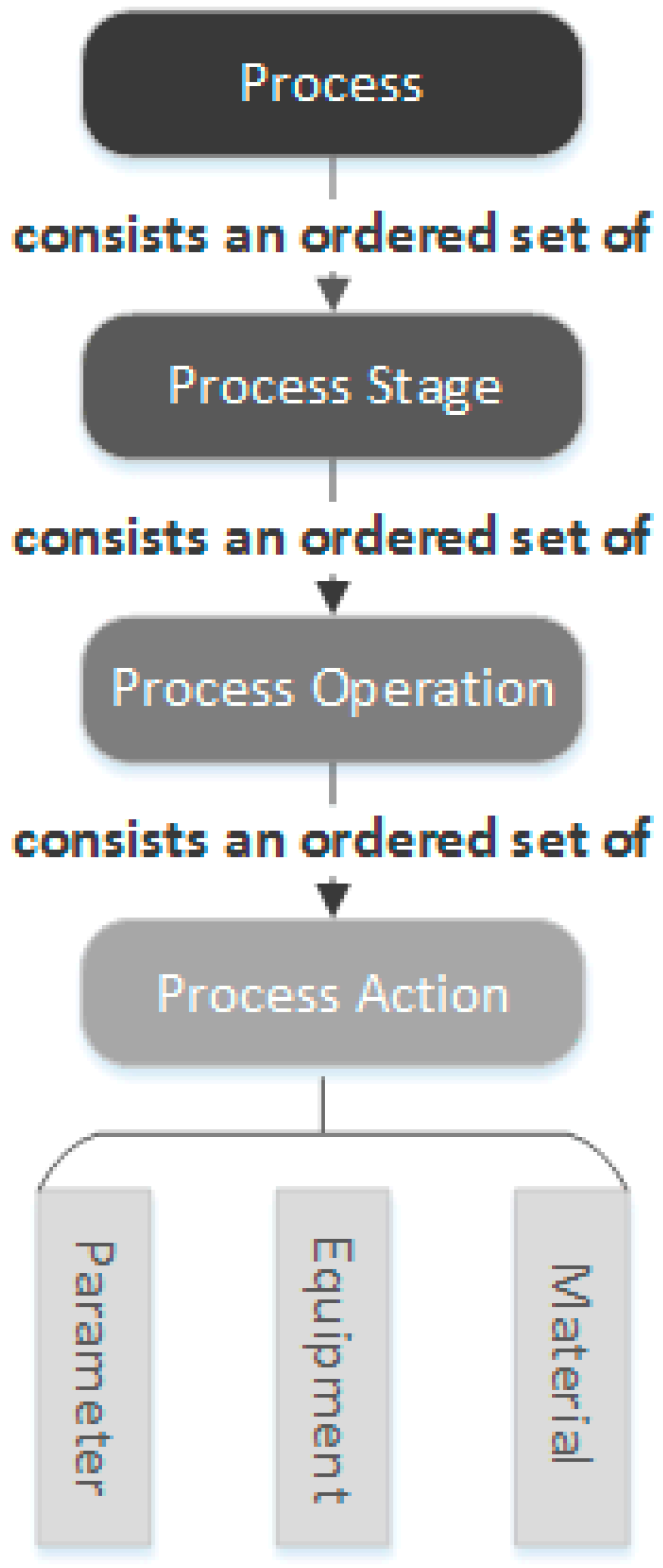

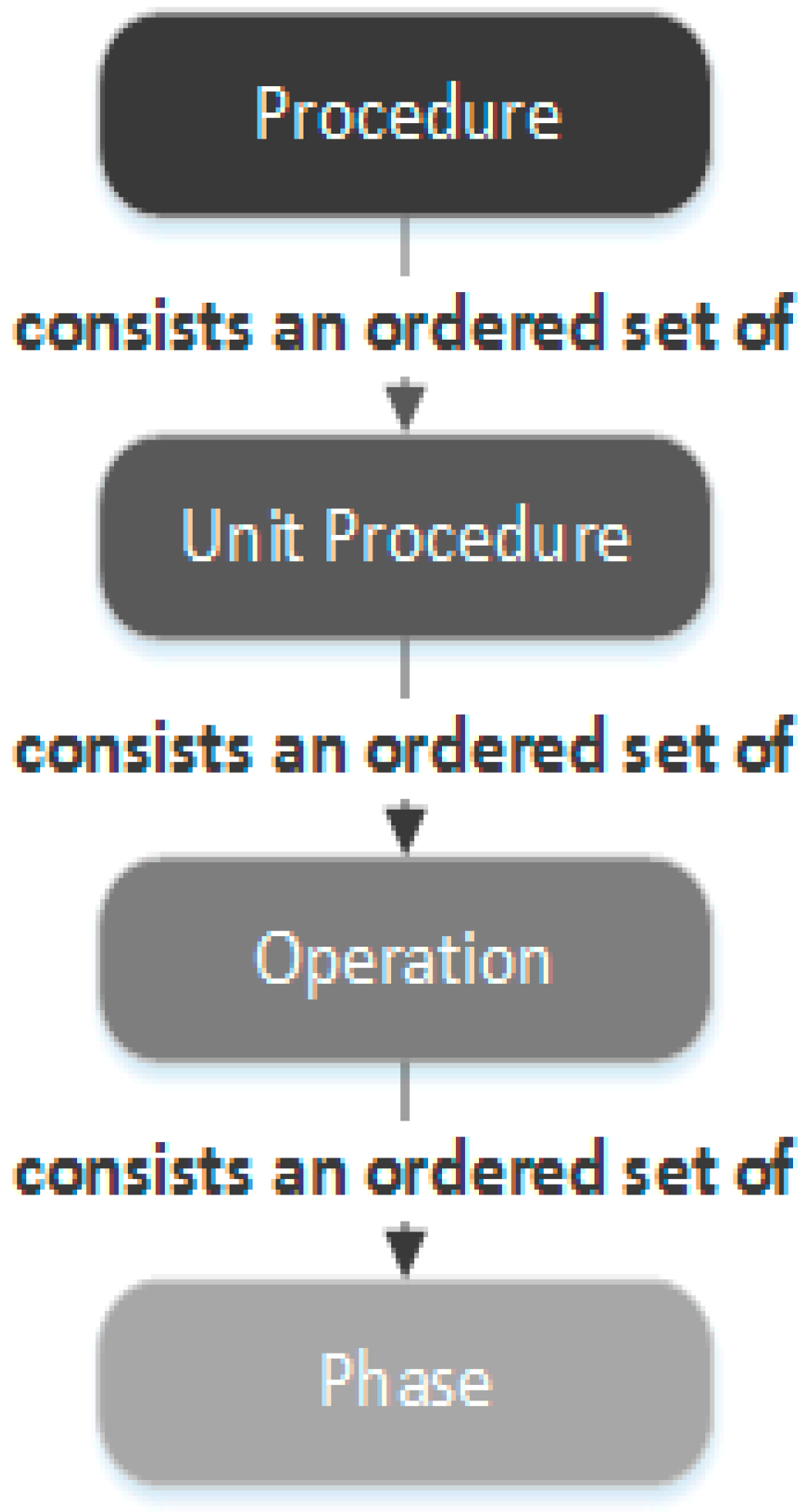

The ANSI/ISA-88 standard defines a process model that has four levels: process, process stages, process operations, and process actions. The structure of this process model is shown in Figure 2. As shown in the figure, each higher level consists of an ordered set of elements from the level below. Information about equipment, parameters, and materials can be included in the attributes of the process actions.
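The four-level hierarchy can be sketched as nested data classes, with equipment, material, and parameter information attached at the process action level. This is only an illustrative data structure; the class names mirror the levels named above, while the example stage, operation, and parameter names and values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ProcessAction:
    name: str
    equipment: str = ""
    material: str = ""
    parameters: Dict[str, float] = field(default_factory=dict)


@dataclass
class ProcessOperation:
    name: str
    actions: List[ProcessAction] = field(default_factory=list)


@dataclass
class ProcessStage:
    name: str
    operations: List[ProcessOperation] = field(default_factory=list)


@dataclass
class Process:
    name: str
    stages: List[ProcessStage] = field(default_factory=list)

    def iter_actions(self):
        """Walk the hierarchy in order, yielding (stage, operation, action)."""
        for stage in self.stages:
            for operation in stage.operations:
                for action in operation.actions:
                    yield stage.name, operation.name, action


# Hypothetical example content; names and values are illustrative only.
process = Process("Direct compaction", [
    ProcessStage("Feeding", [
        ProcessOperation("Feed API", [
            ProcessAction("Set screw speed", equipment="Gravimetric feeder",
                          material="API", parameters={"screw_speed_rpm": 40.0}),
        ]),
    ]),
    ProcessStage("Blending", [
        ProcessOperation("Blend powders", [
            ProcessAction("Set blade speed", equipment="Continuous blender",
                          parameters={"blade_speed_rpm": 250.0}),
        ]),
    ]),
])

paths = [f"{s} / {o} / {a.name}" for s, o, a in process.iter_actions()]
print(paths)
```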


It is important to note that the reference model and guidelines recommended in the ANSI/ISA-88 standard are not strictly normative. This provides a sufficient level of flexibility to represent the current manufacturing process according to the ANSI/ISA-88 standard, as well as the opportunity to expand the reference model to suit unusual manufacturing.[21] In this case, the ANSI/ISA-88 recipe model is extended from batch manufacturing to continuous manufacturing to provide a hierarchical structure for data management and integration.

Recipe model implementation

The purpose of this part is to illustrate the proposed methodology to assess the implementation of the ANSI/ISA-88 recipe model in continuous pharmaceutical manufacturing. Evidence has shown that developing a manufacturing process for a new product can be a difficult task. Although the ANSI/ISA-88 standard provides a practical reference model, adapting a batch model to a continuous process is still challenging. The implementation of the recipe model is therefore divided into several steps so that the activities are performed in a structured way.

Step 1. ANSI/ISA-88 applicable area identification

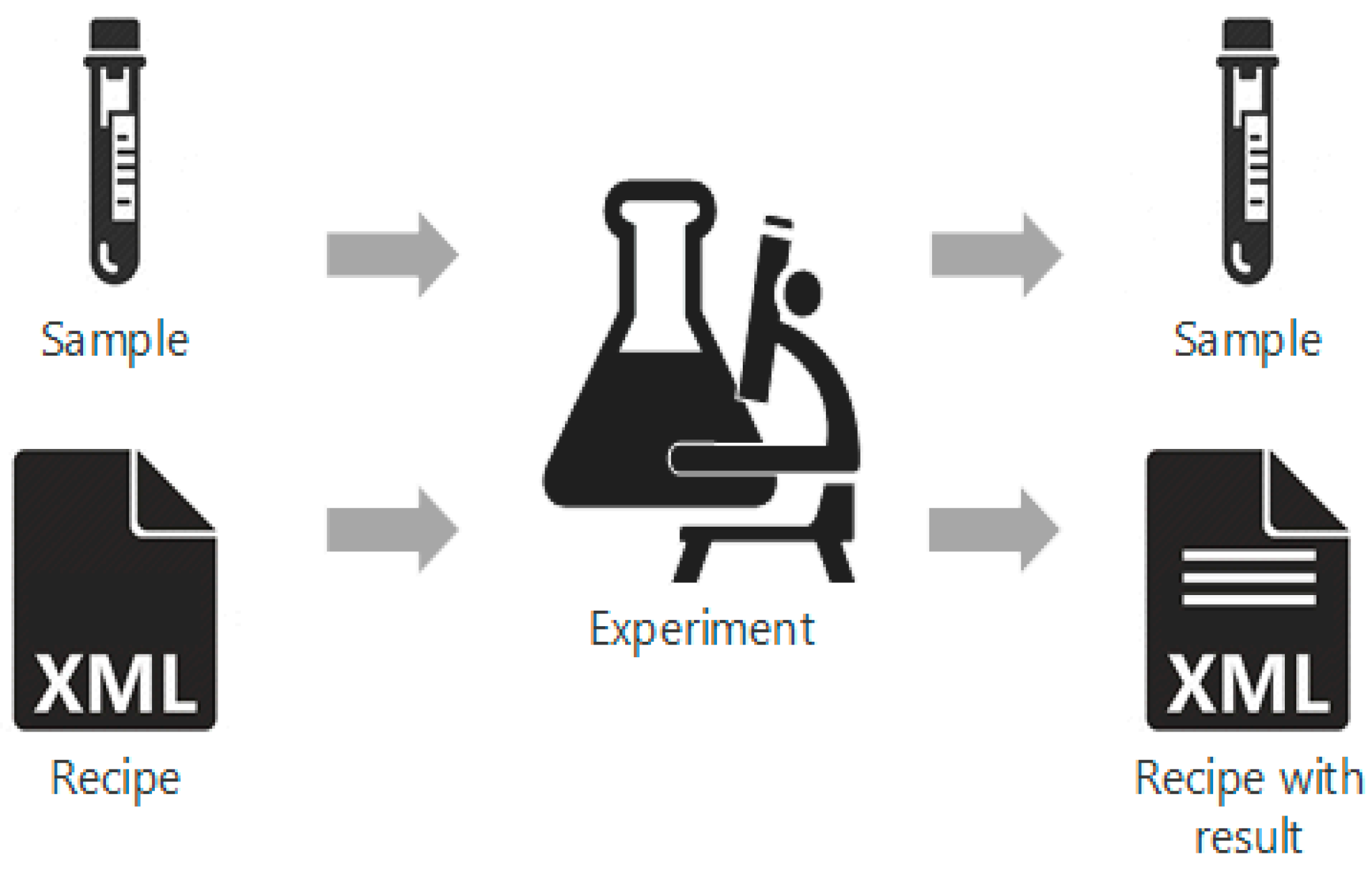

The initial scope and project boundaries are defined in this step in order to discuss the main objectives. As mentioned above, the ANSI/ISA-88 standard is not strictly normative and can be extended to continuous manufacturing. Moreover, since analytical experiments are performed on each sample, one sample can be treated as a “batch” in the analytical process. Therefore, it is also reasonable to consider the offline characterization experiments on materials to be applicable to the ANSI/ISA-88 standard. In this situation, a technique-specific recipe containing detailed process steps could provide instructions for specific experiment types, as well as the designed structure for result documentation. As shown in Figure 3, after analytical tests are performed on samples according to the procedures in the recipes, experiment results are recorded in the recipe as well.


Preliminary data gathering is performed to provide a clear understanding of the stream process and the requirement of each particular case. The component that may be modified in the ANSI/ISA-88 recipe model could be a recipe type, recipe content structure, recipe representation, etc. The in-depth knowledge of process architecture, manufacturing execution systems, product and material information, and analytical platforms will be necessary in accomplishing this step.

Step 2. Recipe structure definition

An XML Schema is created for the implementation of an ANSI/ISA-88 standard to provide the recipe model a structured, system-independent XML markup. This step needs to be completed based on the data collected from the previous step. An XML Schema document works as a master recipe standard. The goals of this document include setting restrictions to the structure and content of the recipe, maximizing the recipe model’s expressive capability, and realizing the machine’s ability to validate recipes.
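The idea of machine-validating a recipe against structural restrictions can be sketched as follows. This is not XSD validation itself (which would require an XSD-aware library); it is a hand-written check in plain Python that stands in for the kind of restrictions a schema would express. The element names, allowed children, and required attributes are all hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical structural rules, standing in for what an XSD would declare.
ALLOWED_CHILDREN = {
    "Recipe": {"Header", "Procedure"},
    "Header": set(),
    "Procedure": {"Step"},
    "Step": {"Parameter"},
    "Parameter": set(),
}
REQUIRED_ATTRS = {"Recipe": {"id"}, "Step": {"name"}, "Parameter": {"name"}}


def validate(element, path=""):
    """Return a list of human-readable violations (empty if the tree is valid)."""
    here = f"{path}/{element.tag}"
    if element.tag not in ALLOWED_CHILDREN:
        return [f"{here}: unknown element"]
    errors = []
    missing = REQUIRED_ATTRS.get(element.tag, set()) - set(element.attrib)
    for attr in sorted(missing):
        errors.append(f"{here}: missing attribute '{attr}'")
    for child in element:
        if child.tag not in ALLOWED_CHILDREN[element.tag]:
            errors.append(f"{here}: unexpected child <{child.tag}>")
        else:
            errors.extend(validate(child, here))
    return errors


good = ET.fromstring(
    '<Recipe id="R1"><Header/><Procedure><Step name="Load">'
    '<Parameter name="mass">5</Parameter></Step></Procedure></Recipe>'
)
bad = ET.fromstring(
    "<Recipe><Procedure><Step><Result/></Step></Procedure></Recipe>"
)

good_errors = validate(good)
bad_errors = validate(bad)
for message in bad_errors:
    print(message)
```

In practice, the same restrictions would be declared once in the XML Schema document, so that any XSD-capable tool could validate recipes without custom code.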

Step 3. Process analysis

Due to the master recipe’s equipment dependencies, it would be complicated and inefficient to develop a single master recipe to describe the whole continuous pharmaceutical process. The process itself consists of an ordered set of multiple unit operations, which may change from time to time depending on the equipment used. This happens often in the process development stage, especially in the early phases. Another barrier is the transfer from research and development (R&D) to production. Usually, R&D performs the product development process in small-scale pilot plants, which could be quite different from the primary production plant in terms of technology. Moreover, the differences between the technologies used in each plant are crucial to product manufacturing after the transfer. The problems stated above are critical to the implementation of ANSI/ISA-88 in continuous pharmaceutical manufacturing.

A suitable approach to the implementation of ANSI/ISA-88 in continuous manufacturing might be to develop a robust modular structure that could support a variety of equipment types and classes. In this case, a continuous process is divided into several unit operations, such as mixing and blending. Each unit operation could be performed by different equipment types, each of which corresponds to a different recipe module. In other words, each equipment type that might take part in a continuous process would be mapped individually to a recipe module. The ordered combination of these recipe modules would form the master recipe of continuous manufacturing.
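The modular idea can be sketched as a registry that maps each equipment type to a recipe module, with a master recipe assembled as the ordered combination of modules for a given line. The registry contents, parameter names, and the `build_master_recipe` helper are hypothetical and are meant only to show the composition pattern, not the structure of the actual recipes.

```python
# Hypothetical registry: one recipe module per equipment type.
RECIPE_MODULES = {
    "gravimetric_feeder": {"unit_operation": "feeding",
                           "parameters": ["screw_speed", "flow_rate_setpoint"]},
    "co_mill": {"unit_operation": "delumping",
                "parameters": ["impeller_speed"]},
    "continuous_blender": {"unit_operation": "blending",
                           "parameters": ["blade_speed"]},
    "nir_probe": {"unit_operation": "PAT monitoring",
                  "parameters": ["acquisition_interval"]},
    "tablet_press": {"unit_operation": "compaction",
                     "parameters": ["compression_force", "turret_speed"]},
}


def build_master_recipe(name, equipment_line):
    """Combine recipe modules in line order to form a master recipe."""
    missing = [e for e in equipment_line if e not in RECIPE_MODULES]
    if missing:
        raise KeyError(f"no recipe module for equipment type(s): {missing}")
    modules = [
        {"position": i + 1, "equipment_type": e, **RECIPE_MODULES[e]}
        for i, e in enumerate(equipment_line)
    ]
    return {"name": name, "modules": modules}


# Two line configurations reuse the same modules; only the ordering differs.
with_mill = build_master_recipe(
    "DC line with co-mill",
    ["gravimetric_feeder", "co_mill", "continuous_blender", "nir_probe", "tablet_press"],
)
without_mill = build_master_recipe(
    "DC line without co-mill",
    ["gravimetric_feeder", "continuous_blender", "nir_probe", "tablet_press"],
)
print([m["unit_operation"] for m in with_mill["modules"]])
```

Treating the PAT probe as just another entry in the registry follows the same pattern as the process equipment, so swapping or removing a unit only changes the ordered list, not the modules themselves.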

PAT tools used in continuous pharmaceutical manufacturing, e.g., near-infrared (NIR) and Raman spectroscopy, are implemented in a continuous process to provide real-time monitoring of the material properties and are essential to manufacturing decision making. The PAT tools would optimally be treated as a unit operation that has a corresponding recipe module, in order to provide flexibility and reliability for the management of PAT.

Step 4. Process mapping

XML-based master recipes could be developed in accordance with an XML Schema to represent the manufacturing process. Firstly, the equipment information, operation procedures, and process parameters of unit operations would be mapped carefully to the ANSI/ISA-88 recipe model to generate re-specified recipe modules. These recipe modules could be transformed into the master recipe and used for the individual study and data capturing of unit operations.

Systematic framework of integration

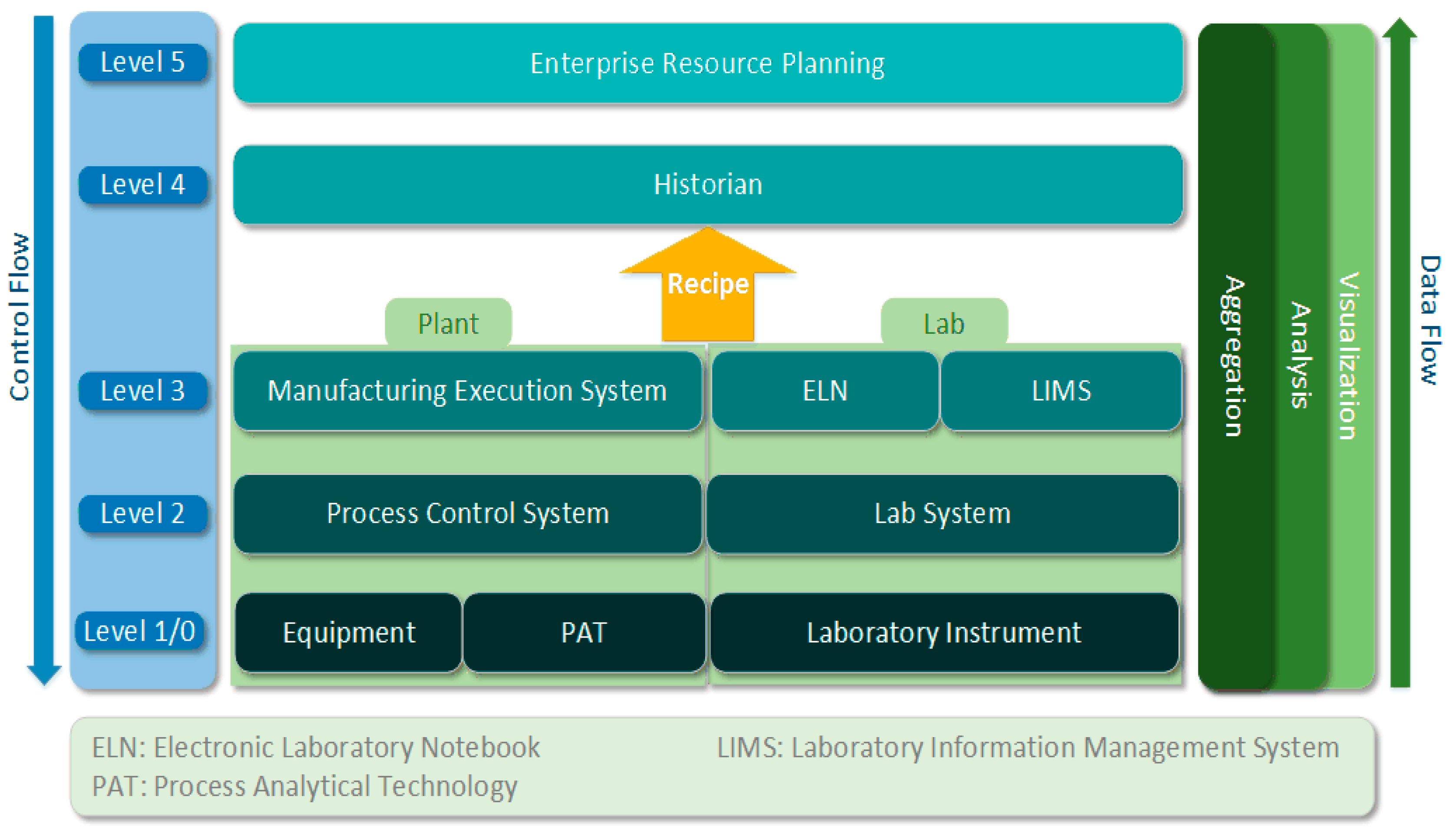

The fast development of information and communication technology, such as big data and cloud computing, provides the capability to increase productivity, quality, and flexibility across industries. The process industry is also seeking a possible approach to bringing together all data from different process levels and distributed manufacturing plants in a continuous and holistic way to generate meaningful information.[22] For continuous pharmaceutical manufacturing, a data flow across the whole process is proposed in Figure 4, following the traditional design pattern of an automation pyramid[23], to create an information and communication infrastructure.

Enterprise resource planning (ERP) systems are placed at the top of the structure shown in Figure 4. They provide an integrated view of the core business process, based on the data collected from various business activities.[24] Long-term resource planning (primarily of human and material resources) would be their primary objective. The "historian", on Level 4, is intended for collecting and organizing data to provide an information infrastructure. Meaningful information in different representations, based on the ANSI/ISA-88 recipe document, could also be generated from the organizational assets.


In terms of the levels below, they are separated into two parts: the continuous manufacturing process and the analytical laboratory platform. Because of the distinct data acquisition methods used in these two areas, they will be elaborated on separately in the following section.

Continuous manufacturing

As shown in Figure 4, there are three more levels in the continuous manufacturing process. The manufacturing execution system (MES) performs the real-time managing and monitoring of work in progress on the plant floor. During the manufacturing process, the process control system plays a significant role in controlling the system states and conditions to prevent severe problems during operations. Process equipment located in Level 1/0 also contains PAT tools. Process equipment always consists of field devices and embedded programmable logic controllers (PLCs), which are industrial computer control systems that monitor input devices such as machinery or sensors and decide how to control output devices according to their programming.[25] The most powerful advantage of a PLC is its capability to change the operation process while collecting information. PAT tools, in turn, have corresponding PAT data management tools that can communicate with the control platform. Above the PLCs are the different levels of control systems across the manufacturing process.

Control flows are organized top-down, whereas the data capturing and information flows are bottom-up. After being generated from process equipment and PAT tools in the continuous process, field data is fed into the PCS to form a recipe structure in accordance with the ANSI/ISA-88 recipe model. In other words, manufacturing data is collected, organized, interpreted, and transformed into meaningful information starting from the PCS level. This recipe structure is mapped identically into the historian for storage. The low-level network in this information architecture is mainly based on communication over bus systems, such as from field device to PLC. Most high-level systems, however, communicate over Ethernet using "object linking and embedding" (OLE) for process control, which is also known as OPC. OPC is a software interface standard that enables communication between Windows programs and industrial hardware devices to provide reliable, high-performance data transfer.

Laboratory experiment

Unlike the control systems used in continuous manufacturing process plants, the laboratory platform is more lightweight, but various types of instruments generate analysis data in different formats. Level 3 of Figure 4 shows the electronic laboratory notebook (ELN) system and laboratory information management system (LIMS), which have the ability to support all kinds of analytical instruments. Once material properties are measured by instruments and collected by laboratory systems, these data sets are transferred into ELN or LIMS systems for further organization and interpretation. Meaningful information is generated in recipe form according to the ANSI/ISA-88 standard.

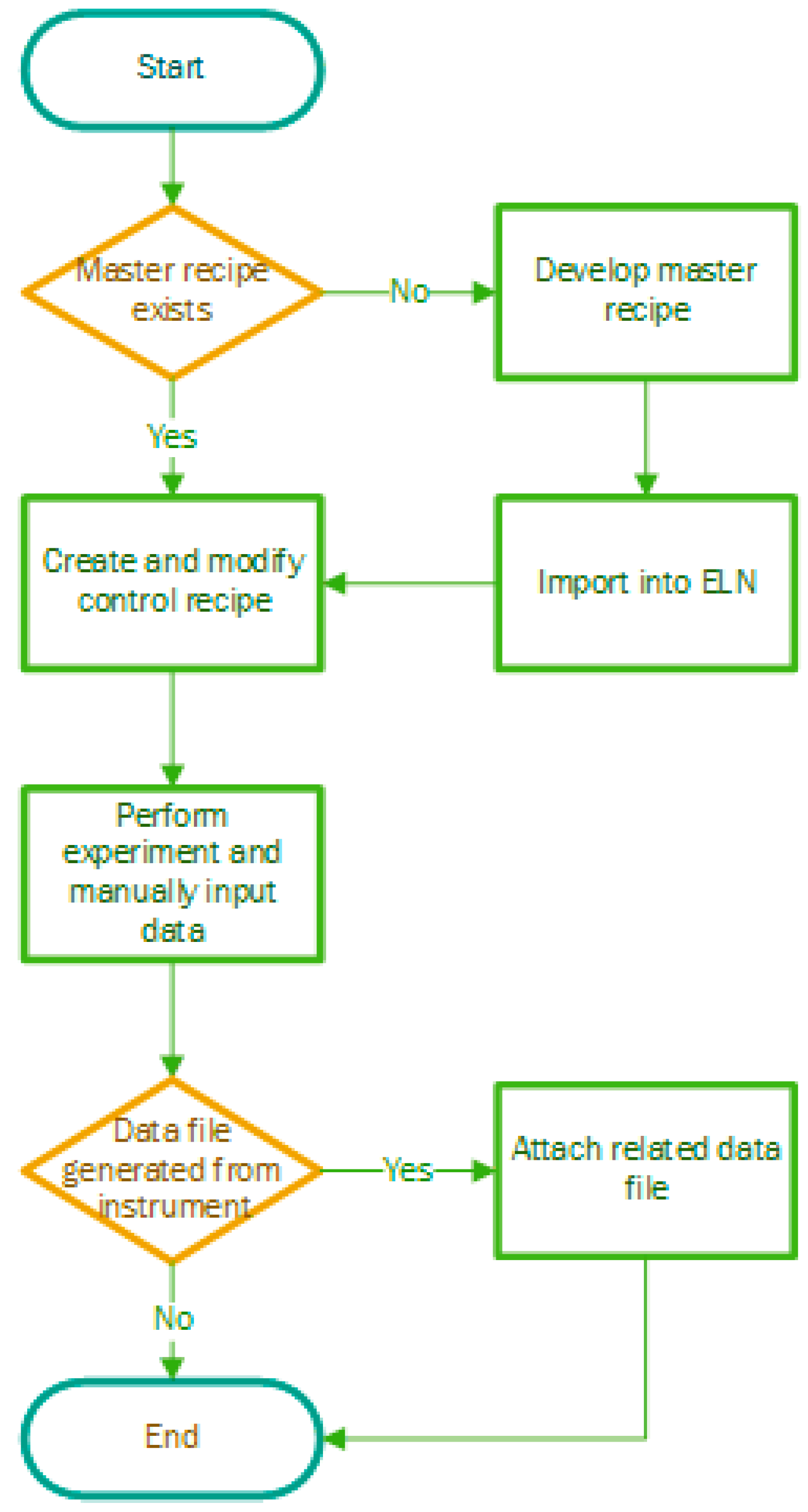

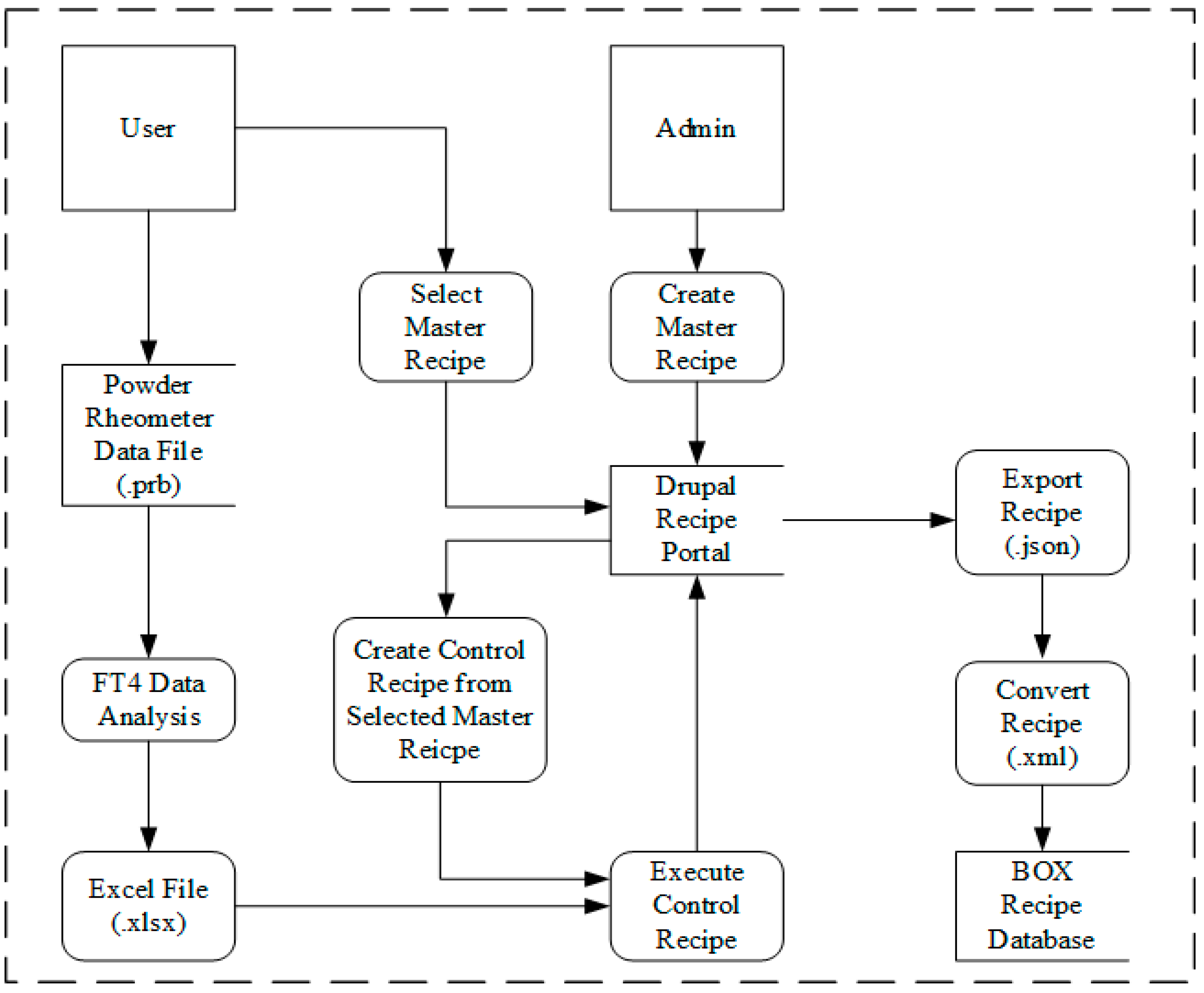

The basic workflow of using the ELN to support experiment documentation is illustrated in Figure 5. The first step is to select the master recipe developed for this specific test and create a control recipe. If no such master recipe exists, this needs to be reported to a laboratory manager with administrative access to create and import recipes. Users are still able to change the control recipe, adding and deleting steps or parameters, according to their particular experiment plan. After the execution of the control recipe begins, users perform experiments according to the steps in the recipe while the system records the test parameters at the same time. Eventually, all the data related to this single test, including operator, sample, instrument, and result, is documented in the recipe and transferred to cloud storage.
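The relationship between a master recipe and the control recipes derived from it can be sketched as a deep copy that the user is then free to modify. The dictionary layout, field names, step names, and the `create_control_recipe` helper are hypothetical; the sketch only shows that editing a control recipe leaves the master template untouched.

```python
import copy
from datetime import datetime


def create_control_recipe(master, username, now=None):
    """Derive an editable control recipe from a master recipe template."""
    now = now or datetime.now()
    control = copy.deepcopy(master)  # independent copy; master stays unchanged
    control["type"] = "control"
    control["name"] = f"{master['name']}_{username}_{now:%Y%m%d-%H%M%S}"
    control["operator"] = username
    control["results"] = {}
    return control


# Hypothetical master recipe for a single analytical technique.
master = {
    "type": "master",
    "name": "FT4_compressibility",
    "steps": ["Condition sample", "Apply normal stress", "Record volume change"],
}

control = create_control_recipe(master, "jdoe", datetime(2018, 5, 1, 9, 30, 0))

# The user adapts the control recipe to a particular experiment plan.
control["steps"].append("Repeat at higher stress")
control["results"]["note"] = "illustrative entry only"

print(control["name"])
print(len(master["steps"]), len(control["steps"]))
```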


Transferring data from lab computer to cloud system

Transfer of data from a computing cloud to a storage cloud can be non-trivial, since cross platform support is not guaranteed to be available on the cloud service of choice. There is the option of using the application programming interface (API) provided by the cloud service, but the heterogeneity in the APIs provided by different services makes this approach difficult. This issue is highlighted in our current case: the cloud platform of choice here is Box.com. The computing cloud, i.e., the Amazon Elastic Compute Cloud (EC2), runs a Linux distribution (Amazon Linux AMI) based on RHEL (Red Hat Enterprise Linux) and CentOS. Box.com, however, neither has support for, nor has any plans to include support for this platform. This puts the user in a situation where there is no direct method to easily and trivially push data to the cloud using a tool such as an app. Additionally, there is the difficulty in transitioning to a different platform should that need ever arise.

In order to address these issues, we have put in place a data transfer pipeline between the compute and storage clouds using davfs2[26], a specific implementation of WebDAV (web-based Distributed Authoring and Versioning)[27], an extension of the hypertext transfer protocol (HTTP) that allows a user (client) to remotely create and edit web content. This method makes it possible to set up a directory on the Linux EC2 compute cloud that serves as a window into the cloud storage location. An rsync[28] job may then be set up to sync to this directory and, by extension, to the cloud.
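The two stages of this pipeline, mounting the WebDAV location and syncing a local directory into the mount, can be sketched as command lines assembled in Python. The WebDAV URL, mount point, and directory names below are placeholders, and the rsync options shown (`-av`) are one reasonable choice rather than the options used in this work. The commands are only built here, not executed.

```python
def mount_command(webdav_url, mount_point):
    """Command that mounts a WebDAV share through davfs2 (usually needs root)."""
    return ["mount", "-t", "davfs", webdav_url, mount_point]


def rsync_command(source_dir, mount_point, subdir):
    """Command that syncs the contents of source_dir into the mounted share."""
    src = source_dir.rstrip("/") + "/"  # trailing slash: copy contents, not the dir
    dest = f"{mount_point.rstrip('/')}/{subdir.strip('/')}/"
    return ["rsync", "-av", src, dest]


# Placeholder values; substitute the real WebDAV endpoint and paths.
mount_cmd = mount_command("https://example.com/dav", "/mnt/cloud")
sync_cmd = rsync_command("/var/eln/export", "/mnt/cloud", "recipes")

print(" ".join(mount_cmd))
print(" ".join(sync_cmd))
```

Each list could be passed to `subprocess.run(cmd, check=True)` on the compute instance, for example from a scheduled job, once davfs2 is installed and credentials are configured.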

Case studies

Two different case studies have been performed to verify the proposed systematic framework in both a pilot plant and an analytical laboratory.

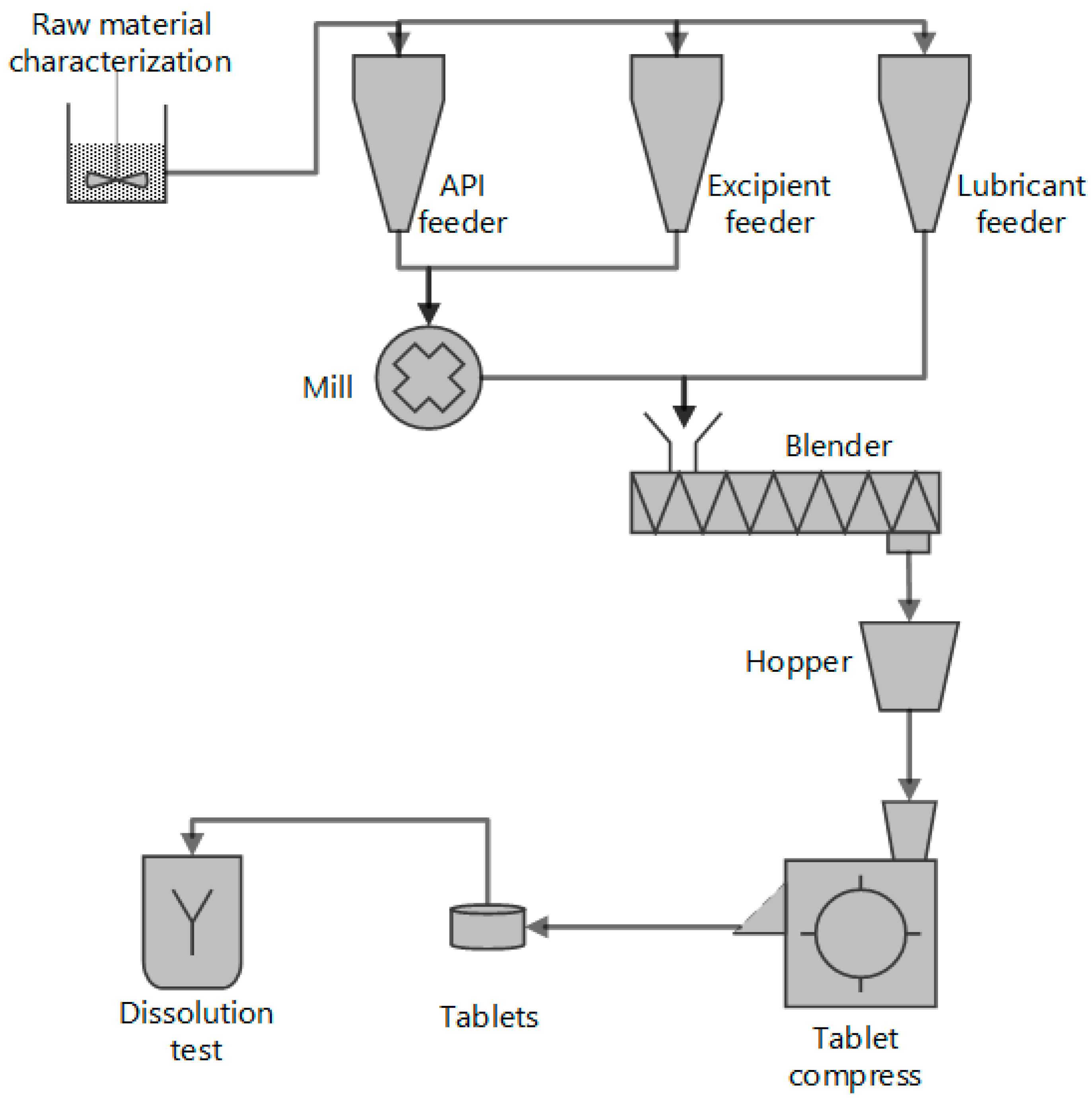

Direct compaction continuous tablet manufacturing process

A direct compaction continuous pharmaceutical tablet manufacturing process has been developed at the Engineering Research Center for Structured Organic Particulate Systems (C-SOPS), Rutgers University.[29] The schematic of the process is illustrated in Figure 6. Three gravimetric feeders were used to provide raw materials, including active pharmaceutical ingredient (API), lubricant, and excipient. The flow rate of powder was controlled by manipulating the rotational screw speed of the feeder. When necessary, a co-mill was used to delump the API and excipient, while lubricant was added directly into the blender to avoid over-lubrication. After the continuous blender, the homogeneous powder mixture was sent to tablet compaction. Some of the tablets produced would be sent for dissolution testing. The pilot plant was built three levels high so that gravity could assist material flow. The feeders (K-Tron) were located on the top level. The second level was used for delumping and blending, and the tablet compactor (FETTE) was located on the bottom floor.


FT4 powder characterization test

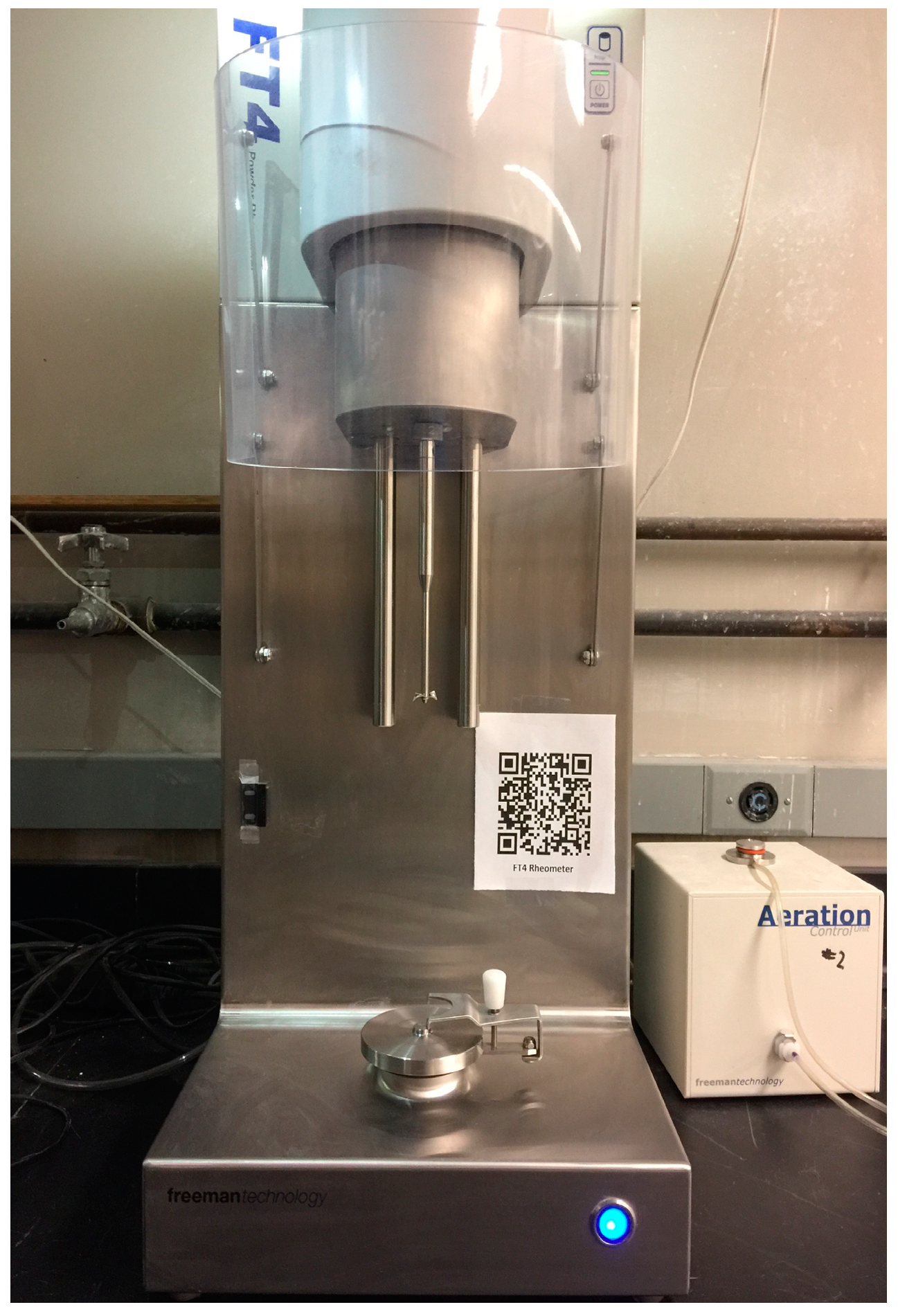

The input materials of continuous direct compaction tablet manufacturing processes are powders whose physical and mechanical properties vary. Since this variation may have an impact on the performance of the final dosage, it is important to develop effective measurement methods on the critical properties. The FT4 Powder Rheometer of Freeman Technology, developed over the last two decades, is an ideal solution.

The FT4 Powder Rheometer (Figure 7) is designed to characterize powders under different conditions in ways that resemble large-scale production environments. It provides a comprehensive series of methods that allow powder behavior to be characterized across a whole range of process conditions. The methods include rheological, torsional shear, compressibility, and permeability tests, which can be performed using small bulk samples such as 1, 10 or 25 mL.


Results and discussion

Data integration in continuous tablet manufacturing

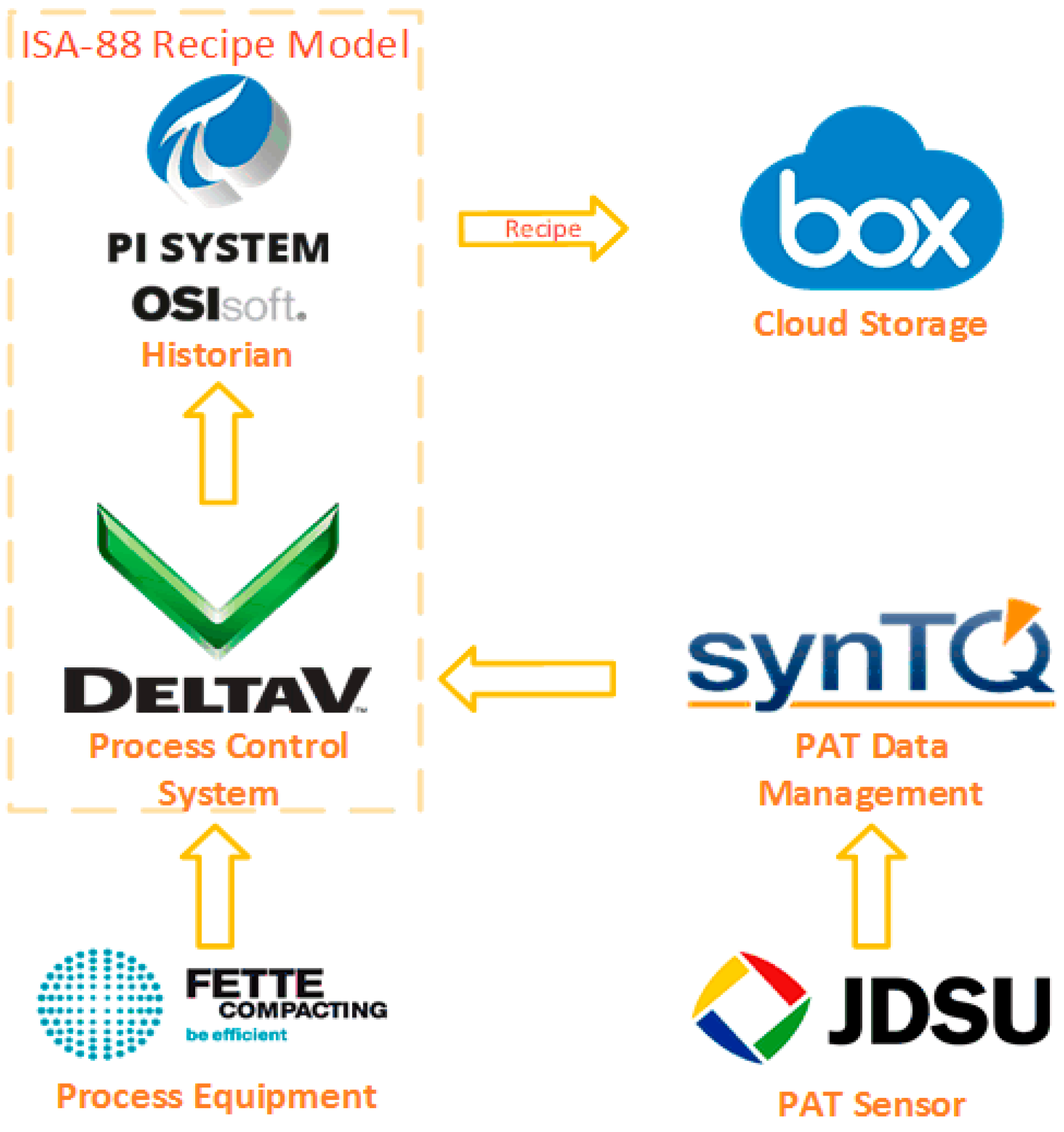

Figure 8 presents the data flow within the continuous direct compaction tablet manufacturing process. Data generated from both process equipment and PAT tools was sent into the DeltaV process control system and organized according to the ANSI/ISA-88 recipe model. PI System, playing the role of historian, was able to receive data from PCS and build up the recipe hierarchical structure using PI Event Frames. In the final step, cloud storage was chosen for permanent data storage and the portal of ERP.


DeltaV recipe model

The ANSI/ISA-88 batch manufacturing standard is supported by the DeltaV distributed control system. Recipes are created and maintained with DeltaV’s Recipe Studio application. Recipe Studio supports two types of recipes: master recipes and control recipes. A master recipe is created and modified by process engineers, while control recipes can be modified and downloaded by operators. All recipes have four main parts: a header, a procedure (sequence of actions), the parameters, and the equipment. They are further elaborated on in Table 2.


Instead of the ANSI/ISA-88 process model, the procedural control model can be implemented in DeltaV Recipe Studio. This model, shown in Figure 9, focuses on describing the process as it relates to physical equipment. Procedures, unit procedures, and operations of the continuous direct compaction process are constructed graphically using IEC 61131-3-compliant sequential function charts (SFCs).[30]


While the data generated from field devices is collected by DeltaV, PAT spectrum data is captured and organized by the PAT data management platform, synTQ. synTQ has been designed to provide harmonization and integration of all plant-wide PAT data, including spectral data, configuration data, models, raw and metadata, and orchestrations (PAT methods). It is worth noting that synTQ is fully compatible with the Emerson process management system. This feature means data collected and managed in synTQ can be transferred into the DeltaV system for process control and data integration.

OSI PI recipe structure

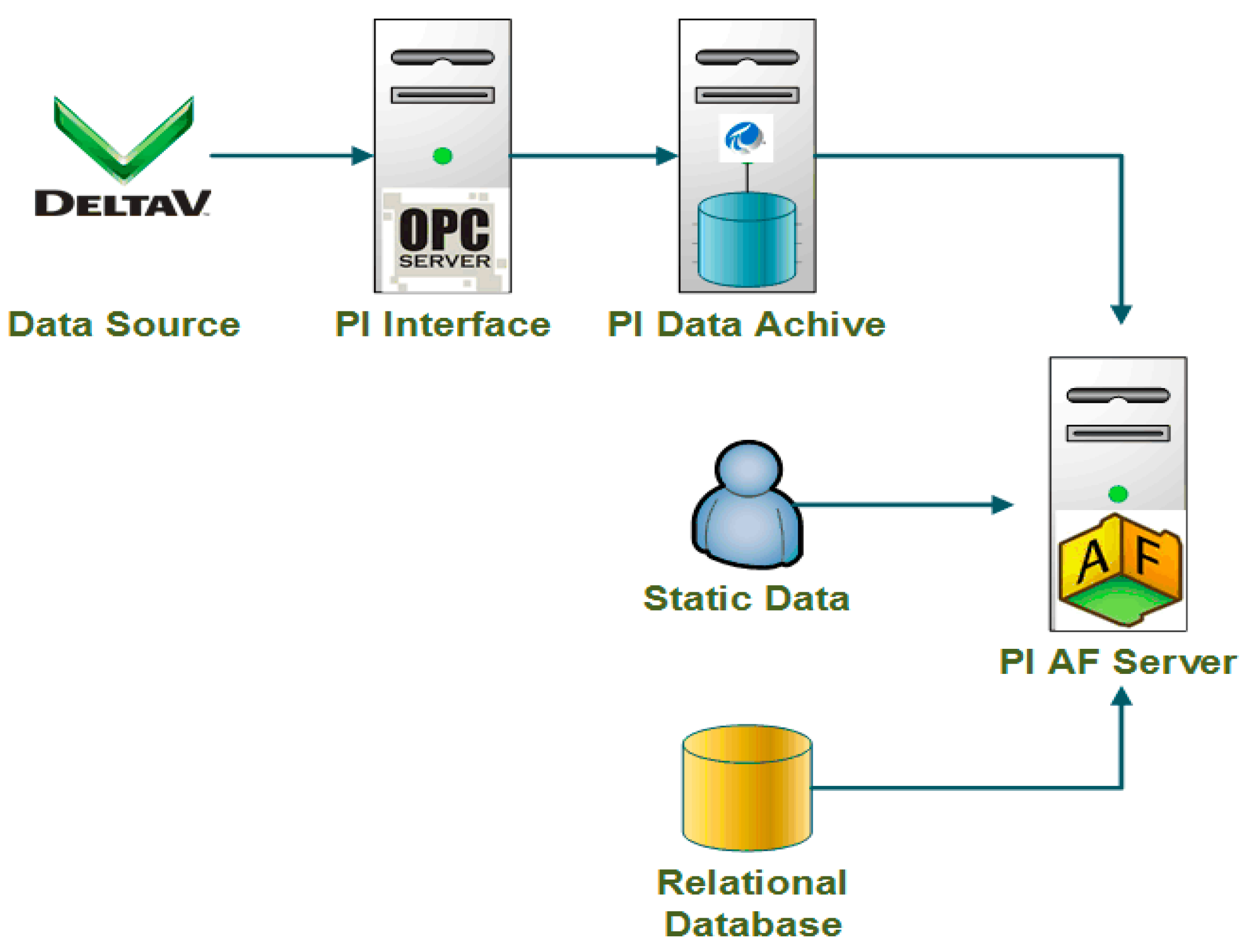

PI System is an enterprise infrastructure for management of real-time data and events, provided by OSIsoft. It is a suite of software products that are used for data collection, historicizing, finding, analyzing, delivering, etc. Figure 10 illustrates the structure and data flow of PI System, in which PI Data Archive and PI Asset Framework (AF) are the key parts.


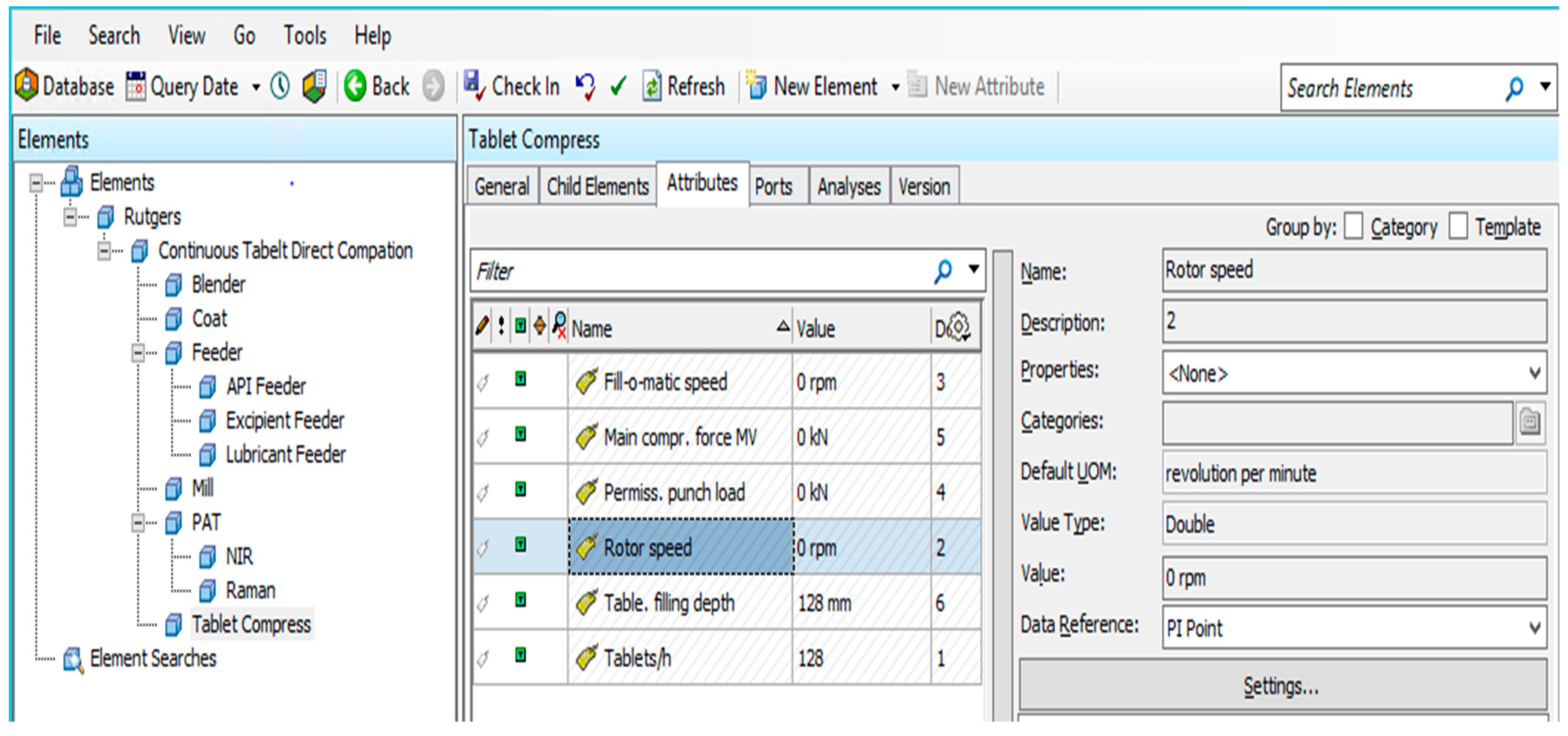

After the PI interface receives data from a data source (DeltaV system in this case) via the OPC server, the PI Data Archive gets the data and routes it throughout the PI System, providing a common set of real-time data. PI Asset Framework is a single repository for objects, equipment, hierarchies, and models. It is designed to integrate, contextualize, and reference data from multiple sources, including the PI Data Archive and non-PI sources such as human input and external relational databases. Together, these metadata and time series data provide a detailed description of equipment or assets. Figure 11 shows how equipment from the continuous tablet direct compaction process is mapped in PI System. The attributes of elements representing equipment performance parameters are configured to get value from the corresponding PI Data Archive points.


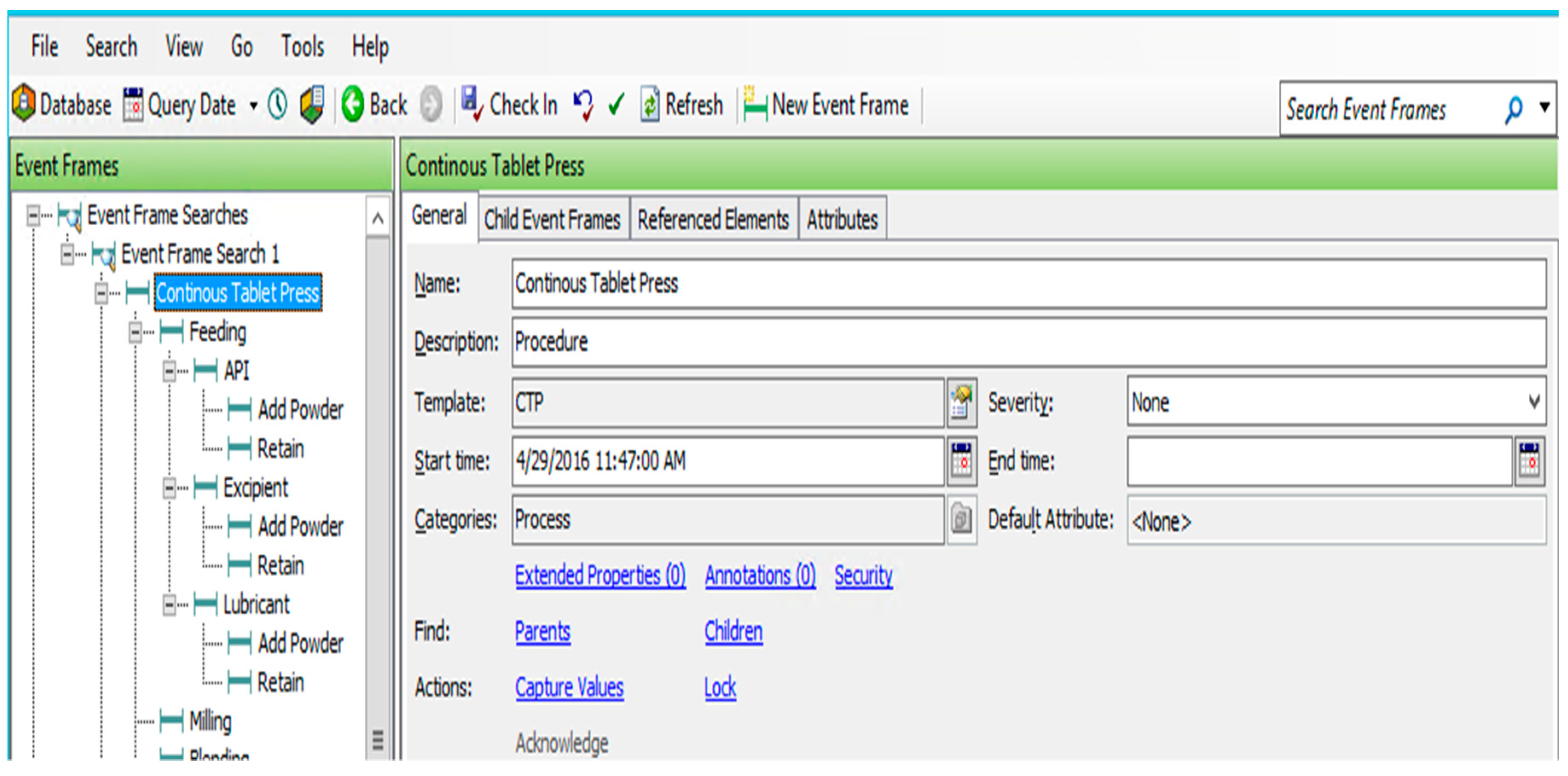

Moreover, PI Asset Framework supports the most significant function of implementing the ANSI/ISA-88 batch control standard, PI Event Frame. Events are critical process time periods that represent something happening and impacting the manufacturing process or operations. The PI Event Frame is intended to capture critical event contexts which could be names, start/end time, and related information that are useful for analysis. Complex hierarchical events, shown in Figure 12, are designed in order to map the ANSI/ISA-88 process model of the continuous tablet press process.
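The way nested events give context to time series data can be sketched with a small tree of time-bounded frames. This is a conceptual illustration only and does not use or represent the PI System API; the `EventFrame` class here, the frame names, and the timestamps are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class EventFrame:
    name: str
    start: datetime
    end: Optional[datetime] = None  # None while the event is still open
    children: List["EventFrame"] = field(default_factory=list)

    def contains(self, t):
        """True if t falls inside this frame (start inclusive, end exclusive)."""
        return self.start <= t and (self.end is None or t < self.end)

    def context_at(self, t):
        """Names of the nested frames active at time t, outermost first."""
        if not self.contains(t):
            return []
        for child in self.children:
            path = child.context_at(t)
            if path:
                return [self.name] + path
        return [self.name]


def at(hour, minute):
    # Helper for readable timestamps on one illustrative day.
    return datetime(2018, 5, 1, hour, minute)


run = EventFrame("Tablet press run", at(9, 0), at(12, 0), children=[
    EventFrame("Startup", at(9, 0), at(9, 30)),
    EventFrame("Steady state", at(9, 30), at(11, 30), children=[
        EventFrame("Compression", at(9, 30), at(11, 30)),
    ]),
])

print(run.context_at(at(10, 15)))
print(run.context_at(at(11, 45)))
```

Looking up the active frames for a timestamp is what lets a raw sensor value be reported together with the procedure, stage, and operation during which it was recorded.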


Recipe-based ELN system

A novel ELN system was developed and implemented for data management in raw material characterization laboratories to replace paper laboratory notebooks (PLN). Scientists could create, import, and modify recipes within the ELN following the ANSI/ISA-88 standards, as well as input data and upload related data files. This system had sufficient capability of documenting experiment processes and gathering data from various analytical platforms. It could also provide material property information that complies with the recipe model.

Information management

This recipe-based ELN system was developed as a custom module for Drupal, an open-source content management system (CMS) written in PHP (Hypertext Preprocessor). The standard release of Drupal, also referred to as Drupal core, contains the essential features of a CMS, including taxonomy, user account registration, menu management, and system administration. Besides this, Drupal provides many other features, such as high scalability, flexible content architecture, and mobile device support. A widespread distribution of Drupal, Open Atrium, was selected as the platform for laboratory data handling because of its advanced knowledge management and security features.

In this case, Drupal was installed and maintained on a cloud computing service, Amazon Web Services (AWS). AWS is an on-demand computing platform that removes the need to set up physical workstations in computer rooms. AWS provides large computing capacity more cheaply and quickly than physical servers. Drupal was run on a Linux operating system on one of AWS' services, Amazon Elastic Compute Cloud (EC2). The MySQL database to which Drupal is connected is hosted on the Amazon Relational Database Service (RDS). The adoption of cloud computing technology in laboratory data management provides many benefits, including reduced computing resource expenses and extensible infrastructure capacity.

After installation and configuration, this Drupal system could be accessed from a web browser. Within Open Atrium, spaces can be created as the highest level of content structure. While the public space is open to all system users, the content of a private space can only be accessed by users who have been added to that space. After the ELN module was installed in Drupal, a notebook could be created as a section housed in every space. Via the section visibility widget, specific permissions for certain member groups or people could be set for the ELN section. Although the CMS was accessible globally via the internet, the confidentiality of content in the ELN was still well protected.

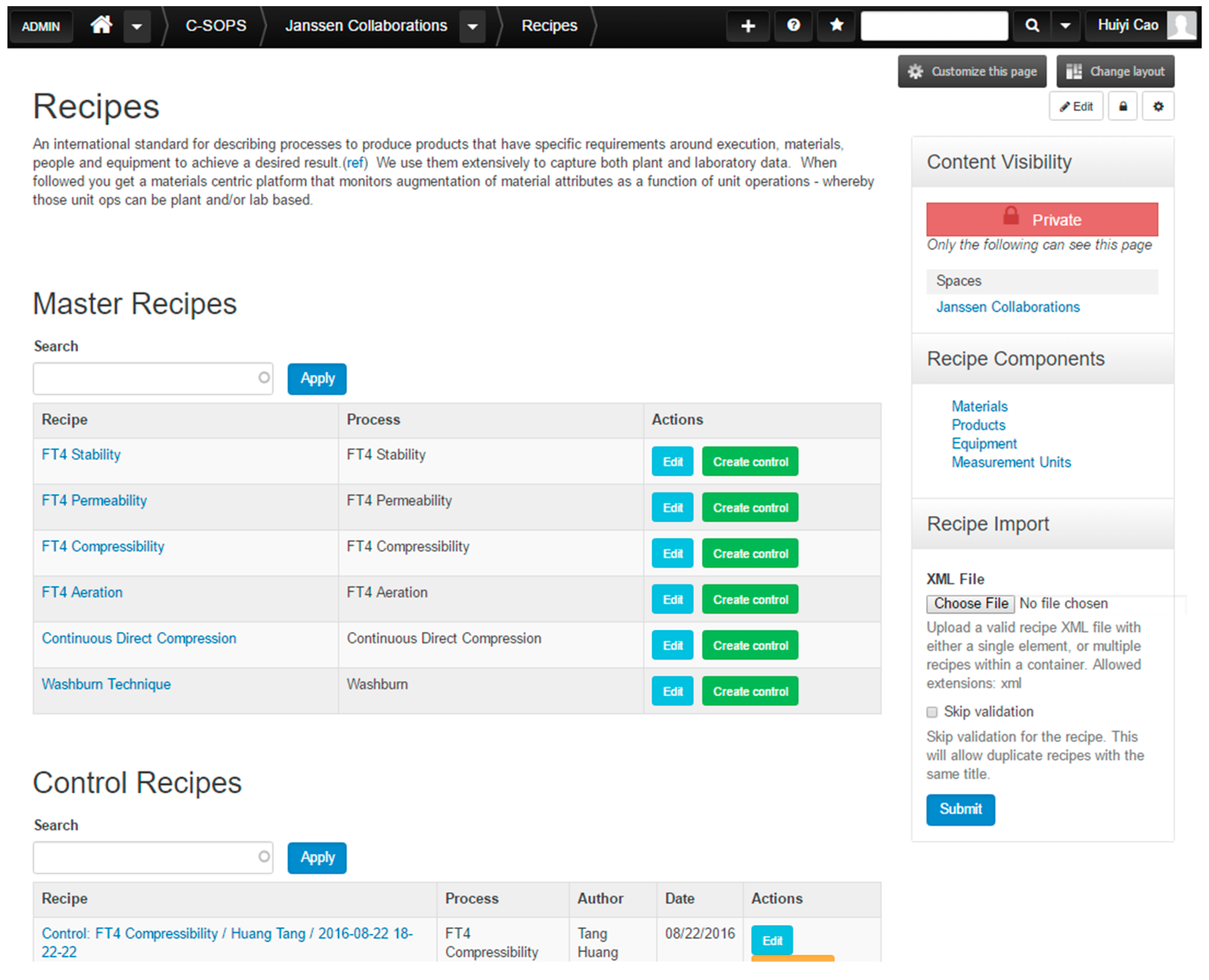

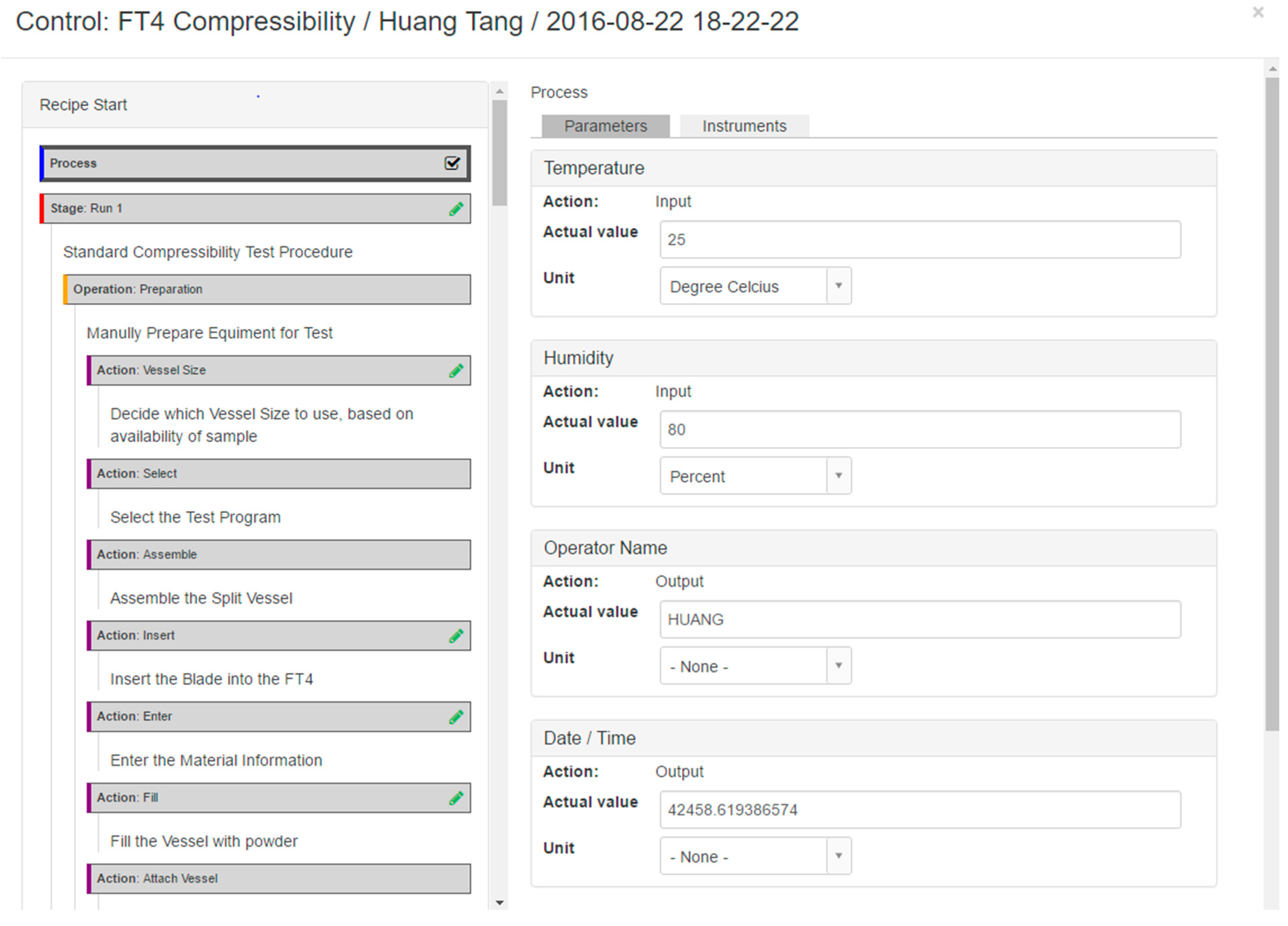

The web interface of the ELN system (Figure 13) contains two main parts: a master recipe list and a control recipe list. As mentioned in previous sections, master recipes are the templates for recipes used to perform experiments on individual samples, and they are dependent on analytical processes. Using the ELN tool, master recipes could be created within the system or imported from external recipe documents in XML format, seen in the “Recipe Import” area. After clicking the green “Create Control” button, a control recipe was generated as one copy of the master recipe, with username and time in the name. It served as documentation of experiment notes and collected experiment processes and data.
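The relationship between a master recipe and its control recipes can be sketched in a few lines of Python. This is not the Drupal module's code; the dictionary layout, the `create_control_recipe` function, the naming pattern, and the example user are all assumptions chosen to illustrate the copy-and-rename behavior described above.

```python
import copy
from datetime import datetime


def create_control_recipe(master: dict, username: str, when: datetime) -> dict:
    """Return an independent copy of a master recipe, named with user and time."""
    control = copy.deepcopy(master)  # edits to the copy must not alter the template
    control["type"] = "control"
    control["master"] = master["name"]
    control["name"] = f"{master['name']} - {username} - {when:%Y-%m-%d %H:%M}"
    return control


# Hypothetical master recipe with a single step and no recorded values.
master = {
    "name": "FT4 compressibility test",
    "type": "master",
    "steps": [{"name": "Load sample", "parameters": {}}],
}

control = create_control_recipe(master, "jdoe", datetime(2018, 3, 1, 14, 30))

# Recording a value in the control recipe leaves the master template untouched.
control["steps"][0]["parameters"]["Sample mass"] = "example value"
```

The deep copy is the important design choice in this sketch: each experiment gets its own record, while the master recipe remains a reusable template.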

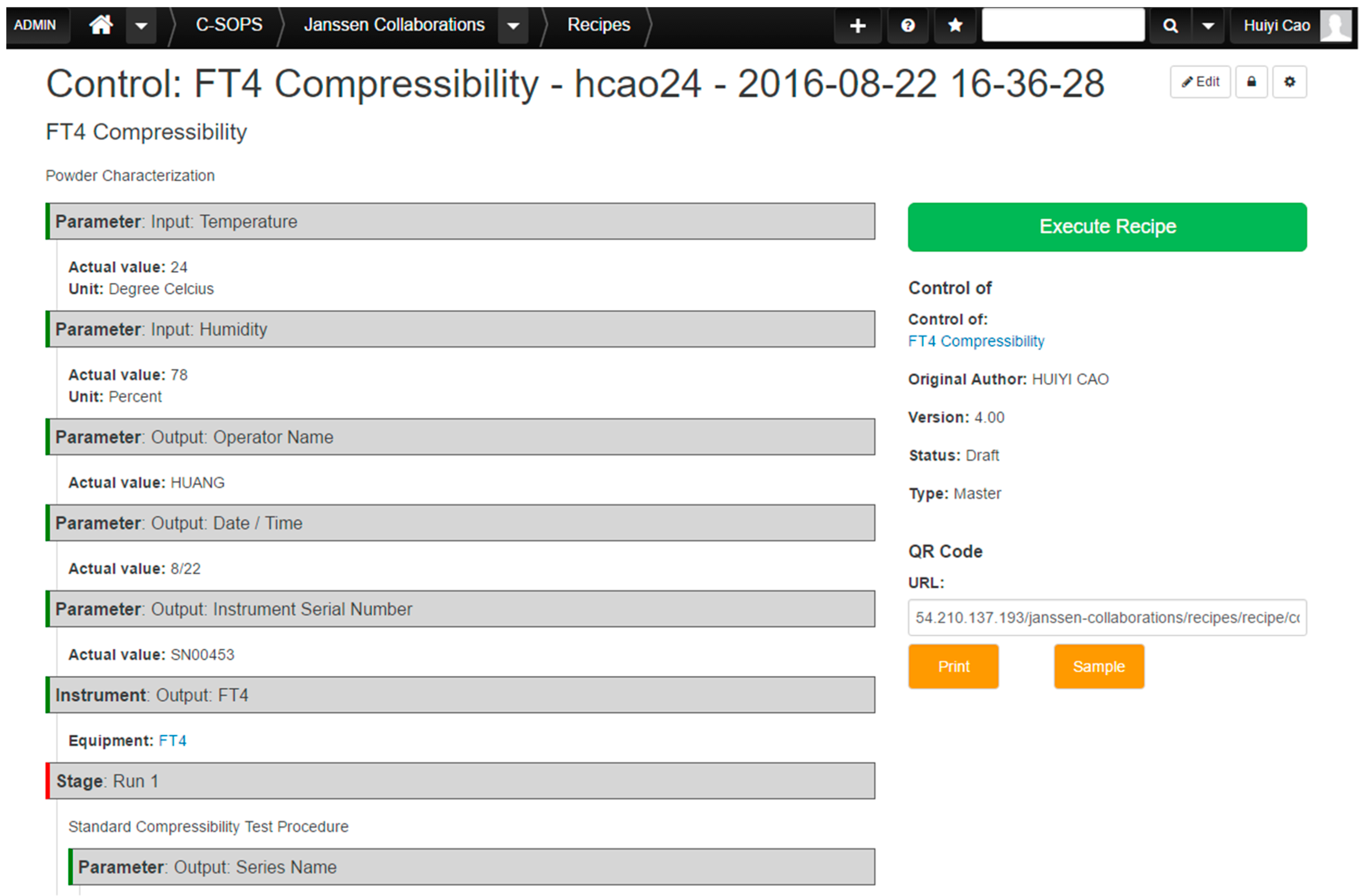

Figure 14 shows an example of a control recipe for an FT4 compressibility test, via the ELN.


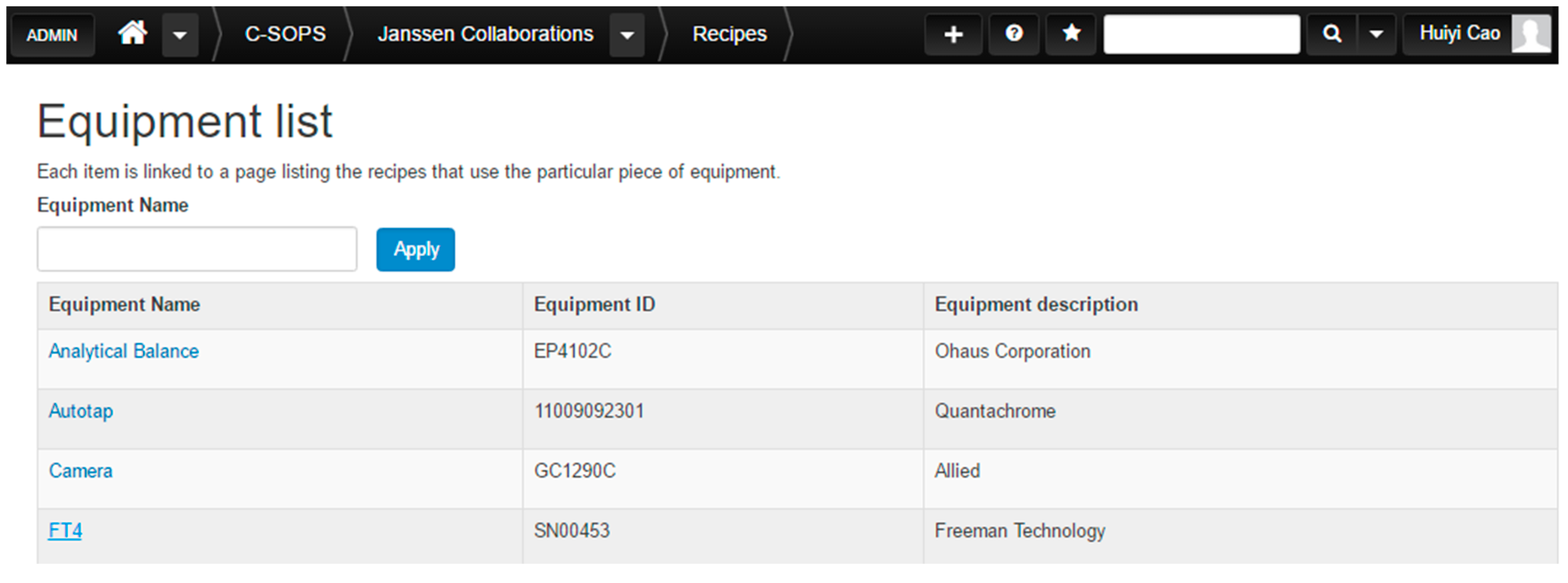

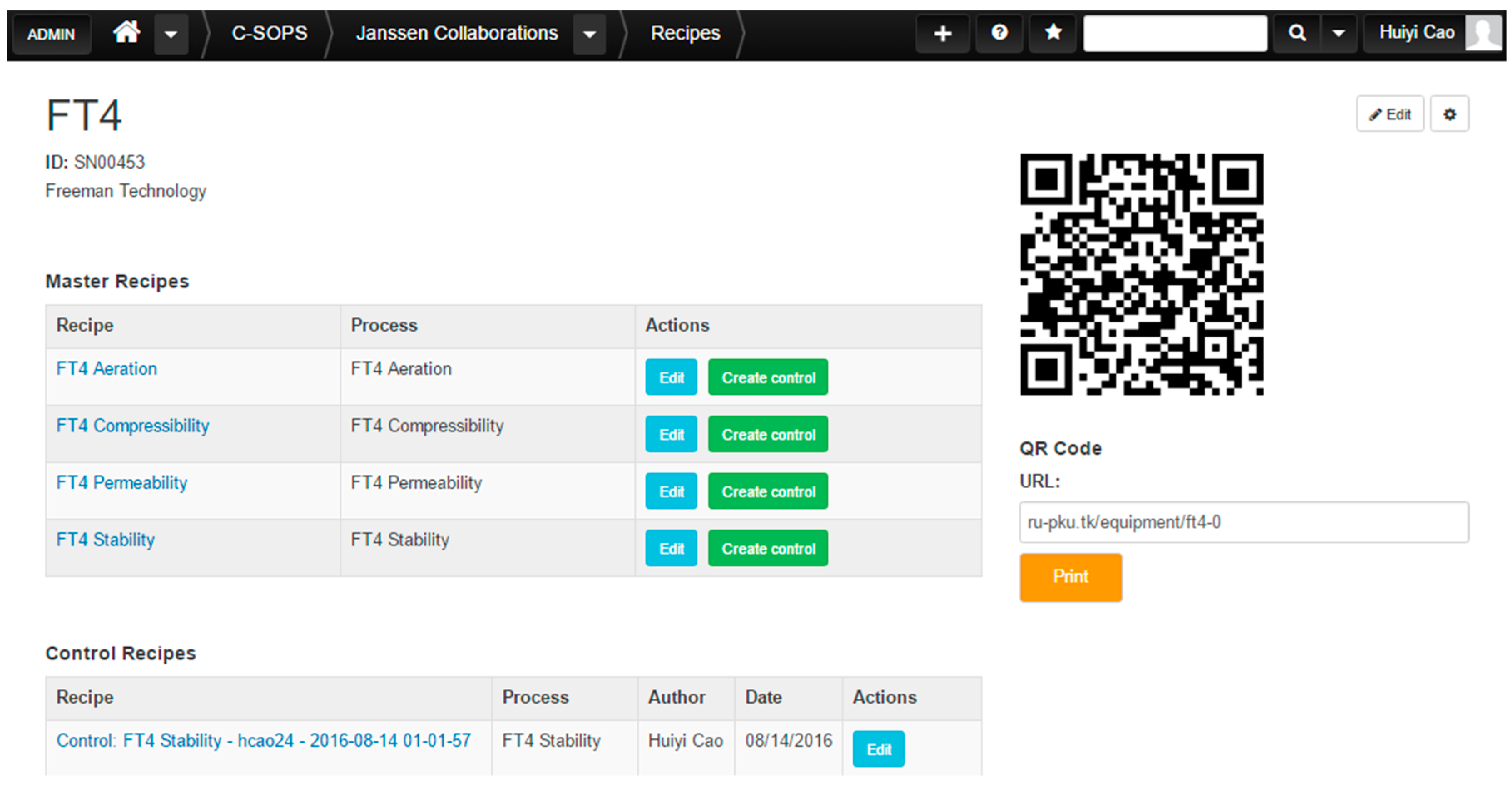

The “Recipe Components” placed on the right sidebar of the ELN front page contain four relevant pieces of information that may be included in the recipe, and they are further introduced in Table 3. The list for each recipe component includes all of its items, as well as their related information and attributes. Each item is linked to a page listing the recipes that use that item. As an example, Figure 15 and Figure 16 show the equipment list and the information page of the FT4 Powder Rheometer. The QR code displayed on the top right of the page is another feature of the ELN. Each piece of equipment or material item existing in the ELN system has a unique QR code, which encodes the corresponding Uniform Resource Locator (URL). By scanning the QR code attached to the material or equipment, its information and related recipes could be accessed on the website.


As shown in Figure 17, the left side of this execution dialog displays the recipe steps from process to process action. Some of the recipe steps are marked with a green pencil icon, which means there are step parameters, step ingredients, or step equipment linked to those steps. Related step information is presented on the right side of the prompt window. Step parameters are of two types, depending on the action that needs to be taken: “input” and “output.” “Input” means the step parameter will be manually typed into the ELN by a scientist. “Output” indicates that the parameter is recorded in the output data file from an instrument system. Once the step parameters are saved, the green pencil icon turns into a check mark. The last action in the workflow is to upload the data file generated by an analytical device system, if there is one.
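The two parameter types and the icon behavior can be summarized in a short Python sketch. The class and function names are hypothetical, and the rule that a step shows a check mark once every linked parameter has a value is an assumption about how "saved" might be determined, not a description of the ELN's actual logic.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ParameterKind(Enum):
    INPUT = "input"    # typed into the ELN by a scientist
    OUTPUT = "output"  # read from the instrument's output data file


@dataclass
class StepParameter:
    name: str
    kind: ParameterKind
    value: Optional[str] = None  # None until a value has been saved


def step_status(parameters: List[StepParameter]) -> str:
    """Return "none" (no linked parameters), "pencil" (pending), or "check" (saved)."""
    if not parameters:
        return "none"
    if all(p.value is not None for p in parameters):
        return "check"
    return "pencil"


# Hypothetical step with one manually entered and one instrument-recorded parameter.
step = [
    StepParameter("Sample mass", ParameterKind.INPUT),
    StepParameter("Compressibility", ParameterKind.OUTPUT),
]
status_before = step_status(step)

for parameter in step:
    parameter.value = "example value"
status_after = step_status(step)
```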


Other features

QR code printing

The ELN system can also print QR codes for recipe components directly via a barcode printer. Within this system, a unique QR code always exists for each process material and piece of equipment, and it is shown in a QR code sidebar on the material and equipment information web pages. After clicking the “Print” button, the QR code is printed on a sticker by the barcode printer connected to the computer. This feature is enabled by the custom Drupal module “zprint,” which is designed to send the command to print the current web URL to the printer via the Google Cloud Print service.

Mobile device support

Because the ELN has a web interface, the recipe portal can be accessed via all kinds of mobile devices, not just desktops or laptops. Scientists can open and execute recipes through the internet browsing apps on their smartphones or tablets. Moreover, mobile devices are more convenient for scanning QR codes to acquire information on materials, equipment, and samples.

Data flow

There are two primary data sources for the ELN system: human input and data files output by the instrument. Both are loaded into the control recipe of the experiment as the actual values of step parameters. As mentioned before, data can be input manually via the execution dialog, shown in Figure 18. The extraction of data from an instrument-generated data file, however, is performed by the ELN's Excel Parsing function. Because most analytical device systems can export data into Excel-supported documents (.xlsx and .csv, for example), the ELN's Excel Parsing capability was developed based on the contributed Drupal module PHPExcel. After the data file is attached to the recipe, the PHPExcel module converts the data into an array. When an array item matches one of the step parameters, the value of that item is assigned to the step parameter. As such, the ELN supports various analytical instruments, as well as manual data input.
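The matching step can be sketched as follows. This example uses Python's standard `csv` module rather than PHPExcel, and it assumes a simple export layout in which each row holds a parameter label followed by its value; the function name, the layout, and the sample rows are all hypothetical.

```python
import csv
import io
from typing import Dict, Optional, TextIO


def fill_output_parameters(
    data_file: TextIO, parameters: Dict[str, Optional[str]]
) -> Dict[str, Optional[str]]:
    """Copy values from a label,value export into the matching step parameters."""
    updated = dict(parameters)  # leave the caller's dictionary unchanged
    for row in csv.reader(data_file):
        if len(row) < 2:
            continue  # skip blank or malformed rows
        label, value = row[0].strip(), row[1].strip()
        if label in updated:  # only labels that match a step parameter are used
            updated[label] = value
    return updated


# Made-up export content standing in for an instrument data file.
export = io.StringIO("Compressibility,12.3\nOperator,jdoe\n")
step_parameters = {"Compressibility": None, "Conditioned bulk density": None}

filled = fill_output_parameters(export, step_parameters)
```

Rows whose labels do not match any step parameter are ignored, and parameters that do not appear in the file keep their empty value, so a partially matching file never overwrites unrelated data.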


Because it contains the analytical process, test results, and instrument information, each control recipe is a complete record of an experiment. The ELN system can export control recipes into XML documents for archiving or data transformation. Drupal has built-in functionality for dumping content into JavaScript Object Notation (JSON) format, an alternative to XML for storing and exchanging data. Thus, the recipe is first transformed into a JSON document. To perform a fast and strict conversion between JSON and XML formats, a small application was developed in the Haskell programming language. After these two steps, an XML file of a control recipe is generated from the ELN system, which is suitable for archiving and sharing.
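The second step of this export can be illustrated with a minimal sketch. It is written in Python rather than Haskell and is not the authors' application; the mapping rules (object keys become element names, list entries become `item` elements) and the `recipe` root tag are assumptions, and the sketch only works when the JSON keys are valid XML element names.

```python
import json
import xml.etree.ElementTree as ET


def to_element(tag: str, value) -> ET.Element:
    """Recursively convert a parsed JSON value into an XML element."""
    element = ET.Element(tag)
    if isinstance(value, dict):
        for key, child in value.items():
            element.append(to_element(key, child))
    elif isinstance(value, list):
        for item in value:
            element.append(to_element("item", item))  # assumed tag for list entries
    elif value is not None:
        element.text = str(value)
    return element


def json_to_xml(document: str, root_tag: str = "recipe") -> str:
    """Parse a JSON document and serialize it as an XML string."""
    return ET.tostring(to_element(root_tag, json.loads(document)), encoding="unicode")


# Hypothetical, heavily simplified recipe content.
recipe_json = '{"name": "FT4 compressibility test", "steps": [{"name": "Load sample"}]}'
xml_text = json_to_xml(recipe_json)
```

A production converter would also need to validate the result against the recipe's XML schema, which this sketch does not attempt.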

Figure 8 (shown previously) shows data flow within the ELN system, using the FT4 Powder Rheometer characterization as an example. In this case, the final step involves uploading a recipe document into a BOX database, which is intended for data warehousing. BOX is a cloud computing business that provides content management and file sharing services. Keeping data in cloud storage is a secure and economical method of data archiving. It is also the cornerstone of data analysis and visualization, which are likewise performed in the cloud.

Conclusions and future directions

A systematic framework for data management for continuous pharmaceutical manufacturing has been developed. The ANSI/ISA-88 batch control standard was adapted to continuous manufacturing in order to provide a design philosophy, as well as reference models. The implementation of such a recipe model in the continuous process has been summarized and assessed. The recipe data warehouse strategy is used for the purpose of data integration in both the manufacturing plant and material characterization laboratory. Proper communication among the PLC, PAT, PCS, and historian enables data collection and transformation across the continuous manufacturing plant. A recipe-based ELN system is in charge of capturing data from various analytical platforms. As such, data from different process levels and distributed locations can be integrated and contextualized with meaningful information. Such knowledge plays an important role in supporting process control and decision making.[31]

Future directions include validating the possibility of using this data management strategy to support the design of experiments (DOE). In terms of the laboratory platform, instrument configuration and calibration information could also be included in experiment recipes, which would be helpful for error analysis. This data management and integration framework is important because it can enable industrial practitioners to better monitor and control the process, identify risk, and mitigate process failures. This can allow products to be produced with reduced time-to-market and increased quality, potentially leading to better and cheaper medicines.

Acknowledgements

Author contributions

A.F., S.J., M.I., D.H. and R.R. contributed theory and methods. B.H., C.K. and R.S. provided equipment and materials. H.C. and S.M. designed and performed the case studies. H.C., S.M. and R.S. wrote the paper.

Funding

This work is supported by the National Science Foundation Engineering Research Center on Structured Organic Particulate Systems, through Grant NSF-ECC 0540855, and the Johnson and Johnson Company.

Conflicts of interest

The authors declare no conflicts of interest.

References

- ↑ Lee, S.L.; O'Connor, T.F.; Yang, X. et al. (2015). "Modernizing Pharmaceutical Manufacturing: from Batch to Continuous Production". Journal of Pharmaceutical Innovation 10 (3): 191–99. doi:10.1007/s12247-015-9215-8.

- ↑ "Guidance for Industry PAT—A Framework for Innovative Pharmaceutical Development, Manufacturing, and Quality Assurance" (PDF). U.S. Food and Drug Administration. September 2004. https://www.fda.gov/downloads/Drugs/GuidanceComplianceRegulatoryInformation/Guidances/ucm070305.pdf. Retrieved 15 May 2018.

- ↑ Venkatasubramanian, V.; Zhao, C.; Joglekar, G. et al. (2006). "Ontological informatics infrastructure for pharmaceutical product development and manufacturing". Computers & Chemical Engineering 30 (10–12): 1482–96. doi:10.1016/j.compchemeng.2006.05.036.

- ↑ 4.0 4.1 "ANSI/ISA-88.00.01-2010 Batch Control Part 1: Models and Terminology". International Society of Automation. 2010. https://www.isa.org/store/ansi/isa-880001-2010-batch-control-part-1-models-and-terminology/116649.

- ↑ "ISA-88.00.02-2001 Batch Control Part 2: Data Structures and Guidelines for Languages". International Society of Automation. 2001. https://www.isa.org/store/isa-880002-2001-batch-control-part-2-data-structures-and-guidelines-for-languages/116687.

- ↑ "ANSI/ISA-88.00.03-2003 Batch Control Part 3: General and Site Recipe Models and Representation". International Society of Automation. 2003. https://www.isa.org/store/ansi/isa-880003-2003-batch-control-part-3-general-and-site-recipe-models-and-representation-downloadable/118279.

- ↑ Dorresteijn, R.C.; Wieten, G.; van Santen, P.T. et al. (1997). "Current good manufacturing practice in plant automation of biological production processes". Cytotechnology 23 (1–3): 19–28. doi:10.1023/A:1007923820231. PMC PMC3449867. PMID 22358517. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3449867.

- ↑ Verwater-Lukszo, Z. (1998). "A practical approach to recipe improvement and optimization in the batch processing industry". Computers in Industry 36 (3): 279–300. doi:10.1016/S0166-3615(98)00078-5.

- ↑ Kimball, R.; Ross, M. (2013). The Data Warehouse Toolkit: The Definitive Guide to Dimensional Modeling. John Wiley & Sons, Inc. pp. 600. ISBN 9781118530801.

- ↑ Fermier, A.; McKenzie, P.; Murphy, T. et al. (2012). "Bringing New Products to Market Faster". Pharmaceutical Engineering 32 (5): 1–8. https://www.ispe.org/pharmaceutical-engineering-magazine/2012-sept-oct.

- ↑ Ierapetritou, M.; Muzzio, F.; Reklaitis, G. (2016). "Perspectives on the continuous manufacturing of powder‐based pharmaceutical processes". AIChE Journal 62 (6): 1846–1862. doi:10.1002/aic.15210.

- ↑ Vasilenko, A.; Glasser, B.J.; Muzzio, F.J. (2011). "Shear and flow behavior of pharmaceutical blends — Method comparison study". Powder Technology 208 (3): 628–636. doi:10.1016/j.powtec.2010.12.031.

- ↑ Ramachandran, R.; Ansari, M.A.; Chaudhury, A. et al. (2012). "A quantitative assessment of the influence of primary particle size polydispersity on granule inhomogeneity". Chemical Engineering Science 71: 104–110. doi:10.1016/j.ces.2011.11.045.

- ↑ Llusa, M.; Levin, M.; Snee, R.D. et al. (2010). "Measuring the hydrophobicity of lubricated blends of pharmaceutical excipients". Powder Technology 198 (1): 101–107. doi:10.1016/j.powtec.2009.10.021.

- ↑ Armbrust, M.; Fox, A.; Griffith, R. et al. (2010). "A view of cloud computing". Communications of the ACM 53 (4): 50–58. doi:10.1145/1721654.1721672.

- ↑ Subramanian, B. (2012). "The disruptive influence of cloud computing and its implications for adoption in the pharmaceutical and life sciences industry". Journal of Medical Marketing: Device, Diagnostic and Pharmaceutical Marketing 12 (3): 192–203. doi:10.1177/1745790412450171.

- ↑ Gruber, T.R. (1993). "A translation approach to portable ontology specifications". Knowledge Acquisition 5 (2): 199–220. doi:10.1006/knac.1993.1008.

- ↑ Swartout, W.R.; Neches, R.; Patil, R. (1993). "Knowledge sharing: Prospects and challenges". Proceedings of the International Conference on Building and Sharing of Very Large-Scale Knowledge Bases '93 1993.

- ↑ Bray, T.; Paoli, J.; Sperberg-McQueen, C.M. et al., ed. (26 November 2008). "Extensible Markup Language (XML) 1.0 (Fifth Edition)". World Wide Web Consortium. https://www.w3.org/TR/2008/REC-xml-20081126/. Retrieved 01 March 2018.

- ↑ Gao, S.; Sperberg-McQueen, C.M.; Thompson, H.S., ed. (5 April 2012). "W3C XML Schema Definition Language (XSD) 1.1 Part 1: Structures". World Wide Web Consortium. https://www.w3.org/TR/xmlschema11-1/. Retrieved 01 March 2018.

- ↑ de Minicis, M.; Giordano, F.; Poli, F. et al. (2014). "Recipe Development Process Re-Design with ANSI/ISA-88 Batch Control Standard in the Pharmaceutical Industry". International Journal of Engineering Business Management 6. doi:10.5772/59025.

- ↑ Muñoz, E.; Capón-García, E.; Espuña, A. et al. (2012). "Ontological framework for enterprise-wide integrated decision-making at operational level". Computers & Chemical Engineering 42: 217–234. doi:10.1016/j.compchemeng.2012.02.001.

- ↑ "ANSI/ISA-95.00.01-2010 (IEC 62264-1 Mod) Enterprise-Control System Integration - Part 1: Models and Terminology". International Society of Automation. 2010. https://www.isa.org/store/ansi/isa-950001-2010-iec-62264-1-mod-enterprise-control-system-integration-part-1-models-and-terminology/116636.

- ↑ Hwang, Y.; Grant, D. (2014). "An empirical study of enterprise resource planning integration: Global and local perspectives". Information Development 32 (3): 260–270. doi:10.1177/0266666914539525.

- ↑ Alphonsus, E.R.; Abdullah, M.O. (2016). "A review on the applications of programmable logic controllers (PLCs)". Renewable and Sustainable Energy Reviews 60: 1185–1205. doi:10.1016/j.rser.2016.01.025.

- ↑ Baumann, W. "davfs2 - Summary". Savannah. Free Software Foundation, Inc. http://savannah.nongnu.org/projects/davfs2. Retrieved 01 March 2018.

- ↑ Whitehead, J. (2005). "WebDAV: Versatile collaboration multiprotocol". IEEE Internet Computing 9 (1): 75–81. doi:10.1109/MIC.2005.26.

- ↑ Tridgell, A. (July 1999). "Efficient Algorithms for Sorting and Synchronizing" (PDF). Australian National University. http://maths-people.anu.edu.au/~brent/pd/Tridgell-thesis.pdf.

- ↑ Singh, R.; Ierapetritou, M.; Ramachandran, R. (2012). "An engineering study on the enhanced control and operation of continuous manufacturing of pharmaceutical tablets via roller compaction". International Journal of Pharmaceutics 438 (1–2): 307–26. doi:10.1016/j.ijpharm.2012.09.009. PMID 22982489.

- ↑ Godena, G.; Lukman, T.; Steiner, I. et al. (2015). "A new object model of batch equipment and procedural control for better recipe reuse". Computers in Industry 70: 46–55. doi:10.1016/j.compind.2015.02.002.

- ↑ Meneghetti, N.; Facco, P.; Bezzo, F. et al. (2016). "Knowledge management in secondary pharmaceutical manufacturing by mining of data historians-A proof-of-concept study". International Journal of Pharmaceutics 505 (1–2): 394–408. doi:10.1016/j.ijpharm.2016.03.035. PMID 27016500.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. A fair amount of grammar and punctuation was updated from the original. In some cases important information was missing from the references, and that information was added.