Journal:The development of data science: Implications for education, employment, research, and the data revolution for sustainable development

| Full article title | The development of data science: Implications for education, employment, research, and the data revolution for sustainable development |
|---|---|
| Journal | Big Data and Cognitive Computing |
| Author(s) | Murtagh, Fionn; Devlin, Keith |
| Author affiliation(s) | University of Huddersfield, Stanford University |
| Primary contact | Email: fmurtagh at acm dot org |
| Year published | 2018 |
| Volume and issue | 2(2) |
| Page(s) | 14 |
| DOI | 10.3390/bdcc2020014 |
| ISSN | 2504-2289 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | http://www.mdpi.com/2504-2289/2/2/14/htm |
| Download | http://www.mdpi.com/2504-2289/2/2/14/pdf (PDF) |

Abstract

In data science, we are concerned with the integration of relevant sciences in observed and empirical contexts. This results in the unification of analytical methodologies, and of observed and empirical data contexts. Given the dynamic nature of convergence, the origins and many evolutions of the data science theme are described. The following are covered in this article: the rapidly growing post-graduate university course provisioning for data science; a preliminary study of employability requirements; and how past eminent work in the social sciences and other areas, certainly mathematics, can be of immediate and direct relevance and benefit for innovative methodology, and for facing and addressing the ethical aspect of big data analytics, relating to data aggregation and scale effects. Associated also with data science is how direct and indirect outcomes and consequences of data science include decision support and policy making, and both qualitative as well as quantitative outcomes. For such reasons, the importance is noted of how data science builds collaboratively on other domains, potentially with innovative methodologies and practice. Further sections point towards some of the major current research issues.

Keywords: big data training and learning, company and business requirements, ethics, impact, decision support, data engineering, open data, smart homes, smart cities, IoT

1. Introduction: Data science as the convergence and bridging of disciplines

The context of our problem solving and analytics will always be quite fundamental, very specific, and particularly oriented. (Section 4 of this article draws some interesting and relevant implications of this.) This article is oriented towards commonality and mutual influence of methodologies, and of analytical processes and procedures. A nice example of the parallel nature of such things is how "big data analytics" is often considered a synonym of "data science." In Section 2.2, it is mentioned how public transport may well use smartphone and mobile phone wireless connection data to observe locations of individuals. This close association or, perhaps even, identity of big data analytics and data science will have growing importance with the internet of things (IoT), and smart cities and smart homes, and so on (as noted in Section 8). The McKinsey Global Institute provided an outstanding perspective on this idea in their paper The age of analytics: Competing in a data-driven world.[1]

In Section 8 and Section 9 of this article, very important developments are at issue, encompassing newly oriented and pursued methodologies, and the integration of research domains. Section 7 notes how important all of the content here is to sustainable development. The phrase "data revolution" is based here on ongoing work by the United Nations, and by so many of us in this domain, and from national authorities in Africa and the Middle East discussing issues here at the most recent (2017) World Statistics Congress.

This converging and bridging of disciplines is increasingly important. For example, Mahabal et al.[2] discuss the parallels between astronomy and Earth science data, methodology transfer, and metadata and ontologies characterized as being crucial. They claim the convergence or bridging of disciplines must address “non-homogeneous observables, and varied spatial, temporal coverage at different resolutions.”[2] This quotation is very familiar to us in regard to how NoSQL databases are now widely used, as well as traditional relational databases. Another example is how text mining, social media, and many other domains have become so very important in many contexts. Then, given computational support, “it is the complexity more than the data volume that proves to be a bigger challenge.”[2] Further benefits of this data science convergence are termed here "tractability" and "reproducibility." Mahabal et al.[2] also discuss the complexity relating to resolution and distributions. In a separate work, Murtagh[3] characterized this in terms of data encoding. Plenty of work now emphasizes the importance of p-adic data encoding (binary or ternary when p = 2 or 3), compared with real-valued encoding (m-adic, especially when m = 10).
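To make the contrast between encodings concrete, the following minimal sketch expands one non-negative integer in base 2, base 3, and base 10. For non-negative integers, the p-adic expansion coincides with the ordinary base-p digit string, which is all that is shown here. The function name `base_p_digits` and the sample value are illustrative assumptions and do not come from the cited work.

```python
def base_p_digits(n, p):
    """Return the base-p digits of a non-negative integer, least significant first."""
    if p < 2:
        raise ValueError("p must be at least 2")
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return [0]
    digits = []
    while n > 0:
        n, remainder = divmod(n, p)
        digits.append(remainder)
    return digits


value = 2018  # arbitrary sample value, chosen only for illustration

binary = base_p_digits(value, 2)    # p = 2
ternary = base_p_digits(value, 3)   # p = 3
decimal = base_p_digits(value, 10)  # m = 10, the familiar decimal digits

for base, digits in ((2, binary), (3, ternary), (10, decimal)):
    # Reverse so that the most significant digit is printed first.
    print(base, "".join(str(d) for d in reversed(digits)))
```

The same quantity is carried by each digit string; only the hierarchy of place values differs, which is the sense in which the choice of encoding is a modelling decision.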

The convergence and bridging of disciplines is fully emphasized by Mahabal et al. as such[2]:

Methodology transfer can almost never be unidirectional. Diverse fields grow by learning tricks employed by other disciplines. The important thing is to abstract data—described by meaningful metadata—and the metadata in turn connected by a good ontology.

Further description is at issue in regard to collaboration in data science[2]:

We have described here a few techniques from astroinformatics that are finding use in geoinformatics. There would be many from earth science that space science would do well to emulate. Even other disciplines like bioinformatics provide ample opportunities for methodology transfer and collaboration. With growing data volumes, and more importantly the increasing complexity, data science is our only refuge. Collaboration in data science will be beneficial to all sciences.

2. Historical development of data science and some contemporary examples of cross-disciplinarity

The short historical perspective that follows refers to such disciplines as computer and information sciences, mathematics and statistics, physics, and, implicitly, social sciences. In concluding this description, a key point will be how data science encompasses and embraces all of the following: cross-disciplinarity, interdisciplinarity, and multidisciplinarity.

2.1 Historical prominence of data science in recent times

The origins of data science are largely due to Chikio Hayashi and others. Hayashi[4] says “I will present 'data science' as a new concept,” followed by a relevant introduction to the science of data: “Data Science consists of three phases: design for data, collection of data and analysis on data.”[4] In Ohsumi[5], the abstract has this: “In 1992, the author argued the urgency of the need to grasp the concept 'data science'. Despite the emergence of concepts such as data mining, this issue has not been addressed.”

Escoufier et al.[6] note how data science arises from the convergence of computer science and statistics, which "gives birth to a new science at its core." They conclude that "[t]o take data as a starting point provides a complementary vision of theory and practice, and avoids creating an unfortunate gap between two steps, both of which are essential in any scientific process."[6]

Cao provides a comprehensive overview of data science[7], noting how the “first conference to adopt 'data science' as a topic” was the International Federation of Classification Societies (IFCS) 1996 conference, in Kobe, Japan. This was fully consistent with our work as participants, then and now (IFCS 2017, in Tokyo, Japan, also had "data science" in its title). Ueno[8] makes a similar point about IFCS 1996 as the first conference with "data science" in its title, and he also claims that the journal Behaviormetrika is "the oldest journal addressing the topic of data science," when it started in 1974. He describes data science as "an interdisciplinary field that includes the use of statistical methods to extract meaningful knowledge from data in various forms: either structured or unstructured."[8]

Cao[7] provides additional historical perspectives, with the section heading "The Data Science journey," relating largely to work in the 1960s and 1970s. This includes "information discovery" as a continuing key objective in data science. Englmeier and Murtagh[9] also make note of this objective, emphasizing the “semantic dimension of data science,” through the information discovery lifecycle, and the “discovery lifecycle in text mining.” While also emphasizing cooperation and cross-disciplinarity, they write: we see the data scientist’s responsibility...

- in the design of an overarching semantic layer addressing data and analysis tools,

- in identifying suitable data sources and data patterns that correspond to the appearance of structured and unstructured data, and

- in the management of the information discovery lifecycle and discovery teams.

An ever-more important issue arises from the data sources that are employed. As a summary expression, data science is, firstly, the integration of data sources and analytical and related data processing methodologies, and, secondly and quite fundamentally, the outcome of the convergence of disciplines. Convergence of disciplines can be quite beneficial in practice, particularly in regard to addressing and solving problems, and also in regard to the cooperation yielded by cross-disciplinarity. See Section 5, below, for some current discussion on how the problems and challenges to be addressed can and should arise, quite naturally, out of all aspects of data science.

The current era of data science can be considered as a culmination of previous epochs that gave rise to major digital technology advances, with implications in all social domains. Largely, the first epoch (in the 1980s) brought about laptop and desktop computers, and the second epoch (in the 1990s) gave rise to the internet and the World Wide Web.

2.2 Practical association of disciplines and sub-disciplines

Cao[7] also makes mention of data science being centered on the following disciplines: statistics, informatics, sociology, and management science. Clearly there is emphasis on “synergy of several research disciplines” and how “interdisciplinary initiatives are necessary to bridge the gaps between the respective disciplines.”[7] This is exciting and not least because of how there is convergence of disciplines or subdisciplines. We may consider, for example, how the digital humanities can incorporate relevant areas of a few disciplines, how computational psychoanalysis can come to the fore.[10] With a major focus on psychometrics, Coombs[11] has chapters that proceed from “Basic Concepts” to “On Methods of Collecting Data,” and “Preferential Choice Data.”

Now, data is so very central to all of our sciences, and to all aspects of our engineering and technology. Murtagh[3] defines just what data is, which includes the concept of data coding, perhaps better termed data encoding. After all, data is measurement. This underscores the importance of the mathematical underpinnings in data science. Implications that follow include the relevance and importance of new, innovative directions, and of effective problem solving. The mathematical view of what measurement means is all important in data science, just as it is in the discipline of physics. Murtagh[3] cites eminent physicist Paul Dirac as to how mathematics underpins all of physics, and cites the work of eminent psychoanalyst Ignacio Matte Blanco, in which mathematics is integral to psychoanalysis.

From a major study of big data and surveying by the American Association for Public Opinion Research comes the following[12]: “The classic statistical paradigm was one in which researchers formulated a hypothesis, identified a population frame, designed a survey and a sampling technique and then analyzed the results … The new paradigm means it is now possible to digitally capture, semantically reconcile, aggregate, and correlate data.”

Abbany[13] notes that wireless connection data is forming a basis for public transport management. Such big data sources can be associated with, or even integrated with, personal and social behavioral patterns and activities. “Better living through data?” asks Abbany, followed by a very critical statement: “The other thing I need to declare is that I’m no fan of our contemporary belief that life can only get better the more data we have at our disposal.”[13] A response to this would be that data science, as the science of data, is everything relating to the path and trajectory connecting data, information, knowledge, and wisdom.

Darabi[14] reports that “The UK’s next census will be its last,” with administrative, governmental authorities’ data replacing the national census. This is acknowledged: “Collecting the data itself is only half the work. A great deal of effort must go into combining it with other sources, in order to answer real questions.” That can be understood as undertaking scientific investigation of such data, and other potentially relevant data. The cross-disciplinarity inherent in that also can, and perhaps must, lead to new interdisciplinary linkages. Arising out of the ending of the national census is the recognition that how the "government counts its people is changing, and it could transform policy.”

One issue here has been how mathematics underpins so much, across disciplines, and also in the commercial and in most social domains. Many universities in the recent past shut down their mathematics departments and no longer provide teaching in mathematics. However, this is being reversed, with university courses again being provided in mathematics.

3. Open data, reproducibility, and the data curation challenge

While generally recognized as so important for innovation in both application outcomes and in regard to analytics and methodologies, open data plays a key role for data scientists. (Information and news about open data is well provided by the organization Open Data Institute: https://theodi.org).

One major aspect of how big data analytics are quite central to data science is the increasing availability of open data. Cao[7] associates this with methodology, through “the open model rather than a closed one.” This concept was central to a May 2017 London presentation by Dr. Robert Hanisch, Director, Office of Data and Informatics, National Institute of Standards and Technology. Dr. Hanisch worked for 30 years on the Hubble Space Telescope (HST) project. Due to open access to observed data, from our cosmos, Dr. Hanisch noted that three times the number of people directly engaged in HST work were working on HST data. As such, there were three times the benefits drawn from HST data.

Dr. Hanisch noted how important the national metrology institutes were to their efforts. Arising from this was, and is, the importance of reproducibility and interoperability of all of the analytics comprising data science. Underpinning these very important themes in data science work is data curation. Data curation is still a major challenge to be addressed. Noted in Dr. Hanisch’s presentation is the contemporary “crisis” of reproducibility. At issue is the support of data management from acquisition to publication, whether it occurs in business, medical, governmental, or other sectors. The computing expert will recognize this crucial theme of data curation as associated with metadata and evolving ontologies.

For the latter, Murtagh et al.[15] discuss in a broad and general context the very important and central role of evolving ontology, research publishing, and research funding. While challenges remain to be pursued and addressed, it is important to note that astronomy and astrophysics offer interesting paradigms for open data, and, in many ways, for data curation. Certainly, further research will be carried out on data curation, as well as evolving and interacting ontologies, all of which are core issues for metrology, hence the very basis of all manufacturing technology, and, as described in the latter citation, for research publications and research funding.

Cao[7] also discusses “the open model and open data.” From that discussion results the concept of multidisciplinarity—expressed here as the convergence of disciplines—which can be aided and facilitated by the openness of analytics, data management, and all data science methodologies. Keeping methodologies open allows domain experts to both link up with and perhaps even, if feasible, to integrate with all that is at issue in other relevant domains. As such, a plea for openness of data science as a discipline continues to grow, particularly when viewed as a convergence of disciplines.

4. Integration of data and analytics: Context of applications

The integration of data and analytics in data science has resulted in a strong need to acknowledge and address challenges and other issues with data and the underpinning or contextual reality of the data. Informally expressed, our data represents reality or the context from which the measurements arose, i.e., the data numeric values or qualitative representations. This requires data scientists to focus on quality and standards of work.

Hand[16] contributes numerous important points relevant to the discussion here, describing the problems of data quality—in the big data context—relating to administrative data. He notes that data curation is relevant for reproducibility of analytics. The implications for analytics[16]:

... the fact that data are often not of the highest quality has led to the development of relevant statistical methods and tools, such as detection methods based on integrity checks and on statistical properties ... However, this emphasis has often not been matched within the realm of machine learning, which places more emphasis on the final modelling stage of data analysis. This can be unfortunate: feed data into an algorithm and a number will emerge, whether or not it makes sense. However, even within the statistical community, most teaching implicitly assumes perfect data ... Challenge 1. Statistics teaching should cover data quality issues.

Our analytics should not be a “black box,” a term that was informally used in regard to neural networks in earlier times. Rather, transparency should always be a key property of analytical methodologies.

Consider the view offered by Anderson[17], and discussed by Murtagh[18], quoting Peter Norvig, Google’s research director[18]:

Petabytes allow us to say: "Correlation is enough." We can stop looking for models. We can analyze the data without hypotheses about what it might show. We can throw the numbers into the biggest computing clusters the world has ever seen and let statistical algorithms find patterns where science cannot.

However, this interesting view, inspired by contemporary search engine technology, is provocative. The author maintains that "[c]orrelation supersedes causation, and science can advance even without coherent models, unified theories, or really any mechanistic explanation at all."

It cannot be accepted that correlation supersedes causation, i.e., that analytics can be automated fully, and thereby obfuscate, or make redundant, data science as well as health and well-being analytics. Englmeier and Murtagh[19] reflect similarly on the above, stating their case for comprehensive information governance, encompassing fully the contextualization of all the analytics that are being carried out. Murtagh and Farid[20] discuss quite a good deal of the contextualization of analytics of health and well-being data. In the discussion accompanying the seminal work by Allin and Hand[21] in statistical perspectives on health and well-being, the authors responded to our comments: “We agree with Murtagh that ‘big data’ may offer insights, provided that there are appropriate analytics.”

It is quite relevant to note here that data science has inherent and integral involvement in the sourcing and in the origins of data, i.e., selection and measurement. Wessel[22] makes this point clear while discussing software applications. This implies full integration of the analytics with what data is selected and sourced, and that may well imply what and how measurement is carried out.

The takeaway here may be the priority to be accorded to induction-based, i.e., inductive, reasoning (cf., Murtagh[3]). This could be a minor argument for the importance of approaches that follow from data mining, unsupervised classification, latent semantic analysis, and various other themes. Clearly, however, all of one’s studying and teaching, as well as one’s work for companies, government agencies, and health and other authorities should and really must be properly focused on the aims and objectives. The latter, of course, may need, partially in any case, to be determined by the expert data scientist.

5. Short review of contemporary data science in education and in employment

It is quite clear to all involved with many businesses and educational institutions that data science is becoming one of the most important employment prospects. A comparison of employment salaries in the U.S. has data science as having the highest median salary in 2017.[23] It follows that data science and big data will certainly be studied in university courses, and these will of course be related to the prospects and potential for the students.

In this section, two themes relate to the contemporary context: higher education in data science and company employment advertisements. We turn to accessible and available data to discuss these themes. Of course, an expert data scientist is very likely to be involved in many discussions and debates with current and potential students, company executives, and many others. It can even be seen that most disciplines have to be integrated into data science, requiring pedagogical innovation in education.[24]

5.1. Teaching and learning for data science

Briefly considered here are current higher education post-graduate programs (usually termed MSc courses) in data science. This is also possibly relevant for undergraduate programs, and certainly relevant for undergraduate projects and company placements of students.

In universities in all countries worldwide, in recent years, there has been a great increase in graduate level courses in data science, and increasingly also in undergraduate level courses. Press[25] maintains a listing of graduate courses, in some cases, but not in all, with the title "data science." This listing, with links to the host institute, contains 102 MSc courses, 19 online courses, 11 free online courses, and eight for a fee, for a total of 140 graduate level courses.

The theme of having data and analytics well integrated is again reiterated. Consider the most essential requirements of a data scientist, as described by Englmeier and Murtagh[19], and then note the close linkage between data science and big data. Emphasized is the great need to avoid false positives coming from the data science analytics that is carried out. Such false positives arise from treating the data without fully linking, and even integrating, the analytics with the context, the relevance, and all that is to do with application and problem conceptualization. They note the well-known errors associated with Google Flu Trends, which was based on Google searching, and with service usage patterns obtained from the taxi company Uber. These were outcomes that produced false positives. There must be fully comprehensive information governance, encompassing all levels of information discovery, through conceptualization that can benefit significantly if collectively undertaken.

5.2. Employment requirements in data science

Many employment opportunities are now on offer for data scientists. For example, the comprehensive review of data science by Cao[7] has a section entitled “Data economy: Data industrialization and services” that describes the expanding business opportunities available to budding data scientists. More examples of opportunities can be found at the increasingly popular web service DataScientistJobs: https://datascientistjobs.co.uk.

The stated requirements for data science jobs vary. While one must give the fullest perspective to the companies that one works with, and the university data science courses that one teaches, what follows is both a consideration and a selection of job requirement data, and preliminary results. This preliminary study of requirements for data science roles, some of them in senior management, represents what will become expanded research over time, for the benefit of our data science students, so that they may be better prepared for work and association with companies both nationally and globally.

Online discussions of data science and big data have become commonplace, and they often include discussion of surveys that have been conducted. Examples include NewVantage Partners' Big Data Executive Survey[26] of senior corporate executives, which found the dominant sector was financial services. This survey concerned internal investment and organizational matters, as well as business practices and plans. Another survey by Hayes[27] asked questions of more than 620 data professionals in regard to skills required and at issue in data science. The author provides an interesting summary and presentation of results obtained using factor analysis.

Descriptions of new data scientist job listings from 2015 to 2017 were considered, all from the distribution list StatsJobs (sometimes indirectly, through links) in England. In most cases, specific programming languages or software environments at issue were indicated. In a few cases, the job advertisements did not explicitly list these details. Retained for use here were 73 such job descriptions. The frequent (four or more advertisements) software languages and software environments were as follows: R (50), Python (44), SQL (30), SAS (28), Hadoop (25), Matlab (17), SPSS (17), Java (16), Hive (14), Excel (9), MapReduce (9), NoSQL (8), Spark (7), C++ (6), Pig (6), Tableau (6), HBase (5), C# (4), Mahout (4), QlikView (4), and Scala (4).

To have sufficient comparability of software languages or environments, the 21 listed above were selected, each being required by at least four employers. Since some employers failed to list any software language or environment requirements, and indeed about three of the retained set had no explanation at all of what was required, the set of employers was reduced to 60. Thus, a cross-tabulation of 60 employers seeking to employ a data scientist was used, each with a few requirements or desires for expertise in particular software languages and software environments. The manner of expression was most often "one or another or another again."
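The filtering and cross-tabulation steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the code used for the study: the employer labels, the tool sets, and the lowered threshold `min_ads = 2` are hypothetical (the study itself retained tools named in at least four advertisements).

```python
from collections import Counter

# Hypothetical advertisements: employer label -> set of tools named in the advert.
ads = {
    "employer_a": {"R", "Python", "SQL"},
    "employer_b": {"Python", "Hadoop", "Spark"},
    "employer_c": {"R", "SAS", "SQL"},
    "employer_d": set(),  # advert that states no software requirement
}

# Step 1: drop advertisements that list no tool at all.
usable = {employer: tools for employer, tools in ads.items() if tools}

# Step 2: count how many advertisements name each tool.
counts = Counter(tool for tools in usable.values() for tool in tools)

# Step 3: keep tools named in at least `min_ads` advertisements.
min_ads = 2  # hypothetical threshold for this toy data
kept_tools = sorted(tool for tool, n in counts.items() if n >= min_ads)

# Step 4: build the presence/absence cross-tabulation (employers x tools),
# dropping employers left with no retained tool.
crosstab = {
    employer: [1 if tool in tools else 0 for tool in kept_tools]
    for employer, tools in usable.items()
}
crosstab = {employer: row for employer, row in crosstab.items() if any(row)}

print(kept_tools)
for employer, row in crosstab.items():
    print(employer, row)
```

A presence/absence coding is used here because the advertisements typically express requirements as alternatives rather than as graded levels of expertise.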

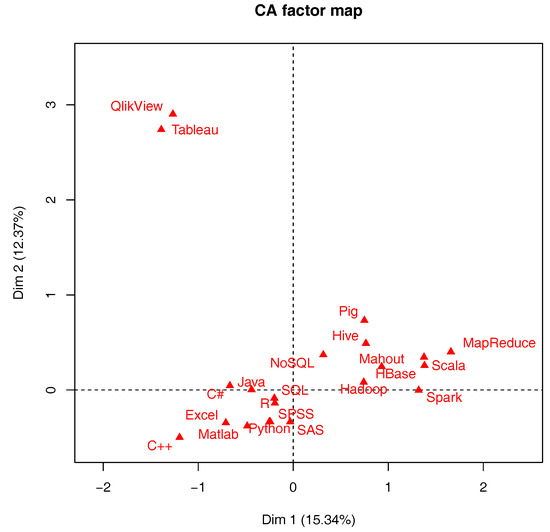

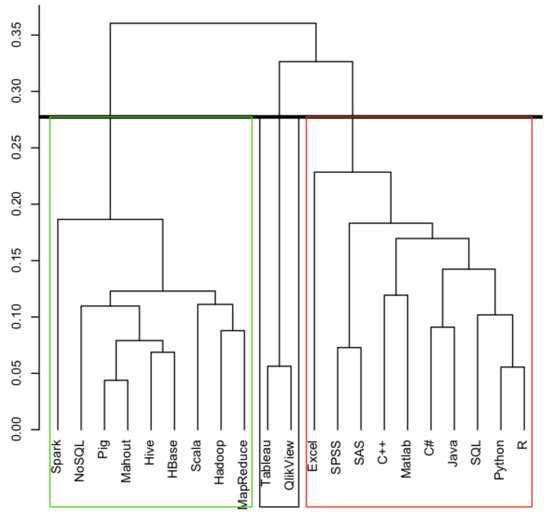

Correspondence analysis takes the employer set, and the software set, in the dual multidimensional spaces, both endowed with the chi squared metric, and maps both clouds into a factor space endowed with the Euclidean metric. Hierarchical clustering was carried out from the full dimensionality (therefore with no loss of, or decrease in, information content) factor space. The goal was to see what associations of software are most likely to be the case from these data scientist job advertisements.

Figure 1 and Figure 2 display the clustering of the software languages or environments. It is our intention to take such a mapping much further, with supplementary elements, also termed contextual elements, to locate them in the factor space, and these would include country or location of the job, industrial sector or government agency, or global corporate firm for the job. The objective would be to determine sector or regional preferences in the skills and abilities of the data scientists employed.


6. Data science methodology to address: Selection bias, scale and aggregation effects, and qualitative evaluation of decision-making impact

After reviewing some of the challenges of big data analytics, involving selection bias and replacement of individual attributes with aggregated attributes (hence, the collective attributes of groups to which the individual can belong), we now point to innovative new methodological perspectives that can both address such issues and challenges and benefit from the context, for example of using big data sources. Murtagh[28] uses as an example a case study involving work for a major company, with its own example of how aggregated data can be used, if required, for individual-related analysis.

Ethical as well as methodological issues arise in scale effects, representation and expression, and particular context effect. Here, both the ethical implications, and the potential for qualitatively and quantitatively evaluating impact of decision-making and policy-making are summarized.

The frequent lack of coordination, alignment, and integration of methodology, including modelling, with data sourcing is pointed to by Hand[29], who notes that “ignorance of selection mechanisms has led to mistakes,” and that selection or distortion processes can apply to not only "human interactions—where it has been suggested that the notion that ‘data=all’ can replace the need for careful theorising and statistical modelling—but also in the hard sciences and medicine.”

Keiding and Louis[30] point out how one case study discussed “shows the value of using ‘big data’ to conduct research on surveys (as distinct from survey research).” Limitations though are clear: “Although randomization in some form is very beneficial, it is by no means a panacea. Trial participants are commonly very different from the external ... pool, in part because of self-selection ...”

The authors address these contemporary issues by noting “[w]hen informing policy, inference to identified reference populations is key.”[30] This is part of the bridge which is needed, between data analytics technology and deployment of outcomes.

They also warn of additional caveats. "In all situations, modelling is needed to accommodate non-response, dropouts and other forms of missing data.”[30] Noting that “[r]epresentativity should be avoided,” here is a fundamental way to address what we need to address: “Assessment of external validity, i.e. generalization to the population from which the study subjects originated or to other populations, will in principle proceed via formulation of abstract laws of nature similar to physical laws.”[30] When considering Keiding and Louis' words, it is worth noting how, in relation to eminent social scientist Pierre Bourdieu’s work, homology between fields of study offers clear perspectives on how beneficial innovative practice can be pursued.

This incorporates our need to “rehabilitate the individual” in our analytics, and not simply replace the individual by the mean of some group. Many case studies of the latter are provided by eminent mathematical data scientist Cathy O’Neil.[31] Le Roux and Lebaron[32] have a similar sentiment: “Rehabilitation of individuals. The context model is always formulated at the individual level, being opposed therefore to modelling at an aggregate level for which the individuals are only an ‘error term’ of the model.”

Calibrating surveys and other data sources, through use of big data, has been at issue in addressing challenges and obstacles described in Keiding and Louis.[30] In regard to decision-making and policy-making, the analysis of discourse in a data-driven way can provide relevant or necessary contextualization. Without having such an approach, a limited capability on the part of those in authority emerges: “top-down communication campaigns both predominate and are advised by those involved in social marketing ... However, this rarely manifests itself through measurable behaviour change ...”[33]

Instead, mediated by the latent semantic mapping of the discourse, we develop semantic distance measures between deliberative actions and the aggregate social effect.[33] We let the data speak in regard to influence, impact, and reach. Impact is defined in terms of semantic distance between the initiating action and the net aggregate outcome. This can be statistically tested, visualized, and further evaluated.
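The idea of impact as a testable semantic distance can be sketched as follows. This is only an illustration under stated assumptions, not the procedure of [33]: cosine distance on raw term counts stands in for distance in a latent semantic space, the aggregate outcome is taken to be the centroid of the responses, and the randomization test, the function names, and the data are all hypothetical.

```python
import math
import random


def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(u, v):
    """1 - cosine similarity; 0 means the vectors point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0:
        raise ValueError("a zero vector has no direction")
    return 1.0 - dot / norm


def impact_distance(action, responses):
    """Distance between an initiating action and the aggregate of responses."""
    return cosine_distance(action, centroid(responses))


def randomization_p_value(action, responses, background, trials=2000, seed=0):
    """Share of random same-size draws from `background` whose aggregate
    lies at least as close to the action as the observed responses do."""
    rng = random.Random(seed)
    observed = impact_distance(action, responses)
    hits = 0
    for _ in range(trials):
        draw = rng.sample(background, len(responses))
        if impact_distance(action, draw) <= observed:
            hits += 1
    return (hits + 1) / (trials + 1)


# Hypothetical term-count vectors over three terms.
action = [3, 1, 0]
responses = [[2, 1, 0], [4, 1, 1], [3, 2, 0]]
background = responses + [[0, 1, 4], [1, 0, 3], [0, 2, 5],
                          [1, 1, 2], [0, 0, 3], [2, 0, 4]]

distance = impact_distance(action, responses)
p_value = randomization_p_value(action, responses, background)
```

A small distance together with a small p-value would suggest that the responses sit closer to the initiating action than randomly chosen discourse does. Any real analysis would need a properly constructed semantic space and a null model suited to the data.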

For research and for all engagement in data science, it is motivating both to address innovative methodology and to have significant achievements in it.

7. Benefits of high profiling of data science

Many blog postings declare “big data is dead.” (A Google query of the phrase, dated 2017-12-29, lists 153,000 results.) At issue is just this: complete priority is to be given to the problems to be solved and the challenges to be addressed. Cao's extensive and outstanding detailing of many aspects of data science[7] acknowledges that there is much that is still currently “tremendous hype and buzz,” and “engendering enormous hype and even bewilderment.” There is this perspective, too, which could be a viewpoint if the sole aim were for data science to automate data analytics in all domains of application: counterposed to advanced analytics, “dummy analytics is becoming the default setting of management and operational systems.”[7]

Fully in line with the context of those perspectives, a major theme of this article is that the convergence of disciplines in the data science framework builds on cooperative and collaborative expertise, and thus does not seek to replace or supplant such expertise. A major conclusion is not to replace current disciplines (mathematics, statistics, computing, engineering, physics and chemistry, arts and humanities, social and psychological sciences, and so on) but—where relevant and where appropriate, and also where motivated and where justified—to re-orientate and to bridge primary as well as foundational levels of disciplines.

In somewhat humorous fashion, in the sense of revolution versus evolution, let the following be noted. At the 61st World Statistics Congress, in July 2017, in Marrakech, Morocco, there was a session organized jointly by the High Commission for Planning (HCP) of Morocco and the Ministry of Development Planning and Statistics (MDPS) of Qatar. This session was entitled “The Data Revolution for the Sustainable Development Goals.” One comment raised in the question and answer session was a request for evolution to be at issue rather than revolution. At the same time, it's interesting to note how there is an important advisory group in the United Nations, called the Data Revolution Group (see http://www.undatarevolution.org) which seeks “[m]obilising the data revolution for sustainable development.” For data science, it is clear that there is great inspiration here. Some other organizational initiatives will now be mentioned. This is to complement a great deal that is being done already by major organizations in statistics, classification and data research, engineering, and explicitly in data science.

In European research funding, i.e., Horizon 2020, an important supported project is that of the European Data Science Academy or EDSA (http://edsa-project.eu), which dates back to 2015. There could well be an important role for such an organization in the future, in regard to sponsoring fellowship levels of organizational memberships, and it would be interesting to promote chartered membership. In the European Commission context, dating from July 2014, we find the “best practice guidelines for public authorities and open data” under the scope of governments embracing the “potential of big data.”[34]

At the U.K. national level, an important initiative, directly or indirectly related to much that was under discussion in this article (in Section 3, in particular) is open data. The Open Data Institute (see https://theodi.org) in the U.K. was founded in 2012 by Sir Tim Berners-Lee and Sir Nigel Shadbolt. In welcoming membership applications, there is this: “Membership: Join the data revolution.” There is this prominent statement too: “Data is changing our world.”

In a practical sense, focused on data to begin with and entirely relevant for data curation now and in the future, we find in comparison the Research Data Alliance or RDA (see https://www.rd-alliance.org). RDA is supported by the E.U., by the NSF (National Science Foundation) and NIST (National Institute of Standards and Technology) in the U.S., by the JISC (Joint Information Systems Committee) and other agencies in the U.K., and by Australia and Japan.

8. Important new research challenges from data

This and the following section engage with major new developments, for problem solving, and for data science and big data analytics, with the partial or complete integration of relevant sciences and technologies, and methodologies, in observed and empirical contexts.

Data science—integrating potentially all application domains, with mathematical foundations for methodology as befits observational science, and integrated observational and experimental science—fully relates data to all that is accomplished and achieved from the data sources. This results in the great importance of the contemporary increasing orientation towards, and requirement for, open data. Mahabal et al.[2] offer a good explanation of this development in data science, and of the potential here for application transfers, in parallel with methodology transfers.

The Open Universe initiative (http://www.openuniverse.asi.it) was established by the United Nations.[35]

The initiative stated that “acknowledging that open data access drives innovation and productivity is a well-established principle in every scientific discipline. However, there is still a considerable degree of unevenness in the services currently offered by providers of data ...” Among six objectives is the goal of “advancing calibration quality and statistical integrity,” with outcomes for education, globally, and private sector involvement. Here, and through transference to each and all domains for data science, what is required for open data and all associated open information is that it must be findable, accessible, interoperable, and reusable (the FAIR Principles), as well as reproducible.[36]

Supporting the FAIR principles is ESASKY (European Space Agency, Sky, accessible from http://sci.esa.int/home), described as “a discovery portal that provides full access to the entire sky. This open-science application allows computer, tablet and mobile users to visualise cosmic objects near and far across the electromagnetic spectrum.” The interesting new research challenges in data science relate foremost to the transfer of FAIR-based open science and discovery portals to many other domains.

An important application domain in this regard will be emerging smart technologies, which encompass smart homes, smart cities, smart environments in general, and the internet of things. An important "situation theory" methodology, in an information space that is mathematically based, furnishing a comprehensive representational system, is proposed by Devlin.[37] Associated with this are the social, legal, and economic aspects of emerging smart technologies in real-life applications.

9. Information space theory for big data analytics in internet of things and smart environments

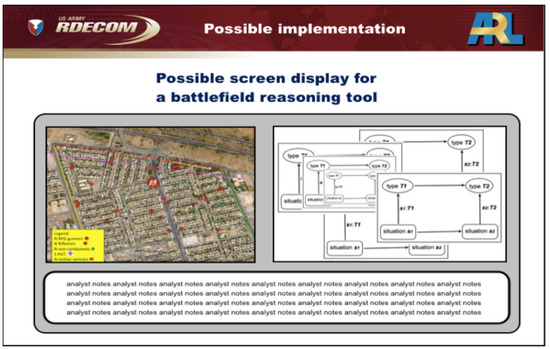

Context is extremely important in big data analytics, and in many other domains.[20] Situation theory provides humans (generally, trained domain experts) with powerful, flexible representations that enable them to perform better, both as analysts and decision makers. Systems such as the one outlined in Figure 3 for the U.S. Army (sourced from Devlin[38]) have a software back-end, possibly including artificial intelligence (AI), but they are in no way “calculators” or expert systems for making decisions. What was done was to harness the power of mathematics primarily as a representational system, rather than for its computational capacity. While the back-end software can manipulate the network (each completion diagram is a structurally identical piece of code), perhaps permitting the eventual application of familiar-looking network-optimization algorithms, many of those completion diagrams represent inherently human thoughts, intentions, and actions, and, for the foreseeable future, the human mind remains the best tool to handle them. This work for the United States Army used situation theory to develop a first-iteration specification for a workstation to be used by a field commander, in both mission planning and real-time control. It takes into account the many different ontologies in a modern battlefield. The role of ontologies is central in qualitative analysis of research, cf. Darabi[14].


9.1 Context, situation theory, and completion diagrams

In the early 1980s, a group of researchers at or connected to Stanford University started to develop an analogous mathematically-based representation of communicating humans, looking deeper than the mere fact of communication (captured by the network model used by the telecommunication engineers) to take account of what was being communicated. (Part of the challenge was to decide how far it is possible to go into categorizing that “what” in order to achieve a representation that is useful in analyzing communication and designing communication-based activities such as work.) That approach is generally referred to as situation theory. Devlin, one of those early pioneers, wrote a theoretical book on the subject, Logic and Information.[37] Subsequently, the techniques developed by the Stanford group were applied by Devlin and Rosenberg[39][40] to solve an actual problem involving communication in the workplace.

The representation[40] used was (of necessity) similar to that used by telecommunication engineers, Google, the postal system, UPS, and FedEx in that the domain is represented by a network. However, whereas those earlier examples had networks of point nodes, the nodes in this network were more complicated objects, which were termed “completion diagrams.” See the right-hand side of Figure 3, where “situation s1” results in “type T1”, and “situation s2” results in “type T2”, so that transition from “situation s1” to “situation s2” has the related association between “type T1” and “type T2”. As to the exact nature of the entities in such a completion diagram, they can be considered as capturing the key elements of a basic human act, here military and managerial action, including a communicative act. Much of Logic and Information is devoted to the development and explication of such a completion diagram. It has its origins in work by Barwise and Perry.[41]
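To make the contrast with point-node networks concrete, here is a minimal Python sketch of a network whose nodes are completion diagrams. It is one possible reading of the description of Figure 3 only: the class, its field names, and the linking rule are hypothetical and do not reproduce the formal definitions in Logic and Information or the software described by Devlin.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompletionDiagram:
    """A network node richer than a point: two situations, each with the
    type it results in. Field names are illustrative assumptions."""
    source_situation: str
    source_type: str
    target_situation: str
    target_type: str

    def situation_transition(self):
        """The move from one situation to the next, e.g. s1 -> s2."""
        return (self.source_situation, self.target_situation)

    def type_association(self):
        """The related association between types, e.g. T1 -> T2."""
        return (self.source_type, self.target_type)


def link(diagrams):
    """Connect each diagram to those that start in the situation it ends in."""
    by_source = {}
    for diagram in diagrams:
        by_source.setdefault(diagram.source_situation, []).append(diagram)
    return {d: by_source.get(d.target_situation, []) for d in diagrams}


# Hypothetical two-step plan using the labels from the figure description.
d1 = CompletionDiagram("s1", "T1", "s2", "T2")
d2 = CompletionDiagram("s2", "T2", "s3", "T3")
network = link([d1, d2])
```

Because every node has the same shape, software can traverse `network` uniformly, while the interpretation of what each situation and type means remains with the human analyst.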

Information is a vehicle for the use of a big data approach to underpin the study of interaction and communication in smart environments (e.g., cities, workplaces, and homes). "Information space theory" is to provide the focus for building an inter-disciplinary community concerned with social and technological issues associated with recent technological advances. Relevant emerging research and innovation disciplines include the internet of things, internet of everything, and big data analytics, among others, that contribute to the design, development, and effective implementation of smart environments in real life.

Research projects related to both “information space theory” and “interaction space theory” include SANE, “Sustainable Accommodation for the New Economy”, a European Framework 5 research project with very innovative aims and outcomes for research and for industrial companies. It's described as "a multi-disciplinary and multi-cultural R&D project that takes a location independent approach to the design of a sustainable workplace to ensure compatibility between fixed and mobile, local and remote work areas" and one that will specify, prototype, and develop a set of ICT tools with "emphasis being on the innovative application of emerging technologies and services."[42] Another project involving universities in the U.K. and in Germany was IS-VIT, “Interaction Space of the Virtual IT Workplace”. Related outcomes of these projects are described by Rosenberg et al.[43] and Walkowski et al.[44]

Information space theory takes into account the following: (i) People who inhabit smart environments and spontaneously generate data and information in the course of their day-to-day activities; (ii) Place which can be public (smart cities), privileged (workplaces) or private (homes) with varying degrees of privacy and security constraints that shape information sharing; and (iii) Patterns of interaction between people and technology that is an integral part of smart environments and influences human–human, human–device and device–device interaction.

A summary follows of inter-disciplinary information space theory and its application in smart environments: (i) an introduction to studies of information, data and interaction; (ii) big data analytics as a tool for the development of information space theory; (iii) information space theory and its impact on the design of smart environments; (iv) information space and human communication research, involving an account of the evolution of smart interaction systems; (v) further refinement of information space theory informed by cross-disciplinary perspectives and requirements of application in smart systems and emerging technologies, including contribution to the application of big data analytics in real-life smart environments; and (vi) the concept of information space as a distinct feature of human context that makes it possible for people to achieve coordination and reciprocity of perspectives through smart interaction systems that safeguard their privacy and security.

Such work builds on the work of an inter-disciplinary group of researchers within mathematics, computer, and social sciences who are attempting to address key research questions: How do emerging smart technologies influence information sharing in interaction between people and technology in smart environments? What are the social, legal, and economic impacts of emerging smart technologies in real-life application?

To this end, the concept of information space will guide the investigation into interactions that occur within smart environments, taking account of human–human, human–device, and device–device interaction in a uniform framework. Special attention is given to information sharing—pathways, enablers and gatekeepers—to incorporate security and privacy concerns that urgently need to be addressed in order to optimize the technology potential in real-life applications of smart environments. The working assumption behind this approach is that inter-disciplinary, formal, and theoretical understanding of the nature of these interactions is essential for these concerns to be addressed and resolved.

In this context, mathematics plays a crucial role in developing and using a mathematically-based representation framework for the analysis and design of work in the era of the internet of things. Both in life and in scientific studies, what we can achieve depends on, and is constrained by, the representational system we use. The greater the complexity of the domain, the more significant is the representation at our disposal; representations are what make it possible for us to understand and reason about the world. For instance, trade, commerce, and financial activity in Europe were revolutionized by the introduction of the Hindu-Arabic, decimal arithmetic system (“modern arithmetic”) in the thirteenth century, which made it possible for anyone to become proficient in arithmetic after just a few weeks' practice. A similar revolution occurred in the 1980s, when the introduction of the modern, windows–icons–mouse interface for personal computers made it possible for ordinary people to use what had until then been a tool for trained experts. Long before those two examples, the introduction of numbers themselves, in the form of a monetary system, transformed human life by providing a simple, quantitative representation system for property ownership and social indebtedness.

The rise of natural science involved a new representation system that assigned numerical values to various features of the environment (features given names such as length, area, volume, mass, temperature, momentum, etc.) and shifted the focus from trying to understand why things occurred to simply measuring how one quantified feature varied with another—an approach that proved to be extremely fruitful for society. The representation systems of the natural sciences have all been based on mathematics to a considerable extent. In the social realm, mathematically-based representation systems are less common, but when they have been developed, they have proved to be extremely powerful. (Money is a particularly dramatic example.) Indeed, one of the most widespread applications of mathematics in today’s world is the optimization of various human activities. Computer queries depend on optimization in a mathematical space that treats every living human as a node in a simple mathematical structure called a graph. “Modelling” a person as a point node in a mathematical network omits all information about a person save for one factor: the connections of that human to all other humans. However, for questions that hinge on that one factor, the representation enables mathematical algorithms to be applied that provide society with one of its most important tools.
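The point-node representation described above can be sketched in a few lines of Python. This is a toy illustration, not any search engine's actual method: the records, names, and functions are hypothetical. Each person record carries several attributes, but the graph keeps only the connections, and a standard breadth-first search then answers a question that hinges on that one factor.

```python
from collections import deque


def to_graph(people):
    """Keep only the 'knows' links; every other attribute is dropped."""
    graph = {}
    for person in people:
        graph.setdefault(person["name"], set())
        for other in person["knows"]:
            graph[person["name"]].add(other)
            graph.setdefault(other, set()).add(person["name"])
    return graph


def separation(graph, start, goal):
    """Fewest links between two people (breadth-first search), or None."""
    if start not in graph or goal not in graph:
        return None
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == goal:
            return dist
        for neighbour in graph[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, dist + 1))
    return None


# Hypothetical records: age and city never reach the graph.
people = [
    {"name": "Ana", "age": 34, "city": "Lima", "knows": ["Ben"]},
    {"name": "Ben", "age": 51, "city": "Oslo", "knows": ["Cy"]},
    {"name": "Cy", "age": 27, "city": "Pune", "knows": []},
    {"name": "Dee", "age": 45, "city": "Cork", "knows": []},
]
graph = to_graph(people)
```

Here `separation(graph, "Ana", "Cy")` is 2 and `separation(graph, "Ana", "Dee")` is `None`, regardless of anything else known about the four people.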

Another example is provided by the algorithms that route our telephone calls, our internet communication, our mail and package delivery systems, and our transportation systems. In those cases, whereas a search engine like Google represents the human domain as a two-dimensional network of nodes and edges, the domains of communicating devices such as phones or computers, of letters and packages in shipment, and of travelers are represented as high dimensional “polytopes,” generalizations of the familiar polygons of high school geometry to higher dimensions, to which mathematical methods such as the Simplex Method or Karmarkar’s algorithm can be applied to determine optimal routing. These representations work by ignoring almost everything about the entities in the domain apart from the one or two features that are germane to the task. The result is that the power of mathematics can be brought to bear on a problem that, on the face of it, is part of the complex web of human activity that defies the methods of science in terms of its complexity and (local) unpredictability.
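The geometric idea behind such routing methods can be illustrated in two dimensions, where the polytope is just a polygon. The sketch below is neither the Simplex Method nor Karmarkar's algorithm: it simply enumerates the vertices of the feasible region by brute force and picks the cheapest, relying on the fact that a linear cost over a bounded polytope attains its optimum at a vertex. The routing scenario, the numbers, and the function names are hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def vertices(constraints):
    """Feasible intersection points of the boundaries a*x + b*y <= c."""
    points = set()
    for (a1, b1, c1), (a2, b2, c2) in combinations(constraints, 2):
        det = a1 * b2 - a2 * b1
        if det == 0:
            continue  # parallel boundaries never meet in a single point
        # Cramer's rule for the two boundary lines, in exact arithmetic.
        x = Fraction(c1 * b2 - c2 * b1, det)
        y = Fraction(a1 * c2 - a2 * c1, det)
        if all(a * x + b * y <= c for a, b, c in constraints):
            points.add((x, y))
    return points


def minimise(cost, constraints):
    """Cheapest vertex for a linear cost (cx, cy) over a bounded region."""
    cx, cy = cost
    return min(vertices(constraints), key=lambda p: cx * p[0] + cy * p[1])


# Hypothetical task: send at least 10 units over two links.
# x = units on link A (cost 2 each, capacity 6),
# y = units on link B (cost 3 each, capacity 8).
constraints = [
    (-1, -1, -10),  # x + y >= 10
    (1, 0, 6),      # x <= 6
    (0, 1, 8),      # y <= 8
    (-1, 0, 0),     # x >= 0
    (0, -1, 0),     # y >= 0
]
best = minimise((2, 3), constraints)
```

For this toy instance the feasible region is a triangle with vertices (6, 4), (6, 8), and (2, 8), and the cheapest is (6, 4) at a cost of 24. Practical solvers avoid enumerating every vertex, which becomes infeasible as the dimension grows.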

10. Conclusions

Having indicated a few highly important and relatively recent organizational initiatives, data science—viewed as the convergence of disciplines, or, in practice, sub-disciplines—should very much incorporate open methodology, open data, and transparency, reproducibility, and interoperability.

This article has sought to form a foundation for further study of the specific content of data science education and training, and of business sector importance. After all, progress and impact ensure development and evolution over time. As noted above, too, we may, if we wish, refer to the contemporary data revolution.

Both challenges and impactful potential are prominent, and it is good to see them as predominant in our rapidly growing discipline of data science. There are also important directions (in new research challenges and application of information space theory) to both follow and to incorporate in other domains.

Acknowledgements

Author contributions

Section 9 by K.D. and other sections by F.M. All represent our extensive research work, teaching, and some consultancy also.

Conflicts of interest

The authors declare no conflict of interest.

References

- ↑ Henke, N.; Bughin, J.; Chui, M. et al. (December 2016). "The age of analytics: Competing in a data-driven world". McKinsey & Company. pp. 136. https://www.mckinsey.com/business-functions/mckinsey-analytics/our-insights/the-age-of-analytics-competing-in-a-data-driven-world. Retrieved 18 June 2018.

- ↑ 2.0 2.1 2.2 2.3 2.4 2.5 2.6 Mahabal, A.A.; Crichton, D.; Djorgovski, S.G. et al. (2017). "From Sky to Earth: Data Science Methodology Transfer". Proceedings of the International Astronomical Union: 1–10. doi:10.1017/S1743921317000060.

- ↑ 3.0 3.1 3.2 3.3 Murtagh, F. (2017). Data Science Foundations: Geometry and Topology of Complex Hierarchic Systems and Big Data Analytics. CRC Press. pp. 206. ISBN 9781498763936.

- ↑ 4.0 4.1 Hayashi, C. (1998). "What is Data Science? Fundamental concepts and a heuristic example". In Hayashi, C.; Yajima, K.; Bock H.H. et al.. Data Science, Classification, and Related Methods. Springer. pp. 40–51. ISBN 9784431702085.

- ↑ Ohsumi, N. (2000). "From data analysis to data science". In Kiers, H.A.L.; Rasson, J.-P.; Groenen, P.J.F. et al.. Data Science, Classification, and Related Methods. Springer. pp. 329–34. ISBN 9783540675211.

- ↑ 6.0 6.1 Escoufier, Y.; Fichet, B.; Lebart, L. et al., ed. (1995). Data Science and Its Applications. Academic Press.

- ↑ 7.0 7.1 7.2 7.3 7.4 7.5 7.6 7.7 7.8 Cao, L. (2017). "Data Science: A Comprehensive Overview". ACM Computing Surveys 50 (3): 43. doi:10.1145/3076253.

- ↑ 8.0 8.1 Ueno, M. (2017). "As the oldest journal of data science". Behaviormetrika 44 (1): 1–2. doi:10.1007/s41237-016-0011-7.

- ↑ Englmeier, K.; Murtagh, F. (2017). "Data scientist - Manager of the discovery lifecycle". Proceedings of the 6th International Conference on Data Science, Technology and Applications: 133–140. doi:10.5220/0006393801330140.

- ↑ Murtagh, F. (2017). "Chapter 8: Geometry and Topology of Matte Blanco's Bi-Logic in Psychoanalytics". Data Science Foundations: Geometry and Topology of Complex Hierarchic Systems and Big Data Analytics. CRC Press. pp. 147–62. ISBN 9781498763936.

- ↑ Coombs, C.H. (1964). A Theory of Data. Wiley.

- ↑ Japec, L.; Kreuter, F.; Berg, M. et al. (12 February 2015). "AAPOR Report: Big Data". AAPOR. https://www.aapor.org/Education-Resources/Reports/Big-Data.aspx. Retrieved 18 June 2018.

- ↑ 13.0 13.1 Abbany, Z. (27 November 2017). "A public transport model built on open data". DW. Deutsche Welle. https://www.dw.com/en/a-public-transport-model-built-on-open-data/a-41546053. Retrieved 27 November 2017.

- ↑ 14.0 14.1 Darabi, A. (5 December 2017). "The UK’s next census will be its last—here’s why". Apolitical. Apolitical Group Limited. https://apolitical.co/solution_article/uks-next-census-will-last-heres/.

- ↑ Murtagh, F.; Orlov, M.; Mirkin, B. (2018). "Qualitative Judgement of Research Impact: Domain Taxonomy as a Fundamental Framework for Judgement of the Quality of Research". Journal of Classification 35 (1): 5–28. doi:10.1007/s00357-018-9247-0.

- ↑ 16.0 16.1 Hand, D.J. (2018). "Statistical challenges of administrative and transaction data". Statistics in Society Series A 181 (3): 555–605. doi:10.1111/rssa.12315.

- ↑ Anderson, C. (23 June 2008). "The End of Theory: The Data Deluge Makes The Scientific Method Obsolete". Wired. Condé Nast. https://www.wired.com/2008/06/pb-theory/.

- ↑ 18.0 18.1 Murtagh, F. (2008). "Origins of Modern Data Analysis Linked to the Beginnings and Early Development of Computer Science and Information Engineering". Electronic Journal for History of Probability and Statistics 4 (2): 1–26. https://arxiv.org/abs/0811.2519.

- ↑ 19.0 19.1 Englmeier, K.; Murtagh, F. (2017). "Editorial: What Can We Expect from Data Scientists?". Journal of Theoretical and Applied Electronic Commerce Research 12 (1): 1–5. doi:10.4067/S0718-18762017000100001.

- ↑ 20.0 20.1 Murtagh, F.; Farid, M. (2017). "Contextualizing Geometric Data Analysis and Related Data Analytics: A Virtual Microscope for Big Data Analytics". Journal of Interdisciplinary Methodologies and Issues in Sciences 3 (Digital Contextualization): 1–19. doi:10.18713/JIMIS-010917-3-1.

- ↑ Allin, P.; Hand, D.J. (2016). "New statistics for old?—Measuring the wellbeing of the UK". Statistics in Society Series A 180 (1): 3–43. doi:10.1111/rssa.12188.

- ↑ Wessel, M. (3 November 2016). "You Don't Need Big Data - You Need the Right Data". Harvard Business Review. https://hbr.org/2016/11/you-dont-need-big-data-you-need-the-right-data. Retrieved 18 June 2018.

- ↑ "Jobs Rated Report 2017: Ranking 200 Jobs". CareerCast.com. 2017. https://www.careercast.com/jobs-rated/2017-jobs-rated-report. Retrieved 18 June 2018.

- ↑ Daniel, B.K. (2018). "Reimaging Research Methodology as Data Science". Big Data and Cognitive Computing 2 (1): 4. doi:10.3390/bdcc2010004.

- ↑ Press, G. (28 February 2018). "Graduate Programs in Data Science and Big Data Analytics". What's the Big Data?. https://whatsthebigdata.com/2012/08/09/graduate-programs-in-big-data-and-data-science/. Retrieved 18 June 2018.

- ↑ "Big Data Executive Survey 2017" (PDF). NewVantage Partners LLC. January 2017. http://newvantage.com/wp-content/uploads/2017/01/Big-Data-Executive-Survey-2017-Executive-Summary.pdf. Retrieved 18 June 2018.

- ↑ Hayes, B. (18 January 2016). "Empirically-Based Approach to Understanding the Structure of Data Science". Business Over Broadway. http://businessoverbroadway.com/empirically-based-approach-to-understanding-the-structure-of-data-science. Retrieved 18 June 2018.

- ↑ Murtagh, F. (2018). "Security and ethics in Big Data: Analytical foundations for surveys". Archives of Data Science Submitted.

- ↑ Hand, D. (6 April 2017). "The dangers of not seeing what isn’t there: Selection bias in statistical modelling". Irish Statistical Association (ISA) Gossett Lecture 2017. https://www.ucc.ie/en/matsci/news/irish-statistical-association-isa-gossett-lecture-2017.html.

- ↑ 30.0 30.1 30.2 30.3 30.4 Keiding, N.; Louis, T.A. (2016). "Perils and potentials of self‐selected entry to epidemiological studies and surveys". Statistics in Society Series A 179 (2): 319–76. doi:10.1111/rssa.12136.

- ↑ O'Neil, C. (2016). Weapons of Math Destruction. Crown. pp. 272. ISBN 9780553418811.

- ↑ "Chapitre 1. Idées–clefs de l’analyse géométrique des données". La Méthodologie de Pierre Bourdieu en Action: Espace Culturel, Espace Social et Analyse des Données. Dunod. 2015. pp. 3–20. doi:10.3917/dunod.lebar.2015.01.0003. ISBN 9782100703845.

- ↑ 33.0 33.1 Murtagh, F.; Pianosi, M.; Bull, R. (2016). "Semantic mapping of discourse and activity, using Habermas’s theory of communicative action to analyze process". Quality & Quantity 50 (4): 1675–1694. doi:10.1007/s11135-015-0228-7.

- ↑ "Commission urges governments to embrace potential of Big Data". Press Release Database. European Commission. 2 July 2014. http://europa.eu/rapid/press-release_IP-14-769_en.htm.

- ↑ Committee on the Peaceful Uses of Outer Space (14 June 2016). "“Open Universe” proposal, an initiative under the auspices of the Committee on the Peaceful Uses of Outer Space for expanding availability of and accessibility to open source space science data" (PDF). United Nations Office for Outer Space Affairs. http://www.unoosa.org/res/oosadoc/data/documents/2016/aac_1052016crp/aac_1052016crp_6_0_html/AC105_2016_CRP06E.pdf. Retrieved 18 June 2018.

- ↑ Wilkinson, M.D.; Dumontier, M.; Aalbersberg, I.J. et al. (2016). "The FAIR Guiding Principles for scientific data management and stewardship". Scientific Data 3: 160018. doi:10.1038/sdata.2016.18.

- ↑ 37.0 37.1 Devlin, K. (1991). Logic and Information. Cambridge University Press. ISBN 0521499712.

- ↑ Devlin, K. (July 2011). "A uniform framework for describing and analyzing the modern battlefield" (PDF). Stanford University. http://web.stanford.edu/~kdevlin/Papers/Army_report_0711.pdf. Retrieved 18 June 2018.

- ↑ Devlin, K.; Rosenberg, D. (1996). Language at Work: Analyzing Communication Breakdown in the Workplace to Inform Systems Design. Center for the Study of Language and Information. pp. 212. ISBN 9781575860510.

- ↑ 40.0 40.1 Devlin, K.; Rosenberg, D. (2008). "Information in the Study of Human Interaction". In Adriaans, P.; van Benthem, J.; Gabbay, D. et al.. Philosophy of Information. Elsevier. pp. 685–709. doi:10.1016/B978-0-444-51726-5.50021-2.

- ↑ Barwise, J.; Perry, J. (1999). Situations and Attitudes. Center for the Study of Language and Information. pp. 376. ISBN 9781575861937.

- ↑ "Sustainable Accommodation in the New Economy". CORDIS. European Commission. 15 May 2008. https://cordis.europa.eu/project/rcn/58059_en.html. Retrieved 18 June 2018.

- ↑ Rosenberg, D.; Foley, S.; Lievonen, M. et al. (2005). "Interaction spaces in computer-mediated communication". AI & Society 19 (1): 22–33. doi:10.1007/s00146-004-0299-9.

- ↑ Walkowski, S.; Dörner, R.; Lievonen, M. et al. (2011). "Using a game controller for relaying deictic gestures in computer-mediated communication". International Journal of Human-Computer Studies 69: 362–74. doi:10.1016/j.ijhcs.2011.01.002.

Notes

This presentation is faithful to the original, with only a few minor changes to grammar, spelling, and presentation, including the addition of PMCID and DOI when they were missing from the original reference. The original inline citation method was unorthodox; these inline citations have been made clearer with the addition of the author of the citation. This often required sentences containing inline citations to be reconstructed. Several URL mentions in the text were turned into full citations. Several vanity statements and irrelevant comments were removed for improved readability.