Journal:An overview of data warehouse and data lake in modern enterprise data management

| Full article title | An overview of data warehouse and data lake in modern enterprise data management |

|---|---|

| Journal | Big Data and Cognitive Computing |

| Author(s) | Nambiar, Athira; Mundra, Divyansh |

| Author affiliation(s) | SRM Institute of Science and Technology |

| Primary contact | Email: athiram at srmist dot edu dot in |

| Year published | 2022 |

| Volume and issue | 6(4) |

| Article # | 132 |

| DOI | 10.3390/bdcc6040132 |

| ISSN | 2504-2289 |

| Distribution license | Creative Commons Attribution 4.0 International |

| Website | https://www.mdpi.com/2504-2289/6/4/132 |

| Download | https://www.mdpi.com/2504-2289/6/4/132/pdf (PDF) |

|

|

This article should be considered a work in progress and incomplete. Consider this article incomplete until this notice is removed. |

Abstract

Data is the lifeblood of any organization. In today’s world, organizations recognize the vital role of data in modern business intelligence systems for making meaningful decisions and staying competitive in the field. Efficient and optimal data analytics provides a competitive edge to its performance and services. Major organizations generate, collect, and process vast amounts of data, falling under the category of "big data." Managing and analyzing the sheer volume and variety of big data is a cumbersome process. At the same time, proper utilization of the vast collection of an organization’s information can generate meaningful insights into business tactics. In this regard, two of the more popular data management systems in the area of big data analytics—the data warehouse and data lake—act as platforms to accumulate the big data generated and used by organizations. Although seemingly similar, both of them differ in terms of their characteristics and applications.

This article presents a detailed overview of the roles of data warehouses and data lakes in modern enterprise data management. We detail the definitions, characteristics, and related works for the respective data management frameworks. Furthermore, we explain the architecture and design considerations of the current state of the art. Finally, we provide a perspective on the challenges and promising research directions for the future.

Keywords: big data, data warehousing, data lake, enterprise data management, OLAP, ETL tools, metadata, cloud computing, internet of things

Introduction

"Big data analytics" is one of the buzzwords in today’s digital world. It entails examining big data and uncovering any hidden patterns, correlations, etc. available in the data. [1] Big data analytics extracts and analyzes random data sets, forming them into meaningful information. According to statistics, the overall amount of data generated in the world in 2021 was approximately 79 zettabytes, and this is expected to double by 2025. [2] This unprecedented amount of data was the result of a data explosion that occurred during the last decade, wherein data interactions increased by 5,000 percent. [3]

Big data deals with the volume, variety, and velocity of data, while seeking the veracity (insightfulness) and value to data. These are known, in part, as the "Vs" of big data. [4] An unprecedented amount of diverse data is acquired, stored, and processed with high data quality for various application domains. These data originate from business transactions, real-time streaming, social media, video analytics, and text mining, creating a huge amount of semi- or unstructured data to be stored in different information silos. [5] The efficient integration and analysis of these multiple data across silos are required to divulge complete insight into the database. This is an open research topic of interest.

Big data and its related emerging technologies have been changing the way e-commerce and e-services operate and have been opening new frontiers in business analytics and related research. [6] Big data analytics systems play a big role in the modern enterprise management domain, from product distribution to sales and marketing, as well as the analysis of hidden trends, similarities, and other insights, giving companies opportunities to optimize their data to find new opportunities within it. [7] Since organizations with better and more accurate data can make more informed business decisions by looking at market trends and customer preferences, they can gain competitive advantages over others. Hence, organizations invest tremendously in artificial intelligence (AI) and big data technologies to strive toward digital transformation and data-driven decision making, which ultimately leads to advanced business intelligence (BI). [6] As per reports, the worldwide big data analytics and BI software applications markets seem as though they will increase by USD 68 billion and 17.6 billion by 2024–2025, respectively. [8]

Big data repositories exist in many forms, as per the requirements of corporations. [9] An effective data repository needs to unify, regulate, evaluate, and deploy a huge amount of data resources to enhance analytics and query performance. Based on the nature and the application scenario, there are many different types of data repositories outside of traditional relational databases. Two of the more popular data repositories among them are enterprise data warehouses and data lakes. [10,11,12]

A data warehouse (DW) is a data repository which stores structured, filtered, and processed data that has been treated for a specific purpose, whereas a data lake (DL) is a vast pool of data for which the purpose is not or has not yet been defined. [9] In detail, DWs store large amounts of data collected by different sources, typically using predefined schemas. Typically, a DW is a purpose-built relational database running on specialized hardware either on the premises of an organization or in the cloud. [13] DWs have been used widely for storing enterprise data and fueling BI and analytics applications. [14,15,16]

Data lakes (DLs) have emerged as big data repositories that store raw data and provide a rich list of functionalities with the help of metadata descriptions. [10] Although the DL is also a form of enterprise data storage, it does not inherently include the same analytics features commonly associated with DWs. Instead, they are repositories storing raw data in their original formats and providing a common access interface. From the lake, data may flow downstream to a DW to get processed, packaged, and become ready for consumption. As a relatively new concept, there has been very limited research discussing various aspects of DLs, especially in internet articles or blogs.

Although DWs and DLs share some overlapping features and use cases, there are fundamental differences in the data management philosophies, design characteristics, and ideal use conditions for each of these technologies. In this context, we provide a detailed overview and differences between both the DW and DL data management schemes in this survey paper. Furthermore, we consolidate the concepts and give a detailed analysis of different design aspects, various tools and utilities, etc., along with recent developments that have come into existence.

The remainder of this paper is organized as follows. In the next section, the terminology and basic definitions of big data analytics and the data management schemes are analyzed. Furthermore, the related works in the field are also summarized. After this summation, the architectures of both the DW and DL are presented, followed by their key design and practical aspects, presented at length. Afterwards, the various popular tools and services available for enterprise data management are summarized, followed by explanations of the open challenges and promising directions for these technologies. In particular, the pros and cons of various methods are critically discussed, and the observations are presented. Finally, conclusions are drawn from this research effort.

Definitions of big data analytics, data warehouse, and data lake

The definitions and fundamental notions of various data management schemes are provided in this section. Furthermore, related works and review papers on this topic are also summarized.

Big data analytics

With significant advancements in technology has come the unprecedented usage of computer networks, multimedia, the internet of things (IoT), social media, and cloud computing. [17] As a result, a huge amount of data, known as “big data,” has been generated. Effective data processing is required to collect, manage, and analyze these data efficiently. The process of big data processing is aimed at data mining (i.e., extracting knowledge from large amounts of data), leveraging data management, machine learning (ML), high-performance computing, statistical charting, pattern recognition, etc. The important characteristics of big data (known as the seven "Vs" of big data) are as follows[1]:

- Volume, or the available amount of data;

- Velocity, or the speed of data processing;

- Variety, or the different types of big data;

- Volatility, or the variability of the data;

- Veracity, or the accuracy of the data;

- Visualization, or the depiction of big data-generated insights through visual representation; and

- Value, or the benefits organizations derive from the data.

Typically, there are mainly three kinds of big data processing possible: batch processing, stream processing, and hybrid processing. [18] In batch processing, data stored in the non-volatile memory will be processed, and the probability and temporal characteristics of data conversion processes will be decided by the requirements of the problems. In stream processing, the collected data will be processed without storing them in non-volatile media, and the temporal characteristics of data conversion processes will mainly be determined by the incoming data rate. This is suitable for domains that require low response times. Another kind of big data processing, known as hybrid processing, combines both the batch and stream processing techniques to achieve high accuracy and a low processing time. [19] Some examples of hybrid big data processing are Lambda and Kappa Architecture. [20] Lambda Architecture processes huge quantities of data, enabling batch processing and stream processing methods with a hybrid approach. Kappa Architecture is a simpler alternative to Lambda Architecture, since it leverages the same technology stack to handle both real-time stream processing and historical batch processing. However, it avoids maintaining two different code bases for the batch and speed layers. The major notion is to facilitate real-time data processing using a single stream-processing engine, thus bypassing the multi-layered Lambda Architecture without compromising the standard quality of service.

Data warehouse

The concept of DWs was introduced in the late 1980s by IBM researchers Barry Devlin and Paul Murphy, with the aim to deliver an architectural model to solve the flow of data to decision support environments. [21] According to the definition by Inmon, “a data warehouse is a subject-oriented, nonvolatile, integrated, time-variant collection of data in support of management decisions.” [22] Formally, a DW is a large data repository wherein data can be stored and integrated from various sources in a well-structured manner and help in the decision-making process via proper data analytics. [23] The process of compiling information into a DW is known as data warehousing.

In enterprise data management, data warehousing is referred to as a set of decision-making systems targeted toward empowering the information specialist (leader, administrator, or analyst) to improve decision making and make decisions quicker. Hence, DW systems act as an important tool of BI, being used in enterprise data management by most medium and large organizations. [24,25] The past decade has seen unprecedented development both in the number of products and services offered and in the wide-scale adoption of these advancements by the industry. According to a comprehensive research report by Market Research Future (MRFR) titled “Data Warehouse as a Service Market information by Usage, by Deployment, by Application and Organization Size—forecast to 2028,” the market size will reach USD 7.69 billion, growing at a compound annual growth rate of 24.5%, by 2028. [26]

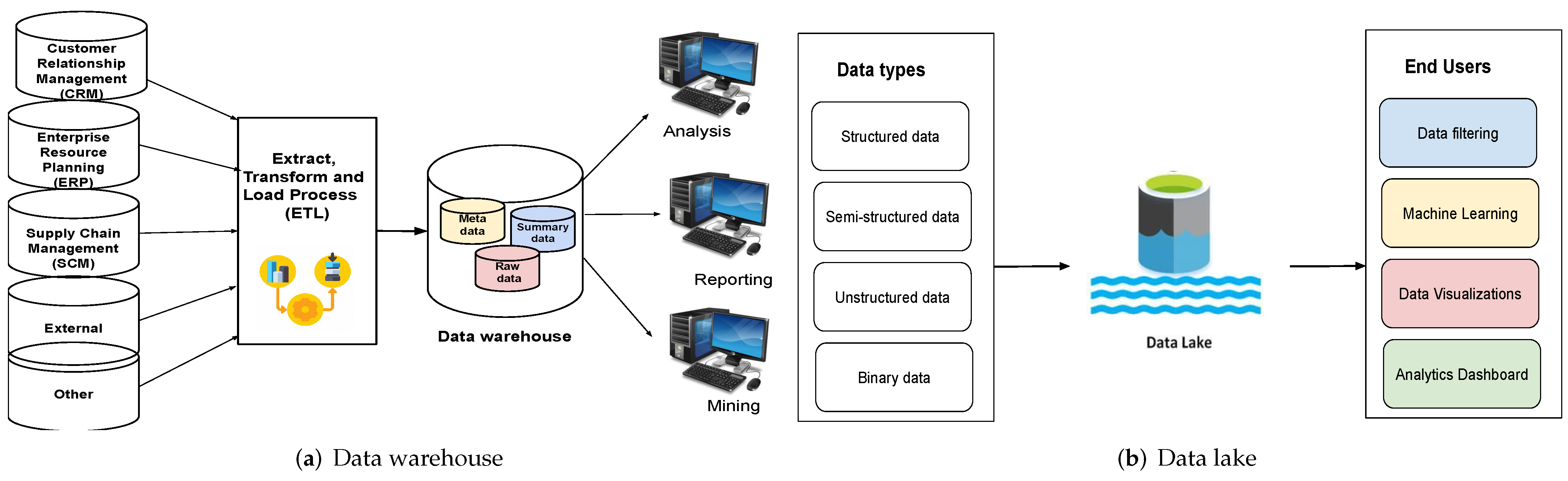

In the DW framework, data are periodically extracted from programs that aid in business operations and duplicated onto specialized processing units. They may then be approved, converted, reconstructed, and augmented with input from various options. The developed DW then becomes a primary origin of data for the production, analysis, and presentation of reports via instantaneous reports, e-portals, and digital readouts. It employs “online analytical processing” (OLAP), whose utility and execution needs differ from those of the “online transaction processing” (OLTP) implementations typically backed up by functional databases. [27,28] OLTP programs often computerize the handling of administrative data processes, such as order entry and banking transactions, which are an organization’s necessary activities. DWs, on the other hand, are primarily concerned with decision assistance. As shown in Figure 1a, a DW integrates data from various sources and helps with analysis, data mining, and reporting. A detailed description of a DW’s architecture is presented in the next major section.

|

DW advancements have benefited various sectors, including production (for supply shipment and client assistance), business (for profiling of clients and stock governance), monetary administration (for claims investigation, risk assessment, billing examination, and detecting fraud), logistics (for vehicle administration), broadcast communications (in order to analyze calls), utility companies (in order to analyze power use), and medical services. [29] The field of data warehousing has seen immense research and development over the last two decades in various research categories such as DW architecture, design, and evolution.

Data lake

By the beginning of the twenty-first century, new types of diverse data were emerging in ever-increasing volumes on the internet and at its interface to the enterprise (e.g., web-based business transactions, real-time streaming, sensor data, and social media). With the huge amount of data around, the need to have better solutions for storing and analyzing large amounts of semi-structured and unstructured data to gain relevant information and valuable insight became apparent. Traditional schema-on-write approaches such as the extract, transform, and load (ETL) process are too inefficient for such data management requirements. This gave rise to another popular modern enterprise data management scheme, the DL. [30,31,32]

DLs are centralized storage repositories that enable users to store raw, unprocessed data in their original format, including unstructured, semi-structured, or structured data, at scale. These help enterprises to make better business decisions via visualizations or dashboards from big data analysis, ML, and real-time analytics. A pictorial representation of a DL is given in Figure 1b, above.

According to Dixon, “whilst a data warehouse seems to be a bottle of water cleaned and ready for consumption, then 'Data Lake' is considered as a whole lake of data in a more natural state.” [33] Another definition for the DL is provided by King [34], as a mechanism that “stores disparate information while ignoring almost everything.” An explanation of DLs from an architectural viewpoint is given by Alrehamy and Walker [35]: “A data lake uses a flat architecture to store data in its raw format. Each data entity in the lake is associated with a unique identifier and a set of extended metadata, and consumers can use purpose-built schemas to query relevant data, which will result in a smaller set of data that can be analyzed to help answer a consumer’s question.” A DL houses data in its original raw form. The data in DLs can vary drastically in size and structure, and they lack any specific organizational structure. A DL can accommodate either very small or huge amounts of data as required. All of these features provide flexibility and scalability to DLs. At the same time, challenges related to the implementation and data analytics associated with DLs also arise.

DLs are becoming increasingly popular for organizations to store their data in a centralized manner. A DL may contain unstructured or multi-structured data, where most of them may have unrealized value for the enterprise. This allows organizations to store their data from different sources without any overhead related to the transformation of the data. [30] This also allows ad hoc data analyses to be performed on this data, which can then be used by organizations to drive key insights and data-driven decision making. DLs replace the previous way of organizing and processing data from various sources with a centralized, efficient, and flexible repository that allows organizations to maximize their gains from a data-driven ecosystem. DLs also allow organizations to scale them to their needs. This is achieved by separating storage from the computational part. Complex transformation and preprocessing of data in the case of DWs is eliminated. The upfront financial overhead of data ingestion is also reduced. Once data are collated in the lake or hub, it is available for analysis for the organization.

The differences between data warehouses and data lakes

Although DWs and DLs are used as two interchangeable terms, they are not the same. [21] One of the major differences between them is the different structures (i.e., processed vs. raw data). A DW stores data in processed and filtered form, whereas a DL stores raw or unprocessed data. Specifically, data are processed and organized into a single schema before being put into the warehouse, whereas raw and unstructured data are fed into a DL. Analysis is performed on the cleansed data in the warehouse. On the contrary, in a DL, data are selected and organized as and when needed.

As for storing processed data, a DW is economical. On the contrary, DLs have a comparatively larger capacity than the DW and are ideal for raw and unprocessed data analysis and employing ML. Another key difference is the objective or purpose of use. Typically, processed data that flow into DWs are used for specific purposes, and hence the storage space will not be wasted, whereas the purpose of usage for the DL is not defined and can ideally be used for any purpose. To use processed or filtered data, no specialized expertise is required, as merely familiarization with the presentation of data (e.g., charts, sheets, tables, and presentations) will do. Hence, DWs can be used by any business or individual. On the contrary, it is comparatively difficult to analyze DLs without familiarity with unprocessed data, hence requiring data scientists with appropriate skills or tools to comprehend them for specific business use. Accessibility or ease of use of data repositories is yet another aspect that differentiates DWs and DLs. Since the architecture of a DL has no proper structure, it has flexibility of use. Instead, the structure of a DW makes sure that no foreign particles invade it, and it is very costly to manipulate. This feature makes it very secure, too. A detailed analysis of the differences between DWs and DLs is given in Table 1.

| ||||||||||||||||||||||||||||||||||||||||||

A literature review of data warehouses and data lakes

A summary of various research works in the field of DWs and DLs is presented here. Table 2 presents a list of various survey articles on DWs and DLs. Mainly, DW review works address architecture modeling and its comparisons [36,37], the evolution of the DW concept [38], real-time data warehousing and ETL [39], etc. Compared with the DW literature reviews, DL papers are relatively fewer in number. DL review works summarize recent approaches and the architecture of DLs [31,32] as well as the design and implementation aspects. [30] To the best of our knowledge, only one work on comparing DWs and DLs was found in the literature. [12] In contrast to that article, our work provides a comprehensive analysis of both data management schemes by addressing various aspects, such as definitions, architecture, practical design considerations, tools and services, challenges, and opportunities in detail. In addition to the survey papers, we also consolidate various works on DWs and DLs in the reported literature and classify them in Table 3 based on their functions and utility.

| |||||||||||||||||||||||||||||||||||||||||||||

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

Architecture

In this section, the architectures of the DW and DL schemes are described in detail. Furthermore, the classification of DW and DL solutions based on function is carried out and summarized as a table.

Data warehouse architecture

The DW architecture contains historical and commutative data from multiple sources. Basically, there are three kinds of architectures [55]:

- Single-tier architecture: This kind of single-layer model minimizes the amount of data stored. It helps remove data redundancy. However, its disadvantage is the lack of a component that separates analytical and transactional processing. This kind of architecture is not frequently used in practice.

- Two-tier architecture: This model separates physically available sources and the DW by means of a staging area. Such an architecture makes sure that all data loaded into the warehouse are in an appropriate cleansed format. Nevertheless, this architecture is not expandable nor can it support many end users. Additionally, it has connectivity problems due to network limitations.

- Three-tier architecture: This is the most widely used architecture for DWs. [56,57] It consists of a top, middle, and bottom tier. In the bottom tier, data are cleansed, transformed, and loaded via backend tools. This tier serves as the database of the data warehouse. The middle tier is an OLAP server that presents an abstract view of the database by acting as a mediator between the end user and the database. The top tier, the front-end client layer, consists of the tools and an API that are used to connect and get data out from the DW (e.g., query tools, reporting tools, managed query tools, analysis tools, and data mining tools).

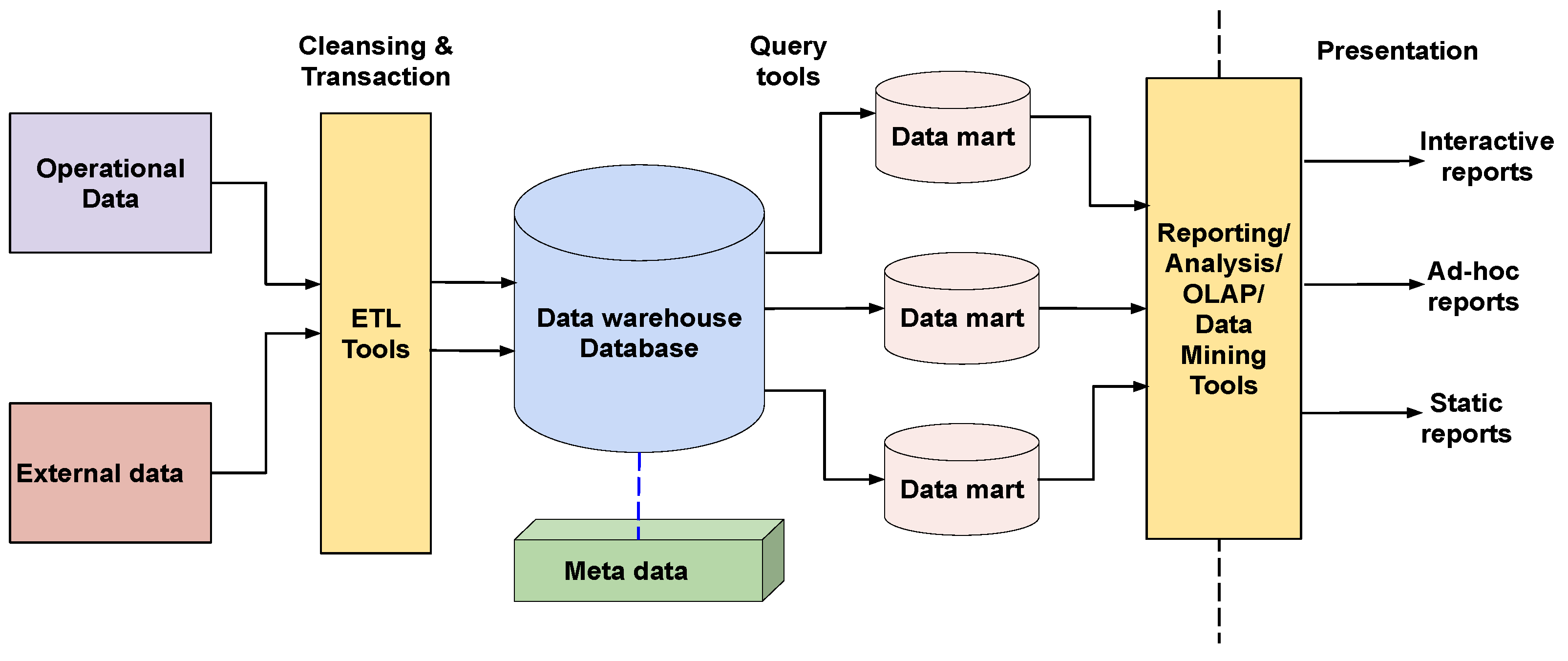

The architecture of a data warehouse is shown in Figure 2. It consists of a central information repository that is surrounded by some key DW components, making the entire environment functional, manageable, and accessible.

|

Various components of the DW (from Figure 2) are now described.

Data warehouse database: The core foundation of the DW environment is its central database. This is implemented using relational database management system (RDBMS) technology. [58] However, there is a limitation to such implementations, since the traditional RDBMS system is optimized for transactional database processing and not for data warehousing. In this regard, the alternative means are (1) the usage of relational databases in parallel, which enables shared memory on various multiprocessor configurations or parallel processors, (2) new index structures to get rid of relational table scanning and improve the speed, and (3) multidimensional databases (MDDBs) used to circumvent the limitations caused by the relational DW models.

Extract, transform, and load (ETL) tools: All the conversions, summarizations, and changes required to transform data into a unified format in the DW are carried out via ETL tools. [59] This ETL process helps the DW achieve enhanced system performance and BI, timely access to data, and a high return on investment:

- Extraction: This involves connecting systems and collecting the data needed for analytical processing.

- Transformation: The extracted data are converted into a standard format.

- Loading: The transformed data are imported into a large data warehouse.

ETL anonymizes data as per regulatory stipulations, thereby anonymizing confidential and sensitive information before loading it into the target data store. [60] ETL eliminates unwanted data in operational databases from loading into DWs. ETL tools carry out amendments to the data arriving from different sources and calculate summaries and derived data. Such ETL tools generate background jobs, Cobol programs, shell scripts, etc. that regularly update the data in the DW. ETL tools also help with maintaining the metadata.

Metadata: Metadata is the data about the data that define the data warehouse. [61] It deals with some high-level technological concepts and helps with building, maintaining, and managing the DW. Metadata plays an important role in transforming data into knowledge, since it defines the source, usage, values, and features of the DW and how to update and process the data in a DW. This is the most difficult tool to choose due to the lack of a clear standard. Efforts are being made among data warehousing tool vendors to unify a metadata model. One category of metadata known as technical metadata contains information about the warehouse that is used by its designers and administrators, whereas another category called business metadata contains details that enable end users to understand the information stored in the DW.

Query tools: Query tools allow users to interact with the DW system and collect information relevant to businesses to make strategic decisions. Such tools can be of different types:

- Query and reporting tools: Such tools help organizations generate regular operational reports and support high-volume batch jobs such as printing and calculating. Some popular reporting tools are Brio, Oracle, Powersoft, and SAS Institute. Similarly, query tools help end users to resolve pitfalls in SQL and database structure by inserting a meta-layer between the users and the database.

- Application development tools: In addition to the built-in graphical and analytical tools, application development tools are leveraged to satisfy the analytical needs of an organization.

- Data mining tools: This tool helps in automating the process of discovering meaningful new correlations and structures by mining large amounts of data.

- OLAP tools: OLAP tools exploit the concepts of a multidimensional database and help analyze the data using complex multidimensional views. [28, 62]. There are two types of OLAP tools: multidimensional OLAP (MOLAP) and relational OLAP (ROLAP). [63] With MOLAP, a cube is aggregated from the relational data source. Based on the user report request, the MOLAP tool generates a prompt result, since all the data are already pre-aggregated within the cube. [64] In the case of ROLAP, the ROLAP engine acts as a smart SQL generator. It comes with a “designer” piece, wherein the administrator specifies the association between the relational tables, attributes, and hierarchy map and the underlying database tables. [65]

Data lake architecture

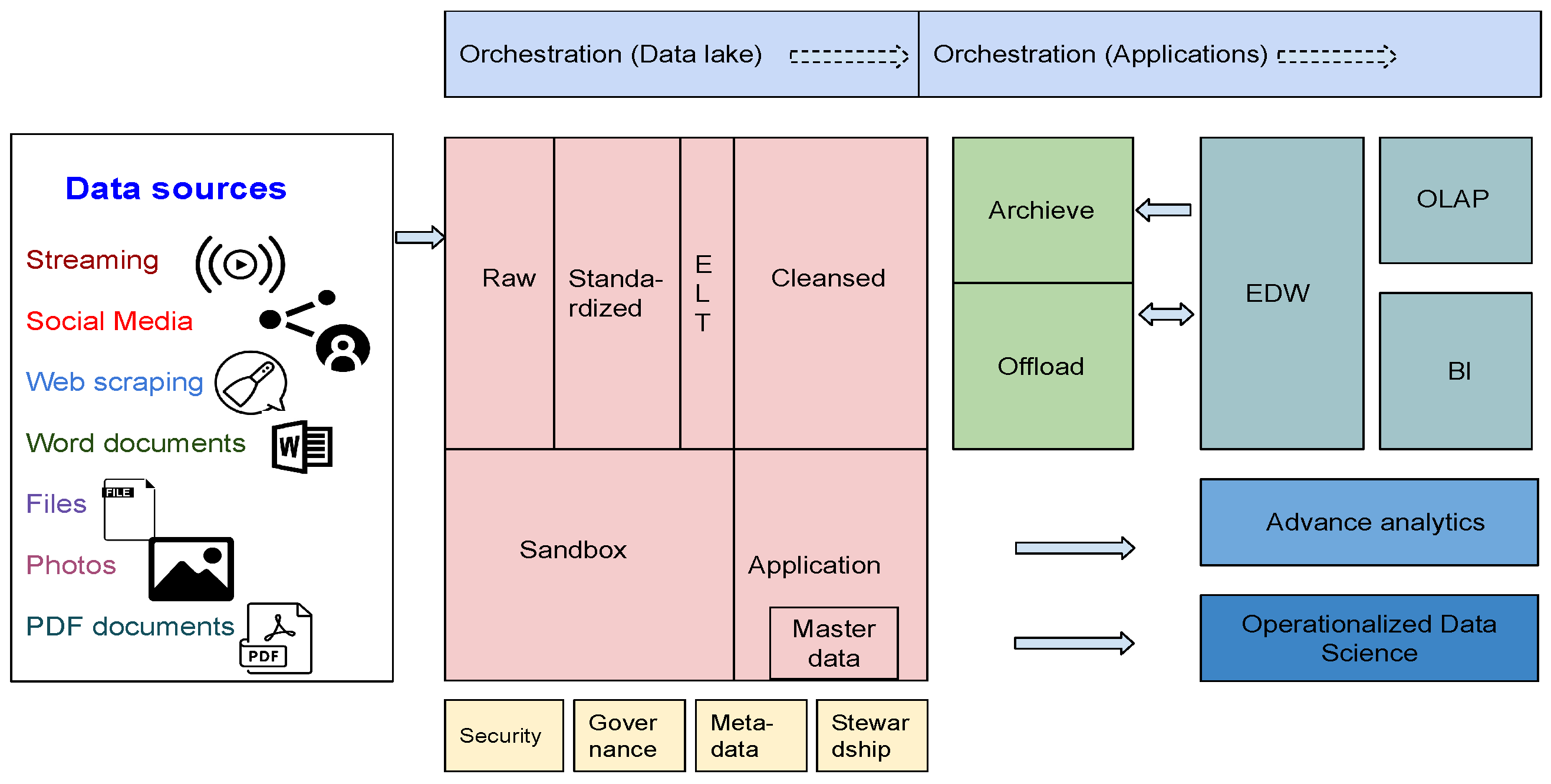

The architecture of a business DL is depicted in Figure 3. Although it is treated as a single repository, it can be distinguished as separate layers in most cases.

|

Various components of the DL (from Figure 3) are now described.

Raw data layer: This layer is also known as the ingestion layer or landing area because it acts as the sink of the DL. The prime goal is to ingest raw data as quickly and as efficiently as possible. No transformations are allowed at this stage. With the help of the archive, it is possible to get back to a point in time with raw data. Overriding (i.e., handling duplicate versions of the same data) is not permitted. End users are not granted access to this layer. These are not ready-to-use data, and they need a lot of knowledge in terms of relevant consumption.

Standardized data layer: This is optional in most implementations. If one expects fast growth for his or her DL architecture, then this is a good option. The prime objective of the standardized layer is to boost the performance of the data transfer from the raw layer to the curated layer. In the raw layer, data are stored in their native format, whereas in the standardized layer, the appropriate format that fits best for cleansing is selected.

Cleansed layer or curated layer: In this layer, data are transformed into consumable data sets and stored in files or tables. This is one of the most complex parts of the whole DL solution since it requires cleansing, transformation, denormalization, and consolidation of different objects. Furthermore, the data are organized by purpose, type, and file structure. Usually, end users are granted access only to this layer.

Application layer: This is also known as the trusted layer, secure layer, or production layer. This is sourced from the cleansed layer and enforced with requisite business logic. In case the applications use ML models on the DL, they are obtained from here. The structure of the data is the same as in the cleansed layer.

Sandbox data layer: This is also another optional layer that is meant for analysts’ and data scientists’ work to carry out experiments and search for patterns or correlations. The sandbox data layer is the proper place to enrich the data with any source from the internet.

Security: While DLs are not exposed to a broad audience, the security aspects are of great importance, especially during the initial phase and architecture. These are not like relational databases, which have an artillery of security mechanisms.

Governance: Monitoring and logging operations become crucial at some point while performing analysis.

Metadata: This is the data about data. Most of the schemas reload additional details of the purpose of data, with descriptions on how they are meant to be exploited.

Stewardship: Based on the scale that is required, either the creation of a separate role or delegation of this responsibility to the users will be carried out, possibly through some metadata solutions.

Master data: This is an essential part of serving ready-to-use data. It can be either stored on the DL or referenced while executing ELT processes.

Archive: DLs keep some archive data that come from data warehousing. Otherwise, performance and storage-related problems may occur.

Offload: This area helps to offload some time- and resource-consuming ETL processes to a DL in case of relational data warehousing solutions.

Orchestration and ELT processes: Once the data are pushed from the raw layer through the cleansed layer and to the sandbox and application layers, a tool is required to orchestrate the flow. Either an orchestration tool or some additional resources to execute them are leveraged in this regard.

Many implementations of a DL are originally based on Apache Hadoop. The Highly Available Object Oriented Data Platform (Hadoop) is a widely popular big data tool especially suitable for batch processing workloads of big data. [66] It uses the Hadoop Distributed File System (HDFS) as its core storage and MapReduce (MR) as the basic computing model. Novel computing models are constantly proposed to cope with the increasing needs for batch processing performance (e.g., Tez, Spark, and Presto). [67,68] The MR model has also been replaced with the directed acyclic graph (DAG) model, which improves computing models’ abstract concurrency.

The second phase of DL evolution has happened with the arrival of Lambda Architecture [69,70], owing to the constant changes in data processing capabilities and processing demand. It presents stream computing engines, such as Storm, Spark Streaming, and Flink. [71] In such a framework, batch processing is combined with stream computing to meet the needs of many emerging applications. Yet another advanced phase is seen with Kappa Architecture. [72] The two models of batch processing and stream computing are unified by improving the stream computing concurrency and increasing the time window of streaming data. In this regard, stream computing is used that features an inherent and scalable distributed architecture.

Design aspects

References

- ↑ Moore, M. (7 April 2016). "The 7 V’s of Big Data". Impact.com. https://impact.com/marketing-intelligence/7-vs-big-data/. Retrieved 25 September 2022.

- ↑ Quix, Christoph; Hai, Rihan; Vatov, Ivan (30 December 2016). "Metadata Extraction and Management in Data LakesWith GEMMS". Complex Systems Informatics and Modeling Quarterly (9): 67–83. doi:10.7250/csimq.2016-9.04. https://csimq-journals.rtu.lv/article/view/csimq.2016-9.04.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. In some cases important information was missing from the references, and that information was added. Inline URLs from the original were turned into full citations for this version. The original, in Table 3, attributes the reference as "Metadata Extraction and Management in Data Lakes with GEMMS" but uses citation 30, which is something different, and a citation for "Metadata Extraction and Management in Data Lakes with GEMMS" doesn't exist in the original; it was added as new for this version.