Journal:Privacy preservation techniques in big data analytics: A survey

| Full article title | Privacy preservation techniques in big data analytics: A survey |
|---|---|
| Journal | Journal of Big Data |
| Author(s) | Rao, P. Ram Mohan; Krishna, S. Murali; Kumar, A.P. Siva |
| Author affiliation(s) | MLR Institute of Technology, Sri Venkateswara College of Engineering, JNTU Anantapur |
| Primary contact | Email: rammohan04 at gmail dot com |
| Year published | 2018 |
| Volume and issue | 5 |
| Page(s) | 33 |
| DOI | 10.1186/s40537-018-0141-8 |
| ISSN | 2196-1115 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://link.springer.com/article/10.1186/s40537-018-0141-8 |
| Download | https://link.springer.com/content/pdf/10.1186%2Fs40537-018-0141-8.pdf (PDF) |

Abstract

Enormous amounts of data are being generated by organizations such as hospitals, banks, e-commerce companies, retailers, and supply chains by virtue of digital technology. Not only humans but also machines contribute to data streams in the form of closed circuit television (CCTV) streaming, web site logs, etc. Large volumes of data are generated every minute by social media and smart phones. The voluminous data generated from these sources can be processed and analyzed to support decision making. However, data analytics is prone to privacy violations. One application of data analytics is the recommendation system, which is widely used by e-commerce sites like Amazon and Flipkart to suggest products to customers based on their buying habits, and which can open the door to inference attacks. Although data analytics is useful in decision making, it can lead to serious privacy concerns. Hence privacy-preserving data analytics has become very important. This paper examines various privacy threats, privacy preservation techniques, and models with their limitations. The authors then propose a data lake-based modernistic privacy preservation technique to handle privacy preservation in unstructured data.

Keywords: data, data analytics, privacy threats, privacy preservation

Introduction

There is exponential growth in the volume and variety of data due to the diverse applications of computers in all domain areas. This growth has been driven by the affordable availability of computer technology, storage, and network connectivity. Large scale data, which also includes person-specific private and sensitive data like gender, zip code, disease, caste, shopping cart, religion, etc., is being stored in a variety of public and private domains. The data holder can then release this data to a third-party data analyst to gain deeper insights and identify hidden patterns which are useful in making important decisions that may help in improving businesses and providing value-added services to customers[1], as well as in activities such as prediction, forecasting, and recommendation.[2] One of the prominent applications of data analytics is the recommendation system, which is widely used by e-commerce sites like Amazon and Flipkart for suggesting products to customers based on their buying habits. Facebook does something similar by suggesting friends, places to visit, and even movies to watch based on our interests. However, releasing user activity data may lead to inference attacks like identifying gender based on user activity.[3] We have studied a number of privacy preserving techniques which are being employed to protect against privacy threats. Each of these techniques has its own merits and demerits. This paper explores the merits and demerits of each of these techniques and also describes the research challenges in the area of privacy preservation. There is always a trade-off between data utility and privacy. This paper also proposes a data lake-based modernistic privacy preservation technique to handle privacy preservation in unstructured data with maximum data utility.

Privacy threats in data analytics

Privacy is the ability of an individual to determine what data can be shared and to employ access control. Once data is in the public or private domain, it poses a threat to individual privacy, as the data is held by a data holder. The data holder can be a social networking application, website, mobile app, e-commerce site, bank, hospital, etc. It is the responsibility of the data holder to ensure the privacy of the users' data. Apart from the data held in various domains, users may knowingly or unknowingly contribute to data leakage. For example, most mobile apps seek access to our contacts, files, camera, etc., and without reading the privacy statement we agree to all of its terms and conditions, thereby contributing to data leakage.

Hence there is a need to educate smart phone users regarding privacy and privacy threats. Some of the key privacy threats include (1) surveillance, (2) disclosure, (3) discrimination, and (4) personal embarrassment and abuse.

Surveillance

Many businesses, such as retail and e-commerce companies, study their customers' buying habits and try to come up with various offers and value-added services.[4] Based on opinion data and sentiment analysis, social media sites may provide recommendations of new friends, places to visit, people to follow, etc. This is possible only when they continuously monitor their customers' transactions. This is a serious privacy threat as no individual accepts surveillance.

Disclosure

Consider a hospital holding patients' data, which often contains identifying or revealing information such as zip code, gender, age, and disease.[5][6][7] The data holder, the hospital, releases the data to a third party for analysis after anonymizing sensitive personal information so that individuals cannot be identified. The third-party data analyst can map this information to freely available external data sources like census data and then identify the person suffering from a disorder. This is how the private information of a person can be disclosed, which is considered to be a serious privacy breach.
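The mapping step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the records, the names, and the `link` helper are hypothetical and do not come from the paper; the point is that a release with names removed can still be joined to a public source on zip code, gender, and age.

```python
# Hypothetical data for illustration only; none of it comes from the paper.
released = [  # hospital release: names removed, quasi-identifiers kept
    {"zip": "57677", "gender": "M", "age": 27, "disease": "Cardiac problem"},
    {"zip": "57602", "gender": "F", "age": 36, "disease": "Cancer"},
    {"zip": "57678", "gender": "M", "age": 45, "disease": "Skin allergy"},
]
census = [  # public external source that still carries names
    {"name": "John", "zip": "57677", "gender": "M", "age": 27},
    {"name": "Mary", "zip": "57602", "gender": "F", "age": 36},
    {"name": "Alan", "zip": "57690", "gender": "M", "age": 52},
]

QUASI_IDENTIFIERS = ("zip", "gender", "age")


def link(released_rows, external_rows, keys=QUASI_IDENTIFIERS):
    """Return (name, disease) pairs whose quasi-identifiers match exactly one released row."""
    index = {}
    for row in released_rows:
        index.setdefault(tuple(row[k] for k in keys), []).append(row)
    matches = []
    for person in external_rows:
        candidates = index.get(tuple(person[k] for k in keys), [])
        if len(candidates) == 1:  # a unique match means the person is re-identified
            matches.append((person["name"], candidates[0]["disease"]))
    return matches


reidentified = link(released, census)
```

Here two of the three released records are linked back to a name, while the census entry with no matching quasi-identifiers stays unlinked.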

Discrimination

Discrimination is the bias or inequality which can happen when some private information of a person is disclosed. For instance, statistical analysis of electoral results might show that people of one community were completely against the party which formed the government. The government could then neglect that community or be biased against it.

Personal embarrassment and abuse

Whenever a person's private information is disclosed, it can even lead to personal embarrassment or abuse. For example, suppose a person is privately taking medication for a specific problem and buys the medicine on a regular basis from a medical shop. As part of its regular business model, the medical shop may send reminders and offers related to the medicine over the phone. If another family member notices this, it may lead to personal embarrassment and even abuse.[8]

Data analytics activity creates data privacy issues. Many countries are enacting and enforcing privacy preservation laws. Yet something as simple as lack of awareness can still enable privacy attacks despite these mechanisms. For example, many smart phone users are not aware of the information that is stolen from their phones by many apps. Previous research shows only 17 percent of smart phone users are aware of such privacy threats.[9]

Privacy preservation methods

Many privacy preserving techniques have been developed, but most of them are based on anonymization of data. A list of privacy preservation techniques includes:

- K anonymity

- L diversity

- T closeness

- Randomization

- Data distribution

- Cryptographic techniques

- Multidimensional sensitivity-based anonymization (MDSBA)

K anonymity

Anonymization is the process of modifying data before it is given for data analytics[10], so that re-identification is not possible: any attempt to re-identify a person by mapping the anonymized data to external data sources leads to K indistinguishable records. K-anonymity[11] is prone to two attacks, namely the homogeneity attack and the background knowledge attack. Algorithms applied to ensure anonymization include Incognito[12] and Mondrian[13]. K-anonymity is applied on the patient data shown in Table 1. The table shows data before anonymization.

Table 1. Patient data before anonymization

The K-anonymity algorithm is applied with a K value of 3 to ensure three indistinguishable records when an attempt is made to identify a particular person's data. K-anonymity is applied on the two attributes, viz., zip and age shown in Table 1. The result of applying anonymization on the zip and age attributes is shown in Table 2.

Table 2. Patient data after applying K-anonymity (K = 3) on the zip and age attributes

The above technique used generalization[14] to achieve anonymization. Suppose we know that John is 27 years old and lives in the 57677 zip code. We can then still conclude that John has a cardiac problem even after anonymization, as shown in Table 2. This is called a homogeneity attack. Similarly, if John is 36 years old and we know that John does not have cancer, then John must have a cardiac problem. This is called a background knowledge attack. K-anonymity[15][16] can be achieved by using either generalization or suppression. K-anonymity can be optimized if minimal generalization can be done without significant data loss.[17] Identity disclosure is the major privacy threat, and protection against it cannot be guaranteed by K-anonymity.[18] Personalized privacy is the most important aspect of individual privacy.[19]
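Generalization and the K-anonymity check can be sketched as follows. This is a minimal illustration, not the Incognito or Mondrian algorithm: the patient records are hypothetical (the paper's tables are not reproduced here), and the generalization rule (keep three zip digits, bucket age into decades) is an assumption chosen for the example.

```python
from collections import Counter


def generalize(record):
    """Generalize the quasi-identifiers: keep 3 zip digits, bucket age into decades."""
    low = (record["age"] // 10) * 10
    return {
        "zip": record["zip"][:3] + "**",
        "age": f"{low}-{low + 9}",
        "disease": record["disease"],
    }


def is_k_anonymous(records, k, quasi_identifiers=("zip", "age")):
    """True if every combination of quasi-identifier values occurs at least k times."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return all(count >= k for count in groups.values())


# Hypothetical patient data, for illustration only.
patients = [
    {"zip": "57677", "age": 27, "disease": "Cardiac problem"},
    {"zip": "57602", "age": 22, "disease": "Cardiac problem"},
    {"zip": "57678", "age": 29, "disease": "Cardiac problem"},
    {"zip": "57905", "age": 43, "disease": "Skin allergy"},
    {"zip": "57909", "age": 48, "disease": "Cardiac problem"},
    {"zip": "57906", "age": 47, "disease": "Cancer"},
]
anonymized = [generalize(p) for p in patients]
raw_ok = is_k_anonymous(patients, k=3)          # every raw record is unique
anonymized_ok = is_k_anonymous(anonymized, k=3)  # two classes of three records each
```

In this example the generalized data is 3-anonymous, yet every record in the `576**` class carries the same disease, which is exactly the situation the homogeneity attack exploits.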

L-diversity

To address the homogeneity attack, another technique called L-diversity has been proposed. As per L-diversity, there must be L well-represented values for the sensitive attribute (disease) in each equivalence class.

Implementing L-diversity is not always possible because of the variety of data. L-diversity is also prone to the skewness attack: when the overall distribution of data is skewed into a few equivalence classes, protection against attribute disclosure cannot be ensured. For example, if all the records are distributed into only three equivalence classes, then semantic closeness of these values may lead to attribute disclosure. L-diversity may also lead to a similarity attack. From Table 3, it can be noticed that if we know that John is 27 years old and lives in the 57677 zip code, then John definitely falls under the low income group because the salaries of all three persons in the 576** zip are low compared to others in the table. This is called a similarity attack.

Table 3. Data used to illustrate L-diversity and the similarity attack
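One simple reading of "L well-represented values" is distinct L-diversity, where each equivalence class must contain at least L different sensitive values. The sketch below assumes that reading; the records and the `distinct_l_diversity` helper are hypothetical and are not taken from the paper's tables.

```python
from collections import defaultdict


def distinct_l_diversity(records, quasi_identifiers, sensitive):
    """Return the smallest number of distinct sensitive values found in any equivalence class."""
    classes = defaultdict(set)
    for r in records:
        classes[tuple(r[q] for q in quasi_identifiers)].add(r[sensitive])
    return min(len(values) for values in classes.values())


# Hypothetical generalized records, for illustration only.
records = [
    {"zip": "576**", "age": "20-29", "disease": "Cardiac problem"},
    {"zip": "576**", "age": "20-29", "disease": "Cancer"},
    {"zip": "576**", "age": "20-29", "disease": "Skin allergy"},
    {"zip": "579**", "age": "40-49", "disease": "Cardiac problem"},
    {"zip": "579**", "age": "40-49", "disease": "Cardiac problem"},
    {"zip": "579**", "age": "40-49", "disease": "Cancer"},
]
achieved_l = distinct_l_diversity(records, ("zip", "age"), "disease")
```

The data set as a whole is only as diverse as its weakest class, so the function reports the minimum. Counting distinct values says nothing about how semantically close those values are, which is why the similarity attack still applies.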

T-closeness

Another improvement to L-diversity is the T-closeness measure, where an equivalence class is considered to have T-closeness if the distance between the distribution of the sensitive attribute in the class and the distribution of that attribute in the whole table is no more than a threshold T; a table has T-closeness if all its equivalence classes have T-closeness.[20] T-closeness can be calculated on every attribute with respect to the sensitive attribute.

From Table 4 it can be observed that even if we know John is 27 years old, it will still be difficult to estimate whether John has a cardiac problem or not, and whether he falls under the low income group or not. T-closeness may protect against attribute disclosure, but implementing T-closeness may not give a proper distribution of data every time.

Table 4. Data used to illustrate T-closeness
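The T-closeness check can be sketched by comparing each class's distribution of the sensitive attribute with the distribution over the whole table. The sketch below is a simplification: it uses the total variation distance, which coincides with the Earth Mover's Distance only when all categorical values are treated as equally far apart. The records and function names are hypothetical.

```python
from collections import Counter, defaultdict


def distribution(values):
    """Relative frequency of each value."""
    total = len(values)
    return {v: c / total for v, c in Counter(values).items()}


def total_variation(p, q):
    """Half the L1 distance between two discrete distributions."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def max_class_distance(records, quasi_identifiers, sensitive):
    """Largest distance between any class distribution and the overall distribution."""
    overall = distribution([r[sensitive] for r in records])
    classes = defaultdict(list)
    for r in records:
        classes[tuple(r[q] for q in quasi_identifiers)].append(r[sensitive])
    return max(total_variation(distribution(v), overall) for v in classes.values())


# Hypothetical generalized records, for illustration only.
records = [
    {"zip": "576**", "disease": "Cardiac problem"},
    {"zip": "576**", "disease": "Cardiac problem"},
    {"zip": "576**", "disease": "Cancer"},
    {"zip": "579**", "disease": "Cardiac problem"},
    {"zip": "579**", "disease": "Cancer"},
    {"zip": "579**", "disease": "Cancer"},
]
worst = max_class_distance(records, ("zip",), "disease")
satisfies_t = worst <= 0.2  # T = 0.2 is an arbitrary threshold chosen for the example
```

With these records each class deviates from the overall 50/50 split by one sixth, so the table satisfies the check for T = 0.2 but would fail it for T = 0.1.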

Randomization technique

Randomization is the process of adding noise to the data, with the noise generally drawn from a probability distribution.[21] Randomization is applied in surveys, sentiment analysis, etc. Randomization does not need knowledge of other records in the data. It can be applied during data collection and pre-processing time. There is no anonymization overhead in randomization. However, applying randomization on large datasets is not practical because of time complexity and loss of data utility, as observed in our experiment described below.

We have loaded 10,000 records from an employee database into the Hadoop Distributed File System and processed them by executing a MapReduce job. We have experimented to classify the employees based on their salary and age groups. In order to apply randomization we added noise in the form of 5,000 records which are randomly added to make a database of 15,000 records. The following observations were made after running the MapReduce job.

- More Mappers and Reducers were used as data volume increased.

- Results before and after randomization were significantly different.

- Some of the records, which are outliers, remain unaffected with randomization and are vulnerable to an adversary attack.

- Privacy preservation at the cost of data utility is not desirable, and hence randomization may not be suitable for privacy preservation, especially against attribute disclosure.
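The observation about outliers can be illustrated with a small additive-noise sketch. Note that this is a different form of randomization from the experiment above, which inserted extra random records; here zero-mean Gaussian noise is added to each value. The salaries, the noise level `sigma`, and the `randomize` helper are assumptions made for the example.

```python
import random
import statistics


def randomize(values, sigma, seed=0):
    """Add independent zero-mean Gaussian noise to every value."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    return [v + rng.gauss(0.0, sigma) for v in values]


# Hypothetical salaries; the last one is an outlier.
salaries = [32000, 41000, 38500, 45000, 36000, 52000, 47500, 900000]
noisy = randomize(salaries, sigma=5000)

# Position of the largest value before and after adding noise.
outlier_before = salaries.index(max(salaries))
outlier_after = noisy.index(max(noisy))
mean_shift = abs(statistics.mean(noisy) - statistics.mean(salaries))
```

Because the outlier is far larger than the noise, it remains the largest value after randomization and is still easy to single out, while stronger noise would distort the aggregate results further.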

Data distribution technique

In this technique, the data is distributed across many sites. Distribution of the data can be done in two ways: horizontal distribution of data and vertical distribution of data.

Horizontal distribution

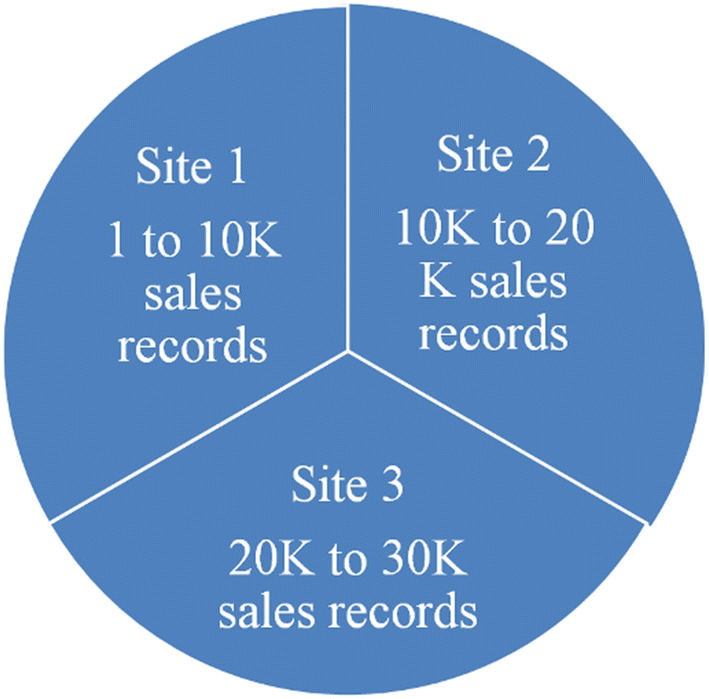

When data is distributed across many sites with the same attributes, then the distribution is said to be a horizontal distribution, which is described in Fig. 1.

Horizontal distribution of data can be applied only when some aggregate functions or operations are to be applied on the data without actually sharing the data. For example, if a retail store wants to analyze their sales across various branches, they may employ some analytics which does computations on aggregate data. However, as part of data analysis the data holder may need to share the data with a third-party analyst, which may lead to a privacy breach. Classification and clustering algorithms can be applied on distributed data, but that application does not ensure privacy. If the data is distributed across different sites which belong to different organizations, then the results of aggregate functions may help one party in detecting the data held with other parties. In such situations we expect all participating sites to be honest with each other.[21]
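A minimal sketch of aggregate computation over horizontally distributed data follows. The branch names, sales figures, and helper functions are hypothetical; the assumption is that each site shares only a local (sum, count) pair with a coordinator and never its raw records.

```python
def local_aggregate(sales):
    """Runs at each site; only the (sum, count) pair leaves the site."""
    return sum(sales), len(sales)


def combine(aggregates):
    """Runs at the coordinator; computes the overall mean from the local aggregates."""
    total = sum(s for s, _ in aggregates)
    count = sum(c for _, c in aggregates)
    return total / count


# Hypothetical per-branch sales records with the same attributes at every site.
branches = {
    "branch_a": [100, 200, 300],
    "branch_b": [400],
    "branch_c": [50, 150],
}
shared = {name: local_aggregate(sales) for name, sales in branches.items()}
overall_mean = combine(shared.values())
```

The example also shows the limitation noted above: the aggregate published by `branch_b` covers a single record, so it reveals that record exactly to the other parties.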

Vertical distribution of data

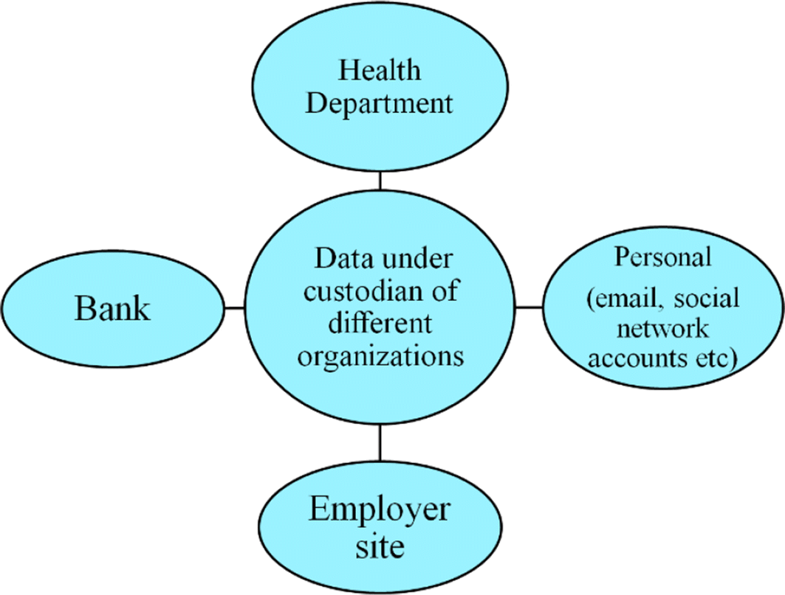

When personal identifying information is distributed across different sites under the custodianship of different organizations, then the distribution is called vertical distribution, as shown in Fig. 2. For example, in crime investigations, police officials would like to know details of a particular criminal, including health, professional, financial, and personal information. All this information may not be available at one site. Such a distribution is called a vertical distribution, where each site holds a subset of the attributes of a person. When some form of analytics has to be performed, data has to be pooled from all these sites, which opens up the possibility of a privacy breach.

In order to perform data analytics on vertically distributed data, where the attributes are distributed across different sites under the custodianship of different parties, it is highly difficult to ensure privacy if the datasets are shared. For example, as part of a police investigation, the investigating officer wants to access some information about the accused from his employer, health department, or bank to gain more insights about the character of the person. In this process some of the personal and sensitive information of the accused may be disclosed to the investigating officer, potentially leading to personal embarrassment or abuse. Anonymization cannot be applied when entire records are not needed for analytics. Distribution of data will not ensure privacy preservation but it closely overlaps with cryptographic techniques.

Cryptographic techniques

The data holder may encrypt the data before releasing it for analytics. But encrypting large scale data using conventional encryption techniques is highly difficult and must be applied only at the time of data collection. Differential privacy techniques have already been applied where some aggregate computations on the data are done without actually sharing the inputs. For example, if x and y are two data items, then a function F(x, y) will be computed to gain some aggregate information from both x and y without actually sharing x and y. This can be applied when x and y are held by different parties, as in the case of vertical distribution. However, if the data is at a single location under the custodianship of a single organization, then differential privacy cannot be employed. Another similar technique called secure multiparty computation has been used, but it proves to be inadequate in privacy preservation. Data utility will be reduced if encryption is applied during data analytics. Thus encryption is not only difficult to implement; it also reduces data utility.[22]
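The idea of computing F(x, y) without revealing the inputs can be illustrated with additive secret sharing, a building block usually associated with secure multiparty computation. The sketch below takes F to be a sum over three parties; the modulus, the input values, and the function names are assumptions, and the code simulates all parties in one process rather than over a network.

```python
import secrets

MODULUS = 2**61 - 1  # assumed modulus; inputs must be non-negative and smaller than this


def make_shares(value, n_parties, modulus=MODULUS):
    """Split a value into n random-looking shares that add up to it modulo the modulus."""
    shares = [secrets.randbelow(modulus) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % modulus)
    return shares


def secure_sum(private_values, modulus=MODULUS):
    """Simulate a secure-sum round: parties exchange shares, then publish partial sums."""
    n = len(private_values)
    # share_matrix[i][j] is the share that party i sends to party j
    share_matrix = [make_shares(v, n, modulus) for v in private_values]
    # each party j adds up the shares it received and publishes only that partial sum
    partial_sums = [sum(share_matrix[i][j] for i in range(n)) % modulus for j in range(n)]
    return sum(partial_sums) % modulus


total = secure_sum([120, 75, 300])  # hypothetical private inputs of three parties
```

No single share or partial sum reveals an individual input, but the result itself can: with only two parties, each one could subtract its own input from the sum and recover the other's value.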

Multidimensional sensitivity-based anonymization (MDSBA)

Bottom-up generalization[23] and top-down generalization[24] are the conventional methods of anonymization applied to well-represented, structured data records. However, applying the same techniques to large scale data sets is very difficult, leading to issues of scalability and information loss. Multidimensional sensitivity-based anonymization is an improved version of anonymization that has been shown to be more effective than conventional anonymization techniques.

Multidimensional sensitivity-based anonymization is an improved anonymization[25] technique that can be applied on large data sets with reduced loss of information and predefined quasi-identifiers. As part of this technique, the Apache Hadoop MapReduce[26] framework has been used to handle large data sets. In the conventional Hadoop Distributed File System, the data is divided into blocks of either 64 MB or 128 MB each and distributed across different nodes without considering the data inside the blocks. As part of the MDSBA[27] technique, the data is split into different packets based on the probability distribution of the quasi-identifiers by making use of filters in the Apache Pig scripting language.

MDSBA makes use of bottom-up generalization but on a set of attributes with certain class values where class represents a sensitive attribute. Data distribution is made effective when compared to a conventional method of blocks. Data anonymization can be done using four quasi-identifiers using Apache Pig.

Since the data is vertically partitioned into different groups, it can protect from background knowledge attack if the packet contains only a few attributes. This method also makes it difficult to map the data with external sources to disclose any personal identifying information.

In this method, the implementation is done using Apache Pig, a scripting language, hence development effort is less. However, code efficiency of Apache Pig is relatively lower when compared to a MapReduce job because ultimately every Apache Pig script has to be converted into a MapReduce job. MDSBA is more appropriate for large scale data but only when the data is at rest.[28] MDSBA cannot be applied to streaming data.

Analysis

Various privacy preservation techniques have been studied with respect to features, including type of data, data utility, attribute preservation, and complexity. The comparison of various privacy preservation techniques is shown in Table 5.

Table 5. Comparison of privacy preservation techniques

Results and discussion

As part of a systematic literature review, it has been observed that all existing mechanisms of privacy preservation are designed for structured data. More than 80 percent of data being generated today is unstructured.[29] As such, there is a need to address the following challenges:

- i. Develop a concrete solution to protect privacy in both structured and unstructured data.

- ii. Develop scalable and robust techniques to handle large scale heterogeneous data sets.

- iii. Data should be allowed to stay in its native form without need for transformation, and data analytics can be carried out while ensuring privacy preservation.

- iv. New techniques apart from anonymization must be developed to ensure protection against key privacy threats, which include identity disclosure, discrimination, surveillance, etc.

- v. Maximize data utility while ensuring data privacy.

Conclusion

No concrete solution for unstructured data has been developed yet. Conventional data mining algorithms can be applied for classification and clustering problems but cannot be used in privacy preservation, especially when dealing with personal identifying information. Machine learning and soft computing techniques can be used to develop new and more appropriate solutions to privacy problems, which include identity disclosure that can lead to personal embarrassment and abuse.

There is a strong need for law enforcement by governments of all countries to ensure individual privacy. The European Union[30] is making an attempt to enforce privacy preservation law. Apart from technological solutions, there is a strong need to create awareness among the people regarding privacy hazards to safeguard themselves from privacy breaches. One of the more serious privacy threats comes from the smart phone. Significant amounts of personal information in the form of contacts, messages, chats, and files are being accessed by many apps running in our smart phone without our knowledge. Most of the time people do not even read the privacy statement before installing an application. Hence there is a strong need to educate people on the various vulnerabilities which can contribute to leakage of private information.

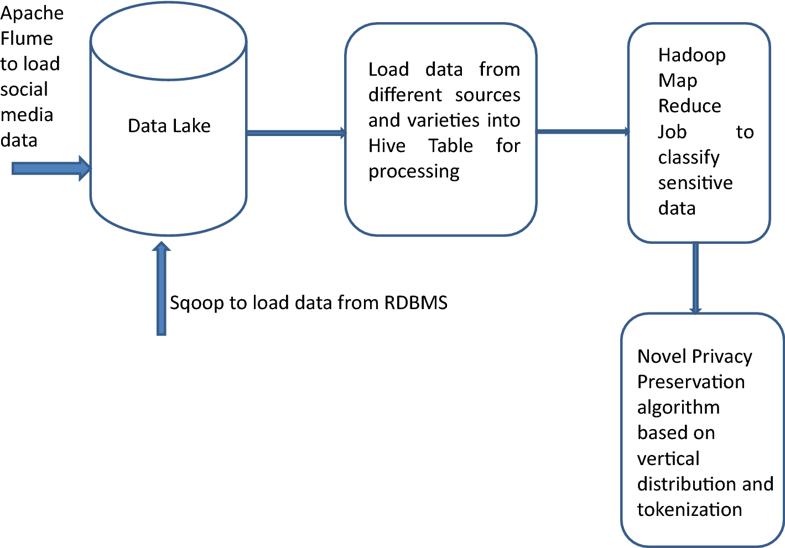

We propose a novel privacy preservation model based on the "data lake" concept to hold a variety of data from diverse sources. A data lake is a repository used to hold data from diverse sources in their raw format.[31][32] Data ingestion from a variety of sources can be done using Apache Flume, and an intelligent algorithm based on machine learning can be applied to identify sensitive attributes dynamically.[33][34] The algorithm will be trained with existing data sets with known sensitive attributes, and rigorous training of the model will help in predicting the sensitive attributes in a given data set.[35] Accuracy of the model can be improved by adding more layers of training, leading to deep learning techniques.[36] Advanced computing techniques like Apache Spark can be used in implementing privacy preserving algorithms, using distributed massive parallel computing with in-memory processing to ensure very fast processing.[37] The proposed model is shown in Fig. 3.

Data analytics is done on the data collected from various sources. If an e-commerce site would like to perform data analytics, it needs transactional data, website logs, and customer opinions from social media pages. A data lake is used to collect data from different sources. Apache Flume is used to ingest data from social media sites, while website logs flow into the Hadoop Distributed File System (HDFS). Using Apache Sqoop, relational data can be loaded into HDFS.

In a data lake, the data can remain in its native form, which is either structured or unstructured. When data has to be processed, it can be transformed into Apache Hive tables. A Hadoop MapReduce job using machine learning can be executed on the data to classify the sensitive attributes.[38] The data can then be vertically distributed to separate the sensitive attributes from the rest of the data, with tokenization applied to map the vertically distributed data. The data without any sensitive attributes can be published for data analytics.
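The final step, separating sensitive attributes and linking the two parts with tokens, could look roughly like the sketch below. It is not the authors' implementation: the records and helper names are hypothetical, the set of sensitive attributes is supplied by hand here rather than predicted by a trained classifier, and the token vault is a plain in-memory dictionary.

```python
import secrets


def split_with_tokens(records, sensitive_attributes):
    """Split each record vertically; a random token links the two halves."""
    public, vault = [], {}
    for record in records:
        token = secrets.token_hex(8)  # random token, unrelated to the record's content
        vault[token] = {k: v for k, v in record.items() if k in sensitive_attributes}
        public_row = {k: v for k, v in record.items() if k not in sensitive_attributes}
        public_row["token"] = token
        public.append(public_row)
    return public, vault


def rejoin(public_row, vault):
    """Only a holder of the vault can restore the full record."""
    merged = {k: v for k, v in public_row.items() if k != "token"}
    merged.update(vault[public_row["token"]])
    return merged


# Hypothetical e-commerce records, for illustration only.
orders = [
    {"order_id": 1, "category": "books", "name": "John", "phone": "555-0101"},
    {"order_id": 2, "category": "shoes", "name": "Mary", "phone": "555-0102"},
]
published, vault = split_with_tokens(orders, sensitive_attributes={"name", "phone"})
```

The `published` rows can be handed to an analyst, while the `vault` stays with the data holder. Whether the remaining attributes act as quasi-identifiers still has to be assessed separately.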

Acknowledgements

I would like to thank my guides for supporting my work and for suggesting necessary corrections.

Authors’ contributions

PRMR: as part of Ph.D. work I have done my literature survey and submitted my work in the form of a paper. SMK: supported me in compiling the paper. APSK: suggested necessary amendments and helped in revising the paper. All authors read and approved the final manuscript.

Availability of data and materials

If anyone is interested in our work, we are ready to provide more details of the MapReduce job which we have executed and the data processing techniques applied. The data used in our work is freely available in many repositories.

Funding

No funding.

Competing interests

The authors declare that they have no competing interests.

Abbreviations

- CCTV: closed circuit television

- MDSBA: multidimensional sensitivity-based anonymization

References

- Ducange, P.; Pecori, R.; Mezzina, P. (2018). "A glimpse on big data analytics in the framework of marketing strategies". Soft Computing 22 (1): 325–42. doi:10.1007/s00500-017-2536-4.

- Chauhan, A.; Kummamuru, K.; Toshniwal, D. (2017). "Prediction of places of visit using tweets". Knowledge and Information Systems 50 (1): 145–66. doi:10.1007/s10115-016-0936-x.

- Yang, D.; Qu, B.; Cudre-Mauroux, P. (2018). "Privacy-Preserving Social Media Data Publishing for Personalized Ranking-Based Recommendation". IEEE Transactions on Knowledge and Data Engineering. doi:10.1109/TKDE.2018.2840974.

- Liu, Y.; Guo, W.; Fan, C.-I. et al. (2018). "A Practical Privacy-Preserving Data Aggregation (3PDA) Scheme for Smart Grid". IEEE Transactions on Industrial Informatics. doi:10.1109/TII.2018.2809672.

- Duncan, G.T.; Fienberg, S.E.; Krishnan, R. et al. (2001). "Disclosure limitation methods and information loss for tabular data". In Doyle, P.; Lane, J.; Theeuwes, J. et al. Confidentiality, disclosure and data access: Theory and practical applications for statistical agencies. Elsevier. pp. 135–66. ISBN 9780444507617.

- Duncan, G.T.; Lambert, D. (1986). "Disclosure-Limited Data Dissemination". Journal of the American Statistical Association 81 (393): 10–18. doi:10.1080/01621459.1986.10478229.

- Lambert, D. (1993). "Measures of disclosure risk and harm". Journal of Official Statistics 9 (2): 313–31.

- Spiller, K.; Ball, K.; Bandara, A. et al. (2017). "Data Privacy: Users’ Thoughts on Quantified Self Personal Data". In Ajana, B. Self-Tracking. Palgrave Macmillan, Cham. pp. 111–24. doi:10.1007/978-3-319-65379-2_8. ISBN 9783319653792.

- Hettig, M.; Kiss, E.; Jassel, J.-F. et al. (2013). "Visualizing Risk by Example: Demonstrating Threats Arising From Android Apps". Symposium on Usable Privacy and Security (SOUPS) 2013: 1–2. https://cups.cs.cmu.edu/soups/2013/risk/paper.pdf.

- Iyengar, V.S. (2002). "Transforming data to satisfy privacy constraints". Proceedings of the Eighth ACM SIGKDD International Conference on Knowledge Discovery and Data Mining: 279–88. doi:10.1145/775047.775089.

- Bayardo, R.J.; Agrawal, R. (2005). "Data privacy through optimal k-anonymization". Proceedings of the 21st International Conference on Data Engineering: 217–28. doi:10.1109/ICDE.2005.42.

- LeFevre, K.; DeWitt, D.J.; Ramakrishnan, R. (2005). "Incognito: Efficient full-domain K-anonymity". Proceedings of the 2005 ACM SIGMOD International Conference on Management of Data: 49–60. doi:10.1145/1066157.1066164.

- LeFevre, K.; DeWitt, D.J.; Ramakrishnan, R. (2006). "Mondrian: Multidimensional K-Anonymity". Proceedings of the 22nd International Conference on Data Engineering: 25. doi:10.1109/ICDE.2006.101.

- Samarati, P.; Sweeney, L. (1998). "Protecting Privacy when Disclosing Information: k-Anonymity and its Enforcement through Generalization and Suppression". Technical Report SRI-CSL-98-04. SRI International. http://www.csl.sri.com/papers/sritr-98-04/.

- Sweeney, L. (2002). "Achieving k-anonymity privacy protection using generalization and suppression". International Journal of Uncertainty, Fuzziness and Knowledge-Based Systems 10 (5): 571–88. doi:10.1142/S021848850200165X.

- Sweeney, L. (2002). "K-Anonymity: A model for protecting privacy". International Journal of Uncertainty, Fuzziness and Knowledge-Based Systems 10 (5): 557–70. doi:10.1142/S0218488502001648.

- Meyerson, A.; Williams, R. (2004). "On the complexity of optimal K-anonymity". Proceedings of the Twenty-Third ACM SIGMOD-SIGACT-SIGART Symposium on Principles of Database Systems: 223–28. doi:10.1145/1055558.1055591.

- Xiao, X.; Tao, Y. (2006). "Personalized privacy preservation". Proceedings of the 2006 ACM SIGMOD International Conference on Management of Data: 229–40. doi:10.1145/1142473.1142500.

- Rubner, Y.; Tomasi, C.; Guibas, L.J. (2000). "The Earth Mover's Distance as a Metric for Image Retrieval". International Journal of Computer Vision 40 (2): 99–121. doi:10.1023/A:1026543900054.

- Aggarwal, C.C.; Yu, P.S. (2008). "A General Survey of Privacy-Preserving Data Mining Models and Algorithms". In Aggarwal, C.C.; Yu, P.S. Privacy-Preserving Data Mining. Springer. pp. 11–52. doi:10.1007/978-0-387-70992-5_2. ISBN 9780387709925.

- Jiang, R.; Lu, R.; Choo, K.-K. R. (2018). "Achieving high performance and privacy-preserving query over encrypted multidimensional big metering data". Future Generation Computer Systems 78 (Part 1): 392–401. doi:10.1016/j.future.2016.05.005.

- Wang, K.; Yu, P.S.; Chakraborty, S. (2004). "Bottom-up generalization: a data mining solution to privacy protection". Proceedings from the Fourth IEEE International Conference on Data Mining: 249–56. doi:10.1109/ICDM.2004.10110.

- Fung, B.C.M.; Wang, K.; Yu, P.S. (2005). "Top-down specialization for information and privacy preservation". Proceedings from the 21st International Conference on Data Engineering: 205–16. doi:10.1109/ICDE.2005.143.

- Zhang, X.; Yang, C.; Nepal, S. et al. (2013). "A MapReduce Based Approach of Scalable Multidimensional Anonymization for Big Data Privacy Preservation on Cloud". Proceedings from the 2013 International Conference on Cloud and Green Computing: 105–12. doi:10.1109/CGC.2013.24.

- Zhang, X.; Yang, L.T.; Liu, C. et al. (2013). "A Scalable Two-Phase Top-Down Specialization Approach for Data Anonymization Using MapReduce on Cloud". IEEE Transactions on Parallel and Distributed Systems 25 (2): 363–73. doi:10.1109/TPDS.2013.48.

- Al-Zobbi, M.; Shahrestani, S.; Ruan, C. (2017). "Improving MapReduce privacy by implementing multi-dimensional sensitivity-based anonymization". Journal of Big Data 4: 45. doi:10.1186/s40537-017-0104-5.

- Al-Zobbi, M.; Shahrestani, S.; Ruan, C. (2017). "Implementing A Framework for Big Data Anonymity and Analytics Access Control". Proceedings from the 2017 IEEE Trustcom/BigDataSE/ICESS: 873–80. doi:10.1109/Trustcom/BigDataSE/ICESS.2017.325.

- Schneider, C. (25 May 2016). "The biggest data challenges that you might not even know you have". IBM Blogs - Watson. IBM. https://www.ibm.com/blogs/watson/2016/05/biggest-data-challenges-might-not-even-know/.

- Mukherjee, S.; Venkataraman, S. (2016). "Strengthening Privacy Protection with the European General Data Protection Regulation" (PDF). TATA Consultancy Services Ltd. https://www.tcs.com/content/dam/tcs/pdf/technologies/Cyber-Security/Abstract/Strengthening-Privacy-Protection-with-the-European-General-Data-Protection-Regulation.pdf.

- He, X.; Wang, K.; Huang, H. et al. (2018). "QoE-Driven Big Data Architecture for Smart City". IEEE Communications Magazine 56 (2): 88–93. doi:10.1109/MCOM.2018.1700231.

- Ramakrishnan, R.; Sridharan, B.; Douceur, J.R. et al. (2017). "Azure Data Lake Store: A Hyperscale Distributed File Service for Big Data Analytics". Proceedings of the 2017 ACM International Conference on Management of Data: 51–63. doi:10.1145/3035918.3056100.

- Beheshti, A.; Benatallah, B.; Nouri, R. et al. (2017). "CoreDB: A Data Lake Service". Proceedings of the 2017 ACM on Conference on Information and Knowledge Management: 2451–54. doi:10.1145/3132847.3133171.

- Shang, T.; Zhao, Z.; Guan, Z. et al. (2017). "A DP Canopy K-Means Algorithm for Privacy Preservation of Hadoop Platform". In Wen, S.; Wu, W.; Castiglione, A. Cyberspace Safety and Security. Springer. pp. 189–98. doi:10.1007/978-3-319-69471-9_14. ISBN 9783319694719.

- Jia, Q.; Guo, L.; Jin, Z. et al. (2018). "Preserving Model Privacy for Machine Learning in Distributed Systems". IEEE Transactions on Parallel and Distributed Systems 29 (8): 1808–22. doi:10.1109/TPDS.2018.2809624.

- Psychoula, I.; Merdivan, E.; Singh, D. et al. (2018). A Deep Learning Approach for Privacy Preservation in Assisted Living. https://arxiv.org/abs/1802.09359.

- Guller, M. (2015). Big Data Analytics with Spark. APress. pp. 277. ISBN 9781484209646.

- Fung, B.C.M.; Wang, K.; Yu, P.S. et al. (2007). "Anonymizing Classification Data for Privacy Preservation". IEEE Transactions on Knowledge and Data Engineering 19 (5): 711–25. doi:10.1109/TKDE.2007.1015.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation. Grammar was cleaned up for smoother reading. In some cases important information was missing from the references, and that information was added. The citations for 10 and 11 were flipped because the original applied the citation to the title, which we don't do here.