Journal:SCADA system testbed for cybersecurity research using machine learning approach

| Full article title | SCADA system testbed for cybersecurity research using machine learning approach |
|---|---|
| Journal | Future Internet |
| Author(s) | Teixeira, Marcio Andrey; Salman, Tara; Zolanvari, Maede; Jain, Raj; Meskin, Nader; Samaka, Mohammed |
| Author affiliation(s) | Federal Institute of Education, Science, and Technology of Sao Paulo, Washington University in Saint Louis, Qatar University |
| Primary contact | Email: marcio dot andrey at ifsp dot edu dot br |
| Year published | 2018 |
| Volume and issue | 10(8) |
| Page(s) | 76 |
| DOI | 10.3390/fi10080076 |
| ISSN | 1999-5903 |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.mdpi.com/1999-5903/10/8/76/htm |
| Download | https://www.mdpi.com/1999-5903/10/8/76/pdf (PDF) |

Abstract

This paper presents the development of a supervisory control and data acquisition (SCADA) system testbed used for cybersecurity research. The testbed consists of a water storage tank’s control system, which is a stage in the process of water treatment and distribution. Sophisticated cyber-attacks were conducted against the testbed. During the attacks, the network traffic was captured, and features were extracted from the traffic to build a dataset for training and testing different machine learning algorithms. Five traditional machine learning algorithms were trained to detect the attacks: Random Forest, Decision Tree, Logistic Regression, Naïve Bayes, and KNN. Then, the trained machine learning models were built and deployed in the network, where new tests were made using online network traffic. The performance obtained during the training and testing of the machine learning models was compared to the performance obtained during the online deployment of these models in the network. The results show the efficiency of the machine learning models in detecting the attacks in real time. The testbed provides a good understanding of the effects and consequences of attacks on real SCADA environments.

Keywords: cybersecurity, machine learning, SCADA system, network security

Introduction

Supervisory control and data acquisition (SCADA) systems are industrial control systems (ICS) widely used by industries to monitor and control different processes such as oil and gas pipelines, water distribution systems, electrical power grids, etc. These systems provide automated control and remote monitoring of services being used in daily life. For example, state and municipal governments use SCADA systems to monitor and regulate water levels in reservoirs, pipe pressure, and water distribution.

A typical SCADA system includes components like computer workstations, a human-machine interface (HMI), programmable logic controllers (PLCs), sensors, and actuators.[1] Historically, these systems had private and dedicated networks. However, due to the wide-range deployment of remote management, open IP networks (e.g., the internet) are now used for SCADA system communication.[2] This exposes SCADA systems to cyberspace and makes them vulnerable to cyber-attacks launched over the internet.

Machine learning (ML) and artificial intelligence techniques have been widely used to build intelligent and efficient intrusion detection systems (IDS) dedicated to ICS. However, researchers generally develop and train their ML-based security systems using network traces obtained from publicly available datasets. Due to malware evolution and changes in attack strategies, systems trained on these datasets fail to protect against new types of attacks, and consequently, the benchmark datasets should be updated periodically.

This paper presents the deployment of a SCADA system testbed for cybersecurity research and investigates the feasibility of using ML algorithms to detect cyber-attacks in real time. The testbed was built using equipment deployed in real industrial settings. Sophisticated attacks were conducted on the testbed to develop a better understanding of the attacks and their consequences in SCADA environments. The network traffic was captured, including both abnormal and normal traffic. The behavior of both types of traffic (abnormal and normal) was analyzed, and features were extracted to build a new SCADA-IDS dataset. This dataset was then used for training and testing ML models, which were further deployed in the network. The performance of the ML model depends highly on the available datasets. One of the main contributions of this paper is building a new dataset updated with recent and more sophisticated attacks. We argue that IDS using ML models trained with a dataset generated at the process control level could be more efficient, less complicated, and more cost-effective as compared to traditional protection techniques. Five traditional machine learning algorithms were trained to detect the attacks: Random Forest, Decision Tree, Logistic Regression, Naïve Bayes, and KNN. Once trained and tested, the ML models were deployed in the network, where real network traffic was used to analyze the effectiveness and efficiency of the ML models in a real-time environment. We compared the performance obtained during the training and test phase of the ML models with the performance obtained during the online deployment of these models in the network. The online deployment is another contribution of this paper since most of the published papers present the performance of the ML models obtained during the training and test phases. We conducted this research to build an IDS software based on ML models to be deployed in ICS/SCADA systems.

The remainder of this paper is organized as follows. The next section presents a brief background of the ICS-SCADA system reference model and related works. Afterwards, we describe the developed SCADA system testbed, and then we describe the ML algorithms and the performance measurements used in this work. The last three sections present the attack scenarios conducted and the main features of the dataset used to train the algorithms, the results and the interpretations behind them, and a summary of the main points and outcomes.

Background

In this section, we briefly present a description of the ICS-SCADA reference model and some related works in the domain of ML algorithms for SCADA system security.

ICS reference model

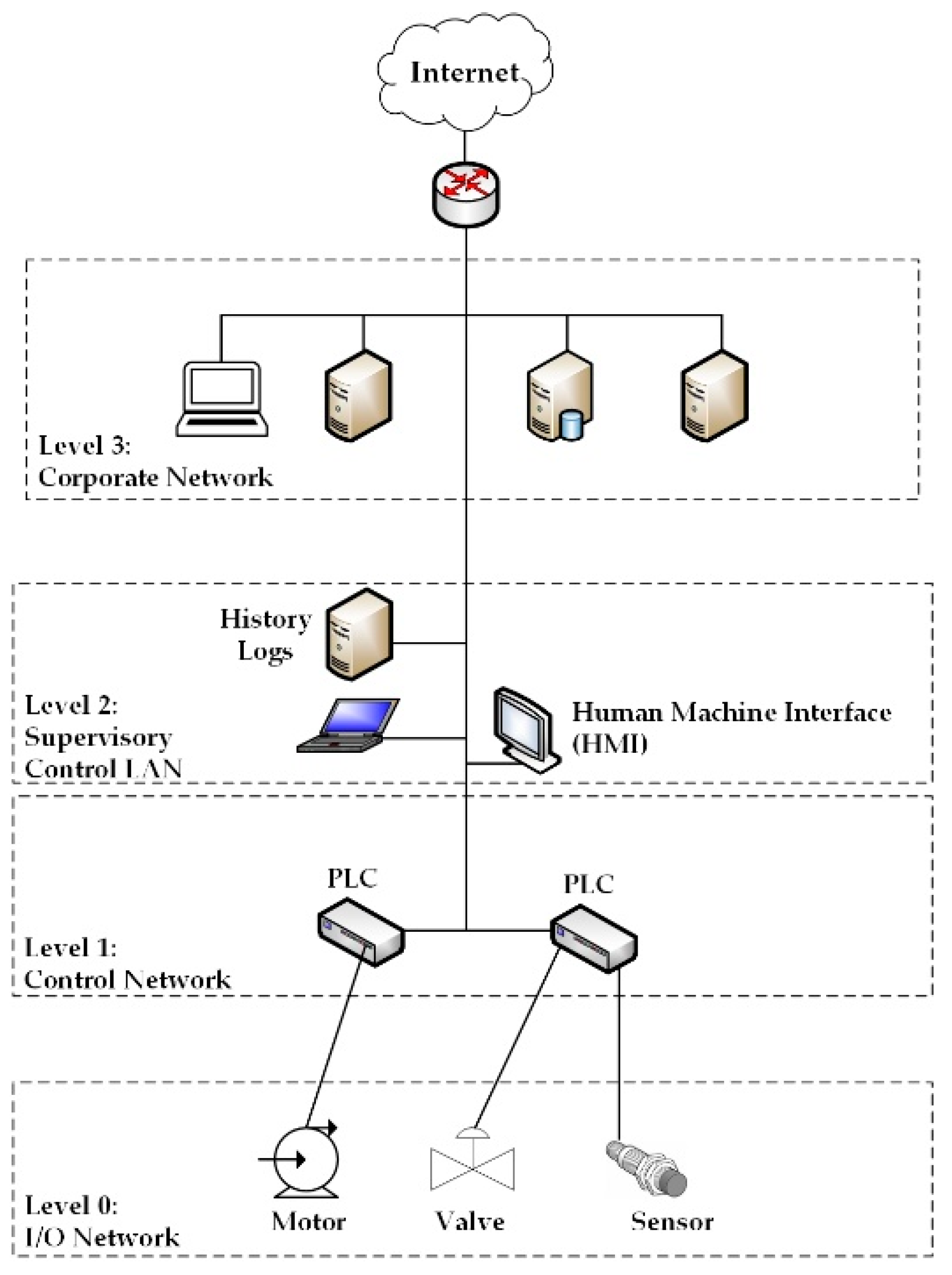

"ICS" is a general term that covers numerous control systems, including SCADA systems, distributed control systems, and other control system configurations.[3] An ICS consists of combinations of control components (e.g., electrical, mechanical, hydraulic, pneumatic) that are used to achieve various industrial objectives (e.g., manufacturing, transportation of matter or energy). Figure 1 shows an example of an ICS reference model.[4]

As can be seen from Figure 1, the ICS model is divided into four levels, from 3 to 0. Level 3 (the corporate network) consists of traditional information technology, including the general deployment of services and systems, such as file transfer, websites, mail servers, resource planning, and office automation systems. Level 2 (the supervisory control local area network) includes the functions involved in monitoring and controlling the physical processes and the general deployment of systems such as HMIs, engineering workstations, and history logs. Level 1 (the control network) includes the functions involved in sensing and manipulating physical processes, e.g., receiving the information, processing the data, and triggering outputs, which are all done in PLCs. Level 0 (the I/O network) consists of devices (sensors/actuators) that are directly connected to the physical process.

As shown in Figure 1, Level 3 is composed of the traditional IT infrastructure system (internet access service, file transfer protocol server, virtual private network (VPN) remote access, etc.). Levels 2, 1, and 0 represent a typical SCADA system, which is composed of the following components:

- HMI: Used to observe the status of the system or to adjust the system parameters for processes control and management purposes

- Engineering workstation: Used by engineers for programming the control functions of the HMI

- History logs: Used to collect the data in real-time from the automation processes for current or later analysis

- PLCs: Slave stations in the SCADA architecture that are connected to sensors or actuators

The SCADA communication protocol

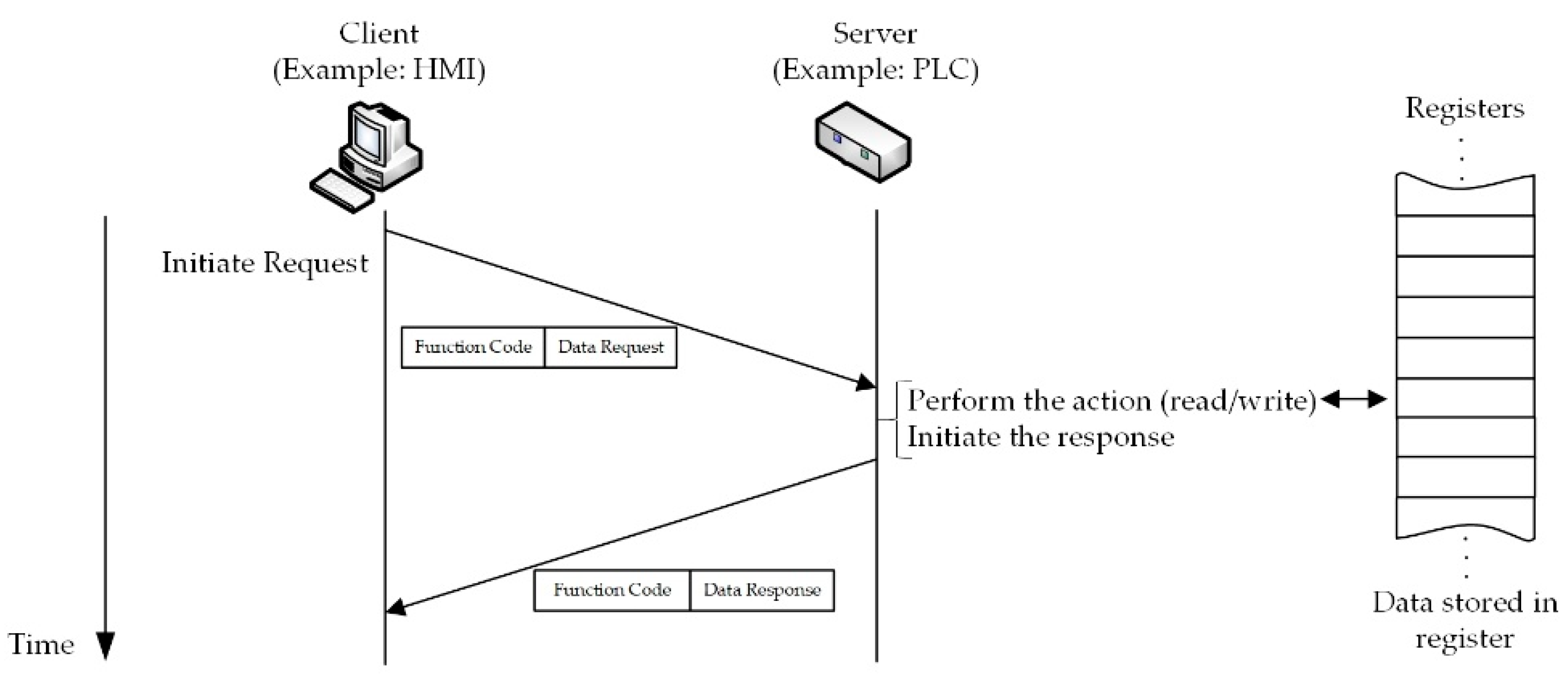

There are several communication protocols developed for use in SCADA systems. These protocols define the standard message format for all inter-device communications in the network. One popular protocol, which is widely used in SCADA system environments, is the Modbus protocol.[5] Modbus is an application-layer messaging protocol that provides the client/server communications between devices connected to an Ethernet network and offers services specified by function codes. The function codes tell the server what action to take. For example, a client can read the status of the discrete outputs or the values of digital inputs from the PLC; or it can read/write the data contents of a group of registers inside the PLC. Figure 2 illustrates an example of Modbus client/server communication.
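As a rough illustration of how a function code is carried in a request, the sketch below builds a Modbus/TCP "Read Holding Registers" request (function code 0x03) by hand. The frame layout (an MBAP header followed by the protocol data unit) follows the public Modbus specification; the function name and the example values for transaction ID, unit ID, address, and quantity are assumptions chosen for illustration and are not taken from the testbed.

```python
import struct


def build_read_holding_registers(transaction_id, unit_id, start_address, quantity):
    """Build a Modbus/TCP 'Read Holding Registers' (function code 0x03) request."""
    function_code = 0x03
    # PDU: function code (1 byte), starting address (2 bytes), quantity (2 bytes).
    pdu = struct.pack(">BHH", function_code, start_address, quantity)
    # MBAP header: transaction id, protocol id (0 for Modbus), length, unit id.
    # The length field counts the unit id byte plus the PDU bytes.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


# Hypothetical example values: read two registers starting at address 0.
frame = build_read_holding_registers(
    transaction_id=1, unit_id=1, start_address=0, quantity=2
)
print(frame.hex())
```

The server would act on the function code byte (here 0x03) and reply with the requested register contents or an exception response.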

The Modbus register address type consists of four data reference types[5][6] which are summarized in Table 1. The “xxxx” following a leading digit represents a four-digit address location in the user data memory.
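The leading-digit convention can be sketched as a small lookup. The mapping below assumes the conventional Modbus reference numbering (0xxxx for coils, 1xxxx for discrete inputs, 3xxxx for input registers, 4xxxx for holding registers); the function and dictionary names are hypothetical, and the Modbus specification remains the authoritative source.

```python
# Assumed conventional mapping from leading digit to (data type, access).
REFERENCE_TYPES = {
    "0": ("coil (discrete output)", "read/write"),
    "1": ("discrete input", "read-only"),
    "3": ("input register", "read-only"),
    "4": ("holding register", "read/write"),
}


def classify_reference(reference):
    """Return (type, access) for a five-digit reference such as '40001'."""
    if len(reference) != 5 or not reference.isdigit():
        raise ValueError("expected a five-digit reference like '40001'")
    leading = reference[0]
    if leading not in REFERENCE_TYPES:
        raise ValueError(f"unknown leading digit {leading!r}")
    return REFERENCE_TYPES[leading]


print(classify_reference("40001"))
print(classify_reference("00012"))
```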

Related works

Cyber-attacks are continuously evolving and changing behavior to bypass security mechanisms. Thus, the utilization of advanced security mechanisms is essential to identify and prevent new attacks. In this sense, the development of real testbeds advances the research in this area.

Morris et al.[7] describe four datasets to be used for cybersecurity research. The datasets include network traffic, process control, and process measurement features from a set of attacks against testbeds which use Modbus application layer protocol. The authors argue there are several datasets developed to train and validate IDS associated with traditional information technology systems, but in the SCADA security area there is a lack of availability and access to SCADA network traffic. In our work, a new dataset with new types of attacks was created. So, once our dataset is available, we are providing a resource that could be used by researchers to train, validate, and compare their results with other datasets.

In order to investigate the security of the Modbus/TCP protocol, Miciolino et al.[8] explored a complex cyber-physical testbed, conceived for the control and monitoring of a water system. The analysis of the experimental results highlights the critical characteristics of the Modbus/TCP as a popular communication protocol in ICS environments. They concluded that by obtaining sufficient knowledge of the system, an attacker is able to change the commands of the actuators or the sensor readings in order to achieve its malicious objectives. Obtaining knowledge of the system is the first step in attacking a system. This attack is also known as a reconnaissance attack. Hence, in our work, our ML models are trained to recognize this kind of attack.

Rosa et al.[9] describe some practical cyber-attacks using an electricity grid testbed. This testbed consists of a hybrid environment of SCADA assets (e.g., PLCs, HMIs, process control servers) controlling an emulated power grid. The work explains their attacks and discusses some of the challenges faced by an attacker in implementing them. One of the attacks is the reconnaissance network attack. The authors argue that this kind of attack can be used not only to discover devices and types of services but also to perform fingerprinting and discover PLCs behind the gateways. Hence, in our work, advanced reconnaissance attacks were carried out, and ML algorithms were used to detect them.

Keliris et al.[10] developed a process-aware supervised learning defense strategy that considers the operational behavior of an ICS to detect attacks in real-time. They used a benchmark chemical process and considered several categories of attack vectors on their hardware controllers. They used their trained SVM model to detect abnormalities in real-time and to distinguish between disturbances and malicious behavior as well. In our work, we used five ML algorithms to identify the abnormal behavior in real-time and evaluated their detection performance.

Tomin et al.[11] presented a semi-automated method for online security assessment using ML techniques. They outline their experience obtained at the Melentiev Energy Systems Institute, Russia in developing ML-based approaches for detecting potentially dangerous states in power systems. Multiple ML algorithms were trained offline using a resampling cross-validation method. Then, the best model among the ML algorithms was selected based on performance and was used online. They argue that the use of ML techniques provides reliable and robust solutions that can resolve the challenges in planning and operating future industrial systems with an acceptable level of security.

Cherdantseva et al.[12] reviewed the state of the art in cybersecurity risk assessment of SCADA systems. This review indicates that despite the popularity of the machine learning techniques, research groups in ICS security have reported a lack of standard datasets for training and testing machine learning algorithms. The lack of standard datasets has resulted in an inability to develop robust ML models to detect the anomalies in ICS. Using the testbed proposed in this paper, we built a new dataset for training and testing ML algorithms.

The SCADA system testbed

In this section, we describe the configuration of our SCADA system testbed for cybersecurity research.

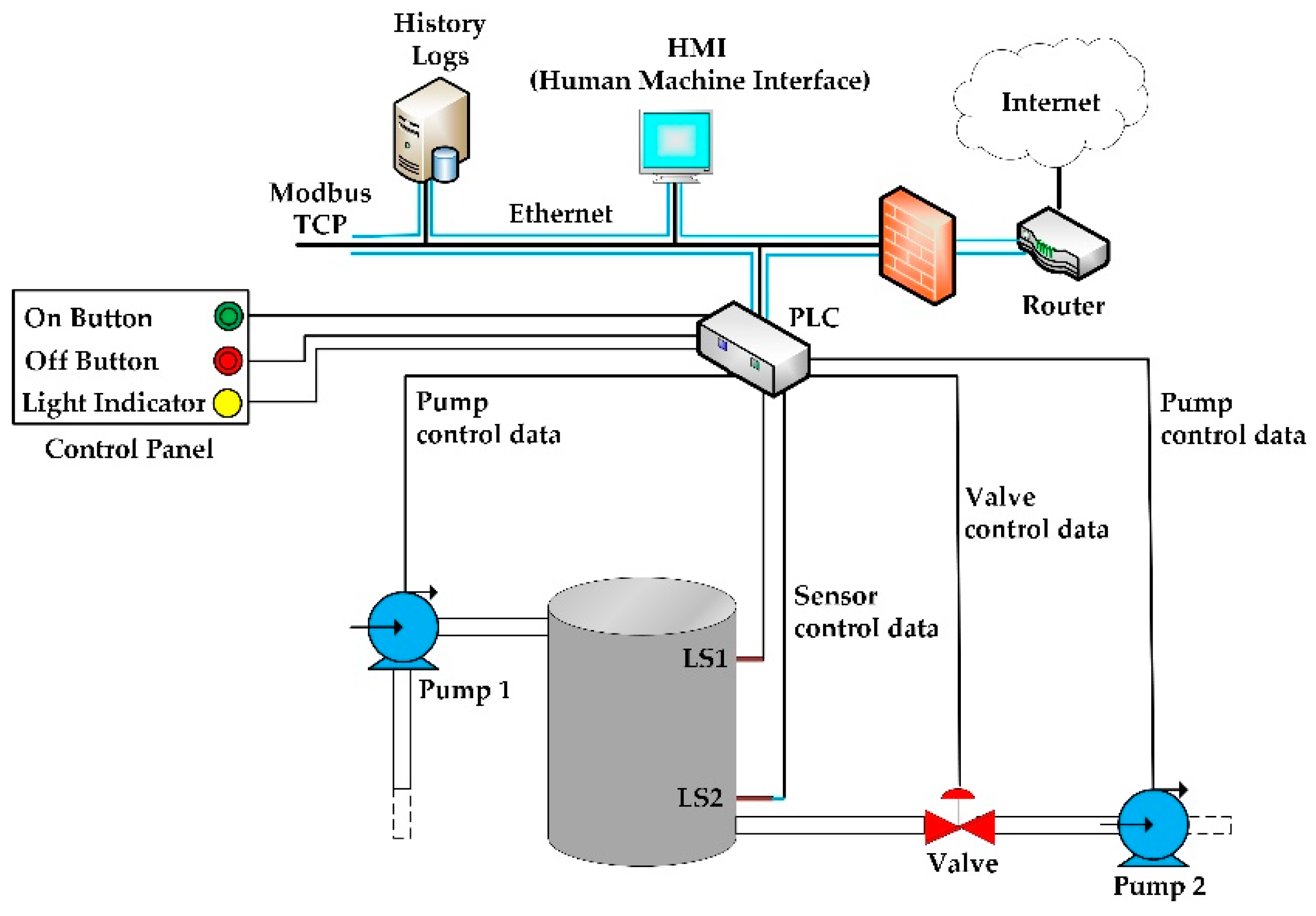

The purpose of our testbed is to emulate real-world industrial systems as closely as possible without replicating an entire plant or assembly system.[13] The utilization of a testbed allows us to carry out real cyber-attacks. Our testbed is dedicated to controlling a water storage tank, which is a part of the process of water treatment and distribution. The components used in our testbed are commonly used in real SCADA environments. Figure 3 shows the SCADA testbed framework for our targeted application and Table 2 shows a brief description of the equipment used to build the testbed.

As shown in Figure 3, the storage tank has two level sensors: Level Sensor 1 (LS1) and Level Sensor 2 (LS2) that monitor the water level in the tank. When the water reaches the maximum level defined in the system, the LS1 sends a signal to the PLC. The PLC turns off Water Pump 1 used to fill up the tank, opens the valve, and turns on Water Pump 2 to draw the water from the tank. When the water reaches the minimal level defined in the system, LS2 sends a signal to the PLC, which closes the valve, turns off Water Pump 2, and turns on Water Pump 1 to fill up the tank. This process starts over when the water level reaches LS1. The SCADA system gets data from the PLC using the Modbus communication protocol and displays them to the system operator through the HMI interface.
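The fill and drain cycle described above can be sketched as a two-state controller. This is only an illustration in Python of the described behavior, not the LADDER program running on the PLC; the class, field, and function names are hypothetical, and the initial state (tank filling) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class TankState:
    pump1_on: bool = True     # Water Pump 1 fills the tank
    pump2_on: bool = False    # Water Pump 2 draws water from the tank
    valve_open: bool = False


def step(state, ls1_max_reached, ls2_min_reached):
    """Return the next actuator state given the two level sensor signals."""
    if ls1_max_reached:
        # Maximum level: stop filling, open the valve, start draining.
        return TankState(pump1_on=False, pump2_on=True, valve_open=True)
    if ls2_min_reached:
        # Minimum level: close the valve, stop draining, start filling.
        return TankState(pump1_on=True, pump2_on=False, valve_open=False)
    # Between the two levels, keep the current actuator state.
    return state


state = TankState()
state = step(state, ls1_max_reached=True, ls2_min_reached=False)
print(state)
state = step(state, ls1_max_reached=False, ls2_min_reached=True)
print(state)
```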

There are other ICS protocols which could be used instead of Modbus in our testbed. For example, DNP3 is an ICS protocol that provides some security mechanisms.[14][15] However, in recent research, Li et al.[16] reported that they found 17,546 devices spread all over the world that were connected to the internet using the Modbus protocol. They did not count equipment that is not directly connected to the internet. Although there are other ICS protocols, many industries still use SCADA systems with the Modbus protocol because their equipment does not support other protocols. In this case, detecting attacks can be cheaper than alternatives such as replacing the devices.

A Schneider M241CE40 PLC[17] is used in our testbed to control the process of the water storage tank. The logic programming of the PLC is done using the LADDER programming language[18] (not covered in this paper). The sensors described in Table 2 are connected to the digital inputs of the PLC. The pumps and valves are connected to the outputs of the PLC.

Machine learning algorithms and performance measurements

In this section, we describe the ML algorithms used in our work as well as the measurements used to evaluate their performances.

Machine learning algorithms

The ML algorithms can be classified as supervised, unsupervised, and semi-supervised. Each class has its own characteristics and applicability. The discussion of all algorithms is beyond the scope of this paper. However, we refer the reader to Mantere et al.[19] and Ng and Jordan[20] for detailed technical discussions of these algorithms. In this paper, we use traditional ML algorithms to detect the attacks. Our target is to build supervised machine learning models, and we chose the following algorithms for attack detection and classification:

- Random Forest

- Decision Tree

- Logistic Regression

- Naïve Bayes

- KNN

The performance of these algorithms is discussed in the "Numerical results" section.
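To make one of the five algorithms concrete, the following is a minimal k-nearest-neighbors (KNN) classifier written with the standard library only. It is a sketch for illustration and not the implementation used in this work; the two features (packets per second, mean packet size) and all sample values are hypothetical.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Label a query point by majority vote among its k nearest neighbors."""
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    votes = Counter(label for _, label in distances[:k])
    return votes.most_common(1)[0][0]


# Hypothetical flows described by (packets per second, mean packet size).
train_points = [(10, 60), (12, 64), (11, 62), (300, 60), (280, 66), (310, 58)]
train_labels = ["normal", "normal", "normal", "attack", "attack", "attack"]

print(knn_predict(train_points, train_labels, (290, 61)))
print(knn_predict(train_points, train_labels, (11, 61)))
```

Because KNN classifies by distance to stored samples, its behavior depends heavily on how closely new traffic resembles the training data.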

Performance measurements

Traditionally, the performance of ML algorithms is measured by metrics which are derived from the confusion matrix.[25] Table 3 shows the confusion matrix in the IDS context.

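| | Classified as normal | Classified as attack |
|---|---|---|
| Actual normal | TN | FP |
| Actual attack | FN | TP |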

In the IDS context, the following parameters are used to create the confusion matrix:

- TN: Represents the number of normal flows correctly classified as normal (e.g., normal traffic);

- TP: Represents the number of abnormal flows (attacks) correctly classified as abnormal (e.g., attack traffic);

- FP: Represents the number of normal flows incorrectly classified as abnormal; and

- FN: Represents the number of abnormal flows incorrectly classified as normal.
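The four counts can be obtained by comparing actual and predicted labels flow by flow. The sketch below assumes string labels "normal" and "attack"; the function name and the sample labels are hypothetical.

```python
def confusion_counts(actual, predicted, attack_label="attack"):
    """Count TN, FP, FN, and TP, treating attack flows as the positive class."""
    tn = fp = fn = tp = 0
    for a, p in zip(actual, predicted):
        if a == attack_label:
            if p == attack_label:
                tp += 1   # attack correctly flagged
            else:
                fn += 1   # attack missed
        else:
            if p == attack_label:
                fp += 1   # normal flow raised a false alarm
            else:
                tn += 1   # normal flow correctly passed
    return {"TN": tn, "FP": fp, "FN": fn, "TP": tp}


actual = ["normal", "normal", "normal", "attack", "attack", "normal"]
predicted = ["normal", "attack", "normal", "attack", "normal", "normal"]
print(confusion_counts(actual, predicted))
```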

Next, we present several evaluation metrics and their respective formulas which are derived from the confusion matrix parameters:

- Accuracy: The percentage of correctly predicted flows considering the total number of predictions:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \times 100$$

- False Alarm Rate (FAR): This represents the percentage of the normal flows misclassified as abnormal flows (attack) by the model:

$$\text{FAR} = \frac{FP}{FP + TN} \times 100$$

- Un-Detection Rate (UND): The percentage of the abnormal flows (attack) which are misclassified as normal flows by the model:

$$\text{UND} = \frac{FN}{FN + TP} \times 100$$

Accuracy (as shown in the first equation) is the most frequently used metric for evaluating the performance of learning models in classification problems. However, this metric is not very reliable for evaluating the ML performance in scenarios with imbalanced classes.[26] In this case, one class is dominant and has far more samples than the other class. For example, in IDS scenarios, the proportion of normal flows to attack flows is very high in any realistic dataset. That is, the number of samples in the dataset which represent the normal flows is enormous compared to the number of samples which represent the attack flows. This problem is prevalent in scenarios where anomaly detection is crucial, such as the detection of fraudulent transactions in banks, the identification of rare diseases, and the identification of cyber-attacks in critical infrastructure. New metrics have been developed to avoid a biased analysis.[27] So, in addition to the accuracy, we also used the FAR and UND metrics.
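A small worked example shows why accuracy alone can mislead on imbalanced data. The functions below compute the three metrics from the confusion matrix parameters as they are defined in words above (percentage of correct predictions, percentage of normal flows flagged as attacks, percentage of attack flows classified as normal); the counts are hypothetical and describe a degenerate classifier that labels every flow as normal.

```python
def accuracy(tn, fp, fn, tp):
    """Percentage of all flows that were classified correctly."""
    return 100.0 * (tp + tn) / (tp + tn + fp + fn)


def false_alarm_rate(tn, fp):
    """Percentage of normal flows misclassified as attacks."""
    return 100.0 * fp / (fp + tn)


def undetection_rate(fn, tp):
    """Percentage of attack flows misclassified as normal."""
    return 100.0 * fn / (fn + tp)


# Hypothetical imbalanced dataset: 9,900 normal flows and 100 attack flows,
# with a classifier that predicts "normal" for everything.
tn, fp, fn, tp = 9900, 0, 100, 0

print(accuracy(tn, fp, fn, tp))   # 99.0
print(false_alarm_rate(tn, fp))   # 0.0
print(undetection_rate(fn, tp))   # 100.0
```

The classifier scores 99 percent accuracy and a zero false alarm rate while missing every attack, which only the UND value reveals.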

Attack scenarios, features selection, and evaluation scenarios

In this section, we describe the attacks carried out in our testbed and the features used to build our dataset. This dataset was used for training and testing the ML algorithms, as described in the following section on numerical results.

Attack scenarios

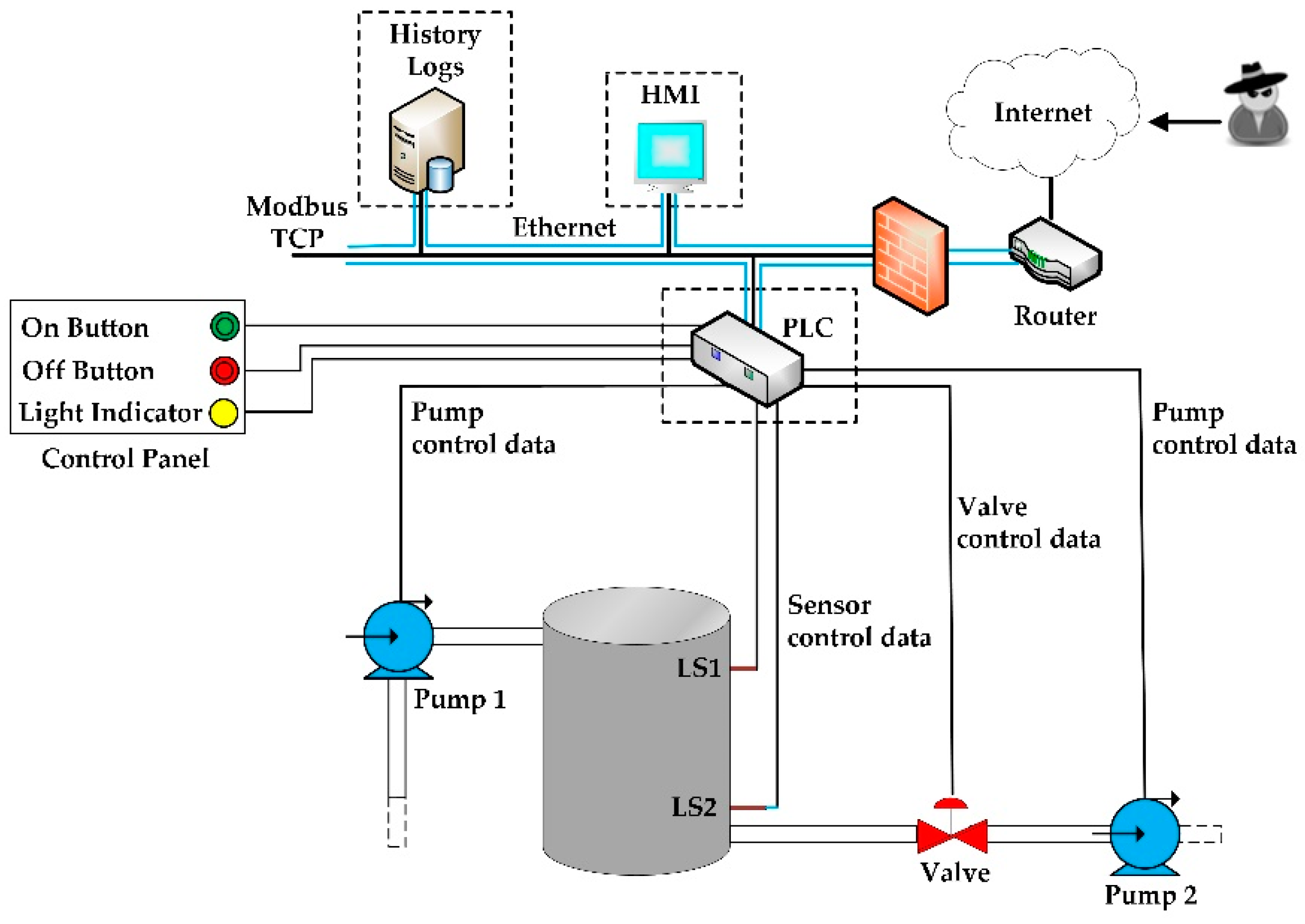

Network attacks on SCADA systems can be divided into three categories: reconnaissance, command injection, and denial of service (DoS).[7] Our focus in this paper is on the reconnaissance attacks where the network is scanned for possible vulnerabilities to be used for later attacks. A reconnaissance attack is the first stage of any attack on a networking system. In this stage, hackers use scan tools to inspect the topology of the victim network and identify the devices in the network as well as their vulnerabilities. Figure 4 shows our testbed attack scenario where the dashed rectangles highlight the vulnerable spots and possible attack targets in the system.

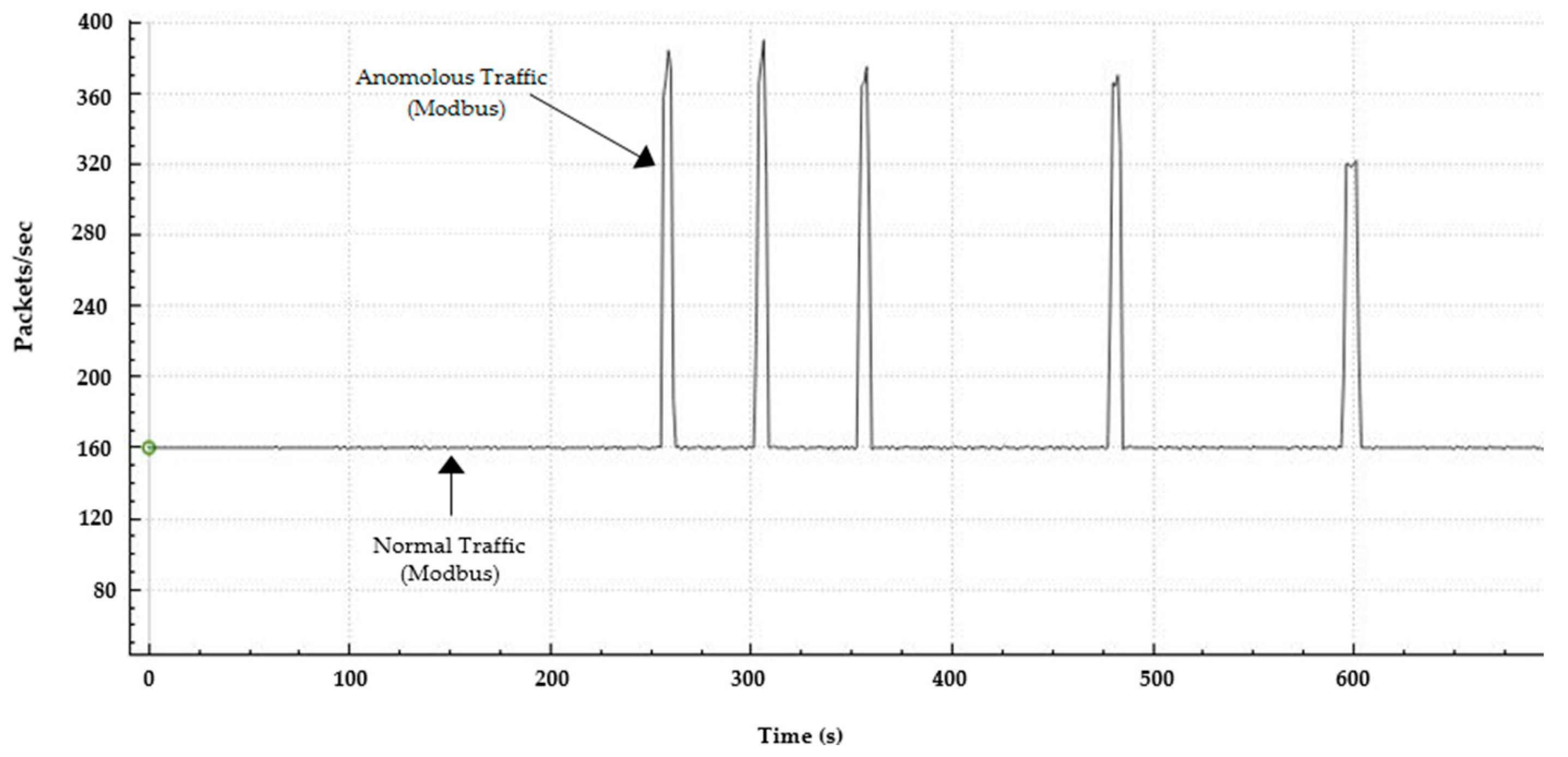

Some reconnaissance attacks can be easily detected. For example, there are scanning tools which send a large number of packets per second under Modbus/TCP to the targeted device and wait for acknowledgment of the packets from them. If a response is received, the host (i.e., the device) is active. This attack generates a considerable variation in the traffic behavior which can be easily detected by the traditional IDS or even the traditional firewall or rule-based mechanisms. Figure 5 shows an example of the traffic behavior when a scanning tool was used in our testbed.
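A rule of the kind that catches such noisy scans can be sketched as a per-source packet counter over a sliding time window. This is an illustrative rule only, not a mechanism used in the testbed; the window length, the threshold, the source names, and the synthetic traffic are all assumptions.

```python
from collections import defaultdict, deque


def detect_scanners(packets, window_seconds=1.0, threshold=100):
    """Flag sources that send more than `threshold` packets within the window.

    `packets` is an iterable of (timestamp, source) sorted by timestamp.
    """
    recent = defaultdict(deque)
    flagged = set()
    for timestamp, source in packets:
        window = recent[source]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > threshold:
            flagged.add(source)
    return flagged


# Hypothetical traffic: an HMI polling once per second, and a scanner
# sending 500 packets within a single second.
packets = [(float(t), "hmi") for t in range(10)]
packets += [(5.0 + i / 500, "scanner") for i in range(500)]
packets.sort()

print(detect_scanners(packets))
```

A fixed threshold like this works only when the attack changes the traffic volume noticeably, which is the case for this class of scanning tools.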

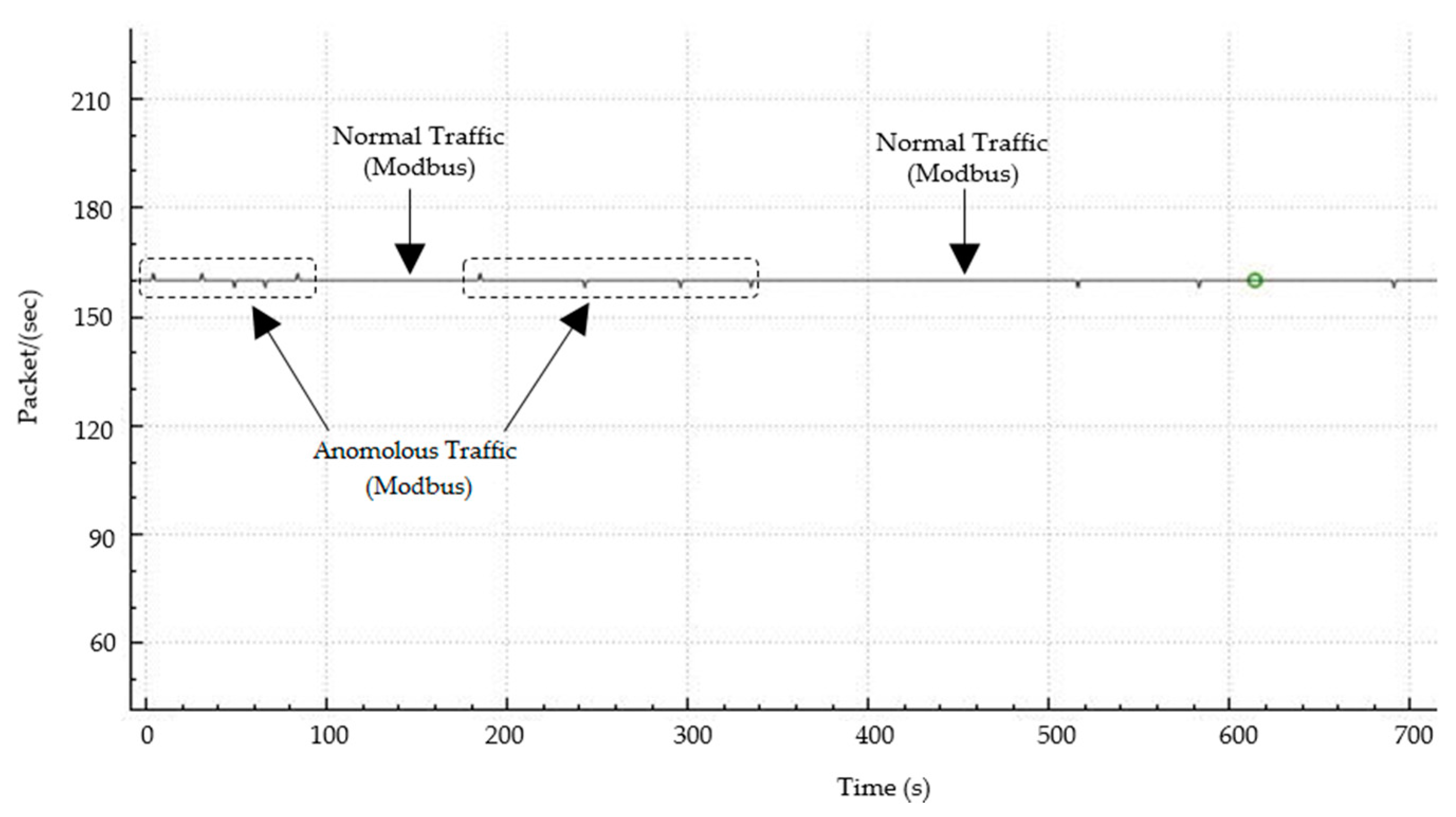

On the other hand, there are some sophisticated reconnaissance attacks which are more difficult to detect. For example, some exploits can be used to map the network, which results in an attack behavior very similar to normal traffic. Figure 6 illustrates the network traffic behavior during such exploit attacks. As can be seen, the change in the traffic behavior is negligible under the attack. Thus, it is difficult to detect the attack. The use of rule-based mechanisms would fail because the signatures of the Modbus and TCP traffic do not change, and the language used to express the detection rules may not be expressive enough. In contrast, the use of ML can improve the detection rate, as ML algorithms can be trained to detect these attack scenarios.

We conducted the following reconnaissance and exploit attacks specific to the ICS environment described in Table 4. Details of the commands used to perform the attacks can be found in works by Calderon[28] and Mnemon et al.[29] During the attacks, the network traffic was captured to be analyzed. We used Wireshark[30] and Argus[31] to analyze the captured traffic. The captured traffic included unencrypted control information of the devices (valve, pumps, sensors) as well as information regarding their type (function codes, type of data). Table 5 presents statistical information about the captured traffic.

Features selection

Once the network traffic is captured, the next step is to select potential features which can distinguish the anomalous traffic from the normal traffic. Mantere et al.[19] selected 12 useful features for ML-based network security monitoring in the ICS networks. Some of those researchers later went on to further study those potential features.[32] In our work, we analyzed the variation of the features during the normal and attack traffic, and we analyzed those features that did not vary during the normal and attack traffic. Based on these prior works and our studies, Table 6 shows the features selected for our dataset.
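One simple way to screen candidate features is to discard those whose values do not vary across the captured flows, since a constant feature cannot separate normal from attack traffic. The sketch below is a generic variance filter and is not the selection procedure used in this work; the feature names and values are hypothetical.

```python
from statistics import pvariance


def varying_features(rows, feature_names, min_variance=0.0):
    """Return the names of features whose variance exceeds `min_variance`."""
    kept = []
    for index, name in enumerate(feature_names):
        values = [row[index] for row in rows]
        if pvariance(values) > min_variance:
            kept.append(name)
    return kept


# Hypothetical feature names and flow records.
feature_names = ["packet_rate", "protocol_id", "mean_packet_size"]
rows = [(10, 0, 60), (12, 0, 64), (300, 0, 61)]

print(varying_features(rows, feature_names))
```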

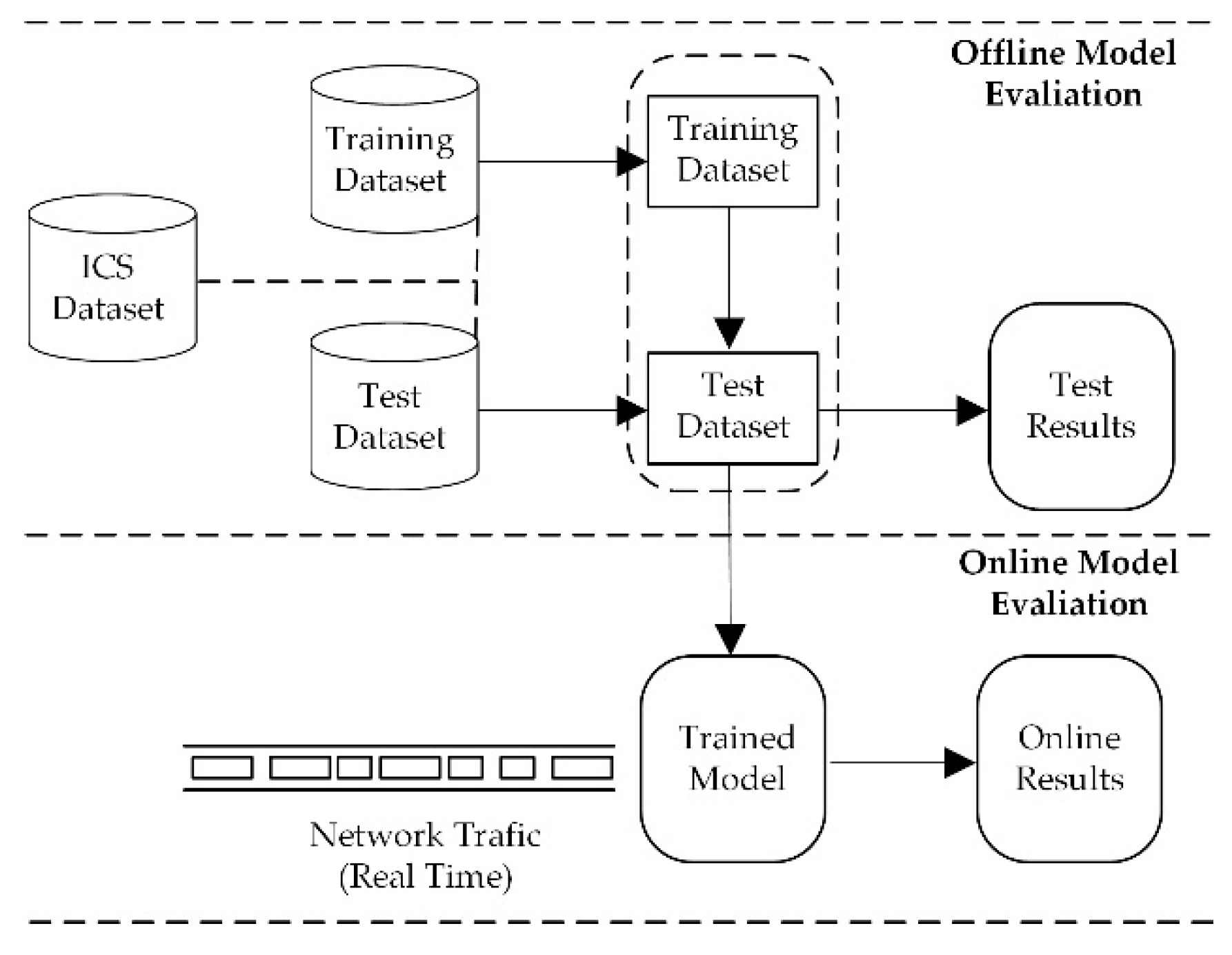

Evaluation scenario

After defining the dataset, the features were extracted as discussed in the previous subsection. Then, the data was labeled either as normal traffic or attack traffic. Following that, the dataset was split into training and test datasets. The training dataset was composed of 80 percent of the total data, and it was used to train our ML model. The test dataset consists of the remaining 20 percent of the data, and it was used to evaluate the performance of our trained ML model. We call this training and test phase “offline evaluation” because the ML models were trained and tested offline. Figure 7 shows our evaluation scenario.
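The 80/20 split can be sketched as follows. The text specifies only the proportions; splitting each class separately (stratification), the fixed random seed, and the synthetic samples are assumptions made here so that the rare attack class appears in both subsets.

```python
import random
from collections import defaultdict


def stratified_split(samples, labels, train_fraction=0.8, seed=0):
    """Split labeled samples into train and test sets, class by class."""
    by_label = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_label[label].append(sample)
    rng = random.Random(seed)
    train, test = [], []
    for label, group in by_label.items():
        rng.shuffle(group)
        cut = int(round(len(group) * train_fraction))
        train.extend((sample, label) for sample in group[:cut])
        test.extend((sample, label) for sample in group[cut:])
    return train, test


# Hypothetical dataset: 100 flows, of which 10 are attacks.
samples = list(range(100))
labels = ["attack" if i < 10 else "normal" for i in samples]

train, test = stratified_split(samples, labels)
print(len(train), len(test))
```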

After training and testing, the trained ML models were created and deployed in the network. Then, their performance was analyzed using real network traffic. This phase was called “online evaluation.” We compared the results obtained from the two phases (offline and online). This is described next.

Numerical results

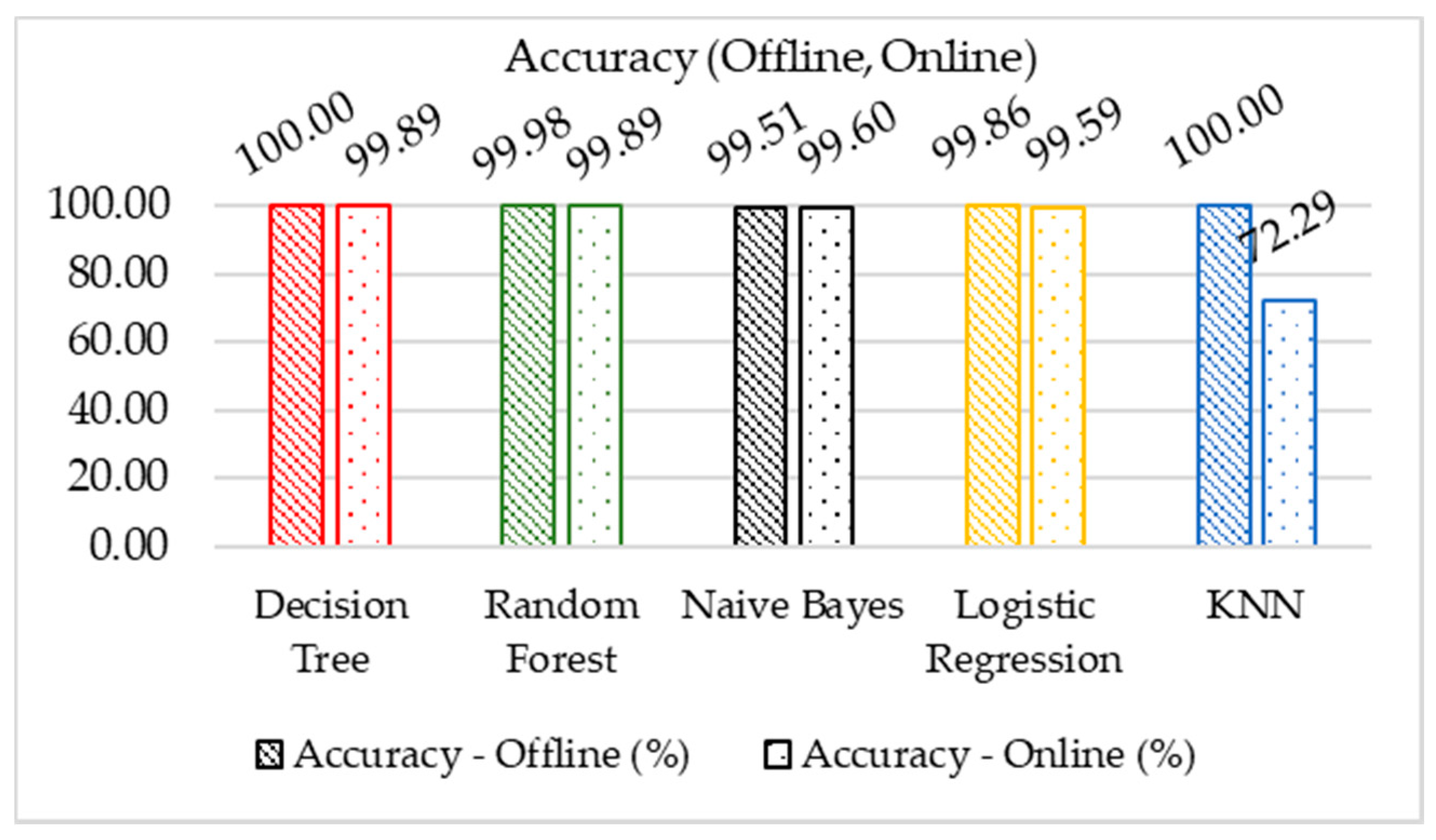

In this section, we present the numerical results of the attacks described previously. Figure 8 shows the results for the accuracy of the ML algorithms that were used.

As shown previously, the accuracy represents the total number of correct predictions divided by the total number of samples. Considering the offline evaluations, Figure 8 shows that Decision Tree and KNN have the best accuracy (100%) compared to the other ML models. However, the difference in accuracy is small among all trained models. In other words, all chosen ML algorithms performed well in terms of accuracy during the offline phase. During the online phase, Decision Tree, Random Forest, Naïve Bayes, and Logistic Regression show only a small difference from their offline results; hence, the performance of these algorithms is similar in both phases (offline and online). The same does not apply to the KNN model: there was a significant difference between its online and offline accuracy, which indicates that in practice KNN does not provide good accuracy.

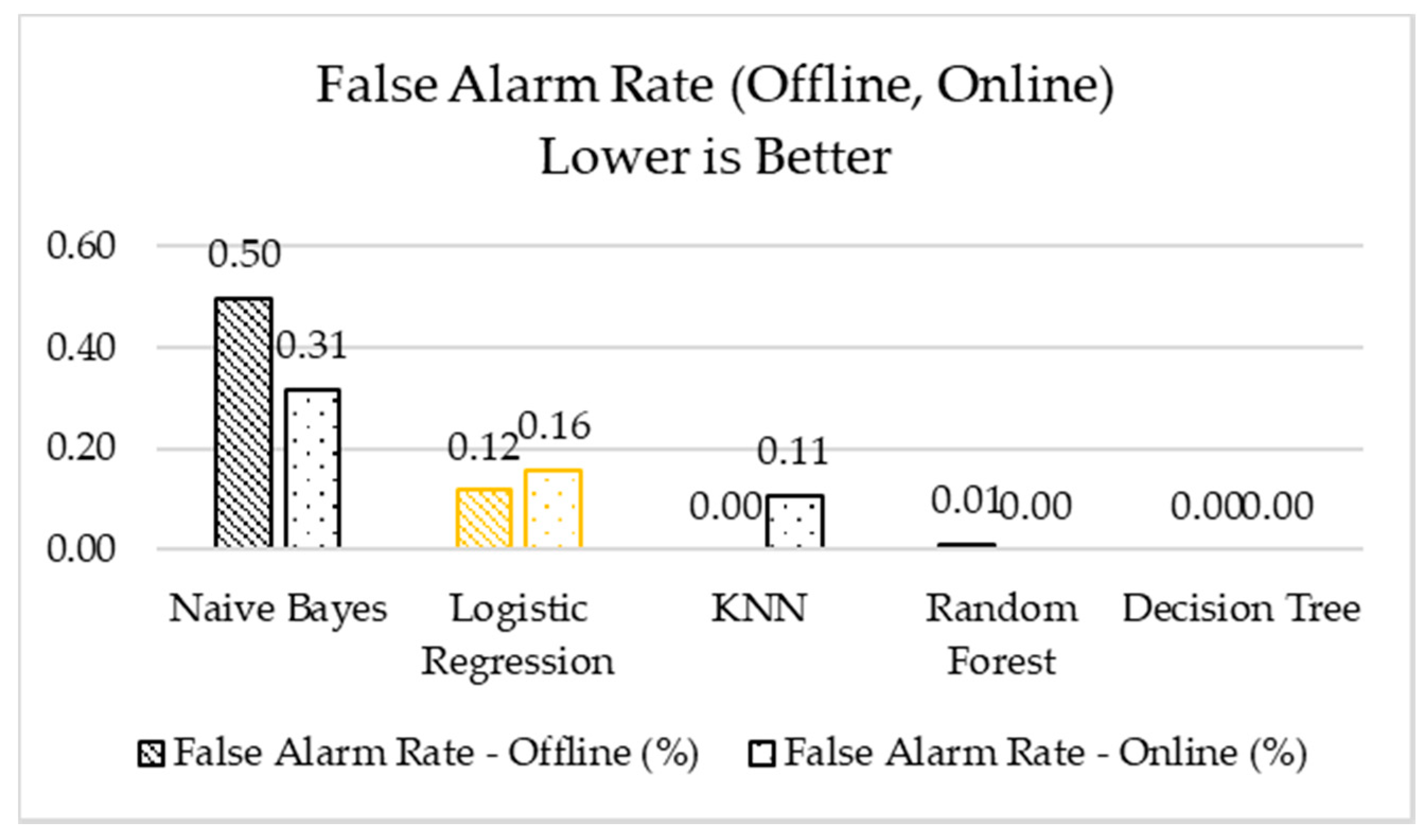

As shown in Table 5, our dataset is unbalanced. Therefore, accuracy is not the ideal measure to evaluate performance.[33] Other metrics are needed to compare the performance of the ML algorithms. Figure 9 shows the false alarm rate (FAR) results. The FAR metric is the percentage of the regular traffic which has been misclassified as anomalous by the model.

Regarding the offline and online evaluations, as shown in Figure 9, the Random Forest and Decision Tree models performed best, followed by the KNN model. These three models had the lowest false alarm percentages, followed by Logistic Regression and Naïve Bayes. These low percentages mean that Random Forest, Decision Tree, and KNN perform better in recognizing normal traffic. In our dataset, normal traffic is the dominant traffic; therefore, a low FAR value is expected. This low FAR value could be due to the model’s bias toward estimating the normal traffic perfectly, which is common in unbalanced datasets. Further, the clustering done in the Random Forest, Decision Tree, and KNN models can be helpful, especially when dealing with two types of data having different network features.

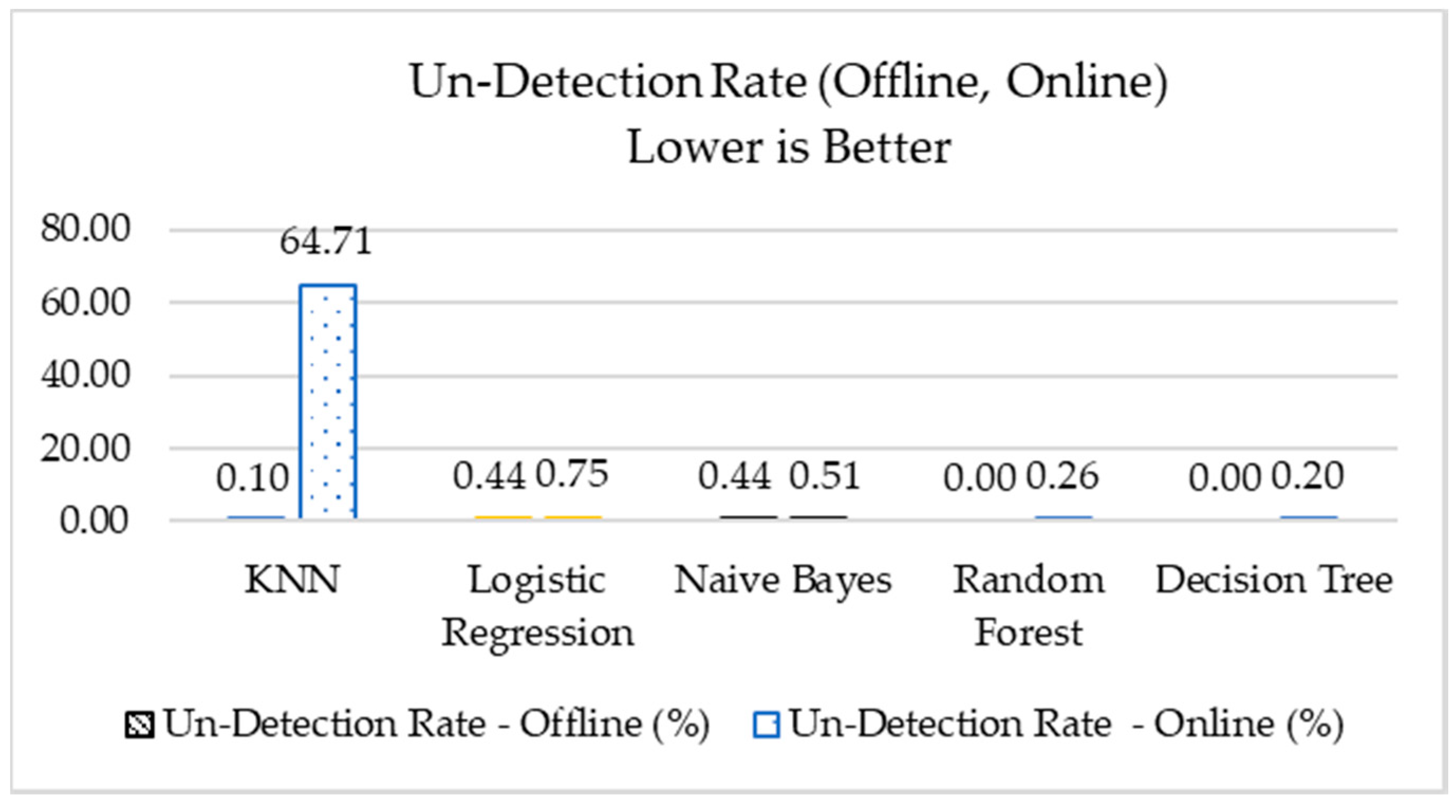

Figure 10 shows the results of the un-detection rate metric. The UND (as shown in the third equation, prior) represents the percentage of the traffic which is an anomaly but is misclassified as normal (the opposite of the FAR). The traffic represented by this metric is more critical than the traffic represented by the FAR metric because, in this case, an attack can happen without being detected. Further, in our unbalanced dataset, the models are biased toward normal traffic, and this metric would show how biased the models are.

As shown in Figure 10, considering the offline performance results, the percentage of the UND is small for the Naïve Bayes, Logistic Regression, and KNN models, and zero for the Decision Tree and Random Forest models. That is, all algorithms show excellent performance on this critical metric. However, considering the online performance, the KNN model performed worst, and its result was markedly different from its offline evaluation. The same did not happen with the other models, whose online performance is very close to their offline performance. This excellent performance shows that the features selected in this work are also very good, as they made it possible to detect attacks even in an unbalanced dataset.

Conclusions

This paper presents the development of a SCADA system testbed to be used in cybersecurity research. The testbed was dedicated to controlling a water storage tank, which is one of several stages in the process of water treatment and distribution. The testbed was used to analyze the effects of the attacks on SCADA systems. Using the network traffic, a new dataset was developed for use by researchers to train machine learning algorithms as well as to validate and compare their results with other available datasets.

Five reconnaissance attacks specific to the ICS environment were conducted against the testbed. During the attacks, the network traffic with information about the devices (valves, pumps, sensors) was captured. Using Wireshark and Argus network tools, features were extracted to build a dataset for training and testing machine learning algorithms.

Once the dataset was generated, five traditional machine learning algorithms were used to detect the attacks: Random Forest, Decision Tree, Logistic Regression, Naïve Bayes, and KNN. These algorithms were evaluated in two phases: during the training and testing of the machine learning models (offline), and during the deployment of these models in the network (online). The performance obtained during the online phase was compared to the performance obtained during the offline phase.

Three metrics were used to evaluate the performance of the used algorithms: accuracy, FAR, and UND. Regarding the accuracy metric, in the offline phase, all ML algorithms showed an excellent performance. In the online phase, almost all the algorithms performed very close to the offline results. The KNN algorithm was the only one which did not perform well. Moreover, considering an unbalanced dataset and analyzing the FAR and UND metrics, we concluded that Random Forest and Decision Tree models performed best in both phases compared to the other models.

The results show the feasibility of detecting reconnaissance attacks in ICS environments. Our future plans include generating more attacks and checking the models’ feasibility and performance in different environments. Moreover, experiments using unsupervised algorithms will be done.

Acknowledgements

The statements made herein are solely the responsibility of the authors. The authors would like to thank the Instituto Federal de Educação, Ciência e Tecnologia de São Paulo (IFSP), Washington University in Saint Louis, and Qatar University.

Author contributions

M.A.T. built the testbed and performed the experiments. T.S. and M.Z. assisted with revisions and improvements. The work was done under the supervision and guidance of R.J., N.M. and M.S., who also formulated the problem.

Funding

This work has been supported under the grant ID NPRP 10-901-2-370 funded by the Qatar National Research Fund (QNRF) and grant #2017/01055-4 São Paulo Research Foundation (FAPESP).

Conflicts of interest

The authors declare no conflicts of interest.

References

- ↑ Aragó, A.S.; Martínez, E.R.; Clares, S.S. (2014). "SCADA Laboratory and Test-bed as a Service for Critical Infrastructure Protection". Proceedings of the 2nd International Symposium on ICS & SCADA Cyber Security Research 2014: 25–9. doi:10.14236/ewic/ics-csr2014.4.

- ↑ Communication Technologies, Inc. (October 2004). "Supervisory Control and Data Acquisition (SCADA) Systems" (PDF). Technical Information Bulletin 04-1. National Communications System. https://www.cedengineering.com/userfiles/SCADA%20Systems.pdf. Retrieved 08 August 2018.

- ↑ Filkins, B. (2 February 2016). "IT Security Spending Trends". SANS Analyst Papers. SANS Institute. https://www.sans.org/reading-room/whitepapers/analyst/membership/36697. Retrieved 05 June 2018.

- ↑ 4.0 4.1 Stouffer, K.; Pilitteri, V.; Lightman, S. et al. (May 2015). "Guide to Industrial Control Systems (ICS) Security" (PDF). NIST Special Publication 800-82 Revision 2. National Institute of Standards and Technology. doi:10.6028/NIST.SP.800-82r2. https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.800-82r2.pdf. Retrieved 05 June 2018.

- ↑ 5.0 5.1 "Modbus Technical Resources". Modbus Organization, Inc. http://www.modbus.org/tech.php. Retrieved 05 December 2017.

- ↑ 6.0 6.1 "Modbus Application Protocol Specification V1.1b3" (PDF). Modbus Organization, Inc. 26 April 2012. http://www.modbus.org/docs/Modbus_Application_Protocol_V1_1b3.pdf. Retrieved 08 August 2018.

- ↑ 7.0 7.1 7.2 Morris, T.; Wei, G. (2014). "Industrial Control System Traffic Data Sets for Intrusion Detection Research". Proceedings from the International Conference on Critical Infrastructure Protection VIII: 65–78. doi:10.1007/978-3-662-45355-1_5.

- ↑ Miciolino, E.E.; Bernieri, G.; Pascucci, F.; Setola, R. (2015). "Communications network analysis in a SCADA system testbed under cyber-attacks". Proceedings of the 23rd Telecommunications Forum TELFOR: 341–44. doi:10.1109/TELFOR.2015.7377479.

- ↑ Rosa, L.; Cruz, T.; Simões, P. et al. (2017). "Attacking SCADA systems: A practical perspective". IFIP/IEEE Symposium on Integrated Network and Service Management: 741–46. doi:10.23919/INM.2017.7987369.

- ↑ Keliris, A.; Salehghaffari, H.; Cairl, B. et al. (2016). "Machine learning-based defense against process-aware attacks on Industrial Control Systems". Proceedings from the 2016 IEEE International Test Conference: 1–10. doi:10.1109/TEST.2016.7805855.

- ↑ Tomin, N.V.; Kurbatsky, V.G.; Sidorov, D.N. et al. (2016). "Machine Learning Techniques for Power System Security Assessment". IFAC-PapersOnLine 49 (27): 445–50. doi:10.1016/j.ifacol.2016.10.773.

- ↑ Cherdantseva, Y.; Burnap, P.; Blyth, A. et al. (2016). "A review of cyber security risk assessment methods for SCADA systems". Computers & Security 56: 1–27. doi:10.1016/j.cose.2015.09.009.

- ↑ Candell, R.; Zimmerman, T.; Stouffer, K. (November 2015). "An Industrial Control System Cybersecurity Performance Testbed" (PDF). NISTIR 8089. National Institute of Standards and Technology. doi:10.6028/NIST.IR.8089. https://nvlpubs.nist.gov/nistpubs/ir/2015/NIST.IR.8089.pdf. Retrieved 03 June 2018.

- ↑ "Overview of the DNP3 Protocol". DNP User Group. https://www.dnp.org/Pages/AboutDefault.aspx. Retrieved 03 June 2018.

- ↑ Darwish, I.; Igbe, O.; Saadawi, T. et al. (2015). "Experimental and theoretical modeling of DNP3 attacks in smart grids". Proceedings from the 36th IEEE Sarnoff Symposium: 155–60. doi:10.1109/SARNOF.2015.7324661.

- ↑ Li, Q.; Feng, X.; Wang, H. et al. (2018). "Understanding the Usage of Industrial Control System Devices on the Internet". IEEE Internet of Things Journal 5 (3): 2178–89. doi:10.1109/JIOT.2018.2826558.

- ↑ "Modicon M241 Micro PLC - TM241CE40R". Schneider Electric. https://www.schneider-electric.us/en/product/TM241CE40R/controller-m241-40-io-relay-ethernet/. Retrieved 08 August 2018.

- ↑ Erickson, K.T. (2011). Programmable Logic Controllers: An Emphasis on Design and Application (2nd ed.). Dogwood Valley Press. ISBN 9780976625902.

- ↑ 19.0 19.1 Mantere, M.; Uusitalo, I.; Sailio, M. et al. (2012). "Challenges of Machine Learning Based Monitoring for Industrial Control System Networks". Proceedings from the 26th International Conference on Advanced Information Networking and Applications Workshops: 968–72. doi:10.1109/WAINA.2012.135.

- ↑ 20.0 20.1 Ng, A.Y.; Jordan, M.I. (2001). "On discriminative vs. generative classifiers: A comparison of logistic regression and naive Bayes". Proceedings of the 14th International Conference on Neural Information Processing Systems: Natural and Synthetic: 841–48.

- ↑ Zhang, J.; Zulkernine, M.; Haque, A. (2008). "Random-Forests-Based Network Intrusion Detection Systems". IEEE Transactions on Systems, Man, and Cybernetics, Part C 38 (5): 649–59. doi:10.1109/TSMCC.2008.923876.

- ↑ Amor, N.B.; Benferhat, S.; Elouedi, Z. (2004). "Naive Bayes vs decision trees in intrusion detection systems". Proceedings of the 2004 ACM Symposium on Applied Computing: 420–24. doi:10.1145/967900.967989.

- ↑ Chen, W.-H.; Hsu, S.-H.; Shen, H.-P. (2005). "Application of SVM and ANN for intrusion detection". Computers & Operations Research 32 (10): 2617–34. doi:10.1016/j.cor.2004.03.019.

- ↑ Zhang, H.; Berg, A.C.; Maire, M. et al. (2006). "SVM-KNN: Discriminative Nearest Neighbor Classification for Visual Category Recognition". Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition: 2126–36. doi:10.1109/CVPR.2006.301.

- ↑ Sokolova, M.; Lapalme, G. (2009). "A systematic analysis of performance measures for classification tasks". Information Processing & Management 45 (4): 427–37. doi:10.1016/j.ipm.2009.03.002.

- ↑ Buda, M.; Maki, A.; Mazurowski, M.A. (2018). "A systematic study of the class imbalance problem in convolutional neural networks". Neural Networks 106: 249–59. doi:10.1016/j.neunet.2018.07.011.

- ↑ He, H.; Garcia, E.A. (2009). "Learning from Imbalanced Data". IEEE Transactions on Knowledge and Data Engineering 21 (9): 1263–84. doi:10.1109/TKDE.2008.239.

- ↑ Calderon, P. (2017). Nmap: Network Exploration and Security Auditing Cookbook (2nd Revised ed.). Packt Publishing. ISBN 9781786467454.

- ↑ 29.0 29.1 29.2 29.3 29.4 29.5 29.6 Mnemon, E.; Soullie, A.; Torrents, A. et al. "Vulnerability & Exploit Database". Rapid7 LLC. https://www.rapid7.com/db/modules/auxiliary/scanner/scada/modbusclient. Retrieved 30 January 2017.

- ↑ 30.0 30.1 30.2 "Wireshark". Wireshark Foundation. https://www.wireshark.org/. Retrieved 20 October 2017.

- ↑ "Argus". QoSient, LLC. https://qosient.com/argus/. Retrieved 10 November 2017.

- ↑ Mantere, M.; Sailio, M.; Noponen, S. (2013). "Network Traffic Features for Anomaly Detection in Specific Industrial Control System Network". Future Internet 5 (4): 460–73. doi:10.3390/fi5040460.

- ↑ Salman, T.; Bhamare, D.; Erbad, A. et al. (2017). "Machine Learning for Anomaly Detection and Categorization in Multi-Cloud Environments". Proceedings from the 2017 IEEE 4th International Conference on Cyber Security and Cloud Computing: 97–103. doi:10.1109/CSCloud.2017.15.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, grammar, and punctuation. In some cases important information was missing from the references, and that information was added. The Buda et al. article cited in the original has since been published fully, and the citation here is updated to reflect that.