Journal:What Is health information quality? Ethical dimension and perception by users

| Full article title | What Is health information quality? Ethical dimension and perception by users |
|---|---|
| Journal | Frontiers in Medicine |
| Author(s) | Al-Jefri, Majed; Evans, Roger; Uchyigit, Gulden; Ghezzi, Pietro |
| Author affiliation(s) | University of Brighton, Brighton and Sussex Medical School |
| Primary contact | Email: pietro dot ghezzi at gmail dot com |
| Editors | Sampaio, Cristina |
| Year published | 2018 |
| Volume and issue | 5 |
| Page(s) | 260 |
| DOI | 10.3389/fmed.2018.00260 |
| ISSN | 2296-858X |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://www.frontiersin.org/articles/10.3389/fmed.2018.00260/full |
| Download | https://www.frontiersin.org/articles/10.3389/fmed.2018.00260/pdf (PDF) |

Abstract

Introduction: The popularity of seeking health information online makes information quality (IQ) a public health issue. The present study aims at building a theoretical framework of health information quality (HIQ) that can be applied to websites and defines which IQ criteria are important for a website to be trustworthy and meet users' expectations.

Methods: We have identified a list of HIQ criteria from existing tools and assessment criteria and elaborated them into a questionnaire that was promoted via social media and, mainly, the university. Responses (329) were used to rank the different criteria for their importance in trusting a website and to identify patterns of criteria using hierarchical cluster analysis.

Results: HIQ criteria were organized in five dimensions based on previous theoretical frameworks, as well as on how they cluster together in the questionnaire response. We could identify a top-ranking dimension (scientific completeness) that describes what the user is expecting to know from the websites (in particular: description of symptoms, treatments, side effects). Cluster analysis also identified a number of criteria borrowed from existing tools for assessing HIQ that could be subsumed to a broad “ethical” dimension (such as conflict of interests, privacy, advertising policies) that were, in general, ranked of low importance by the participants. Subgroup analysis revealed significant differences in the importance assigned to the various criteria based on gender, language, and whether or not a biomedical educational background was evident.

Conclusions: We identified criteria of HIQ and organized them in dimensions. We observed that ethical criteria, while regarded highly in the academic and medical environment, are not considered highly by the public.

Keywords: internet, information quality, ethics, online information, public health

Introduction

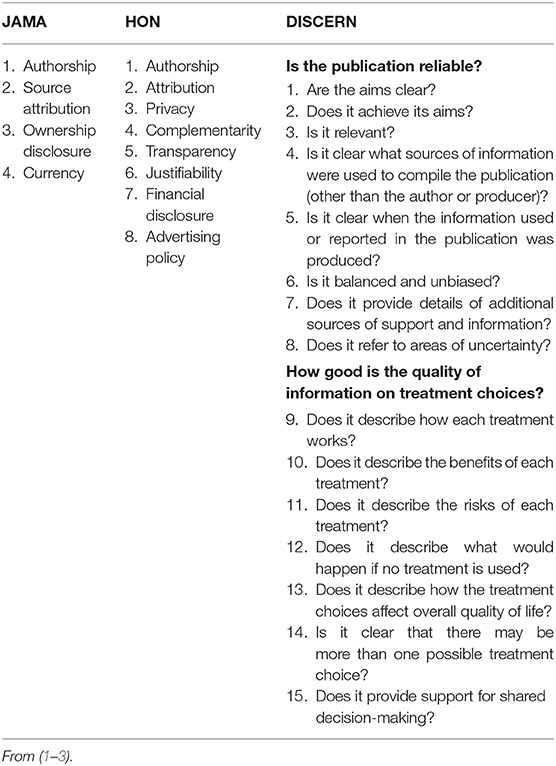

With the diffusion of the internet, many have been concerned that, due to its unregulated and unfiltered nature, it could misinform or disinform the public. The lack of widely used search engines (Google was founded in 1998) left it entirely up to users to decide which websites to trust among the relatively few (compared to 2018) available. These concerns led to the development, in the late 1990s, of instruments and organizations to assess health information quality (HIQ) of websites, including the Journal of the American Medical Association (JAMA) criteria[1], DISCERN[2], and the criteria for meeting the Health On the Net (HON) code of conduct.[3] These instruments were developed for different purposes: the JAMA and DISCERN tools were aimed at providing customers with instruments to assess websites[1][2]; the HON criteria are used by the HON foundation to certify health websites with the display of the HONcode quality seal, and this was originally aimed at organizations to help them develop websites.[3] The criteria of HIQ considered by these three approaches are listed in Table 1.

There are no data available to know how many information seekers have used these tools to make assessments. On the other hand, the high number of citations in the scientific literature for the JAMA (1100) and DISCERN (600) tools indicates that these are also widely used, particularly the JAMA criteria, in academic research analyzing HIQ. It should be noted, however, that DISCERN was developed by an expert panel, but then it was actually tested on 13 self-help group members.[2]

An important issue, and one that is not assessed by the existing HIQ instruments, is whether websites informing the public on therapies mention therapies approved by regulatory agencies or public health authorities, or non-approved ones. Drug approval requires a high level of evidence of efficacy and benefit/risk ratio, an approach termed “evidence-based medicine” (EBM).[4] In a way, this is related to the reliability of the information. For instance, a website describing AIDS as a disease due to the HIV virus that can be treated with antiretroviral therapy is higher quality than one stating that AIDS is not due to a virus and should be treated with nutritional supplements.[5]

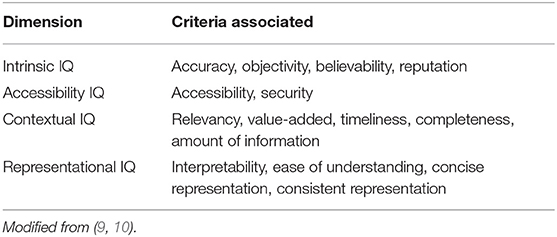

Health information quality should be seen in the wider context of information quality (IQ) generally. The latter has been extensively studied for its applications in business and manufacturing. Information quality is generally considered as a concept with multiple dimensions[6]; depending on an author's philosophical viewpoint, information quality can have different attributes and characteristics.[7][8] Several studies have developed IQ frameworks based on the definition of IQ dimensions.[6] The best known of these frameworks was developed by Wand and Wang[9] and Wang and Strong[10], based on a survey among 355 Master of Business Administration alumni, aiming to capture aspects of IQ that are important for consumers in the business field. A second study by the same group involved 52 information professionals from the financial, healthcare, and manufacturing sectors.[11] These studies defined 15 IQ criteria, which were grouped into four dimensions[9][10] as shown in Table 2.

It is probably difficult to fit the HIQ criteria from Table 1, which are centered on trustworthiness and scientific correctness, into the theoretical framework of IQ dimensions in Table 2, which are borrowed from other fields. Recent studies have proposed a categorization of HIQ criteria into classical IQ dimensions, focusing on IQ criteria identified through focus groups and on the scientific content of webpages.[12]

We undertook this project to define the IQ criteria and dimensions relevant to HIQ. To do so, we have used a mixed approach, identifying relevant HIQ criteria using a theoretical approach broadly based on the existing criteria, the JAMA score, HONcode, and DISCERN, as well as an empirical approach, based on a questionnaire, to rank the importance of the various criteria to the end user. In particular, our aim was to evaluate user perceptions of HIQ criteria and their relative importance in trusting health-related websites. Criteria of HIQ were then classified in dimensions based on the existing literature and, using cluster analysis, the ranking by users.

Methods

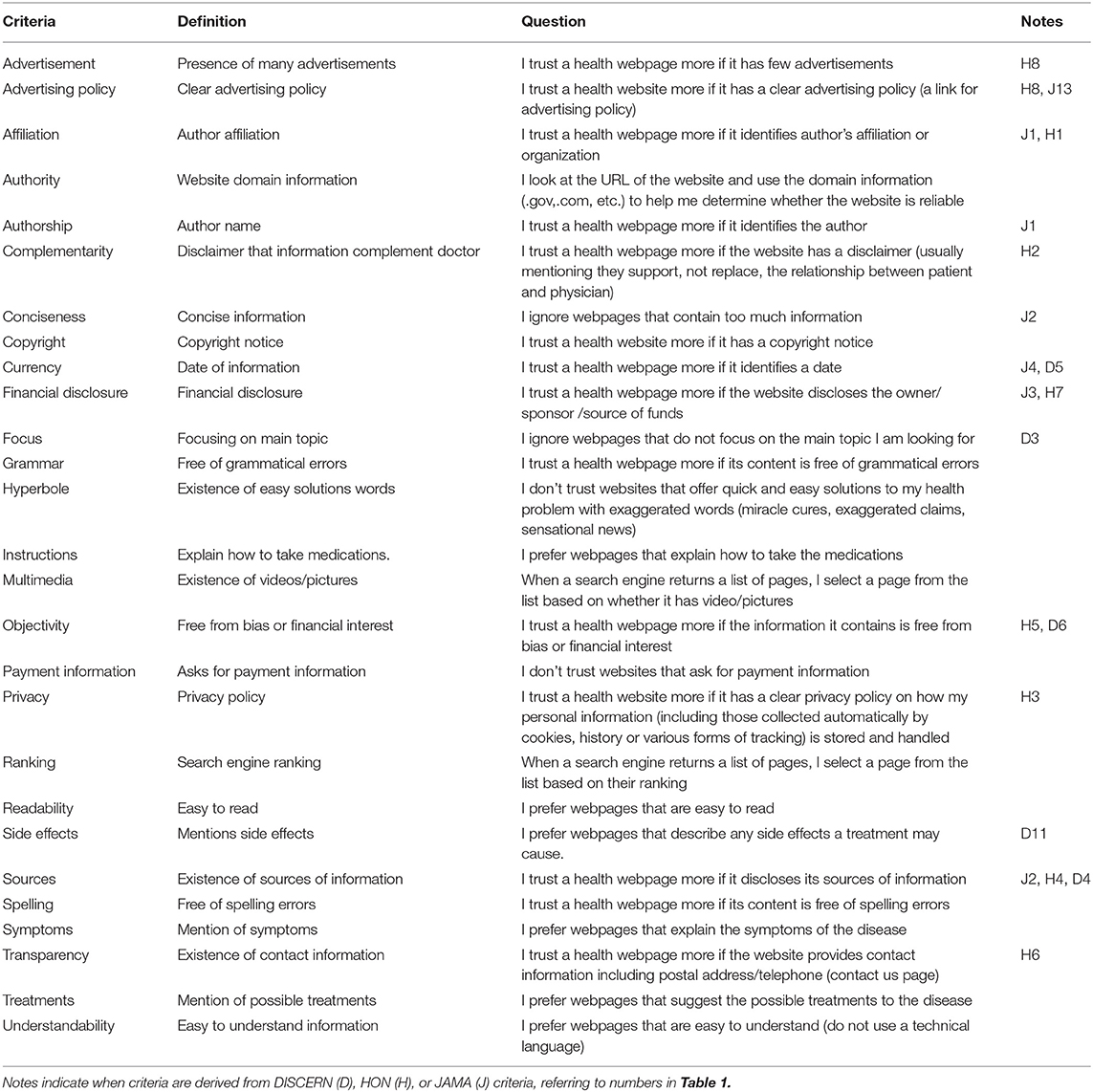

To design a questionnaire, we first identified relevant IQ criteria. These were based on the existing literature on HIQ, the instruments described above (Table 1), the standard IQ criteria listed in Table 2, and other studies.[10][13][14] General criteria, such as correct spelling and grammar, the importance of the presence of multimedia, or the ranking by the search engine, were also included. Other questions related to the content of the webpage, such as whether the webpage explains disease symptoms, therapies, how to take medications and their side effects, and whether respondents are wary of webpages offering quick solutions and miracle cures (we defined this as “hyperbole”). The respondents were also asked to rate the importance of the information describing treatments based on evidence-based medicine or complementary medicine, as this question would define a criterion of reliability (from the scientific point of view) of the information.

The full list of HIQ criteria considered is provided in Table 3, which also reports the questionnaire questions used to identify the importance of those criteria in trusting a health-related website. The table also shows which criteria were derived from the ones in the known HIQ tools (JAMA, HON, DISCERN). For most of the criteria, the questions were formulated in the form “I trust a health webpage more if…” or “I prefer webpages that…” and were assessed using a 5-point Likert scale (5 = strongly agree, 4 = somewhat agree, 3 = neither agree nor disagree, 2 = somewhat disagree, 1 = strongly disagree). Other questions aimed at defining the demographics of the sample (gender, age, country, education, whether studying a medically related subject or not, and others) or internet usage (time spent, main search engine used, device used, how often they searched health information, whether searching symptoms or therapies). The entire questionnaire (42 questions) is available as supplementary online information (Supplementary Table 1).

The project was approved on January 26, 2017 by the Research Ethics Panel of the School of Computer Engineering and Mathematics of the University of Brighton. The questionnaire was published online using Google Forms and promoted using social media such as Twitter and Facebook, and via email, including to students and staff at the University of Brighton and students at the Brighton and Sussex Medical School. We set Google Forms to limit one response per user to avoid duplicate responses. Eligibility criteria for participation were understanding the English language and being over 18 years of age. A total of 329 anonymous responses were recorded in the period February 1–June 16. We considered this a sufficient number as previous studies in the field of IQ and its dimensions are based on surveys with a number of responses ranging from 235 to 355.[10][11][15]

Statistical analysis of the responses was performed using the statistical analysis software package SPSS, and the specific test is described in the legend of each figure or table. Hierarchical cluster analysis of questionnaire responses (average linkage clustering using the weighted pair group method with arithmetic mean) was performed using GENE-E (Broad Institute, Cambridge, MA) for Windows.
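The clustering step can be illustrated with a minimal sketch of average linkage clustering by the weighted pair group method with arithmetic mean (WPGMA). This is not the GENE-E implementation: the function name `wpgma` and the toy distance matrix are hypothetical, and because the distance measure between questionnaire items is not stated here, the sketch simply takes a precomputed distance matrix as input.

```python
def wpgma(dist):
    """Agglomerative clustering with the WPGMA update rule.

    dist: symmetric square matrix (list of lists) of pairwise distances.
    Returns a list of merges (cluster_a, cluster_b, height). Original items
    are numbered 0..n-1; each merge creates a new cluster id n, n+1, ...
    """
    n = len(dist)
    # Store distances once per pair, keyed as (smaller_id, larger_id).
    d = {(i, j): dist[i][j] for i in range(n) for j in range(i + 1, n)}
    active = list(range(n))  # kept in increasing id order
    merges = []
    next_id = n
    while len(active) > 1:
        # Pick the closest pair of active clusters.
        pairs = [(a, b) for k, a in enumerate(active) for b in active[k + 1:]]
        a, b = min(pairs, key=lambda p: d[p])
        merges.append((a, b, d[(a, b)]))
        rest = [c for c in active if c not in (a, b)]
        for c in rest:
            d_ca = d[(min(c, a), max(c, a))]
            d_cb = d[(min(c, b), max(c, b))]
            # WPGMA: plain average of the two distances, regardless of
            # how many items each merged cluster contains.
            d[(c, next_id)] = (d_ca + d_cb) / 2
        active = rest + [next_id]
        next_id += 1
    return merges


# Hypothetical distances between four items (not study data).
toy = [
    [0, 1, 4, 5],
    [1, 0, 3, 6],
    [4, 3, 0, 2],
    [5, 6, 2, 0],
]
for a, b, height in wpgma(toy):
    print(f"merge {a} + {b} at height {height}")
```

The only difference from unweighted average linkage (UPGMA) is the update rule: UPGMA weights the two distances by cluster size, whereas WPGMA averages them equally.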

Results

Sample characteristics

We received 329 responses, 66% male and 33.7% female. Age groups were: 18–25 years, 26.4%; 26–40, 52.3%; 41–60, 18.8%; over 60, 1.5%. The responses came from 32 different countries: United Kingdom 41.5%, Yemen 20.4%, Saudi Arabia 13.4%, Germany 5.1%, Canada 3.8%, and 15.8% various other countries. Of the respondents, 49.5% had, or were studying toward, a postgraduate degree, 40.7% another higher education diploma, and 9.8% high school; of them 26.5% were of a biomedical background (a degree or studying toward a degree in medicine, pharmacology or biomedical sciences). Ten out of 329 participants responded that they do not seek health information online, and these were excluded from the analyses.

Ranking of IQ criteria

Figure 1 shows how all respondents ranked each of the IQ criteria described in Table 3. The full results of the questionnaire (raw data, mean, median) are provided as a supplementary file (Supplementary File 1). All responses had a satisfactory inter-rater reliability, with an overall Cronbach's Alpha for all 27 questions of 0.882 (for individual questions, Cronbach's Alpha ranged between 0.874 and 0.883).
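For readers unfamiliar with the statistic, Cronbach's alpha (usually described as a measure of internal consistency) for k items is k/(k − 1) × (1 − sum of item variances / variance of respondents' total scores). The sketch below computes it in plain Python rather than SPSS. The function names and the toy responses are hypothetical, and reading the per-question range above as "alpha with that question left out" is an assumption, not something stated in the text.

```python
from statistics import variance


def cronbach_alpha(responses):
    """responses: list of respondents, each a list of k item scores."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    items = list(zip(*responses))                      # one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_var_sum / total_var)


def alpha_if_item_deleted(responses):
    """Alpha recomputed k times, each time leaving one item out (assumed reading)."""
    k = len(responses[0])
    return [
        cronbach_alpha([row[:i] + row[i + 1:] for row in responses])
        for i in range(k)
    ]


# Hypothetical 5-point Likert answers: 4 respondents x 3 questions.
toy = [
    [5, 4, 5],
    [4, 4, 3],
    [2, 3, 2],
    [1, 2, 1],
]
print(round(cronbach_alpha(toy), 3))
print([round(a, 3) for a in alpha_if_item_deleted(toy)])
```

Sample variances are used for both the items and the totals; using population variances throughout would give the same alpha, since the factor cancels in the ratio.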

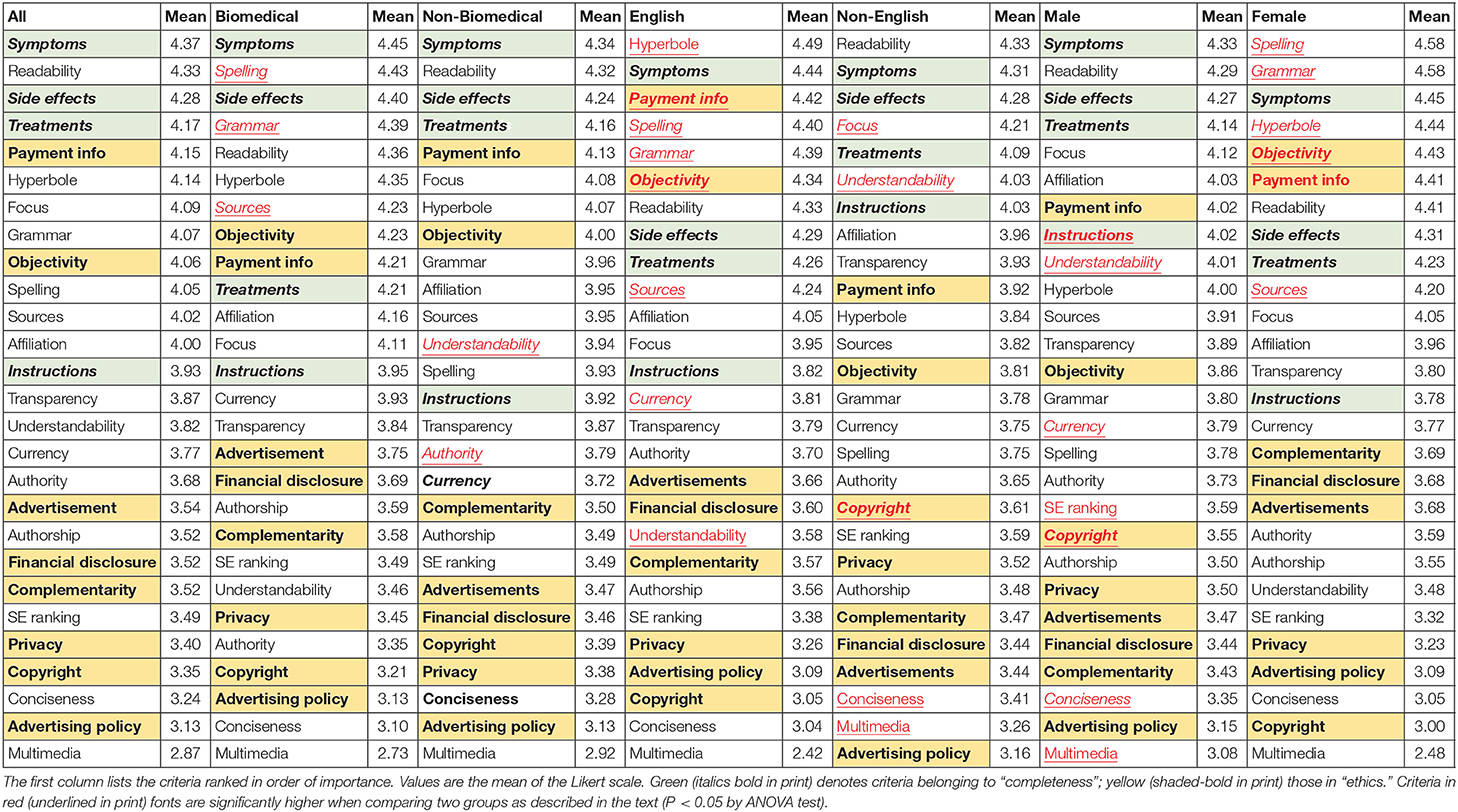

The ranking by the average Likert score is shown in Table 4 (first two columns). The median score of all 27 responses listed here was 3.87. It can be seen that a group of criteria that relate to the very specific context of health and disease (symptoms, side effects, treatments and instructions; in bold-italics in Table 4) are ranked high, indicating that users want information that is, above all, relevant and helpful.

On the other hand, criteria related to the four JAMA criteria (authorship, currency, sources, financial disclosure) are not considered particularly important and, with the only exception of “sources,” are all ranked below the median value.

Of the eight criteria related to the HONcode principles, only one was slightly above the median (affiliation; termed “authority” in the HON principles) while all the others (complementarity, privacy, attribution/sources, transparency, financial disclosure, advertising policy) were not deemed highly important (one criterion, “justifiability,” was not assessed in the questionnaire). With the exception of “sources,” a criterion that belongs to those in the JAMA criteria, all the criteria above could be broadly related to “ethics” and are highlighted in bold in Table 4. Authority, which we define as the affiliation of the website—whether governmental, from an international health organization, for instance (while we define "affiliation" as that of the author)—also ranked low.

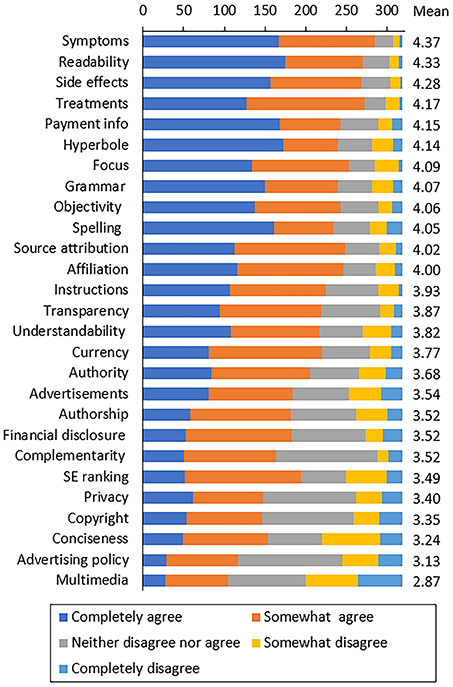

Identification of main dimensions of HIQ

We attempted to group the various criteria in IQ dimensions. To do so we have used a mixed approach. In part we relied on an ontological/theoretical approach and the existing classification described in Table 2. Then, with an empirical approach, we assessed whether some of these criteria followed a similar pattern in the responses to the questionnaire. For this purpose, we analyzed all individual responses using hierarchical cluster analysis.

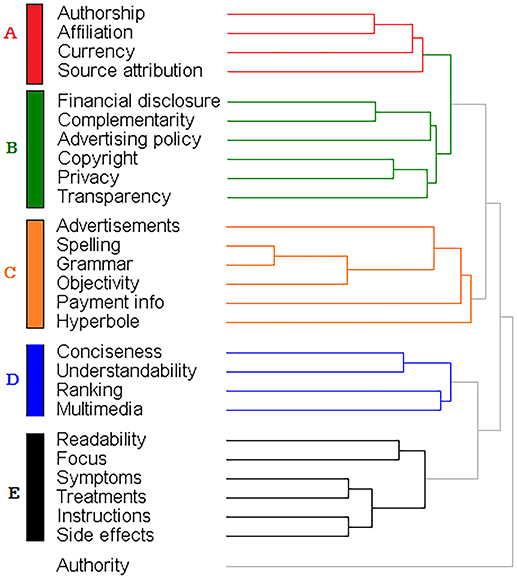

As shown in Figure 2, we identified five main clusters. Cluster A includes three of the JAMA criteria (authorship, currency and sources) and affiliation. Cluster B includes financial disclosure, complementarity, advertising policy, copyright, privacy and transparency, all criteria that somewhat relate to ethical aspects of IQ. Cluster C includes basic features of webpages (number of advertisements, spelling, grammar and objectivity) as well as hyperbole and payment info. Cluster D includes IQ criteria (conciseness, ranking, and multimedia) that specifically relate to online information in addition to understandability.

Cluster E includes criteria that relate to the practical usefulness for an information seeker in the specific context of health and disease (focus, symptoms, treatments, side effects of drugs, and information on their usage). This cluster also includes readability, and although at first one may think that this is a feature of the text (like spelling or grammar), it probably has a more practical value.

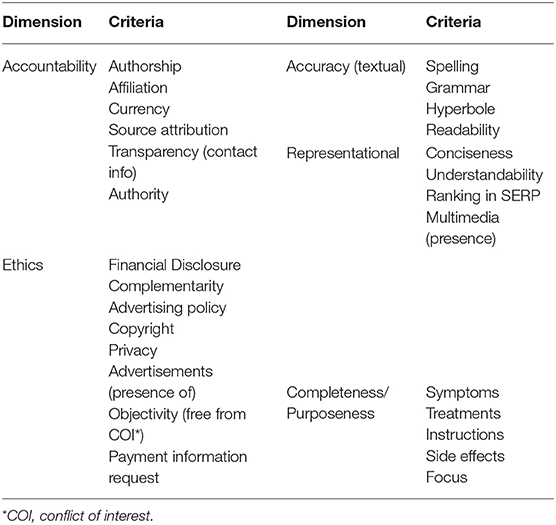

We now propose an organization of criteria of HIQ into dimensions, as outlined in Table 5. A first dimension relates to trustworthiness but could be better defined as “accountability” and includes information that defines basic criteria such as not being anonymous. This dimension includes four of the components of the JAMA score that are present in cluster A. We also included in this dimension “authority,” which did not belong to any cluster. In fact, our questionnaire defined authority as features of a website (such as the domain, whether a .com, .edu, or .org) and this is very similar to “affiliation,” defined as the affiliation of the individual author. We also included in this dimension “transparency” because, although in cluster B, it was defined as the presence of contact information for the author or website. The criteria of accountability are all intrinsic dimensions of HIQ and would apply equally well to information online and in print and would also apply to non-health related information.

A second dimension, ethics, defines ethical aspects of trustworthiness and includes all the criteria in cluster B except transparency (see above). We also included here “objectivity,” “advertisement,” and “payment information” although they clustered elsewhere, as this would fit with the description of this dimension. These are criteria of HIQ that could also be applied to non-health IQ with the exception of complementarity (the presence of a statement to say information supports, but does not replace, the relationship between patient and physician). Financial disclosure might be important in other types of information, but the issue of funding and conflict of interest is regarded as particularly important in health.

A third dimension defines textual accuracy and includes spelling, grammar, readability, and use of hyperbole or exaggeration. To define this dimension, we started from cluster C. However, because “hyperbole” can be considered a characteristic of the text, we decided to subsume it under “accuracy.” This dimension could apply equally to non-health information and to information in print, with the possible exception of hyperbole or exaggeration, which is more common in the news about scientific advancements.

A fourth dimension, defined as the “representational” dimension, comprises criteria (understandability, conciseness, search engine ranking, and presence of multimedia) that are probably more important in online information (which one wants to access quickly and concisely, so it can be read on a small screen) but would also apply to non-health subjects. These criteria correspond exactly to cluster D.

A last dimension defines the much sought-after elements of information that characterize its scientific completeness: presence of information specific to the medical condition or its treatment, as well as focus. In fact, all these criteria relate to focus. As such, even if these specific criteria relate to health, it would be easy to identify homologous criteria in other fields. This dimension could also apply to printed information, although focus is probably more important when information is accessed online, often on a small mobile device.

Subgroup analysis by educational subject, gender, and language

We first analyzed differences in the ranking given by participants based on whether they studied, or had a degree in, a biomedical field or not. Then we looked at native language (English vs. non-English) and gender.

The results are shown in Table 4, which reports, in columns 3 to 14, the ranking (as mean score) for all subgroups. When comparing biomedical students/graduates with non-biomedical ones, it was clear that biomedical education was associated with giving higher importance to text accuracy (spelling, grammar, sources). Higher importance to text accuracy (spelling, grammar, hyperbole) was also evident for English speakers, compared to non-English speakers. There were also significant gender differences, with textual accuracy being ranked higher by females, while males ranked “instructions” and “understandability” higher.

Importance of the scientific correctness of the information provided

We noted earlier that information about disease diagnosis and treatment is ranked highest in the whole sample (in the top quartile). However, the fact that a webpage describes a treatment for a disease does not mean that website is scientifically correct. One could come up with a webpage that meets all the criteria in the “completeness” dimension but misinforms the reader.

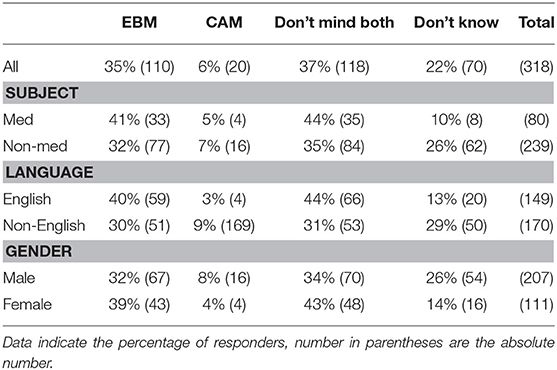

We recently proposed to use the information about the treatment suggested or promoted as a proxy for the scientific soundness of a web page.[16] Therefore, we asked participants whether they prefer websites that provide EBM information, complementary or alternative medicine (CAM), or don't care. The results shown in Table 6 indicate that only 6% preferred websites on CAM, 35% preferred EBM, and 37% did not assign this a particular importance. However, the preference for EBM was higher with biomedical education, English speakers, and females, and in these groups, there was a lower percentage of participants who did not know whether they prefer EBM or CAM. The association with biomedical education, language, and gender was statistically significant (P = 0.02, P < 0.001, P = 0.029, respectively, by the Pearson Chi-Square test). There was no significant association between EBM preference and education level (P = 0.866, data not shown).
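As an illustration of the kind of test reported above, the following is a minimal sketch of a Pearson chi-square test of independence on a contingency table, written in plain Python rather than SPSS. The function names and the counts are hypothetical and are not the study data; the p-value uses the closed-form chi-square survival function built from `math.erfc` and `math.gamma`.

```python
import math


def pearson_chi_square(table):
    """table: list of rows of observed counts. Returns (statistic, dof)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


def chi2_sf(x, dof):
    """Upper-tail probability P(X >= x) for a chi-square variable."""
    z = x / 2
    if dof % 2 == 0:
        a, q = 1.0, math.exp(-z)              # Q(1, z)
    else:
        a, q = 0.5, math.erfc(math.sqrt(z))   # Q(1/2, z)
    # Recurrence Q(a + 1, z) = Q(a, z) + z**a * exp(-z) / Gamma(a + 1).
    while a < dof / 2:
        q += z ** a * math.exp(-z) / math.gamma(a + 1)
        a += 1
    return q


# Hypothetical counts: rows = two subgroups, columns = three answer options.
observed = [
    [40, 5, 25],
    [60, 15, 95],
]
stat, dof = pearson_chi_square(observed)
print(f"chi-square = {stat:.2f}, dof = {dof}, p = {chi2_sf(stat, dof):.4f}")
```

A small p-value indicates that the distribution of answers differs between the subgroups more than would be expected by chance if preference and subgroup were independent.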

Discussion

We propose dimensions and criteria of HIQ based on the importance assigned to them by internet users. We used an empirical approach, like what was done 20 years ago by Wang and Strong[10] and Lee et al.[11] for IQ in the context of industries and organizations, with two major differences: the focus on the health-related content and trustworthiness of the information provided by websites, and the focus on online information. The results were not only used to rank the different criteria in order of perceived importance but also, using cluster analysis, to help with classifying them into dimensions.

Although the terminology is always ambiguous, we suggest that criteria of HIQ could be subsumed to dimensions as described in Table 5, bearing in mind that there may be areas of overlap. For instance, we assigned the criterion “hyperbole,” which in the context of HIQ means presenting a potential treatment as a “miracle drug,” to the dimension of textual accuracy, but on theoretical grounds it could also fit the ethical dimension of trustworthiness.

Of the criteria in the dimension “accountability,” which includes the four JAMA criteria (authorship, currency, sources, financial disclosure), “sources” is the one that ranks highest, but still only 11th. Authorship (19th) ranked lower than authority (17th) and affiliation (12th), indicating that the link to an institution or a medical degree, or the type of website (for instance whether a government website or a commercial one), is considered more important than the indication of the name of the author. The generally low importance given to the JAMA criteria was also observed in a survey by Eysenbach and Kohler[17], as they reported that “[c]ontrary to the statements made in the focus groups, in practice we observed that none of the participants actively searched for information on who stood behind the sites or how the information had been compiled.”[17]

The ethics dimension of trustworthiness includes aspects that are particularly important in medicine (conflict of interest, data privacy, financial disclosure). Of note, one criterion, “complementarity” (whether information should support, not replace, the doctor-patient relationship) is one of the HONcode principles[3] and specific for health.

The contextual information that we define as “textual accuracy” is also ranked high, and this includes spelling and grammar as well as the health-specific criterion “hyperbole,” which is very common in health news stories and web pages when authors portray a treatment with an overly positive tone or “spin.”[18]

The “completeness” dimension defines contextual information, which is necessary for the information to fulfill its task.[19] It includes both basic IQ criteria as well as some that are specific to health, and we could define it as “scientific completeness,” the information that users look for and rank high in our questionnaire. This is in agreement with a recent study performed in the United States showing that completeness of the information—which the authors defined as “the proportion of priori-defined elements covered by the website; breath of information”—also ranked higher in a study where participants were asked to rank health websites for some IQ criteria.[12] The importance given by participants to criteria related to “completeness/purposeness,” as indicated by the high ranking of information on symptoms, side effects, and treatments in Table 4, reflects the main use of the internet when searching health information. In fact, a survey of 622 patients in the MetroNet practices in the Detroit area reported that of the topics most often searched online, specific disease conditions and treatments were at the top.[20] To “find out about treatments” was also the top purpose of health-related internet use in a survey of patients of a general practice surgery in semi-rural England.[21]

Of the representational criteria, understandability ranked rather high. On the other hand, representational criteria specific for webpages (ranking by the search engine, presence of multimedia, conciseness) are deemed as the least important.

Another aspect highlighted by the present study is that the ranking of criteria of HIQ is not a one-size-fits-all situation, differing depending on education, gender, and linguistic background. This is not a novel concept: Wang and Strong already suggested that the classification of IQ criteria in dimensions is different for academics and practitioners, in a way an extension of the concept of data “fit-for-use.”[10] Floridi also noted that IQ should consider purposeness, and that the value of IQ criteria may be different for different users.[22]

In this sense, the difference in the ranking of HIQ criteria among subjects with a biomedical degree or biomedical students (pharmacy, biomedical science, medicine) and those in other education areas could be extrapolated to the difference between health professionals and lay persons. Those with a biomedical background give more importance to criteria such as correct spelling and grammar than those with non-biomedical background. Not surprisingly, “sources” are ranked higher in a biomedical background, as identifying and citing references is key to this field. On the other hand, in a non-biomedical background, “understandability” is ranked higher. Interestingly, we have not found any significant difference in the ranking of the “ethical” criteria by subjects with a biomedical background.

Native English speakers also assign more importance to textual accuracy (spelling, grammar), as well as to the ethical criterion of “objectivity.” Attention to “hyperbole” is also ranked higher by this group, and we discussed above how this criterion also has an “ethical” value. A very similar pattern was observed in females, when compared with males, with the added higher importance assigned to “payment information,” suggesting a stronger ethical focus in females.

The differences in ranking identified in the subgroup analysis hint at a limitation of any classification into dimensions based on a questionnaire, as the results will vary with the population investigated, and any subsequent analysis (including the cluster analysis used here) will vary accordingly. This suggests that when IQ is defined, the target user should be well defined.

The other aspect of this study was on which criteria are regarded as important and which are not. The fact that the ranking by the search engine is not seen as an indicator of trustworthiness of a website is very interesting, but this does not mean that the user is likely to go through several search engine result pages rather than limiting to the first 10 to 20. The significance of this response should be assessed experimentally, for instance using eye-tracking software to validate the importance of the different criteria.

The low ranking on “ethical” trustworthiness criteria is worrying, as it might indicate that users are somewhat vulnerable to information that has a conflict of interest, such as that from commercial sources promoting potentially ineffective treatments, or to other types of health misinformation. This is probably something that educators, particularly those in the biomedical field, should consider improving, and males seem to be more “at risk” as they value “ethical” criteria lower than females. This difference is supported by a recent study reporting that males are more likely to disseminate fake health information than females.[23] It should be noted that this is at variance with results from the MetroNet study cited above, where patients ranked “Endorsement by a government agency or professional organization” and “reliable source/author” as the most important factors influencing their trust (“perceived accuracy”) of healthcare websites.[20] Likewise, “reputable/trustworthy organization” was the most important factor in trusting health information in a 2002–2003 survey of 55 participants in United Kingdom health support groups, although this study was not restricted to online information but included information provided by healthcare professionals, brochures, books, TV/radio, and others.[24] It is difficult to say whether these differences are due to the different time periods when those studies were carried out or to the difference in the population, as patients exposed to medical research and support groups may have a higher health literacy than our sample.

We suggest that our proposed dimensions of HIQ may be an attempt to build a more comprehensive theoretical framework than the one that can be derived from the existing studies. For instance, the recent paper by Tao et al.[12] proposing a definition of HIQ dimensions does not take into account some of the criteria that we derived from the HONcode and DISCERN tools, particularly those related to what we call “ethical criteria.”[12]

In conclusion, this study describes a possible organization of HIQ criteria into dimensions that identifies dimensions not previously recognized as such in IQ, such as the ethical dimension, which was identified through this ranking approach. Contrary to our expectations, given this is a hot topic in the news, we observed that ethical criteria, while regarded highly in the academic and medical environment, are not considered highly by the public.

Clearly, the main limitation of this study, which could affect its external validity, is that the focus mainly on university-level participants may lead to an underestimation of the importance of criteria aimed at the average user. It would be important to extend this study to a more general sample of the public, and particularly patients and carers, to see whether there is a different perception of HIQ and if this goes in the same direction as the results in the comparison between non-biomedical and biomedical educational background reported here. Another important point to consider when extrapolating the conclusions of this study is that our survey asked generically what users would look for to trust a website when searching for a health topic. It is possible that the factors that account for trust in a webpage with health-related information are different depending on the topic searched, and this may be particularly important for highly controversial topics, such as abortion, vaccines, or genetic modifications.

Supplementary material

The supplementary material for this article can be found online at: https://www.frontiersin.org/articles/10.3389/fmed.2018.00260/full#supplementary-material

Acknowledgements

MA-J was supported by a Ph.D. studentship from the University of Brighton. We thank Audrey Marshall for critical review of the questionnaire.

Author contributions

All authors designed research, analyzed the data, and wrote the paper. MA-J designed research, performed research, analyzed the data, and wrote the paper.

Conflict of interest statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

References

- ↑ 1.0 1.1 Silberg, W.M.; Lundberg, G.D.; Musacchio, R.A. (1997). "Assessing, controlling, and assuring the quality of medical information on the Internet: Caveant lector et viewor--Let the reader and viewer beware". JAMA 277 (15): 1244–5. doi:10.1001/jama.1997.03540390074039. PMID 9103351.

- ↑ 2.0 2.1 2.2 Charnock, D.; Shepperd, S.; Needham, G.; Gann, R. (1999). "DISCERN: An instrument for judging the quality of written consumer health information on treatment choices". Journal of Epidemiology and Community Health 53 (2): 105–11. doi:10.1136/jech.53.2.105. PMC PMC1756830. PMID 10396471. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1756830.

- ↑ 3.0 3.1 3.2 Boyer, C.; Selby, M.; Scherrer, J.R.; Appel, R.D. (1998). "The Health On the Net Code of Conduct for medical and health Websites". Computers in Biology and Medicine 28 (5): 603–10. doi:10.1016/S0010-4825(98)00037-7. PMID 9861515.

- ↑ Howick, J. (2011). The Philosophy of Evidence-Based Medicine. John Wiley & Sons. ISBN 9781405196673.

- ↑ Smith, T.C.; Novella, S.P. (2007). "HIV denial in the Internet era". PLoS Medicine 4 (8): e256. doi:10.1371/journal.pmed.0040256. PMC PMC1949841. PMID 17713982. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1949841.

- ↑ 6.0 6.1 Illari, P.; Floridi, L. (2014). "Information Quality, Data and Philosophy". The Philosophy of Information Quality. 358. Springer. doi:10.1007/978-3-319-07121-3_2. ISBN 9783319071213.

- ↑ Klein, B.D. (2001). "User Perceptions of Data Quality: Internet and Traditional Text Sources". Journal of Computer Information Systems 41 (4): 9–15. doi:10.1080/08874417.2001.11647016.

- ↑ Knight, S.-A.; Burn, J. (2005). "Developing a Framework for Assessing Information Quality on the World Wide Web". Informing Science: The International Journal of an Emerging Transdiscipline 8: 159–72. doi:10.28945/493.

- ↑ 9.0 9.1 Wand, Y.; Wang, R.Y. (1996). "Anchoring data quality dimensions in ontological foundations". Communications of the ACM 39 (11): 86–95. doi:10.1145/240455.240479.

- ↑ 10.0 10.1 10.2 10.3 10.4 10.5 Wang, R.Y.; Strong, D.M. (1996). "Beyond Accuracy: What Data Quality Means to Data Consumers". Journal of Management Information Systems 12 (4): 5–33. doi:10.1080/07421222.1996.11518099.

- ↑ 11.0 11.1 11.2 Lee, Y.W.; Strong, D.M.; Kahn, B.K. et al. (2002). "AIMQ: A methodology for information quality assessment". Information & Management 40 (2): 133–46. doi:10.1016/S0378-7206(02)00043-5.

- ↑ 12.0 12.1 12.2 12.3 Tao, D.; LeRouge, C.; Smith, K.J.; De Leo, G. (2017). "Defining Information Quality Into Health Websites: A Conceptual Framework of Health Website Information Quality for Educated Young Adults". JMIR Human Factors 4 (4): e25. doi:10.2196/humanfactors.6455. PMC PMC5650677. PMID 28986336. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5650677.

- ↑ Bernstam, E.V.; Shelton, D.M.; Walji, M. et al. (2005). "Instruments to assess the quality of health information on the World Wide Web: what can our patients actually use?". International Journal of Medical Informatics 74 (1): 13–19. doi:10.1016/j.ijmedinf.2004.10.001. PMID 15626632.

- ↑ Zhang, Y.; Sun, Y.; Xie, B. (2015). "Quality of health information for consumers on the web: A systematic review of indicators, criteria, tools, and evaluation results". Journal of the Association for Information Science and Technology 66 (10): 2071–84. doi:10.1002/asi.23311.

- ↑ Pitt, L.F.; Watson, R.T.; Kavan, C.B. (1995). "Service Quality: A Measure of Information Systems Effectiveness". MIS Quarterly 19 (2): 173–87. doi:10.2307/249687.

- ↑ Yaqub, M.; Ghezzi, P. (2015). "Adding Dimensions to the Analysis of the Quality of Health Information of Websites Returned by Google: Cluster Analysis Identifies Patterns of Websites According to their Classification and the Type of Intervention Described". Frontiers in Public Health 3: 204. doi:10.3389/fpubh.2015.00204. PMC PMC4548082. PMID 26380250. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4548082.

- ↑ 17.0 17.1 Eysenbach, G.; Köhler, C. (2002). "How do consumers search for and appraise health information on the world wide web? Qualitative study using focus groups, usability tests, and in-depth interviews". BMJ 324 (7337): 573–7. doi:10.1136/bmj.324.7337.573. PMC PMC78994. PMID 11884321. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC78994.

- ↑ Walsh-Childers, K.; Braddock, J.; Rabaza, C. et al. (2018). "One Step Forward, One Step Back: Changes in News Coverage of Medical Interventions". Health Communication 33 (2): 174–87. doi:10.1080/10410236.2016.1250706. PMID 27983868.

- ↑ Sebastian-Coleman, L. (2013). Measuring Data Quality for Ongoing Improvement: A Data Quality Assessment Framework (1st ed.). Morgan Kaufmann. pp. 376. ISBN 9780123970336.

- ↑ 20.0 20.1 Schwartz, K.L.; Roe, T.; Northrup, J. et al. (2006). "Family medicine patients' use of the Internet for health information: a MetroNet study". Journal of the American Board of Family Medicine 19 (1): 39–45. doi:10.3122/jabfm.19.1.39. PMID 16492004.

- ↑ Rose, P.W.; Jenkins, L.; Fuller, A. et al. (2002). "Doctors' and patients' use of the Internet for healthcare: a study from one general practice". Health Information and Libraries Journal 19 (4): 233–5. doi:10.1046/j.1471-1842.2002.00402.x. PMID 12485155.

- ↑ Floridi, L. (2013). "Information Quality". Philosophy & Technology 26 (1): 1–6. doi:10.1007/s13347-013-0101-3.

- ↑ Yuelin, L.; Zhang, X.; Wang, S. (2017). "Fake vs. real health information in social media in China". Proceedings of the Association for Information Science and Technology 54 (1): 742–43. doi:10.1002/pra2.2017.14505401139.

- ↑ Childs, S. (2004). "Developing health website quality assessment guidelines for the voluntary sector: Outcomes from the Judge Project". Health Information and Libraries Journal 21 (Suppl. 2): 14–26. doi:10.1111/j.1740-3324.2004.00520.x. PMID 15317572.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation.