Journal:Wrangling environmental exposure data: Guidance for getting the best information from your laboratory measurements

| Full article title | Wrangling environmental exposure data: Guidance for getting the best information from your laboratory measurements |
|---|---|
| Journal | Environmental Health |
| Author(s) | Udesky, Julia O.; Dodson, Robin E.; Perovich, Laura J.; Rudel, Ruthann A. |
| Author affiliation(s) | Silent Spring Institute, MIT Media Lab |
| Primary contact | Email: Use journal website to contact |
| Year published | 2019 |
| Volume and issue | 18 |
| Article # | 99 |
| DOI | 10.1186/s12940-019-0537-8 |
| ISSN | 1476-069X |
| Distribution license | Creative Commons Attribution 4.0 International |
| Website | https://ehjournal.biomedcentral.com/articles/10.1186/s12940-019-0537-8 |
| Download | https://ehjournal.biomedcentral.com/track/pdf/10.1186/s12940-019-0537-8 (PDF) |

Abstract

Background: Environmental health and exposure researchers can improve the quality and interpretation of their chemical measurement data, avoid spurious results, and improve analytical protocols for new chemicals by closely examining lab and field quality control (QC) data. Reporting QC data along with chemical measurements in biological and environmental samples allows readers to evaluate data quality and appropriate uses of the data (e.g., for comparison to other exposure studies, association with health outcomes, use in regulatory decision-making). However, many studies do not adequately describe or interpret QC assessments in publications, leaving readers uncertain about the level of confidence in the reported data. One potential barrier to both QC implementation and reporting is that guidance on how to integrate and interpret QC assessments is often fragmented and difficult to find, with no centralized repository or summary. In addition, existing documents are typically written for regulatory scientists rather than environmental health researchers, who may have little or no experience in analytical chemistry.

Objectives: We discuss approaches for implementing quality assurance/quality control (QA/QC) in environmental exposure measurement projects and describe our process for interpreting QC results and drawing conclusions about data validity.

Discussion: Our methods build upon existing guidance and years of practical experience collecting exposure data and analyzing it in collaboration with contract and university laboratories, as well as the Centers for Disease Control and Prevention. With real examples from our data, we demonstrate problems that would not have come to light had we not engaged with our QC data and incorporated field QC samples in our study design. Our approach focuses on descriptive analyses and data visualizations that have been compatible with diverse exposure studies, with sample sizes ranging from tens to hundreds of samples. Future work could incorporate additional statistically grounded methods for larger datasets with more QC samples.

Conclusions: This guidance, along with example table shells, graphics, and some sample R code, provides a useful set of tools for getting the best information from valuable environmental exposure datasets and enabling valid comparison and synthesis of exposure data across studies.

Keywords: exposure science, environmental epidemiology, environmental chemicals, environmental monitoring, quality assurance/quality control (QA/QC), data validation, exposure measurement, measurement error

Background

Chemical measurements play a critical role in the study of links between the environment and health, yet many researchers in this field receive little if any training in analytical chemistry. The growing interest in measuring and evaluating health effects of co-exposure to a multitude of chemicals[1][2] makes this gap in training increasingly problematic, as the task at hand becomes ever more complicated (i.e., analyzing more chemicals, including new chemicals of concern). If steps are not taken throughout sample collection and analysis to minimize and characterize likely sources of measurement error, the consequences for interpreting these valuable measurements can range from false negatives to false positives, as we will illustrate with real examples from our own data.

Some important considerations when measuring and interpreting environmental chemical exposures have been discussed in other peer-reviewed articles or official guidance documents. For example, a recent document from the Environmental Protection Agency (EPA) provides citizen scientists with guidance on how to develop a field measurement program, including planning for the collection of quality control (QC) samples.[3] The Centers for Disease Control and Prevention (CDC) also gives guidance related to collection, storage, and shipment of biological samples for analysis of environmental chemicals or nutritional factors.[4] To assess the quality of already-collected data, LaKind et al. (2014) developed a tool to evaluate epidemiologic studies that use biomonitoring data on short-lived chemicals, with a focus on critical elements of study design such as choice of analytical and sampling methods.[5] The tool was recently incorporated into “ExpoQual,” a framework for assessing suitability of both measured and modeled exposure data for a given use (“fit-for-purpose”).[6] Other useful guidance has been published, for example on automated quality assurance/quality control (QA/QC) processes for sensors collecting continuous streams of environmental data[7] and for establishing an overall data management plan, including documentation of metadata and strategies for data storage.[8]

Despite these helpful documents, there is still a lack of readily accessible, practical guidance on how to interpret and use the results of both field and laboratory QC checks to qualify exposure datasets (i.e., flag results for certain compounds or certain samples that are imprecise, estimated, or potentially over- or under-reported), and this gap is reflected in the environmental health literature. While the vast majority of environmental health studies report robust findings based on high-quality measurements, questions about measurement validity have led to confusion and lack of confidence in some topic areas. For example, a number of studies have measured rapidly metabolized chemicals such as phthalates and bisphenol A (BPA) in blood or other non-urine matrices, despite the fact that urine is the preferred matrix for these chemicals. Phthalates and BPA are present at higher levels in urine and, when the proper metabolites are measured, there is less concern about contamination from external sources, including contamination from plastics during specimen collection.[9]

More commonly, however, exposure studies simply do not adequately report on QA/QC or describe how QC results informed reporting and interpretation of the data. In the context of systematic review and weight of evidence approaches, not reporting on QA/QC may result in a study being given less weight. For example, the risk of bias tool employed in case studies of the Navigation Guide method for systematic review includes reporting of certain QA/QC results in its criteria for a “low risk of bias” rating (e.g., reference Lam et al.[10]). When we applied the Navigation Guide's QA/QC criterion to 30 studies of biological or environmental measurements that we included in a recent review of environmental exposures and breast cancer[11], we found that more than half either did not report QA/QC details that were required for a “low risk of bias” assessment, or if they did report QA/QC, they did not interpret or use them adequately to inform the analysis (e.g., reported poor precision but did not discuss how/whether this could affect findings) (see Additional file 1 for details). Similarly, when LaKind et al. applied their study quality assessment tool to epidemiologic literature on BPA and neurodevelopmental and respiratory health, they found that QA/QC issues related to contamination and analyte stability were not well-reported.[12] Of note, several of the studies in our breast cancer review that did not provide adequate QA/QC information had their samples analyzed at the CDC Environmental Health Laboratory. It is helpful to include summaries of QA/QC assessments in published work, even if researchers are using a well-established lab, because this provides a useful standard for comparing QA/QC in other studies.

Over many years of collecting and interpreting environmental exposure data, we have developed a standard approach for (1) using field and laboratory QA/QC to validate and qualify chemical measurement data for environmental samples and (2) presenting our QC findings in our research publications (e.g., reference Rudel et al.[13]). These methods are based on data validation procedures from the EPA, Army Corps of Engineers, and U.S. Geological Survey[14][15][16][17], as well as the guidance of the many experienced chemists with whom we have collaborated. In this commentary, we compile our methods into a practical guide, focusing on how to use the information to make decisions about data usability and how to make the information transparent in publications. Our guide is organized in three sections, presenting questions to consider during study design, implementation, and data analysis. We describe key elements of QA/QC, including those for assessing precision, accuracy, and sample contamination, and we include suggested graphics (Additional files 2 and 4) and table shells (Additional file 2) that clearly present QC data, emphasizing how it may affect interpretation of study measurements. Minimizing and characterizing potential errors requires close collaboration between the researchers, who may have designed the study and plan to analyze the data, and the chemists performing the analysis. As such, our guidance also includes example correspondence (Additional file 2) to help establish this relationship at the start of a project.

We present a detailed approach based on our own studies, acknowledging that this is an example, not a one-size-fits-all approach. Every study is unique and some will require specialized quality assessment not covered here. Still, we anticipate that many environmental health scientists will find this example to be a useful framework for building their own processes.

About the wrangling guide

Our guide is organized by a series of questions that we ask when we start a new study, and then ask again when we receive measurement data from the lab. Key QA/QC concepts are introduced in the section on study design, and they are more thoroughly addressed in sections concerning study implementation and data interpretation.

Not every question is relevant to every study; for example, researchers working with a lab to develop a new analytical method will need to focus more on method validation and quality control than those using a well-established method and credentialed lab. Still, controlling for issues related to sample collection and transport remains important in the latter scenario, as does attention to variation in method performance and sources of contamination when samples are analyzed at the laboratory in multiple batches. Our guidance is most relevant to targeted organic chemical analyses, which use liquid or gas chromatography, often in combination with mass spectrometry, to determine whether each chemical in a pre-defined set is present in samples. QA/QC approaches for non-targeted methods, where tentative identities are established by matching to a library of mass spectra such as the National Institute of Standards and Technology (NIST) database[18], are addressed elsewhere.[19]

This guide is not a set of rules, but rather establishes a framework for evaluating and reporting QC data for chemical measurements in environmental or biological samples. While it may be most useful to environmental health scientists who have little or no experience in analytical chemistry, we hope that researchers with a range of experience will find it helpful to consult our approach for evaluating and presenting QC data in publications.

Because the number of QC samples available is often limited by budgetary constraints, many of the methods we use rely on visualization and conservative action (i.e., removing chemicals from our dataset or qualifying their interpretation unless there is evidence that the analytical method was accurate and precise) rather than on statistical methods. Whether statistical methods are incorporated or not, tabulating, visualizing, and communicating about QA/QC for environmental exposure measurements is important in order to reveal systematic error in the laboratory[20] or in the field, supporting future use of the data.[6]

Study design

What can we measure and how?

One of our first priorities when designing a new study is to consult with a chemist to establish an analyte list and method for analysis.

Chemical identities

Given the complexity of chemical synonyms, it is helpful to be as specific as possible when communicating about the chemicals to be analyzed. One approach is to send the lab a list of the chemical names (avoiding the use of trade names, which can be imprecise), Chemical Abstracts Service (CAS) numbers, and configurations (e.g., branched or linear, if relevant) of all desired analytes (see Additional file 2 for example correspondence). For biomonitoring, it is also important to determine if the parent chemical or metabolites will be targeted.
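A single mistyped digit in a CAS number can silently change which chemical the lab analyzes, so it may be worth checking the analyte list before sending it. The sketch below validates the CAS check digit: every digit except the last is weighted by its position counted from the right, and the weighted sum modulo 10 must equal the final check digit. The analyte list is hypothetical. A passing check only shows that the number is well formed, not that it names the intended chemical.

```python
import re

# CAS numbers look like NNNNNNN-NN-N: 2 to 7 digits, 2 digits, then one check digit.
CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_valid_cas(cas: str) -> bool:
    """Return True if `cas` is well formed and its check digit is correct."""
    match = CAS_PATTERN.match(cas.strip())
    if match is None:
        return False
    digits = match.group(1) + match.group(2)
    check_digit = int(match.group(3))
    # Weight each digit by its position counted from the right (1, 2, 3, ...).
    weighted_sum = sum(int(d) * pos for pos, d in enumerate(reversed(digits), start=1))
    return weighted_sum % 10 == check_digit


# Hypothetical analyte list: (chemical name, CAS number as typed by the study team).
analytes = [
    ("bisphenol A", "80-05-7"),
    ("triclosan", "3380-34-5"),
    ("triclosan (typo)", "3380-34-6"),
]

for name, cas in analytes:
    if not is_valid_cas(cas):
        print(f"Check the CAS number for {name}: {cas}")
```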

Matrix

Another consideration in developing the analyte list is what type of samples are available (if working with stored samples) or will be collected. As discussed previously, certain biological matrices are preferred over others for measurement, depending on the chemicals (e.g., reference Calafat et al.[9]). Matrix type is also relevant for environmental samples; for example, physical chemical properties like the octanol-air partition coefficient inform whether an analyte is more likely to be found in air or dust.[21]

Method

The process of determining a final list of analytes will differ depending on whether the lab has an established method or is developing a new method, and whether it is targeted to a few chemicals with similar structure versus many chemicals with different properties (different polarities, solubilities, etc.). Targeting a broad suite of chemicals may limit the degree of precision and accuracy that can be achieved for each individual chemical, and the lab may need to invest substantial effort to develop a multi-residue method—that is, a method that can analyze for many chemicals at once—and determine a final list of target chemicals with acceptable method performance. In any case, a new method should be validated to characterize performance measures—precision, accuracy, expected quantitation and method detection limits, and the range of concentrations that can be quantitated with demonstrated precision and accuracy—before analyzing study samples. If the lab already has an established method for the chemicals of interest, the research team should review method performance measures to ensure they are consistent with study objectives.

Quantification method

The method of quantification affects the types of QC data that are expected from the lab. Three common approaches are external calibration, internal calibration, and isotope dilution (a form of internal calibration). External calibration, where the response (i.e., chromatogram peak) from the sample is compared to the response from calibration standards containing known amounts of the analytes of interest, is a simple method that can be used for a variety of different analyses. However, results can be influenced by interference from other chemicals present in the sample matrix and resulting fluctuations in the analytical instrument response.[22] With internal calibration, on the other hand, one or more labeled compounds (either one of the targeted analytes or a closely related compound) are added to each of the samples just before they are injected into the instrument for analysis and used to correct for variation in the instrument response. The internal standard must be similar to the target compounds in physical chemical properties (e.g., a labeled polychlorinated biphenyl should not be used to represent a brominated diphenyl ether). Finally, for isotope dilution methods, which are the most accurate, isotopically labeled analogs of each target compound are added to samples prior to extraction. Additional internal standards are added to the samples just prior to injection to monitor loss of the labeled analogs, and the analytical software then corrects for loss during sample extraction and for effects of the sample matrix (e.g., presence of other compounds in the sample that interfere with the analysis).[22] Many laboratories that analyze chemical levels in blood, urine, or tissues (e.g., the CDC Environmental Health Laboratory) use isotope dilution quantification. However, isotopically labeled standards are not available for every compound and may be cost-prohibitive.

If quantification is by internal or external calibration, researchers will likely need to review and report more extensive QC data from the lab than when using isotope dilution, as discussed in the section on study implementation.
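The sketch below quantifies one analyte both ways to show the difference between external and internal calibration. It assumes a single-point calibration for simplicity; real methods typically use multipoint calibration curves. The internal-standard version uses a relative response factor (RRF) measured in a calibration standard. Because it works with the ratio of analyte to internal-standard response, a drop in overall instrument sensitivity cancels out. The function names, peak areas, and concentrations are invented for illustration.

```python
def external_calibration(area_sample, area_std, conc_std):
    """Concentration from the ratio of sample to standard response (single point)."""
    return area_sample * conc_std / area_std


def relative_response_factor(area_analyte, conc_analyte, area_is, conc_is):
    """RRF = (A_analyte / A_IS) * (C_IS / C_analyte), measured in a calibration standard."""
    return (area_analyte / area_is) * (conc_is / conc_analyte)


def internal_calibration(area_analyte, area_is, conc_is, rrf):
    """Concentration from the analyte/internal-standard response ratio."""
    return (area_analyte / area_is) * conc_is / rrf


# Calibration standard: 10 ng/mL analyte (area 5000), 20 ng/mL internal standard (area 8000).
rrf = relative_response_factor(5000, 10.0, 8000, 20.0)

# A sample that truly contains 10 ng/mL, run after instrument response has dropped by
# half, so both the analyte and internal-standard areas are halved.
print(external_calibration(2500, 5000, 10.0))       # 5.0 (biased low)
print(internal_calibration(2500, 4000, 20.0, rrf))  # 10.0 (ratio corrects the drift)
```

In isotope dilution, the labeled analog is added before extraction rather than just before injection. The same ratio therefore also corrects for losses during extraction.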

Sensitivity of the method

Another important factor in selecting a method is to make sure it is sensitive enough to detect the anticipated concentrations in the field samples (samples submitted to the lab) down to levels that are relevant to the research question. For example, commercial labs measuring environmental chemicals may establish reporting limits to meet the needs of occupational or regulatory safety compliance testing; these limits may be much higher than levels that are meaningful for research questions about general population exposure and could result in most data being reported as non-detect or qualified as estimated and imprecise. On the other hand, lower reporting limits generally translate to more expensive testing, so researchers have the opportunity to balance sensitivity and cost.

How to minimize sample contamination?

There are ample opportunities for sample contamination during collection, storage, shipment, and analysis, especially when targeting ubiquitous chemicals commonly encountered in consumer products, home and office furnishings, or laboratory equipment. An important aspect of method validation is to check for contamination of samples during field activities, from collection containers, during transport and storage, and during laboratory extraction and analysis (see discussion of blanks in the section on study implementation). The CDC’s guidance on sample collection and management identifies some possible sources of contamination when analyzing for common chemicals like plastics chemicals, antimicrobials, and preservatives in blood or urine. Key considerations, depending on the particular chemicals being targeted, include selecting appropriate collection containers (e.g., glass containers if analyzing for plastics chemicals), avoiding the use of urine preservatives (e.g., when analyzing for parabens, BPA), and providing adequate instructions to participants collecting their own samples (e.g., avoid using antimicrobial soaps or wipes during collection).[4] As noted previously, contamination can also be minimized in biomonitoring of some chemicals by measuring a metabolite rather than parent chemical, and possibly by measuring a conjugated rather than free form of the metabolite.[9] In some cases, the lab may need to pre-screen collection containers or other sampling materials to see if they contain any target chemicals. For example, when we used polyurethane foam (PUF) sorbent to collect air samples for analysis of flame retardants, plastics chemicals, and preservatives, we asked the lab to pre-screen the PUF matrix for target analytes. Another important precaution was to ship the samplers wrapped in aluminum foil that had been baked in a muffle furnace to ensure it was clean and uncoated.

How will the lab report the data?

Three key elements of data typically reported by the lab are the identity of the chemical, the reporting limit for each chemical and sample, and how much of each chemical is present in each sample. Sometimes an additional measure is needed to normalize mass of chemical per sample, for example, grams of urinary creatinine, urine specific gravity, grams of serum lipid, or cubic meters of air (see work by LaKind et al.[5] for discussion of issues related to matrix adjustment and presentation of measurements).
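Once the lab reports the extra measure, these normalizations are simple arithmetic. The sketch uses the commonly applied creatinine and specific-gravity corrections for urine and a mass-per-volume calculation for air. The reference specific gravity (1.020 here) is an assumption; studies often set it to the median of their own population. The example values are made up.

```python
def creatinine_adjusted(conc_ug_per_l, creatinine_g_per_l):
    """Urinary concentration expressed per gram of creatinine (ug/g creatinine)."""
    return conc_ug_per_l / creatinine_g_per_l


def specific_gravity_adjusted(conc, sg, sg_ref):
    """Specific-gravity correction: C * (SG_ref - 1) / (SG - 1)."""
    return conc * (sg_ref - 1) / (sg - 1)


def air_concentration(mass_ng, volume_m3):
    """Air concentration (ng/m3) from mass on the sampler and volume of air sampled."""
    return mass_ng / volume_m3


print(creatinine_adjusted(2.0, 1.25))                # ug/g creatinine
print(specific_gravity_adjusted(2.0, 1.025, 1.020))  # ug/L at the reference SG
print(air_concentration(50.0, 10.0))                 # ng/m3
```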

Chemical identities

It is helpful to request in advance that the lab report CAS numbers and configurations (if relevant) along with chemical names (see Additional files 2 and 3 for example reporting requests).

Reporting limits

Common terms used by laboratories to discuss reporting limits include "instrument detection limit" (IDL), "method detection limit" (MDL), and "limit of quantitation" (LOQ). The IDL and MDL are both related to the level of an analyte that can be detected with confidence that it is truly present. The IDL captures the smallest true signal (change in instrument response when an analyte is present) that can be distinguished from background noise (variation in the instrument response to blank samples), while the MDL takes into account additional sources of error introduced during sample preparation (e.g., the extraction process, possible concentration or dilution of samples) and thus is higher than the IDL. The MDL is also often referred to as the limit of detection (LOD) or detection limit (DL). The LOQ, on the other hand, describes the lowest mass or concentration that can be quantified with confidence in the measured amount. The reporting limit (RL) or method reporting limit (MRL), which is either the lowest value that the lab will report or the lowest value that the lab will report without flagging the data as estimated, is often (but not always) the same as the quantitation limit or LOQ.
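Labs differ in how they derive these limits, so the calculation below is only one example. It follows the spiked-replicate part of the U.S. EPA procedure in 40 CFR Part 136, Appendix B. Under that procedure, the MDL is the sample standard deviation of at least seven replicate low-level spikes, carried through the full method, multiplied by the one-sided 99% Student's t value for n - 1 degrees of freedom. The current revision of the procedure also computes an MDL from method blanks and reports the higher of the two values; this sketch omits that step. The replicate values are invented.

```python
import statistics

# One-sided 99% Student's t values keyed by degrees of freedom (n - 1).
T_99_ONE_SIDED = {6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821, 10: 2.764}


def mdl_from_spiked_replicates(results):
    """MDL = t(n-1, 0.99) * s for replicate low-level spiked samples."""
    df = len(results) - 1
    if df not in T_99_ONE_SIDED:
        raise ValueError("this sketch only tabulates t for 7 to 11 replicates")
    s = statistics.stdev(results)  # sample standard deviation (n - 1 denominator)
    return T_99_ONE_SIDED[df] * s


# Seven hypothetical replicate spikes (ng/mL) carried through the full sample preparation.
replicates = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.0]
print(f"MDL = {mdl_from_spiked_replicates(replicates):.3f} ng/mL")
```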

Before submitting samples for analysis, it is helpful to find out (1) the methods and terminology that the laboratory will use to describe reporting limits (LOD, LOQ, etc.) and (2) whether reporting limits will be consistent within a chemical or whether limits could vary between samples or batches. Equally critical is to clarify how the lab will report non-detects. Several different values could appear in the amount or concentration fields for non-detects, including but not limited to zeroes, the detection limit, the reporting limit, or “ND.”
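Once the lab's conventions are known, converting every non-detect entry to one explicit representation before analysis keeps a zero or an "ND" from being mistaken for a measured value. The sketch assumes a hypothetical set of codes ("ND", "<0.5", and zero). Which codes your lab actually uses must be confirmed with the lab. So must the meaning of a reported number equal to the detection limit, which cannot be resolved from the number alone. The `Result` type and `parse_result` function are illustrative names.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical non-detect codes; confirm the actual codes with your laboratory.
NONDETECT_CODES = {"ND", "N.D.", "BDL"}


@dataclass
class Result:
    value: Optional[float]  # measured amount; None for a non-detect
    detected: bool
    limit: Optional[float]  # the limit a non-detect is "less than", if known


def parse_result(raw, limit=None, zero_is_nondetect=True):
    """Convert one raw lab entry into a Result with an explicit detect flag."""
    text = str(raw).strip().upper()
    if text in NONDETECT_CODES:
        return Result(None, False, limit)
    if text.startswith("<"):              # e.g. "<0.5": non-detect below 0.5
        return Result(None, False, float(text[1:]))
    value = float(text)
    if value == 0 and zero_is_nondetect:  # some labs enter zeros for non-detects
        return Result(None, False, limit)
    return Result(value, True, limit)


for raw in ["ND", "<0.5", 0, "1.7"]:
    print(raw, parse_result(raw, limit=0.5))
```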

Amount

Another important point to discuss in advance with the laboratory is how they will report values for compounds with a confirmed identity but measured at levels below those that can be accurately quantitated. For example, when measuring chemicals of emerging interest, we ask laboratories to report estimated values below the RL and we flag them during data analysis. This practice has some limitations[23] but is preferable to falsely reducing variance in the dataset by treating estimated values below the RL as equivalent to non-detects below the detection limit. Non-detects can present significant data analysis challenges, and while a discussion of the best available methods and the problems with common approaches such as substituting the RL, RL/2, or zero for non-detects is beyond the scope of this commentary, it is a critical issue, and we refer the reader to several helpful resources.[23][24][25][26] Reporting estimated values is not standard practice for many laboratories, so it is important to raise this issue early on (see Additional file 2 for example correspondence). If the lab reports data qualifier flags, it may be necessary to clarify the interpretation of those flags, including but not limited to which flags distinguish non-detects, detects above the MRL, and estimated values. In other words, it is best not to make assumptions.
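The sketch below keeps estimated values while flagging them. A detected result below the RL retains its estimated value but carries a flag, so it can be handled separately in sensitivity analyses instead of being collapsed into the non-detects. Reporting both a detection frequency and a frequency at or above the RL makes the flagged fraction visible. The qualifier labels are descriptive placeholders, not any lab's flag codes, and the values are hypothetical.

```python
def qualify(value, detected, reporting_limit):
    """Classify one result as a non-detect, an estimated value (< RL), or quantified."""
    if not detected:
        return "non-detect"
    if value < reporting_limit:
        return "estimated"  # identity confirmed, amount below the RL
    return "quantified"


# Hypothetical results for one chemical: (value, detected), with RL = 0.5 ng/mL.
results = [(0.8, True), (0.3, True), (None, False), (1.4, True)]
RL = 0.5

flags = [qualify(v, d, RL) for v, d in results]
detection_frequency = sum(d for _, d in results) / len(results)
above_rl_frequency = flags.count("quantified") / len(results)

print(flags)
print(f"detected: {detection_frequency:.0%}, at or above RL: {above_rl_frequency:.0%}")
```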

Study implementation

What QA/QC is needed?

QA/QC occurs both inside and outside the analytical laboratory (see Table 1). Field QC samples, namely blanks and duplicates, capture the sum of contamination and measurement error from collection, storage, transport, and laboratory sources. We base the number of QC samples we collect in the field on budget and our sample size, generally aiming for at least 20% QC samples (e.g., if collecting 80 field samples, then collect 16 field QC samples), though a higher percentage is needed in small studies. Lab analysts should be blinded to the identity of field QC samples whenever possible. Maintaining blinding can be challenging, so it is worth putting some thought into sample names (e.g., QC samples should not have obviously different IDs than other samples, should not be labeled with a “D” for duplicate or “B” for blank). Logs retained at the site must contain sufficient information to allow the data analysts to identify field QC samples and sample types.
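The QC count and the blinding can both be handled when preparing the sample manifest. The 20% target comes from the text. The "S" plus four-digit ID format, the even split between blanks and duplicates, and the function names are assumptions for illustration. Drawing random IDs from a single pool keeps QC samples from standing out by name or by position in the sequence. The key linking IDs to sample types stays with the study team.

```python
import math
import random


def n_field_qc(n_field_samples, qc_percent=20):
    """Field QC samples needed to reach `qc_percent` of field samples, rounded up."""
    return math.ceil(n_field_samples * qc_percent / 100)


def assign_blind_ids(internal_names, seed=None):
    """Give every sample (field or QC) a random ID in the same format.

    Returns the key {blind_id: internal_name}; it stays with the study team
    and is never sent to the lab.
    """
    rng = random.Random(seed)
    numbers = rng.sample(range(1000, 10000), len(internal_names))
    return {f"S{num}": name for num, name in zip(numbers, internal_names)}


n_field = 80
n_qc = n_field_qc(n_field)  # 16 for 80 field samples at 20%
names = [f"home_{i:02d}" for i in range(1, n_field + 1)]
names += [f"field_blank_{i}" for i in range(1, 9)] + [f"duplicate_{i}" for i in range(1, 9)]
key = assign_blind_ids(names, seed=42)
print(n_qc, len(key))
```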

QC samples prepared in the lab can include spiked samples or certified reference materials (CRMs) for target chemicals to evaluate the accuracy of the analytical method, surrogate compounds added to field samples to estimate recovery during extraction and analysis, and blanks to assess contamination with target chemicals from some source in the laboratory. While laboratories generally conduct rigorous review of their own QC data, considering lab and field QC together can help to identify specific sources of contamination, imprecision, and systematic error. As such, we typically request to review the lab’s raw QC data in conjunction with the field QC data.

Spiked samples and certified reference material

Spiked samples and CRMs establish the accuracy of the method by assessing the recoveries of known amounts of each target chemical from a clean or representative matrix. A CRM is a matrix comparable to that used for sampling (e.g., drinking water) that has been certified to contain a specific amount of analyte with a well-characterized uncertainty. If CRMs aren’t available, the laboratory can prepare laboratory control samples (LCSs) by spiking known amounts of target chemicals into a clean sample of the matrix of interest, such as a dust wipe, air sampler, purified water, or synthetic urine or blood that has been analyzed and shown to be free of the analytes of interest, or to contain a consistent amount of analytes of interest that can be subtracted from the amounts measured in the spiked sample to calculate a percent recovery. The LCS or CRM—at least one per analytical batch—is run through the same sample preparation, extraction, and analysis as the field samples to capture the accuracy of the complete method; calculating the percent of the known/spiked amount recovered for each analyte tells us whether the method is accurate in the matrix.
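The recovery calculation reduces to a one-line formula. This sketch covers the three cases in the paragraph: a spike into a clean matrix, a spike into a matrix with a consistent background amount, and a CRM compared to its certified value. The function name and all amounts are hypothetical.

```python
def percent_recovery(measured, known_amount, background=0.0):
    """Percent of a known (spiked or certified) amount recovered.

    `background` is a consistent amount already present in the unspiked
    matrix; it is subtracted before comparing to the known amount.
    """
    return 100.0 * (measured - background) / known_amount


# Hypothetical LCS: 50 ng spiked into a clean matrix, 46 ng measured.
print(percent_recovery(46.0, 50.0))
# Hypothetical LCS on a matrix with a consistent 5 ng background, 52 ng measured.
print(percent_recovery(52.0, 50.0, background=5.0))
# Hypothetical CRM certified at 12.0 ng/g, measured at 10.8 ng/g.
print(percent_recovery(10.8, 12.0))
```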

Another type of spiked sample, called a matrix spike, can be used to check the extraction efficiency for a complex sampling matrix that may interfere with the analysis. These samples are typically included if there is concern about interference from the sampling matrix, for example, with house dust, soil or sediment samples, consumer products, or biological samples like blood. Instead of recovery from a clean matrix, these QC checks capture recovery from a representative field sample. Here the “matrix” refers to all elements of the sample other than the targeted analytes; this includes the sampling medium (e.g., dust, PUF, foam) itself as well as any other chemicals present in the sample that might interfere with measurement of target chemicals. A matrix spike can be created, for example, by splitting a representative sample collected in the field and spiking the target analytes into one half prior to extraction and analysis. The recovery of spiked analyte is determined as the amount measured in the spiked sample minus the amount measured in the non-spiked sample divided by the spike amount. A limitation of this approach is that the analytes are spiked in an already dissolved state, so it is possible that the analytes in the environmental matrix would not be extracted as readily from the matrix as the spiked chemicals. Thus, the true extraction efficiency may be lower than represented by the matrix spike.
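The matrix spike formula in this paragraph can be written out directly. In this sketch, the split-sample values are hypothetical, and all three inputs must be in the same units (here, ng per gram of dust).

```python
def matrix_spike_recovery(spiked_result, unspiked_result, spike_amount):
    """(spiked - unspiked) / spike amount, as a percent; all in the same units."""
    return 100.0 * (spiked_result - unspiked_result) / spike_amount


# Hypothetical split dust sample: native level 120 ng/g, spiked half 180 ng/g,
# spike equivalent to 100 ng/g of dust.
recovery = matrix_spike_recovery(180.0, 120.0, 100.0)
print(f"matrix spike recovery: {recovery:.0f}%")
```

Because the spike is added in dissolved form, a result like this may still overstate how efficiently the native analyte is extracted from the dust.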

For newly developed methods where performance is not characterized, we request results for all recoveries of spiked samples and/or CRMs so that we can perform visual checks that have at times revealed systematic problems with the analytical method that were not noted by the lab (see the data interpretation section for further discussion). For well-established methods, and particularly when isotope dilution quantification is used, it is sufficient to request a table summarizing the spike recovery or CRM recovery results (by batch, if relevant) for reporting in publications.
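Before any plotting, a useful first step is to tabulate recoveries by analyte and batch, which makes patterns like one batch recovering consistently low easy to spot. This sketch uses only the Python standard library. The 70 to 130% acceptance window is a placeholder; use the limits from the lab's method or your QA plan. The records are invented.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical acceptance window (percent); use the limits from the lab's method or QA plan.
LOW, HIGH = 70.0, 130.0


def summarize_recoveries(records):
    """Summarize (analyte, batch, percent_recovery) records.

    Returns {analyte: {batch: (mean_recovery, n, n_outside_window)}}.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for analyte, batch, recovery in records:
        grouped[analyte][batch].append(recovery)
    return {
        analyte: {
            batch: (mean(recs), len(recs), sum(not LOW <= r <= HIGH for r in recs))
            for batch, recs in sorted(batches.items())
        }
        for analyte, batches in grouped.items()
    }


# Hypothetical LCS/CRM recoveries from two analytical batches.
records = [
    ("BPA", 1, 95.0), ("BPA", 1, 101.0),
    ("BPA", 2, 62.0), ("BPA", 2, 66.0),
    ("triclosan", 1, 88.0),
]

for analyte, batches in summarize_recoveries(records).items():
    for batch, (avg, n, n_out) in batches.items():
        print(f"{analyte}, batch {batch}: mean {avg:.0f}% (n={n}, {n_out} outside window)")
```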

Surrogate recovery standards

Whereas recoveries from LCSs, matrix spikes, and/or CRMs tell us about the performance of the method in a clean or representative matrix, surrogate compounds are used to evaluate recoveries from individual samples. Recoveries of surrogate compounds can help identify any individual samples that may have inaccurate quantification, for example due to extraction errors or chemical interferences. Surrogates, like internal standards, are spiked into each sample; however, surrogates are added prior to sample extraction to assess the efficiency of this process. Internal standards, on the other hand, are added after extraction, just prior to injection into the chromatographic system, to account for matrix effects and other variation in the instrument response during analysis. The ideal surrogate is a chemical that is not typically present in the environment but that is representative of the physical and chemical properties of target analytes.[16] It is best to have a representative surrogate for each individual chemical, though when analyzing for numerous chemicals at once with multi-residue methods, cost and time constraints may result in one or a few surrogates being selected to represent a class of compounds. In this case, it is critical that the lab selects an appropriate surrogate.

For analyses using external or internal calibration, we ask the lab to provide us with the recovery results for each surrogate in each sample, so that we can flag any samples or compounds that might have had extraction problems. However, if the lab uses isotope dilution quantification, we are less concerned about obtaining this raw data from the laboratory given that the reported results are already automatically corrected for extraction and matrix effects.
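When surrogate recoveries are provided for each sample, flagging can be automated. This sketch maps each target analyte to the surrogate chosen to represent it, reflecting the multi-residue case where one surrogate covers a class of compounds. Any analyte whose surrogate falls outside its limits in a given sample is flagged for that sample. The surrogate names, limits, and recoveries are hypothetical; real limits come from the lab's method.

```python
# Hypothetical acceptance limits (percent recovery) for each surrogate.
SURROGATE_LIMITS = {"surrogate_A": (60.0, 120.0), "surrogate_B": (50.0, 130.0)}

# Hypothetical mapping of target analytes to their representative surrogate.
ANALYTE_SURROGATE = {"pyrene": "surrogate_A", "phenanthrene": "surrogate_A", "BPA": "surrogate_B"}


def flag_extraction_problems(recoveries, limits, analyte_surrogate):
    """Return sorted (sample, analyte) pairs whose surrogate recovery is out of limits.

    `recoveries` maps (sample_id, surrogate) to percent recovery.
    """
    flags = set()
    for (sample_id, surrogate), recovery in recoveries.items():
        low, high = limits[surrogate]
        if not low <= recovery <= high:
            for analyte, rep in analyte_surrogate.items():
                if rep == surrogate:
                    flags.add((sample_id, analyte))
    return sorted(flags)


recoveries = {
    ("S1001", "surrogate_A"): 85.0,
    ("S1001", "surrogate_B"): 97.0,
    ("S1002", "surrogate_A"): 42.0,  # low: possible extraction problem
    ("S1002", "surrogate_B"): 101.0,
}
print(flag_extraction_problems(recoveries, SURROGATE_LIMITS, ANALYTE_SURROGATE))
```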

Blanks

Collecting and preparing several types of blank samples helps us to distinguish sources of contamination. Laboratory blanks alert us to possible contamination originating in the lab. These blanks can capture contamination during sample extraction (solvent blanks), from reagents and other materials used in the analytical method (solvent method blanks), or from “typical” background levels of target analytes present in the sampling matrix (matrix blanks). Field blanks, on the other hand, capture all possible contamination during sample collection and analysis. Field blanks are clean samples (e.g., distilled water, air sampling cartridge detached from pump immediately following calibration) that are transported to the sampling location and exposed to all of the same conditions as the real samples (e.g., the sampler is opened, if applicable) except the actual collection process. We aim for at least 10% of our samples to be field blanks, with an absolute minimum of three field blanks.
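The 10% target with a floor of three field blanks can be written as a small planning helper. Rounding up is an assumption of this sketch, and the function name is illustrative.

```python
import math


def n_field_blanks(n_samples, percent=10, minimum=3):
    """Field blanks for a study: `percent` of samples, rounded up, but at least `minimum`."""
    return max(minimum, math.ceil(n_samples * percent / 100))


for n in (20, 45, 80, 200):
    print(n, "samples ->", n_field_blanks(n), "field blanks")
```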

Unfortunately, in some cases there aren’t good options for representative field blanks. For example, field blanks can be created for biomonitoring programs by taking empty collection containers into the field and using purified water or synthetic urine or blood to create a blank.[4] However, important shortcomings of this approach are that (1) it is difficult to capture contamination that can be introduced by sample collection materials such as needles and plastic tubing used to collect blood, (2) water may not perform the same as urine or blood in the extraction and analysis, and (3) the lab will likely be able to identify the field blanks. Similarly, it is difficult to maintain lab blinding when using a “clean” matrix like vacuumed quartz sand as a field blank for vacuumed house dust.

Duplicates

Collecting side-by-side duplicate samples in the field helps assess the precision of both the sample collection and analytical methods. Duplicate samples can also be created by collecting a single sample and splitting it prior to analysis, which is the only option for biological samples. However, this method only captures the precision of the analysis process[14][17] and could lead to un-blinding of the lab analyst if, for example, the split samples are noticeably smaller than the others. When planning for duplicate collection, the best practice is to label these samples so that the lab analyst is blinded to duplicate pairs (i.e., use different sample IDs for the two samples). Ideally, researchers should plan to collect or create (that is, split) one duplicate pair for every 10–20 samples collected and spread duplicate pairs across analytical batches.
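Keeping the lab blind to duplicate pairs is mostly a labeling problem. The sketch below makes two illustrative assumptions. Duplicates are chosen at random at a rate of one pair per `every` samples (rounded up). Labels use a made-up `S0001`-style format. The key that links blinded IDs to study samples stays with the research team.

```python
import random


def plan_duplicates(sample_ids, every=10, seed=None):
    """Pick samples to duplicate (about one pair per `every` samples) and give
    every container an opaque ID so the lab cannot recognize duplicate pairs.

    Returns (blinded_ids, key). blinded_ids is what goes on the labels, in a
    random order. key maps each blinded ID to (study_sample_id, role) and must
    stay with the study team, not the lab.
    """
    rng = random.Random(seed)
    ids = list(sample_ids)
    # Ceiling division: one pair per started block of `every` samples.
    n_pairs = -(-len(ids) // every)
    duplicated = set(rng.sample(ids, n_pairs))
    containers = [(sid, "primary") for sid in ids]
    containers += [(sid, "duplicate") for sid in ids if sid in duplicated]
    rng.shuffle(containers)
    # IDs are assigned after shuffling, so their order says nothing about pairing.
    key = {f"S{i:04d}": c for i, c in enumerate(containers, start=1)}
    return list(key), key
```

Because the blinded IDs are assigned after shuffling, consecutive IDs say nothing about which containers are pairs. Spreading pairs across batches still depends on how the lab groups samples, so it is worth settling that with the lab explicitly.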

Analytical batches

Analytical performance can shift over time, and even between multiple extractions or instrument runs within a short time window. Laboratories often analyze samples in multiple batches, that is, sets of field samples and associated laboratory QC samples that are analyzed together in one analytical run. The time between batches can vary from days to months or even years, though ideally this time span is minimized in order to maintain consistent equipment and procedures throughout the study.

Two approaches help address batch-to-batch variability: (1) randomizing participant samples between batches by specifying the order and grouping of samples (and blind field QC samples) when submitting samples to the lab (this may require corresponding with the lab to determine the batch size in advance), and (2) running CRMs—such as standard reference material (SRM) from NIST[27]—in each batch of samples in order to characterize drift. When CRMs are not available, another option is for the researcher to prepare identical/split reference samples. We have done this, for example, by pooling together several urine specimens and making many aliquots of the pool, then including 1–2 blinded samples from this pool with each set of samples we send to the lab. If the laboratory analysis is performed in multiple batches, all QC elements should be examined on a batch-specific basis. Not every laboratory will specify whether or not samples were analyzed in batches; it is a good idea to request that a variable for batch be included in the results report.
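The randomization in approach (1) can be planned before samples are shipped, together with blinded aliquots of a pooled reference sample. This Python sketch assumes the lab has told you its batch size and will run samples in the grouping you specify. The `POOL-nn` labels are hypothetical placeholders and should be replaced with blinded IDs.

```python
import random


def assign_batches(sample_ids, batch_size, refs_per_batch=1, seed=None):
    """Randomly assign samples to analytical batches of roughly equal size.

    Each batch holds at most `batch_size` positions, `refs_per_batch` of which
    are reserved for aliquots of a pooled reference sample (or a CRM), inserted
    at random run positions so drift can be tracked batch by batch.
    """
    if batch_size <= refs_per_batch:
        raise ValueError("batch_size must exceed refs_per_batch")
    rng = random.Random(seed)
    order = list(sample_ids)
    rng.shuffle(order)
    per_batch = batch_size - refs_per_batch
    n_batches = -(-len(order) // per_batch)  # ceiling division
    # Dealing shuffled samples round-robin gives batches that differ by at most one.
    batches = [order[i::n_batches] for i in range(n_batches)]
    ref_no = 0
    for batch in batches:
        for _ in range(refs_per_batch):
            ref_no += 1
            # Placeholder label: replace with a blinded ID before shipping.
            batch.insert(rng.randint(0, len(batch)), f"POOL-{ref_no:02d}")
    return batches
```

Dealing the shuffled samples out round-robin keeps batch sizes within one sample of each other. As a result, no batch ends up holding only a handful of participants alongside its QC samples.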

In Additional files 2 and 3, we provide example correspondence for requesting QC data and consistent formatting from the lab.

Data interpretation

What was measured?

Chemical identities

No amount of QA/QC can save a dataset from basic misunderstandings about what is being reported. After receiving data, it is helpful to ask the chemists to double check the analyte list (chemical name, CAS, isomer details) against the list of standards used in the analysis, particularly if this information was not included in the report from the lab. It is worthwhile to make this verification even when chemical identities were specified in advance of the analysis, as it is possible that the standard used for analysis was slightly different from the one planned. Only through this process, for example, did we discover that a lab had accidentally purchased a standard for 2,2,4-trimethyl-1,3-pentanediol isobutyrate rather than 2,2,4-trimethyl-1,3-pentanediol diisobutyrate (two different chemicals).
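A mechanical cross-check does not replace the chemists' review. It can, however, catch the kind of mismatch described above before it propagates. The sketch assumes both the lab report and the list of standards can be reduced to a mapping from an identifier (ideally the CAS number) to a name. The identifiers in the example are hypothetical placeholders.

```python
def check_analytes(reported, standards):
    """Compare the analytes in a lab report with the standards actually used.

    Both arguments map an identifier (ideally the CAS number) to a chemical name.
    Anything listed in one place but not the other, or listed under the same
    identifier with a different name, is returned for follow-up with the chemists.
    """
    rep, std = set(reported), set(standards)

    def norm(name):
        # Ignore case and repeated whitespace when comparing names.
        return " ".join(name.lower().split())

    return {
        "reported_without_standard": sorted(rep - std),
        "standard_not_reported": sorted(std - rep),
        "name_mismatch": sorted(k for k in rep & std
                                if norm(reported[k]) != norm(standards[k])),
    }


# Hypothetical identifiers; in practice use the CAS numbers from each list.
report = {"ID-1": "2,2,4-trimethyl-1,3-pentanediol diisobutyrate"}
used = {"ID-2": "2,2,4-trimethyl-1,3-pentanediol isobutyrate"}
print(check_analytes(report, used))
```

In this example, the reported diisobutyrate has no matching standard, and the isobutyrate standard has no matching result. That is exactly the prompt to ask the lab which compound was actually measured.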

Table 2 summarizes some steps for getting acquainted with a new dataset received from the lab. We have also published sample R code on GitHub that may be helpful for getting acquainted with a new dataset, including examining trends in QC and field samples over time.[28]

Were there trends over time?

Analytical batches

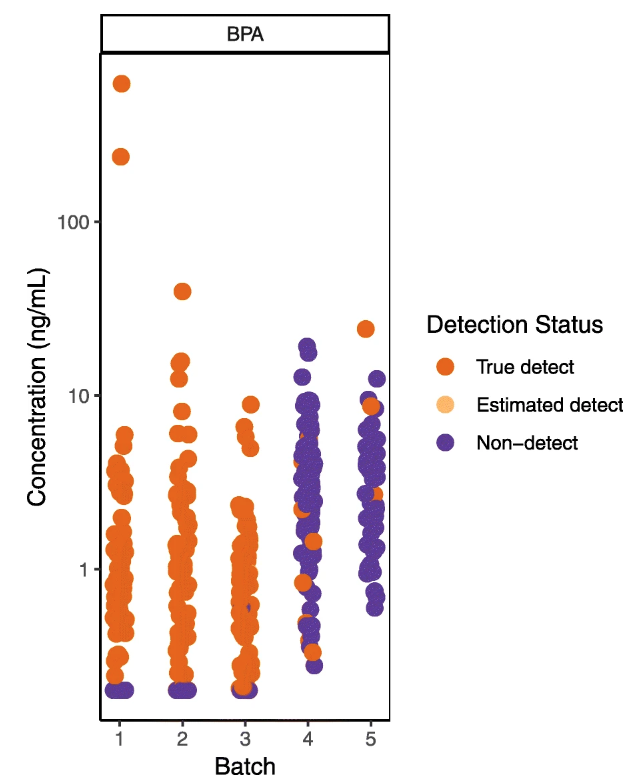

Examining results by batch or even by sample run order can reveal trends in QC samples over time, identifying systematic laboratory errors that may be missed by summary statistics or visualizations.[20] Shifts in method performance over time may require batch-specific corrections or dropping or flagging data from certain batches. Notably, a trend in QC sample results over time can be problematic even if the individual results remain within the acceptable limits established by the lab. In our own work, for example, examining our data by analytical batch revealed an upward trend in sample-specific detection limits for some analytes, such that detection limits in later batches were within the range of sample results from earlier batches (Fig. 1). The detection limits in the later batches still met the specifications of our contract with the lab, but it was clear that we would not be able to compare results in the latter two batches to those in the first three. We showed the plot in Fig. 1 to the lab and they agreed to re-analyze the samples in the later batches, which resulted in more consistent detection limits.

Is the method accurate?

Spiked samples and certified reference material

Table 3 outlines our approach for analyzing LCS recovery, matrix spike recovery, or CRM data. The approach is similar for all of these samples. However, one distinction is that if LCS recovery and other QC measures, such as lab blanks (matrix, solvent method, or other), are acceptable, a poor matrix spike recovery (higher or lower than acceptable bounds) can alert chemists to interferences from matrix effects, suggesting steps to address this such as matrix-matched calibration.[17] We typically only use data for analytes that have average LCS, matrix spike, and/or CRM recoveries between 50 and 150%, though this decision criterion can be adjusted based on the needs of the project. If we do retain data for chemicals with spike or CRM recoveries outside of this acceptable range, we note in publications that concentrations in our data may be under- or over-reported.
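The recovery calculations behind this screen are standard. An LCS or CRM result is divided by the spiked or certified amount. A matrix spike result first has the native level in the unspiked sample subtracted. Here is a minimal Python sketch that uses the 50–150% bounds from the text as adjustable defaults. The function names are illustrative.

```python
from statistics import mean


def percent_recovery(measured, expected):
    """Recovery (%) for an LCS or CRM: measured / expected (spiked or certified) x 100."""
    return 100.0 * measured / expected


def matrix_spike_recovery(spiked_result, unspiked_result, amount_added):
    """Matrix spike recovery (%): the native level in the unspiked sample is
    subtracted before comparing with the amount added."""
    return 100.0 * (spiked_result - unspiked_result) / amount_added


def screen_recoveries(recoveries, low=50.0, high=150.0):
    """recoveries maps analyte -> list of % recoveries. The decision uses the
    average, as in the text; min and max are kept because a wildly scattered
    analyte deserves review even if its average happens to fall in bounds."""
    summary = {}
    for analyte, values in recoveries.items():
        avg = mean(values)
        decision = "retain" if low <= avg <= high else "review"
        summary[analyte] = {"mean": avg, "min": min(values),
                            "max": max(values), "decision": decision}
    return summary
```

The sketch returns the minimum and maximum alongside the mean. This makes cases like the HBCD example below easy to spot, because erratic recoveries are a warning sign in their own right.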

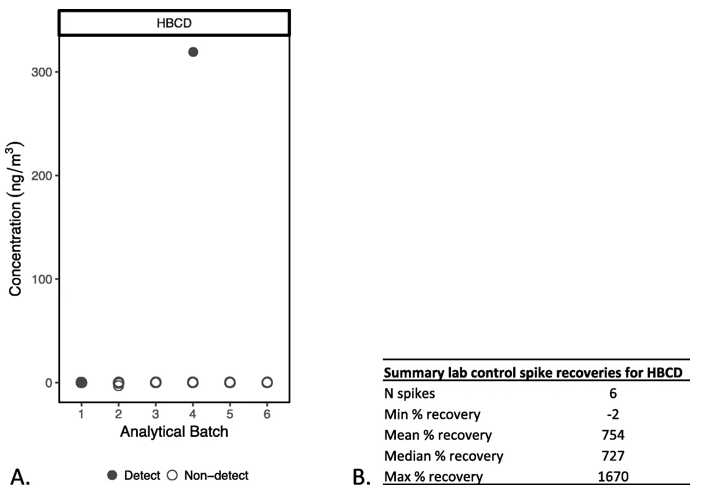

Figure 2 illustrates a case from our own data where the laboratory reported that 1,2,5,6,9,10-Hexabromocyclododecane (HBCD), a brominated flame retardant, was mostly “not detected,” but the LCS recoveries, which ranged from −2 to 1670% and averaged about 750%, indicated that the method was not able to accurately quantify this chemical. We removed this compound from our dataset and did not report on it. Examining spike recoveries thus prevents us from reporting a chemical as “not detected,” or from reporting an unreliable detect, if the analytical method is not performing accurately for that compound.

A summary of the recovery information should be included in the peer-reviewed manuscript to demonstrate accuracy. See Additional file 2: Tables S1-S2 and Figure S1 for an example of how to present this information.

Were there problems with certain samples?

Surrogate recovery standards

When isotope dilution quantitation with automatic recovery correction is not employed, we review the surrogate recovery standard data for each individual sample, generally considering 50–150% recovery to be acceptable. Interpretation of an out-of-range surrogate recovery depends both on its direction and on the levels of the associated analytes (i.e., those represented by the surrogate compound) measured in the sample. In samples with low surrogate recoveries, the concern is that if similar target analytes are present in the sample, the measurements will be underestimated/biased low. For samples with high surrogate recoveries, on the other hand, we can be confident that similar target compounds should be detected if present, but the amount may be overestimated or biased high. If surrogate recoveries are out-of-range in all samples, and particularly if they are also out-of-range in blank samples, this is likely indicative of a broader problem with the analytical method.[16][29] Table 4 outlines our approach for analyzing surrogate recovery data.
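The direction-dependent reading of surrogate recoveries can be written down as a small rule set. This Python sketch applies only when isotope dilution with automatic recovery correction is not used. The 'suspected' label is our own shorthand for the text's "out-of-range in all samples", and 'likely' stands for "particularly if also out-of-range in blanks". Requiring every blank to be out of range for 'likely' is an assumption.

```python
def surrogate_flag(recovery, low=50.0, high=150.0):
    """Interpret one sample's surrogate recovery (%) for its associated analytes."""
    if recovery < low:
        return "low: associated analytes, if present, may be biased low"
    if recovery > high:
        return "high: detects are credible but may be biased high"
    return "ok"


def review_surrogates(samples, blanks, low=50.0, high=150.0):
    """samples, blanks: sample ID -> % recovery of one surrogate.

    Returns per-sample flags and a method-level signal: 'suspected' when every
    field sample is out of range, 'likely' when the blanks are out of range too.
    """
    flags = {sid: surrogate_flag(r, low, high) for sid, r in samples.items()}
    all_samples_out = bool(flags) and all(f != "ok" for f in flags.values())
    blanks_out = bool(blanks) and all(
        surrogate_flag(r, low, high) != "ok" for r in blanks.values())
    if all_samples_out and blanks_out:
        method = "likely"
    elif all_samples_out:
        method = "suspected"
    else:
        method = "none"
    return flags, method
```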

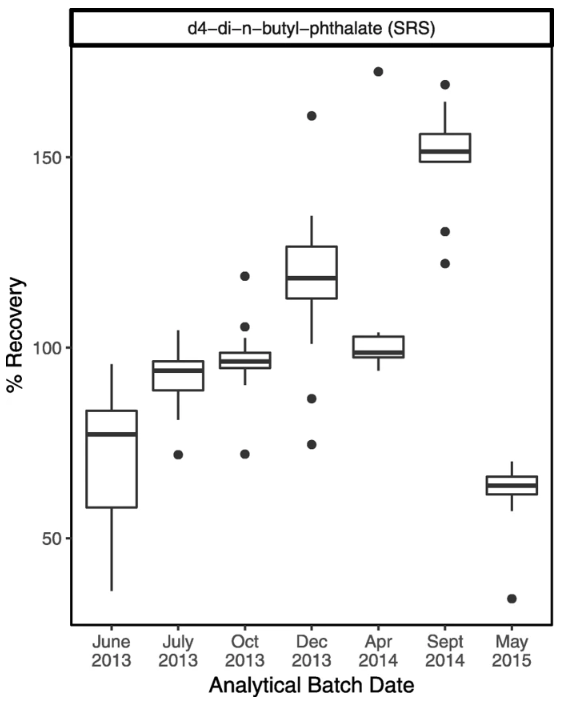

Figure 3 shows an example where our examination of surrogate recoveries on a batch-specific basis indicated trends in the recoveries over time, even though most remained within the generally acceptable range (50–150%). This plot led to a discussion with the lab analyst, who suggested that stock solutions for surrogate compounds may have concentrated over time as solvent evaporated, until a new stock solution was prepared for the last batch. On the advice of the lab analyst, we looked at trends in the recoveries of “spike check” samples (solvent that is spiked with target analytes but not extracted or concentrated). Spike check recoveries indicated good reproducibility, giving us confidence that the drift in surrogate recoveries did not reflect changes in instrument calibration over time.

Is there evidence of contamination or analytical bias?

Blanks

Once we have determined that we can accurately measure the target analytes in our sampling matrix, the next step is to ensure that we are confident about whether those target analytes came from the study site or participant, or from somewhere else. Table 5 outlines our approach to reviewing data from blank samples. When it is not straightforward to collect field blanks (e.g., for blood samples), any assessment of contamination introduced from sampling (e.g., pre-screening of collection materials) should be thoroughly described and limitations acknowledged.

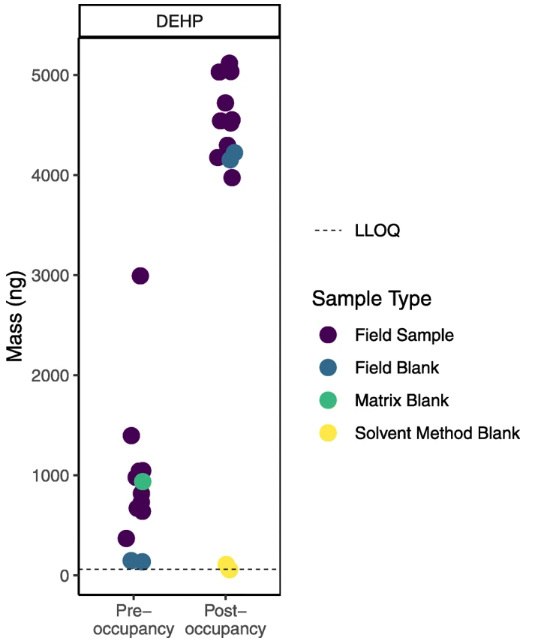

Figure 4 illustrates an example from our study comparing levels of chemicals in air in college dorm rooms before and after students moved in (data not yet published) where field blanks proved particularly crucial. Our first look at the sample data suggested that bis(2-ethylhexyl) phthalate (DEHP), a chemical commonly used in plastics, was present at notably higher levels after students moved in. However, upon further review, we found that DEHP levels in the field blanks were also higher at the post-occupancy time point than at the pre-occupancy time point and, at that later time point, were in the range of the sample data. At the same time, levels of DEHP in the laboratory blanks (matrix and solvent method) were not elevated. A conversation with the lab revealed that different plastic bags may have been used to transport samples during the later round of sampling (i.e., the post-occupancy sampling). These bags may have contained higher levels of DEHP.
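The pattern in this example can be tabulated per sampling round: field blanks rise into the sample range in one round while lab blanks stay low. The Python sketch below assumes non-detects have already been handled. It uses "median blank at or above the lowest sample" as an illustrative criterion, not a published one, and the round names are hypothetical.

```python
from statistics import median


def blank_check_by_round(data):
    """data maps a sampling round to lists of concentrations (same units) for
    "sample", "field_blank", and "lab_blank". Non-detects are assumed to have
    been handled (e.g., excluded or substituted) beforehand."""
    report = {}
    for rnd, d in data.items():
        lowest_sample = min(d["sample"])
        fb, lb = median(d["field_blank"]), median(d["lab_blank"])
        report[rnd] = {
            "sample_median": median(d["sample"]),
            "field_blank_median": fb,
            "lab_blank_median": lb,
            # Blank levels reaching into the sample range mean that some
            # sample values cannot be distinguished from contamination.
            "field_blank_in_sample_range": fb >= lowest_sample,
            "lab_blank_in_sample_range": lb >= lowest_sample,
        }
    return report
```

Suppose the field blank flag is true for a round but the lab blank flag is false. That points toward contamination during sampling, storage, or transport rather than in the lab. At that stage, it pays to ask the lab about any changes in handling.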

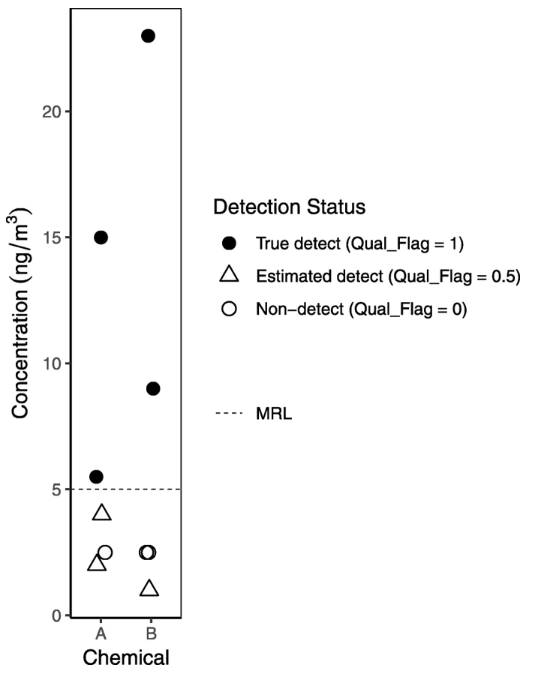

Typically, we use blanks to qualify values rather than remove measurements from our data. Specifically, we use detected values in field blanks and sometimes other blanks (see Table 6) as a basis to qualify data by raising the method reporting limit (MRL), flagging low values as estimated, until we feel confident in the levels we’re reporting. Values reported by the lab but below the MRL are considered estimated (see Fig. 5 for an example of graphical presentation distinguishing estimated detects below the MRL from true detects above the MRL). In the example of the potentially DEHP-contaminated plastic bags used to transport samples, however, we decided not to report DEHP levels for the post-occupancy samples, given the evidence that contamination might have significantly biased the results in that batch. Unexpected findings, such as a chemical or chemicals detected at much higher levels in a lab blank (matrix, solvent method, or other) than in the field blanks, warrant further investigation. In this case, we might suspect that the lab blank was contaminated by another sample; examining the sample run order (which must be requested from the lab; see example correspondence in Additional file 2) could shed light on whether a very high sample was run directly before the lab blank.
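Once a rule is chosen, the bookkeeping for blank-based qualification is straightforward. The authors' rules are in Table 6. The stand-in below is a hypothetical simplification: it sets the MRL to the larger of the lab's reporting limit and the highest field-blank detect. Other choices are common, for example a blank mean plus a multiple of its standard deviation. A helper for the run-order check is also included.

```python
def raise_mrl(lab_reporting_limit, field_blank_detects):
    """Hypothetical rule: the MRL becomes the larger of the lab's reporting
    limit and the highest concentration detected in any field blank."""
    return max([lab_reporting_limit, *field_blank_detects])


def qualify(value, mrl):
    """Qualifier for one result; `value` is None for a non-detect."""
    if value is None:
        return "not detected"
    return "estimated" if value < mrl else "detect"


def run_before(run_order, blank_id):
    """The injection immediately preceding a blank in the lab's run order,
    to check whether a very high sample might have carried over."""
    i = run_order.index(blank_id)
    return run_order[i - 1] if i > 0 else None
```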

After we establish the MRL for chemicals that are detected in blanks, we are confident that levels in samples above that value are true detects and that they are correctly ranked, but there may still be concern about consistent bias in the actual numeric values being reported, both from contamination in the field or lab or from bias in the analytical method. Consistent bias in levels would not be a major concern for ranking individual exposure or comparing groups within a study but is misleading when comparing to levels reported in other studies. For each chemical, we check for evidence of consistent bias across many blanks and correct concentrations reported in summary tables in our papers to reduce this bias (see Table 7).
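Table 7 gives the authors' approach to bias correction. The sketch below is a hedged illustration only. It treats bias as consistent when a chemical is detected in at least half of the blanks, which is an assumed threshold. It then subtracts the median detected blank level from the summary statistics rather than from individual results.

```python
from statistics import median


def blank_corrected_summary(sample_values, blank_values, min_detect_frac=0.5):
    """Summary statistics with a consistent blank offset subtracted.

    Hypothetical rule: bias counts as consistent when the chemical is detected
    (not None) in at least `min_detect_frac` of blanks; the median detected blank
    level is then subtracted from each summary statistic, floored at zero.
    """
    detects = [b for b in blank_values if b is not None]
    consistent = bool(detects) and len(detects) / len(blank_values) >= min_detect_frac
    offset = median(detects) if consistent else 0.0
    return {
        "offset": offset,
        "median": max(0.0, median(sample_values) - offset),
        "max": max(0.0, max(sample_values) - offset),
    }
```

Subtracting a constant shifts every percentile by the same amount, until the zero floor applies. Within-study rankings and group comparisons are therefore unaffected. Only the absolute levels change, and these are what matter for comparisons with other studies.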

How precise are these measurements?

Duplicates

Duplicate samples indicate how much of the variation in our data may be explained by measurement imprecision. If duplicate samples have high reproducibility, meaning that the relative percent difference (RPD) between measurements in duplicate samples is less than 30%, this adds to confidence in the field sample results. In fact, excellent precision in duplicate samples can influence a decision about how to treat data for a chemical that has sporadic blank contamination or variable spiked sample or CRM recoveries, because it can indicate that the results are reproducible. On the other hand, consistently poor precision for dust wipe samples, for example, has informed our decision to rely more heavily on measured air concentrations as an indicator of home exposure.[30] Table 8 outlines our approach for analyzing duplicate data.
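The relative percent difference is the absolute difference between the two measurements divided by their mean, times 100. Here is a minimal Python sketch that computes it per pair and summarizes a set of pairs against the 30% benchmark from the text. Restricting the calculation to pairs where both members are detected is our assumption.

```python
from statistics import median


def rpd(a, b):
    """Relative percent difference between two duplicate measurements:
    |a - b| divided by their mean, times 100. Concentrations are assumed
    non-negative, so the mean is positive whenever a != b."""
    if a == b:
        return 0.0  # also covers a pair of zeros
    return abs(a - b) / ((a + b) / 2) * 100


def summarize_duplicates(pairs, cutoff=30.0):
    """pairs: (primary, duplicate) concentrations, both detected. Returns the
    median RPD and the share of pairs below `cutoff` (30% in the text)."""
    values = [rpd(a, b) for a, b in pairs]
    return {
        "median_rpd": median(values),
        "share_below_cutoff": sum(v < cutoff for v in values) / len(values),
    }
```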

Publication: How do we tell others about our data?

While it is imperative that a researcher has a thorough understanding of the quality of their own data, it is equally important that they clearly communicate the results of the QA/QC review. When we considered the articles included in our recent review of epidemiologic studies of environmental chemicals and breast cancer[11], we identified gaps in reporting and/or interpretation of QA/QC data, an issue also noted by LaKind et al.[12] To encourage more regular and consistent reporting of QA/QC results, in supplementary material we provide examples of the tables and plots (Additional file 2: Tables S3, S4, and Figure S1) we have used to communicate QA/QC findings in our publications. Consistently publishing QA/QC findings allows readers to think for themselves about the quality of the data and can inform risk of bias assessments in a systematic review. QA/QC data also provides a basis for determining whether further analyses of the published data (e.g., comparisons to or pooling with other datasets) are appropriate.

Conclusion

Several real examples from our data demonstrate that close examination of lab and field quality control data is worth the effort. By providing a detailed example of how we have processed and drawn conclusions about our own environmental exposure data (Additional file 4), we aim to make our guidelines explicit and straightforward so that others may adopt and build on them.

Abbreviations

CAS: Chemical Abstracts Service

CDC: Centers for Disease Control and Prevention

CRM: Certified reference material

CRQL: Contract required quantitation limit

DEHP: Bis(2-ethylhexyl) phthalate

DL: Detection limit

EPA: United States Environmental Protection Agency

HBCD: 1,2,5,6,9,10-Hexabromocyclododecane

LCS: Laboratory control sample

LOD: Limit of detection

LOQ: Limit of quantitation

LQL: Laboratory quantitation level

MDL: Method detection limit

MRL: Method reporting limit

NIST: National Institute of Standards and Technology

PQL: Practical quantitation limit

PUF: Polyurethane foam

QA/QC: Quality assurance/quality control

QC: Quality control

RL: Reporting limit

RPD: Relative percent difference

RSD: Relative standard deviation

SRM: Standard reference material

Supplementary information

- Additional file 1: Table S1. Summary of our application of the Navigation Guide Criteria for Low Risk of Bias Assessment for the question "Were exposure assessment methods robust?"

- Additional file 2: Section I: Example lab correspondence. Section II: Table S2. Summary statistics table shell. Table S3. Quality assurance and quality control (QA/QC) summary table shell. Table S4. Findings and actions from review of quality assurance and quality control (QA/QC) data table shell. Section III: Figure S1. Distribution of surrogate recoveries by study visit

- Additional file 3: Example of report formatting request to send to the lab

- Additional file 4: Example QA/QC report

Acknowledgements

We thank David Camann, Alice Yau, Marcia Nishioka, Martha McCauley, and Adrian Covaci for helping us make thoughtful decisions about how to interpret our data. We also thank Vincent Bessonneau for helpful discussion and for his valuable comments on a draft of this manuscript. Silent Spring Institute is a scientific research organization dedicated to studying environmental factors in women’s health.

Contributions

RAR, RED, and LP developed the detailed data processing approach provided as an example in this manuscript. JU drafted the manuscript. RAR, RED, and LP critically reviewed and revised the manuscript. All authors read and approved the final manuscript.

Funding

This work was funded by the U.S. Department of Housing and Urban Development (Grant No. MAHHU0005–12) and by charitable gifts to Silent Spring Institute.

Competing interests

The authors declare that they have no competing interests.

References

- [1] Wild, C.P. (2005). "Complementing the genome with an 'exposome': the outstanding challenge of environmental exposure measurement in molecular epidemiology". Cancer Epidemiology, Biomarkers & Prevention 14 (8): 1847–50. doi:10.1158/1055-9965.EPI-05-0456. PMID 16103423.

- [2] Carlin, D.J.; Rider, C.V.; Woychik, R. et al. (2013). "Unraveling the health effects of environmental mixtures: An NIEHS priority". Environmental Health Perspectives 121 (1): A6–8. doi:10.1289/ehp.1206182. PMCID PMC3553446. PMID 23409283. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3553446.

- [3] U.S. Environmental Protection Agency (March 2019). "Quality Assurance Handbook and Guidance Documents for Citizen Science Projects". U.S. Environmental Protection Agency. https://www.epa.gov/citizen-science/quality-assurance-handbook-and-guidance-documents-citizen-science-projects. Retrieved 28 May 2019.

- [4] Centers for Disease Control and Prevention (March 2018). "Improving the Collection and Management of Human Samples Used for Measuring Environmental Chemicals and Nutrition Indicators" (PDF). Centers for Disease Control and Prevention. https://www.cdc.gov/biomonitoring/pdf/Human_Sample_Collection-508.pdf. Retrieved 28 May 2019.

- [5] LaKind, J.S.; Sobus, J.R.; Goodman, M. et al. (2014). "A proposal for assessing study quality: Biomonitoring, Environmental Epidemiology, and Short-lived Chemicals (BEES-C) instrument". Environment International 73: 195–207. doi:10.1016/j.envint.2014.07.011. PMCID PMC4310547. PMID 25137624. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4310547.

- [6] LaKind, J.S.; O'Mahony, C.; Armstrong, T. et al. (2019). "ExpoQual: Evaluating measured and modeled human exposure data". Environmental Research 171: 302–312. doi:10.1016/j.envres.2019.01.039. PMID 30708234.

- [7] Campbell, J.L.; Rustad, L.E.; Porter, J.H. et al. (2013). "Quantity is Nothing without Quality: Automated QA/QC for Streaming Environmental Sensor Data". BioScience 63 (7): 574–585. doi:10.1525/bio.2013.63.7.10.

- [8] Michener, W.K. (2015). "Ten Simple Rules for Creating a Good Data Management Plan". PLoS Computational Biology 11 (10): e1004525. doi:10.1371/journal.pcbi.1004525. PMCID PMC4619636. PMID 26492633. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4619636.

- [9] Calafat, A.M.; Longnecker, M.P.; Koch, H.M. et al. (2015). "Optimal Exposure Biomarkers for Nonpersistent Chemicals in Environmental Epidemiology". Environmental Health Perspectives 123 (7): A166–8. doi:10.1289/ehp.1510041. PMCID PMC4492274. PMID 26132373. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4492274.

- [11] Rodgers, K.M.; Udesky, J.O.; Rudel, R.A. et al. (2018). "Environmental chemicals and breast cancer: An updated review of epidemiological literature informed by biological mechanisms". Environmental Research 160: 152–82. doi:10.1016/j.envres.2017.08.045. PMID 28987728.

- [12] LaKind, J.S.; Goodman, M.; Barr, D.B. et al. (2015). "Lessons learned from the application of BEES-C: Systematic assessment of study quality of epidemiologic research on BPA, neurodevelopment, and respiratory health". Environment International 80: 41–71. doi:10.1016/j.envint.2015.03.015. PMID 25884849.

- [13] Rudel, R.A.; Dodson, R.E.; Perovich, L.J. et al. (2010). "Semivolatile endocrine-disrupting compounds in paired indoor and outdoor air in two northern California communities". Environmental Science & Technology 44 (17): 6583–90. doi:10.1021/es100159c. PMCID PMC2930400. PMID 20681565. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2930400.

- [14] Wentworth, N. (28 September 2001). "Review of Guidance on Data Quality Indicators (EPA QA/G-5i)" (PDF). U.S. Environmental Protection Agency. http://colowqforum.org/pdfs/whole-effluent-toxicity/documents/g5i-prd.pdf. Retrieved 28 May 2019.

- [15] U.S. Environmental Protection Agency (December 1994). "Laboratory Data Validation Functional Guidelines For Evaluating Organics Analyses". U.S. Environmental Protection Agency. https://nepis.epa.gov/Exe/ZyPURL.cgi?Dockey=20012TGE.TXT. Retrieved 28 May 2019.

- [16] U.S. Army Corps of Engineers (30 June 2005). "Guidance for Evaluating Performance-Based Chemical Data" (PDF). U.S. Army Corps of Engineers. https://www.publications.usace.army.mil/Portals/76/Publications/EngineerManuals/EM_200-1-10.pdf?ver=2013-09-04-070852-230. Retrieved 28 May 2019.

- [17] Geboy, N.J.; Engle, M.A. (7 September 2011). "Quality Assurance and Quality Control of Geochemical Data: A Primer for the Research Scientist". Open-File Report 2011–1187. U.S. Geological Survey. https://pubs.usgs.gov/of/2011/1187/. Retrieved 28 May 2019.

- [18] NIST (19 June 2014). "NIST Standard Reference Database 1A v17". https://www.nist.gov/srd/nist-standard-reference-database-1a-v17. Retrieved 28 May 2019.

- [19] Ulrich, E.M.; Sobus, J.R.; Grulke, C.M. et al. (2019). "EPA's non-targeted analysis collaborative trial (ENTACT): Genesis, design, and initial findings". Analytical and Bioanalytical Chemistry 411 (4): 853–66. doi:10.1007/s00216-018-1435-6. PMID 30519961.

- [20] Lötsch, J. (2017). "Data visualizations to detect systematic errors in laboratory assay results". Pharmacology Research & Perspectives 5 (6): e00369. doi:10.1002/prp2.369. PMCID PMC5723702. PMID 29226627. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5723702.

- [21] Dodson, R.E.; Camann, D.E.; Morello-Frosch, R. et al. (2015). "Semivolatile organic compounds in homes: Strategies for efficient and systematic exposure measurement based on empirical and theoretical factors". Environmental Science & Technology 49 (1): 113–22. doi:10.1021/es502988r. PMCID PMC4288060. PMID 25488487. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4288060.

- [22] U.S. Environmental Protection Agency (December 2015). "Method 8000D: Determinative Chromatographic Separations" (PDF). U.S. Environmental Protection Agency. https://www.epa.gov/sites/production/files/2015-12/documents/8000d.pdf. Retrieved 16 August 2019.

- [23] Helsel, D.R. (2012). Statistics for Censored Environmental Data Using Minitab and R (2nd ed.). Wiley. ISBN 9780470479889.

- [24] Helsel, D. (2010). "Much ado about next to nothing: Incorporating nondetects in science". Annals of Occupational Hygiene 54 (3): 257–62. doi:10.1093/annhyg/mep092. PMID 20032004.

- [25] Newton, E.; Rudel, R. (2007). "Estimating correlation with multiply censored data arising from the adjustment of singly censored data". Environmental Science & Technology 41 (1): 221–8. doi:10.1021/es0608444. PMCID PMC2565512. PMID 17265951. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2565512.

- [26] Shoari, N.; Dubé, J.S. (2018). "Toward improved analysis of concentration data: Embracing nondetects". Environmental Toxicology and Chemistry 37 (3): 643–656. doi:10.1002/etc.4046. PMID 29168890.

- [27] NIST. "SRM Order Request System". https://www-s.nist.gov/srmors/. Retrieved 28 May 2019.

- [28] Silent Spring Institute. "SilentSpringInstitute/QAQC-Toolkit". GitHub. https://github.com/SilentSpringInstitute/QAQC-Toolkit. Retrieved 28 May 2019.

- [29] Dawson, B.J.M.; Bennett V, G.L.; Belitz, K. (2008). "Ground-Water Quality Data in the Southern Sacramento Valley, California, 2005—Results from the California GAMA Program" (PDF). U.S. Geological Survey. https://pubs.usgs.gov/ds/285/ds285.pdf. Retrieved 28 May 2019.

- [30] Dodson, R.E.; Udesky, J.O.; Colton, M.D. et al. (2017). "Chemical exposures in recently renovated low-income housing: Influence of building materials and occupant activities". Environment International 109: 114–27. doi:10.1016/j.envint.2017.07.007. PMID 28916131.

Notes

This presentation is faithful to the original, with only a few minor changes to presentation, spelling, and grammar. We also added PMCID and DOI when they were missing from the original reference.