Featured article of the week: December 19–25:

Featured article of the week: December 12–18:

"VennDiagramWeb: A web application for the generation of highly customizable Venn and Euler diagrams"

Visualization of data generated by high-throughput, high-dimensionality experiments is rapidly becoming a rate-limiting step in computational biology. There is an ongoing need to quickly develop high-quality visualizations that can be easily customized or incorporated into automated pipelines. This often requires an interface for manual plot modification, rapid cycles of tweaking visualization parameters, and the generation of graphics code. To facilitate this process for the generation of highly customizable, high-resolution Venn and Euler diagrams, we introduce VennDiagramWeb: a web application for the widely used VennDiagram R package. (Full article...)

Featured article of the week: December 5–11:

"Molmil: A molecular viewer for the PDB and beyond"

Molecular viewers are a vital tool for our understanding of protein structures and functions. The shift from regular desktop platforms such as Windows, Mac OSX and Linux to mobile platforms such as iOS and Android in the last half-decade, however, prevents traditional online molecular viewers such as PDBj’s previously developed jV and the popular Jmol from running on these new platforms as these platforms do not support Java Applets. For mobile platforms a native application (i.e., an application specifically designed and optimized for each of these platforms) can be created and distributed via their respective application stores. However, with new platforms on the horizon, or already available, in addition to the already established desktop platforms, it would be a tedious and inefficient job to make a molecular viewer available on all platforms, current and future. (Full article...)

Featured article of the week: November 28–December 4:

"Smart electronic laboratory notebooks for the NIST research environment"

Laboratory notebooks have been a staple of scientific research for centuries for organizing and documenting ideas and experiments. Modern laboratories are increasingly reliant on electronic data collection and analysis, so it seems inevitable that the digital revolution should come to the ordinary laboratory notebook. The most important aspect of this transition is to make the shift as comfortable and intuitive as possible, so that the creative process that is the hallmark of scientific investigation and engineering achievement is maintained, and ideally enhanced. The smart electronic laboratory notebooks described in this paper represent a paradigm shift from the old pen and paper style notebooks and provide a host of powerful operational and documentation capabilities in an intuitive format that is available anywhere at any time. (Full article...)

Featured article of the week: November 21–27:

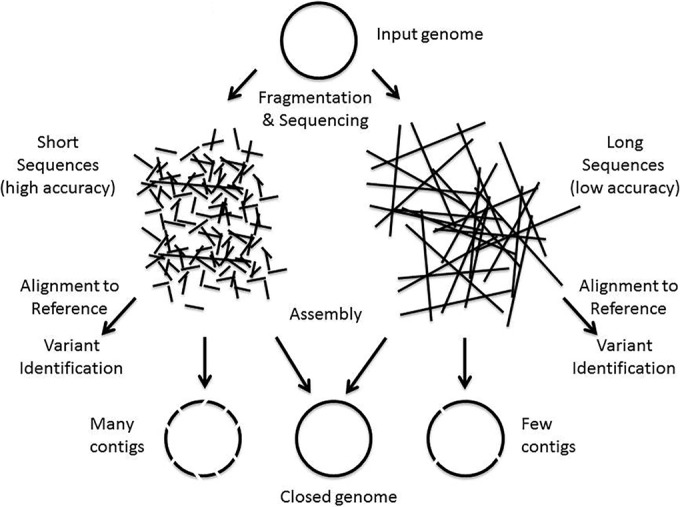

"Making the leap from research laboratory to clinic: Challenges and opportunities for next-generation sequencing in infectious disease diagnostics"

Next-generation DNA sequencing (NGS) has progressed enormously over the past decade, transforming genomic analysis and opening up many new opportunities for applications in clinical microbiology laboratories. The impact of NGS on microbiology has been revolutionary, with new microbial genomic sequences being generated daily, leading to the development of large databases of genomes and gene sequences. The ability to analyze microbial communities without culturing organisms has created the ever-growing field of metagenomics and microbiome analysis and has generated significant new insights into the relation between host and microbe. The medical literature contains many examples of how this new technology can be used for infectious disease diagnostics and pathogen analysis. The implementation of NGS in medical practice has been a slow process due to various challenges such as clinical trials, lack of applicable regulatory guidelines, and the adaptation of the technology to the clinical environment. In April 2015, the American Academy of Microbiology (AAM) convened a colloquium to begin to define these issues, and in this document, we present some of the concepts that were generated from these discussions. (Full article...)

Featured article of the week: November 14–20:

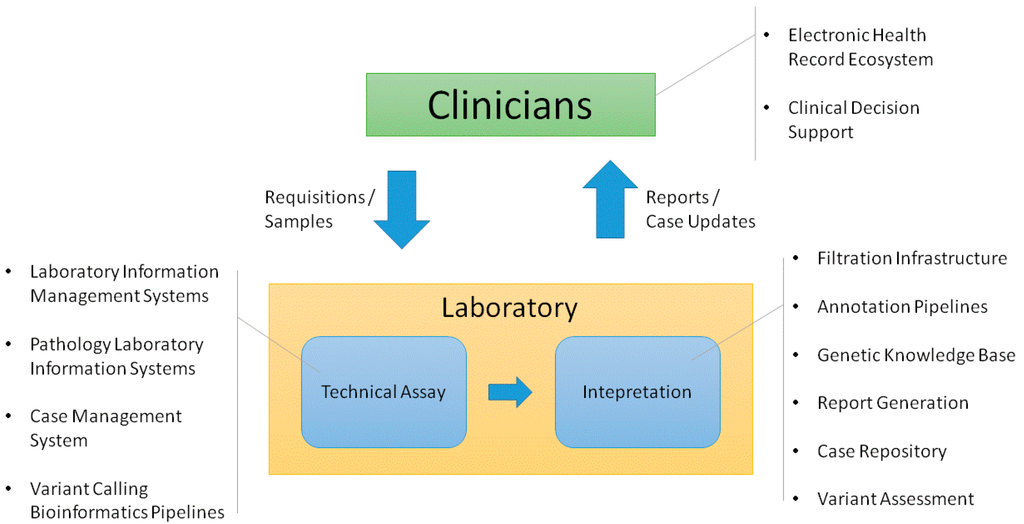

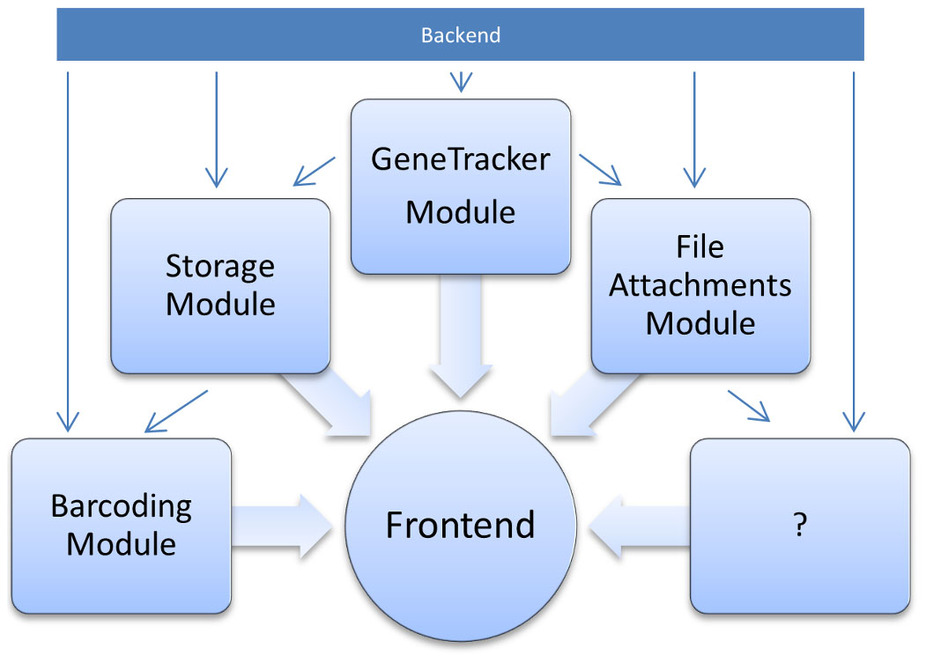

"Information technology support for clinical genetic testing within an academic medical center"

Academic medical centers require many interconnected systems to fully support genetic testing processes. We provide an overview of the end-to-end support that has been established surrounding a genetic testing laboratory within our environment, including both laboratory and clinician-facing infrastructure. We explain key functions that we have found useful in the supporting systems. We also consider ways that this infrastructure could be enhanced to enable deeper assessment of genetic test results in both the laboratory and clinic. (Full article...)

Featured article of the week: November 7–13:

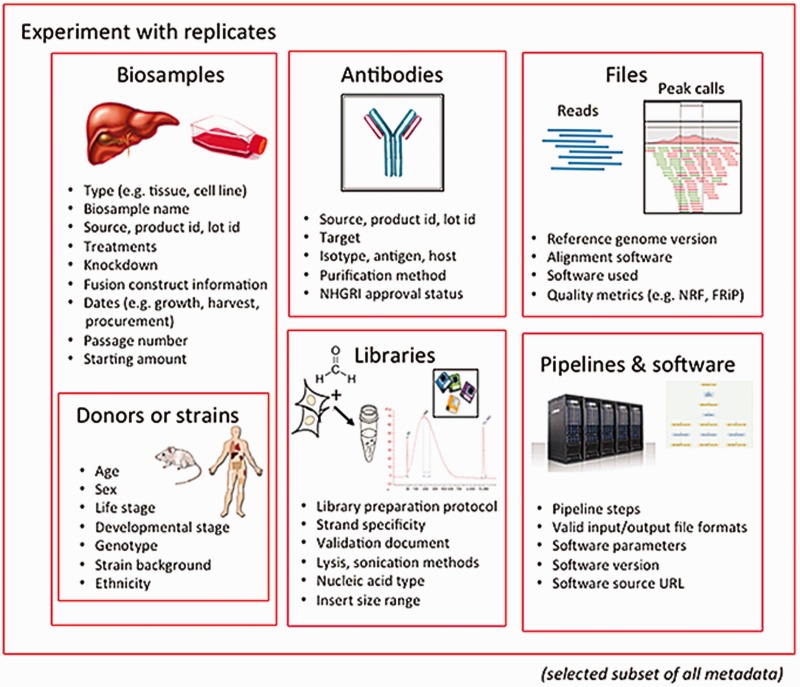

"Principles of metadata organization at the ENCODE data coordination center"

The Encyclopedia of DNA Elements (ENCODE) Data Coordinating Center (DCC) is responsible for organizing, describing and providing access to the diverse data generated by the ENCODE project. The description of these data, known as metadata, includes the biological sample used as input, the protocols and assays performed on these samples, the data files generated from the results and the computational methods used to analyze the data. Here, we outline the principles and philosophy used to define the ENCODE metadata in order to create a metadata standard that can be applied to diverse assays and multiple genomic projects. In addition, we present how the data are validated and used by the ENCODE DCC in creating the ENCODE Portal. (Full article...)

Featured article of the week: October 31–November 6:

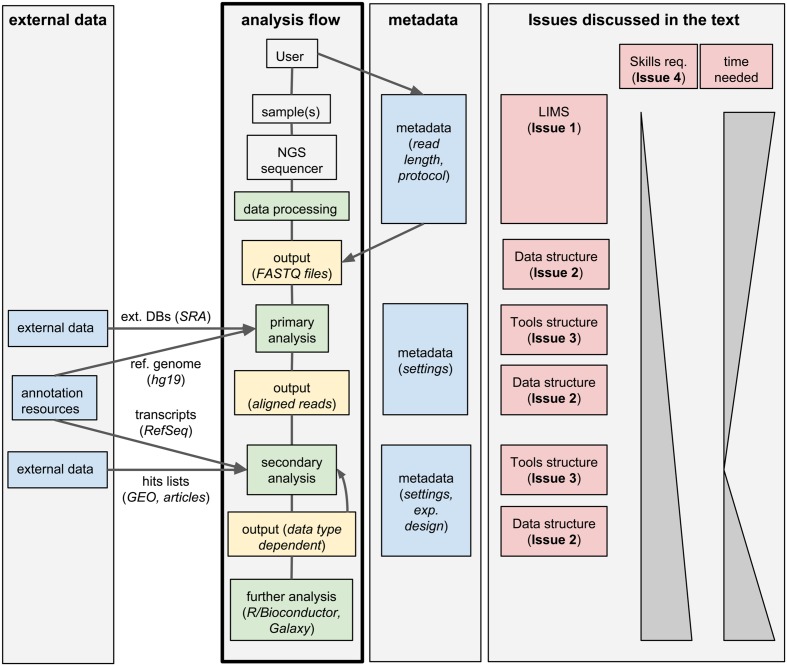

"Integrated systems for NGS data management and analysis: Open issues and available solutions"

Next-generation sequencing (NGS) technologies have deeply changed our understanding of cellular processes by delivering an astonishing amount of data at affordable prices; nowadays, many biology laboratories have already accumulated a large number of sequenced samples. However, managing and analyzing these data poses new challenges, which may easily be underestimated by research groups devoid of IT and quantitative skills. In this perspective, we identify five issues that should be carefully addressed by research groups approaching NGS technologies. In particular, the five key issues to be considered concern: (1) adopting a laboratory information management system (LIMS) and safeguarding the resulting raw data structure in downstream analyses; (2) monitoring the flow of the data and standardizing input and output directories and file names, even when multiple analysis protocols are used on the same data; (3) ensuring complete traceability of the analysis performed; (4) enabling non-experienced users to run analyses through a graphical user interface (GUI) acting as a front-end for the pipelines; (5) relying on standard metadata to annotate the datasets, and when possible using controlled vocabularies, ideally derived from biomedical ontologies. (Full article...)

Featured article of the week: October 24–30:

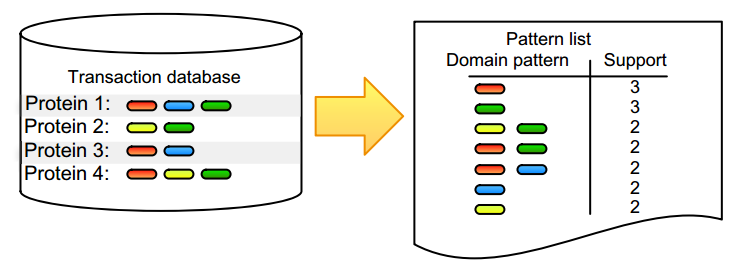

"Practical approaches for mining frequent patterns in molecular datasets"

Pattern detection is an inherent task in the analysis and interpretation of complex and continuously accumulating biological data. Numerous itemset mining algorithms have been developed in the last decade to efficiently detect specific pattern classes in data. Although many of these have proven their value for addressing bioinformatics problems, several factors still keep promising algorithms from gaining popularity in the life science community. Many of these issues stem from the low user-friendliness of these tools and the complexity of their output, which is often large, static, and consequently hard to interpret. Here, we apply three software implementations to common bioinformatics problems and illustrate some of the advantages and disadvantages of each, as well as inherent pitfalls of biological data mining. Frequent itemset mining exists in many different flavors, and users should base their software choice on their research question, programming proficiency, and the added value of extra features. (Full article...)

Featured article of the week: October 17–23:

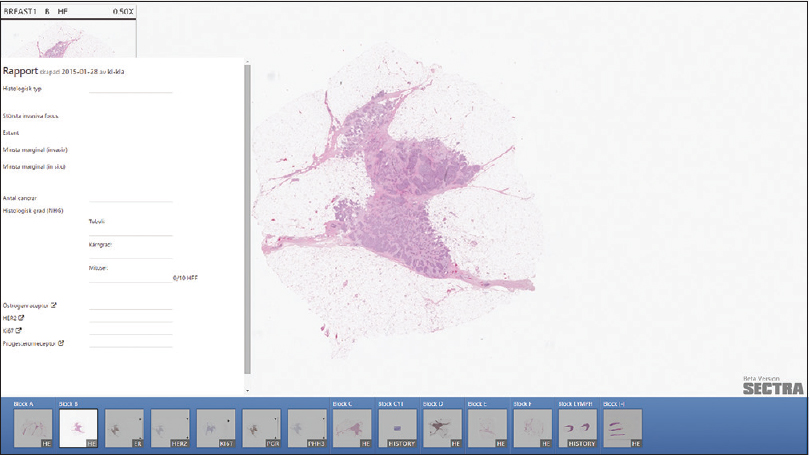

"Improving the creation and reporting of structured findings during digital pathology review"

Today, pathology reporting consists of many separate tasks, carried out by multiple people. Common tasks include dictation during case review, transcription, verification of the transcription, report distribution, and reporting the key findings to follow-up registries. Introduction of digital workstations makes it possible to remove some of these tasks and simplify others. This study describes the work presented at the Nordic Symposium on Digital Pathology 2015, in Linköping, Sweden.

We explored the possibility of having a digital tool that simplifies image review by assisting note-taking, and with minimal extra effort, populates a structured report. Thus, our prototype sees reporting as an activity interleaved with image review rather than a separate final step. We created an interface to collect, sort, and display findings for the most common reporting needs, such as tumor size, grading, and scoring. (Full article...)

Featured article of the week: October 10–16:

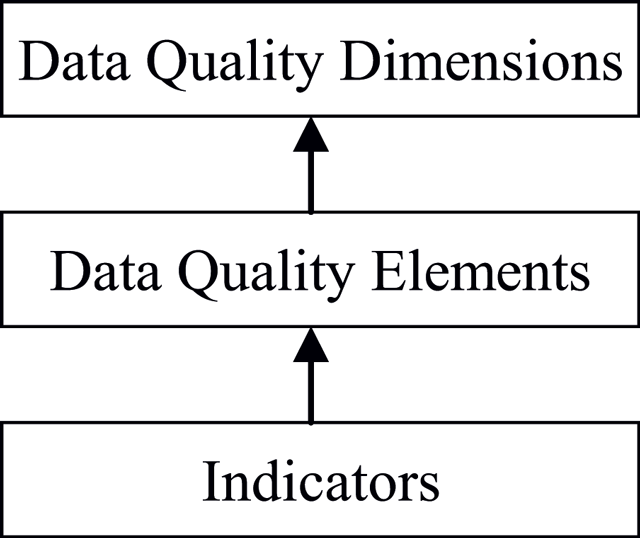

"The challenges of data quality and data quality assessment in the big data era"

High-quality data are the precondition for analyzing and using big data and for guaranteeing the value of the data. Currently, comprehensive analysis and research of quality standards and quality assessment methods for big data are lacking. First, this paper summarizes reviews of data quality research. Second, this paper analyzes the data characteristics of the big data environment, presents quality challenges faced by big data, and formulates a hierarchical data quality framework from the perspective of data users. This framework consists of big data quality dimensions, quality characteristics, and quality indexes. Finally, on the basis of this framework, this paper constructs a dynamic assessment process for data quality. This process has good expansibility and adaptability and can meet the needs of big data quality assessment. The research results enrich the theoretical scope of big data and lay a solid foundation for the future by establishing an assessment model and studying evaluation algorithms. (Full article...)

Featured article of the week: October 3–9:

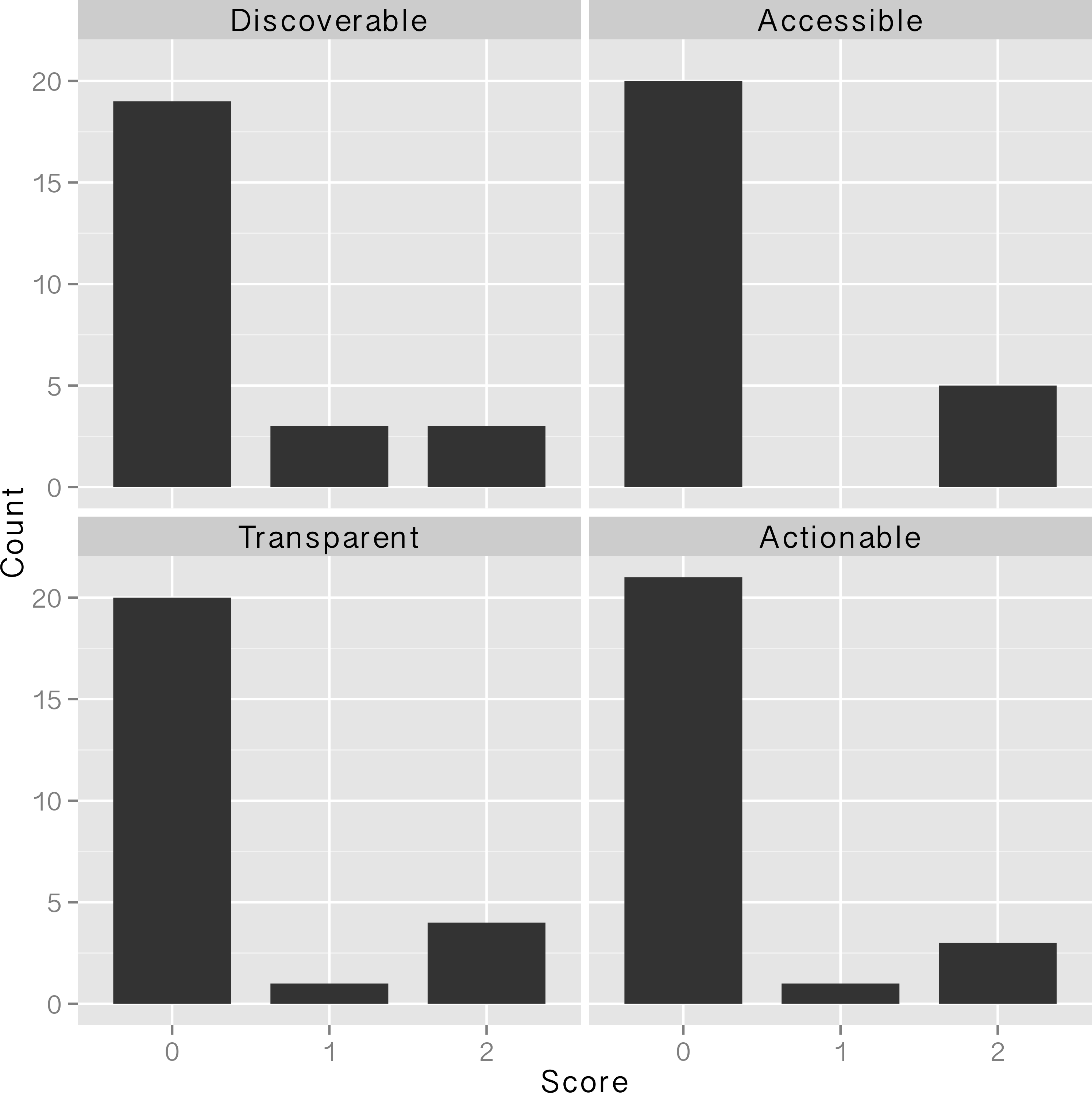

"Water, water, everywhere: Defining and assessing data sharing in academia"

Sharing of research data has begun to gain traction in many areas of the sciences in the past few years because of changing expectations from the scientific community, funding agencies, and academic journals. National Science Foundation (NSF) requirements for a data management plan (DMP) went into effect in 2011, with the intent of facilitating the dissemination and sharing of research results. Many projects that were funded during 2011 and 2012 should now have implemented the elements of the data management plans required for their grant proposals. In this paper we define "data sharing" and present a protocol for assessing whether data have been shared and how effective the sharing was. We then evaluate the data sharing practices of researchers funded by the NSF at Oregon State University in two ways: by attempting to discover project-level research data using the associated DMP as a starting point, and by examining data sharing associated with journal articles that acknowledge NSF support. Sharing at both the project level and the journal article level was not carried out in the majority of cases, and when sharing was accomplished, the shared data were often of questionable usability due to access, documentation, and formatting issues. We close the article by offering recommendations for how data producers, journal publishers, data repositories, and funding agencies can facilitate the process of sharing data in a meaningful way. (Full article...)

Featured article of the week: September 26–October 2:

"Principles and application of LIMS in mouse clinics"

Large-scale systemic mouse phenotyping, as performed by mouse clinics for more than a decade, requires thousands of mice from a multitude of different mutant lines to be bred, individually tracked and subjected to phenotyping procedures according to a standardised schedule. All these efforts are typically organised in overlapping projects, running in parallel. In terms of logistics, data capture, data analysis, result visualisation and reporting, new challenges have emerged from such projects. These challenges could hardly be met with traditional methods such as pen and paper colony management, spreadsheet-based data management and manual data analysis. Hence, different laboratory information management systems (LIMS) have been developed in mouse clinics to facilitate or even enable mouse and data management in the described order of magnitude. This review shows that general principles of LIMS can be empirically deduced from LIMS used by different mouse clinics, although these have evolved differently. Supported by LIMS descriptions and lessons learned from seven mouse clinics, this review also shows that the unique LIMS environment in a particular facility strongly influences strategic LIMS decisions and LIMS development. As a major conclusion, this review states that there is no universal LIMS for the mouse research domain that fits all requirements. Still, empirically deduced general LIMS principles can serve as a master decision support template, which is provided as a hands-on tool for mouse research facilities looking for a LIMS. (Full article...)

Featured article of the week: September 19–25:

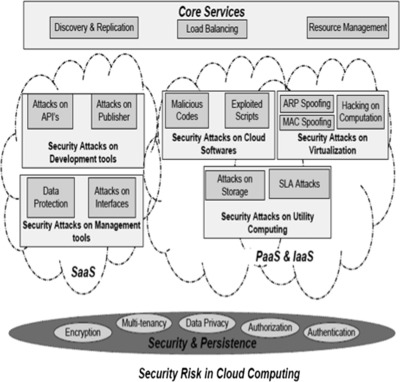

"Multilevel classification of security concerns in cloud computing"

Threats jeopardize some basic security requirements in a cloud. These threats generally comprise privacy breaches, data leakage and unauthorized data access at different cloud layers. This paper presents a novel multilevel classification model of different security attacks across different cloud services at each layer. It also identifies attack types and risk levels associated with different cloud services at these layers. The risks are ranked as low, medium and high. The intensity of these risk levels depends upon the position of the cloud layers. The attacks get more severe for lower layers where infrastructure and platform are involved. The intensity of these risk levels is also associated with security requirements of data encryption, multi-tenancy, data privacy, authentication and authorization for different cloud services. The multilevel classification model leads to the provision of a dynamic security contract for each cloud layer that dynamically decides about security requirements for the cloud consumer and provider. (Full article...)

Featured article of the week: September 12–18:

"Assessment of and response to data needs of clinical and translational science researchers and beyond"

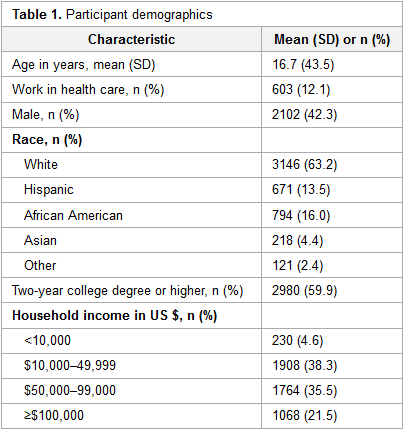

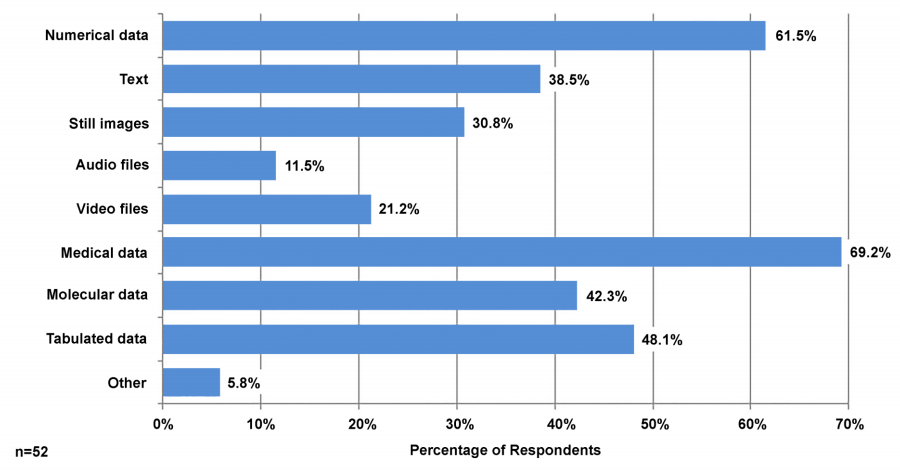

As universities and libraries grapple with data management and “big data,” the need for data management solutions across disciplines is particularly relevant in clinical and translational science research, which is designed to traverse disciplinary and institutional boundaries. At the University of Florida Health Science Center Library, a team of librarians undertook an assessment of the research data management needs of clinical and translational science (CTS) researchers, including an online assessment and follow-up one-on-one interviews.

The 20-question online assessment was distributed to all investigators affiliated with UF’s Clinical and Translational Science Institute (CTSI) and 59 investigators responded. Follow-up in-depth interviews were conducted with nine faculty and staff members.

Results indicate that UF’s CTS researchers have diverse data management needs that are often specific to their discipline or current research project and span the data lifecycle. A common theme in responses was the need for consistent data management training, particularly for graduate students; this led to localized training within the Health Science Center and CTSI, as well as campus-wide training. (Full article...)

Featured article of the week: September 5–11:

"SUSHI: An exquisite recipe for fully documented, reproducible and reusable NGS data analysis"

Next generation sequencing (NGS) produces massive datasets consisting of billions of reads and up to thousands of samples. Subsequent bioinformatic analysis is typically done with the help of open-source tools, where each application performs a single step towards the final result. This situation leaves the bioinformaticians with the tasks of combining the tools, managing the data files and meta-information, documenting the analysis, and ensuring reproducibility.

We present SUSHI, an agile data analysis framework that relieves bioinformaticians from the administrative challenges of their data analysis. SUSHI lets users build reproducible data analysis workflows from individual applications and manages the input data, the parameters, meta-information with user-driven semantics, and the job scripts. As distinguishing features, SUSHI provides an expert command line interface as well as a convenient web interface to run bioinformatics tools. SUSHI datasets are self-contained and self-documented on the file system. This makes them fully reproducible and ready to be shared. With the associated meta-information being formatted as plain text tables, the datasets can be readily further analyzed and interpreted outside SUSHI. (Full article...)

Featured article of the week: August 29–September 4:

"Open source data logger for low-cost environmental monitoring"

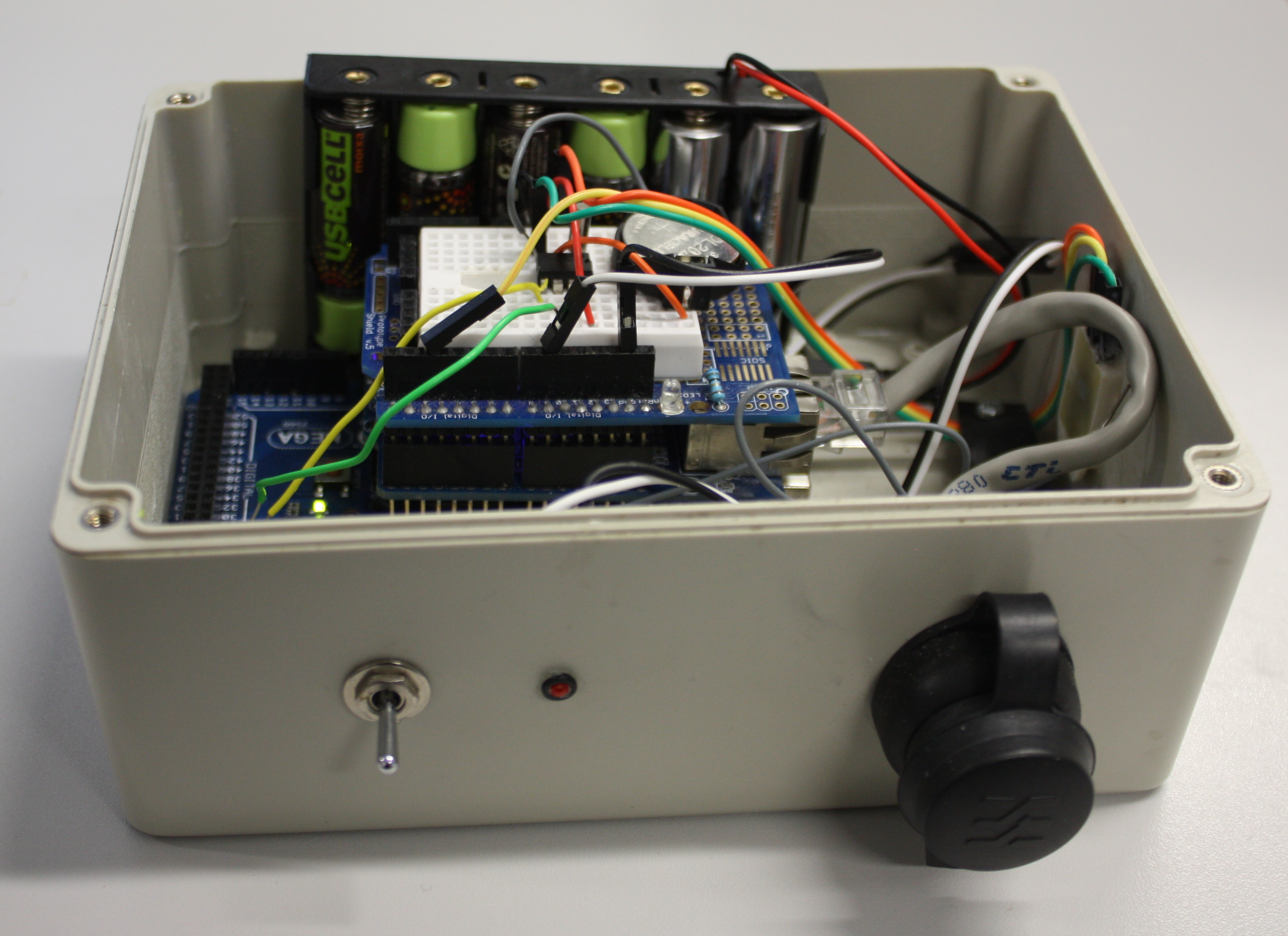

The increasing transformation of biodiversity into a data-intensive science has seen numerous independent systems linked and aggregated into the current landscape of biodiversity informatics. This paper outlines how we can move forward with this program, incorporating real-time environmental monitoring into our methodology using low-power and low-cost computing platforms.

Low-power and cheap computational projects such as Arduino and Raspberry Pi have brought the use of small computers and micro-controllers to the masses, and their use in fields related to biodiversity science is increasing (e.g., Hirafuji shows the use of Arduino in agriculture). There is a large amount of potential in using automated tools for monitoring environments and identifying species based on these emerging hardware platforms, but to be truly useful we must integrate the data they generate with our existing systems. This paper describes the construction of an open-source environmental data logger based on the Arduino platform and its integration with the web content management system Drupal, which is used as the basis for Scratchpads among other biodiversity tools. (Full article...)

Featured article of the week: August 22–28:

"Evaluating health information systems using ontologies"

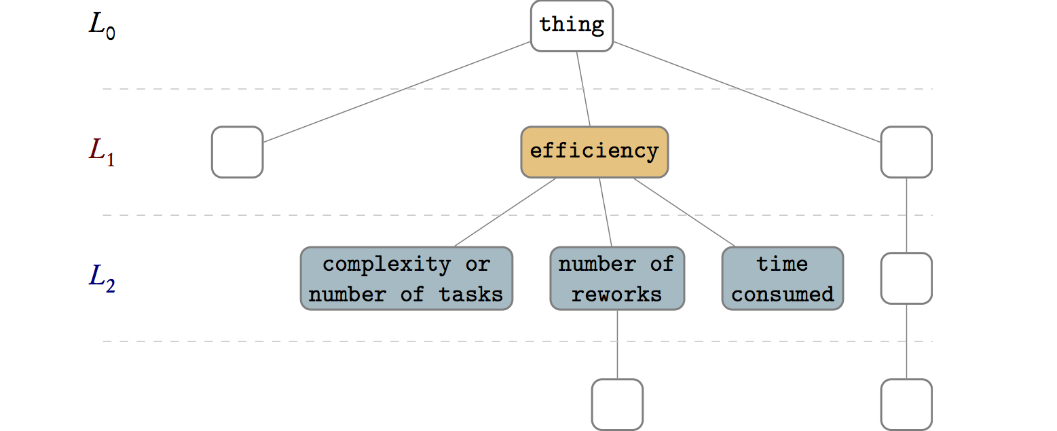

There are several frameworks that attempt to address the challenges of evaluation of health information systems by offering models, methods, and guidelines about what to evaluate, how to evaluate, and how to report the evaluation results. Model-based evaluation frameworks usually suggest universally applicable evaluation aspects but do not consider case-specific aspects. On the other hand, evaluation frameworks that are case-specific, by eliciting user requirements, limit their output to the evaluation aspects suggested by the users in the early phases of system development. In addition, these case-specific approaches extract different sets of evaluation aspects from each case, making it challenging to collectively compare, unify, or aggregate the evaluation of a set of heterogeneous health information systems.

The aim of this paper is to find a method capable of suggesting evaluation aspects for a set of one or more health information systems — whether similar or heterogeneous — by organizing, unifying, and aggregating the quality attributes extracted from those systems and from an external evaluation framework. (Full article...)

Featured article of the week: August 15–21:

"From the desktop to the grid: Scalable bioinformatics via workflow conversion"

Reproducibility is one of the tenets of the scientific method. Scientific experiments often comprise complex data flows, selection of adequate parameters, and analysis and visualization of intermediate and end results. Breaking down the complexity of such experiments into the joint collaboration of small, repeatable, well-defined tasks, each with well-defined inputs, parameters, and outputs, offers immediate benefits such as identifying bottlenecks and pinpointing sections that could benefit from parallelization. Workflows rest upon the notion of splitting complex work into the joint effort of several manageable tasks.

There are several engines that give users the ability to design and execute workflows. Each engine was created to address certain problems of a specific community; therefore, each one has its advantages and shortcomings. Furthermore, not all features of all workflow engines are royalty-free, an aspect that could potentially drive away members of the scientific community. (Full article...)

Featured article of the week: August 8–14:

"Terminology spectrum analysis of natural-language chemical documents: Term-like phrases retrieval routine"

This study seeks to develop, test and assess a methodology for automatic extraction of a complete set of ‘term-like phrases’ and to create a terminology spectrum from a collection of natural language PDF documents in the field of chemistry. A ‘term-like phrase’ is defined as one or more consecutive words and/or alphanumeric string combinations with unchanged spelling which convey specific scientific meanings. A terminology spectrum for a natural language document is an indexed list of tagged entities including: recognized general scientific concepts, terms linked to existing thesauri, names of chemical substances/reactions and term-like phrases. The retrieval routine is based on n-gram textual analysis with a sequential execution of various ‘accept and reject’ rules that take into account morphological and structural information.

The assessment of the retrieval process, expressed quantitatively with a precision (P), recall (R) and F1-measure, which are calculated manually from a limited set of documents (the full set of text abstracts belonging to five EuropaCat events were processed) by professional chemical scientists, has proved the effectiveness of the developed approach. (Full article...)
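For readers unfamiliar with the three measures named above, the following is a minimal sketch of how precision, recall, and F1 are conventionally computed from counts of correct and incorrect retrievals. It uses the standard textbook definitions rather than the authors' own evaluation code; the function name `precision_recall_f1` and the example counts are hypothetical and do not come from the article.

```python
def precision_recall_f1(true_positives, false_positives, false_negatives):
    """Return (precision, recall, F1) computed from raw retrieval counts.

    true_positives:  retrieved phrases judged correct by the reviewers
    false_positives: retrieved phrases judged incorrect
    false_negatives: correct phrases the routine failed to retrieve
    """
    retrieved = true_positives + false_positives
    relevant = true_positives + false_negatives
    # Guard against division by zero when nothing was retrieved or relevant.
    precision = true_positives / retrieved if retrieved else 0.0
    recall = true_positives / relevant if relevant else 0.0
    total = precision + recall
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Hypothetical counts, chosen only for illustration (not results from the study).
p, r, f1 = precision_recall_f1(true_positives=80, false_positives=20, false_negatives=20)
print(f"P={p:.2f} R={r:.2f} F1={f1:.2f}")
```

Because F1 is a harmonic mean, it stays low whenever either precision or recall is low, which is why it is often reported alongside the two individual measures.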

Featured article of the week: August 1–7:

"A legal framework to support development and assessment of digital health services"

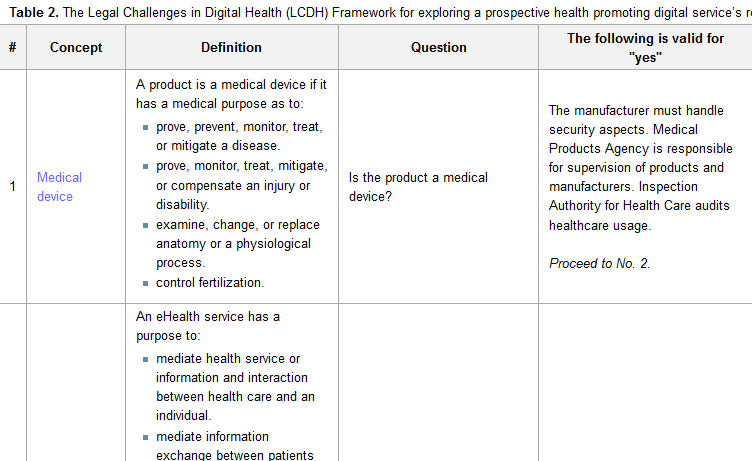

Digital health services empower people to track, manage, and improve their own health and quality of life while delivering more personalized and precise health care, at a lower cost and with higher efficiency and availability. Essential for the use of digital health services is that the treatment of any personal data is compatible with the Patient Data Act, Personal Data Act, and other applicable privacy laws.

The aim of this study was to develop a framework for legal challenges to support designers in development and assessment of digital health services. A purposive sampling, together with snowball recruitment, was used to identify stakeholders and information sources for organizing, extending, and prioritizing the different concepts, actors, and regulations in relation to digital health and health-promoting digital systems. The data were collected through structured interviewing and iteration, and three different cases were used for face validation of the framework. A framework for assessing the legal challenges in developing digital health services (Legal Challenges in Digital Health [LCDH] Framework) was created and consists of six key questions to be used to evaluate a digital health service according to current legislation. (Full article...)

Featured article of the week: July 25–31:

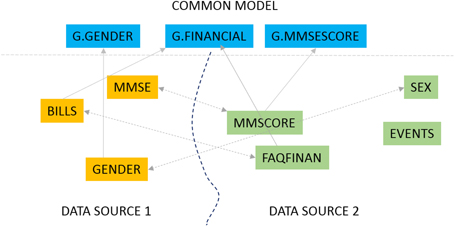

"The GAAIN Entity Mapper: An active-learning system for medical data mapping"

This work is focused on mapping biomedical datasets to a common representation, as an integral part of data harmonization for integrated biomedical data access and sharing. We present GEM, an intelligent software assistant for automated data mapping across different datasets or from a dataset to a common data model. The GEM system automates data mapping by providing precise suggestions for data element mappings. It leverages the detailed metadata about elements in associated dataset documentation such as data dictionaries that are typically available with biomedical datasets. It employs unsupervised text mining techniques to determine similarity between data elements and also employs machine-learning classifiers to identify element matches. It further provides an active-learning capability where the process of training the GEM system is optimized. Our experimental evaluations show that the GEM system provides highly accurate data mappings (over 90 percent accuracy) for real datasets of thousands of data elements each, in the Alzheimer's disease research domain. (Full article...)

Featured article of the week: July 18–24:

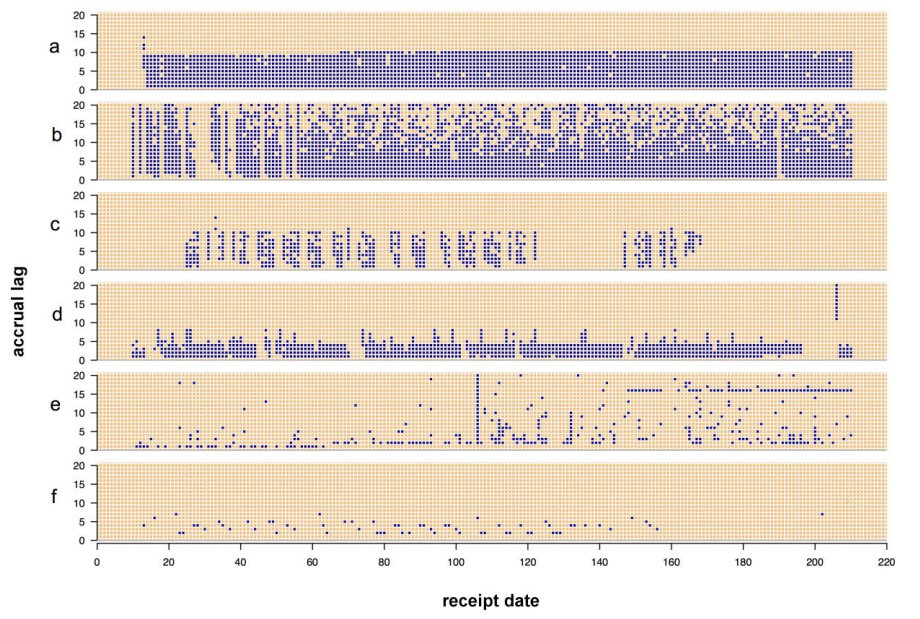

"Visualizing the quality of partially accruing data for use in decision making"

Secondary use of clinical health data for near real-time public health surveillance presents challenges surrounding its utility due to data quality issues. Data used for real-time surveillance must be timely, accurate and complete if it is to be useful; if incomplete data are used for surveillance, understanding the structure of the incompleteness is necessary. Such data are commonly aggregated due to privacy concerns. The Distribute project was a near real-time influenza-like-illness (ILI) surveillance system that relied on aggregated secondary clinical health data. The goal of this work is to disseminate the data quality tools developed to gain insight into the data quality problems associated with these data. These tools apply in general to any system where aggregate data are accrued over time and were created through the end-user-as-developer paradigm. Each tool was developed during the exploratory analysis to gain insight into structural aspects of data quality. (Full article...)

Featured article of the week: July 11–17:

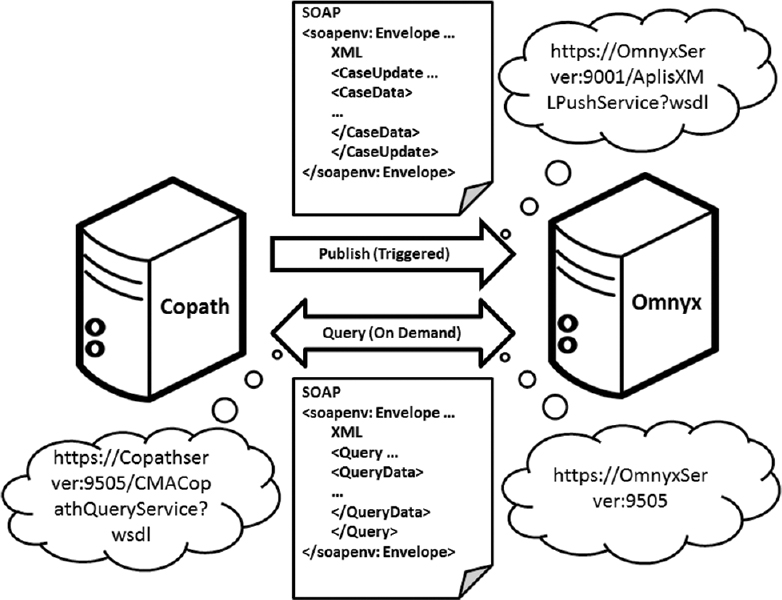

"Digital pathology and anatomic pathology laboratory information system integration to support digital pathology sign-out"

The adoption of digital pathology offers benefits over labor-intensive, time-consuming, and error-prone manual processes. However, because most workflow and laboratory transactions are centered around the anatomical pathology laboratory information system (APLIS), adoption of digital pathology ideally requires integration with the APLIS. A digital pathology system (DPS) integrated with the APLIS was recently implemented at our institution for diagnostic use. We demonstrate how such integration supports digital workflow to sign-out anatomical pathology cases.

Workflow begins when pathology cases get accessioned into the APLIS (CoPathPlus). Glass slides from these cases are then digitized (Omnyx VL120 scanner) and automatically uploaded into the DPS (Omnyx; Integrated Digital Pathology (IDP) software v.1.3). The APLIS transmits case data to the DPS via a publishing web service. The DPS associates scanned images with the correct case using barcode labels on slides and information received from the APLIS. When pathologists remotely open a case in the DPS, additional information (e.g. gross pathology details, prior cases) gets retrieved from the APLIS through a query web service. (Full article...)

Featured article of the week: July 4–10:

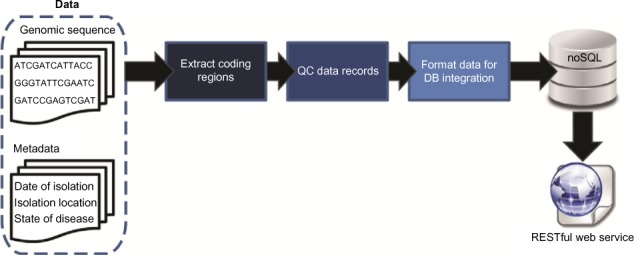

"A polyglot approach to bioinformatics data integration: A phylogenetic analysis of HIV-1"

As sequencing technologies continue to drop in price and increase in throughput, new challenges emerge for the management and accessibility of genomic sequence data. We have developed a pipeline for facilitating the storage, retrieval, and subsequent analysis of molecular data, integrating both sequence and metadata. Taking a polyglot approach involving multiple languages, libraries, and persistence mechanisms, sequence data can be aggregated from publicly available and local repositories. Data are exposed in the form of a RESTful web service, formatted for easy querying, and retrieved for downstream analyses. As a proof of concept, we have developed a resource for annotated HIV-1 sequences. Phylogenetic analyses were conducted for >6,000 HIV-1 sequences, revealing that spatial and temporal factors influence the evolution of the individual genes uniquely. Nevertheless, signatures of origin can be extrapolated despite increased globalization. The approach developed here can easily be customized for any species of interest. (Full article...)

Featured article of the week: June 27–July 3:

"The systems biology format converter"

Interoperability between formats is a recurring problem in systems biology research. Many tools have been developed to convert computational models from one format to another. However, they have been developed independently, resulting in redundancy of efforts and lack of synergy.

Here we present the Systems Biology Format Converter (SBFC), which provides a generic framework to potentially convert any format into another. The framework currently includes several converters translating between the following formats: SBML, BioPAX, SBGN-ML, Matlab, Octave, XPP, GPML, Dot, MDL and APM. This software is written in Java and can be used as a standalone executable or web service. The SBFC framework is an evolving software project. Existing converters can be used and improved, and new converters can be easily added, making SBFC useful to both modellers and developers. The source code and documentation of the framework are freely available from the project web site. (Full article...)

Featured article of the week: June 20–26:

"Chemozart: A web-based 3D molecular structure editor and visualizer platform"

Chemozart is a 3D molecule editor and visualizer built on top of native web components. It offers an easy-to-access service, a user-friendly graphical interface and a modular design. It is a client-centric web application which communicates with the server via a representational state transfer style web service. Both the client-side and server-side applications are written in JavaScript. A combination of JavaScript and HTML is used to draw three-dimensional structures of molecules.

With the help of WebGL, a three-dimensional visualization tool is provided. Using CSS3 and HTML5, a user-friendly interface is composed. More than 30 packages are used to compose this application, which makes it flexible enough to be extended. Molecular structures can be drawn on all types of platforms, and the application is compatible with mobile devices. No installation is required in order to use this application and it can be accessed through the internet. This application can be extended on both server-side and client-side by implementing modules in JavaScript. Molecular compounds are drawn on the HTML5 Canvas element using WebGL context. (Full article...)

|

Featured article of the week: June 13–19:

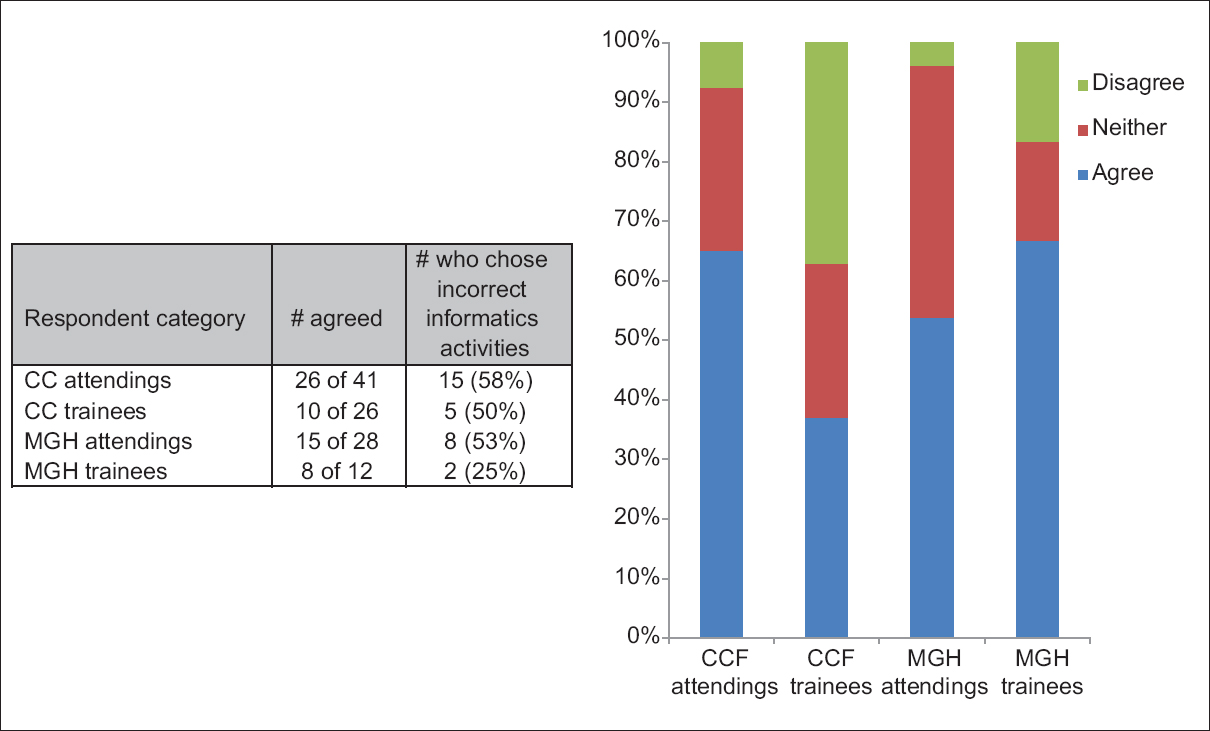

"Perceptions of pathology informatics by non-informaticist pathologists and trainees"

Although pathology informatics (PI) is essential to modern pathology practice, the field is often poorly understood. Pathologists who have received little to no exposure to informatics, either in training or in practice, may not recognize the roles that informatics serves in pathology. The purpose of this study was to characterize perceptions of PI by noninformatics-oriented pathologists and to do so at two large centers with differing informatics environments. Pathology trainees and staff at Cleveland Clinic (CC) and Massachusetts General Hospital (MGH) were surveyed. At MGH, pathology department leadership has promoted a pervasive informatics presence through practice, training, and research. At CC, PI efforts focus on production systems that serve a multi-site integrated health system and a reference laboratory, and on the development of applications oriented to department operations. The survey assessed perceived definition of PI, interest in PI, and perceived utility of PI. (Full article...)

|

Featured article of the week: June 06–12:

"A pocket guide to electronic laboratory notebooks in the academic life sciences"

Every professional doing active research in the life sciences is required to keep a laboratory notebook. However, while science has changed dramatically over the last centuries, laboratory notebooks have remained essentially unchanged since pre-modern science. We argue that the implementation of electronic laboratory notebooks (ELN) in academic research is overdue, and we provide researchers and their institutions with the background and practical knowledge to select and initiate the implementation of an ELN in their laboratories. In addition, we present data from surveying biomedical researchers and technicians regarding which hypothetical features and functionalities they hope to see implemented in an ELN, and which ones they regard as less important. We also present data on acceptance and satisfaction among those who have recently switched from a paper laboratory notebook to an ELN. We thus provide answers to the following questions: What does an electronic laboratory notebook afford a biomedical researcher, what does it require, and how should one go about implementing it? (Full article...)

|

Featured article of the week: May 30–June 05:

"Diagnostic time in digital pathology: A comparative study on 400 cases"

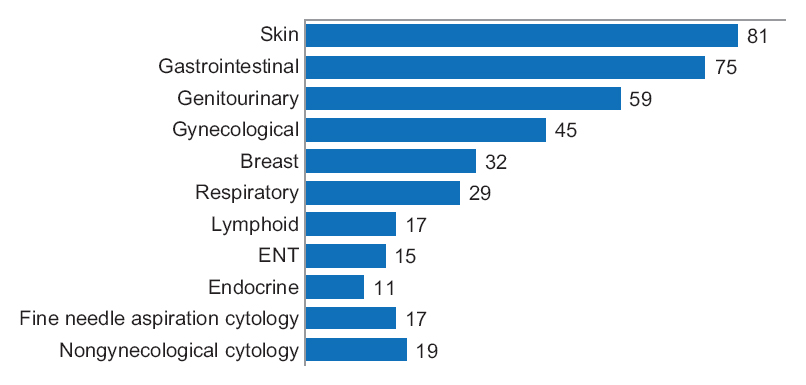

Numerous validation studies in digital pathology have confirmed its value as a diagnostic tool. However, a longer time to diagnosis than traditional microscopy has been seen as a significant barrier to the routine use of digital pathology. As a part of our validation study, we compared digital and microscopic diagnostic times in the routine diagnostic setting.

One senior staff pathologist reported 400 consecutive cases in histology, nongynecological, and fine needle aspiration cytology (20 sessions, 20 cases/session), over 4 weeks. Complex, difficult, and rare cases were excluded from the study to reduce bias. The primary diagnosis was digital, followed by traditional microscopy six months later, with only request forms available for both. Microscopic slides were scanned at ×20, and digital images were accessed through the fully integrated laboratory information management system (LIMS) and viewed in the image viewer on dual 23-inch displays. (Full article...)

|

Featured article of the week: May 23–29:

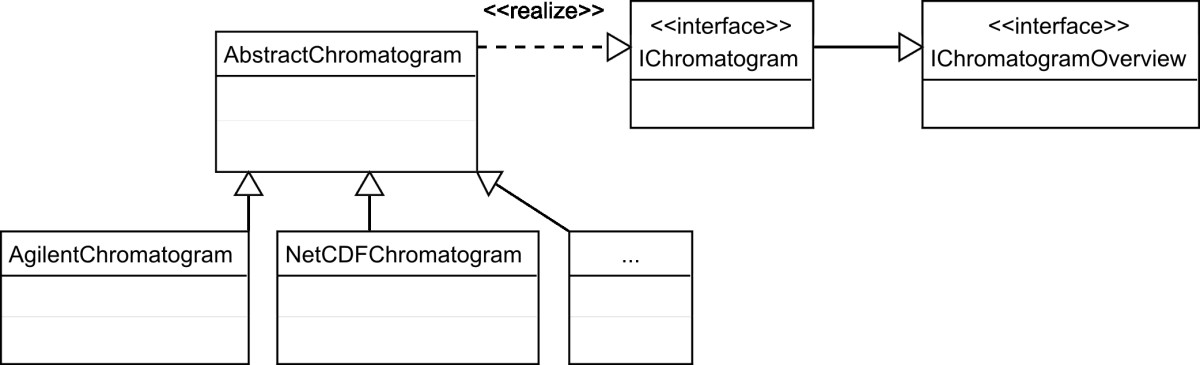

"OpenChrom: A cross-platform open source software for the mass spectrometric analysis of chromatographic data"

Today, data evaluation has become a bottleneck in chromatographic science. Analytical instruments equipped with automated samplers yield large amounts of measurement data, which need to be verified and analyzed. Since nearly every GC/MS instrument vendor offers its own data format and software tools, the consequences are problems with data exchange and a lack of comparability between the analytical results. To address this situation, a number of commercial or non-profit software applications have been developed. These applications provide functionalities to import and analyze several data formats but have shortcomings in terms of the transparency of the implemented analytical algorithms and/or are restricted to a specific computer platform. (Full article...)

|

Featured article of the week: May 16–22:

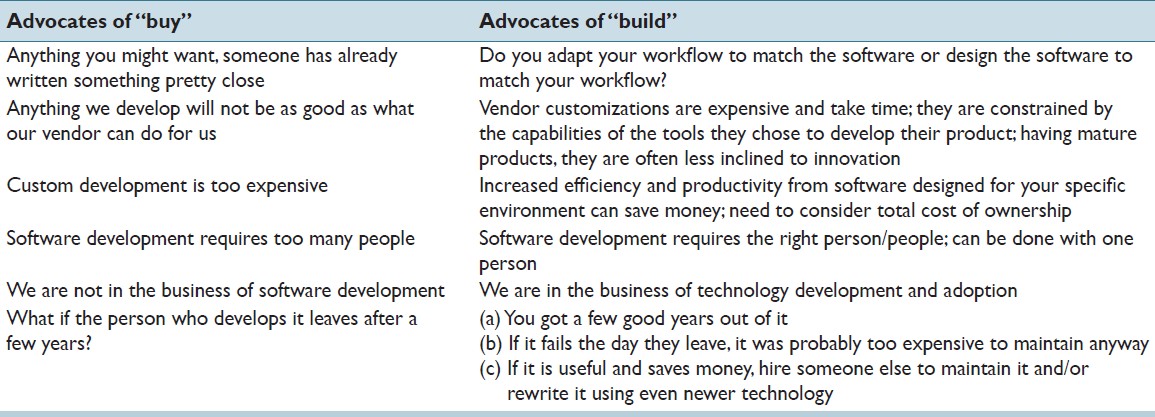

"Custom software development for use in a clinical laboratory"

In-house software development for use in a clinical laboratory is a controversial issue. Many of the objections raised are based on outdated software development practices, an exaggeration of the risks involved, and an underestimation of the benefits that can be realized. Buy versus build analyses typically do not consider total costs of ownership, and unfortunately decisions are often made by people who are not directly affected by the workflow obstacles or benefits that result from those decisions. We have been developing custom software for clinical use for over a decade, and this article presents our perspective on this practice. A complete analysis of the decision to develop or purchase must ultimately examine how the end result will mesh with the departmental workflow, and custom-developed solutions typically can have the greater positive impact on efficiency and productivity, substantially altering the decision balance sheet. (Full article...)

|

Featured article of the week: May 09–15:

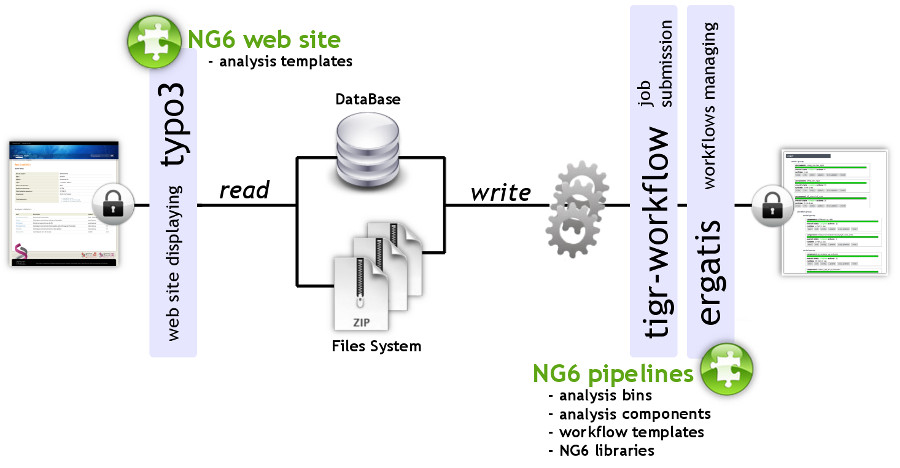

"NG6: Integrated next generation sequencing storage and processing environment"

Next generation sequencing platforms are now well established in sequencing centres and some laboratories. Upcoming smaller scale machines such as the 454 junior from Roche or the MiSeq from Illumina will increase the number of laboratories hosting a sequencer. In such a context, it is important to provide these teams with an easily manageable environment to store and process the produced reads.

We describe a user-friendly information system able to manage large sets of sequencing data. It includes, on the one hand, a workflow environment already containing pipelines adapted to different input formats (sff, fasta, fastq and qseq), different sequencers (Roche 454, Illumina HiSeq) and various analyses (quality control, assembly, alignment, diversity studies, …) and, on the other hand, a secured web site giving access to the results. The connected user will be able to download raw and processed data and browse through the analysis result statistics. (Full article...)

|

Featured article of the week: May 02–08:

"STATegra EMS: An experiment management system for complex next-generation omics experiments"

High-throughput sequencing assays are now routinely used to study different aspects of genome organization. As decreasing costs and widespread availability of sequencing enable more laboratories to use sequencing assays in their research projects, the number of samples and replicates in these experiments can quickly grow to several dozen and thus require standardized annotation, storage and management of preprocessing steps. As a part of the STATegra project, we have developed an Experiment Management System (EMS) for high throughput omics data that supports different types of sequencing-based assays such as RNA-seq, ChIP-seq, Methyl-seq, etc., as well as proteomics and metabolomics data. The STATegra EMS provides metadata annotation of experimental design, samples and processing pipelines, as well as storage of different types of data files, from raw data to ready-to-use measurements. The system has been developed to provide research laboratories with a freely-available, integrated system that offers a simple and effective way for experiment annotation and tracking of analysis procedures. (Full article...)

|

Featured article of the week: April 25–May 01:

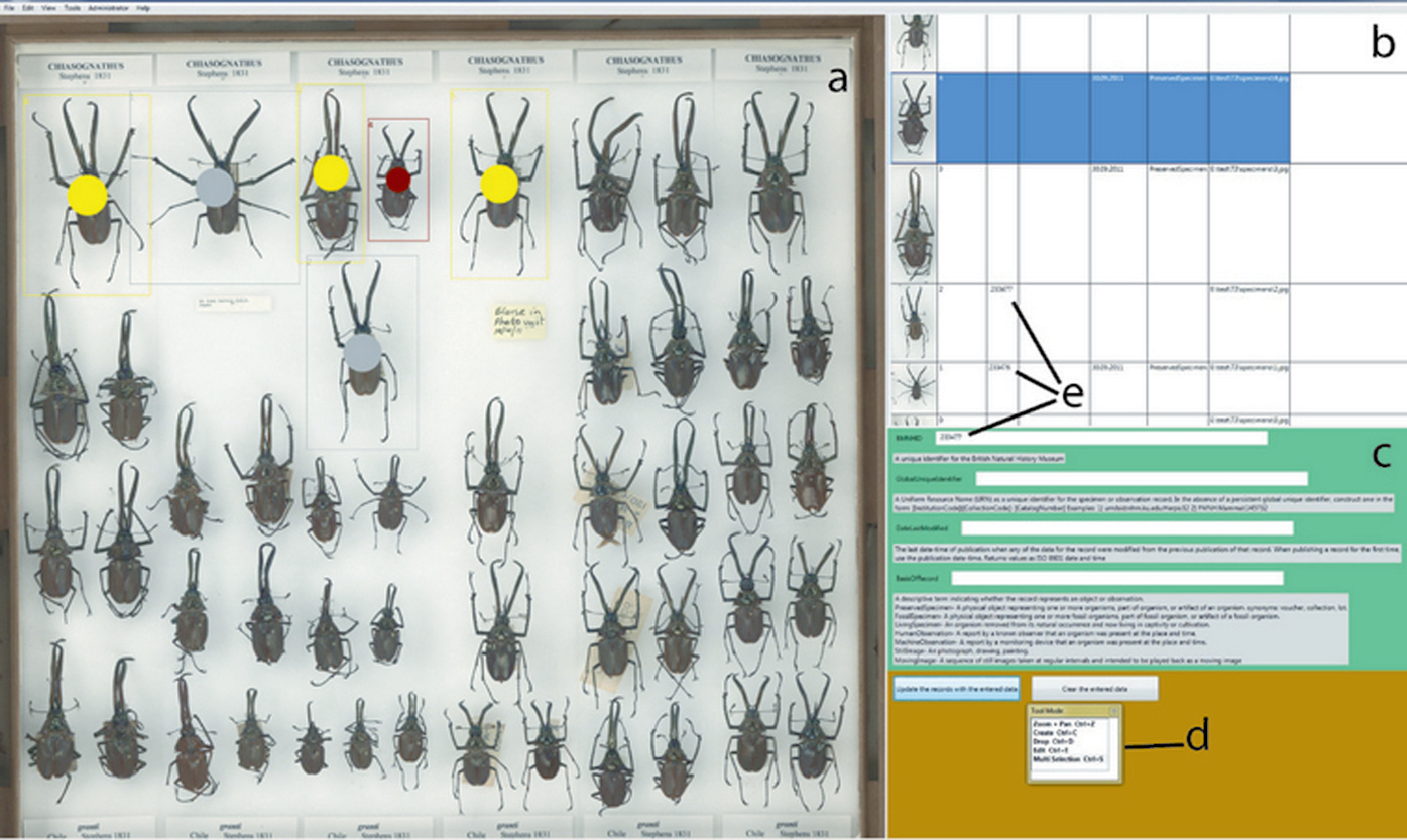

"No specimen left behind: Industrial scale digitization of natural history collections"

Traditional approaches for digitizing natural history collections, which include both imaging and metadata capture, are both labour- and time-intensive. Mass-digitization can only be completed if the resource-intensive steps, such as specimen selection and databasing of associated information, are minimized. Digitization of larger collections should employ an “industrial” approach, using the principles of automation and crowd sourcing, with minimal initial metadata collection including a mandatory persistent identifier. A new workflow for the mass-digitization of natural history museum collections based on these principles, and using the SatScan® tray scanning system, is described. (Full article...)

|

Featured article of the week: April 18–24:

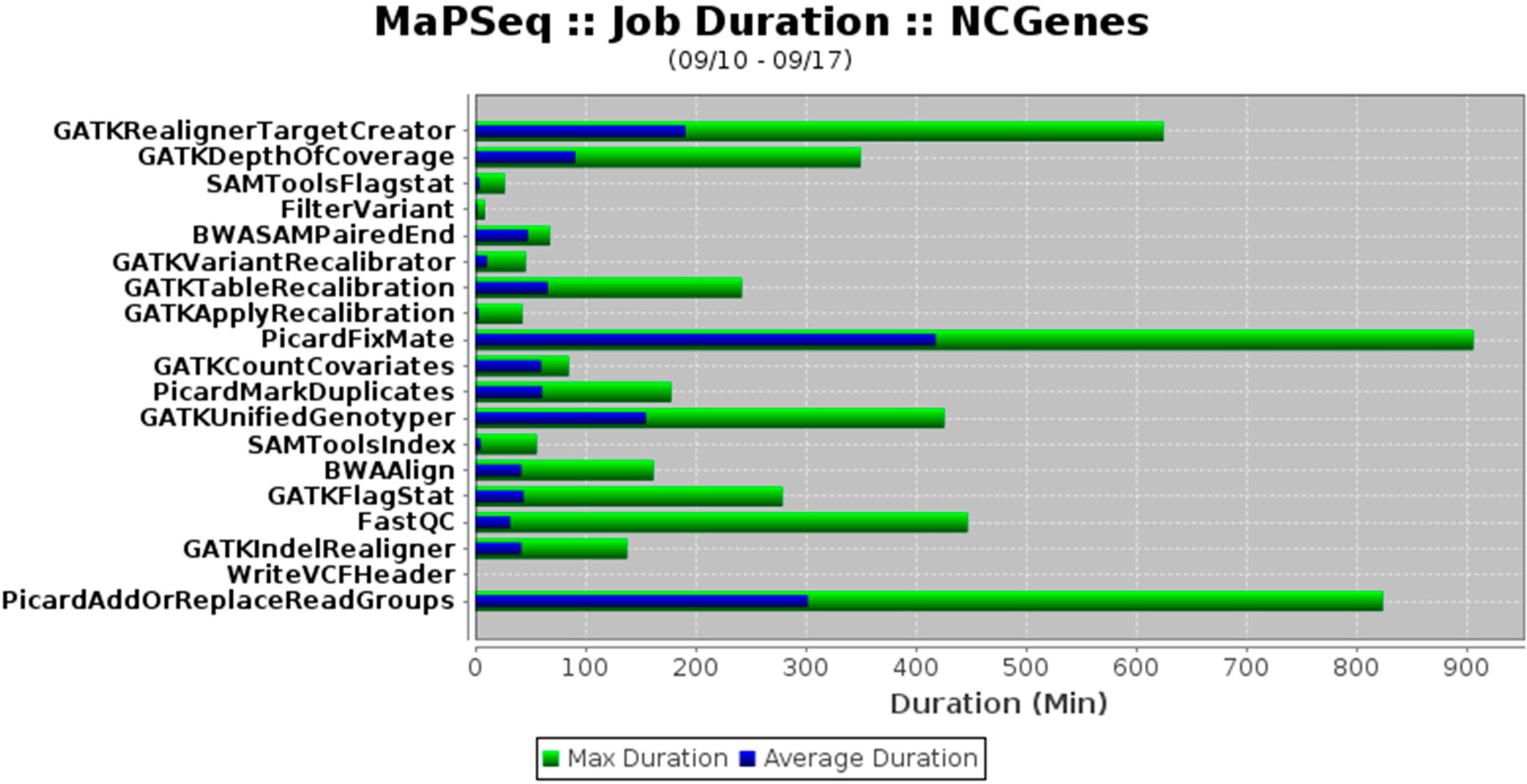

"MaPSeq, a service-oriented architecture for genomics research within an academic biomedical research institution"

Genomics research presents technical, computational, and analytical challenges that are well recognized. Less recognized are the complex sociological, psychological, cultural, and political challenges that arise when genomics research takes place within a large, decentralized academic institution. In this paper, we describe a Service-Oriented Architecture (SOA) — MaPSeq — that was conceptualized and designed to meet the diverse and evolving computational workflow needs of genomics researchers at our large, hospital-affiliated, academic research institution. We present the institutional challenges that motivated the design of MaPSeq before describing the architecture and functionality of MaPSeq. We then discuss SOA solutions and conclude that approaches such as MaPSeq enable efficient and effective computational workflow execution for genomics research and for any type of academic biomedical research that requires complex, computationally-intense workflows. (Full article...)

|

Featured article of the week: April 11–17:

"Grand challenges in environmental informatics"

We live in an era of environmental deterioration through depletion and degradation of resources such as air, water, and soil; the destruction of ecosystems and the extinction of wildlife. As a matter of fact, environmental degradation is one of three main threats identified in 2004 by the High Level Threat Panel of the United Nations, the other two being poverty and infectious diseases. In particular, air pollution ranked seventh on the worldwide list of risk factors, contributing to approximately three million deaths each year. (Full article...)

|

Featured article of the week: April 04–10:

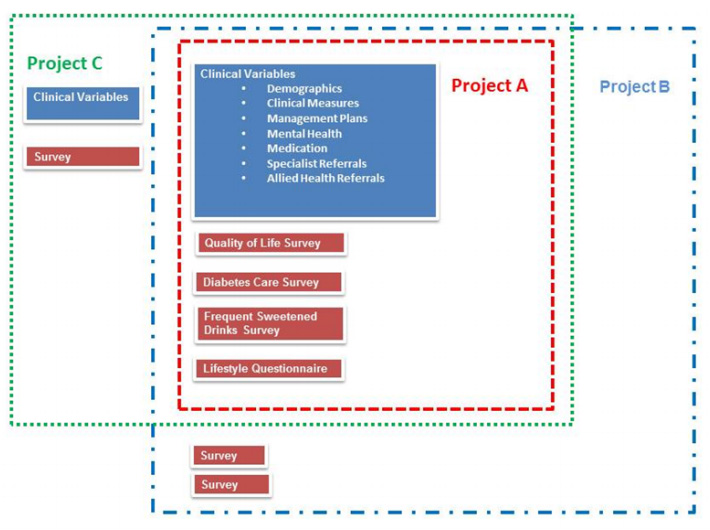

"The development of the Public Health Research Data Management System"

The design and development of the Public Health Research Data Management System highlights how it is possible to construct an information system that allows greater access to well-preserved public health research data to enable it to be reused and shared. The Public Health Research Data Management System (PHRDMS) manages clinical, health service, community and survey research data within a secure web environment. The conceptual model underpinning the PHRDMS is based on three main entities: participant, community and health service. The PHRDMS was designed to provide data management to allow for data sharing and reuse. The system has been designed to enable rigorous research and ensure that, within a structured collection, data that are unmanaged be managed, data that are disconnected be connected, data that are invisible be findable, and data that are single use be reusable. The PHRDMS is currently used by researchers to answer a broad range of policy-relevant questions, including monitoring incidence of renal disease, cardiovascular disease, diabetes and mental health problems in different risk groups. (Full article...)

|

Featured article of the week: March 28–April 03:

"The need for informatics to support forensic pathology and death investigation"

As a result of their practice of medicine, forensic pathologists create a wealth of data regarding the causes of and reasons for sudden, unexpected or violent deaths. These data have been effectively used to protect the health and safety of the general public in a variety of ways despite current and historical limitations. These limitations include the lack of data standards between the thousands of death investigation (DI) systems in the United States, rudimentary electronic information systems for DI, and the lack of effective communications and interfaces between these systems. Collaboration between forensic pathology and clinical informatics is required to address these shortcomings, and a path forward has been proposed that will enable forensic pathology to maximize its effectiveness by providing timely and actionable information to public health and public safety agencies. (Full article...)

|

Featured article of the week: March 21–27:

"Efficient sample tracking with OpenLabFramework"

The advance of new technologies in biomedical research has led to a dramatic growth in experimental throughput. Projects therefore steadily grow in size and involve a larger number of researchers. Spreadsheets traditionally used are thus no longer suitable for keeping track of the vast amounts of samples created and need to be replaced with state-of-the-art laboratory information management systems. Such systems have been developed in large numbers, but they are often limited to specific research domains and types of data. One domain so far neglected is the management of libraries of vector clones and genetically engineered cell lines. OpenLabFramework is a newly developed web-application for sample tracking, particularly laid out to fill this gap, but with an open architecture allowing it to be extended for other biological materials and functional data. Its sample tracking mechanism is fully customizable and aids productivity further through support for mobile devices and barcoded labels. (Full article...)

|

Featured article of the week: March 14–20:

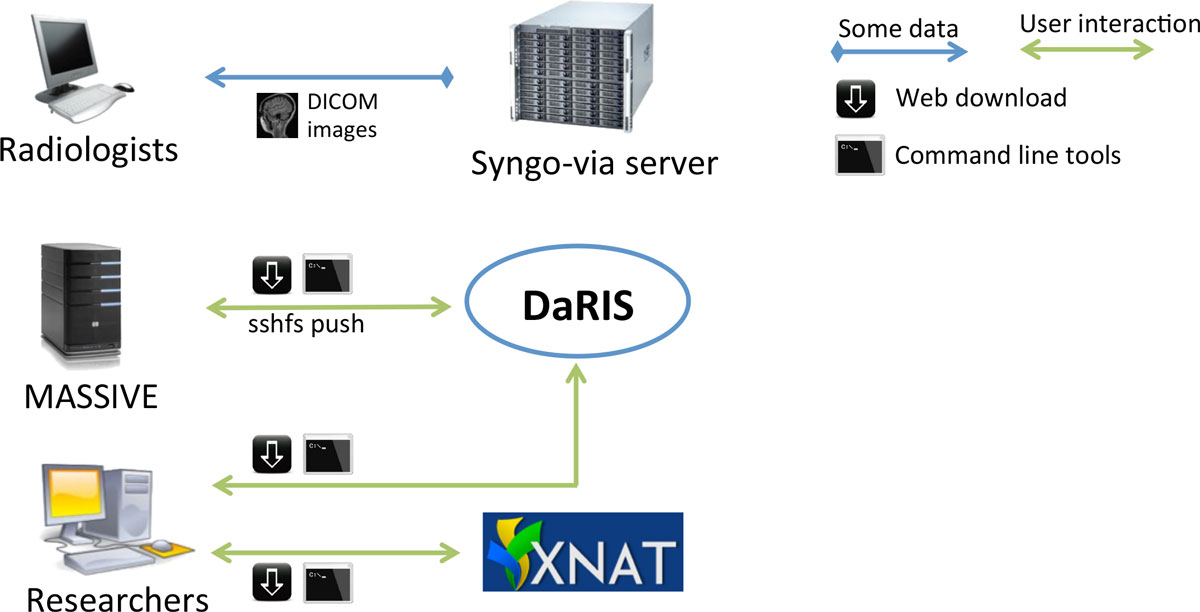

"Design, implementation and operation of a multimodality research imaging informatics repository"

Biomedical imaging research increasingly involves acquiring, managing and processing large amounts of distributed imaging data. Integrated systems that combine data, meta-data and workflows are crucial for realising the opportunities presented by advances in imaging facilities. This paper describes the design, implementation and operation of a multi-modality research imaging data management system that manages imaging data obtained from biomedical imaging scanners operated at Monash Biomedical Imaging (MBI), Monash University in Melbourne, Australia. In addition to Digital Imaging and Communications in Medicine (DICOM) images, raw data and non-DICOM biomedical data can be archived and distributed by the system. Imaging data are annotated with meta-data according to a study-centric data model and, therefore, scientific users can find, download and process data easily. The research imaging data management system ensures long-term usability, integrity, inter-operability and integration of large imaging data. Research users can securely browse and download stored images and data, and upload processed data via subject-oriented informatics frameworks including the Distributed and Reflective Informatics System (DaRIS), and the Extensible Neuroimaging Archive Toolkit (XNAT). (Full article...)

|

Featured article of the week: March 07–13:

Forensic science (often shortened to forensics) is the application of a broad spectrum of sciences, from anthropology to toxicology, to answer questions of interest to a legal system. During the course of an investigation, forensic scientists collect, preserve, and analyze scientific evidence using a variety of special laboratory equipment and techniques. In addition to their laboratory role, forensic scientists may also testify as expert witnesses in both criminal and civil cases and can work for either the prosecution or the defense.

Much of the work of forensic science is conducted in the forensic laboratory. Such a laboratory has many similarities to a traditional clinical or research lab insofar as it contains various lab instruments and several areas set aside for different tasks. However, it differs in other ways. Windows, for example, represent a point of entry into a forensic lab, which must be secure as it contains evidence of crimes. (Full article...)

|

Featured article of the week: February 29–March 06:

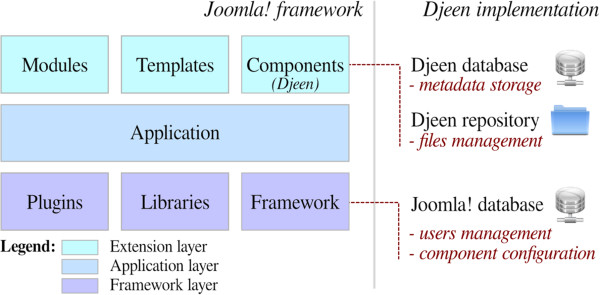

"Djeen (Database for Joomla!’s Extensible Engine): A research information management system for flexible multi-technology project administration"

With the advance of post-genomic technologies, the need for tools to manage large scale data in biology becomes more pressing. This involves annotating and storing data securely, as well as granting permissions flexibly with several technologies (all array types, flow cytometry, proteomics) for collaborative work and data sharing. This task is not easily achieved with most systems available today.

We developed Djeen (Database for Joomla!’s Extensible Engine), a new Research Information Management System (RIMS) for collaborative projects. Djeen is a user-friendly application, designed to streamline data storage and annotation collaboratively. Its database model, kept simple, is compliant with most technologies and allows storing and managing of heterogeneous data with the same system. Advanced permissions are managed through different roles. Templates allow Minimum Information (MI) compliance. (Full article...)

|

Featured article of the week: February 22–28:

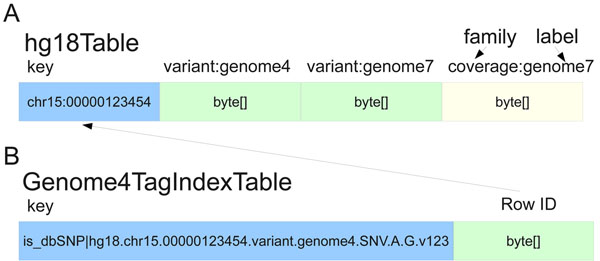

"SeqWare Query Engine: Storing and searching sequence data in the cloud"

Since the introduction of next-generation DNA sequencers, the rapid increase in sequencer throughput, and associated drop in costs, has resulted in more than a dozen human genomes being resequenced over the last few years. These efforts are merely a prelude for a future in which genome resequencing will be commonplace for both biomedical research and clinical applications. The dramatic increase in sequencer output strains all facets of computational infrastructure, especially databases and query interfaces. The advent of cloud computing, and a variety of powerful tools designed to process petascale datasets, provide a compelling solution to these ever-increasing demands.

In this work, we present the SeqWare Query Engine which has been created using modern cloud computing technologies and designed to support databasing information from thousands of genomes. Our backend implementation was built using the highly scalable, NoSQL HBase database from the Hadoop project. We also created a web-based frontend that provides both a programmatic and interactive query interface and integrates with widely used genome browsers and tools. (Full article...)

|

Featured article of the week: February 15–21:

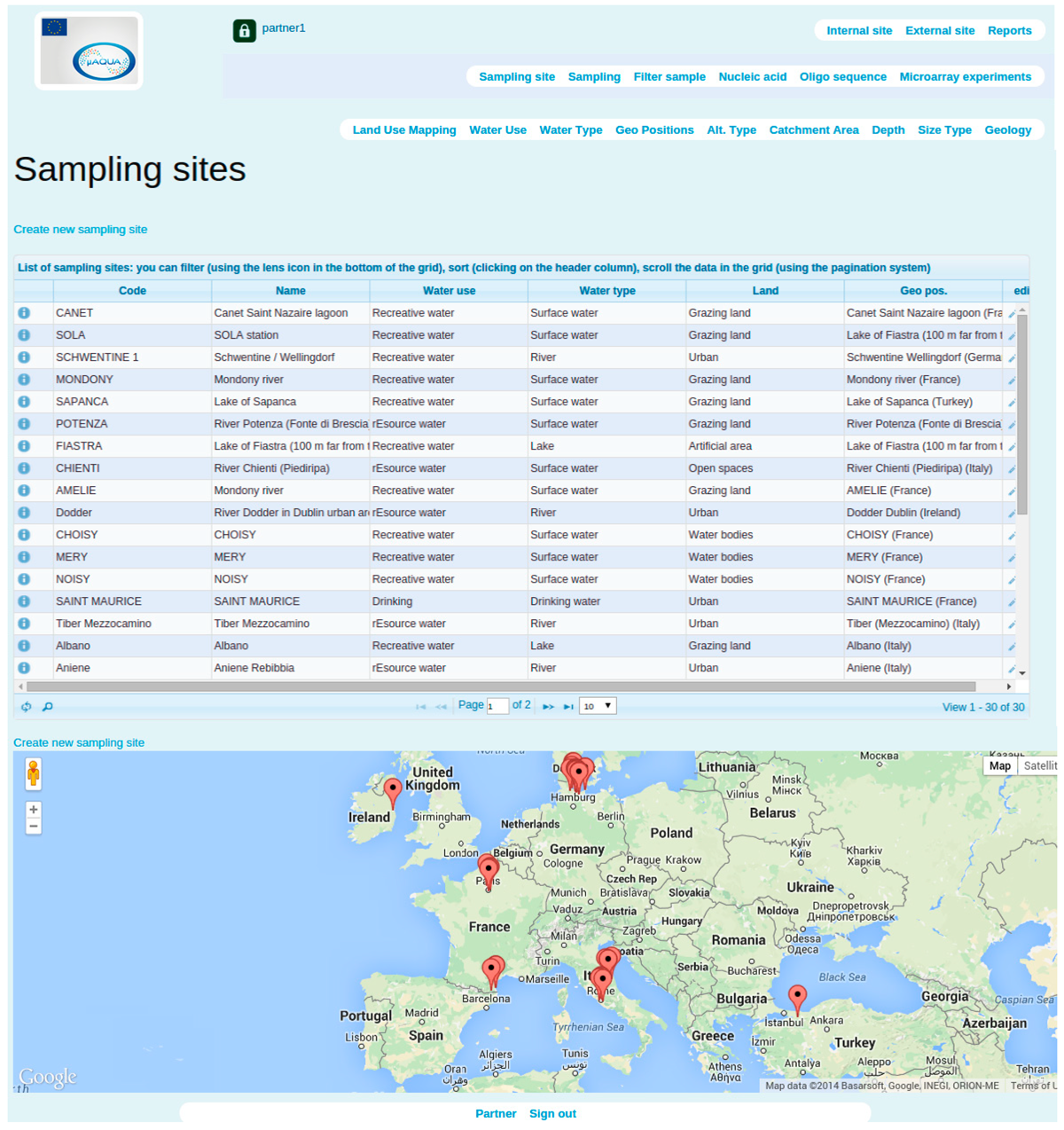

"SaDA: From sampling to data analysis—An extensible open source infrastructure for rapid, robust and automated management and analysis of modern ecological high-throughput microarray data"

One of the most crucial characteristics of day-to-day laboratory information management is the collection, storage and retrieval of information about research subjects and environmental or biomedical samples. An efficient link between sample data and experimental results is essential for the successful outcome of a collaborative project. Currently available software solutions are largely limited to large scale, expensive commercial Laboratory Information Management Systems (LIMS). Acquiring such a LIMS can indeed bring laboratory information management to a higher level, but most of the time this requires a substantial investment of money, time and technical effort. There is a clear need for a lightweight open source system which can easily be managed on local servers and handled by individual researchers. Here we present software named SaDA for storing, retrieving and analyzing data originating from microorganism monitoring experiments. SaDA is fully integrated in the management of environmental samples, oligonucleotide sequences, microarray data and the subsequent downstream analysis procedures. It is simple and generic software, and can be extended and customized for various environmental and biomedical studies. (Full article...)

|

Featured article of the week: January 25–31:

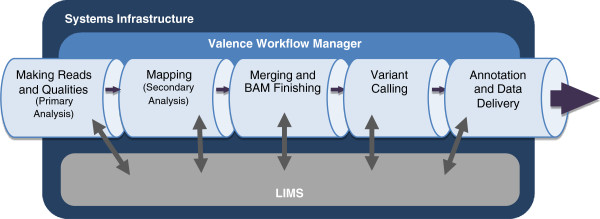

"Launching genomics into the cloud: Deployment of Mercury, a next generation sequence analysis pipeline"

Massively parallel DNA sequencing generates staggering amounts of data. Decreasing cost, increasing throughput, and improved annotation have expanded the diversity of genomics applications in research and clinical practice. This expanding scale creates analytical challenges: accommodating peak compute demand, coordinating secure access for multiple analysts, and sharing validated tools and results.

To address these challenges, we have developed the Mercury analysis pipeline and deployed it on local hardware and in the Amazon Web Services cloud via the DNAnexus platform. Mercury is an automated, flexible, and extensible analysis workflow that provides accurate and reproducible genomic results at scales ranging from individuals to large cohorts.

By taking advantage of cloud computing and with Mercury implemented on the DNAnexus platform, we have demonstrated a powerful combination of a robust and fully validated software pipeline and a scalable computational resource that, to date, we have applied to more than 10,000 whole genome and whole exome samples. (Full article...)

|

Featured article of the week: January 18–24:

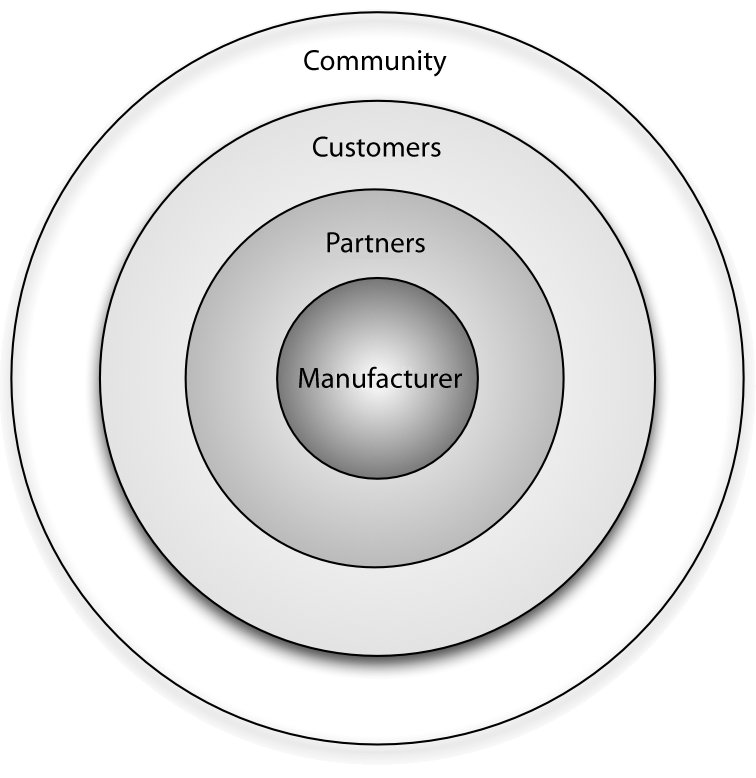

"Benefits of the community for partners of open source vendors"

Open source vendors can benefit from business ecosystems that form around their products. Partners of such vendors can utilize this ecosystem for their own business benefit by understanding the structure of the ecosystem, the key actors and their relationships, and the main levers of profitability. This article provides information on all of these aspects and identifies common business scenarios for partners of open source vendors. Armed with this information, partners can select a strategy that allows them to participate in the ecosystem while also maximizing their gains and driving adoption of their product or solution in the marketplace.

Every free/libre open source software (F/LOSS) vendor strives to create a business ecosystem around its software product. Doing this offers two primary advantages from a sales and marketing perspective: i) it increases the viability and longevity of the product in both commercial and communal spaces, and ii) it opens up new channels for communication and innovation. (Full article...)

|

Featured article of the week: January 11–17:

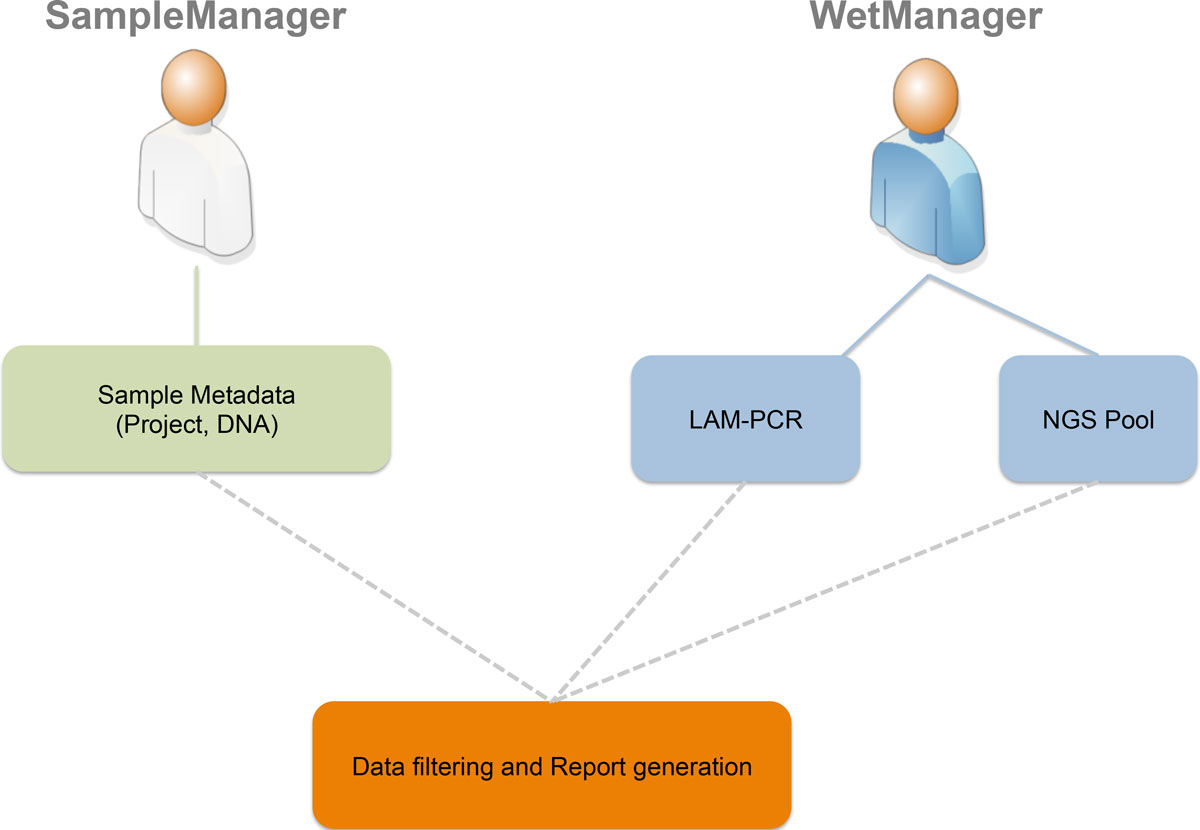

"adLIMS: A customized open source software that allows bridging clinical and basic molecular research studies"

Many biological laboratories that deal with genomic samples are facing the problem of sample tracking, both for pure laboratory management and for efficiency. Our laboratory exploits PCR techniques and Next Generation Sequencing (NGS) methods to perform high-throughput integration site monitoring in different clinical trials and scientific projects. Because of the large number of samples that we process every year, which result in hundreds of millions of sequencing reads, we need to standardize data management and tracking systems, building up a scalable and flexible structure with web-based interfaces, which is usually called a Laboratory Information Management System (LIMS).

We extended and customized ADempiere ERP to fulfill LIMS requirements and we developed adLIMS. It has been validated by our end-users, who verified functionalities and GUIs through test cases for PCR samples and pre-sequencing data, and it is currently in use in our laboratories. adLIMS implements authorization and authentication policies, allowing multi-user management and role definitions that enable specific permissions, operations and data views for each user. (Full article...)

|

Featured article of the week: January 4–10:

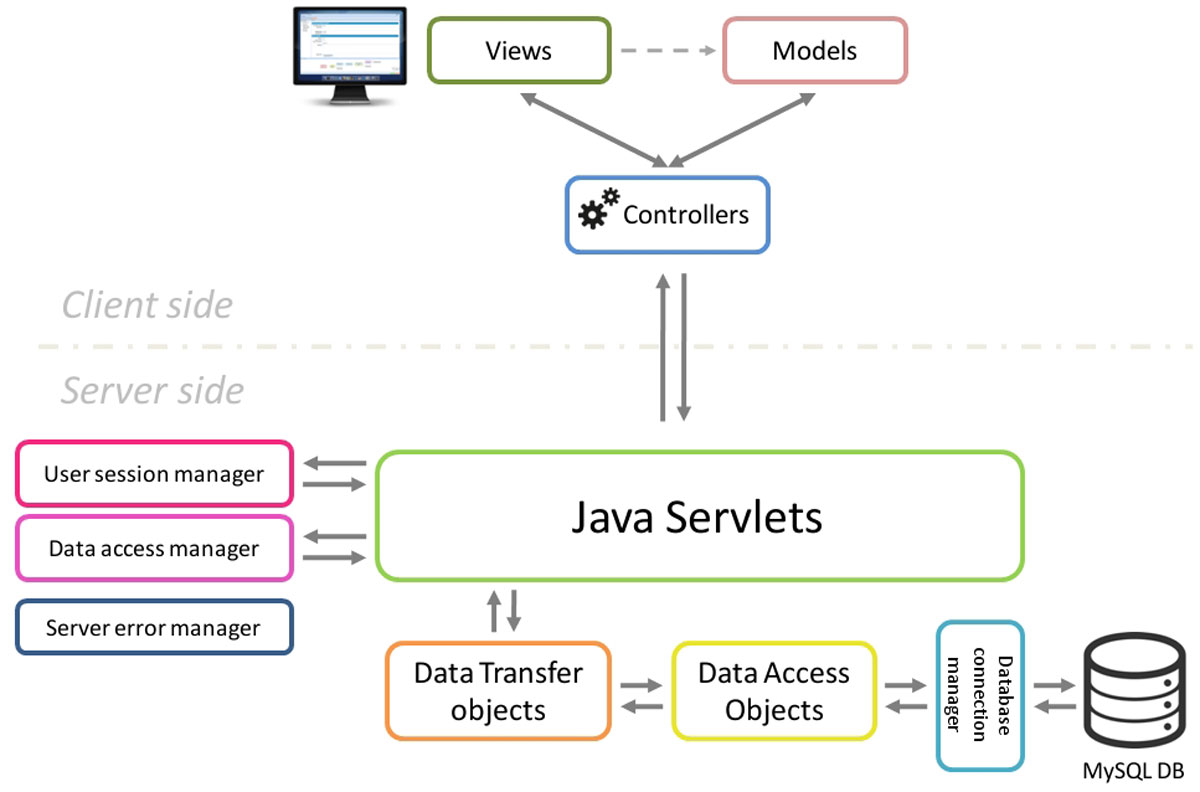

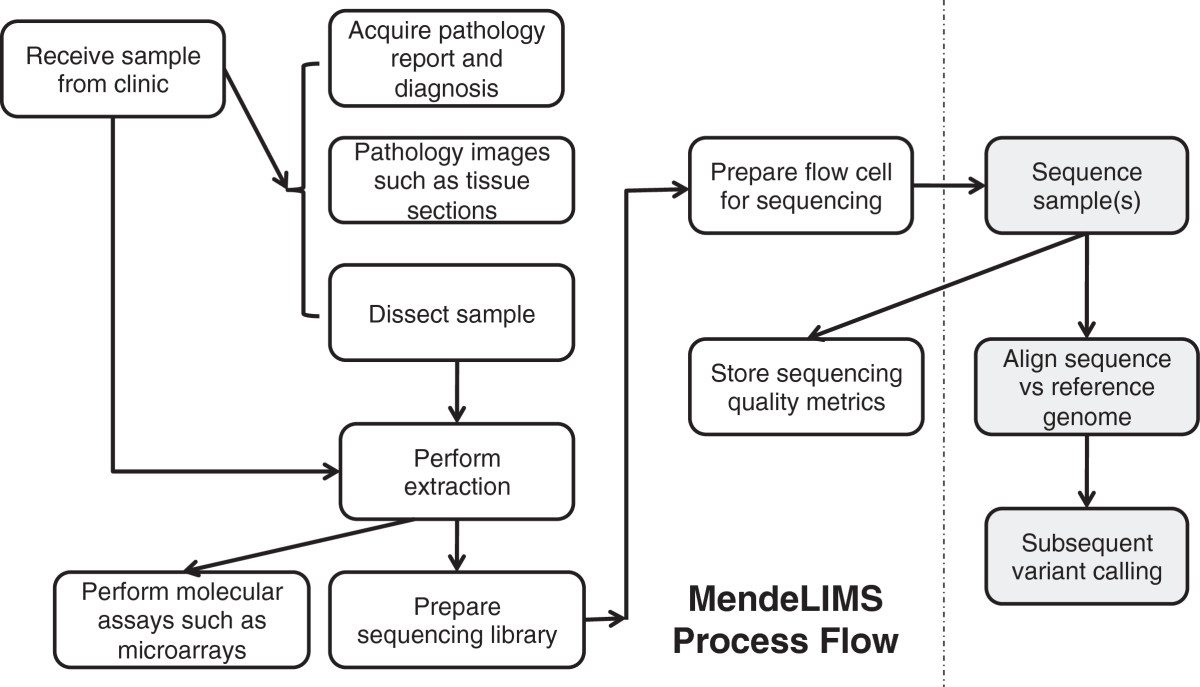

"MendeLIMS: A web-based laboratory information management system for clinical genome sequencing"

Large clinical genomics studies using next generation DNA sequencing require the ability to select and track samples from a large population of patients through many experimental steps. With the number of clinical genome sequencing studies increasing, it is critical to maintain adequate laboratory information management systems to manage the thousands of patient samples that are subject to this type of genetic analysis.

To meet the needs of clinical population studies using genome sequencing, we developed a web-based laboratory information management system (LIMS) with a flexible configuration that is adaptable to continuously evolving experimental protocols of next generation DNA sequencing technologies. Our system, referred to as MendeLIMS, is easily implemented with open source tools and is also highly configurable and extensible. MendeLIMS has been invaluable in the management of our clinical genome sequencing studies. (Full article...)

|
