Featured article of the week: December 27–January 2:

"Towards a risk catalog for data management plans"

Although data management and its careful planning are not new topics, there is little published research on risk mitigation in data management plans (DMPs). We consider it a problem that DMPs do not include a structured approach for the identification or mitigation of risks, because such an approach would instill confidence and trust in the data and its stewards, and foster the successful conduct of data-generating projects, which often are funded research projects. In this paper, we present a lightweight approach for identifying general risk in DMPs. We introduce an initial version of a generic risk catalog for funded research and similar projects. By analyzing a selection of 13 DMPs for projects from multiple disciplines published in the Research Ideas and Outcomes (RIO) journal, we demonstrate that our approach is applicable to DMPs and transferable to multiple institutional constellations. As a result, the effort for integrating risk management in data management planning can be reduced. (Full article...)

Featured article of the week: December 20–26:

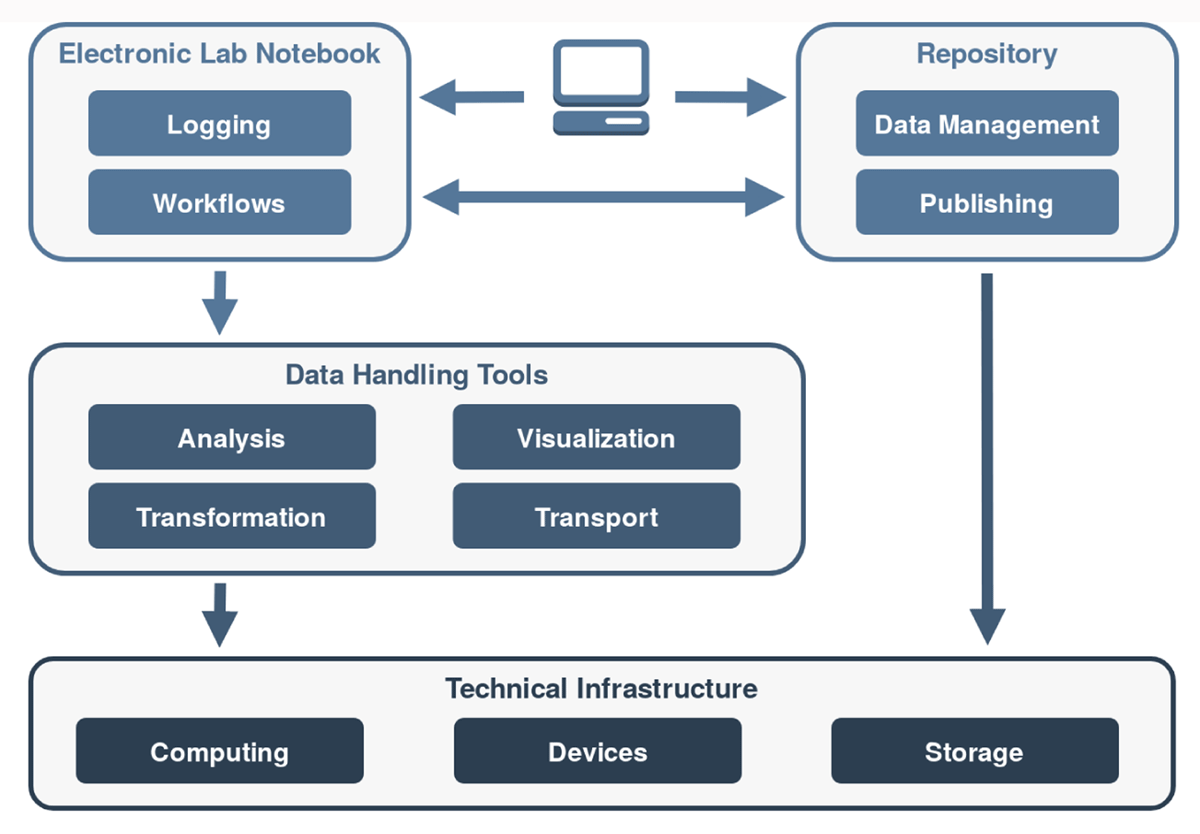

"Kadi4Mat: A research data infrastructure for materials science"

The concepts and current developments of a research data infrastructure for materials science are presented, extending and combining the features of an electronic laboratory notebook (ELN) and a repository. The objective of this infrastructure is to incorporate the possibility of structured data storage and data exchange with documented and reproducible data analysis and visualization, which finally leads to the publication of the data. This way, researchers can be supported throughout the entire research process. The software is being developed as a web-based and desktop-based system, offering both a graphical user interface (GUI) and a programmatic interface. The focus of the development is on the integration of technologies and systems based on both established as well as new concepts. Due to the heterogeneous nature of materials science data, the current features are kept mostly generic, and the structuring of the data is largely left to the users. As a result, an extension of the research data infrastructure to other disciplines is possible in the future. The source code of the project is publicly available under a permissive Apache 2.0 license. (Full article...)


Featured article of the week: December 13–19:

"Making data and workflows findable for machines"

Research data currently face a huge increase of data objects, with an increasing variety of types (data types, formats) and variety of workflows by which objects need to be managed across their lifecycle by data infrastructures. Researchers desire to shorten the workflows from data generation to analysis and publication, and the full workflow needs to become transparent to multiple stakeholders, including research administrators and funders. This poses challenges for research infrastructures and user-oriented data services in terms of not only making data and workflows findable, accessible, interoperable, and reusable (FAIR), but also doing so in a way that leverages machine support for better efficiency. One primary need yet to be addressed is that of findability, and achieving better findability has benefits for other aspects of data and workflow management. In this article, we describe how machine capabilities can be extended to make workflows more findable, in particular by leveraging the Digital Object Architecture, common object operations, and machine learning techniques. (Full article...)


Featured article of the week: December 6–12:

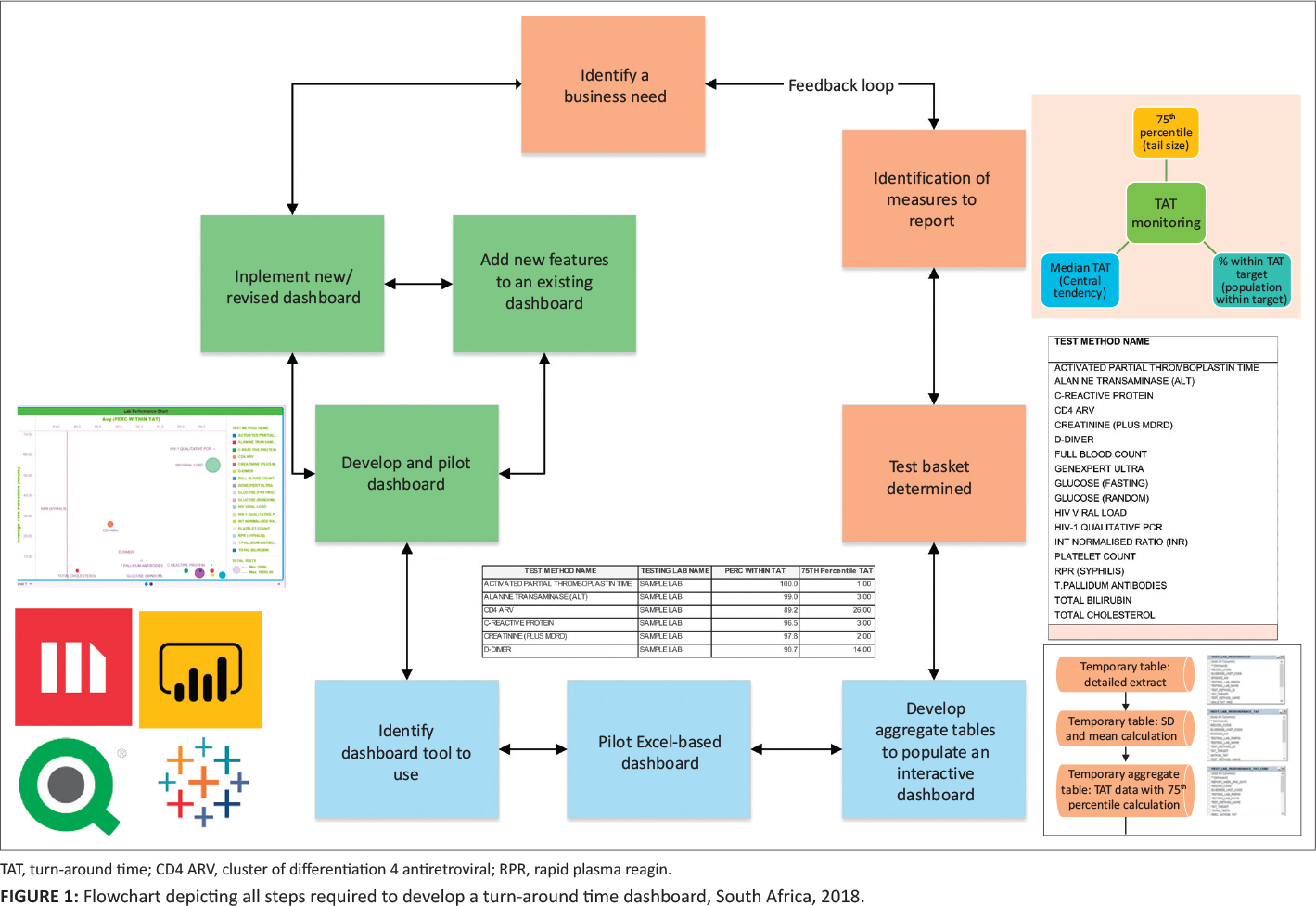

"Timely delivery of laboratory efficiency information, Part I: Developing an interactive turnaround time dashboard at a high-volume laboratory"

Mean turnaround time (TAT) reporting for testing laboratories in a national network is typically static and not immediately available for meaningful corrective action and does not allow for test-by-test or site-by-site interrogation of individual laboratory performance. The aim of this study was to develop an easy-to-use, visual dashboard to report interactive graphical TAT data to provide a weekly snapshot of TAT efficiency. An interactive dashboard was developed by staff from the National Priority Programme and Central Data Warehouse of the National Health Laboratory Service in Johannesburg, South Africa, during 2018. Steps required to develop the dashboard were summarized in a flowchart. To illustrate the dashboard, one week of data from a busy laboratory for a specific set of tests was analyzed using annual performance plan TAT cutoffs. Data were extracted and prepared to deliver an aggregate extract, with statistical measures provided, including test volumes, global percentage of tests that were within TAT cutoffs, and percentile statistics. (Full article...)


Featured article of the week: November 29–December 5:

"Advanced engineering informatics: Philosophical and methodological foundations with examples from civil and construction engineering"

We argue that the representation and formalization of complex engineering knowledge is the main aim of inquiries in the scientific field of advanced engineering informatics. We introduce ontology and logic as underlying methods to formalize knowledge. We also suggest that it is important to account for the purpose of engineers and the context they work in while representing and formalizing knowledge. Based on the concepts of ontology, logic, purpose, and context, we discuss different possible research methods and approaches that scholars can use to formalize complex engineering knowledge and to validate whether a specific formalization can support engineers with their complex tasks. On the grounds of this discussion, we suggest that research efforts in advanced engineering should be conducted in a bottom-up manner, closely involving engineering practitioners. We also suggest that researchers make use of social science methods while both eliciting knowledge to formalize and validating that formalized knowledge. (Full article...)


Featured article of the week: November 22–28:

"Explainability for artificial intelligence in healthcare: A multidisciplinary perspective"

Explainability is one of the most heavily debated topics when it comes to the application of artificial intelligence (AI) in healthcare. Even though AI-driven systems have been shown to outperform humans in certain analytical tasks, the lack of explainability continues to spark criticism. Yet, explainability is not a purely technological issue; instead, it invokes a host of medical, legal, ethical, and societal questions that require thorough exploration. This paper provides a comprehensive assessment of the role of explainability in medical AI and makes an ethical evaluation of what explainability means for the adoption of AI-driven tools into clinical practice. Taking AI-based clinical decision support systems as a case in point, we adopted a multidisciplinary approach to analyze the relevance of explainability for medical AI from the technological, legal, medical, and patient perspectives. Drawing on the findings of this conceptual analysis, we then conducted an ethical assessment using Beauchamp and Childress' Principles of Biomedical Ethics (autonomy, beneficence, nonmaleficence, and justice) as an analytical framework to determine the need for explainability in medical AI. (Full article...)


Featured article of the week: November 15–21:

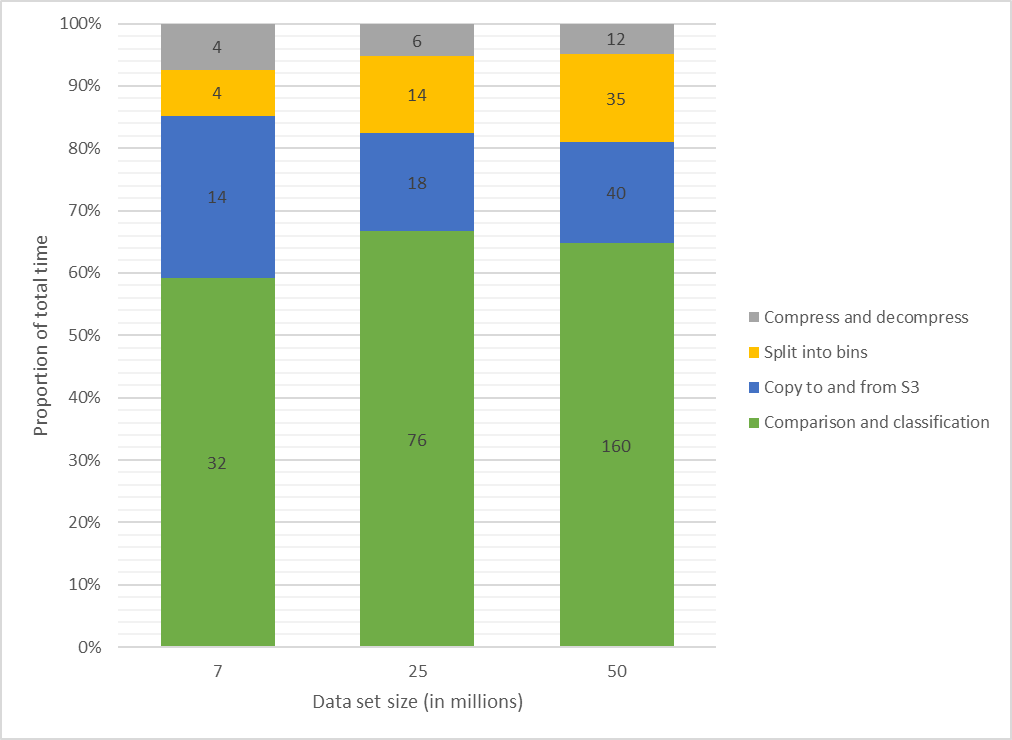

"Secure record linkage of large health data sets: Evaluation of a hybrid cloud model"

The linking of administrative data across agencies provides the capability to investigate many health and social issues, with the potential to deliver significant public benefit. Despite its advantages, the use of cloud computing resources for linkage purposes is scarce, with the storage of identifiable information on cloud infrastructure assessed as high-risk by data custodians. This study aims to present a model for record linkage that utilizes cloud computing capabilities while assuring custodians that identifiable data sets remain secure and local. A new hybrid cloud model was developed, including privacy-preserving record linkage techniques and container-based batch processing. An evaluation of this model was conducted with a prototype implementation using large synthetic data sets representative of administrative health data. (Full article...)


Featured article of the week: November 8–14:

"Risk assessment for scientific data"

Ongoing stewardship is required to keep data collections and archives in existence. Scientific data collections may face a range of risk factors that could hinder, constrain, or limit current or future data use. Identifying such risk factors to data use is a key step in preventing or minimizing data loss. This paper presents an analysis of data risk factors that scientific data collections may face, and a data risk assessment matrix to support data risk assessments to help ameliorate those risks. The goals of this work are to inform and enable effective data risk assessment by: a) individuals and organizations who manage data collections, and b) individuals and organizations who want to help to reduce the risks associated with data preservation and stewardship. The data risk assessment framework presented in this paper provides a platform from which risk assessments can begin, and a reference point for discussions of data stewardship resource allocations and priorities. (Full article...)


Featured article of the week: November 1–7:

"Methods for quantification of cannabinoids: A narrative review"

Around 144 cannabinoids have been identified in the Cannabis plant; among them tetrahydrocannabinol (THC) and cannabidiol (CBD) are the most prominent ones. Because of the legal restrictions on cannabis in many countries, it is difficult to obtain standards to use in research; nonetheless, it is important to develop a cannabinoid quantification technique, with practical pharmaceutical applications for quality control of future therapeutic cannabinoids. To find relevant articles for this narrative review paper, a combination of keywords such as "medicinal cannabis," "analytical," "quantification," and "cannabinoids" were searched for in PubMed, EMBASE, MEDLINE, Google Scholar, and Cochrane Library (Wiley) databases. (Full article...)


Featured article of the week: October 25–31:

"Utilizing connectivity and data management systems for effective quality management and regulatory compliance in point-of-care testing"

Point-of-care testing (POCT) is one of the fastest growing disciplines in clinical laboratory medicine. POCT devices are widely used in both acute and chronic patient management in the hospital and primary care physician office settings. As demands for POCT in various healthcare settings increase, managing POCT quality and regulatory compliance is continually challenging. Despite technological advances in applying automatic system checks and built-in quality control to prevent analytical and operator errors, poor planning for POCT connectivity and informatics can limit data accessibility and management efficiency, which impedes the utilization of POCT to its full potential. This article will summarize how connectivity and data management systems can improve timely access to POCT results, support effective management of POCT programs, and ensure regulatory compliance. (Full article...)


Featured article of the week: October 18–24:

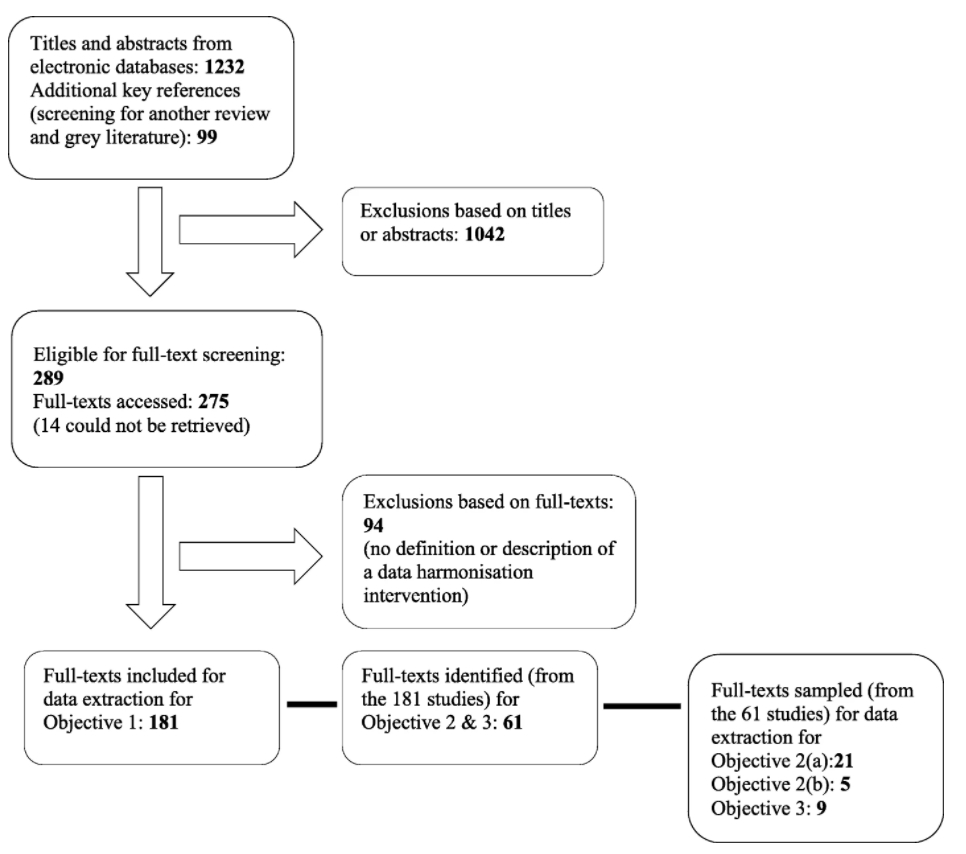

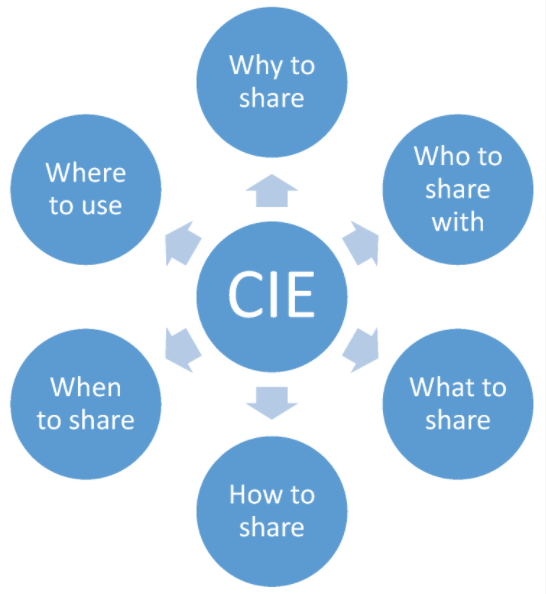

"Definitions, components and processes of data harmonization in healthcare: A scoping review"

Data harmonization (DH) is increasingly being used by health managers, information technology specialists, and researchers as an important intervention for routine health information systems (RHISs). It is important to understand what DH is, how it is defined and conceptualized, and how it can lead to better health management decision-making. This scoping review identifies a range of definitions for DH, its characteristics (in terms of key components and processes), and common explanations of the relationship between DH and health management decision-making. This scoping review identified more than 2,000 relevant studies (date filter) written in English and published in PubMed, Web of Science, and CINAHL. (Full article...)


Featured article of the week: October 11–17:

"Interoperability challenges in the cybersecurity information sharing ecosystem"

Threat intelligence helps businesses and organizations make the right decisions in their fight against cyber threats, and strategically design their digital defenses for an optimized and up-to-date security situation. Combined with advanced security analysis, threat intelligence helps reduce the time between the detection of an attack and its containment. This is achieved by continuously providing information, accompanied by data, on existing and emerging cyber threats and vulnerabilities affecting corporate networks. This paper addresses challenges that organizations are bound to face when they decide to invest in effective and interoperable cybersecurity information sharing, and it categorizes them in a layered model. Based on this, it provides an evaluation of existing sources that share cybersecurity information. The aim of this research is to help organizations improve their cyber threat information exchange capabilities, to enhance their security posture and be more prepared against emerging threats. (Full article...)


Featured article of the week: October 4–10:

"Data without software are just numbers"

Great strides have been made to encourage researchers to archive data created by research and provide the necessary systems to support their storage. Additionally, it is recognized that data are meaningless unless their provenance is preserved, through appropriate metadata. Alongside this is a pressing need to ensure the quality and archiving of the software that generates data, through simulation and control of experiment or data collection, and that which analyzes, modifies, and draws value from raw data. In order to meet the aims of reproducibility, we argue that data management alone is insufficient: it must be accompanied by good software practices, the training to facilitate it, and the support of stakeholders, including appropriate recognition for software as a research output. (Full article...)


Featured article of the week: September 27–October 3:

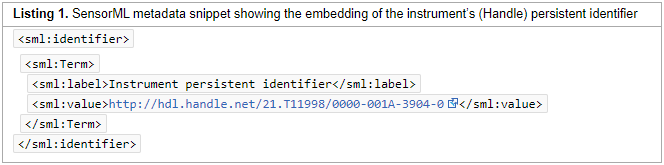

"Persistent identification of instruments"

Instruments play an essential role in creating research data. Given the importance of instruments and associated metadata to the assessment of data quality and data reuse, globally unique, persistent, and resolvable identification of instruments is crucial. The Research Data Alliance Working Group Persistent Identification of Instruments (PIDINST) developed a community-driven solution for persistent identification of instruments, which we present and discuss in this paper. Based on an analysis of 10 use cases, PIDINST developed a metadata schema and prototyped schema implementation with DataCite and ePIC as representative persistent identifier infrastructures, and with HZB (Helmholtz-Zentrum Berlin für Materialien und Energie) and the BODC (British Oceanographic Data Centre) as representative institutional instrument providers. (Full article...)


Featured article of the week: September 20–26:

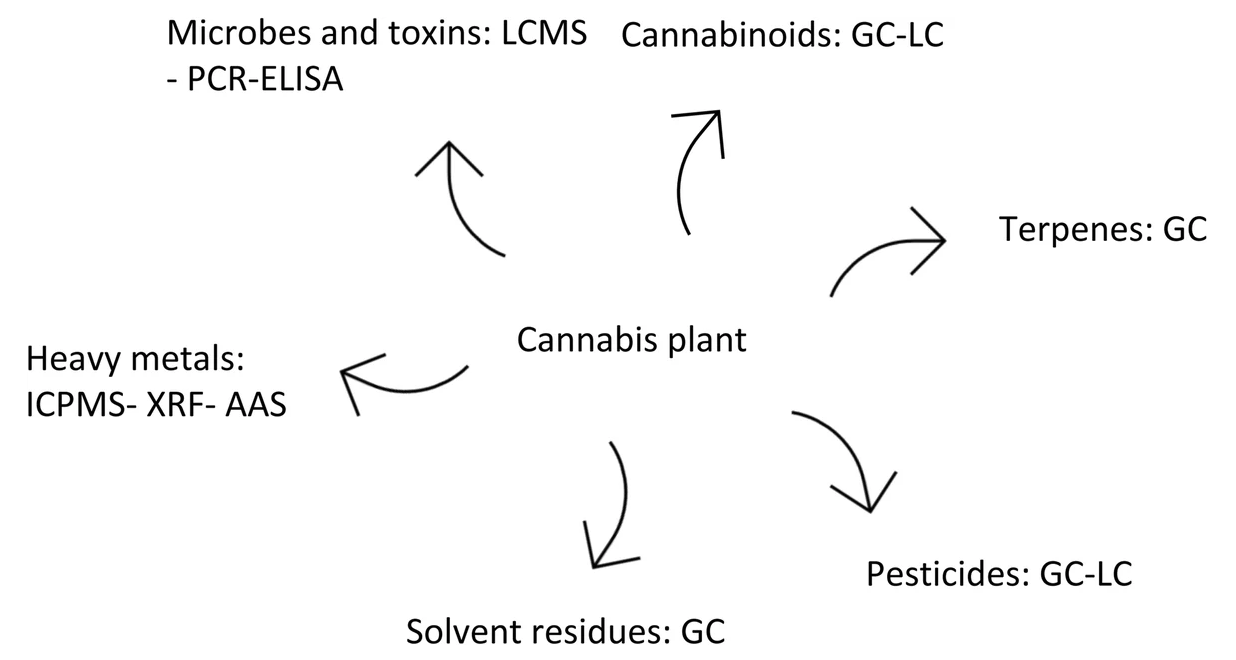

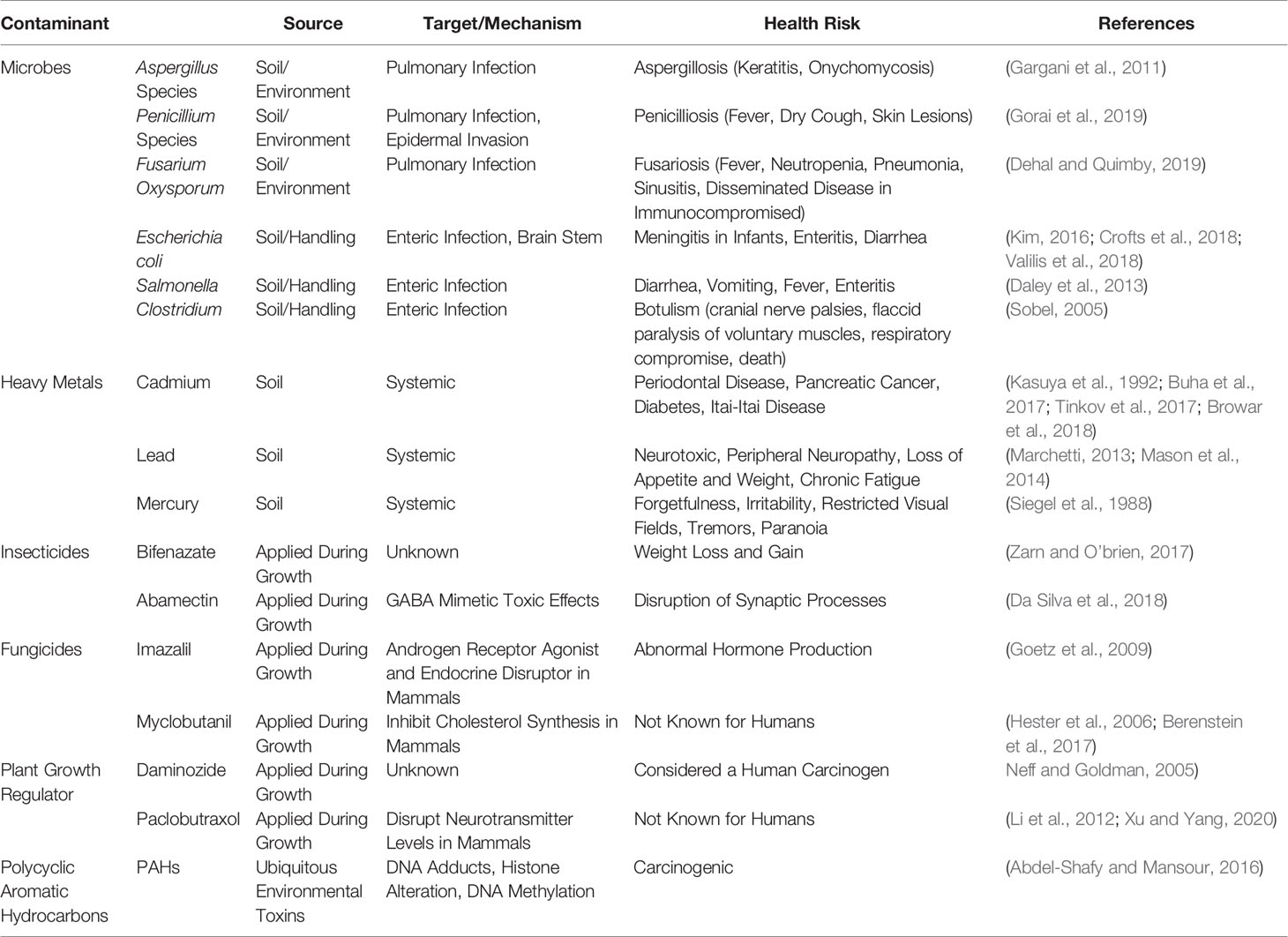

"Cannabis contaminants limit pharmacological use of cannabidiol"

For nearly a century, cannabis has been stigmatized and criminalized across the globe, but in recent years, there has been a growing interest in cannabis due to the therapeutic potential of phytocannabinoids. With this emerging interest in cannabis, concerns have arisen about the possible contamination of hemp with pesticides, heavy metals, microbial pathogens, and carcinogenic compounds during the cultivation, manufacturing, and packaging processes. This is of particular concern for those turning to cannabis for medicinal purposes, especially those with compromised immune systems. This review aims to describe types of contaminants and provide examples of cannabis contamination, using case studies that elucidate the medical consequences consumers risk when using adulterated cannabis products. (Full article...)


Featured article of the week: September 13–19:

"Development of an informatics system for accelerating biomedical research"

The Biomedical Research Informatics Computing System (BRICS) was developed to support multiple disease-focused research programs. Seven service modules are integrated together to provide a collaborative and extensible web-based environment. The modules—Data Dictionary, Account Management, Query Tool, Protocol and Form Research Management System, Meta Study, Data Repository, and Globally Unique Identifier—facilitate the management of research protocols, including the submission, processing, curation, access, and storage of clinical, imaging, and derived genomics data within the associated data repositories. Multiple instances of BRICS are deployed to support various biomedical research communities focused on accelerating discoveries for rare diseases, traumatic brain injuries, Parkinson’s disease, inherited eye diseases, and symptom science research. No personally identifiable information is stored within the data repositories. Digital object identifiers (DOIs) are associated with the research studies. (Full article...)


Featured article of the week: September 6–12:

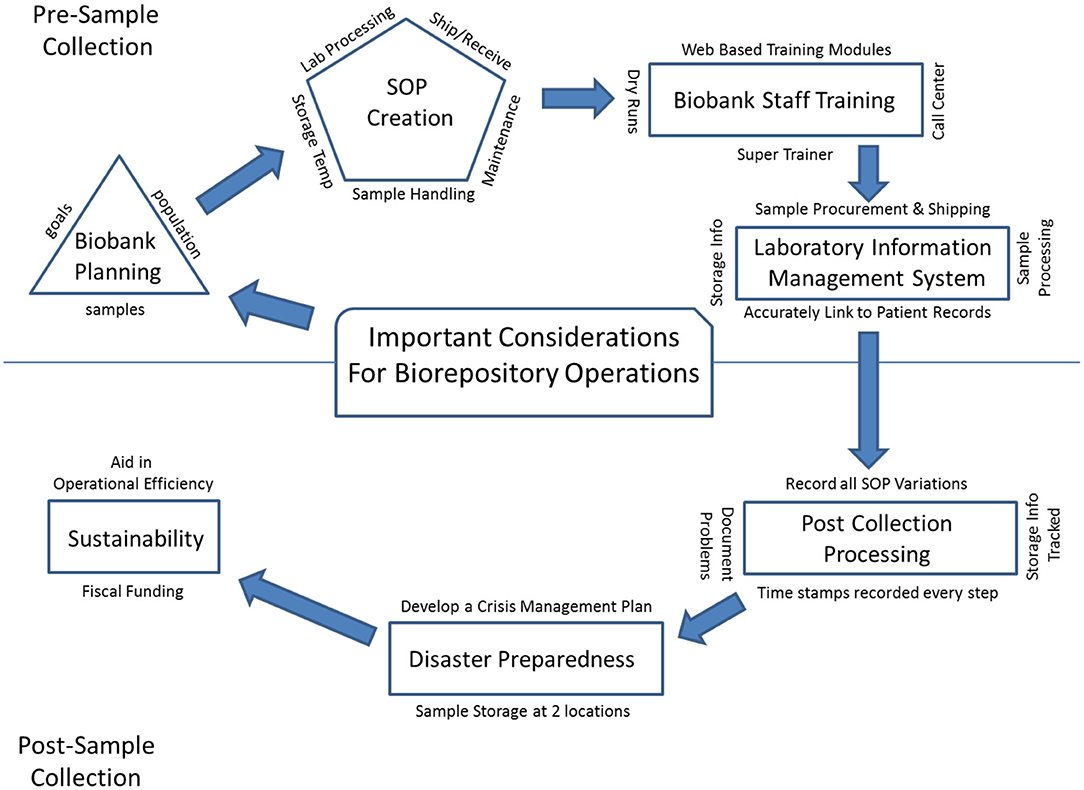

"Mini-review of laboratory operations in biobanking: Building biobanking resources for translational research"

Biobanks have become integral to improving population health. We are in a new era in medicine as patients, health professionals, and researchers increasingly collaborate to gain new knowledge and explore new paradigms for diagnosing and treating disease. Many large-scale biobanking efforts are underway worldwide at the institutional, national, and even international level. When linked with subject data from questionnaires and medical records, biobanks serve as valuable resources in translational research. A biobank must have high-quality biospecimens that meet researchers' needs. Biobank laboratory operations require an enormous amount of support, from lab and storage space, information technology expertise, and a laboratory information management system to logistics for biospecimen tracking, quality management systems, and appropriate facilities. (Full article...)


Featured article of the week: August 30–September 5:

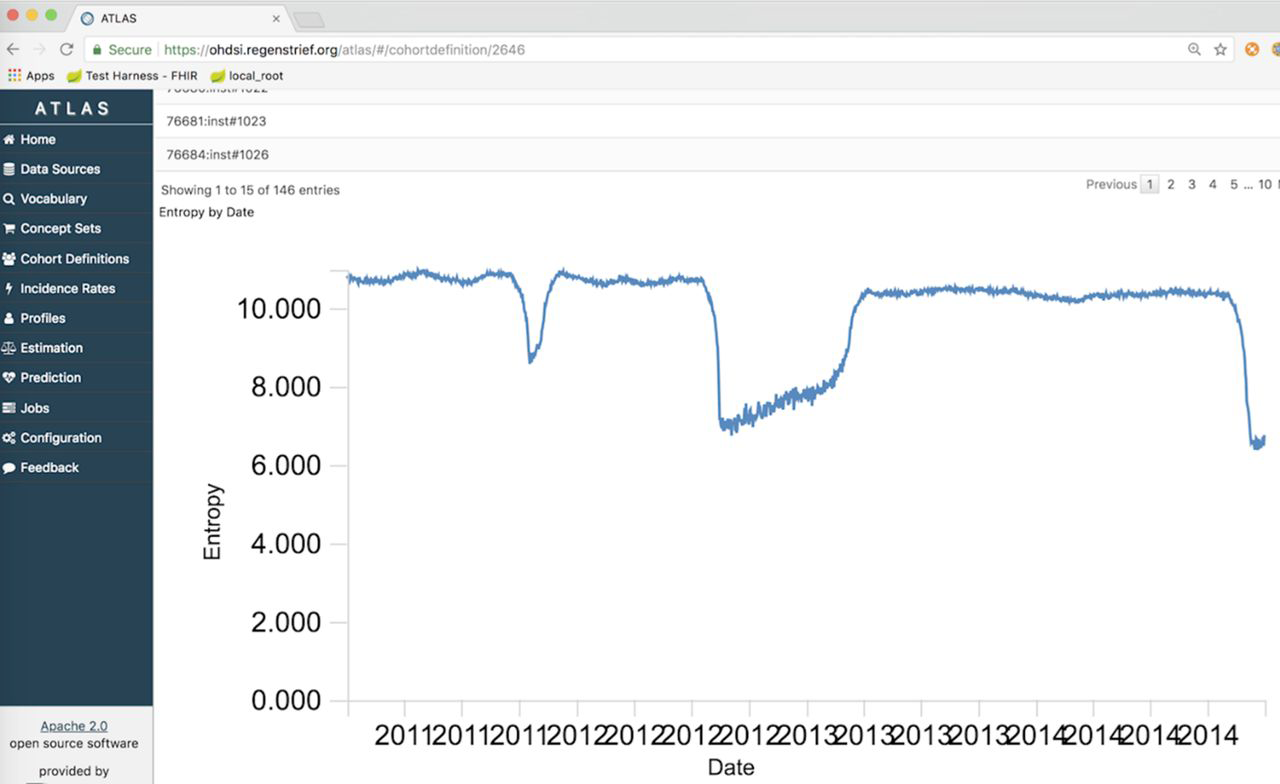

"Extending an open-source tool to measure data quality: Case report on Observational Health Data Science and Informatics (OHDSI)"

As the health system seeks to leverage large-scale data to inform population outcomes, the informatics community is developing tools for analyzing these data. To support data quality assessment within such a tool, we extended the open-source software Observational Health Data Sciences and Informatics (OHDSI) to incorporate new functions useful for population health. We developed and tested methods to measure the completeness, timeliness, and entropy of information. The new data quality methods were applied to over 100 million clinical messages received from emergency department information systems for use in public health syndromic surveillance systems. (Full article...)


Featured article of the week: August 23–29:

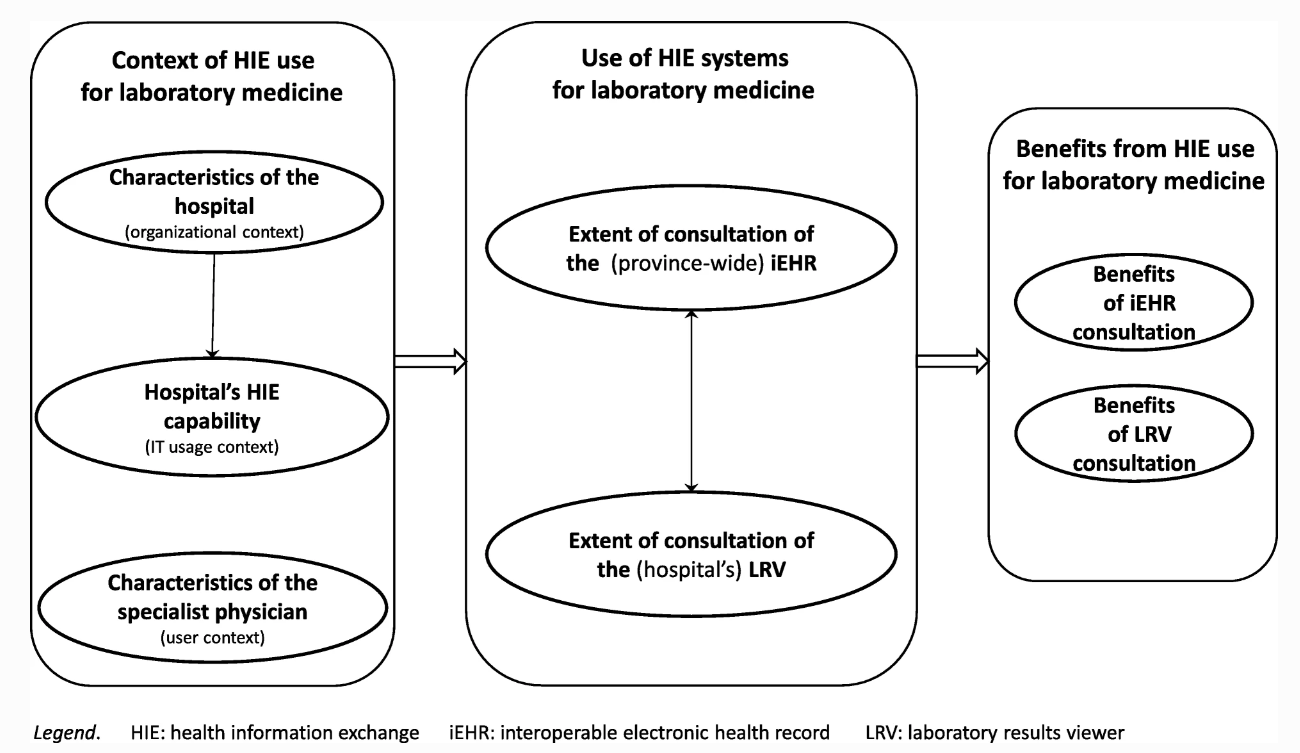

"Advancing laboratory medicine in hospitals through health information exchange: A survey of specialist physicians in Canada"

Laboratory testing occupies a prominent place in healthcare. Information technology systems have the potential to empower laboratory experts and to enhance the interpretation of test results in order to better support physicians in their quest for better and safer patient care. This study sought to develop a better understanding of which laboratory information exchange (LIE) systems and features specialist physicians are using in hospital settings to consult their patients’ laboratory test results, and what benefit they derive from such use. As part of a broader research program on the use of health information exchange systems for laboratory medicine in Quebec, Canada, this study was designed as an online survey. Our sample is composed of 566 specialist physicians working in hospital settings, out of the 1,512 physicians who responded to the survey (response rate of 17%). Respondents are representative of the targeted population of specialist physicians in terms of gender, age, and hospital location. (Full article...)


Featured article of the week: August 16–22:

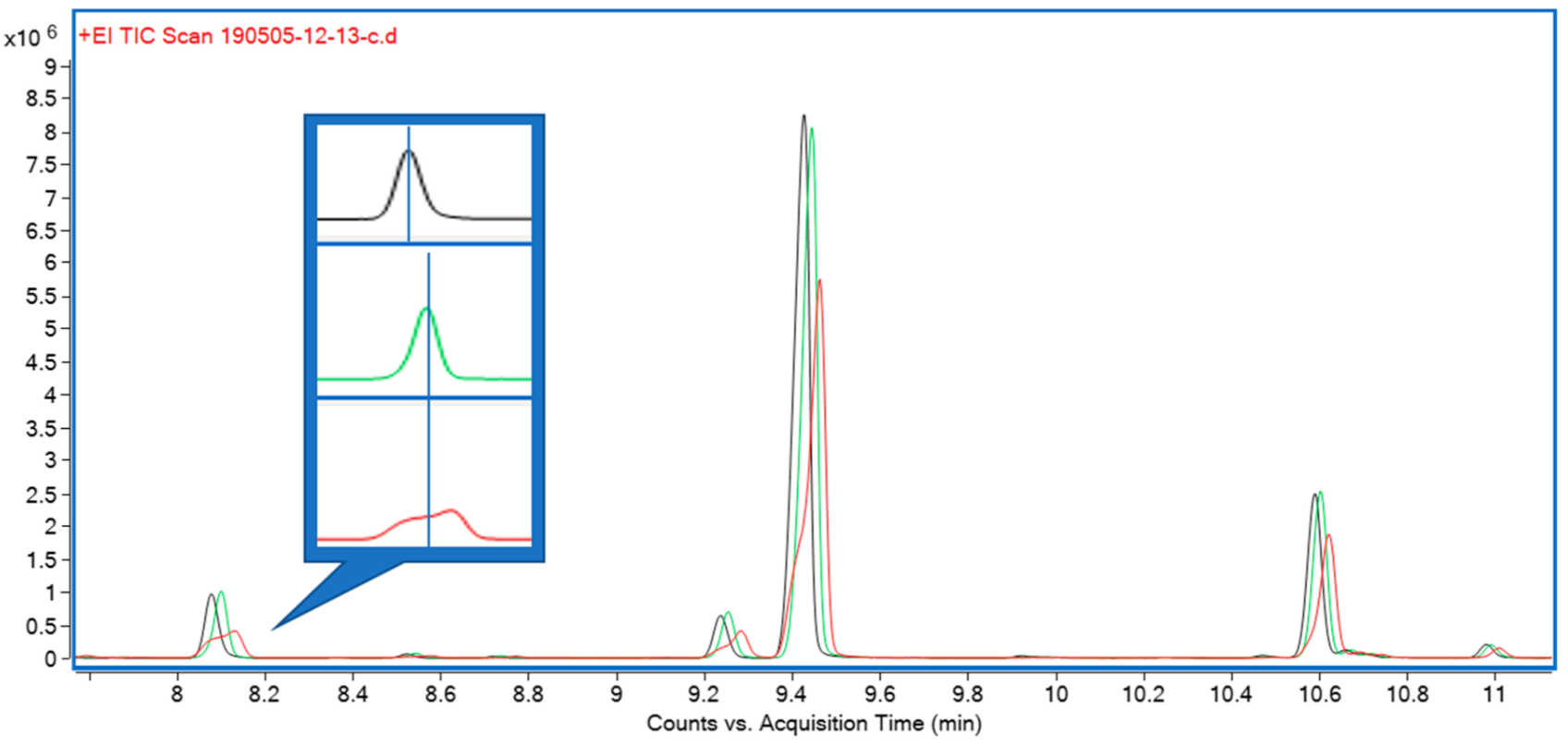

"A high-throughput method for the comprehensive analysis of terpenes and terpenoids in medicinal cannabis biomass"

Cannabis and its secondary metabolite content have recently seen a surge in research interest. Cannabis terpenes and terpenoids in particular are increasingly the focus of research efforts due to the possibility of their contribution to the overall therapeutic effect of medicinal cannabis. Current methodology to quantify terpenes in cannabis biomass mostly relies on large quantities of biomass, long extraction protocols, and long gas chromatography (GC) gradient times, often exceeding 60 minutes. They are therefore not easily applicable in the high-throughput environment of a cannabis breeding program. (Full article...)


Featured article of the week: August 9–15:

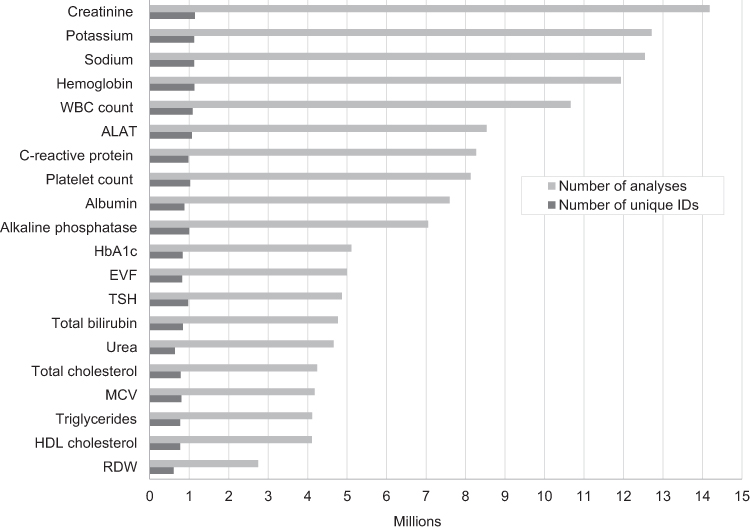

"Existing data sources in clinical epidemiology: Laboratory information system databases in Denmark"

Routine biomarker results from hospital laboratory information systems (LIS)—covering hospitals and general practitioners—in Denmark are available to researchers through access to the regional Clinical Laboratory Information System Research Database at Aarhus University and the nationwide Register of Laboratory Results for Research. This review describes these two data sources. The laboratory databases have different geographical and temporal coverage. They both include individual-level biomarker results that are electronically transferred from LISs. The biomarker results can be linked to all other Danish registries at the individual level using the unique identifier, the CPR number. (Full article...)


Featured article of the week: August 2–8:

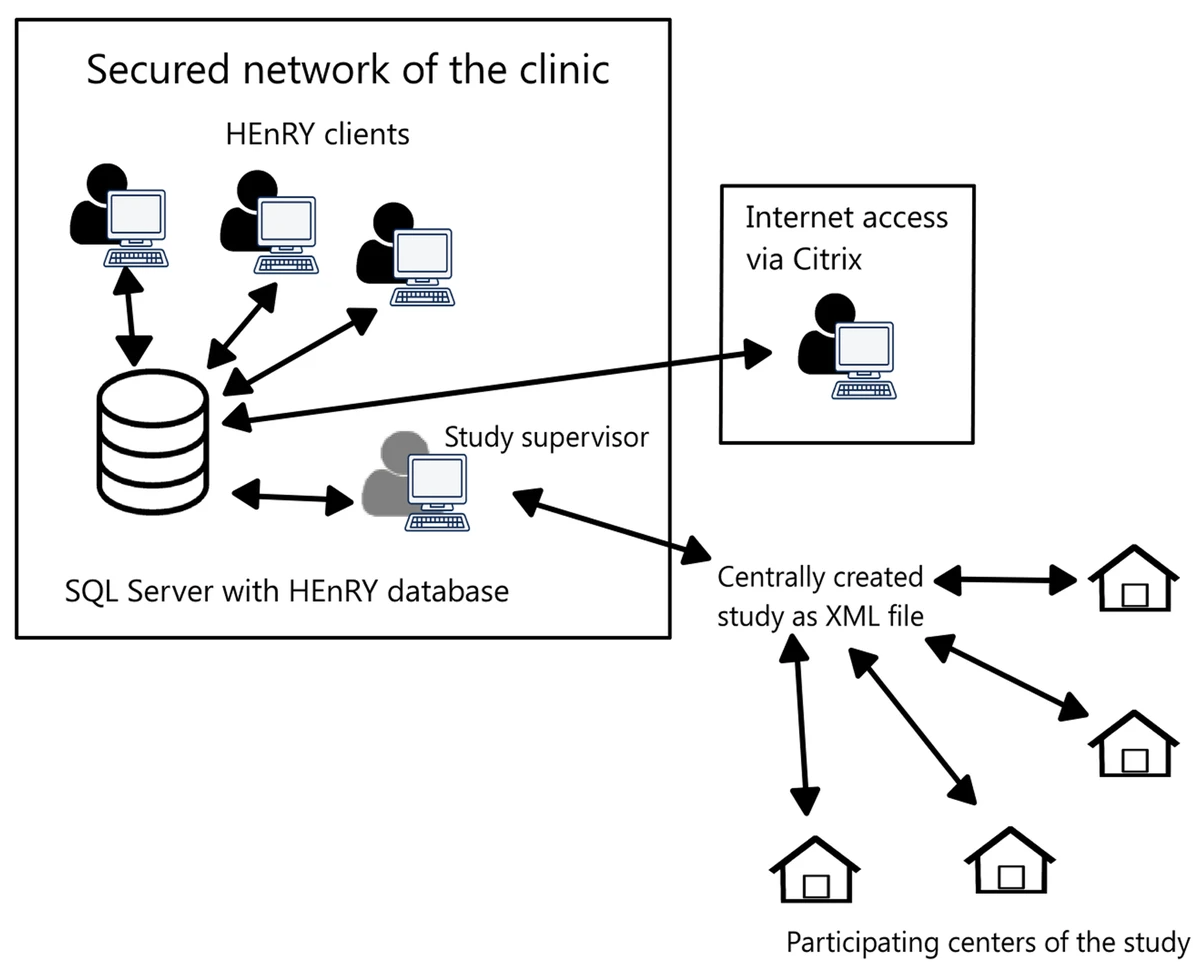

"HEnRY: A DZIF LIMS tool for the collection and documentation of biospecimens in multicentre studies"

Well-characterized biological specimens (biospecimens) of high quality have great potential for the acceleration of and quality improvement in translational biomedical research. To improve accessibility of local specimen collections, efforts have been made to create central repositories (biobanks) and catalogues. Available technical solutions for creating professional local specimen catalogues and connecting them to central systems are cost intensive and/or technically complex to implement. Therefore, the HIV-focused Thematic Translational Unit (TTU) of the German Center for Infection Research (DZIF) developed a laboratory information management system (LIMS) called HIV Engaged Research Technology (HEnRY) for implementation into the HIV Translational Platform (TP-HIV) at the DZIF and other research networks. (Full article...)


Featured article of the week: July 26–August 1:

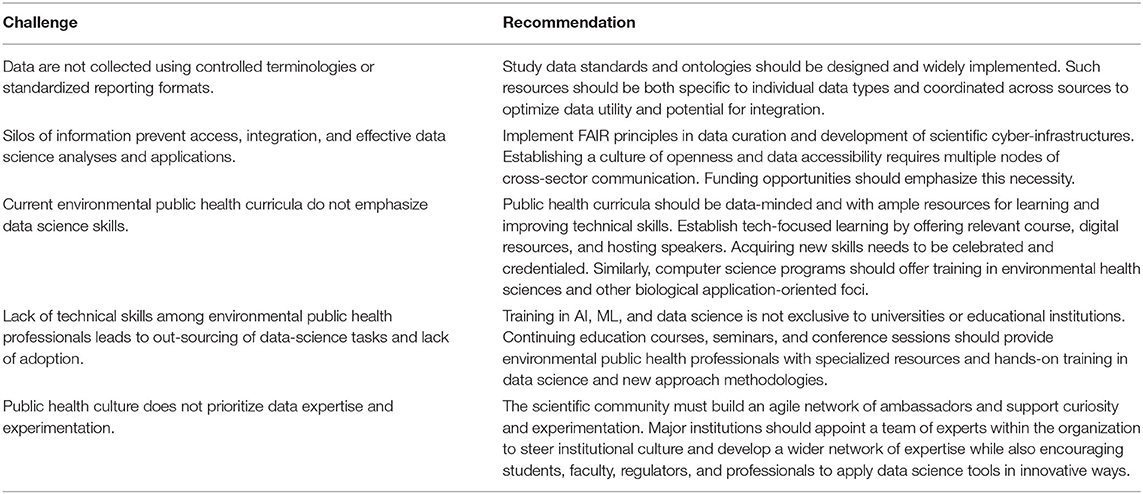

"Bringing big data to bear in environmental public health: Challenges and recommendations"

Understanding the role that the environment plays in influencing public health often involves collecting and studying large, complex data sets. There have been a number of private and public efforts to gather sufficient information and confront significant unknowns in the field of environmental public health, yet there is a persistent and largely unmet need for findable, accessible, interoperable, and reusable (FAIR) data. Even when data are readily available, the ability to create, analyze, and draw conclusions from these data using emerging computational tools, such as augmented intelligence, artificial intelligence (AI), and machine learning, requires technical skills not currently implemented on a programmatic level across research hubs and academic institutions. (Full article...)


Featured article of the week: July 19–25:

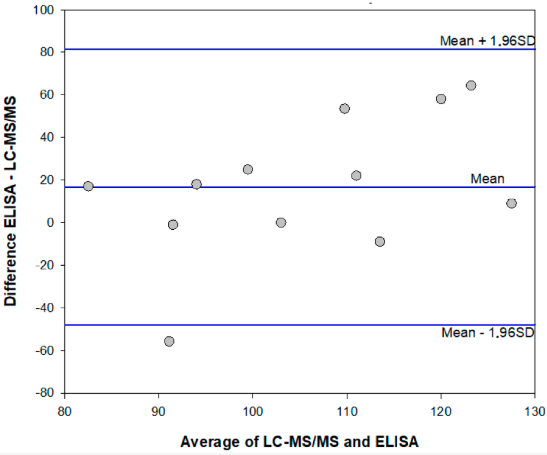

"Enzyme immunoassay for measuring aflatoxin B1 in legal cannabis"

The diffusion of the legalization of cannabis for recreational, medicinal, and nutraceutical uses requires the development of adequate analytical methods to assure the safety and security of such products. In particular, aflatoxins are considered to pose a major risk for the health of cannabis consumers. Among analytical methods that allow for adequate monitoring of food safety, immunoassays play a major role thanks to their cost-effectiveness, high-throughput capacity, simplicity, and limited requirement for equipment and skilled operators. Therefore, a rapid and sensitive enzyme immunoassay has been adapted to measure the most hazardous aflatoxin B1 in cannabis products. The assay was acceptably accurate (recovery rate: 78–136%), reproducible (intra- and inter-assay mean coefficients of variation 11.8% and 13.8%, respectively), and sensitive (limit of detection and range of quantification: 0.35 ng mL−1 and 0.4–2 ng mL−1 ... (Full article...)


Featured article of the week: July 12–18:

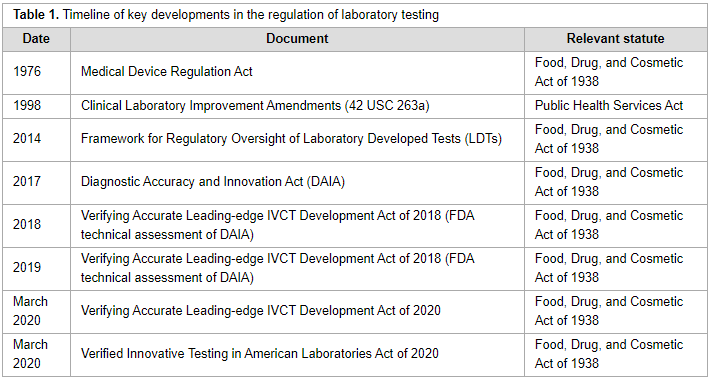

"The regulatory landscape of precision oncology laboratory medicine in the United States: Perspective on the past five years and considerations for future regulation"

The regulatory landscape for precision oncology in the United States is complicated, with multiple governmental regulatory agencies with different scopes of jurisdiction. Several regulatory proposals have been introduced since the Food and Drug Administration released draft guidance to regulate laboratory developed tests in 2014. Key aspects of the most recent proposals and discussion of central arguments related to the regulation of precision oncology laboratory tests provide insight to stakeholders for future discussions related to regulation of laboratory tests. (Full article...)


Featured article of the week: July 5–11:

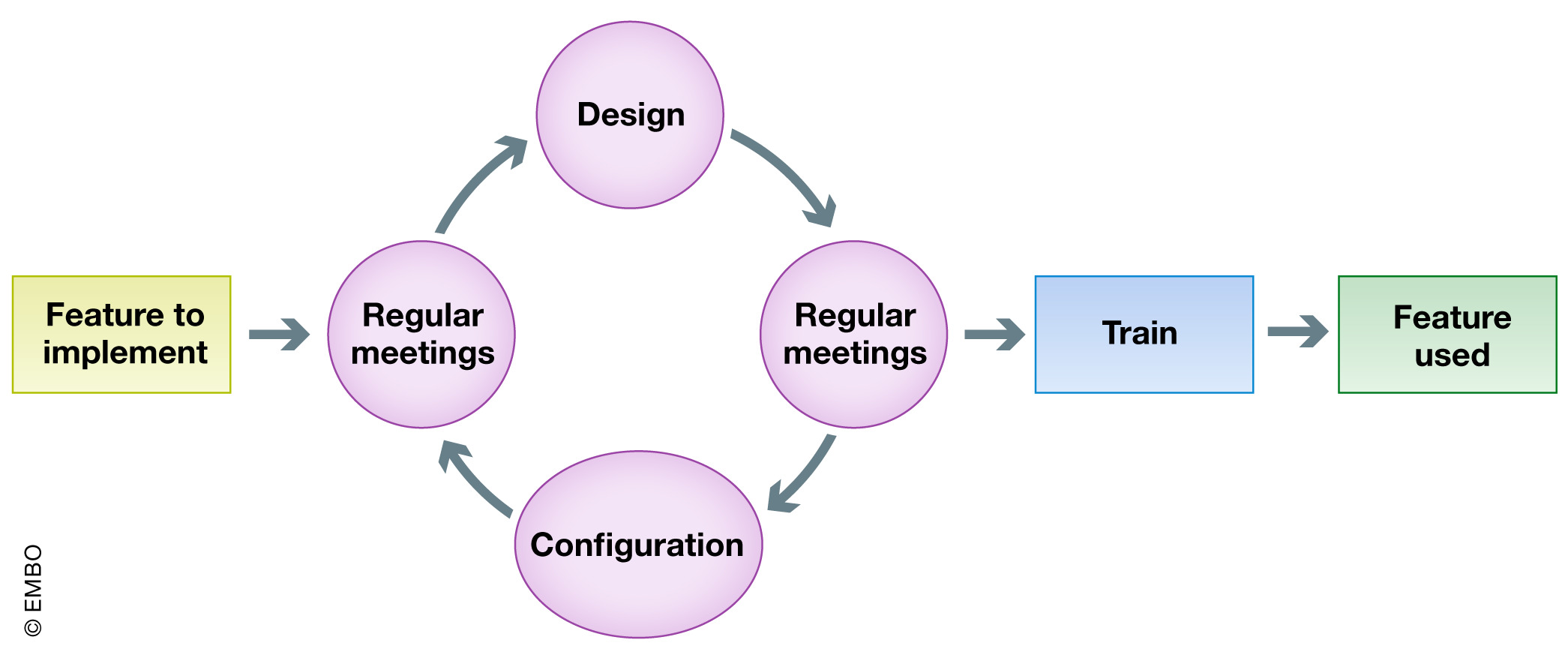

"Institutional ELN-LIMS deployment: Highly customizable ELN-LIMS platform as a cornerstone of digital transformation for life sciences research institutes"

The systematic recording and management of experimental data in academic life science research remains an open problem. École Polytechnique Fédérale de Lausanne (EPFL) engaged in a program of deploying both an electronic laboratory notebook (ELN) and a laboratory information management system (LIMS) six years ago, encountering a host of fundamental questions at the institutional level and within each laboratory. Here, based on our experience, we aim to share with research institute managers, principal investigators (PIs), and any scientists involved in a combined ELN-LIMS deployment helpful tips and tools, with a focus on surrounding yourself with the right people and the right software at the right time. In this article we describe the resources used, the challenges encountered, key success factors, and the results obtained at each phase of our project. Finally, we discuss the current and next challenges we face, as well as how our experience leads us to support the creation of a new position in the research group: the laboratory data manager. (Full article...)


Featured article of the week: June 21–27:

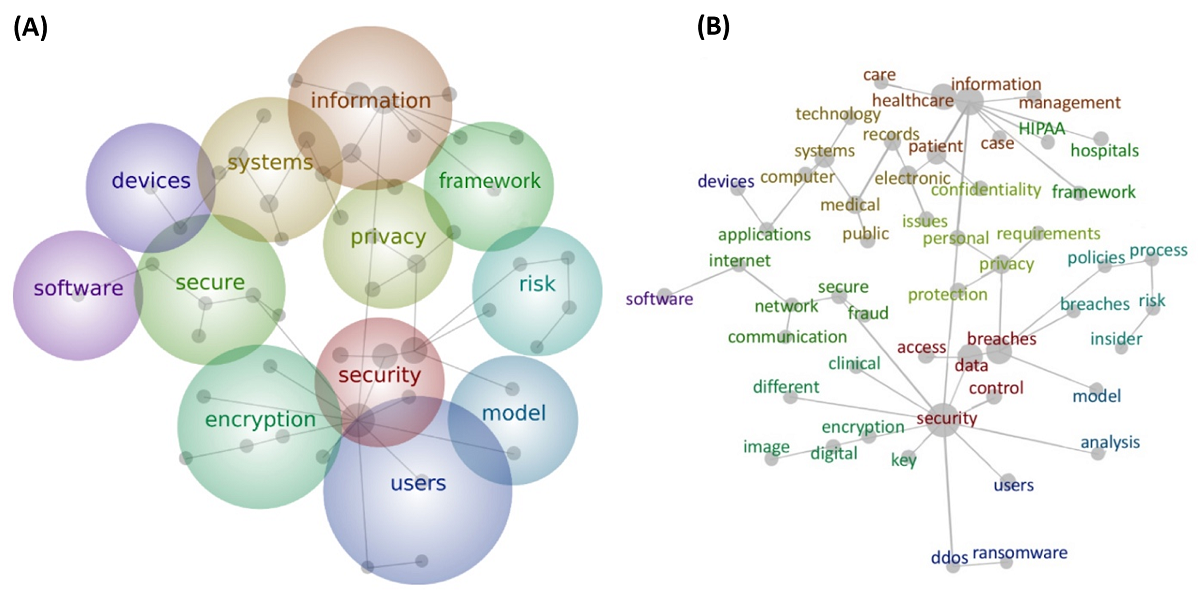

"Health care and cybersecurity: Bibliometric analysis of the literature"

Over the past decade, clinical care has become globally dependent on information technology. The cybersecurity of health care information systems is now an essential component of safe, reliable, and effective health care delivery. The objective of this study was to provide an overview of the literature at the intersection of cybersecurity and health care delivery. A comprehensive search was conducted using PubMed and Web of Science for English-language peer-reviewed articles. We carried out chronological analysis, domain clustering analysis, and text analysis of the included articles to generate a high-level concept map composed of specific words and the connections between them. Our final sample included 472 English-language journal articles. Our review results revealed that a majority of the articles were focused on technology. Technology-focused articles made up more than half of all the clusters, whereas managerial articles accounted for only 32 percent of all clusters. (Full article...)


Featured article of the week: June 14–20:

"Epidemiological data challenges: Planning for a more robust future through data standards"

Accessible epidemiological data are of great value for emergency preparedness and response, understanding disease progression through a population, and building statistical and mechanistic disease models that enable forecasting. The status quo, however, renders acquiring and using such data difficult in practice. In many cases, a primary way of obtaining epidemiological data is through the internet, but the methods by which the data are presented to the public often differ drastically among institutions. As a result, there is a strong need for better data sharing practices. This paper identifies, in detail and with examples, the three key challenges one encounters when attempting to acquire and use epidemiological data: (1) interfaces, (2) data formatting, and (3) reporting. (Full article...)


Featured article of the week: June 7–13:

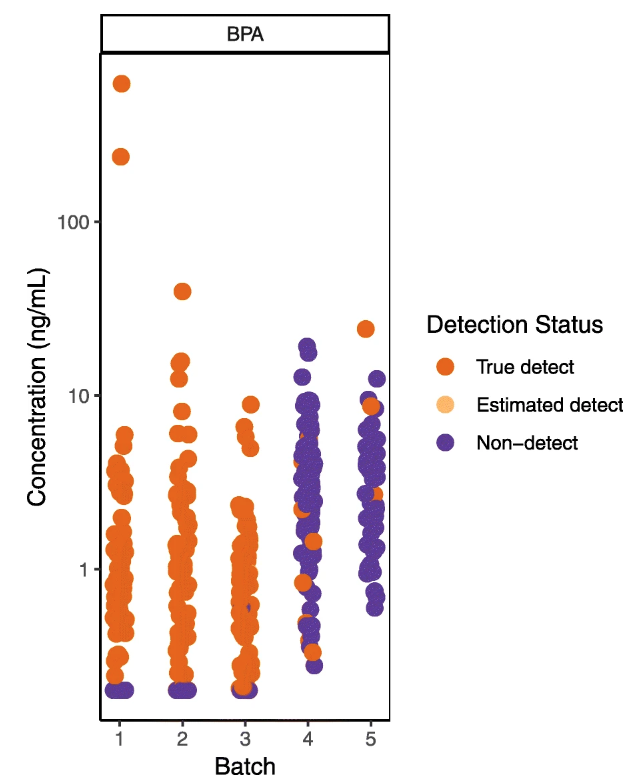

"Wrangling environmental exposure data: Guidance for getting the best information from your laboratory measurements"

Environmental health and exposure researchers can improve the quality and interpretation of their chemical measurement data, avoid spurious results, and improve analytical protocols for new chemicals by closely examining lab and field quality control (QC) data. Reporting QC data along with chemical measurements in biological and environmental samples allows readers to evaluate data quality and appropriate uses of the data (e.g., for comparison to other exposure studies, association with health outcomes, use in regulatory decision-making). However, many studies do not adequately describe or interpret QC assessments in publications, leaving readers uncertain about the level of confidence in the reported data. One potential barrier to both QC implementation and reporting is that guidance on how to integrate and interpret QC assessments is often fragmented and difficult to find, with no centralized repository or summary. In addition, existing documents are typically written for regulatory scientists rather than environmental health researchers, who may have little or no experience in analytical chemistry. (Full article...)
